Hyperband-optimized parallelized prompt and model parameter tuning for evaluating LLMs


HyperEvals


Motivation

Evaluating LLMs is notoriously challenging, yet it is critical before deploying them to production with confidence. Seemingly small prompt tweaks or model upgrades can significantly change performance across tasks, hence the need for carefully crafted evaluations.

HyperEvals runs parallelized prompt and model parameter tuning for LLM evaluations and uses Hyperband to stop weak configurations early. The design is inspired by W&B's sweeps combined with Hyperband optimization.

Installation

pip install hyperevals

Usage

MVP Flow

- Create a CSV dataset

- Create a prompt template

- Create an executable Model file

- Create executable scorers

- Create a config file

- Run the evaluation

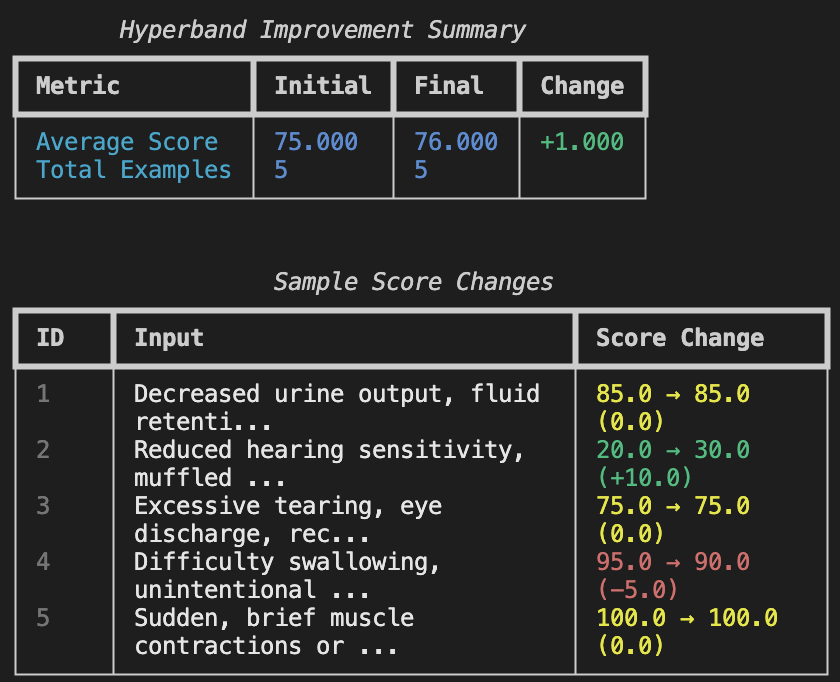

- Iterate on prompt and model parameters

- Hyperband stops poorly performing configurations early

- The final prompt is reported with its accuracy
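The early-stopping step can be illustrated with successive halving, the routine that Hyperband runs repeatedly with different starting budgets. The sketch below is not HyperEvals's implementation: `successive_halving`, the `evaluate` callback, `max_examples`, and `eta` are hypothetical names chosen for illustration, and only a single bracket is shown.

```python
from typing import Callable, Sequence


def successive_halving(
    candidates: Sequence[str],
    evaluate: Callable[[str, int], float],
    min_examples: int = 1,
    max_examples: int = 5,
    eta: int = 2,
) -> tuple[str, float]:
    """Return the surviving candidate and its score at the final budget used.

    `evaluate(candidate, n)` is a hypothetical callback that scores one
    prompt/parameter candidate on `n` dataset examples (higher is better).
    """
    survivors = list(candidates)
    budget = min_examples
    while True:
        # Score every surviving candidate on `budget` examples, best first.
        scored = sorted(
            ((evaluate(c, budget), c) for c in survivors),
            key=lambda pair: pair[0],
            reverse=True,
        )
        # Stop once one candidate remains or the budget is exhausted.
        if len(scored) == 1 or budget >= max_examples:
            best_score, best = scored[0]
            return best, best_score
        # Keep the top 1/eta of candidates, then grow the budget by eta.
        keep = max(1, len(scored) // eta)
        survivors = [c for _, c in scored[:keep]]
        budget = min(max_examples, budget * eta)
```

Weak candidates only ever see the small budgets, so most of the evaluation cost goes to the candidates that are still competitive.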

Sample Configuration

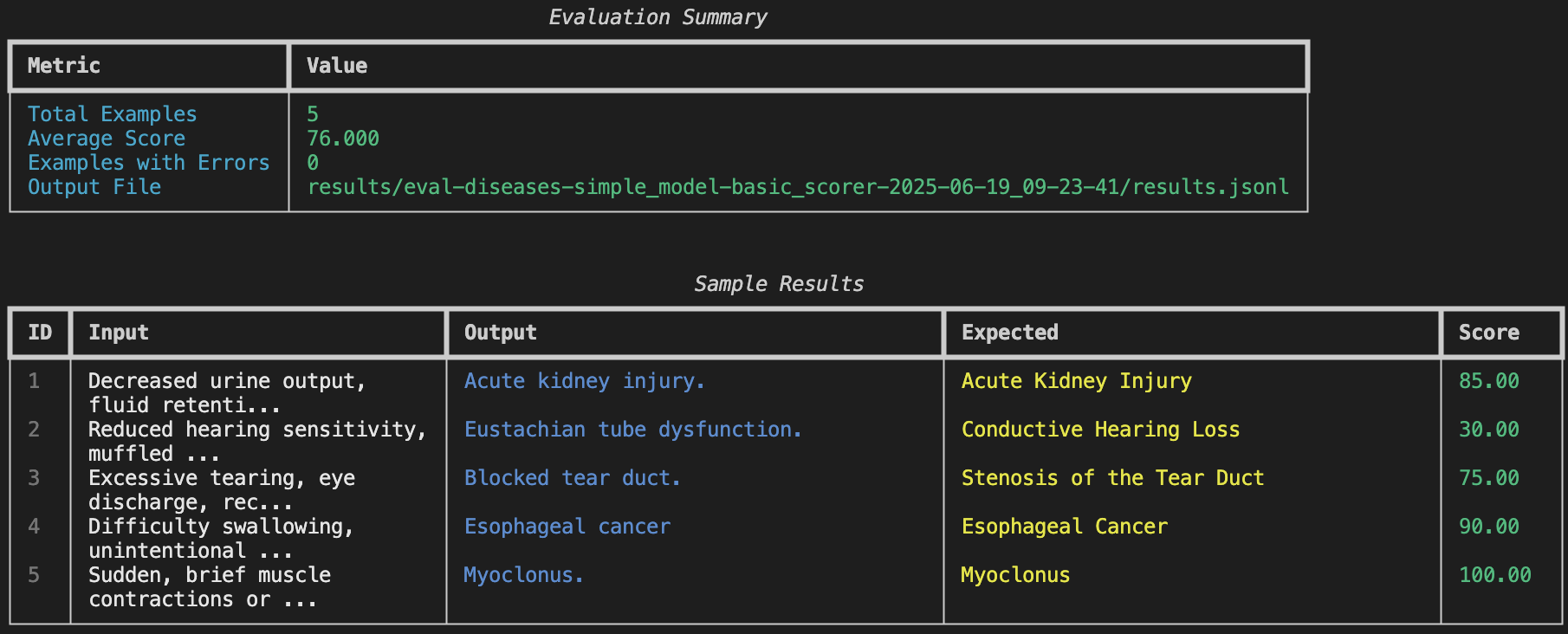

dataset: data/diseases.csv
model: models/simple_model.py
scorer: scorers/basic_scorer.py
prompts: prompts
results_dir: results
num_examples: 5
sort: random
hyperband:
  num_trials: 2
  min_examples: 1
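As a rough illustration of how these keys fit together, the sketch below models the configuration as typed Python objects built from an already-parsed mapping. The class names, `parse_config`, and the check that `min_examples` does not exceed `num_examples` are assumptions made for this example, not part of the HyperEvals API.

```python
from dataclasses import dataclass


@dataclass
class HyperbandConfig:
    num_trials: int
    min_examples: int


@dataclass
class EvalConfig:
    dataset: str
    model: str
    scorer: str
    prompts: str
    results_dir: str
    num_examples: int
    sort: str
    hyperband: HyperbandConfig


def parse_config(raw: dict) -> EvalConfig:
    """Build an EvalConfig from a parsed mapping and sanity-check it."""
    values = dict(raw)  # copy so the caller's mapping is not mutated
    hyperband = HyperbandConfig(**values.pop("hyperband"))
    cfg = EvalConfig(hyperband=hyperband, **values)
    # Assumed constraint: the smallest budget cannot exceed the total.
    if cfg.hyperband.min_examples > cfg.num_examples:
        raise ValueError("hyperband.min_examples cannot exceed num_examples")
    return cfg


sample = {
    "dataset": "data/diseases.csv",
    "model": "models/simple_model.py",
    "scorer": "scorers/basic_scorer.py",
    "prompts": "prompts",
    "results_dir": "results",
    "num_examples": 5,
    "sort": "random",
    "hyperband": {"num_trials": 2, "min_examples": 1},
}
config = parse_config(sample)
```

Unknown or missing keys raise a `TypeError` from the dataclass constructor, which surfaces typos in the config early.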

TODO

- multi-step scorers for agent evals

Shape of the eval output:

| id | step | input | output | score |
|---|---|---|---|---|
| 1 | 1 | "Hey, whats your name?" | "My name is John" | 0.95 |
| 1 | 2 | "What is your favorite color?" | "My favorite color is blue" | 0.95 |
| 1 | 3 | "What is your favorite food?" | "My favorite food is pizza" | 0.95 |
| 2 | 1 | "What is your name?" | "My name is John" | 0.95 |
| 2 | 2 | "Wow great!" | "..." | 0.95 |
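One way to consume output of this shape is to group the step rows by `id` and reduce each group to a single score. The sketch below averages the step scores. Averaging is an assumption made for illustration, since the aggregation rule for multi-step scorers is not specified here, and `mean_score_per_id` is a hypothetical helper.

```python
from collections import defaultdict
from statistics import mean


def mean_score_per_id(rows: list[dict]) -> dict[int, float]:
    """Average the per-step scores of each evaluation id."""
    scores_by_id: dict[int, list[float]] = defaultdict(list)
    for row in rows:
        scores_by_id[row["id"]].append(row["score"])
    return {eval_id: mean(scores) for eval_id, scores in scores_by_id.items()}


# Illustrative rows in the (id, step, score) shape of the table above;
# the score values are made up for the example.
example_rows = [
    {"id": 1, "step": 1, "score": 1.0},
    {"id": 1, "step": 2, "score": 0.5},
    {"id": 2, "step": 1, "score": 0.25},
]
per_id = mean_score_per_id(example_rows)
```

Other reductions, such as taking the minimum step score or only the final step, fit the same grouping and may suit agent evals better where one bad step invalidates the run.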
