Inspect Flow is a workflow stack built on Inspect AI that enables research organizations to run AI evaluations at scale.


Inspect Flow

Workflow orchestration for Inspect AI that enables you to define, run, and manage evaluations at scale — from configuration through to production.

Why Inspect Flow?

As evaluation workflows grow in complexity—running multiple tasks across different models with varying parameters, then reviewing, validating, and promoting results—managing these experiments becomes challenging. Inspect Flow addresses this by providing:

- Declarative Configuration: Define complex evaluations with tasks, models, and parameters in type-safe schemas

- Repeatable & Shareable: Encapsulated definitions of tasks, models, configurations, and Python dependencies ensure experiments can be reliably repeated and shared

- Powerful Defaults: Define defaults once and reuse them everywhere with automatic inheritance

- Parameter Sweeping: Matrix patterns for systematic exploration across tasks, models, and hyperparameters

- Post-Evaluation Workflows: Tag, validate, and promote evaluation logs with composable steps

Inspect Flow is designed for researchers and engineers running systematic AI evaluations who need to scale beyond ad-hoc scripts.

Getting Started

Prerequisites

Before using Inspect Flow, you should:

- Be familiar with Inspect AI

- Have an existing Inspect evaluation, or plan to use one from inspect-evals

Installation

pip install inspect-flow

Optional: VS Code extension

Optionally install the Inspect AI VS Code Extension, which includes features for viewing evaluation log files.

Basic Example

FlowSpec is the main entrypoint for defining evaluation runs. At its core, it takes a list of tasks to run. Here's a simple example, saved as config.py, that runs two evaluations:

from inspect_flow import FlowSpec, FlowTask

FlowSpec(
    log_dir="logs",
    tasks=[
        FlowTask(
            name="inspect_evals/gpqa_diamond",
            model="openai/gpt-4o",
        ),
        FlowTask(
            name="inspect_evals/mmlu_0_shot",
            model="openai/gpt-4o",
        ),
    ],
)

To run the evaluations, run the following command in your shell. This will create a virtual environment for this spec run and install the dependencies. Note that task and model dependencies (like the inspect-evals and openai Python packages) are inferred and installed automatically.

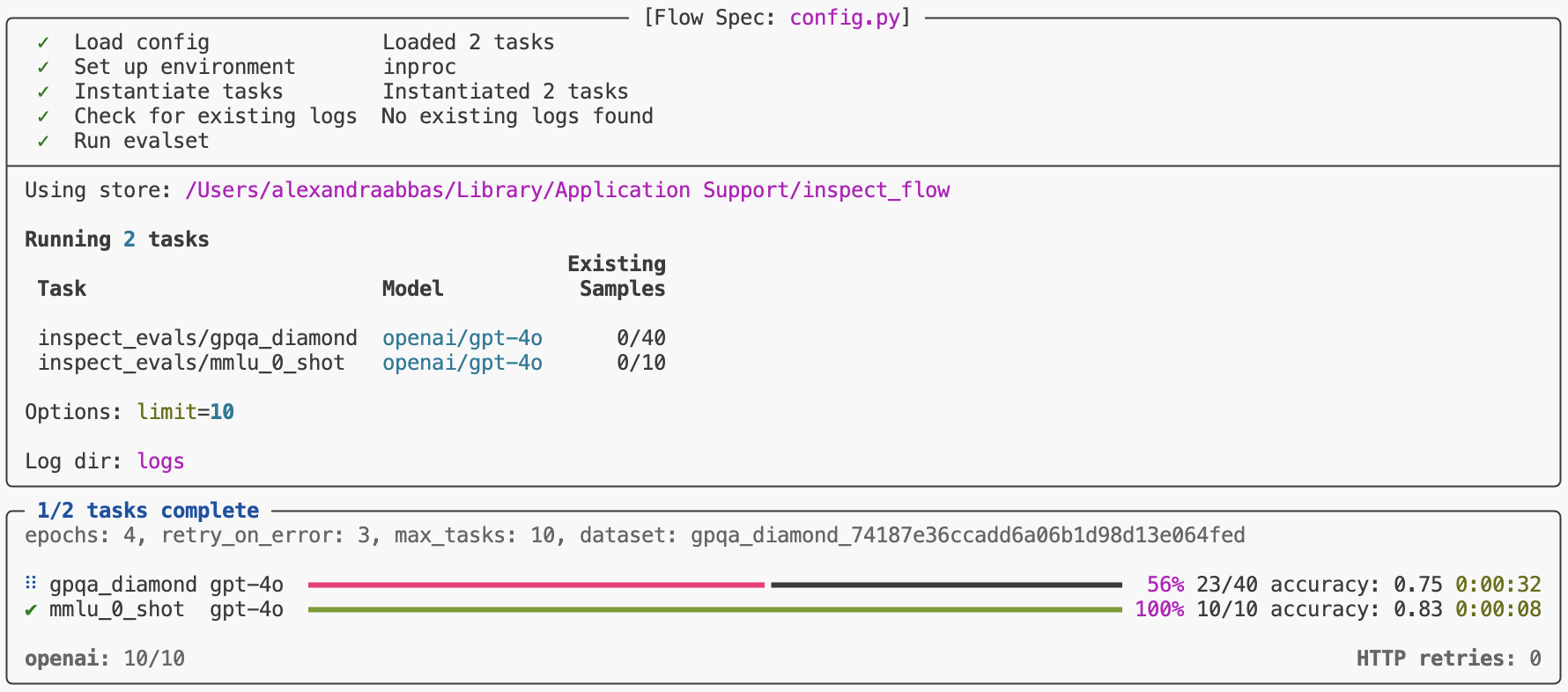

flow run config.py

This will run both tasks and display progress in your terminal.

Python API

You can run evaluations from Python instead of the command line.

from inspect_flow import FlowSpec, FlowTask
from inspect_flow.api import run

spec = FlowSpec(
    log_dir="logs",
    tasks=[
        FlowTask(
            name="inspect_evals/gpqa_diamond",
            model="openai/gpt-4o",
        ),
        FlowTask(
            name="inspect_evals/mmlu_0_shot",
            model="openai/gpt-4o",
        ),
    ],
)

run(spec=spec)

Matrix Functions

Often you'll want to evaluate multiple tasks across multiple models. Rather than manually defining every combination, use tasks_matrix to generate all task-model pairs. The example below is saved as matrix.py:

from inspect_flow import FlowSpec, tasks_matrix

FlowSpec(
    log_dir="logs",
    tasks=tasks_matrix(
        task=[
            "inspect_evals/gpqa_diamond",
            "inspect_evals/mmlu_0_shot",
        ],
        model=[
            "openai/gpt-5",
            "openai/gpt-5-mini",
        ],
    ),
)

To preview the expanded config before running it, run the following command in your shell and confirm that the generated config is the one you intend to run:

flow config matrix.py

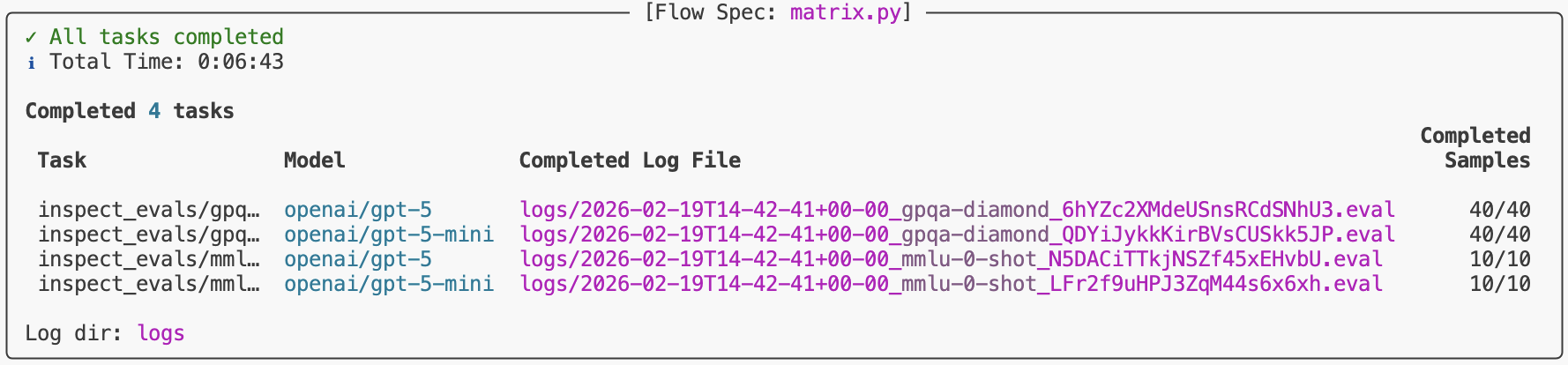

This command outputs the expanded configuration showing all 4 task-model combinations (2 tasks × 2 models).

log_dir: logs
dependencies:
- inspect-evals
tasks:
- name: inspect_evals/gpqa_diamond
  model:
    name: openai/gpt-5
- name: inspect_evals/gpqa_diamond
  model:
    name: openai/gpt-5-mini
- name: inspect_evals/mmlu_0_shot
  model:
    name: openai/gpt-5
- name: inspect_evals/mmlu_0_shot
  model:
    name: openai/gpt-5-mini
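
The expanded configuration above is a Cartesian product of the task list and the model list. As a rough illustration only, the following standard-library sketch reproduces the same four pairs in the same order. Note that expand_matrix is a hypothetical helper written for this example; it is not part of the Inspect Flow API, and the real tasks_matrix may do considerably more than this.

```python
from itertools import product


def expand_matrix(tasks: list[str], models: list[str]) -> list[dict[str, str]]:
    """Return one entry per (task, model) pair, with tasks in the outer loop.

    Hypothetical helper for illustration; not part of Inspect Flow.
    """
    return [{"name": task, "model": model} for task, model in product(tasks, models)]


pairs = expand_matrix(
    tasks=["inspect_evals/gpqa_diamond", "inspect_evals/mmlu_0_shot"],
    models=["openai/gpt-5", "openai/gpt-5-mini"],
)

# Print each combination, one per line.
for pair in pairs:
    print(f"{pair['name']} on {pair['model']}")
```

Adding a third model to the list would yield six pairs, which is why previewing the expanded config is useful before a large sweep.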

Flow provides additional matrix functions (models_matrix, configs_matrix) for sweeping over model settings, generation configs, and more. See Matrixing for details.

Run Evaluations

Before running evaluations, preview what would run with --dry-run:

flow run matrix.py --dry-run

This performs the full setup process—importing tasks from the registry, applying all defaults, expanding all matrix functions, and checking for existing logs—showing exactly what would run, but stops before actually running the evaluations.

To run the config:

flow run matrix.py

When the run completes, you'll find a link to the logs at the bottom of the task results summary.

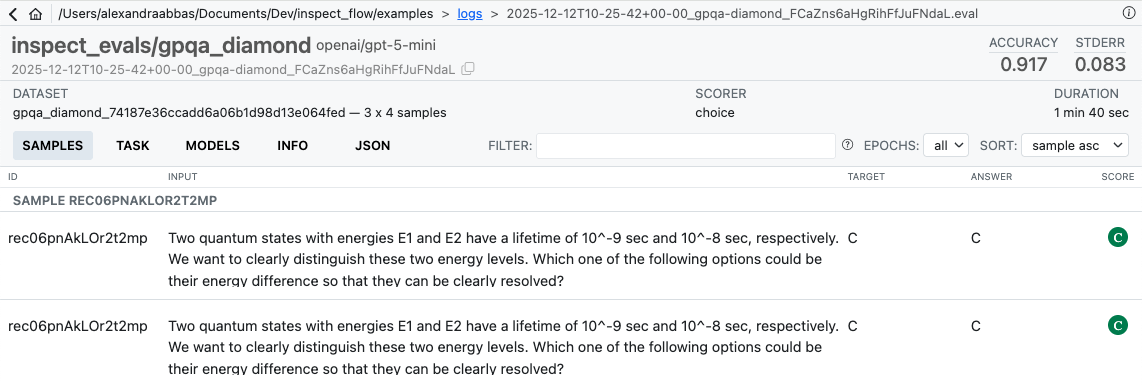

To view logs interactively, run:

inspect view --log-dir logs

After Running

Once evaluations complete, use steps to operate on the resulting logs. For example, tag logs after reviewing them:

flow step tag logs/ --add reviewed --reason "Manually inspected"

Use flow check to verify the completeness of a spec against a log directory — for example, checking how much of a production directory has been filled:

flow check matrix.py --log-dir s3://bucket/prod/logs
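
Conceptually, a completeness check compares the task-model pairs that a spec expects with the pairs that already have logs. The sketch below illustrates that idea with plain Python sets. The completeness function and the tuple representation of a log are assumptions made for this example; they do not reflect how flow check is implemented or how it reads a log directory.

```python
def completeness(
    expected: set[tuple[str, str]], logged: set[tuple[str, str]]
) -> tuple[float, list[tuple[str, str]]]:
    """Return the fraction of expected (task, model) pairs that have a log,
    together with the sorted list of pairs that are still missing.

    Hypothetical helper for illustration; not part of Inspect Flow.
    """
    missing = sorted(expected - logged)
    done = len(expected) - len(missing)
    fraction = done / len(expected) if expected else 1.0
    return fraction, missing


expected = {
    ("inspect_evals/gpqa_diamond", "openai/gpt-5"),
    ("inspect_evals/gpqa_diamond", "openai/gpt-5-mini"),
    ("inspect_evals/mmlu_0_shot", "openai/gpt-5"),
    ("inspect_evals/mmlu_0_shot", "openai/gpt-5-mini"),
}
# Pretend three of the four evaluations already have logs.
logged = expected - {("inspect_evals/mmlu_0_shot", "openai/gpt-5-mini")}

fraction, missing = completeness(expected, logged)
print(f"{fraction:.0%} complete; missing: {missing}")
```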

Steps can be composed into full workflows — filtering, tagging, and copying logs between directories. See Steps for custom steps, filters, and an end-to-end example.
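
One way to picture this composition is as a chain of small functions, each taking a list of log records and returning a new list. The sketch below models filtering, tagging, and copying on in-memory dictionaries. The record fields, the step names, and the pipeline helper are all hypothetical; they are not the Inspect Flow step API, which is described in Steps.

```python
from functools import reduce
from typing import Callable

Log = dict  # hypothetical in-memory stand-in for an evaluation log
Step = Callable[[list[Log]], list[Log]]


def keep_status(status: str) -> Step:
    """Build a step that keeps only logs with the given status."""
    return lambda logs: [log for log in logs if log["status"] == status]


def add_tag(tag: str) -> Step:
    """Build a step that returns copies of the logs with `tag` added."""
    return lambda logs: [{**log, "tags": sorted({*log["tags"], tag})} for log in logs]


def copy_to(directory: str) -> Step:
    """Build a step that returns copies of the logs placed in `directory`."""
    return lambda logs: [{**log, "dir": directory} for log in logs]


def pipeline(*steps: Step) -> Step:
    """Compose steps left to right into a single step."""
    return lambda logs: reduce(lambda acc, step: step(acc), steps, logs)


logs = [
    {"task": "gpqa_diamond", "status": "success", "tags": [], "dir": "logs"},
    {"task": "mmlu_0_shot", "status": "error", "tags": [], "dir": "logs"},
]

# Filter, then tag, then copy: each step sees the previous step's output.
promote = pipeline(keep_status("success"), add_tag("reviewed"), copy_to("prod/logs"))
promoted = promote(logs)
print(promoted)
```

Because every step returns new records instead of mutating its input, the original list stays unchanged and the steps can be reordered or reused freely.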

Learning More

See the following articles to learn more about using Flow:

- Spec: Flow type system, config structure and basics.

- Defaults: Define defaults once and reuse them everywhere with automatic inheritance.

- Matrixing: Systematic parameter exploration with matrix and with functions.

- Steps: Post-evaluation workflows — tag, validate, and promote logs with composable steps.

- Reference: Detailed documentation on the Flow Python API and CLI commands.

Development

To work on the development of Inspect Flow, clone the repository, install it with uv sync, and activate the resulting virtual environment:

git clone https://github.com/meridianlabs-ai/inspect_flow

cd inspect_flow

uv sync

source .venv/bin/activate

Optionally install pre-commit hooks via:

make hooks

Run linting, formatting, and tests via:

make check

make test

