MLflow integration for Inspect AI: experiment tracking, execution tracing, and Scout analysis


inspect-mlflow

MLflow integration for Inspect AI. Provides experiment tracking, execution tracing, and artifact logging for Inspect AI evaluations.

Install

pip install inspect-mlflow

Quick Start

Hooks auto-register via entry points when the package is installed. No code changes needed.

    # Start MLflow server
    mlflow server --port 5000

    # Set env vars
    export MLFLOW_TRACKING_URI="http://localhost:5000"
    export MLFLOW_INSPECT_TRACING="true"

    # Run evals as usual. Both hooks activate automatically.
    inspect eval my_task.py --model openai/gpt-4o

Then open http://localhost:5000 to see runs and traces.

What it does

This package provides two hooks that run automatically during Inspect AI evaluations.

Tracking Hook

Activated when MLFLOW_TRACKING_URI is set. Creates hierarchical MLflow runs with full evaluation telemetry.

What gets logged:

- Parent run per eval invocation with nested child runs per task

- Task configuration as parameters (model, dataset, solver, temperature, top_p, max_tokens)

- Per-sample scores as step metrics (accuracy, timing per sample)

- Aggregate metrics (total_samples, completed_samples, match/accuracy, match/stderr)

- Model token usage (input/output/total tokens per model)

- Real-time event counting (total_model_calls, total_tool_calls)

- Eval artifacts: per-sample results JSON + full eval log JSON

- Trace assessments: eval scores logged as MLflow assessments via mlflow.log_feedback(), visible in the Traces UI assessment column
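The parent/child run layout and per-sample step metrics described above can be pictured with plain MLflow calls. This is a rough sketch under stated assumptions, not the hook's actual implementation: `step_metrics` and `log_eval` are hypothetical helpers, and the run names and metric keys are illustrative.

```python
def step_metrics(scores: list[float]) -> list[tuple[int, float]]:
    """Pair each per-sample score with a step index (sample order)."""
    return list(enumerate(scores))


def log_eval(task_name: str, params: dict[str, object], scores: list[float]) -> None:
    """Log one task as a nested child run under a parent run.

    Hypothetical illustration only; requires MLflow and a configured
    tracking URI (for example via MLFLOW_TRACKING_URI).
    """
    import mlflow  # imported lazily so step_metrics works without MLflow

    with mlflow.start_run(run_name="eval"):  # parent run per eval invocation
        with mlflow.start_run(run_name=task_name, nested=True):  # child run per task
            mlflow.log_params(params)
            for step, value in step_metrics(scores):
                mlflow.log_metric("accuracy", value, step=step)
            mlflow.log_metric("total_samples", len(scores))
```

Calling `log_eval("task", {"model": "openai/gpt-4o-mini"}, [1.0, 1.0, 0.0])` against a running server would create one parent run with one nested child run.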

Task run showing 17 metrics and parameters from a tool-using eval:

Traces table with assessment column showing eval scores (match: AVG 1.0):

Tracing Hook

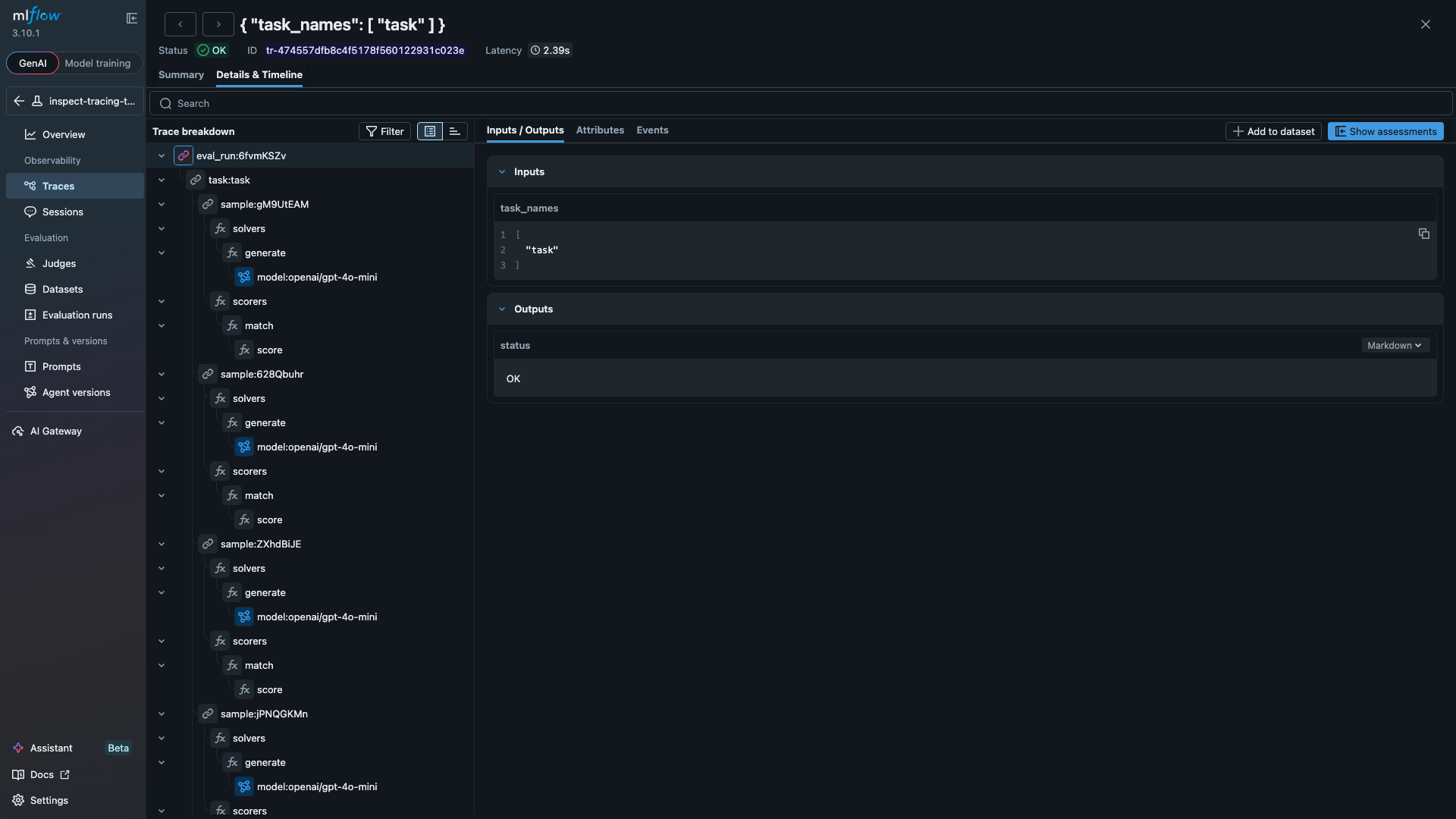

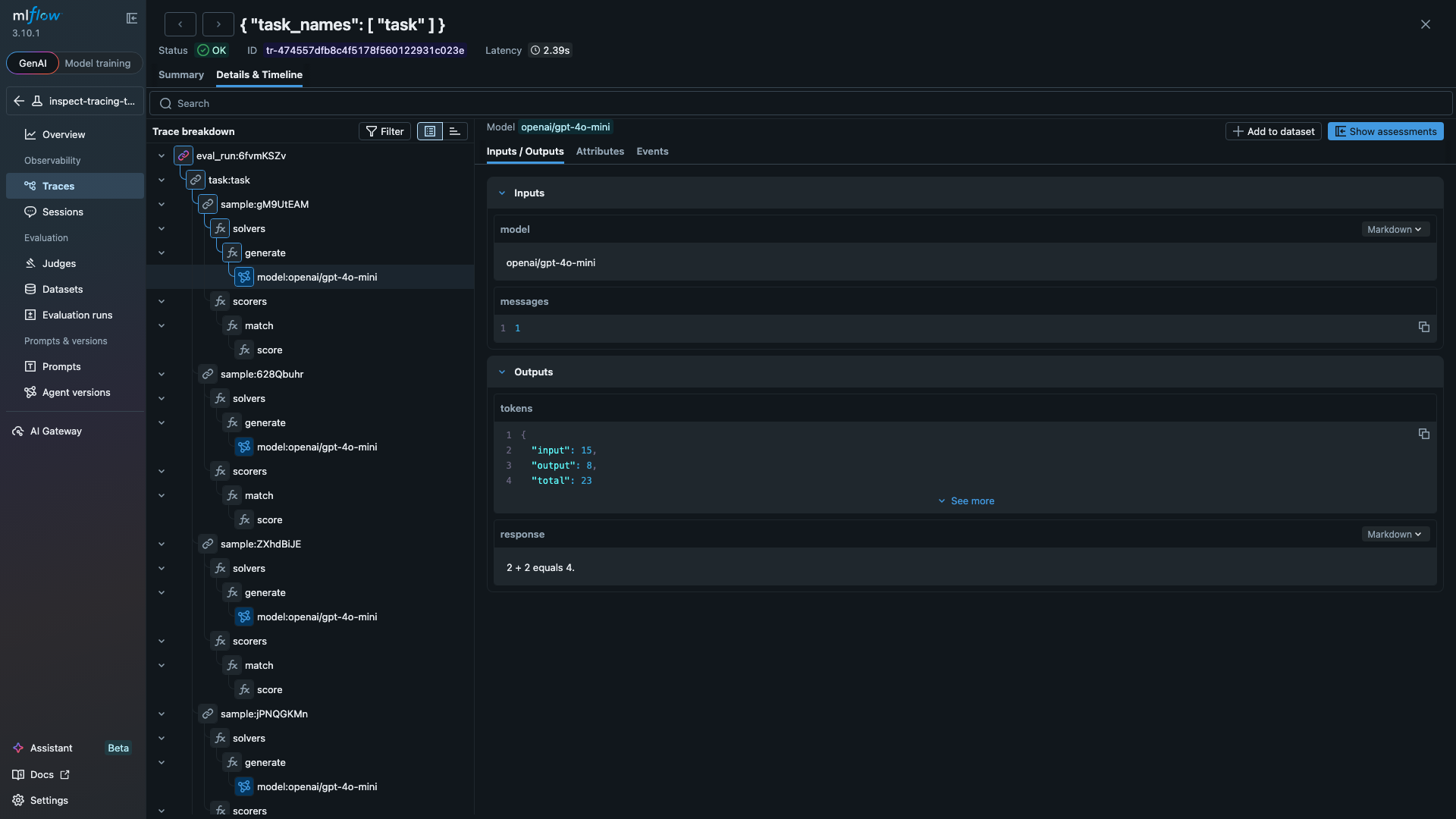

Activated when MLFLOW_INSPECT_TRACING=true is also set. Maps eval execution to MLflow trace spans, giving you a visual debugging view of every model call, tool invocation, and scoring step.

Span hierarchy:

    eval_run:98h4b4KN (CHAIN)
      task:task (CHAIN)
        sample:keAdeL1U (CHAIN)
          solvers (from SpanBeginEvent)
            use_tools (solver span)
              model:openai/gpt-4o-mini (LLM) - 5,167 tokens
              tool:calculator (TOOL) - args: {"expression": "47 * 89"}, result: "4183"
              model:openai/gpt-4o-mini (LLM) - 5,263 tokens
            generate (solver span)
              model:openai/gpt-4o-mini (LLM) - 182 tokens
          scorers (from SpanBeginEvent)
            match (scorer span)
              score (EVALUATOR) - value: C
        sample:HWl2wp2B (CHAIN)
          ...

Each span type captures different data:

| Span Type | Data Captured |

|---|---|

| CHAIN | eval run, task, and sample lifecycle with scores and timing |

| LLM | model name, input/output token counts, temperature, cache status, response text |

| TOOL | function name, arguments, result, working time, errors |

| EVALUATOR | score value, explanation, target |

Traces list showing 3 eval runs (simple math + tool-using calculator eval):

Full span tree showing solver/scorer hierarchy with tool calls:

LLM span detail with model name, token counts, and response:

Configuration

| Env var | Required | Default | Description |
|---|---|---|---|
| MLFLOW_TRACKING_URI | Yes | - | MLflow server URL |
| MLFLOW_EXPERIMENT_NAME | No | inspect_ai | Experiment name |
| MLFLOW_INSPECT_TRACING | No | false | Enable execution tracing |
| MLFLOW_INSPECT_LOG_ARTIFACTS | No | true | Log eval artifacts (sample results + eval log JSON) |
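The defaults in the table can be read as the following resolution logic. This is a sketch of one way such settings could be resolved, not the package's own code: `Settings`, `load_settings`, and the set of strings treated as true are assumptions made for illustration.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


def _flag(value: Optional[str], default: bool) -> bool:
    """Parse a boolean env var; the accepted spellings are an assumption."""
    if value is None:
        return default
    return value.strip().lower() in {"1", "true", "yes", "on"}


@dataclass(frozen=True)
class Settings:
    tracking_uri: Optional[str]  # required for the tracking hook to activate
    experiment_name: str
    tracing: bool
    log_artifacts: bool


def load_settings(env: Optional[Mapping[str, str]] = None) -> Settings:
    """Resolve the four documented variables, applying the documented defaults."""
    env = os.environ if env is None else env
    return Settings(
        tracking_uri=env.get("MLFLOW_TRACKING_URI"),
        experiment_name=env.get("MLFLOW_EXPERIMENT_NAME", "inspect_ai"),
        tracing=_flag(env.get("MLFLOW_INSPECT_TRACING"), default=False),
        log_artifacts=_flag(env.get("MLFLOW_INSPECT_LOG_ARTIFACTS"), default=True),
    )
```

Passing a plain dict instead of the process environment makes the defaults easy to inspect, for example `load_settings({"MLFLOW_TRACKING_URI": "http://localhost:5000"})`.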

Examples

Basic eval (tracking + tracing)

    from inspect_ai import Task, eval
    from inspect_ai.dataset import Sample
    from inspect_ai.scorer import match
    from inspect_ai.solver import generate

    # No special imports needed. Hooks auto-register on install.
    task = Task(
        dataset=[
            Sample(input="What is 2 + 2?", target="4"),
            Sample(input="What is 3 * 5?", target="15"),
            Sample(input="What is 10 - 7?", target="3"),
        ],
        solver=generate(),
        scorer=match(),
    )

    logs = eval(task, model="openai/gpt-4o-mini")
    # MLflow now has: runs with metrics + traces with span tree

Eval with tool calls

    import builtins

    from inspect_ai import Task, eval
    from inspect_ai.dataset import Sample
    from inspect_ai.scorer import match
    from inspect_ai.solver import generate, use_tools
    from inspect_ai.tool import tool

    @tool
    def calculator():
        """Perform arithmetic calculations."""

        async def run(expression: str) -> str:
            """Evaluate a math expression.

            Args:
                expression: A math expression to evaluate, e.g. "47 * 89"
            """
            # inspect_ai.eval shadows the builtin eval in this module,
            # so call the builtin explicitly. Emptying __builtins__ limits
            # what the expression can reach but is not a full sandbox.
            allowed = {"__builtins__": {}}
            return str(builtins.eval(expression, allowed))

        return run

    task = Task(
        dataset=[
            Sample(
                input="Use the calculator to compute 47 * 89.",
                target="4183",
            ),
            Sample(
                input="Use the calculator to compute 1024 / 16.",
                target="64",
            ),
        ],
        solver=[use_tools([calculator()]), generate()],
        scorer=match(),
    )

    logs = eval(task, model="openai/gpt-4o-mini")
    # Traces now include TOOL spans for each calculator() call
    # with function name, arguments, and result
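The calculator tool above evaluates its input with Python's eval, which is fine for a demo but risky with model-generated input. One alternative, swapped in here and not taken from the package, is to walk the parsed expression and allow only arithmetic nodes. The function name `safe_arithmetic` is hypothetical.

```python
import ast
import operator

# Only these operators are permitted; anything else raises ValueError.
_BINARY = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}
_UNARY = {ast.UAdd: operator.pos, ast.USub: operator.neg}


def safe_arithmetic(expression: str) -> float:
    """Evaluate +, -, *, / over numeric literals without calling eval()."""

    def walk(node: ast.AST) -> float:
        if isinstance(node, ast.Expression):
            return walk(node.body)
        if (
            isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)
        ):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BINARY:
            return _BINARY[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY:
            return _UNARY[type(node.op)](walk(node.operand))
        raise ValueError(f"unsupported expression: {expression!r}")

    return walk(ast.parse(expression, mode="eval"))
```

Inside the tool's run function, `str(safe_arithmetic(expression))` could replace the eval call. Names, function calls, and attribute access are rejected with a ValueError.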

Development

    git clone https://github.com/debu-sinha/inspect-mlflow.git
    cd inspect-mlflow
    uv sync --group dev
    uv run pre-commit install
    uv run pytest tests/ -v

See CONTRIBUTING.md for integration testing and PR guidelines.

Related

- Inspect AI - AI evaluation framework by UK AI Security Institute

- MLflow - ML experiment tracking and model management

- Inspect AI hooks docs - How hooks work

- Issue #3547 - Original proposal
