Extending InterpretML

As its GitHub page says:

"InterpretML is an open-source package that incorporates state-of-the-art machine learning interpretability techniques under one roof. With this package, you can train interpretable glassbox models and explain blackbox systems. InterpretML helps you understand your model's global behavior, or understand the reasons behind individual predictions."

Installation

The easiest way to install this extension of InterpretML is by following these steps:

- Create a new conda environment:

conda create --name {env_name} python=3.9

- Activate the environment:

conda activate {env_name}

- Navigate to the root directory and install:

pip install ./interpret/python/interpret-core

Using conda is optional, but it is recommended because an isolated environment avoids potential dependency conflicts.
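After the install step, a quick sanity check can confirm that the package is visible to the active environment. The sketch below uses only the standard library. The module name `interpret` is an assumption based on the `interpret-core` install path above; this README does not confirm it.

```python
import importlib.util


def is_installed(module_name: str) -> bool:
    """Return True if a top-level module can be located in the active environment."""
    return importlib.util.find_spec(module_name) is not None


# "interpret" is an assumed module name (taken from the install path, not confirmed here).
status = "found" if is_installed("interpret") else "not found"
print(f"interpret: {status}")
```

If the check reports "not found", the most likely cause is that the conda environment created above is not the one currently activated.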

Objective of this Extension

Currently, InterpretML implements several interpretable models, such as Explainable Boosting Machines (EBMs), decision trees, and linear models, along with various explanation techniques for blackbox models.

This extension aims to expand InterpretML by integrating probabilistic models while leveraging the existing explanation mechanisms provided by the library. By doing so, we enable users to analyze uncertainty, quantify probabilistic predictions, and gain deeper insights into model behavior beyond point estimates.

How does InterpretML visualize glassbox models?

InterpretML explains this type of model in two main ways:

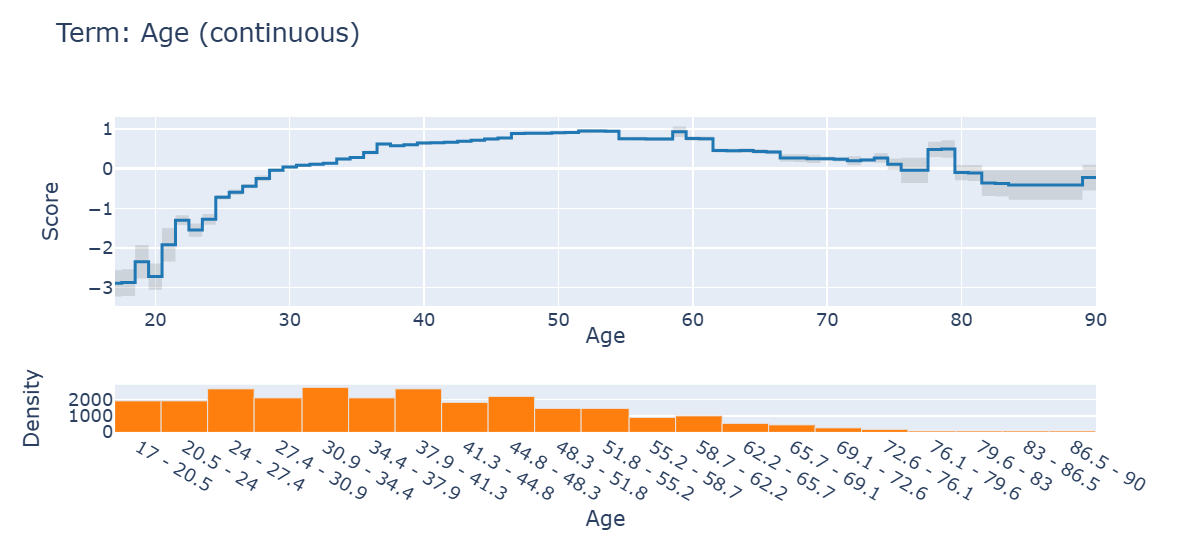

- Global Explanations: These explanations provide insights into how a model makes predictions across the entire dataset.

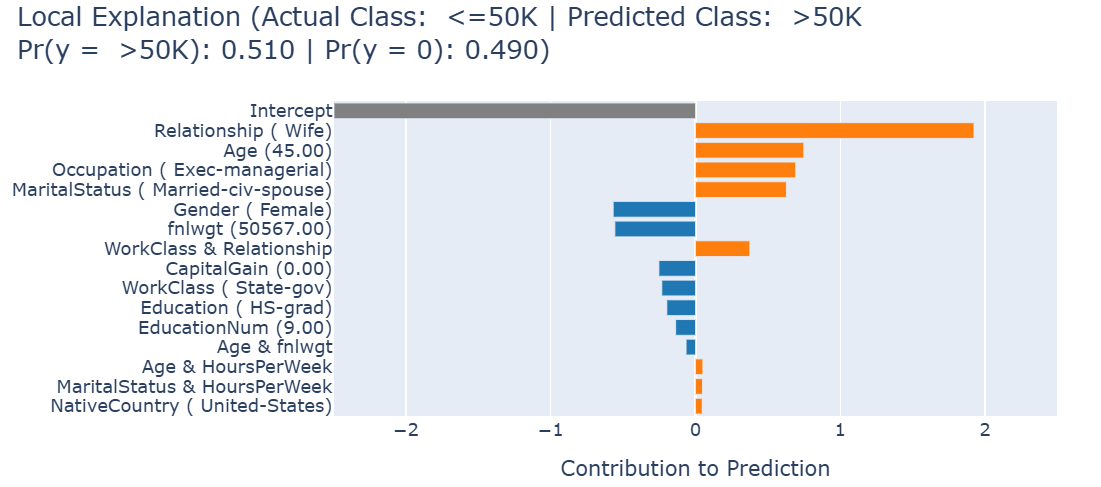

- Local Explanations: These explanations focus on individual predictions, showing why the model made a specific decision for a given instance.

The main goal is to provide both global and local visualizations for models that InterpretML does not currently implement.
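To make the difference between the two explanation types concrete, here is a minimal sketch for a hypothetical linear glassbox model. It does not use InterpretML's API. The weights, feature names, and data are invented for illustration, and averaging absolute contributions is only one common way to summarize global importance.

```python
from statistics import mean

# Hypothetical linear glassbox model: score = intercept + sum(weight * value).
weights = {"age": 0.5, "income": -1.0}
intercept = 0.25


def local_explanation(row):
    """Per-feature contribution to the score of a single instance."""
    return {name: weights[name] * row[name] for name in weights}


def global_explanation(rows):
    """Mean absolute contribution of each feature across the whole dataset."""
    return {
        name: mean(abs(local_explanation(row)[name]) for row in rows)
        for name in weights
    }


rows = [
    {"age": 2.0, "income": 1.0},
    {"age": 4.0, "income": 3.0},
]

local = local_explanation(rows[0])
score = intercept + sum(local.values())
overall = global_explanation(rows)

print(local)    # {'age': 1.0, 'income': -1.0}
print(score)    # 0.25
print(overall)  # {'age': 1.5, 'income': 2.0}
```

The local explanation answers "why this score for this row", while the global one aggregates the same per-feature contributions over every row.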

Adding new models

The new models incorporated in this extension are:

- Naive Bayes (Gaussian and Categorical): A simple yet powerful probabilistic model based on Bayes' theorem. It assumes feature independence, making it highly efficient for classification tasks.

- Linear Discriminant Model: A classification model that finds the linear combination of features that best separates two or more classes. It is particularly useful for dimensionality reduction while preserving class separability.

- TAN (Tree Augmented Naive Bayes): An extension of Naive Bayes that allows for dependencies between features using a tree structure, improving its expressiveness while maintaining efficiency.

- Bayesian Network: A probabilistic graphical model that represents variables and their conditional dependencies via a directed acyclic graph (DAG). It is useful for modeling complex probabilistic relationships.

- NAM (Neural Additive Model): A neural network-based approach that extends Generalized Additive Models (GAMs) by learning feature contributions in a flexible and interpretable manner.
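The Naive Bayes, TAN, and Bayesian Network entries above share one idea: the joint distribution factorizes into one conditional probability per node given its parents in a DAG. In Naive Bayes the class is the only parent of every feature, in TAN each feature may also have one other feature as a parent, and a general Bayesian network allows any acyclic parent structure. The sketch below illustrates that factorization with a hand-written three-node network. The structure and probabilities are made up, and this is not the extension's implementation.

```python
from itertools import product

# Each node maps to (parents, CPT). The CPT is keyed by the tuple of parent
# values and gives a distribution over the node's own values.
# All numbers are illustrative, not learned from data.
network = {
    "rain": ((), {(): {True: 0.2, False: 0.8}}),
    "sprinkler": (("rain",), {
        (True,): {True: 0.01, False: 0.99},
        (False,): {True: 0.4, False: 0.6},
    }),
    "wet": (("rain", "sprinkler"), {
        (True, True): {True: 0.99, False: 0.01},
        (True, False): {True: 0.8, False: 0.2},
        (False, True): {True: 0.9, False: 0.1},
        (False, False): {True: 0.0, False: 1.0},
    }),
}


def joint_probability(network, assignment):
    """Multiply P(node | parents) over all nodes for one full assignment."""
    prob = 1.0
    for node, (parents, cpt) in network.items():
        parent_values = tuple(assignment[p] for p in parents)
        prob *= cpt[parent_values][assignment[node]]
    return prob


p = joint_probability(network, {"rain": False, "sprinkler": True, "wet": True})

# Sanity check: the joint probabilities of all assignments should sum to 1.
total = sum(
    joint_probability(network, dict(zip(network, values)))
    for values in product([True, False], repeat=len(network))
)
print(p, total)
```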

These models will be integrated with InterpretML's existing explanation mechanisms, ensuring users can interpret their predictions using global and local explanations.
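One reason a model such as Gaussian Naive Bayes suits this kind of explanation is that its log-odds decompose into a sum of per-feature terms, which can be displayed as a local explanation. The following is a minimal sketch of that decomposition for two classes. The fitted parameters and feature names are hypothetical, and the code is independent of how the extension actually computes its explanations.

```python
import math


def gaussian_log_pdf(x, mean, var):
    """Log density of a univariate normal distribution."""
    return -0.5 * (math.log(2 * math.pi * var) + (x - mean) ** 2 / var)


# Hypothetical fitted parameters: class -> feature -> (mean, variance).
params = {
    0: {"height": (160.0, 25.0), "weight": (55.0, 16.0)},
    1: {"height": (175.0, 25.0), "weight": (70.0, 16.0)},
}
log_prior = {0: math.log(0.5), 1: math.log(0.5)}


def local_contributions(x):
    """Log-odds contribution (class 1 vs. class 0) of the prior and each feature."""
    contribs = {"prior": log_prior[1] - log_prior[0]}
    for name, value in x.items():
        contribs[name] = (
            gaussian_log_pdf(value, *params[1][name])
            - gaussian_log_pdf(value, *params[0][name])
        )
    return contribs


def predict_proba_class1(x):
    """Posterior P(class 1 | x): the sigmoid of the summed contributions."""
    log_odds = sum(local_contributions(x).values())
    return 1.0 / (1.0 + math.exp(-log_odds))


sample = {"height": 175.0, "weight": 70.0}
print(local_contributions(sample))
print(predict_proba_class1(sample))
```

Positive terms push the prediction toward class 1 and negative terms toward class 0, so each feature's bar in a local explanation plot can be read directly from `local_contributions`.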

Download files


File details

Details for the file interpret_extension-0.1.2.tar.gz.

File metadata

- Download URL: interpret_extension-0.1.2.tar.gz

- Upload date:

- Size: 240.7 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.12.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 91fed5d032a22a503313bd370e19cfe60eaf822fa6ca38fe5af803b6e3047f15 |
| MD5 | 85b85b41fc1a170b99cb22dd8089fd77 |
| BLAKE2b-256 | 7a5d0cb3dfe1ef17f7717f9dd4457c2b3e8fd6c2cdaf6c63dd418945a4cea08c |

File details

Details for the file interpret_extension-0.1.2-py3-none-any.whl.

File metadata

- Download URL: interpret_extension-0.1.2-py3-none-any.whl

- Upload date:

- Size: 305.3 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.12.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b66ffb79f936715253be0a507f7d0e110a60e16fc6f9ac382d3037b8c51085ba |
| MD5 | 3a3a42e6edc3d09f40d947e34f993ba9 |
| BLAKE2b-256 | e481ebcb4f14e539c14d2a0f3ded7100fba3a5ce3764180813ec2902400bcd7b |