This package is designed to scrape Bible data from JW.org for NLP and generative AI tasks.

Project description

JW SOUP

jwsoup is a simple Python package that scrapes Bible data from the JW.org website. The package provides functionality for scraping Bible verses and saving them in a structured format. It supports scraping data from one or multiple pages, handling paginated content, and storing the results in a Parquet file.

Features

- Scrape Bible verses from individual or multiple pages.

- Clean the scraped verse text to remove unwanted characters.

- Store the scraped data in a Parquet file for further analysis.

- Simple interface with reusable functions.
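The cleaning step listed above is not described in detail here. As a rough illustration only, the sketch below shows what such cleaning might look like. The function name `clean_verse_text` and the choice of characters to strip (`*` and `+` markers, non-breaking spaces) are assumptions, not jwsoup's actual implementation.

```python
import re
import unicodedata


def clean_verse_text(raw: str) -> str:
    """Hypothetical helper: normalise one scraped verse string."""
    # NFKC normalisation turns non-breaking spaces (U+00A0) into plain spaces.
    text = unicodedata.normalize("NFKC", raw)
    # Assumption: '*' and '+' are footnote / cross-reference markers to drop.
    text = re.sub(r"[*+]", "", text)
    # Collapse runs of whitespace (including newlines) into a single space.
    text = re.sub(r"\s+", " ", text)
    return text.strip()
```

Which characters count as "unwanted" depends on the page markup, so the marker set would need adjusting after inspecting real output.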

Installation

To install jwsoup, you can use pip from PyPI:

pip install jwsoup

Alternatively, if you want to install it locally from the source, clone the repository and run the following commands:

git clone https://github.com/sawadogosalif/jwsoup.git

cd jwsoup

pip install .

Usage

Scrape text - Single Page

You can scrape a single page of Bible verses using the scrape_single_page function. This function returns a list of verses and the URL for the next page (if available).

from jwsoup.text import scrape_single_page

url = "https://www.jw.org/fr/biblioth%C3%A8que/bible/bible-d-etude/livres/Gen%C3%A8se/1/"

verses, next_url = scrape_single_page(url)

# Print the scraped verses

for verse in verses:
    print(f"{verse[0]}: {verse[1]}")

# Print the next URL

print(f"Next page URL: {next_url}")

Scrape text - Multiple Pages

To scrape multiple pages starting from a given URL, use the scrape_multi_page function. This function will follow pagination and save the scraped data in a Parquet file.

from jwsoup.text import scrape_multi_page

start_url = "https://www.jw.org/mos/d-s%E1%BA%BDn-yiisi/biible/nwt/books/S%C9%A9ngre/1/"

output_dir = "bible_data_moore.parquet"

res = scrape_multi_page(start_url, output_dir=output_dir, max_pages=5, page_sep="books")
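To make "following pagination" concrete, here is a minimal sketch of a loop that repeatedly calls a single-page scraper and moves to the next URL. It assumes only what is stated above: the scraper returns a list of verses and the next page's URL (or nothing when there is no next page). The name `follow_pages` and its return shape are hypothetical; this is not how `scrape_multi_page` is necessarily implemented.

```python
from typing import Callable, List, Optional, Set, Tuple

Verse = Tuple[str, str]  # (verse number, verse text)
ScrapeFn = Callable[[str], Tuple[List[Verse], Optional[str]]]


def follow_pages(scrape: ScrapeFn, start_url: str, max_pages: int) -> List[Tuple[str, Verse]]:
    """Hypothetical helper: collect verses page by page, up to max_pages."""
    collected: List[Tuple[str, Verse]] = []
    visited: Set[str] = set()
    url: Optional[str] = start_url
    # Stop when there is no next page, the page limit is reached,
    # or a URL repeats (guards against pagination loops).
    while url and url not in visited and len(visited) < max_pages:
        visited.add(url)
        verses, next_url = scrape(url)
        collected.extend((url, verse) for verse in verses)
        url = next_url
    return collected
```

With the real package, a call such as `follow_pages(scrape_single_page, start_url, max_pages=5)` would be the equivalent usage, since any callable with the same return shape can be passed in.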

Save Data to Parquet

The scraped data is stored in a Parquet file for efficient storage and querying. You can specify the output file and partition the data by page.

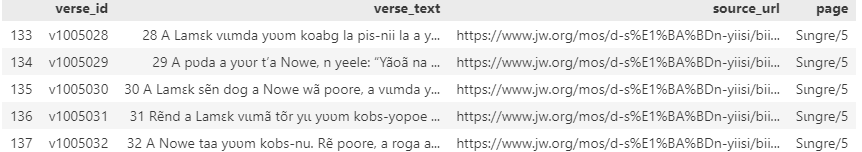

import pandas as pd

pd.read_parquet(output_dir).head()
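As an illustration of partitioning by page, the sketch below flattens scraped verses into one record per verse, tagged with the page it came from. The function name `verses_to_records` and the column names `page`, `verse`, and `text` are assumptions made for this example; the columns jwsoup actually writes may differ.

```python
from typing import Dict, Iterable, List, Tuple


def verses_to_records(page: str, verses: Iterable[Tuple[str, str]]) -> List[Dict[str, str]]:
    """Hypothetical helper: one flat record per verse, labelled with its page."""
    return [
        {"page": page, "verse": number, "text": text}
        for number, text in verses
    ]


# Example with made-up values, only to show the record shape.
records = verses_to_records("Genesis/1", [("1", "first verse"), ("2", "second verse")])
```

Records in this shape could be written with `pandas.DataFrame(records).to_parquet(path, partition_cols=["page"])`, in which case `path` is treated as a directory holding one sub-folder per page value.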

Download audio files

# Assumes download_audios has already been imported from jwsoup.

start_url = "https://www.jw.org/mos/d-s%E1%BA%BDn-yiisi/biible/nwt/books/yikri"

output_dir = "audio_files"

download_audios(start_url, output_dir, max_pages=3)
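The changelog for 0.1.1 mentions naming folders with `urllib.parse.quote`. The sketch below shows one way a page URL could be turned into a filesystem-safe folder name using that function. The helper name `folder_name_for` and the choice of joining the last two path segments are assumptions for illustration, not the package's documented behaviour.

```python
from urllib.parse import quote, unquote, urlsplit


def folder_name_for(url: str) -> str:
    """Hypothetical helper: build a safe folder name from a page URL."""
    path = urlsplit(url).path
    # Decode each non-empty path segment so it is not percent-encoded twice.
    segments = [unquote(segment) for segment in path.split("/") if segment]
    # Assumption: the last two segments (e.g. book and chapter) identify the page.
    label = "_".join(segments[-2:])
    # safe="" also encodes "/" so the result is always a single path component.
    return quote(label, safe="")
```

Percent-encoding keeps non-ASCII book names such as those in the Mooré URLs above reversible with `urllib.parse.unquote`, while avoiding characters that some filesystems reject.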

License

This project is licensed under the MIT License - see the LICENSE file for details.

Author

- Salif Sawadogopro

Email: salif.sawadogopro@gmail.com

Acknowledgments

- Thanks to the requests, beautifulsoup4, pandas, loguru, and pyarrow libraries for making scraping and data handling easier.

- Thanks to JW for providing an accessible and rich resource of Bible texts in multiple languages.

Changelog

[0.0.1] - 2024-11-23

Added

- Initial release of jw_soup.

- Supports scraping of text-based Bible verses from JW.org.

- Extracts individual verses and saves them to Parquet files using pyarrow.

- Includes basic error handling and logging with loguru.

Known Limitations

- Only supports scraping textual data.

- Does not handle multimedia content (audio/video).

- Limited testing for edge cases (e.g., malformed HTML or network interruptions).

[0.0.2] - 2024-11-23

Added

- Typo correction in package description

[0.0.5] - 2024-11-24

Added

- Add project URL in setup

- Fix image rendering on PyPI

- Improve next button parsing

[0.1.0] - 2025-01-10

Added

- Introduce audio downloaders

- Improve next button parsing

[0.1.1] - 2025-01-11

Added

- Name folders correctly using urllib.parse.quote


File details

Details for the file jwsoup-0.1.1.tar.gz.

File metadata

- Download URL: jwsoup-0.1.1.tar.gz

- Upload date:

- Size: 9.9 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.0.1 CPython/3.12.8

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | fa60d05160279cad8740cfb8c3040373d5970b0451bbc058d697f83cb9d76fc7 |
| MD5 | 54a2f7d51acf49a3ecd9e342a832fa2e |
| BLAKE2b-256 | a765b3bda6e5b96b29538a59e89da00147d8b765e1695e3c7c9bedcba7986f6b |

File details

Details for the file jwsoup-0.1.1-py3-none-any.whl.

File metadata

- Download URL: jwsoup-0.1.1-py3-none-any.whl

- Upload date:

- Size: 8.4 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.0.1 CPython/3.12.8

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 64b7f97839a12512d8e2853de40ca89a1854c2d1f1cd237231c2161deb497911 |
| MD5 | bb8e29c04f9a609f594344b25f87cf90 |
| BLAKE2b-256 | 888d621df90decfb0e3052cd0da7df9ee8ab44085327796b05d435a28917251f |