An MDN Layer for Keras


Keras Mixture Density Network Layer

A mixture density network (MDN) layer for Keras using pure Keras 3 operations. This makes it a bit simpler to experiment with neural networks that predict multiple real-valued variables that can take on multiple equally likely values.

This layer can help build MDN-RNNs similar to those used in RoboJam, Sketch-RNN, handwriting generation, and maybe even world models. You can do a lot of cool stuff with MDNs!

One benefit of this implementation is that you can predict any number of real values. The loss function implements the negative log-likelihood of a mixture of multivariate normal distributions with a diagonal covariance matrix, derived directly from the standard multivariate Gaussian (Bishop PRML Eq. 2.43). In previous work, the loss function has often been specified by hand, which is fine for 1D or 2D prediction but becomes tedious beyond that.
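Written out, the per-example loss for $K$ mixture components over $D$ output dimensions looks like this. The notation is our own ($\mu_{k,d}$ and $\sigma_{k,d}$ for component means and standard deviations, $a_k$ for the unnormalised mixture logits); the library may organise these quantities differently internally. With a diagonal covariance, the determinant in Bishop's Eq. 2.43 becomes $\prod_d \sigma_{k,d}^2$, so each component's density factorises over dimensions:

```math
\mathcal{L}(\mathbf{y}) = -\log \sum_{k=1}^{K} \pi_k \prod_{d=1}^{D} \mathcal{N}\!\left(y_d \mid \mu_{k,d}, \sigma_{k,d}^2\right),
\qquad \pi_k = \frac{\exp(a_k)}{\sum_{j=1}^{K} \exp(a_j)}

\log \mathcal{N}\!\left(y_d \mid \mu_{k,d}, \sigma_{k,d}^2\right) = -\tfrac{1}{2}\log(2\pi) - \log \sigma_{k,d} - \frac{(y_d - \mu_{k,d})^2}{2\sigma_{k,d}^2}
```

Evaluating the outer sum in log space, as $-\operatorname{logsumexp}_k\big(\log \pi_k + \sum_d \log \mathcal{N}(y_d \mid \mu_{k,d}, \sigma_{k,d}^2)\big)$, avoids underflow when every component assigns a tiny density to $\mathbf{y}$.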

Two important functions are provided for training and prediction:

- get_mixture_loss_func(output_dim, num_mixtures): This function generates a loss function with the correct output dimensions and number of mixtures.

- sample_from_output(params, output_dim, num_mixtures, temp=1.0, sigma_temp=1.0): This function samples from the mixture distribution output by the model.
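To make the roles of `temp` and `sigma_temp` more concrete, here is a minimal pure-Python sketch of sampling from a mixture of diagonal Gaussians. It is not the library's `sample_from_output`. The argument layout (per-component lists instead of one flat `params` vector) is our own, and so is the assumption that `sigma_temp` multiplies each component's variance (so the standard deviation is scaled by its square root). In this sketch `temp` divides the mixture logits before the softmax, so values below 1 favour the most likely component.

```python
import math
import random


def softmax(logits, temp=1.0):
    """Temperature-scaled softmax (temp must be > 0); lower temp sharpens the weights."""
    scaled = [z / temp for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_mixture(mus, sigmas, pi_logits, temp=1.0, sigma_temp=1.0, rng=random):
    """Draw one sample from a mixture of diagonal Gaussians (illustrative sketch).

    mus, sigmas: K lists of D floats (component means and standard deviations).
    pi_logits:   K unnormalised mixture weights.
    Assumption: sigma_temp scales the variance, i.e. std is multiplied by sqrt(sigma_temp).
    """
    weights = softmax(pi_logits, temp)
    # Pick one component according to the (temperature-adjusted) mixture weights.
    k = rng.choices(range(len(weights)), weights=weights, k=1)[0]
    scale = math.sqrt(sigma_temp)
    # Sample each dimension independently, since the covariance is diagonal.
    return [rng.gauss(mu, sd * scale) for mu, sd in zip(mus[k], sigmas[k])]
```

Setting `sigma_temp=0.0` in this sketch returns the chosen component's mean unchanged, which makes a convenient deterministic check.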

Installation

This project requires Python 3.11+ and Keras 3 with a backend installed (TensorFlow, JAX, or PyTorch). You can install this package from PyPI via pip like so:

python3 -m pip install keras-mdn-layer

You will also need a Keras 3 backend. For example:

python3 -m pip install tensorflow # or jax/jaxlib, or torch

And finally, import the module in Python: import keras_mdn_layer as mdn

Alternatively, you can clone or download this repository and then install it with poetry install.

Tested Configurations

This library is tested on CI across the following matrix:

| Python | Platforms |
|---|---|
| 3.11 | Ubuntu, macOS, Windows |
| 3.12 | Ubuntu, macOS, Windows |
| 3.13 | Ubuntu, macOS, Windows |

CI uses TensorFlow as the Keras backend. Other Keras 3 backends (JAX, PyTorch) should also work but are not regularly tested.

Testing

The test suite (54 tests) covers:

- Layer mechanics: construction, serialization (get_config), output shapes, weight counts, model save/load

- Mathematical correctness: analytical NLL verification against single and multi-component Gaussians (Bishop PRML Eq. 2.43), softmax identities, batch loss averaging

- Sampling: statistical tests for mean/std recovery, bimodal mixture separation, shape and finiteness checks

- Training convergence: loss reduction, parameter recovery for known distributions (N(0,1), N(5, 0.25))

- Numerical stability: extreme sigma values, extreme logits, large distances from mean

- End-to-end pipelines: predict/split/sample roundtrip, LSTM-MDN (MDRNN) models in both training and inference configurations, TimeDistributed MDN
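The analytical NLL checks can be illustrated with two pytest-style tests in pure Python. `mixture_nll` is a hypothetical reference implementation written for this sketch, not the library's loss; in a real suite you would compare the value returned by the loss from `get_mixture_loss_func` against closed-form numbers like these.

```python
import math


def mixture_nll(y, mus, sigmas, pi_logits):
    """Hypothetical reference NLL of y under a mixture of diagonal Gaussians.

    mus, sigmas: K lists of D floats; pi_logits: K unnormalised mixture weights.
    Uses log-sum-exp twice so that tiny densities do not underflow.
    """
    # log pi_k = a_k - logsumexp(a)
    m = max(pi_logits)
    log_norm = m + math.log(sum(math.exp(a - m) for a in pi_logits))
    log_terms = []
    for a, mu_k, sd_k in zip(pi_logits, mus, sigmas):
        # Diagonal covariance: the log-density is a sum over dimensions.
        log_pdf = sum(
            -0.5 * math.log(2 * math.pi) - math.log(sd) - (yd - mu) ** 2 / (2 * sd**2)
            for yd, mu, sd in zip(y, mu_k, sd_k)
        )
        log_terms.append(a - log_norm + log_pdf)
    top = max(log_terms)
    return -(top + math.log(sum(math.exp(t - top) for t in log_terms)))


def test_single_component_matches_closed_form():
    # One component: the loss reduces to the univariate Gaussian NLL.
    y, mu, sd = [1.5], [0.5], [2.0]
    expected = 0.5 * math.log(2 * math.pi) + math.log(2.0) + 1.0**2 / (2 * 2.0**2)
    assert math.isclose(mixture_nll(y, [mu], [sd], [0.0]), expected)


def test_duplicate_components_do_not_change_loss():
    # Two identical, equally weighted components describe the same density.
    y, mu, sd = [0.3, -1.0], [0.0, 0.0], [1.0, 0.5]
    single = mixture_nll(y, [mu], [sd], [0.0])
    double = mixture_nll(y, [mu, mu], [sd, sd], [3.0, 3.0])
    assert math.isclose(single, double)
```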

Build

This project builds using poetry. To build a wheel, run poetry build.

Examples

Some examples are provided in the notebooks directory.

To run these with poetry, run poetry install and then start Jupyter with poetry run jupyter lab.

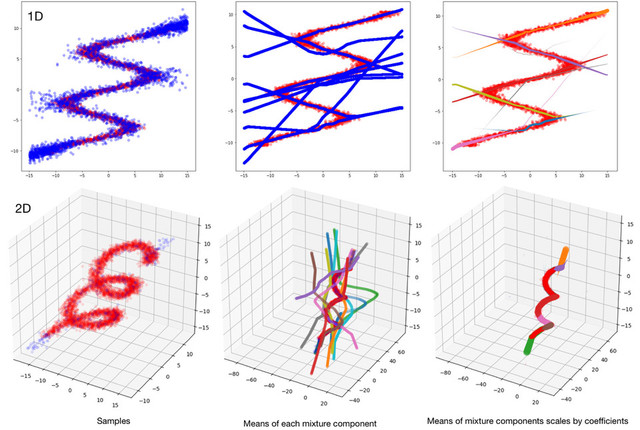

There are scripts for fitting multivalued functions, a standard MDN toy problem:

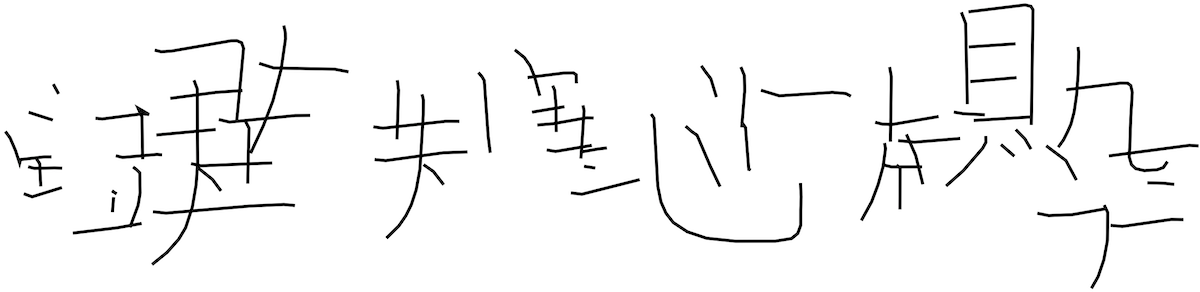

There's also a script for generating fake kanji characters:

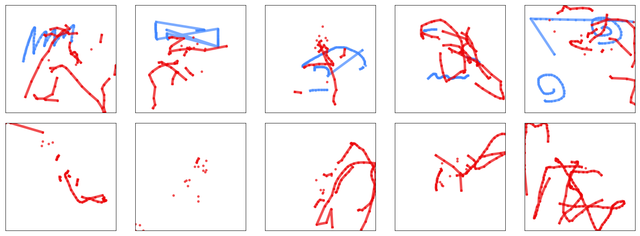

And finally, for learning how to generate musical touch-screen performances with a temporal component:

How to use

The MDN layer should be the last layer in your network, and you should use get_mixture_loss_func to generate its loss function. Here's an example of a simple network with one Dense layer followed by the MDN.

```python
import keras
import keras_mdn_layer as mdn

N_HIDDEN = 15  # number of hidden units in the Dense layer
N_MIXES = 10  # number of mixture components
OUTPUT_DIMS = 2  # number of real values predicted by each mixture component

model = keras.Sequential()
# Keras 3 declares the input shape with keras.Input rather than batch_input_shape.
model.add(keras.Input(shape=(1,)))
model.add(keras.layers.Dense(N_HIDDEN, activation='relu'))
model.add(mdn.MDN(OUTPUT_DIMS, N_MIXES))
model.compile(loss=mdn.get_mixture_loss_func(OUTPUT_DIMS, N_MIXES), optimizer=keras.optimizers.Adam())
model.summary()
```

Fit as normal:

```python
history = model.fit(x=x_train, y=y_train)
```

The predictions from the network are parameters of the mixture models, so you have to apply the sample_from_output function to generate samples.

```python
import numpy as np

y_test = model.predict(x_test)
# Draw one sample from each row of predicted mixture parameters.
y_samples = np.apply_along_axis(mdn.sample_from_output, 1, y_test, OUTPUT_DIMS, N_MIXES, temp=1.0)
```
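Because each prediction is a flat vector of mixture parameters, it can help to see how one row might break down. The sketch below is a hypothetical pure-Python helper, not part of the documented keras_mdn_layer API. It assumes the layer emits all component means, then all sigmas, then one logit per component, which gives 2 × OUTPUT_DIMS × N_MIXES + N_MIXES values per row (50 for the example above); check the layer's source before relying on this ordering.

```python
def mdn_output_width(output_dim, num_mixtures):
    # A mean and a sigma for every (component, dimension) pair, plus one logit per component.
    return 2 * output_dim * num_mixtures + num_mixtures


def split_params(params, output_dim, num_mixtures):
    """Split one flat parameter row into (mus, sigmas, pi_logits).

    Assumed layout (hypothetical): [all means | all sigmas | mixture logits],
    with means and sigmas stored component by component.
    """
    if len(params) != mdn_output_width(output_dim, num_mixtures):
        raise ValueError("unexpected parameter vector length")
    n = output_dim * num_mixtures
    flat_mus, flat_sigmas, pi_logits = params[:n], params[n:2 * n], params[2 * n:]
    # Regroup the flat means and sigmas into one list of output_dim values per component.
    mus = [flat_mus[k * output_dim:(k + 1) * output_dim] for k in range(num_mixtures)]
    sigmas = [flat_sigmas[k * output_dim:(k + 1) * output_dim] for k in range(num_mixtures)]
    return mus, sigmas, list(pi_logits)
```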

See the notebooks directory for examples in jupyter notebooks!

Load/Save Model

Saving models is straightforward:

```python
model.save('test_save.keras')
```

Loading, however, requires passing the MDN layer and a loss function with the appropriate parameters through custom_objects:

```python
m_2 = keras.models.load_model(
    'test_save.keras',
    custom_objects={
        'MDN': mdn.MDN,
        # Must use the same output_dim and num_mixtures the model was built with.
        'mdn_loss_func': mdn.get_mixture_loss_func(OUTPUT_DIMS, N_MIXES),
    },
)
```

TFLite / LiteRT Export

MDRNN models built with this layer can be exported to TFLite format for fast on-device inference using LiteRT (formerly TensorFlow Lite). An example script is provided in examples/tflite_mdrnn.py.

Because LSTMs require static shapes for TFLite conversion, wrap the model call in a tf.function with a fixed input signature before converting:

```python
import tensorflow as tf

# seq_length and dimension are the fixed sequence length and feature size of your MDRNN.
@tf.function(input_signature=[
    tf.TensorSpec(shape=[1, seq_length, dimension], dtype=tf.float32)
])
def serve(inputs):
    return model(inputs)

converter = tf.lite.TFLiteConverter.from_concrete_functions(
    [serve.get_concrete_function()]
)
tflite_model = converter.convert()
```

Then load and run inference with LiteRT (pip install ai-edge-litert):

```python
from ai_edge_litert.interpreter import Interpreter

interpreter = Interpreter(model_content=tflite_model)
interpreter.allocate_tensors()
input_details = interpreter.get_input_details()
output_details = interpreter.get_output_details()

# test_input: a float32 array of shape (1, seq_length, dimension)
interpreter.set_tensor(input_details[0]["index"], test_input)
interpreter.invoke()
output = interpreter.get_tensor(output_details[0]["index"])
```

In a benchmark of a 2-layer LSTM MDRNN (dim=8, 64 hidden units, 5 mixtures, seq_length=30), LiteRT inference was ~46x faster than model.predict() and ~180x faster than a direct model() call:

| Method | Mean latency |
|---|---|
| LiteRT | 0.4 ms |
| Keras predict | 19 ms |
| Keras __call__ | 75 ms |

The TFLite output matches the Keras output to within ~1e-6, so the conversion introduces no meaningful loss of accuracy. The full example with training, conversion, inference, and benchmarking is in examples/tflite_mdrnn.py.

Acknowledgements

- Hat tip to Omimo's Keras MDN layer for a starting point for this code.

- Super hat tip to hardmaru's MDN explanation, projects, and good ideas for sampling functions etc.

- Many good ideas from Axel Brando's master's thesis.

- Mixture Density Networks in Edward tutorial.

References

- Christopher M. Bishop. 1994. Mixture Density Networks. Technical Report NCRG/94/004. Neural Computing Research Group, Aston University. http://publications.aston.ac.uk/373/

- Axel Brando. 2017. Mixture Density Networks (MDN) for distribution and uncertainty estimation. Master's thesis. Universitat Politecnica de Catalunya.

- A. Graves. 2013. Generating Sequences With Recurrent Neural Networks. ArXiv e-prints (Aug. 2013). https://arxiv.org/abs/1308.0850

- David Ha and Douglas Eck. 2017. A Neural Representation of Sketch Drawings. ArXiv e-prints (April 2017). https://arxiv.org/abs/1704.03477

- Charles P. Martin and Jim Torresen. 2018. RoboJam: A Musical Mixture Density Network for Collaborative Touchscreen Interaction. In Evolutionary and Biologically Inspired Music, Sound, Art and Design: EvoMUSART '18, A. Liapis et al. (Ed.). Lecture Notes in Computer Science, Vol. 10783. Springer International Publishing. DOI:10.1007/978-3-319-77583-8_11


Download files


File details

Details for the file keras_mdn_layer-1.0.0.tar.gz.

File metadata

- Download URL: keras_mdn_layer-1.0.0.tar.gz

- Upload date:

- Size: 22.5 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: poetry/2.0.1 CPython/3.11.3 Darwin/25.3.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 1b1d37b3c5a28a8fd093a08c71fadf0e335bca137acf7b31d080ea0b122554bf |
| MD5 | a6e81e10f45c62cb5f6b57f51fdbd08a |
| BLAKE2b-256 | bc54fc6543309d06c452325a858df3ecdd69b88071d4bae2cce375b958294350 |

File details

Details for the file keras_mdn_layer-1.0.0-py3-none-any.whl.

File metadata

- Download URL: keras_mdn_layer-1.0.0-py3-none-any.whl

- Upload date:

- Size: 24.0 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: poetry/2.0.1 CPython/3.11.3 Darwin/25.3.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 09335a2c5f10a99af8e7f4e37f5a681514a48c40fa96c649bfb039558448ff02 |
| MD5 | 0cdea8cbf9b60b01bb8e220774ee85a4 |
| BLAKE2b-256 | 6a65916b5106ebf6c7dd58da40e5a9f587370fa75dd18a50f297ed51a727c27c |