Tenant-fair LLM inference orchestration on a single GPU. No Kubernetes.


kvwarden

Tenant-fair LLM inference on one GPU. Sits in front of vLLM/SGLang, rate-limits per tenant at admission, and keeps a quiet user fast while a noisy neighbor floods the same engine.

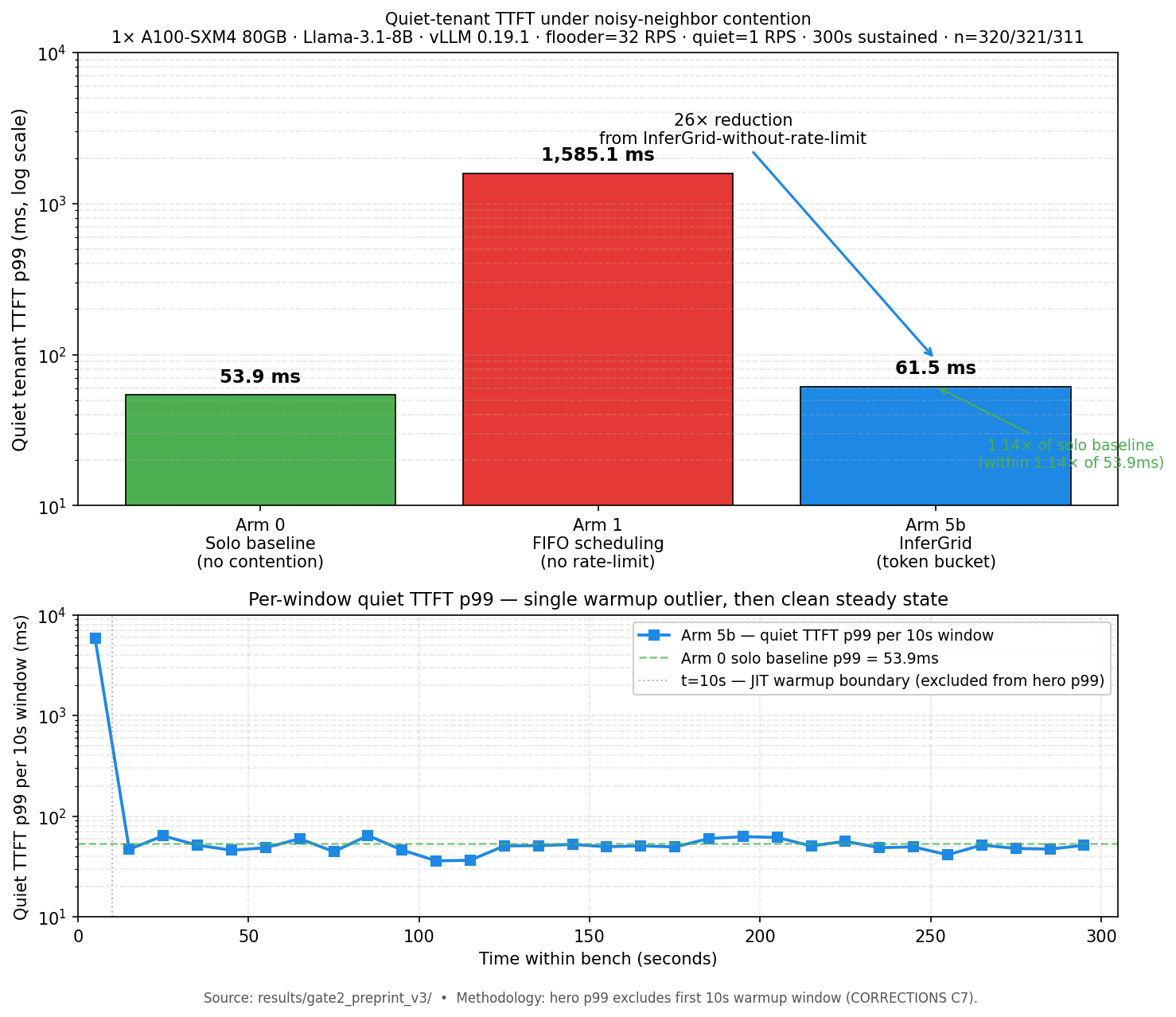

Hero number (A100-SXM4, Llama-3.1-8B, vLLM 0.19.1, two tenants sharing one engine, 300 s sustained):

| Scenario | Quiet-user TTFT p99 |
|---|---|
| Solo (no contention) | 53.9 ms |
| FIFO under flooder (no rate limit) | 1,585 ms (29x starvation) |
| kvwarden token bucket under flooder | 61.5 ms (1.14x solo) |

Ten lines of YAML. No application code change. Raw artifacts: results/gate2_preprint_v3/.
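The starvation factors in the hero table are plain ratios against the solo baseline. A quick check using only the three published p99 values:

```python
# Quiet-user TTFT p99 values from the hero table, in milliseconds.
SOLO_MS = 53.9
FIFO_MS = 1585.0
BUCKET_MS = 61.5


def slowdown(contended_ms: float, solo_ms: float) -> float:
    """How many times slower the quiet user is than when it runs alone."""
    return contended_ms / solo_ms


print(f"FIFO:         {slowdown(FIFO_MS, SOLO_MS):.1f}x")    # 29.4x
print(f"token bucket: {slowdown(BUCKET_MS, SOLO_MS):.2f}x")  # 1.14x
```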

```shell
pip install kvwarden
```

Quickstart

kvwarden does not bundle vLLM. Install an engine separately, then let kvwarden spawn and proxy it.

```shell
# 1. Install kvwarden and the engine.
pip install kvwarden
pip install vllm  # needs a GPU box; see the vLLM docs for your CUDA stack

# 2. Start kvwarden. It launches vLLM as a subprocess per the model list
#    in the config and exposes one OpenAI-compatible endpoint.
kvwarden serve --config configs/quickstart_fairness.yaml

# 3. In another shell, wait for /health, then send one request from each of
#    two tenants to the same model.
until curl -fs localhost:8000/health > /dev/null; do sleep 2; done

curl localhost:8000/v1/completions -H "X-Tenant-ID: noisy" \
  -H "Content-Type: application/json" \
  -d '{"model":"llama31-8b","prompt":"Hello","max_tokens":64,"stream":true}'

curl localhost:8000/v1/completions -H "X-Tenant-ID: quiet" \
  -H "Content-Type: application/json" \
  -d '{"model":"llama31-8b","prompt":"Hello","max_tokens":64,"stream":true}'

# 4. Watch the token bucket fire and the engine queue stay bounded.
curl localhost:8000/metrics | grep -E "tenant_rejected|admission_queue_depth"
```

The first call returns 503 until vLLM finishes loading (30-90 s on an A100 for an 8B model). The config at configs/quickstart_fairness.yaml is heavily commented; every knob traces back to a specific experiment.
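From Python, the two curl calls in the quickstart translate to the standard library roughly as below. This is a sketch, not a kvwarden SDK: the helper names are hypothetical, it assumes the quickstart port and model name, and it turns streaming off for simplicity. It treats 429 (tenant's token bucket is empty) and 503 (engine still loading) as retryable. Whether kvwarden sets a Retry-After header is an assumption, so the code falls back to exponential backoff when the header is missing or not a number of seconds.

```python
import json
import time
import urllib.error
import urllib.request
from typing import Optional

BASE_URL = "http://localhost:8000"  # quickstart default; adjust if you pass --port
RETRYABLE = (429, 503)  # 429: tenant's bucket is empty; 503: engine still loading


def completion_request(tenant: str, prompt: str, max_tokens: int = 64) -> urllib.request.Request:
    """Build the same call as the curl examples, with streaming off for simplicity."""
    body = {"model": "llama31-8b", "prompt": prompt, "max_tokens": max_tokens, "stream": False}
    return urllib.request.Request(
        f"{BASE_URL}/v1/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "X-Tenant-ID": tenant},
        method="POST",
    )


def retry_delay(retry_after: Optional[str], attempt: int, base_delay: float = 0.5) -> float:
    """Honor a numeric Retry-After if present (assumed), else back off exponentially."""
    try:
        return float(retry_after)
    except (TypeError, ValueError):
        return base_delay * 2**attempt


def send(req: urllib.request.Request, retries: int = 5) -> dict:
    """POST the request, retrying only on 429/503; any other HTTP error is raised."""
    for attempt in range(retries):
        try:
            with urllib.request.urlopen(req, timeout=120) as resp:
                return json.load(resp)
        except urllib.error.HTTPError as err:
            if err.code not in RETRYABLE or attempt == retries - 1:
                raise
            time.sleep(retry_delay(err.headers.get("Retry-After"), attempt))
    raise ValueError("retries must be at least 1")
```

With kvwarden running, `send(completion_request("quiet", "Hello"))` returns the parsed JSON response. Sending the tenant ID as a header is the only kvwarden-specific part; everything else is a plain OpenAI-style completion call.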

A Docker Compose bundle is not shipping yet — if you want one, #109 is open as a good-first-issue.

Is this for me?

| Tool | Orchestration | Tenant fairness | Engines | Target scale |
|---|---|---|---|---|
| NVIDIA Dynamo | Kubernetes | No | Multi | Datacenter |
| llm-d | Kubernetes | No | vLLM | Datacenter |
| Ollama | None | No | llama.cpp | Single-user |
| kvwarden | None | Yes | vLLM + SGLang | Single-node, 2-8 tenants |

kvwarden is the "shared GPU, a handful of tenants, no cluster" cell. If you already run Kubernetes or you're a single user on your own box, you probably want one of the others.

How it works

A thin orchestration layer (~3,500 LOC src) sits between your app and vLLM/SGLang. On request arrival, a per-tenant token bucket decides admit-or-429 before the request reaches the engine queue. Admitted requests flow through a length-bucketed admission controller and a DRR priority scheduler, then out to the engine subprocess kvwarden manages. Engines never see tenant identity; kvwarden does, and that's the entire trick. Multi-model lifecycle (freq+recency eviction, hot-swap) lives at the same layer. The HTTP API is OpenAI-compatible so your client code doesn't change.
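The per-tenant admit-or-429 decision can be illustrated with a classic token bucket. This is a minimal sketch, assuming each request costs one token and that buckets start full; the class names and the rate/capacity parameters are hypothetical and are not kvwarden's internals or config keys.

```python
import time
from typing import Dict, Optional


class TokenBucket:
    """Refills `rate` tokens per second up to `capacity`; each request costs one token."""

    def __init__(self, rate: float, capacity: float, now: float) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start full so a new tenant can burst
        self.updated = now

    def try_take(self, now: float, cost: float = 1.0) -> bool:
        # Refill for the time elapsed since the last check, capped at capacity.
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class AdmissionGate:
    """One bucket per tenant ID; answers with the status the proxy would return."""

    def __init__(self, rate: float, capacity: float) -> None:
        self.rate = rate
        self.capacity = capacity
        self.buckets: Dict[str, TokenBucket] = {}

    def admit(self, tenant: str, now: Optional[float] = None) -> int:
        now = time.monotonic() if now is None else now
        bucket = self.buckets.get(tenant)
        if bucket is None:
            bucket = self.buckets[tenant] = TokenBucket(self.rate, self.capacity, now)
        # 200: forward to the engine queue; 429: reject before the engine sees it.
        return 200 if bucket.try_take(now) else 429
```

With rate=2.0 and capacity=4.0, six back-to-back noisy requests at t=0 come back as four 200s followed by two 429s, while the quiet tenant's first request still gets a 200 from its own full bucket. Because the check runs before the engine queue, a rejected noisy request never occupies an engine slot.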

Deeper read and the component diagram live in docs/architecture/overview.md.
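The DRR priority scheduler can be illustrated with textbook deficit round robin across per-tenant queues. In this sketch a request's cost is its token count (for example prompt plus max_tokens), which is an assumed way to make scheduling length-aware; it is not the length-bucketed admission controller, and the class and method names are hypothetical. kvwarden's actual scheduler may weight tenants or priorities differently.

```python
from collections import deque
from typing import Deque, Dict, List, Tuple


class DRRScheduler:
    """Deficit round robin: each backlogged tenant earns `quantum` credit per round."""

    def __init__(self, quantum: int) -> None:
        self.quantum = quantum
        self.queues: Dict[str, Deque[Tuple[str, int]]] = {}
        self.deficit: Dict[str, int] = {}
        self.active: Deque[str] = deque()  # tenants with queued work, in round order

    def enqueue(self, tenant: str, request_id: str, cost: int) -> None:
        if tenant not in self.queues:
            self.queues[tenant] = deque()
            self.deficit[tenant] = 0
        if not self.queues[tenant]:
            self.active.append(tenant)  # tenant becomes backlogged
        self.queues[tenant].append((request_id, cost))

    def next_round(self) -> List[str]:
        """Visit each backlogged tenant once and return request IDs in dispatch order."""
        dispatched: List[str] = []
        for _ in range(len(self.active)):
            tenant = self.active.popleft()
            queue = self.queues[tenant]
            self.deficit[tenant] += self.quantum
            # Dispatch from the head while the tenant can afford the next request.
            while queue and queue[0][1] <= self.deficit[tenant]:
                request_id, cost = queue.popleft()
                self.deficit[tenant] -= cost
                dispatched.append(request_id)
            if queue:
                self.active.append(tenant)  # still backlogged: keep its deficit
            else:
                self.deficit[tenant] = 0  # idle tenants do not bank credit
        return dispatched
```

If a noisy tenant queues five 100-token requests and a quiet tenant queues one, a quantum of 100 dispatches one request from each tenant in the first round, so the quiet request does not wait behind the noisy backlog. A request costing more than the quantum simply waits until its tenant has accumulated enough credit over several rounds.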

Reproduce the hero number

```shell
# Terminal A: start kvwarden on the hero config (vLLM 0.19.1, Llama-3.1-8B).
kvwarden serve --config configs/gate2_fairness_token_bucket.yaml --port 8000

# Terminal B: wait for /health, then run the 300-second bench.
until curl -fs localhost:8000/health > /dev/null; do sleep 5; done
kvwarden bench reproduce-hero --flavor=2tenant
```

Needs an A100-SXM4 80GB (or equivalent — 1xH100 works) with vLLM 0.19.1 installed. Runtime is about 300 s for the bench plus ~30 s preflight. Output goes to ./kvwarden-reproduce-<timestamp>/report.json with your numbers side-by-side against the published reference so you can file an issue with concrete data if they diverge. Other flavors: --flavor=n6, --flavor=n8. Full doc: docs/reproduce_hero.md.

Frontier coverage

The same admission mechanism holds on larger models. Gate 2.3 (70B dense, TP=4 on 4x H100) and Gate 2.4 (Mixtral-8x7B MoE, TP=2 on 2x H100) both land quiet-to-solo p50 ratios between 1.07x and 1.94x — the mechanism lives before the engine boundary, so sharding topology, expert routing, and attention shape don't change the fairness picture. Matrix, caveats, and raw bench pointers in docs/launch/frontier_coverage.md. The 8B A100 run is the only one with a single-command reproduce path today; 70B and Mixtral wrappers are a roadmap item.

About the name

kvwarden ships tenant-fair admission today. Tenant-aware KV cache eviction — the feature the name implies — is a scaffold in src/kvwarden/cache/manager.py, not a shipping feature. The path to making the name true is tracked in #103 and targets the 0.2 release. If you pip install 0.1.3 expecting KV-cache isolation today, you will not get it; you get admission-gate fairness, which is what the hero number measures.

What's next

- #102 T1: Distribution — 10 onboarding installs in week 1

- #103 T2: Name-truth — tenant-aware KV eviction for 0.2

- #104 T3: Moat — vllm-project/production-stack router + LiteLLM adapter

- #105 W1: Launch blockers — pre-launch QA + day-0 ops

Telemetry

kvwarden ships opt-in, anonymous install/usage telemetry. First interactive run prompts once; default is no; answer n or hit Enter and nothing is ever transmitted. Opt in and each command sends seven fields: a locally-minted uuid4 install ID, kvwarden version, Python major.minor, OS, bucketed GPU class, command name, and a unix timestamp. No prompts, model names, tenant IDs, or receiver-side IP capture. Toggle with kvwarden telemetry off/on/status; hard-disable with export KVWARDEN_TELEMETRY=0. Non-interactive sessions auto-opt-out. Worker source: telemetry-worker/. Full policy: docs/privacy/telemetry.md.
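The consent rules above reduce to a short decision function plus a fixed seven-field payload. This sketch assumes a precedence order (environment variable, then a stored on/off choice, then the non-interactive check, then the one-time prompt) that the policy implies but does not spell out; the function names and payload keys are hypothetical, not the worker's actual schema, and persisting the answer so the prompt appears only once is omitted. docs/privacy/telemetry.md is the authoritative policy.

```python
import os
import platform
import sys
import time
import uuid
from typing import Callable, Dict, Optional, Union


def telemetry_enabled(
    stored_choice: Optional[bool],
    interactive: bool,
    ask: Callable[[str], str] = input,
) -> bool:
    """Decide whether to send anything; every branch except an explicit yes means no."""
    if os.environ.get("KVWARDEN_TELEMETRY") == "0":
        return False  # hard disable wins over everything
    if stored_choice is not None:
        return stored_choice  # previously set via `kvwarden telemetry on/off`
    if not interactive:
        return False  # non-interactive sessions auto-opt-out, no prompt
    answer = ask("Send anonymous usage telemetry? [y/N] ")
    return answer.strip().lower() in ("y", "yes")  # Enter or "n" means no


def new_install_id() -> str:
    """Locally minted random ID; nothing about the machine goes into it."""
    return str(uuid.uuid4())


def build_event(install_id: str, version: str, gpu_class: str, command: str) -> Dict[str, Union[str, int]]:
    """Exactly seven fields: no prompts, model names, or tenant IDs."""
    return {
        "install_id": install_id,
        "version": version,
        "python": f"{sys.version_info.major}.{sys.version_info.minor}",
        "os": platform.system(),
        "gpu_class": gpu_class,  # already bucketed by the caller
        "command": command,
        "timestamp": int(time.time()),
    }
```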

Tests

```shell
pytest tests/unit/  # 153 tests, no GPU needed, ~10 s
ruff check src/ tests/
ruff format --check src/ tests/
```

CI runs this matrix on Python 3.11 and 3.12; a red PR cannot merge.

Honesty log

Every metric we under-counted, and its fix, is logged in results/CORRECTIONS.md. TTFT measurement was rebuilt mid-project after a shadow review caught that the original harness timed the round trip to the first SSE frame instead of the first non-empty token (C2/C5). The 8B hero numbers exclude a 10 s JIT warmup window per C7; all 29 post-warmup windows sit between 36 ms and 65 ms.
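Two details from these corrections are easy to get wrong in a home-grown harness: timing the first SSE frame instead of the first frame that carries text, and letting JIT warmup leak into the tail. A hedged sketch of both, assuming OpenAI-style streaming completion chunks (`choices[0].text`), nearest-rank p99, and 10 s windows keyed by request start time (consistent with 29 post-warmup windows in a 300 s run). The function names are hypothetical; this is not the project's bench code.

```python
import json
import math
from typing import Dict, List, Optional, Tuple


def first_token_ms(sent_at: float, events: List[Tuple[float, str]]) -> Optional[float]:
    """TTFT to the first SSE frame carrying non-empty text, not to the first frame."""
    for arrived_at, line in events:
        if not line.startswith("data:"):
            continue  # blank separators, comments, keep-alives
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break
        choices = json.loads(payload).get("choices") or []
        if choices and choices[0].get("text"):
            return (arrived_at - sent_at) * 1000.0
    return None


def p99(values: List[float]) -> float:
    """Nearest-rank 99th percentile."""
    ordered = sorted(values)
    return ordered[math.ceil(0.99 * len(ordered)) - 1]


def windowed_p99(
    samples: List[Tuple[float, float]], window_s: float = 10.0, warmup_windows: int = 1
) -> Dict[int, float]:
    """Bucket (request_start_s, ttft_ms) pairs into fixed windows and drop the warmup ones."""
    windows: Dict[int, List[float]] = {}
    for start_s, ttft in samples:
        windows.setdefault(int(start_s // window_s), []).append(ttft)
    return {w: p99(v) for w, v in sorted(windows.items()) if w >= warmup_windows}
```

An empty role or keep-alive chunk arriving at 62.5 ms followed by the first real token at 125 ms is reported as 125 ms here; the original harness would have recorded the earlier frame.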

Getting help

- File a bug: GitHub Issues. A prometheus_dump.txt plus server.log is worth more than a star.
- Questions and launch ops context: docs/ops/onboarding_playbook.md.
- Contributing: CONTRIBUTING.md. Start with a good first issue.

License

MIT. See LICENSE.

Cite as

```bibtex
@software{kvwarden_2026,
  title   = {kvwarden: tenant-fair LLM inference on a single GPU},
  author  = {Patel, Shrey and {Coconut Labs contributors}},
  year    = {2026},
  version = {0.1.3},
  url     = {https://github.com/coconut-labs/kvwarden}
}
```
