A Python package for Llama CPP.


Llama CPP

This is a Python package for Llama CPP ( https://github.com/ggml-org/llama.cpp ).

Installation

You can install the package from PyPI with pip, install a pre-built wheel from the releases page, or build it from source (see BUILDING.md).

pip install llama-cpp-pydist

Usage

This section provides a basic overview of how to use the llama_cpp_pydist library.

Deploying Windows Binaries

If you are on Windows, the package automatically attempts to deploy pre-compiled binaries. You can also trigger this process manually.

from llama_cpp import deploy_windows_binary

# Specify the target directory for the binaries.
# This is typically within your Python environment's site-packages
# or a custom location if you prefer.
target_dir = "./my_llama_cpp_binaries"

if deploy_windows_binary(target_dir):
    print(f"Windows binaries deployed successfully to {target_dir}")
else:
    print("Failed to deploy Windows binaries or no binaries were found for your system.")

# Once deployed, you would typically add the directory containing llama.dll (or similar)
# to your system's PATH or ensure your application can find it.
# For example, if llama.dll is in target_dir/bin:
# import os
# os.environ["PATH"] += os.pathsep + os.path.join(target_dir, "bin")
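If you are not sure which subdirectory of target_dir contains the DLL, a small standard-library helper can locate it and register it. This is a minimal sketch: find_dll_dirs and add_dll_dir are hypothetical helpers, not part of llama_cpp_pydist, and llama.dll is simply the example file name used above. On Windows with Python 3.8+, os.add_dll_directory is an alternative to editing PATH for DLLs loaded by the current process.

```python
import os
from pathlib import Path


def find_dll_dirs(root, dll_name="llama.dll"):
    """Return the directories under root that contain dll_name (hypothetical helper)."""
    root_path = Path(root)
    if not root_path.is_dir():
        return []
    # Windows file names are case-insensitive, so compare lower-cased names.
    target = dll_name.lower()
    found = {p.parent for p in root_path.rglob("*") if p.is_file() and p.name.lower() == target}
    return sorted(str(d) for d in found)


def add_dll_dir(directory):
    """Prepend directory to PATH and, on Windows, register it for DLL loading."""
    directory = os.path.abspath(directory)
    os.environ["PATH"] = directory + os.pathsep + os.environ.get("PATH", "")
    # os.add_dll_directory exists only on Windows (Python 3.8+); the returned
    # handle can later be closed to remove the directory again.
    if hasattr(os, "add_dll_directory"):
        return os.add_dll_directory(directory)
    return None
```

Typical use after deployment would be `for d in find_dll_dirs(target_dir): add_dll_dir(d)`, run before anything tries to load the library.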

Conversion Library Installation

To perform Hugging Face to GGUF model conversions, you need to install additional Python libraries. You can install them via pip:

pip install transformers numpy torch safetensors sentencepiece

Alternatively, you can install them programmatically in Python:

from llama_cpp.install_conversion_libs import install_conversion_libs

if install_conversion_libs():
    print("Conversion libraries installed successfully.")
else:
    print("Failed to install conversion libraries.")
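Before installing anything, you may want to check which of these libraries are already importable. This is a minimal sketch using importlib.util.find_spec from the standard library; missing_modules is a hypothetical helper, not part of the package. It assumes the import names match the pip package names listed above, which holds for these five.

```python
import importlib.util

# Import names of the libraries from the pip command above.
CONVERSION_MODULES = ["transformers", "numpy", "torch", "safetensors", "sentencepiece"]


def missing_modules(names=CONVERSION_MODULES):
    """Return the subset of names that cannot be found in this environment."""
    # find_spec locates a top-level module without importing it,
    # so heavy packages such as torch are not actually loaded.
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules()
    print("Missing:", ", ".join(missing) if missing else "none")
```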

Converting Hugging Face Models to GGUF

This package provides a utility to convert Hugging Face models (including those using Safetensors) into the GGUF format, which is used by llama.cpp. This process leverages the conversion scripts from the underlying llama.cpp submodule.

1. Install Conversion Libraries:

Before converting models, ensure you have the necessary Python libraries. You can install them using a helper function:

from llama_cpp import install_conversion_libs

if install_conversion_libs():
    print("Conversion libraries installed successfully.")
else:
    print("Failed to install conversion libraries. Please check the output for errors.")

2. Convert the Model:

Once the dependencies are installed, you can use the convert_hf_to_gguf function:

from llama_cpp import convert_hf_to_gguf

# Specify the Hugging Face model name or local path
model_name_or_path = "TinyLlama/TinyLlama-1.1B-Chat-v1.0"  # Example: a small model from the Hugging Face Hub
# Or, a local path: model_name_or_path = "/path/to/your/hf_model_directory"

output_directory = "./converted_gguf_models"  # Directory to save the GGUF file
output_filename = "tinyllama_1.1b_chat_q8_0.gguf"  # Optional: specify a filename

# Example: 8-bit quantization. The upstream converter script accepts output types
# such as "f32", "f16", "bf16" and "q8_0"; k-quants such as "q4_K_M" are usually
# produced afterwards with llama-quantize.
quantization_type = "q8_0"

print(f"Starting conversion for model: {model_name_or_path}")

success, result_message = convert_hf_to_gguf(
    model_path_or_name=model_name_or_path,
    output_dir=output_directory,
    output_filename=output_filename,  # Can be None to auto-generate
    outtype=quantization_type,
)

if success:
    # On success, result_message contains the path to the GGUF file
    print(f"Model converted successfully! GGUF file saved at: {result_message}")
else:
    # On failure, result_message contains an error message
    print(f"Model conversion failed: {result_message}")

This function will download the model from Hugging Face Hub if a model name is provided and it's not already cached locally by Hugging Face transformers. It then invokes the convert_hf_to_gguf.py script from llama.cpp.
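Because the conversion can take a while, it may help to validate the arguments before calling convert_hf_to_gguf. The sketch below assumes the wrapper forwards outtype to upstream convert_hf_to_gguf.py, whose --outtype choices at the time of writing are f32, f16, bf16, q8_0, tq1_0, tq2_0 and auto (check the script's --help for your version). The helpers check_outtype and default_gguf_name, and the naming scheme, are hypothetical; the package's own auto-generated filename may differ.

```python
from pathlib import PurePosixPath

# Assumed --outtype choices of upstream convert_hf_to_gguf.py; verify with --help.
CONVERTER_OUTTYPES = {"f32", "f16", "bf16", "q8_0", "tq1_0", "tq2_0", "auto"}


def check_outtype(outtype):
    """Fail early if outtype needs a separate llama-quantize step (hypothetical helper)."""
    normalized = outtype.lower()
    if normalized not in CONVERTER_OUTTYPES:
        raise ValueError(
            f"{outtype!r} is not a converter output type; convert to f16/bf16/q8_0 "
            "first, then quantize with llama-quantize"
        )
    return normalized


def default_gguf_name(model_path_or_name, outtype):
    """Build a filename such as 'TinyLlama-1.1B-Chat-v1.0-q8_0.gguf' (illustrative scheme)."""
    # Works for hub IDs ('org/name') and local paths: keep the last path component.
    base = PurePosixPath(model_path_or_name.replace("\\", "/").rstrip("/")).name
    return f"{base}-{check_outtype(outtype)}.gguf"
```

For example, default_gguf_name("TinyLlama/TinyLlama-1.1B-Chat-v1.0", "q8_0") returns "TinyLlama-1.1B-Chat-v1.0-q8_0.gguf", which could be passed as output_filename.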

For more detailed examples and advanced usage, please refer to the documentation of the underlying llama.cpp project and explore the examples provided there.

Building and Development

For instructions on how to build the package from source, update the llama.cpp submodule, or other development-related tasks, please see BUILDING.md.

Changelog

2026-05-13: Update to llama.cpp b9129

Summary

Updated llama.cpp from b9106 to b9129, incorporating 15 upstream commits with breaking changes and new features.

Notable Changes

⚠️ Breaking Changes

- b9128: hexagon: eliminate scalar VTCM loads via HVX splat helpers (#22993)

- Scalar loads from VTCM are expensive on Hexagon. This PR removes scalar VTCM loads in matmul and flash attention, replacing them with HVX vector loads + splat (vdelta) operations so the data stays in HVX registers end to end.

🆕 New Features

- b9106: vulkan: Support asymmetric FA in scalar/mmq/coopmat1 paths (#22589)

- Enable asymmetric K/V types in scalar/mmq/coopmat1 FA.

- I ran the backend perf tests before/after on mmq/coopmat1/coopmat2 paths and there were no regressions.


- b9113: opencl: add q4_1 MoE for Adreno (#22856)

- Q4_1 MoE kernel optimized for Adreno OpenCL backend.


- b9116: feat: add MiMo v2.5 vision (#22883)

- This PR adds image input mmproj support for MiMo-V2.5.


- b9119: vulkan: Fix Windows performance regression on Intel GPU BF16 workloads for Xe2 and newer (#22461)

- This is a minor fix to #18178. At the moment the Intel Windows GPU driver does not expose BF16 availability (VK_KHR_shader_bfloat16 is not listed as a device extension). Since the current code does not consider the case where coopmat is available but BF16 coopmat is unavailable, we are using l_warptile for BF16 scalar kernels. This causes a regression vs. the non-coopmat config for n=512.

- This PR addresses the regression by using l_warptile only when coopmat is truly available for BF16. We are seeing an 8-9% performance improvement on pp512 of gemma-4-E2B-it-BF16.gguf using Xe2/Xe3 GPUs. For Linux we see no change since BF16 is already enabled by default.

- cc: @virajwad

- b9122: ggml-webgpu: address precision issues for multimodal (#22808)

- In this PR, I addressed the precision issues for multimodal. More specifically, when mixed types are used in models and projectors, I use f32 for precision in the flash attention (more specifically, in the tile path) for the browser. I did not edit flash_attn.wgsl since subgroup_matrix isn't enabled in my test environment.

- Inputs: tested model LFM2.5-VL-450M-F16 with F16 mmproj.

- b9127: ggml-opencl: add opt-in Adreno xmem F16xF32 GEMM for prefill (#22755)

- This PR adds an opt-in Adreno xmem GEMM path for OpenCL prefill matmul.

- Scope: build-time gated by GGML_OPENCL_USE_ADRENO_KERNELS

- b9129: ggml-zendnn : adaptive fallback to CPU backend for small batch sizes (#22681)

- Introduces an adaptive fallback mechanism in the ZenDNN backend that ensures ZenDNN never regresses against the native CPU backend, and also updates to the latest ZenDNN version (ZenDNN-2026-WW17).

- Problem: ZenDNN's lowoha::matmul is slower than ggml-cpu for:

🐛 Bug Fixes

- b9118: vulkan: Check shared memory size for mmq shaders (#22693)

- Calculate shared memory usage for mmq shaders, and choose smaller tile sizes when they don't fit.

- Should fix #22690.


Additional Changes

6 minor improvements: 2 documentation, 2 examples, 2 maintenance.

Full Commit Range

- b9106 to b9129 (15 commits)

- Upstream releases: https://github.com/ggml-org/llama.cpp/compare/b9106...b9129

2026-05-11: Update to llama.cpp b9105

Summary

Updated llama.cpp from b9076 to b9105, incorporating 23 upstream commits with breaking changes and new features.

Notable Changes

⚠️ Breaking Changes

- b9080: Gemma4_26B_A4B_NvFp4 hf checkpoint convert to gguf format fixes (#22804)

- Gemma4_26B_A4B_NvFp4 HF checkpoint convert to GGUF format fixes. This PR fixes the following:

- Excluded weight_scale, weight_scale_2, and input_scale from the existing + ".weight" rename for .experts. tensors. The original rename was causing issues with NVFP4 scale tensor names (e.g. experts.0.down_proj.weight_scale_2 => experts.0.down_proj.weight_scale_2.weight), breaking the NVFP4 lookup at _generate_nvfp4_tensors.

- Added FFN_GATE_EXP and FFN_UP_EXP alongside the existing FFN_GATE_UP_EXP in the GEMMA4 tensor allow-list. Originally only the fused FFN_GATE_UP_EXP was allowed. HF NVFP4 checkpoints store gate/up/down as separate per-expert tensors, so the converter couldn't map them. The other option would have been to re-quantize in order to fuse the gate and up projections.
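The renaming rule in the first fix can be illustrated with a short sketch. This is a simplified, hypothetical model of the rule as described above, not the code in convert_hf_to_gguf.py; the function name rename_expert_tensor and the choice to leave names that already end in ".weight" untouched are assumptions.

```python
# Suffixes of NVFP4 scale tensors that must keep their original names.
NVFP4_SCALE_SUFFIXES = ("weight_scale", "weight_scale_2", "input_scale")


def rename_expert_tensor(name):
    """Append '.weight' to per-expert tensor names, except NVFP4 scale tensors.

    Hypothetical illustration only; not the upstream converter code.
    """
    if ".experts." not in name or name.endswith(".weight"):
        return name
    if name.endswith(NVFP4_SCALE_SUFFIXES):
        # Renaming these would yield e.g. '...weight_scale_2.weight' and break
        # the lookup that pairs each quantized weight with its scales.
        return name
    return name + ".weight"
```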

🆕 New Features

- b9082: Feature hexagon l2 norm (#22816)

- Add GGML_OP_L2_NORM support to the Hexagon HTP backend via an HVX vectorized kernel.

- AI usage disclosure: YES, used Claude Code to generate the initial version based on other HVX code, then iterated/tested/updated manually.

- b9084: hexagon: add HTP kernel for GGML_OP_GATED_DELTA_NET (#22837)

- Add a high-performance HVX kernel for GGML_OP_GATED_DELTA_NET on Hexagon HTP, enabling Gated Delta Net models (e.g. Qwen3.5) to run the recurrence entirely on-device instead of falling back to CPU.

- Key optimizations:

- Fused multi-row kernels (4-row for PP, 8-row for TG): reduces K/Q/gate vector reload overhead by 2–4×

- b9085: Add flash attention MMA / Tiles to support MiMo-V2.5 (#22812)

- MiMo-V2.5 has asymmetric head sizes (K=192, V=128), which causes a fallback to CPU when using CUDA with flash attention enabled. This PR adds the required MMA / Tiles entries to support compilation for those sizes.

- b9088: [SYCL] Add BF16 support to GET_ROWS operation (#21391)

- Add GGML_TYPE_BF16 support to the SYCL backend's GET_ROWS operation. Currently GET_ROWS supports F16, F32, and several quantized types but not BF16, causing models with BF16 tensors to fall back to CPU for this operation, which triggers catastrophic performance degradation due to full GPU→CPU tensor transfers on every token.

- Disclosure: This PR was authored with the assistance of AI (GitHub Copilot / Claude). The bug was discovered through systematic debug log analysis of real-world performance issues.

- The SYCL backend's ggml_backend_sycl_device_supports_op() does not list GGML_TYPE_BF16 in the GGML_OP_GET_ROWS switch. When a model has BF16 tensors that require GET_ROWS, the scheduler falls back to CPU, which requires downloading the entire tensor from GPU to CPU via PCIe every single token.

- b9093: model: add sarvam_moe architecture support (#20275)

- Add support for the sarvam_moe architecture (sarvamai/sarvam-30b). SarvamMoEForCausalLM is a straightforward extension of BailingMoeForCausalLM (see vLLM PR #33942).

- 19 layers: 1 dense FFN + 18 MoE layers (128 routed experts, top-6, 1 shared expert)

🐛 Bug Fixes

- b9079: common : revert reasoning budget +inf change (#22740)

- fixes #22717


- b9081: common : do not wrap raw strings in schema parser for tagged parsers (#22827)

- Fixes #22240


- b9094: model : fix model type check for granite/llama3 and deepseek2/glm4.7 lite (#22870)

- cont #22004

- Fixes https://github.com/ggml-org/llama.cpp/pull/22004#issuecomment-4412473268

- The checks used the uninitialized n_vocab instead of fetching it from metadata as was done before the refactor.

Additional Changes

14 minor improvements: 3 documentation, 6 examples, 5 maintenance.

Full Commit Range

- b9076 to b9105 (23 commits)

- Upstream releases: https://github.com/ggml-org/llama.cpp/compare/b9076...b9105

2026-05-02: Update to llama.cpp b9002

Summary

Updated llama.cpp from b8992 to b9002, incorporating 10 upstream commits with new features and performance improvements.

Notable Changes

🆕 New Features

- b8994: ggml-webgpu: add the upscale shader (#22419)

- In this PR, I added the upscale shader. Based on the test cases, the nearest, bilinear (with/without antialias) and bicubic methods are implemented with/without the aligned_corner flags. Some other combinations are currently ignored.

- All tests passed; did not find performance tests so cannot run a comparison test.

- b8995: vulkan: Support asymmetric FA in coopmat2 path (#21753)

- There has been some recent interest/experimentation with mixed quantization types for FA. I had originally designed the cm2 FA shader with this in mind (because I didn't realize it wasn't supported at the time!), this change adds the missing pieces and enables it.

- Also support Q1_0 since people have been trying that out (seems crazy, but who knows).

- We should be able to do similar things in the coopmat1/scalar path, but there's another change open against the scalar path and I don't want to conflict.

- b8998: hexagon: enable non-contiguous row tensor support for unary ops (#22574)

- Enable hexagon support for unary ops for non-contiguous row-strided tensors.

- Relax the support check to accept row-contiguous tensors (ggml_is_contiguous_rows) instead of requiring full contiguity.

- Add unary_row_offset() to compute correct DDR byte offsets using actual tensor strides for non-contiguous tensors.

- b8999: llama-quant : fix --tensor-type when default qtype is overriden (#22572)

- Fixes #22544 (my fault!)

- Currently, when using --tensor-type "<regex>=GGML_TYPE", if the GGML_TYPE override matches the default type for the chosen output ftype, the internal heuristics in llama_tensor_get_type_impl may still take effect, rather than being locked to the specified GGML_TYPE.

- This is my own mistake that I introduced in #19770.

- b8999: llama-quant : honor --tensor-type override when it matches the global ftype (#22559)

- Fixes #22544.

- When a user supplies an explicit --tensor-type "<pattern>=<type>" mapping that happens to match the requested global ftype, the user's intent (lock that tensor to that exact type) is silently dropped and the imatrix/heuristic path is allowed to override it. llama_tensor_get_type only set manual = true from inside the qtype != new_type branch.
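The intended override semantics can be modeled in a few lines. This is a simplified, hypothetical sketch in Python, not the C++ code in llama-quant: pick_tensor_type and the heuristic callback are illustrative names. The point it shows is that a matching override is returned as final even when it equals the default type, so the heuristic never gets a chance to bump it.

```python
import re


def pick_tensor_type(name, default_type, overrides, heuristic):
    """Choose a quantization type for one tensor (hypothetical, simplified model).

    overrides: list of (regex, type) pairs, as given via --tensor-type.
    heuristic: fallback that may bump the type (e.g. imatrix-based rules).
    """
    for pattern, forced_type in overrides:
        if re.search(pattern, name):
            # A matching override is final, even when forced_type happens
            # to equal the default type.
            return forced_type
    return heuristic(name, default_type)
```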

- b9000: hexagon: hmx flash attention (#22347)

- This PR implements HMX-based flash attention for the Hexagon backend.

- Profiling shows that the main bottleneck is the exp computation (about 40% of total FA runtime). I experimented with a LUT-based, lossless optimization, but it appears that vgather cannot be effectively parallelized: multithreaded vgather provided no measurable speedup. I'm not sure whether this is due to an issue in my implementation or an inherent hardware limitation. As mentioned here, vgather is aborted.

- As an alternative, I implemented an FP16 version of exp to improve performance. This does introduce some numerical loss, so it is disabled by default. Enabling it via GGML_HEXAGON_FA_EXP2_HF=ON yields an additional ~10% performance gain.

- b9000: hexagon: optimization for HMX mat_mul (#21554)

- This PR introduces two additional optimizations for the Hexagon HMX backend:


- Enable asynchronous HMX execution

- HMX computations are now executed asynchronously, allowing them to overlap with HVX dequantization and DMA stages within the pipeline. Previously, synchronous HMX calls blocked the main thread and limited parallelism.

🚀 Performance Improvements

- b8996: ggml-webgpu: Fix vectorized handling in mul-mat and mul-mat-id (#22578)

- This PR fixes two issues with the handling of vectorized variants in mul-mat.

- Remove the dst->ne[1] check of key.vectorized from mul-mat-fast, as it looks unnecessary in both mul_mat_reg_tile and mul_mat_subgroup_matrix.

- Add the missing vectorized variant name to the mul-mat-id pipeline.

🐛 Bug Fixes

- b8992: Update llama-mmap to work with 32-bit emscripten (#22497)

- When compiling to 32-bit WebAssembly through Emscripten, std::fseek and std::ftell use a long, which is a 32-bit signed value. Unfortunately, this means that any offset in a file above 2GB overflows the maximum positive integer, leading to bad results. This fixes that by delegating to fseeko and ftello in Emscripten builds, which use a 64-bit off_t that can be interpreted correctly in both 32-bit and 64-bit WASM builds.

- Note that ggml does something similar in all cases: https://github.com/ggml-org/llama.cpp/blob/master/ggml/src/gguf.cpp#L25. However, I didn't make that full change here because I'm not sure if it would lead to issues in other places.

- For a little more context, this, in combination with the origin private file system (OPFS), allows models > 2GB to be loaded by the WebGPU backend in the browser without splitting the models into shards.

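The overflow is plain integer arithmetic and can be reproduced outside of C. The sketch below uses ctypes.c_int32 and ctypes.c_int64, which wrap silently, to mimic a 32-bit long (as on wasm32) and a 64-bit off_t; the helper names are illustrative, and the actual fix lives in the C++ mmap code.

```python
import ctypes

GiB = 1024 ** 3


def as_long32(offset):
    """Reinterpret a byte offset as a 32-bit signed long, as on wasm32."""
    # ctypes integer types do not check for overflow; the value wraps.
    return ctypes.c_int32(offset).value


def as_off_t64(offset):
    """Reinterpret the same offset as a 64-bit off_t."""
    return ctypes.c_int64(offset).value
```

A 3 GiB offset becomes -1073741824 as a 32-bit long, while the 64-bit off_t keeps 3221225472, which is why files above 2GB only load correctly through the fseeko/ftello path.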

Additional Changes

1 minor improvement: 1 maintenance.

- b9002: b9002


Full Commit Range

- b8992 to b9002 (10 commits)

- Upstream releases: https://github.com/ggml-org/llama.cpp/compare/b8992...b9002

2026-05-01: Update to llama.cpp b8992

Summary

Updated llama.cpp from b8946 to b8992, incorporating 41 upstream commits with breaking changes and new features.

Notable Changes

⚠️ Breaking Changes

- b8946: fix(graph): remove duplicate wo_s scale after build_attn (Qwen3, LLaMA) (#22421)

- Observed that build_attn in llama-graph already applies the NVFP4 per-tensor scale (wo_s) via llama-graph.cpp (build_lora_mm(wo, cur, wo_s) or an explicit wo_s mul).

- Also observed that these model builders (qwen3, qwen3moe, llama) also multiplied the

- b8981: common : do not pass prompt tokens to reasoning budget sampler (#22488)

- cont: #22323

- Do not pass prompt tokens through the reasoning budget sampler, mirroring grammar behavior. Renamed accept_grammar to is_generated to better convey the purpose of this flag.

- Also adjusted the prefill logic to pass the generation prompt through the reasoning budget sampler as well. I removed the prefill_tokens parameter, as it required the prefill to match the starting token sequence exactly. Instead, we simply feed each token individually so it gets processed by the state machine.

🆕 New Features

- b8950: Additional test for common/gemma4 : handle parsing edge cases (#22420)

- Add a few test cases for #21760.

- b8951: ggml-webgpu: fast matrix-vector multiplication for i-quants (#22344)

- Adds fast WebGPU mat-vec implementations for all nine i-quant types (IQ1_S, IQ1_M, IQ2_XXS, IQ2_XS, IQ2_S, IQ3_XXS, IQ3_S, IQ4_NL, IQ4_XS). The kernels are added to mul_mat_vec.wgsl and selected through the existing use_fast dispatcher in ggml_webgpu_mul_mat.

- Benchmarks were taken with test-backend-ops perf, comparing this branch vs. current master for the variant

- b8953: ggml-webgpu: add Q1_0 support (#22374)

- Adds WebGPU support for the Q1_0 quantization type, including a fast mat-vec kernel (MUL_ACC_Q1_0 in mul_mat_vec.wgsl), a fast mat-mat block (INIT_SRC0_SHMEM_Q1_0 in mul_mat_decls.tmpl) that enables both the register-tile and subgroup-matrix paths, and a GET_ROWS dequant (Q1_0 block in get_rows.wgsl), along with the dispatcher and supports_op updates for MUL_MAT and MUL_MAT_ID.

- Q1_0 was previously not supported on the WebGPU backend, so both mat-vec and mat-mat dispatched to the CPU fallback. With this PR the kernels run on WebGPU.

- b8956: CANN: Add support for Qwen35 ops (#21204)

- This PR adds support for several missing operators in the CANN (Ascend NPU) backend for Qwen3.5.

- New operators:

- b8960: vulkan: add barrier after writetimestamp (#21865)

- Add a pipeline barrier after each vkCmdWriteTimestamp call in the perf_logger code.

- The Vulkan spec doesn't prevent commands issued after a timestamp from starting to execute before the timestamp is written. The NV driver had been ordering these, but future drivers won't. So we need a barrier after each timestamp to order the timestamp vs. the next commands.

- b8962: ggml-webgpu: fix buffer aliasing for ssm_scan and refactor aliasing logic (#22456)

- @SharmaRithik noticed that when running Granite 4.0, ssm_scan aliases several tensors; this PR fixes that by adding logic to merge those tensors into a single binding in the shader. After making that change, I realized that some of the logic for calculating aliasing could be refactored so that it is consistent across all operations and takes place in the shader library during preprocessing, so I made that change as well. I also added a test for the overlapping tensors for ssm_scan.

- fyi @yomaytk

- b8964: common : re-arm reasoning budget after DONE on new (#22323)

- DONE state in reasoning budget state machine absorbs start tags, causing any block after the first to run unbudgeted. This makes it so the reasoning budget is a no-op for multi-block thinking models. Using the Qwen3.6-27B model with the recommended settings causes this issue to appear [1]. The fix is to re-arm in DONE on a match and transition to COUNTING with a fresh budget. I've added a regression test in test-reasoning-budget to test for this new behavior and all 6 tests pass.

- [1] "Thinking Preservation: we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead." - https://huggingface.co/Qwen/Qwen3.6-27B

- Reproducible using: unsloth/Qwen3.6-27B-GGUF, server flags: --reasoning-budget 128 --reasoning-format deepseek --jinja, base commit: master at 15fa3c493 (b8920)
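The re-arm behavior can be sketched as a small state machine. This is a simplified, hypothetical model: the real sampler works on token IDs and forces the end tag when the budget runs out, the IDLE state and the ReasoningBudget class are assumptions, and only the COUNTING and DONE states are named in the PR. The key line is the transition from DONE back to COUNTING with a fresh budget when a new start tag appears.

```python
from enum import Enum, auto


class State(Enum):
    IDLE = auto()      # outside any reasoning block (assumed state)
    COUNTING = auto()  # inside a block, spending the budget
    DONE = auto()      # block ended, naturally or by exhausting the budget


class ReasoningBudget:
    """Toy per-token reasoning budget that re-arms on every new start tag."""

    def __init__(self, budget, start="<think>", end="</think>"):
        self.budget = budget
        self.start = start
        self.end = end
        self.state = State.IDLE
        self.remaining = 0

    def accept(self, token):
        """Feed one generated token; return True when the block must be cut off."""
        if token == self.start and self.state in (State.IDLE, State.DONE):
            # The fix: a start tag seen in DONE re-arms with a fresh budget
            # instead of being absorbed, so later blocks are budgeted too.
            self.state = State.COUNTING
            self.remaining = self.budget
            return False
        if self.state is State.COUNTING:
            if token == self.end:
                self.state = State.DONE
                return False
            self.remaining -= 1
            if self.remaining <= 0:
                self.state = State.DONE
                return True
        return False
```

With a budget of 2, feeding `<think> a b </think> <think> c d` cuts off both blocks; if DONE absorbed the second start tag instead, the second block would run unbudgeted, which is the multi-block behavior the PR describes.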

- b8966: ggml-cuda: add flash-attn support for DKQ=320/DV=256 with ncols2=32 (GQA=32) (#22286)

- Adds MMA-f16 and tile kernel configs, dispatch logic, template instances, and tile .cu file for Mistral Small 4 (head sizes 320/256), restricting to ncols2=32 to support GQA ratio 32 only.

- Add fattn kernel instantiation for DKQ=320 and DV=256, as required for Mistral Small 4. Kernel instantiation is forced to ncols2=32.

- b8967: ggml-cuda: Repost of 21896: Blackwell native NVFP4 support (#22196)

- This is a restored clone of PR #21896 (ggml-cuda: Blackwell native NVFP4 support).

- Unfortunately, it was closed during a rebase error and cannot be reopened.

- The exact commits are here as they were before. Sorry about this mixup!

- b8969: Added sve tuned code for gemm_q8_0_4x8_q8_0() kernel (#21916)

- This PR introduces support for SVE (Scalable Vector Extension) kernels for the q8_0_q8_0 gemm using i8mm and vector instructions. ARM NEON support for this kernel was added earlier.

- This PR contains the SVE implementation of the gemm used to compute the Q8_0 quantization.

- b8974: ggml-cpu : disable tiled matmul on AIX to fix page boundary segfault (#22293)

- vec_xst operations in the tiled path crash on AIX when writing near 4KB page boundaries due to strict memory protection. Fall back to mnpack implementation on AIX for stable execution.

- This patch fixes segmentation faults in q4_0 model inference on AIX PowerPC systems by disabling the tiled matrix multiplication path in llamafile's sgemm implementation.

- vec_xst operations crash on AIX when writing near 4KB page boundaries due to strict memory protection. The vec_xst instruction cannot write across page boundaries on AIX, and when the buffer offset lands at addresses like 0x1100ed000 (exactly at a page boundary), the write operation attempts to access unmapped memory, triggering a segfault.

- b8979: CUDA: fuse SSM_CONV + ADD(bias) + SILU (#22478)

- Adds a CUDA fusion for SSM_CONV + ADD(bias) + SILU. The existing SSM_CONV + SILU fusion didn't match on Mamba-1 and Mamba-2 layers (used by Nemotron-H, Granite-Hybrid, Jamba, and other Mamba-style hybrids) because of a bias ADD operation between the conv and the SILU.

- b8980: hexagon: make vmem and buffer-size configurable (#22487)

- This PR adds two new knobs to the Hexagon backend:

- GGML_HEXAGON_VMEM: allows overriding the default VMEM limit. The default is the same as before (around 3.2GB).

- b8984: ggml-webgpu: add fast mat-mat path for i-quants (#22504)

- Adds i-quant support to the WebGPU fast mat-mat path. Previously i-quants (IQ1_S, IQ1_M, IQ2_XXS, IQ2_XS, IQ2_S, IQ3_XXS, IQ3_S, IQ4_NL, IQ4_XS) only had a fast mat-vec kernel; mat-mat (prefill) fell back to the legacy non-tiled mul_mat.wgsl path. This PR adds the missing INIT_SRC0_SHMEM_IQ* blocks to mul_mat_decls.tmpl so the same shared memory dequant feeds both fast paths.

- Benchmarks report kernel-level throughput (GFLOPS) from test-backend-ops perf -o MUL_MAT at m=4096, n=512, k=14336. The register-tile column was measured by disabling the subgroup_matrix capability so the fallback fast path runs directly.

- b8990: vulkan: add get/set tensor 2d functions (#22514)

- Implement the 2d tensor copy functions that were added for TP support to the Vulkan backend. This shouldn't make a performance difference, but it was not much work since the 2d functions basically already existed.

- I also noticed that the interface comments for the functions were universally wrong, so I corrected them, too. Sorry about the pings that causes.


🐛 Bug Fixes

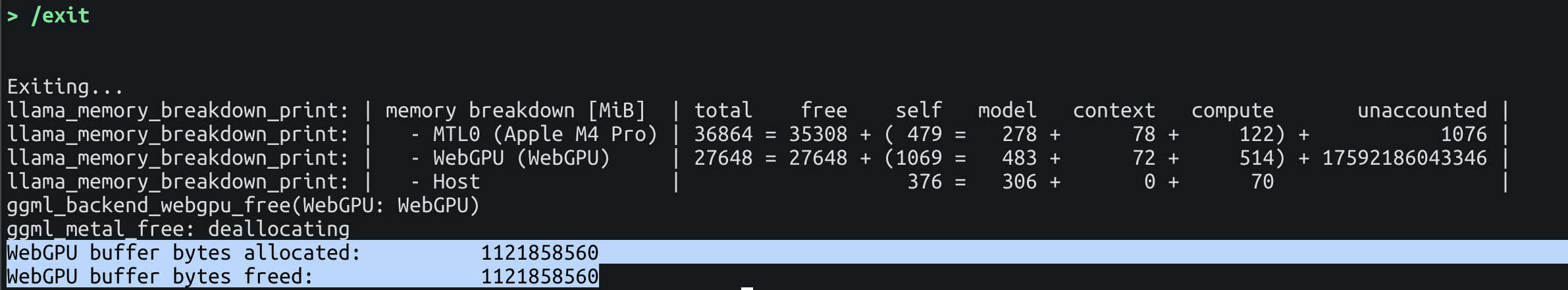

- b8948: common: Fix type casting for unaccounted memory calculation (#22424)

- Fix unaccounted memory showing huge numbers (like 2^44, since 2^44 = 2^64/1024/1024) when running llama-server --fit on.

- Changed unaccounted from size_t to int64_t so it can show negative values properly.

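The 2^44 figure follows directly from unsigned wrap-around: a small negative difference stored in a 64-bit size_t becomes roughly 2^64 bytes, and dividing by 1024*1024 gives roughly 2^44 MiB. A minimal sketch of that arithmetic, using ctypes to emulate the C integer types since Python integers never wrap; the function names are illustrative only.

```python
import ctypes

MiB = 1024 * 1024


def unaccounted_mib_size_t(total, accounted):
    """Old behavior: the difference is stored in an unsigned 64-bit size_t."""
    return ctypes.c_uint64(total - accounted).value // MiB


def unaccounted_mib_int64(total, accounted):
    """Fixed behavior: an int64_t keeps the sign of a negative difference."""
    value = ctypes.c_int64(total - accounted).value
    # Truncate toward zero, as C integer division does.
    return -(-value // MiB) if value < 0 else value // MiB
```

With 1000 MiB total and 1200 MiB accounted, the size_t version reports 2^44 - 200 MiB, while the int64_t version reports -200 MiB.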

- b8949: fix: rpc-server cache may not work in Windows environments (#22394)

- Even when cache is enabled on the rpc-server in a Windows environment, the rpc directory is not automatically created, and therefore, cache files within that directory are not created.

- Furthermore, only the first character of the cache file name is output to the log, making it difficult to notice that cache files are not being generated.


- b8957: ggml : revert to -lm linking instead of find_library (#22355)

- find_library(MATH_LIBRARY m) was introduced recently, but it breaks CUDA compilation with GGML_STATIC. I could not find any valid use case where we would prefer find_library over the standard -lm approach.

- This commit is also meant to start a discussion: if there is a valid reason to keep find_library(MATH_LIBRARY m), we should clarify what problem it was solving and find an alternative fix that does not break CUDA with GGML_STATIC.

- Found with installama.sh: https://github.com/angt/installama.sh/actions/runs/24885620138/job/72864816848

- b8968: TP: fix delayed AllReduce + zero-sized slices (#22489)

- Fixes https://github.com/ggml-org/llama.cpp/issues/22391 .

- The problem is that k-quants have a block size of 256 vs. the size of a single expert at 512. So for 3+ GPUs one of them ends up with a zero-sized slice. This would normally not be an issue since a zero-sized slice is supported; the corresponding nodes are disabled and the backend participates in the following AllReduce with a zeroed out buffer in order to receive the results of other backends. However, the interaction of a zero-sized slice and a delayed AllReduce for better MoE performance does not work correctly. For those the range of disabled nodes needs to be extended, otherwise one of the backends will have garbage data prior to the AllReduce.

- Using 3x RTX 4090, the Qwen 3.6 q4_K_M PPL on the first 512 tokens of Wikitext is 4.1590 for -sm layer; for -sm tensor on master it's 8.3604, and for -sm tensor with this PR it's 4.1554.
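The zero-sized slice follows from splitting only along whole quantization blocks. The sketch below is a simplified, hypothetical model of tensor-parallel splitting (equal whole-block shares, with any remainder going to the first devices), not the actual llama.cpp split logic. It shows that a 512-row expert with 256-element k-quant blocks has only two blocks, so a third GPU gets nothing.

```python
def split_rows(n_rows, block_size, n_devices):
    """Split n_rows into contiguous per-device slices of whole quantization blocks.

    Hypothetical model: each device gets an equal number of blocks and the
    first devices take any remainder.
    """
    if n_rows % block_size:
        raise ValueError("rows must be a multiple of the block size")
    n_blocks = n_rows // block_size
    base, extra = divmod(n_blocks, n_devices)
    return [(base + (1 if i < extra else 0)) * block_size for i in range(n_devices)]
```

split_rows(512, 256, 3) gives [256, 256, 0]; with a 32-element block size (as used by q8_0), the same split gives [192, 160, 160] and no device is left empty.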

- b8970: common: Intentionally leak logger instance to fix hanging on Windows (#22273)

- Added workaround for #22142. There are three points in this PR:

- Intentional leak of logger instance: ~common_log() called during the DLL teardown phase was causing hangs on Windows. The DLL teardown phase seems to be a fragile time for system calls like mutex lock, cond notify, thread join, etc., which did not give sane results. We work around this by intentionally leaking the logger instance to skip cleanup.

- b8971: ggml-webgpu: Fix bug in FlashAttention support check (#22492)

- https://github.com/ggml-org/llama.cpp/pull/22199 enabled FlashAttention in the browser (non subgroup-matrix paths). However, the check in supports-op had a fallback to the subgroup-matrix path if the new tile path wasn't supported (e.g., if the browser doesn't support subgroups). This caused an error when calculating some of the shader parameters. This PR fixes the issue by returning false early in the support check if none of the flashattention variants will work.

- fyi @ArberSephirotheca.

- b8972: ggml-cpu: cmake: append xsmtvdotii march for SpacemiT IME (#22317)

- When GGML_CPU_RISCV64_SPACEMIT=ON is set, ime1_kernels.cpp contains inline asm for the vmadot family, which requires the xsmtvdotii custom extension (the problem is described in some blogs and was confirmed on the K3 platform). The current CMakeLists does not include xsmtvdotii, so any toolchain that honours the explicit -march (tested with SpacemiT GCC 15.2) fails at the assembler stage:

- Error: unrecognized opcode `vmadot v16,v14,v0', extension `xsmtvdotii' required

- b8973: ggml-cuda: refactor fusion code (#22468)

- Refactor the fusion code to be a single function. Also fix a bug in the fusion code where it does not check the value of the env variable to disable fusion.

- b8982: spec : fix vocab compat checks (#22358)

- Fix the logic for checking compatibility of the special tokens in the target and draft vocabs.

- For example, this makes the vocabs of Qwen3.6 27B and Qwen3.5 0.8B compatible.

- b8986: CUDA: fix tile FA kernel on Pascal (#22541)

- Fixes https://github.com/ggml-org/llama.cpp/issues/22491 .

- The problem is that the new kernel for Mistral Small 4 is being compiled unconditionally with 32 columns per CUDA block. On Pascal that puts it above the 38 KiB per CUDA block shared memory limit. With this PR, 32 columns per block continue to be used on AMD, where this fits, while on Pascal 2 CUDA blocks with 16 columns each are used instead.

- b8989: spec: fix cli argument typo (#22552)

- Fix a typo in cli arguments


- b8992: Update llama-mmap to work with 32-bit emscripten (#22497)

- When compiling to 32-bit WebAssembly through Emscripten, std::fseek and std::ftell use a long, which is a 32-bit signed value. Unfortunately, this means that any offset in a file above 2GB overflows the maximum positive integer, leading to bad results. This fixes that by delegating to fseeko and ftello in Emscripten builds, which use a 64-bit off_t that can be interpreted correctly in both 32-bit and 64-bit WASM builds.

- Note that ggml does something similar in all cases: https://github.com/ggml-org/llama.cpp/blob/master/ggml/src/gguf.cpp#L25. However, I didn't make that full change here because I'm not sure if it would lead to issues in other places.

- For a little more context, this, in combination with the origin private file system (OPFS), allows models > 2GB to be loaded by the WebGPU backend in the browser without splitting the models into shards.

Additional Changes

12 minor improvements: 1 documentation, 6 examples, 5 maintenance.

Full Commit Range

- b8946 to b8992 (41 commits)

- Upstream releases: https://github.com/ggml-org/llama.cpp/compare/b8946...b8992

2026-04-27: Update to llama.cpp b8946

Summary

Updated llama.cpp from b8863 to b8946, incorporating 63 upstream commits with breaking changes, new features, and performance improvements.

Notable Changes

⚠️ Breaking Changes

- b8917: jinja : remove unused header (#22310)

- Remove unused header

- b8922: ggml-webgpu: enable FLASH_ATTN_EXT on browser without subgroup matrix (#22199)

- This PR addresses a few things:

- Clean up the vec path to remove the requirement for subgroup matrix.

- b8946: fix(graph): remove duplicate wo_s scale after build_attn (Qwen3, LLaMA) (#22421)

- Observed that `build_attn` in llama-graph.cpp already applies the NVFP4 per-tensor scale (`wo_s`), either via `build_lora_mm(wo, cur, wo_s)` or through an explicit `wo_s` multiplication.

- Also observed that these model builders (qwen3, qwen3moe, llama) also multiplied the

🆕 New Features

- b8863: ggml-cuda: flush legacy pool on OOM and retry (#22155)

- This adds a conservative fallback for the legacy CUDA/HIP pool allocator.

- On non-VMM setups, the legacy pool can end up holding cached free buffers that are individually too small for a new request, but still occupy enough VRAM to make the next allocation fail. In that case, this patch flushes the cached legacy-pool buffers and retries the allocation once before aborting.

- The normal hit path is unchanged. This is intended as a narrow mitigation for legacy-pool OOMs, not a broader allocator redesign. I validated the retry path locally with a synthetic OOM injection on a legacy-pool build.
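The flush-and-retry fallback can be sketched in Python as follows. `FakeDevice`, `LegacyPool`, and `OutOfMemory` are hypothetical stand-ins made up for this illustration. They are not ggml or CUDA APIs, and each buffer is modeled only by its size.

```python
class OutOfMemory(Exception):
    """Hypothetical stand-in for a device allocation failure."""

class FakeDevice:
    """Hypothetical device with a fixed amount of memory (stands in for VRAM)."""
    def __init__(self, capacity):
        self.capacity = capacity
        self.used = 0

    def malloc(self, size):
        if self.used + size > self.capacity:
            raise OutOfMemory(size)
        self.used += size
        return size  # a "buffer" is just its size in this sketch

    def free(self, buf):
        self.used -= buf

class LegacyPool:
    """Caches freed buffers; on OOM, flushes the cache and retries exactly once."""
    def __init__(self, device):
        self.device = device
        self.cached = []  # sizes of cached free buffers that still occupy memory

    def alloc(self, size):
        # Normal hit path (unchanged): reuse a cached buffer that is big enough.
        for i, buf in enumerate(self.cached):
            if buf >= size:
                return self.cached.pop(i)
        try:
            return self.device.malloc(size)
        except OutOfMemory:
            # Cached buffers are each too small but still hold memory:
            # release them all and retry once. A second failure propagates.
            self.flush()
            return self.device.malloc(size)

    def release(self, buf):
        self.cached.append(buf)  # keep it cached instead of freeing it

    def flush(self):
        for buf in self.cached:
            self.device.free(buf)
        self.cached.clear()

device = FakeDevice(capacity=100)
pool = LegacyPool(device)
a, b = pool.alloc(40), pool.alloc(40)
pool.release(a)
pool.release(b)     # 80 bytes are now cached, but each buffer is too small for 60
c = pool.alloc(60)  # first malloc fails, the cache is flushed, and the retry succeeds
```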

- b8868: llama-ext : fix exports (#22202)

- cont #22171

- Export new symbols.

- b8870: vulkan: Support F16 OP_FILL (#22177)

- Support f16 for OP_FILL. This came up in https://github.com/ggml-org/llama.cpp/pull/21149.

- AI usage disclosure: YES, I used AI to write this, but I reviewed it.

- b8874: arg : add --spec-default (#22223)

- Add the `--spec-default` flag, which enables the default configuration for speculative decoding.

- b8878: Hexagon: DAIG op (#22195)

- AI usage disclosure: Yes, to understand some basics of how to add a hexagon op

- b8881: hexagon: add support for FILL op (#22198)

- Add support for FP32 and FP16 FILL op in hexagon backend.

test-backend-ops -b HTP0 -o FILL-

- b8882: ggml-webgpu(shader): support conv2d kernels. (#21964)

- In this PR, we implemented the conv2d shader kernel to support VL models that require conv2d operations.

- Backend ops tests all passed. I haven't tested this with real models yet.

- b8891: ggml-webgpu: Add fused RMS_NORM + MUL (#21983)

- This PR adds the initial kernel fusion to the WebGPU backend with RMS_NORM + MUL (similar to https://github.com/ggml-org/llama.cpp/pull/14800).

- Unfortunately, on my device (M2, Metal 4) the performance on the major models is almost the same with this implementation.

- b8892: [WebGPU] Implement async tensor api and event api (#22099)

- This PR implements the async tensor and event APIs that the WebGPU backend needs to load models in async loading mode. This is needed because we have strict memory requirements when running wllama with the WebGPU backend (especially on Safari and on mobile devices). The async tensor API uses only four 1 MB buffers to load a model, while the default loading mode uses a single resizable buffer. Using the async tensor API reduces our memory footprint by ~20-25%.

- Some figures on memory usage in wllama with these and other changes:

![steady_state_bar_cold](https://github.com/user-attachments/assets/189bd1ee-4de1-4d9d-8da2-2e6f3a6c9e5e)

- b8893: Add hipGraph and VMM support to ROCM (#11362)

- This adds hipGraph support, disabled by default. Essentially, this just involves adding the relevant HIP defines to ggml-cuda/vendors/hip.h.

- Currently, it seems that hipGraph doesn't improve performance at all. Looking at rocprof, launching the kernels this way seems to yield no decrease in overhead, while building the graph adds overhead. Presumably, since this API was recently added to ROCm and is still marked as beta (https://rocmdocs.amd.com/projects/HIP/en/latest/reference/hip_runtime_api/modules/graph_management.html), it has not been tuned for performance.

- I still think it's useful to have this, since this will likely change in the future, and on some hardware configurations it may already help.

- b8913: ggml-webgpu: handle the buffer aliasing for rms fuse (#22266)

- This PR addresses an edge case of #21983. When I loaded and ran a model in the browser, I hit this error:

- ggml_webgpu: Device error! Reason: 2, Message: Writable storage buffer binding aliasing found between [BindGroup "RMS_NORM_MUL"] set at bind group index 0, binding index 0, and [BindGroup "RMS_NORM_MUL"] set at bind group index 0, binding index 2, with overlapping ranges (offset: 5242880, size: 4096) and (offset: 5242880, size: 4096) in [Buffer "tensor_buf3"].

- b8914: hexagon: add SOLVE_TRI op (#21974)

- This PR adds SOLVE_TRI op support for Hexagon, using HVX to accelerate the calculation.

- All tests pass with `test-backend-ops`.

- b8935: opencl: add iq4_nl support (#22272)

- This PR adds support for iq4_nl. It is slightly larger than usual because it contains both a general implementation and an Adreno-specific implementation.

- b8944: ggml : use 64 bytes aligned tile buffers (#21058)

- While trying to fix #20824, I couldn't reproduce it so far, but forcing alignment could help and doesn't hurt.

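The Python sketch here illustrates what 64-byte alignment means for buffer offsets. It is not ggml's code. It shows the usual power-of-two round-up, plus a hypothetical helper `tile_offsets` that lays out consecutive tile buffers so each one starts on a 64-byte boundary.

```python
ALIGNMENT = 64  # bytes; the round-up trick requires a power of two

def align_up(offset, alignment=ALIGNMENT):
    """Round offset up to the next multiple of a power-of-two alignment."""
    assert alignment > 0 and alignment & (alignment - 1) == 0
    return (offset + alignment - 1) & ~(alignment - 1)

def tile_offsets(sizes, alignment=ALIGNMENT):
    """Hypothetical helper: start offset of each tile when packed back to back."""
    offsets = []
    cursor = 0
    for size in sizes:
        start = align_up(cursor, alignment)  # pad so the tile starts aligned
        offsets.append(start)
        cursor = start + size
    return offsets

print(tile_offsets([100, 30, 64]))
```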

🚀 Performance Improvements

- b8893: HIP: flip GGML_HIP_GRAPHS to on (#22254)

- In #11362, HIP graphs were disabled by default because, at the time, their performance impact was negative. Thanks to improvements in ROCm and in how we use and construct graphs, this is no longer true, so let's change the default.

- Test setup: gfx1100 @ 340 W.

- b8931: CUDA: reduce MMQ stream-k overhead (#22298)

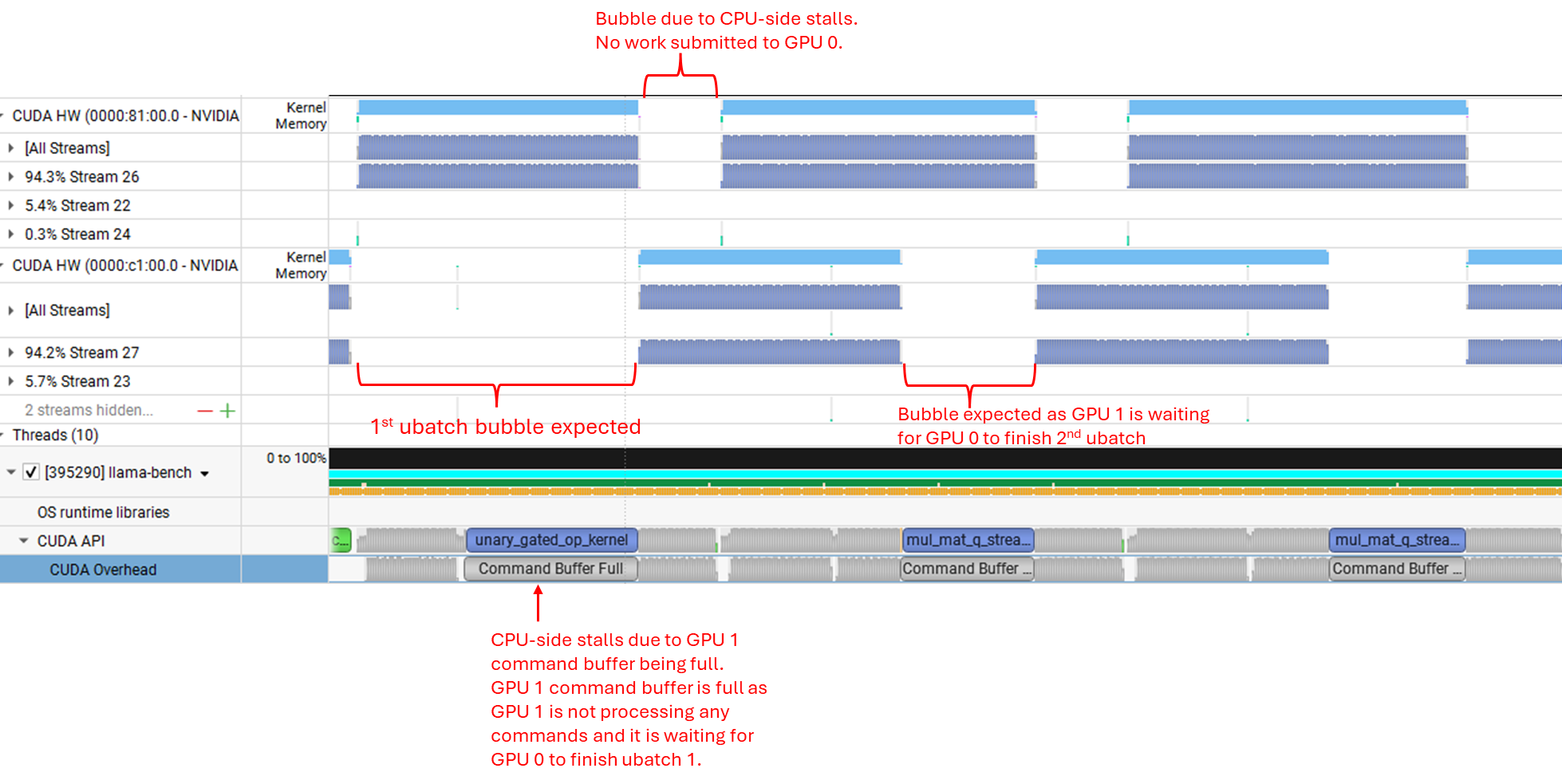

- This PR reduces the stream-k overhead in the MMQ kernel by using `fastdiv`, which precomputes some values on the CPU to speed up integer divisions.

- Also, as originally suggested by @nisparks in https://github.com/ggml-org/llama.cpp/pull/22170 and https://github.com/ggml-org/llama.cpp/pull/22252, it can optionally use tiling rather than a stream-k decomposition. The implementation in this PR differs from the linked ones. Those compile an extra variant of the kernel with the tiling hard-coded (as is done for relatively old GPUs). This PR instead scales the number of CUDA blocks dynamically to the number of tiles, so that each CUDA block works on exactly one tile. If it turns out that there is a meaningful performance difference, it may still make sense to compile the extra kernels.

- In this PR, the choice of whether to use stream-k does not explicitly depend on MoE. Instead, it is determined from the efficiency loss that tiling would incur: if that loss is <= 10%, tiling is used in order to skip the stream-k fixup.
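The `fastdiv` trick replaces an integer division by a known divisor with a multiply-high, an add, and a shift, using a multiplier that is precomputed once on the host. Here is a Python sketch of the classic Granlund-Montgomery round-up method for 32-bit unsigned values. I'm assuming ggml's version follows this scheme, but its exact formulas and input-range limits may differ.

```python
def init_fastdiv(d):
    """Precompute (multiplier, shift) for dividing 32-bit unsigned ints by d."""
    if not 1 <= d < 2**32:
        raise ValueError("divisor must be in [1, 2**32)")
    shift = (d - 1).bit_length()                  # ceil(log2(d)); 0 when d == 1
    mult = (2**32 * ((1 << shift) - d)) // d + 1  # fits in 32 bits
    return mult, shift

def fastdiv(n, mult, shift):
    """Compute n // d for 0 <= n < 2**32 using the precomputed values."""
    mulhi = (n * mult) >> 32        # upper 32 bits of the 64-bit product
    return (mulhi + n) >> shift     # Python ints cannot overflow here

mult, shift = init_fastdiv(10)
print(fastdiv(12345678, mult, shift))
```

In 32-bit C arithmetic, `mulhi + n` can overflow for large `n`. A GPU implementation therefore needs either a restricted input range or a wider intermediate. Python integers sidestep that in this sketch.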

- b8936: ggml-cpu: optimize avx2 q6_k (#22345)

- Basically I took the optimizations I did for AVX a while back and brought them over to AVX2.


- b8941: ggml-webgpu: performance-portable matmul tuning knobs (#22241)

- This PR updates the tuning knobs for the WebGPU register tiling and subgroup matmul kernels to improve performance across GPUs. These suggested knobs are based on exhaustive data collection from four GPUs: NVIDIA RTX 5080 FE, AMD Radeon RX 7900 XT, Intel Arc B580, and Apple M2. After running a performance portability analysis on the exhaustive data, we found configurations that provide better average performance while minimizing worst-case slowdowns.


🐛 Bug Fixes

- b8871: metal : workaround macOS GPU interactivity watchdog (#22216)

- fix #20141

- fix #22214

- See https://github.com/ggml-org/llama.cpp/issues/20141#issuecomment-4273461320 for more information.

- b8873: Fix build for Android (#125)

- The project can be built for Android with NDK and CMake like this:

- cmake -DCMAKE_TOOLCHAIN_FILE=$NDK/build/cmake/android.toolchain.cmake -DANDROID_ABI='arm64-v8a' -DANDROID_PLATFORM=android-23 ..

- However, vdotq_* intrinsics are not available on Android. Fix this by checking for ANDROID and, in that case, using the code that commit 84d9015c replaced.

- b8873: Fix potential licensing issue (#126)

- I'm not an expert on licenses, but:

- If you attribute Facebook in the README and description, you essentially admit or imply that this repo is a modification of their repo. Facebook's repo has a "GPL-3.0 license", which would mean this repo should also be GPL-3.0 licensed, and that is something we don't want.

- This PR fixes that potential language issue.

- b8880: ggml-webgpu: reset CPU/GPU profiling time when freeing context (#22050)

- This PR fixes https://github.com/ggml-org/llama.cpp/issues/22049.

- Running the command from the above issue shows that the profiling times are now reset for each test.

- b8882: ggml webgpu: Move to no timeout for WaitAny in graph submission to avoid deadlocks (#20618)

- This is another attempt to avoid deadlocks in the llvm-pipe Vulkan backend. After some debugging on the GitHub CI, I've seen cases where it seems to get stuck within the `WaitAny` call itself, even after the timeout has elapsed. That leads me to believe there is a bug in the interface between Dawn and llvm-pipe. Setting the timeout to 0 on the WebGPU side creates a busy-wait loop on the ggml side, but hopefully avoids deadlocking in most scenarios. In practice, the busy-wait loop does not occur often in my tests.

- b8888: sycl: Improve mul_mat_id memory efficiency and add BF16 fast path (#22119)

- This PR addresses memory exhaustion issues (`UR_RESULT_ERROR_OUT_OF_HOST_MEMORY`) encountered on SYCL Level Zero when handling large-vocabulary models and MoE architectures.

- Key changes:

- BF16 fast path via DNNL:

- b8901: metal : fix event synchronization (#22260)

- cont #20463

- cont #18919

- Fix the event synchronization logic when using virtual Metal devices.

- b8905: ci : fix build number for sycl release (#22283)

- Fix SYCL release binaries having `b1` as their build number.

- The build number was not calculated correctly due to the checkout depth.

- b8919: common : fix jinja warnings with clang 21 (#22313)

- Fix jinja warnings with clang 21

- b8933: chat: fix handling of space in reasoning markers (#22353)

- Extracted from #22162 (thanks @roj234); this is just the fix for the parser.

- We're putting off the prefill changes for a further PR (prepared by @aldehir), so I'm taking this fix as a standalone change.

- b8937: cpu : re-enable fast gelu_quick_f16 (#22339)

- Enable the disabled `ggml_vec_gelu_quick_f16`.

- I couldn't find any reason why it was disabled, and the current version is 10-20x slower.

- Another puzzling fact is that we use the same table for `ggml_vec_gelu_quick_f32` (since `GGML_GELU_QUICK_FP16` is enabled), so there should be no issue?
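The Python sketch here shows the table-lookup idea behind a fast fp16 GELU-quick. The result for every one of the 65,536 fp16 bit patterns is precomputed once. Evaluation then rounds the input to fp16 and indexes the table. The sketch assumes the common `x * sigmoid(1.702 * x)` form of GELU-quick. ggml's exact coefficient, table contents, and indexing may differ.

```python
import math
import struct

GELU_QUICK_COEF = 1.702  # assumed coefficient of the x * sigmoid(1.702 * x) form

def gelu_quick(x):
    z = GELU_QUICK_COEF * x
    # Numerically stable sigmoid: never calls exp() on a large positive argument.
    if z >= 0:
        s = 1.0 / (1.0 + math.exp(-z))
    else:
        e = math.exp(z)
        s = e / (1.0 + e)
    return x * s

def half_bits_to_float(bits):
    return struct.unpack("<e", struct.pack("<H", bits))[0]

def float_to_half_bits(x):
    # Rounds x to the nearest fp16 value; raises OverflowError outside the fp16 range.
    return struct.unpack("<H", struct.pack("<e", x))[0]

# One entry per fp16 bit pattern, each holding the fp16 bits of the result.
GELU_QUICK_TABLE = [
    float_to_half_bits(gelu_quick(half_bits_to_float(b))) for b in range(1 << 16)
]

def gelu_quick_f16(x):
    """Round x to fp16, then look the precomputed result up in the table."""
    return half_bits_to_float(GELU_QUICK_TABLE[float_to_half_bits(x)])
```

Building the table costs 65,536 evaluations once. After that, each call is a single indexed load, which is why a table path can be much faster than evaluating `exp` per element.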

- b8940: [Tensor Parallel] Fix recurrent state serialization for partial reads and writes (#22362)

- The previous code worked only for full tensor reads and writes, and hit the `GGML_ASSERT(size == ggml_nbytes(tensor));` assertion when tested with llama-server.

Additional Changes

30 minor improvements: 7 documentation, 18 examples, 5 maintenance.

Full Commit Range

- b8863 to b8946 (63 commits)

- Upstream releases: https://github.com/ggml-org/llama.cpp/compare/b8863...b8946

2026-04-21: Update to llama.cpp b8863

Summary

Updated llama.cpp from b8831 to b8863, incorporating 32 upstream commits with breaking changes, new features, and performance improvements.

Notable Changes

⚠️ Breaking Changes

- b8839: model : refactor bias tensor variable names (#22079)

- https://github.com/ggml-org/llama.cpp/pull/21971#pullrequestreview-4118994933

- Removes duplicate tensor variables.

- b8843: cmake: remove CMP0194 policy to restore MSVC builds (#21934)

- Thanks to @oobabooga for catching this: https://github.com/ggml-org/llama.cpp/pull/21630#issuecomment-4248308373

- PR #21630 added CMP0194 NEW to silence a warning, but it broke Windows MSVC+Ninja.

- The first attempt at scoping ASM to KleidiAI hit an unrelated CMake scoping issue on the ARM+KleidiAI self-hosted runner, so I pivoted to a minimal revert. This removes only the 6-line CMP0194 policy block from ggml/CMakeLists.txt. project("ggml" C CXX ASM) is left untouched, which is exactly the pre-#21630 state that was working on all platforms. The CMake 4.1+ warning returns, but no platform breaks.

- b8848: HIP: Remove unnecessary NCCL_CHECK (#21914)

- In an intermediate state of #19378, RCCL use was behind its own define (GGML_USE_RCCL) so this was required. Before merging, #19378 was changed so that GGML_USE_NCCL enables both NCCL and RCCL, so NCCL_CHECK in common.cu became visible on HIP. At this point NCCL_CHECK in hip.h should have been removed, but this was forgotten.

🆕 New Features

- b8833: ggml-webgpu: fix compiler warnings and refactor FlashAttention encoding (#21052)

- This PR doesn't add new functionality, but does the following:

- Removes compiler warnings due to usage of C++20 initializers and potentially unsafe casting, which cleans up the compilation and is a step towards enabling CI on the ggml NVIDIA machine

- Refactors FlashAttention encoding to avoid custom structs and to be more in line with how the other operations are encoded.

- b8841: rpc : refactor the RPC transport (#21998)

- Move all transport related code into a separate file and use the socket_t interface to hide all transport implementation details.

- AI usage disclosure: NO

- b8843: cmake: fix CMP0194 warning on Windows with MSVC (#21630)

- Fix CMP0194 CMake policy warning when building with MSVC on Windows and CMake 4.1+.

- The `ggml` subproject enables `ASM` globally via `project("ggml" C CXX ASM)` for the Metal (macOS) and KleidiAI (ARM) backends. On Windows/MSVC, no assembler sources are used, but CMake 4.1+ warns because `cl.exe` is not a valid ASM compiler.

- This sets `CMP0194` to `NEW` before the `project()` call, guarded by `if (POLICY CMP0194)` for backward compatibility with older CMake versions. This follows the same pattern used in `ggml-vulkan/CMakeLists.txt` (CMP0114, CMP0147).

- b8850: CUDA: refactor mma data loading for AMD (#22051)

- On master, the AMD support in `mma.cuh` is in a half-finished state. This PR refactors the code a bit and makes the usage more consistent, reducing the need for special handling in `fattn-mma-f16.cuh` and `mmq.cuh`. Specifically:

- More generic implementations for `load_ldmatrix`. The current usage of `load_generic` was not quite correct, since it assumed a memory alignment that is only guaranteed for `load_ldmatrix`.

- Added a generic implementation for `load_ldmatrix_trans`. I experimented with transposing the data upon load in the FA kernel but was unable to get good performance. However, using `ggml_cuda_memcpy_1` is beneficial, including for Volta, which also uses this path.

- b8853: [SYCL] Fix reorder MMVQ assert on unaligned vocab sizes (#22035)

- Fixes #22020. The four SYCL reorder mul_mat_vec_q dispatchers (Q4_0, Q8_0, Q4_K, Q6_K) asserted that block_num_y was a multiple of 16 subgroups. Any model whose vocab size is not divisible by 16 aborted on load when the output projection hit the assert. The original report was HY-MT 1.5 1.8B (vocab 120818) on an Arc B570.

- I replaced the hard assert with launch-grid padding. `block_num_y` now rounds up to a whole number of subgroup-sized workgroups, and the kernel's existing `if (row >= nrows) return;` guard skips the padded rows. The row value is uniform across a subgroup (it does not depend on `get_local_linear_id`), so `sycl::reduce_over_group` stays safe.

- For aligned-vocab models, `ceil_div(nrows, 16) * 16 == nrows`, so `block_num_y` is unchanged and the kernel launch is identical to the pre-patch code.
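This is a simplified Python model of the launch-grid padding. The grid is rounded up to a multiple of 16 rows, and the `row >= nrows` guard discards the extra work-items. Treating the grid as a flat list of rows, rather than as SYCL workgroups and subgroups, is a simplification made for this sketch.

```python
PAD_MULTIPLE = 16  # padding granularity taken from the PR text

def ceil_div(a, b):
    return (a + b - 1) // b

def padded_rows(nrows, multiple=PAD_MULTIPLE):
    """Round the grid up instead of asserting nrows % multiple == 0."""
    return ceil_div(nrows, multiple) * multiple

def launch(nrows):
    """Simulate one work-item per launched row; the guard drops padded rows."""
    processed = []
    for row in range(padded_rows(nrows)):
        if row >= nrows:  # mirrors the kernel's `if (row >= nrows) return;`
            continue
        processed.append(row)
    return processed

print(padded_rows(120818), padded_rows(4096))
```

For the vocab size from the original report (120818), the grid grows by 14 padded rows. For an aligned size such as 4096, it is unchanged.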

- b8858: ggml-cpu: Optimized x86 and generic cpu q1_0 dot (follow up) (#21636)

- Hello, I have prepared an optimized implementation of the CPU q1_0 dot product (mainly for Bonsai LLM models). This is a continuation of PR https://github.com/PrismML-Eng/llama.cpp/pull/10, where a list of the experiments conducted and some other benchmark results can be found.

- More efficient (less bit math and multiplications) generic implementation of dot product for (q1_0; q8_0)

- x86 SIMD specific implementations of dot product for (q1_0; q8_0) for most of the realistic x86_64 targets (from SSSE3 to AVX2)

- b8860: Tensor-parallel: Fix delayed AllReduce on Gemma-4 MoE (#22129)

- Skip forward past nodes that don't consume the current node, and allow a chain of MULs.

- When `down_exps_s` is set, `build_moe_ffn` pulls the scale tensor in via reshape/repeat/get_rows. Topological sort places those nodes between `mul_mat_id` and the MUL that consumes it, so the existing `nodes[id+1]` check never sees an ADD_ID or MUL and fails.

- The scale MUL is followed by a second MUL; the old code only accepted one.

- b8863: ggml-cuda: flush legacy pool on OOM and retry (#22155)

- This adds a conservative fallback for the legacy CUDA/HIP pool allocator.

- On non-VMM setups, the legacy pool can end up holding cached free buffers that are individually too small for a new request, but still occupy enough VRAM to make the next allocation fail. In that case, this patch flushes the cached legacy-pool buffers and retries the allocation once before aborting.

- The normal hit path is unchanged. This is intended as a narrow mitigation for legacy-pool OOMs, not a broader allocator redesign. I validated the retry path locally with a synthetic OOM injection on a legacy-pool build.

🚀 Performance Improvements

- b8846: Reduce CPU overhead in meta backend: cache subgraph splits when cgraph is unchanged (#22041)

- Skip per-call subgraph construction in `ggml_backend_meta_graph_compute` when the same `ggml_cgraph` is used consecutively.

- Assign a `uid` to every sub-graph so that CUDA's fast uid check path is hit too.

- Benchmarked on 2x RTX 5090.
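This minimal Python sketch shows the caching idea: when the same graph object is passed again, the previous split result is reused. `MetaBackend` and its methods are hypothetical. An identity check alone cannot detect a graph that was mutated in place, so treat this as a simplification.

```python
class MetaBackend:
    """Hypothetical sketch: reuse subgraph splits while the same graph is passed."""

    def __init__(self):
        self._last_graph = None
        self._cached_splits = None
        self.split_builds = 0  # counts how often the expensive step actually ran

    def _build_splits(self, graph):
        self.split_builds += 1
        # Stand-in for the expensive per-call subgraph construction.
        return [graph[i:i + 2] for i in range(0, len(graph), 2)]

    def compute(self, graph):
        if graph is not self._last_graph:  # a different graph object: rebuild
            self._cached_splits = self._build_splits(graph)
            self._last_graph = graph
        return self._cached_splits
```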

- b8853: [SYCL] Add Q8_0 reorder optimization for Intel GPUs (~3x token generation speedup) (#21527)

- Extends the existing SYCL reorder optimization (currently Q4_0/Q4_K/Q6_K) to support Q8_0

- Q8_0 token generation on Intel Arc Pro B70 (Xe2/Battlemage): 4.88 t/s → 15.24 t/s (3.1x faster)

- Memory bandwidth utilization improves from 21% to 66% of theoretical maximum

- b8857: ggml-webgpu: updated matrix-vector multiplication (#21738)

- Improved performance of the matrix-vector multiplication kernel.

🐛 Bug Fixes

- b8832: CUDA: use LRU based eviction for cuda graphs (#21611)

- Since #18934 introduced per-node graphs so that multiple splits can have CUDA graphs, there are cases where the node pointers in `ggml_cgraph` keep changing. This leaves the map unbounded and leads to memory leaks (e.g. #20315).

- This PR fixes the memory leaks by evicting CUDA graphs with an LRU policy.
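LRU eviction keeps memory bounded even when node pointers keep changing. The small Python cache here illustrates this. It is keyed by an arbitrary identifier standing in for a node pointer, and it never holds more than `max_entries` graphs. `GraphCache`, its capacity, and the `create` callback are assumptions for this sketch, not the CUDA backend's real data structures.

```python
from collections import OrderedDict

class GraphCache:
    """Bounded LRU cache: key -> graph, evicting the least recently used entry."""

    def __init__(self, max_entries):
        self.max_entries = max_entries
        self._entries = OrderedDict()

    def get_or_create(self, key, create):
        if key in self._entries:
            self._entries.move_to_end(key)     # mark as most recently used
            return self._entries[key]
        graph = create()
        self._entries[key] = graph
        if len(self._entries) > self.max_entries:
            self._entries.popitem(last=False)  # evict the least recently used
        return graph

    def __len__(self):
        return len(self._entries)
```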

- b8836: ci : free disk space for rocm release (#22012)

- Fix the `Release` workflow by freeing up disk space on the ROCm runner image.

- Recent failures:

- https://github.com/ggml-org/llama.cpp/actions/runs/24517121219/job/71664214247

- b8837: Fix meta backend tensor reads for split tensors during state serialization (#22063)

- This PR fixes a crash when saving recurrent state with tensor-split models using the meta backend. The previous code assumed that a tensor read would always map to a single segment, which is not always true when -sm tensor is enabled. The fix handles multi-segment tensor reads correctly instead of hitting the split_state.n_segments == 1 assertion. This should allow checkpoint/state serialization to work reliably with tensor-parallel CUDA setups. Fixes #22058

- b8849: common/autoparser : allow space after tool call (#22073)

- Allow whitespace after a tool call for tagged outputs. Nemotron Nano 3 wants to emit `<tool_call>\n`, but is then constrained to produce another tool call, since the last tool call is not allowed to end in `\n`.

- Fixes #22043

- b8855: fix: GLM-DSA crash in llama-tokenize when using vocab_only (#22102)

- When running llama-tokenize with GLM-DSA models, the process crashes with a fatal error in llama-hparams.cpp. This happens because vocab_only mode skips the full hparams loading, leaving n_layer and the MLA params uninitialized. However, print_info still calls n_embd_head_k_mla(), which internally falls back to n_embd_head_k(0) and hits the abort when n_layer is 0. Fixed by guarding the DeepSeek2/GLM-DSA/Mistral4 print block, consistent with how other non-vocab hparams are already handled in print_info. Fixes #22026

- b8859: TP: fix 0-sized tensor slices, AllReduce fallback (#21808)

- Partially fixes https://github.com/ggml-org/llama.cpp/issues/21765 .

- With Qwen 3.5 26b a4b27b there are only 2 KV heads, so with 3+ GPUs some of them get zero-sized slices of the data. This edge case is not handled correctly on master. This PR disables the corresponding nodes and memsets the AllReduce buffer to 0, so that after the AllReduce all GPUs have the correct data. For now, the AllReduce fallback implementation zeroes the buffer via `GGML_SCALE` with a factor of `0.0f`. This is not safe w.r.t. NaNs, but we currently seem to lack the tooling to properly memset a tensor as part of a `ggml_cgraph`. The same issue is present in `llm_graph_context::build_rs`.

- Additionally, on master the synchronization of 3+ GPUs is not handled correctly for the AllReduce fallback. In those cases, 2+ reduction steps are needed, but the same buffer is used for each step, so there are race conditions. This PR extends the number of buffers accordingly.
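The NaN caveat above is easy to reproduce. Multiplying by 0.0 zeroes finite values, but it leaves NaN as NaN and turns infinity into NaN, following IEEE 754 semantics, which Python floats also use. Here is a minimal Python illustration. `zero_by_scaling` and `zero_by_memset` are hypothetical helpers, not ggml functions.

```python
def zero_by_scaling(values, factor=0.0):
    """Try to 'zero' a buffer GGML_SCALE-style by multiplying each element by 0.0."""
    return [v * factor for v in values]

def zero_by_memset(values):
    """What a real memset to zero produces: every element becomes 0.0."""
    return [0.0] * len(values)

stale = [1.5, -2.0, float("nan"), float("inf")]
print(zero_by_scaling(stale))  # [0.0, -0.0, nan, nan]
print(zero_by_memset(stale))   # [0.0, 0.0, 0.0, 0.0]
```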

Additional Changes

10 minor improvements: 9 examples, 1 maintenance.

Full Commit Range

- b8831 to b8863 (32 commits)

- Upstream releases: https://github.com/ggml-org/llama.cpp/compare/b8831...b8863

2026-04-17: Update to llama.cpp b8828

Summary

Updated llama.cpp from b8816 to b8828, incorporating 11 upstream commits with new features and performance improvements.

Notable Changes

🆕 New Features

- b8816: ggml: add graph_reused (#21764)

- Add a `reused` member variable to `ggml_cgraph` so backends can take advantage of the graph reuse functionality. Currently, when graph reuse is invoked, the CUDA backend still performs the property-change check to figure out whether the graph has changed. Graph reuse (to my understanding) already guarantees that it has not. This helps bypass a mildly expensive O(n) check.

- b8827: opencl: refactor q8_0 set_tensor and mul_mat host side dispatch for Adreno (#21938)

- The q8_0 set_tensor and mul_mat host side dispatch code for Adreno is a bit messy. This PR does some refactoring to make it cleaner and follow the same pattern as more recently added quantizations, e.g., q4_1, etc.

- b8828: model : Gemma4 model type detection (#22027)

- Adds model type detection logic for Gemma4 31B and 26BA4B.

- This change should be purely cosmetic; it fixes the "?B" model names shown by llama-bench, etc.

🚀 Performance Improvements

- b8822: opencl: add q5_K gemm and gemv kernels for Adreno (#21595)

- Add Q5_K GEMM and GEMV kernels to the Adreno backend to improve performance for Q5_K quantized models.

- b8824: hexagon: optimize HMX matmul operations (#21071)

- Type safety and code robustness:

- Replaced `int` with `size_t` for variables representing sizes, indices, and tile counts throughout the codebase, to prevent potential integer overflows and improve correctness (e.g., `n_col_tiles`, `n_row_tiles`, loop indices).

- Refactored tile and row/column stride calculations to use `size_t`, and clarified index calculations in matrix operations, which improves code clarity and reduces the risk of subtle bugs.

🐛 Bug Fixes

- b8823: model: using single llm_build per arch (#21970)

- Prepare for https://github.com/ggml-org/llama.cpp/issues/21966

- Using a single `llm_build_*` class per arch will make the migration a bit easier.

- Example before:

Additional Changes

5 minor improvements: 1 documentation, 4 examples.

- b8825: cmake: use glob to collect src/models sources (#22005)

- The goal is to make https://github.com/ggml-org/llama.cpp/pull/22004 a bit easier

- b8821: server: use random media marker (#21962)

- Fix https://github.com/ggml-org/llama.cpp/issues/21955

- Generate a random media marker each time the server is launched. The string is random enough that a collision is practically impossible.

- How random? 32 characters, 0-9a-zA-Z, making it 62^32 combinations. And according to math stackexchange:
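The Python sketch here generates a marker like the one described and works through the 62^32 arithmetic. The use of `secrets` and the alphabet ordering are my assumptions. The PR text does not say which random generator llama-server uses, and the format surrounding the marker is not modeled.

```python
import math
import secrets
import string

ALPHABET = string.digits + string.ascii_lowercase + string.ascii_uppercase  # 0-9a-zA-Z
MARKER_LEN = 32

def random_media_marker(length=MARKER_LEN):
    """Draw each character independently and uniformly from the 62-symbol alphabet."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))

combinations = len(ALPHABET) ** MARKER_LEN   # 62**32
bits = MARKER_LEN * math.log2(len(ALPHABET))  # entropy in bits
print(random_media_marker())
print(f"{combinations:.3e} combinations, ~{bits:.1f} bits")
```

At roughly 190 bits of entropy, a user prompt that accidentally contains the exact marker is far less likely than any realistic hardware fault.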

- b8821: server: tests: fetch random media marker via /apply-template (#21962) (#21980)

- Fix CI

- b8826: cli : use get_media_marker (#22017)

- cont #21962

- Fixes #22010

- `llama-cli` still used `mtmd_default_marker`, which returns the old static marker.

Full Commit Range

- b8816 to b8828 (11 commits)

- Upstream releases: https://github.com/ggml-org/llama.cpp/compare/b8816...b8828

2026-04-16: Update to llama.cpp b8809

Summary

Updated llama.cpp from b8804 to b8809, incorporating 7 upstream commits with new features and performance improvements.

Notable Changes

🆕 New Features

- b8806: cuda: Q1_0 initial backend (#21629)

- Follow up after merging of Q1_0 CPU PR. This PR adds the relevant CUDA backend.

- It also seems to work for AMD in some cases, which was a nice surprise :)

- See a live demo of Bonsai 8B using these CUDA kernels and `llama-server` on the Hugging Face space prism-ml/Bonsai-demo. It runs on an L40S GPU and gets decent speeds. Each request runs on one GPU behind a naive load balancer (just for demo purposes).

🚀 Performance Improvements

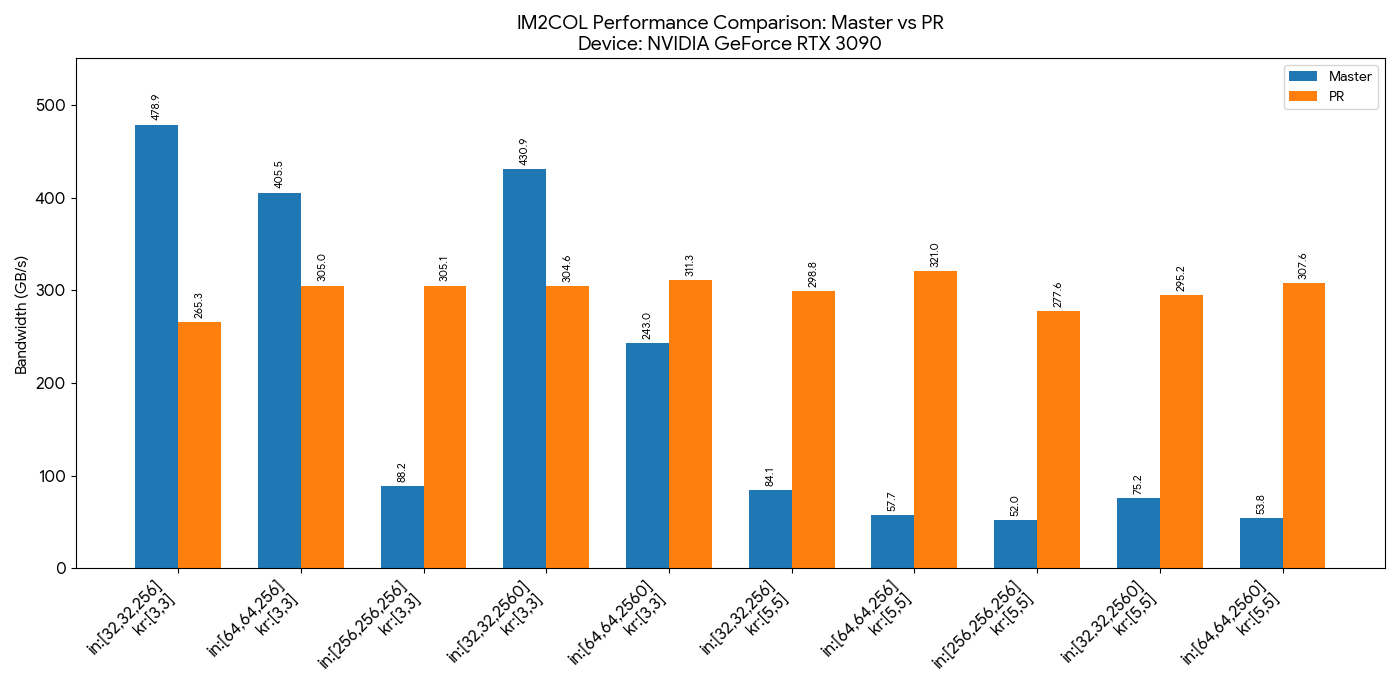

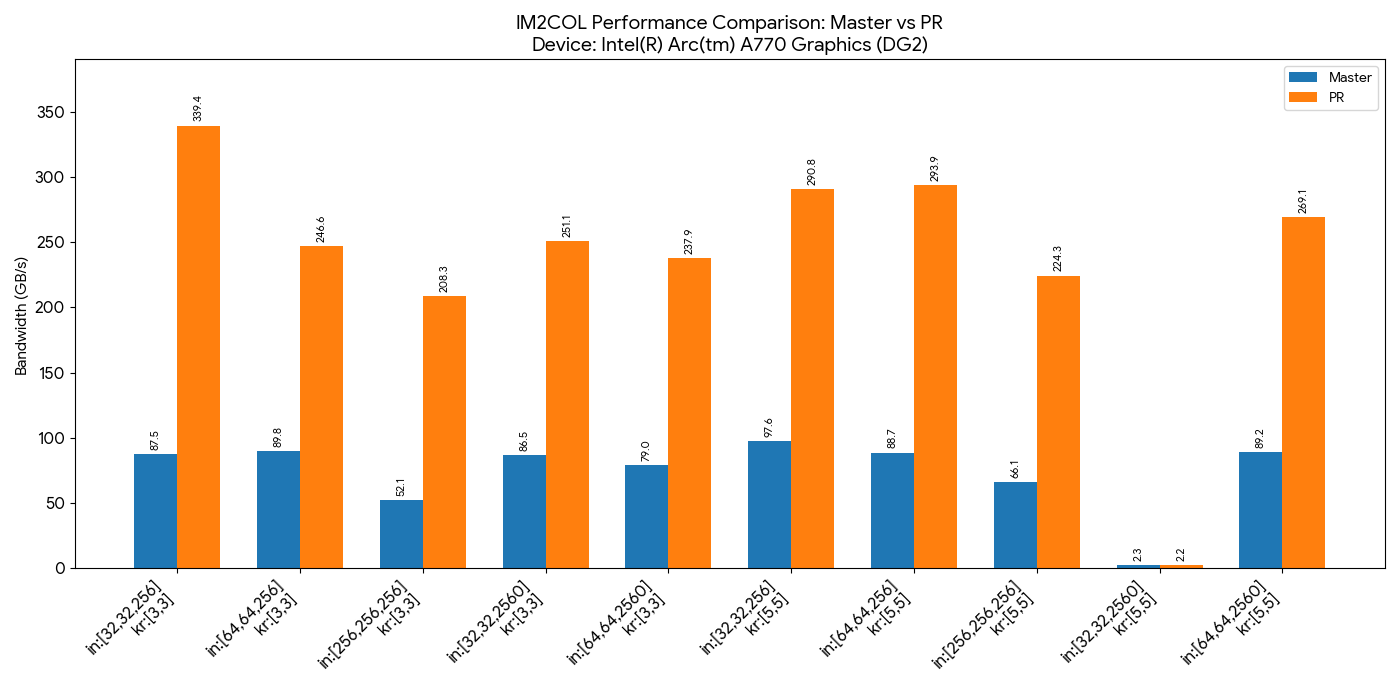

- b8807: vulkan: optimize im2col (#21713)

- The current layout runs very slowly in some cases, to the point that drivers time out (#20249). I swapped the IM2COL work dimensions to enable coalesced writes and capped the number of workgroups spawned to avoid some bad cases.

- b8809: [SYCL] Add Q8_0 reorder optimization for Intel GPUs (~3x token generation speedup) (#21527)

- Extends the existing SYCL reorder optimization (currently Q4_0/Q4_K/Q6_K) to support Q8_0

- Q8_0 token generation on Intel Arc Pro B70 (Xe2/Battlemage): 4.88 t/s → 15.24 t/s (3.1x faster)

- Memory bandwidth utilization improves from 21% to 66% of theoretical maximum

Additional Changes

4 minor improvements: 3 documentation, 1 example.

- b8804: CUDA: require explicit opt-in for P2P access (#21910)

- In https://github.com/ggml-org/llama.cpp/pull/19378 I had naively enabled CUDA peer-to-peer access, guarded only by `cudaDeviceCanAccessPeer`. However, with some motherboards and BIOS settings this seems to cause crashes or corrupted outputs. I don't think we can feasibly check for this, so our only option is to make peer access an explicit opt-in.

- b8809: [SYCL] Fix Q8_0 reorder: garbage on 2nd prompt + crash on full VRAM (#21638)

- Fixes two issues with the Q8_0 reorder optimization introduced in #21527.

- Bug 1: Garbage output from second prompt onward (#21589)

- The Q8_0 reorder optimization rearranges weight data during token generation (batch=1, via DMMV/MMVQ), but the general GEMM dequantization path used during prompt processing was missing a reorder-aware variant for Q8_0. After the first tg pass reordered the weights, subsequent prompt processing read them with the standard dequantizer, producing corrupt output.
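A toy Python model shows why a reorder-unaware dequantizer produces garbage. Each Q8_0 block holds one scale plus 32 int8 quants. The model compares two layouts for that data: the standard block-by-block layout, and a reordered layout that stores all quants first and all scales after them. The reordered layout is an assumption made for illustration. The real SYCL kernels operate on packed bytes with fp16 scales and may arrange data differently.

```python
QK8_0 = 32  # quants per Q8_0 block (each block also has one scale)

def to_standard(blocks):
    """Standard layout: [d0, q0..., d1, q1..., ...], scale followed by its quants."""
    flat = []
    for d, qs in blocks:
        flat.append(d)
        flat.extend(qs)
    return flat

def to_reordered(blocks):
    """Assumed reordered layout: all quants first, then all scales."""
    return [q for _, qs in blocks for q in qs] + [d for d, _ in blocks]

def dequant_standard(flat, nblocks):
    stride = 1 + QK8_0
    out = []
    for b in range(nblocks):
        d = flat[b * stride]
        out.extend(d * q for q in flat[b * stride + 1:(b + 1) * stride])
    return out

def dequant_reordered(flat, nblocks):
    scales = flat[nblocks * QK8_0:]
    return [scales[i // QK8_0] * flat[i] for i in range(nblocks * QK8_0)]

blocks = [(0.5, list(range(32))), (2.0, [-1] * 32)]
expected = [0.5 * q for q in range(32)] + [-2.0] * 32
# Reading reordered data with the standard dequantizer mixes quants and scales up.
print(dequant_standard(to_reordered(blocks), 2) == expected)
```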

- b8808: server: use random media marker (#21962)

- Fix https://github.com/ggml-org/llama.cpp/issues/21955

- Generate a random media marker each time the server is launched. The string is random enough that a collision is practically impossible.

- How random? 32 characters drawn from 0-9a-zA-Z, giving 62^32 combinations. And according to Math StackExchange:
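The "how random?" arithmetic can be checked quickly. The Python sketch below is not the server's implementation (llama-server is C++, and this note does not describe the marker's exact format); it only draws 32 characters from the same 62-symbol alphabet with a cryptographically secure generator and computes the size of that space.

```python
import math
import secrets
import string

# 0-9a-zA-Z: 10 + 26 + 26 = 62 symbols
ALPHABET = string.digits + string.ascii_lowercase + string.ascii_uppercase
MARKER_LEN = 32


def random_marker(length=MARKER_LEN):
    """Draw each character independently and uniformly from ALPHABET."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


combinations = len(ALPHABET) ** MARKER_LEN            # 62**32
entropy_bits = MARKER_LEN * math.log2(len(ALPHABET))  # bits of randomness

print(random_marker())
print(f"{combinations:.3e} combinations, about {entropy_bits:.1f} bits")
```

62^32 is roughly 2.3 × 10^57 possibilities, or about 190.5 bits, which is why an accidental match with user-supplied text is not a practical concern.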

Full Commit Range

- b8804 to b8809 (7 commits)

- Upstream releases: https://github.com/ggml-org/llama.cpp/compare/b8804...b8809

2026-04-15: Update to llama.cpp b8799

Summary

Updated llama.cpp from b8794 to b8799, incorporating 6 upstream commits with new features.

Notable Changes

🆕 New Features

- b8795: metal : fix FA support logic (#21898)

- Continuation of #20797

- Add proper logic for supported quantization types of the FA operator.

- Fix https://github.com/ggml-org/llama.cpp/actions/runs/24400236380/job/71268552842#step:3:27636

- b8797: hexagon: optimization for HMX mat_mul (#21554)

- This PR introduces two additional optimizations for the Hexagon HMX backend:

- Enable asynchronous HMX execution

- HMX computations are now executed asynchronously, allowing them to overlap with HVX dequantization and DMA stages within the pipeline. Previously, synchronous HMX calls blocked the main thread and limited parallelism.
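The scheduling change can be illustrated with a toy pipeline. This Python sketch is only an analogy: dequantize and matmul_tile are hypothetical stand-ins for the HVX dequantization and HMX matrix stages, and a single worker thread plays the role of the matrix unit. In the synchronous version each tile's dequantization waits for the previous matmul; in the pipelined version the matmul for tile i runs in the background while the main thread dequantizes tile i+1.

```python
from concurrent.futures import ThreadPoolExecutor


def dequantize(tile):
    """Stand-in for the dequantization stage: scale * int8 quant."""
    scale, qs = tile
    return [scale * q for q in qs]


def matmul_tile(weights, x):
    """Stand-in for the matrix unit: dot product of one weight row with x."""
    return sum(w * v for w, v in zip(weights, x))


def run_synchronous(tiles, x):
    # Each matmul completes before the next tile is dequantized.
    return [matmul_tile(dequantize(t), x) for t in tiles]


def run_pipelined(tiles, x):
    results = []
    with ThreadPoolExecutor(max_workers=1) as matrix_unit:
        pending = None
        for tile in tiles:
            weights = dequantize(tile)            # runs while the previous matmul is in flight
            if pending is not None:
                results.append(pending.result())  # collect the previous tile's result
            pending = matrix_unit.submit(matmul_tile, weights, x)
        if pending is not None:
            results.append(pending.result())
    return results
```

Because of Python's GIL this pure-Python arithmetic will not actually run faster; the sketch only shows the ordering that lets two hardware units stay busy at the same time while producing the same results as the synchronous loop.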

🐛 Bug Fixes

- b8796: ggml: remove ggml-ext.h (#21869)

- Fixes https://github.com/ggml-org/llama.cpp/issues/21867 and https://github.com/ggml-org/llama.cpp/issues/21860

- Not quite sure if the ggml-ext.h is intended to be a public header, but I believe it should be (so that the symbols can be exposed in the dynamic library)

- b8799: autoparser: support case of JSON_NATIVE with per-call markers (#21892)

- The JSON_NATIVE case for the autoparser wasn't handling cases where the separate calls were not aggregated in a JSON array, but instead each had their own set of opening and closing markers.

- Automatically resolves autoparser detection problems with Reka-Edge, also fixes old Hermes templates.
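The two tool-call shapes involved in the b8799 fix can be sketched in Python. The <tool_call>...</tool_call> markers below follow the Hermes-style convention and are used only as an example: the real autoparser derives its markers from the chat template, and these helper functions are hypothetical, not its API.

```python
import json
import re


def parse_json_array(text):
    """Aggregated form: all calls inside one JSON array."""
    return json.loads(text)


def parse_per_call_markers(text, open_tag="<tool_call>", close_tag="</tool_call>"):
    """Per-call form: each call wrapped in its own opening and closing markers."""
    pattern = re.escape(open_tag) + r"(.*?)" + re.escape(close_tag)
    return [json.loads(body) for body in re.findall(pattern, text, flags=re.DOTALL)]


aggregated = (
    '[{"name": "get_weather", "arguments": {"city": "Paris"}}, '
    '{"name": "get_time", "arguments": {}}]'
)
per_call = (
    '<tool_call>\n{"name": "get_weather", "arguments": {"city": "Paris"}}\n</tool_call>\n'
    '<tool_call>\n{"name": "get_time", "arguments": {}}\n</tool_call>'
)

# Both shapes should yield the same list of calls.
assert parse_json_array(aggregated) == parse_per_call_markers(per_call)
```

The non-greedy (.*?) group with re.DOTALL matters here: it stops at the first closing marker, so consecutive calls are not merged into one match even when their JSON spans several lines.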

Additional Changes

2 minor improvements: 2 examples.

- b8794: mtmd: add mtmd_image_tokens_get_decoder_pos() API (#21851)

- Add a new mtmd API: mtmd_image_tokens_get_decoder_pos()

- Deprecate mtmd_image_tokens_get_nx/ny()

- Target: support for https://github.com/ggml-org/llama.cpp/pull/21045

- b8798: llama-diffusion-cli: read n_ctx back after making llama_context so the cli doesn't reject all inp... (#21939)

- Read back, via llama_n_ctx, the context window size that llama_init_from_model determines, as mentioned in the comments for llama_n_ctx. This prevents the CLI from rejecting all inputs because it thinks the context window has length 0.

- I ran into the issue described in https://github.com/ggml-org/llama.cpp/issues/20407 myself and the fix seemed straightforward, so I did it. @am17an - sorry for the random PR, it's very minor.

- Tested on a Mac like so:

Full Commit Range

- b8794 to b8799 (6 commits)

- Upstream releases: https://github.com/ggml-org/llama.cpp/compare/b8794...b8799

2026-04-14: Update to llama.cpp b8784

Summary

Updated llama.cpp from b8763 to b8784, incorporating 14 upstream commits with new features.

Notable Changes

🆕 New Features

- b8763: CUDA: skip compilation of superfluous FA kernels (#21768)

- Fixup to https://github.com/ggml-org/llama.cpp/pull/20998.

- The compilation of FA kernels with head size 512 is supposed to be skipped for GQA ratios of 1 and 2 because those are never used. However, because the invocation of the corresponding template specializations is not guarded with an if constexpr, they are compiled regardless; this PR adds the missing guards. On my server with a 64-core EPYC CPU, the total compilation time of the full project without ccache goes down from 330s to 300s.

- b8771: sycl: disable Q1_0 in backend and cleanup unused variables (#21807)

- test-backend-ops was crashing because the backend doesn't support the Q1_0 type yet. Disable it until we add support.

- Also, cleaned up unused variables.

- b8778: common : add download cancellation and temp file cleanup (#21813)

- Add download cancellation and temp file cleanup

- b8779: vulkan: Flash Attention DP4A shader for quantized KV cache (#20797)

- This PR adds DP4A (integer dot product) support to the scalar FA shader, enabled if the GPU supports DP4A. It's only used for quantized KV cache (both q8_0 or both q4_0), and not for coopmat FA shaders.

- I also unified the GLSL vector type name preprocessor macros because we had swapped from FLOAT_TYPE_VECx to FLOAT_TYPEVx in Flash Attention, and the old naming was getting in the way of code reuse here.

- Performance graphs for q8_0 kv cache:

- b8781: chat: dedicated DeepSeek v3.2 parser + "official" template (#21785)

- Adds an "official" (tested with the official Python reference) DeepSeek v3.2 template + parser with tests.

- The parser will only work with this template, so please use them together.
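DP4A (used by the b8779 Vulkan FA shader) is a single instruction that multiplies four pairs of signed 8-bit integers and adds the sum to a 32-bit accumulator. This Python sketch emulates it to show why it suits a quantized KV cache: for two Q8_0-style blocks (32 int8 quants plus one scale each, an assumption about the operand format), the integer dot product is accumulated four lanes at a time and the two scales are applied once per block. It is not the shader code from the PR, and the function names are hypothetical.

```python
def dp4a(a4, b4, acc):
    """Emulate DP4A: sum of four int8*int8 products added to an int32 accumulator."""
    assert len(a4) == len(b4) == 4
    assert all(-128 <= v <= 127 for v in (*a4, *b4))
    return acc + sum(x * y for x, y in zip(a4, b4))


def q8_block_dot(d_a, qa, d_b, qb):
    """Dot product of two quantized blocks: integer work first, scales applied once."""
    acc = 0
    for i in range(0, len(qa), 4):
        acc = dp4a(qa[i:i + 4], qb[i:i + 4], acc)
    # 32 products of magnitude <= 128 * 128 cannot overflow a 32-bit accumulator.
    return d_a * d_b * acc


qa = list(range(-16, 16))   # 32 int8 quants
qb = [1] * 32
reference = sum((0.5 * a) * (2.0 * b) for a, b in zip(qa, qb))
assert q8_block_dot(0.5, qa, 2.0, qb) == reference
```

Keeping the inner loop entirely in integers is the point of the design: the floating-point scales touch the result once per block instead of once per element.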

🐛 Bug Fixes

- b8770: fix: crash when sending image under 2x2 pixels (#21711)

- The assertion GGML_ASSERT(src.nx >= 2 && src.ny >= 2) crashes llama.cpp when processing very small images. The fix handles 1x1 inputs safely by updating the interpolation math and clamping pixel lookups, preventing out-of-bounds memory errors while keeping the pipeline stable.

- The code was successfully tested in production; llama-server is running with no crashes.

- Fixes https://github.com/ggml-org/llama.cpp/issues/21420

- b8772: ggml-webgpu: Fix compilation error in ggml_backend_webgpu_debug in debug mode (#21798)

- This PR fixes a compilation error that occurs when building in debug mode (related to https://github.com/ggml-org/llama.cpp/pull/21521).

- llama.cpp/ggml/src/ggml-webgpu/ggml-webgpu.cpp:537:9: error: invalid argument

- b8783: common/gemma4 : handle parsing edge cases (#21760)

- Fix a few edge cases for Gemma 4 26B A4B. I don't see these artifacts from the 31B variant.

- If the model generates content + tool call, the template will incorrectly format the prompt without the generation prompt (<|turn>model\n):
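For the b8770 fix, the general technique (clamping source coordinates so bilinear sampling never reads past the image edge, which also makes 1x1 inputs safe) can be sketched in Python. This is a generic single-channel bilinear resize written for illustration, not the interpolation code in llama.cpp.

```python
def resize_bilinear(src, sw, sh, dw, dh):
    """Resize a row-major single-channel image of size sw x sh to dw x dh."""
    out = []
    for y in range(dh):
        # Map the destination pixel center into source coordinates, then clamp.
        fy = min(max((y + 0.5) * sh / dh - 0.5, 0.0), sh - 1)
        y0 = int(fy)
        y1 = min(y0 + 1, sh - 1)   # clamped neighbor: never past the last row
        wy = fy - y0
        for x in range(dw):
            fx = min(max((x + 0.5) * sw / dw - 0.5, 0.0), sw - 1)
            x0 = int(fx)
            x1 = min(x0 + 1, sw - 1)   # clamped neighbor: never past the last column
            wx = fx - x0
            top = src[y0 * sw + x0] * (1 - wx) + src[y0 * sw + x1] * wx
            bottom = src[y1 * sw + x0] * (1 - wx) + src[y1 * sw + x1] * wx
            out.append(top * (1 - wy) + bottom * wy)
    return out


# A 1x1 source upscales to a uniform image instead of indexing out of bounds.
print(resize_bilinear([7.0], 1, 1, 3, 2))
```

With a 1x1 source both neighbors collapse onto the single pixel and the weights become zero, so every output value equals the input pixel.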

Additional Changes

6 minor improvements: 1 documentation, 4 examples, 1 maintenance.

Full Commit Range

- b8763 to b8784 (14 commits)

- Upstream releases: https://github.com/ggml-org/llama.cpp/compare/b8763...b8784

2026-04-12: Update to llama.cpp b8763

Summary

Updated llama.cpp from b8762 to b8763, incorporating 2 upstream commits with new features.

Notable Changes

🆕 New Features

- b8763: CUDA: skip compilation of superfluous FA kernels (#21768)

- Fixup to https://github.com/ggml-org/llama.cpp/pull/20998.

- The compilation of FA kernels with head size 512 is supposed to be skipped for GQA ratios of 1 and 2 because those are never used. However, because the invocation of the corresponding template specializations is not guarded with an if constexpr, they are compiled regardless; this PR adds the missing guards. On my server with a 64-core EPYC CPU, the total compilation time of the full project without ccache goes down from 330s to 300s.

Additional Changes

1 minor improvement: 1 example.

- b8762: mtmd : add MERaLiON-2 multimodal audio support (#21756)

- This adds support for MERaLiON-2 to mtmd. MERaLiON-2 is a speech-text model developed by I2R, A*STAR Singapore, available in 3B and 10B variants. It uses a Whisper large-v2 encoder paired with a Gemma2 decoder.

- New projector type: PROJECTOR_TYPE_MERALION

- The audio adaptor stacks 15 encoder frames per output token, then runs a layer norm followed by a 4-layer MLP: compression Linear+SiLU, a GLU block (gate and pool projections), and a final out_proj to match the decoder embedding dim. The implementation reuses the existing linear_{bid}/mm_norm_pre tensor naming, so the change to tensor_mapping.py is just a comment update.
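The first step of the MERaLiON-2 adaptor, stacking 15 encoder frames per output token, amounts to concatenating runs of consecutive frame vectors. A minimal Python sketch follows; the later layer norm and MLP are omitted, the function name is hypothetical, and dropping an incomplete trailing group is an assumption, since the note does not say whether the implementation pads or drops leftover frames.

```python
def stack_frames(frames, k=15):
    """Concatenate each run of k consecutive encoder frames into one vector.

    frames: list of per-frame feature vectors, all of the same dimension D.
    Returns len(frames) // k vectors of dimension k * D.
    """
    n_out = len(frames) // k  # assumption: leftover frames are dropped
    return [
        [value for frame in frames[i * k:(i + 1) * k] for value in frame]
        for i in range(n_out)
    ]


frames = [[float(t), float(t) + 0.5] for t in range(31)]  # 31 frames, D = 2
stacked = stack_frames(frames)
print(len(stacked), len(stacked[0]))  # prints: 2 30
```

If the encoder emits Whisper's usual 1500 frames for a 30-second window, this stacking yields 100 audio tokens per window, each of dimension 15 × D before the MLP projects it to the decoder embedding size.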

Full Commit Range

- b8762 to b8763 (2 commits)

- Upstream releases: https://github.com/ggml-org/llama.cpp/compare/b8762...b8763

2026-04-11: Update to llama.cpp b8762

Summary

Updated llama.cpp from b8746 to b8762, incorporating 17 upstream commits with new features and performance improvements.

Notable Changes

🆕 New Features

- b8750: ggml-webgpu: support non-square subgroup matrix configs for Intel GPUs (#21669)

- Enable WebGPU subgroup matrix support for Intel GPUs (Xe2/Battlemage).

- Intel GPUs report non-square subgroup matrix configurations (e.g. M=8, N=16, K=16) via Dawn's ChromiumExperimentalSubgroupMatrix feature. The existing filter only accepted square configs (M==N==K), rejecting Intel GPUs entirely despite full hardware and driver support.

- Changes:

- b8753: common : better align to the updated official gemma4 template (#21704)

- Google has pushed an update to their chat template: https://huggingface.co/google/gemma-4-31B-it/commit/e51e7dcdb6febd74c182fe0cb41c236363ae2ac5

- This update includes everything within our internal workarounds, as well as the custom modifications in the models/templates/google-gemma-31B-it-interleaved.jinja template. Add support by detecting it and forgoing the workarounds. Additionally, emit a warning message so users are aware there is an update.

- The existing template within GGUFs, as well as the custom interleaved template, will continue to function. I even added some of the formatting changes to the bos and think tokens.

- b8759: cpu : fix a few instances of missing GGML_TYPE_Q1_0 cases (#21716)

- Add case GGML_TYPE_Q1_0: where it was missing.

- Fixes:

- https://github.com/ggml-org/llama.cpp/actions/runs/24229986393/job/70739279184

- b8761: opencl: add basic support for q5_k (#21593)

- This PR adds basic support for Q5_K quantization on GPU. With this change, Q5_K operations remain on the GPU instead of falling back to the CPU, which improves performance for models using Q5_K quantization.

- This is a general implementation. A follow‑up PR will introduce a more optimized, Adreno‑specific implementation.

🚀 Performance Improvements

- b8749: ggml-webgpu: address quantization precision and backend lifecycle management (#21521)

- This PR improves the stability and performance of the WebGPU backend, specifically focusing on the quantization numeric precision and backend lifecycle management.

- Quantization Precision:

🐛 Bug Fixes

- b8746: common: mark --split-mode tensor as experimental (#21684)

- Fixup to https://github.com/ggml-org/llama.cpp/pull/19378. Since there are probably still a lot of cases where --split-mode tensor doesn't yet work correctly, I marked the PR as experimental, but I forgot to also do this in the --help output.

- b8747: common : fix when loading a cached HF models with unavailable API (#21670)

- Fix loading a cached HF model when the HF API is unavailable

- b8749: ggml webgpu: Move to no timeout for WaitAny in graph submission to avoid deadlocks (#20618)

- Another approach to see if this avoids deadlocks in the llvm-pipe Vulkan backend. After some debugging on the GitHub CI, I've seen cases where it seems to get stuck within the WaitAny call itself, even after the timeout nanoseconds have passed, leading me to believe there is a bug within the interface between Dawn and llvm-pipe. Setting the timeout to 0 from the WebGPU side creates a busy-wait loop on the ggml side, but hopefully avoids deadlocking in most scenarios, and in practice the busy-wait loop does not occur that often in my tests.