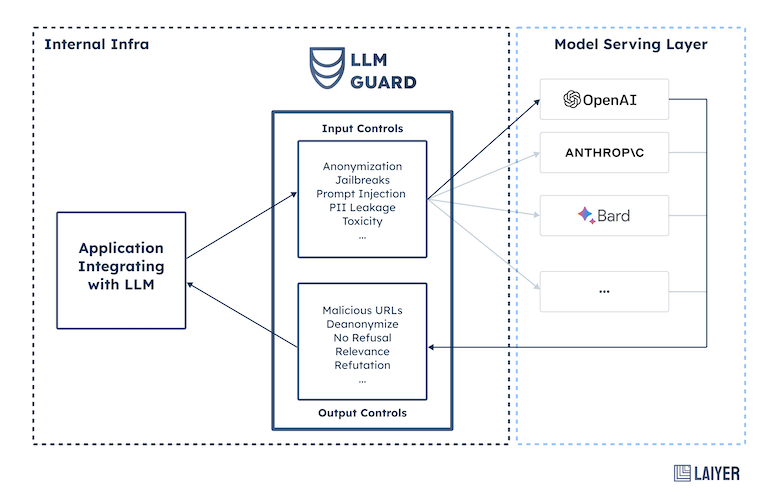

LLM-Guard is a comprehensive tool designed to fortify the security of Large Language Models (LLMs). By offering sanitization, detection of harmful language, prevention of data leakage, and resistance against prompt injection and jailbreak attacks, LLM-Guard ensures that your interactions with LLMs remain safe and secure.

Project description

LLM Guard - The Security Toolkit for LLM Interactions

LLM-Guard is a comprehensive tool designed to fortify the security of Large Language Models (LLMs). By offering sanitization, detection of harmful language, prevention of data leakage, and resistance against prompt injection and jailbreak attacks, LLM-Guard ensures that your interactions with LLMs remain safe and secure.

❤️ Proudly developed by the Laiyer.ai team.

Installation

Begin your journey with LLM Guard by downloading the package and acquiring the en_core_web_trf spaCy model (essential

for the Anonymize scanner):

pip install llm-guard

python -m spacy download en_core_web_trf

Getting Started

Important Notes:

- LLM Guard is designed for easy integration and deployment in production environments. While it's ready to use out-of-the-box, please be informed that we're constantly improving and updating the repository.

- Base functionality requires a limited number of libraries. As you explore more advanced features, necessary libraries will be automatically installed.

- Ensure you're using Python version 3.8.1 or higher. Confirm with:

python --version. - Library installation issues? Consider upgrading pip:

python -m pip install --upgrade pip.

Examples:

- Get started with ChatGPT and LLM Guard.

Supported scanners

Prompt scanners

- Anonymize

- BanSubstrings

- BanTopics

- Code

- Jailbreak

- PromptInjection

- Secrets

- Sentiment

- TokenLimit

- Toxicity

Output scanners

- BanSubstrings

- BanTopics

- Code

- Deanonymize

- MaliciousURLs

- NoRefusal

- Refutation

- Regex

- Relevance

- Sensitive

- Toxicity

Roadmap

General:

- Calculate risk score from 0 to 1 for each scanner

- Improve speed of transformers

Prompt Scanner:

- Improve Jailbreak scanner

- Better anonymizer with improved secrets detection and entity recognition

- Use Perspective API for Toxicity scanner

Output Scanner:

- Develop Fact Checking scanner

- Develop Hallucination scanner

- Develop scanner to check if the output stays on the topic of the prompt.

Contributing

Got ideas, feedback, or wish to contribute? We'd love to hear from you! Email us.

For detailed guidelines on contributions, kindly refer to our contribution guide.

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file llm-guard-0.1.1.tar.gz.

File metadata

- Download URL: llm-guard-0.1.1.tar.gz

- Upload date:

- Size: 90.7 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.11.3

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

8787b3ae95df1a1198ec1d7b71a22c12c7bd68e449aa652f5b21207de5adce57

|

|

| MD5 |

e70cea435c35cce51675fddba24106ad

|

|

| BLAKE2b-256 |

b3338866dacb7fb7bec0d4c73bd86caee01e36fcb8047a9f7193a3b9b43bdb58

|

File details

Details for the file llm_guard-0.1.1-py3-none-any.whl.

File metadata

- Download URL: llm_guard-0.1.1-py3-none-any.whl

- Upload date:

- Size: 111.1 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.11.3

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

e531a78017d5a23282dffa36db1fbcf61ffc2ff650d6e3860d8a6ba467c5353d

|

|

| MD5 |

b14477ac6415cb70e5e7ef638b0ca410

|

|

| BLAKE2b-256 |

69930149b217bfa579f97889df1a538a09a15f2774725973bb69286d2bba7b22

|