LLMstudio Proxy is the LLMstudio module that runs as a proxy server and lets you call any supported LLM provider through it. With LLMstudio Proxy, you get a centralized point for connecting to any provider, running independently from your application.

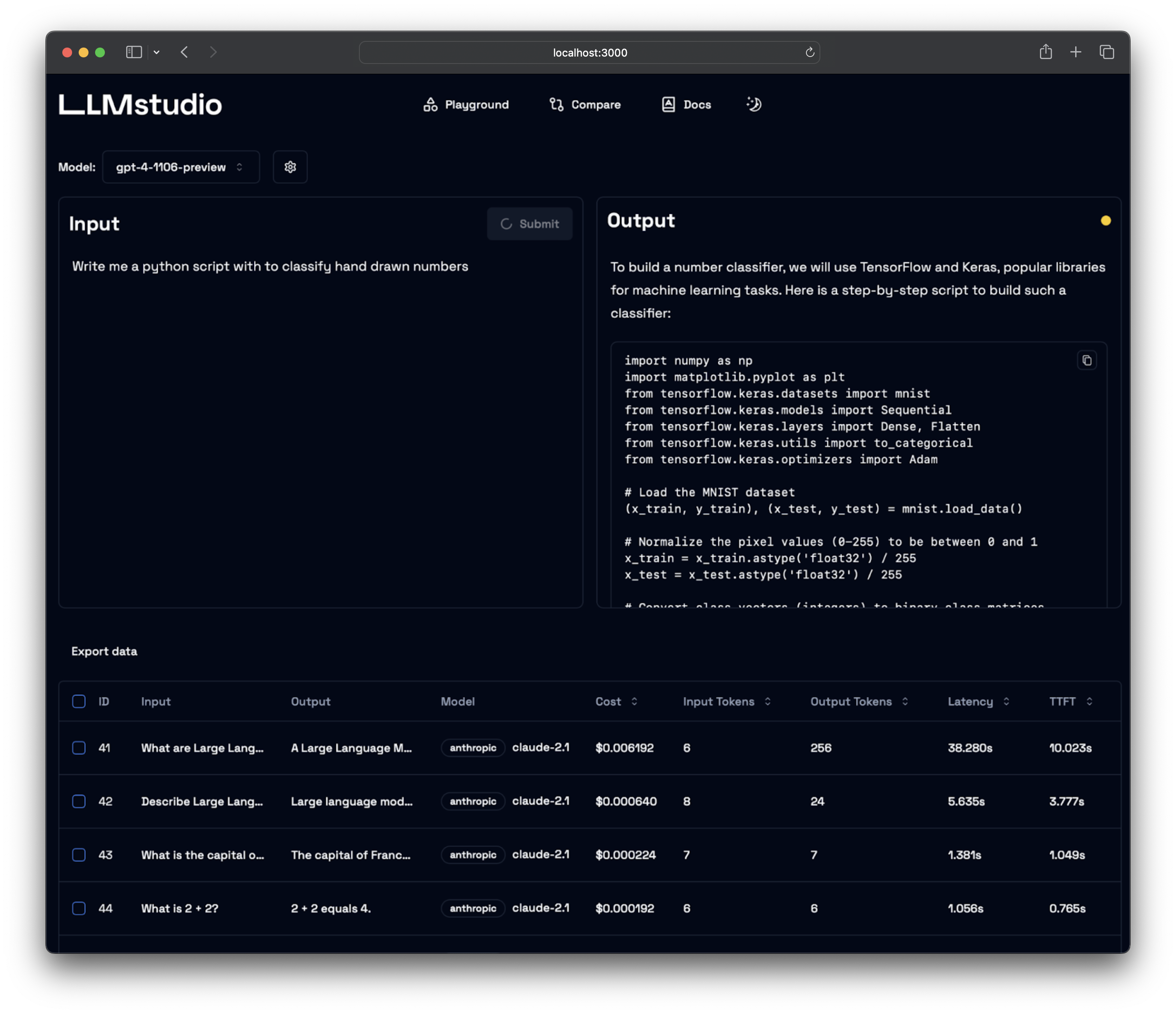

LLMstudio by TensorOps

Prompt Engineering at your fingertips

🌟 Features

- LLM Proxy Access: Seamless access to the latest LLMs from OpenAI, Anthropic, and Google.

- Custom and Local LLM Support: Use custom or local open-source LLMs through Ollama.

- Prompt Playground UI: A user-friendly interface for engineering and fine-tuning your prompts.

- Python SDK: Easily integrate LLMstudio into your existing workflows.

- Monitoring and Logging: Keep track of your usage and performance for all requests.

- LangChain Integration: LLMstudio integrates with your existing LangChain projects.

- Batch Calling: Send multiple requests at once for improved efficiency.

- Smart Routing and Fallback: Ensure 24/7 availability by routing your requests to trusted LLMs.

- Type Casting (soon): Convert data types as needed for your specific use case.
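To make the fallback idea from the feature list concrete, here is a minimal sketch of trying providers in order until one succeeds. It is not LLMstudio's implementation or API: `Provider`, `call_with_fallback`, `flaky_primary`, and `stable_backup` are hypothetical names used only for illustration.

```python
from typing import Callable, Sequence

# Hypothetical provider type: any function that takes a prompt and returns text.
Provider = Callable[[str], str]


def call_with_fallback(prompt: str, providers: Sequence[Provider]) -> str:
    """Try each provider in order and return the first successful reply."""
    errors = []
    for provider in providers:
        try:
            return provider(prompt)
        except Exception as exc:  # a real router would catch narrower errors
            errors.append(exc)
    raise RuntimeError(f"all {len(errors)} providers failed")


# Stand-in providers (assumptions, not real LLM clients).
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("primary provider unavailable")


def stable_backup(prompt: str) -> str:
    return f"backup reply to: {prompt}"


reply = call_with_fallback("Hello", [flaky_primary, stable_backup])
print(reply)
```

The order of the list encodes the routing preference, so the most trusted provider goes first.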

🚀 Quickstart

For more details, check out the documentation at https://docs.llmstudio.ai.

Installation

Install the latest version of LLMstudio using pip. We suggest that you create and activate a new environment using conda.

pip install llmstudio

Install bun if you want to use the UI

curl -fsSL https://bun.sh/install | bash

Create a .env file in the directory where you'll run LLMstudio:

OPENAI_API_KEY="sk-api_key"

ANTHROPIC_API_KEY="sk-api_key"
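Before starting the server, it can help to confirm that the .env file contains the keys you expect. The following is a small standard-library sketch, assuming the simple `KEY="value"` format shown above and that both example keys are needed for your setup. `parse_env`, `missing_keys`, and `REQUIRED_KEYS` are hypothetical helpers, not part of LLMstudio.

```python
from pathlib import Path

# Assumption: these are the keys you need; adjust to the providers you use.
REQUIRED_KEYS = ("OPENAI_API_KEY", "ANTHROPIC_API_KEY")


def parse_env(text: str) -> dict[str, str]:
    """Parse simple KEY="value" lines, skipping blanks and # comments."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def missing_keys(env: dict[str, str], required=REQUIRED_KEYS) -> list[str]:
    """Return the required keys that are absent or empty."""
    return [key for key in required if not env.get(key)]


if __name__ == "__main__":
    env_path = Path(".env")
    env = parse_env(env_path.read_text()) if env_path.exists() else {}
    print("missing keys:", missing_keys(env))
```

Run it from the same directory as the .env file. An empty list means both keys were found.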

Now you should be able to run LLMstudio Proxy using the following command.

llmstudio server --proxy

When the --proxy flag is set, you can access the Swagger UI at http://0.0.0.0:50001/docs (50001 is the default port).
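To check from a script that the proxy is reachable, a sketch like the one below can request the docs page. It assumes the proxy runs on the same machine on the default port 50001 and uses `localhost` as the client-side address. `proxy_docs_url` and `is_proxy_up` are hypothetical helpers, not part of LLMstudio.

```python
import urllib.request


def proxy_docs_url(host: str = "localhost", port: int = 50001) -> str:
    """Build the docs URL for a proxy on the given host and port."""
    return f"http://{host}:{port}/docs"


def is_proxy_up(url: str, timeout: float = 2.0) -> bool:
    """Return True only if the URL answers with HTTP 200 within the timeout."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except OSError:  # covers refused connections, timeouts, and HTTP errors
        return False


if __name__ == "__main__":
    url = proxy_docs_url()
    print(url, "reachable" if is_proxy_up(url) else "not reachable")
```

If you started the server on a different port, pass it to `proxy_docs_url`.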

📖 Documentation

- Visit our docs to learn how the SDK works (coming soon)

- Check out our notebook examples to follow along with interactive tutorials

👨‍💻 Contributing

- Head over to our Contribution Guide to see how you can help LLMstudio.

- Join our Discord to talk with other LLMstudio enthusiasts.

Training

Thank you for choosing LLMstudio. Your journey to perfecting AI interactions starts here.

Download files

File details

Details for the file llmstudio_proxy-1.0.6.tar.gz.

File metadata

- Download URL: llmstudio_proxy-1.0.6.tar.gz

- Upload date:

- Size: 6.1 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: poetry/1.8.4 CPython/3.13.0 Darwin/23.1.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b33104bcca10a19bfa154be0d2f7b0facb08446c900e668286081081ed3ba28b |
| MD5 | 8b57f1ac6ce8135c5f02c48cc9178be8 |
| BLAKE2b-256 | e27e9b8c1c3f1c2f62d4f1e00e2458c70cec367ab18de38d9719c323d282cd1c |

File details

Details for the file llmstudio_proxy-1.0.6-py3-none-any.whl.

File metadata

- Download URL: llmstudio_proxy-1.0.6-py3-none-any.whl

- Upload date:

- Size: 7.7 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: poetry/1.8.4 CPython/3.13.0 Darwin/23.1.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | afc5d6fdd6031bcecbb0bf1946545235841633a6129c52f7ae2610995bb6e647 |
| MD5 | d824bd37d30f685af252debf836f25de |
| BLAKE2b-256 | 25f7626113e55e3ab975fc72d81a99ce0967f8a1a3209bf9066f9ff976f2ecf7 |