
MemoryAgent: An Open, Modular Memory Framework for Agents (Beta)

MemoryAgent is a reusable memory framework for LLM-based agent systems. It provides tiered memory (working, episodic, semantic, perceptual), hot/cold storage, archive indexing, confidence-based retrieval escalation, and optional local vector search via sqlite-vec.

Highlights

- Tiered memory: working (TTL), episodic, semantic, perceptual

- Storage tiers: hot metadata (SQLite + sqlite-vec), cold archive (filesystem), archive index (vector index)

- Memory retrieval pipeline: hot store -> archive index -> cold hydration, followed by reranking and context packaging

- Local mode: SQLite + sqlite-vec (optional) + filesystem

- Async-friendly with sync convenience methods
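The retrieval escalation idea (search the cheap hot tier first, and only fall back to the archive and cold tiers when confidence is low) can be sketched as below. This is a minimal illustration, not MemoryAgent's retrieval code: the `Hit` type, the `retrieve_with_escalation` function, the 0.6 threshold, and the choice of "best score so far" as the confidence signal are all hypothetical simplifications.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class Hit:
    text: str
    score: float  # similarity in [0, 1]; higher is better


# Hypothetical interface: a tier is any function mapping a query to scored hits.
TierSearch = Callable[[str], Sequence[Hit]]


def retrieve_with_escalation(
    query: str,
    tiers: Sequence[tuple[str, TierSearch]],
    threshold: float = 0.6,
    top_k: int = 5,
) -> tuple[list[Hit], list[str]]:
    """Search tiers in order, stopping once the best hit clears the threshold."""
    hits: list[Hit] = []
    searched: list[str] = []
    for name, search in tiers:
        hits.extend(search(query))
        searched.append(name)
        # Rerank everything gathered so far by score.
        hits.sort(key=lambda h: h.score, reverse=True)
        confidence = hits[0].score if hits else 0.0
        if confidence >= threshold:
            break  # confident enough; skip the slower tiers
    return hits[:top_k], searched


# Usage with stub tiers standing in for the hot store, archive index, and cold archive.
tiers = [
    ("hot", lambda q: [Hit("recent note", 0.3)]),
    ("archive", lambda q: [Hit("archived summary", 0.8)]),
    ("cold", lambda q: [Hit("raw daily notes", 0.9)]),
]
hits, searched = retrieve_with_escalation("climate policy", tiers)
print(searched)  # ['hot', 'archive']
```

The cold tier is never touched here because the archive hit already clears the threshold, which is the cost saving escalation is meant to buy.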

Project Layout

```
memoryagent/
    config.py            # Default system settings and retrieval thresholds
    models.py            # Pydantic data models for memory items, queries, bundles
    system.py            # MemorySystem entry point and wiring
    retrieval.py         # Retrieval orchestration and reranking
    confidence.py        # Confidence scoring components
    policy.py            # Conversation + routing policies
    indexers.py          # Episodic/semantic/perceptual indexers
    workers.py           # Consolidation, archiving, rehydration, compaction
    storage/
        base.py          # Storage adapter interfaces
        in_memory.py     # Simple in-memory vector + graph stores
        local_disk.py    # SQLite metadata/features + sqlite-vec + file object store
    examples/
        minimal.py            # Basic usage example
        openai_agent.py       # CLI OpenAI agent with memory retrieval
        memory_api_server.py  # Local API for memory + chat
        memory_viz.html       # Web UI for chat + memory visualization
```

Installation

Python 3.10+ required.

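Development (sync dependencies from uv.lock):

```bash
uv sync
```

Use as a dependency:

```bash
uv add memoryagent-lib
# or
pip install memoryagent-lib
```

Optional dependencies (openai for the OpenAI-backed examples, sqlite-vec for local vector search):

```bash
uv add openai sqlite-vec
# or
pip install openai sqlite-vec
```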

Quick Start

```python
from memoryagent import MemoryEvent, MemorySystem

memory = MemorySystem()
owner = "user-001"

# Store a long-lived user preference as semantic memory.
memory.write(
    MemoryEvent(
        content="User prefers concise summaries about climate policy.",
        type="semantic",
        owner=owner,
        tags=["preference", "summary"],
        confidence=0.7,
        stability=0.8,
    )
)

# Retrieve a context bundle for a query and inspect it.
bundle = memory.retrieve("What policy topics did we cover?", owner=owner)
print(bundle.confidence.total)
for block in bundle.blocks:
    print(block.text)

memory.flush(owner)
```

Enable sqlite-vec (Local Vector Search)

```python
from memoryagent import MemorySystem, MemorySystemConfig

config = MemorySystemConfig(
    use_sqlite_vec=True,
    vector_dim=1536,  # match your embedding model
)
memory = MemorySystem(config=config)
```

If sqlite-vec cannot be auto-loaded, set an explicit path:

```python
from pathlib import Path

from memoryagent import MemorySystemConfig

config = MemorySystemConfig(
    use_sqlite_vec=True,
    vector_dim=1536,
    sqlite_vec_extension_path=Path("/path/to/sqlite_vec.dylib"),
)
```

Examples

OpenAI Agent (CLI)

```bash
python -m memoryagent.examples.openai_agent
```

- Uses OpenAI for responses and embeddings.
- Stores the session transcript as a single working-memory item.

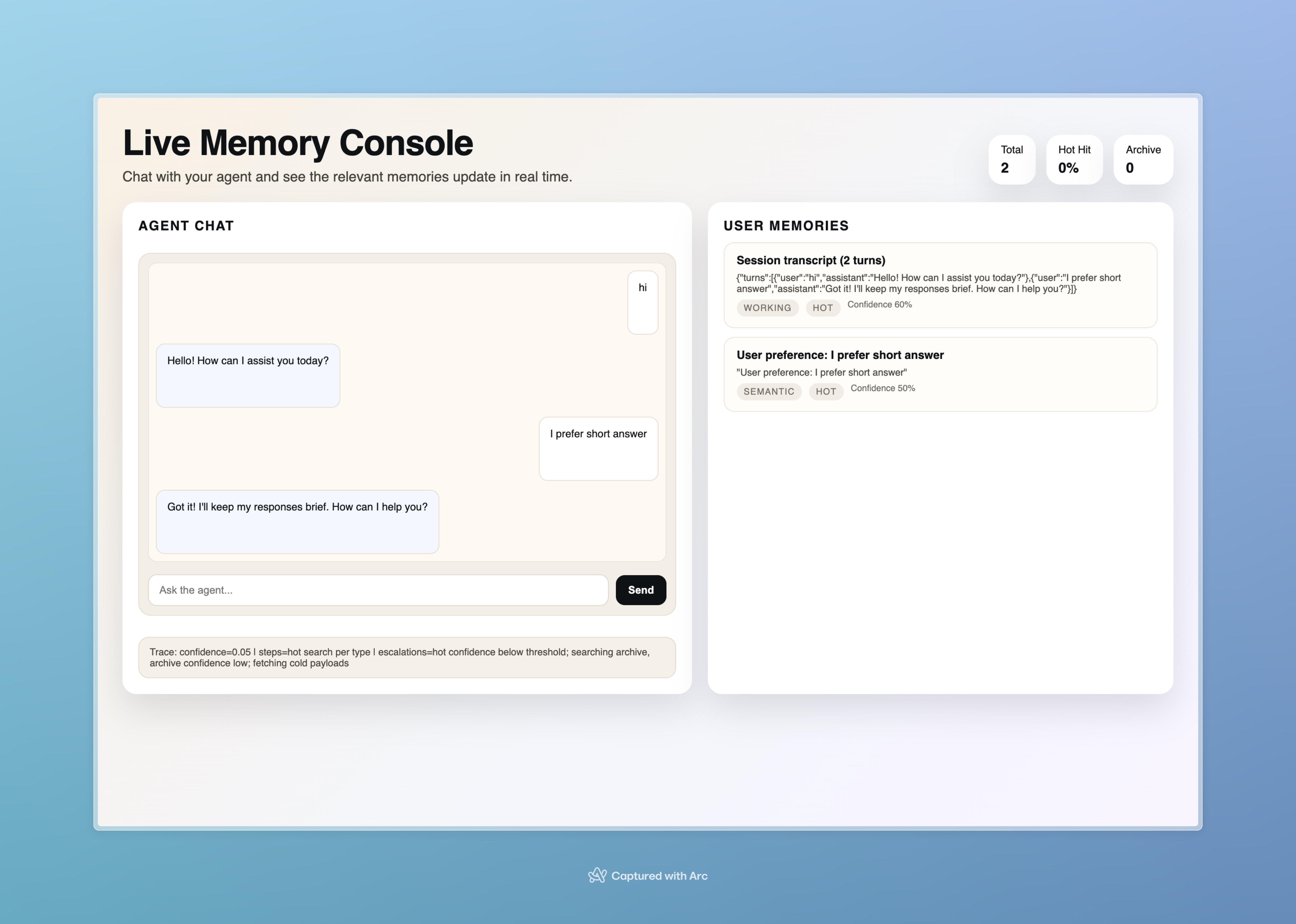

Memory Visualization + API

Start the API server:

```bash
python -m memoryagent.examples.memory_api_server
```

Open in browser:

http://127.0.0.1:8000/memory_viz.html

An example (the system records a semantic memory and updates working memory):

The page calls:

- GET /api/memory?owner=user-001
- POST /api/chat
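To call the same endpoints from a script instead of the browser, a standard-library client could look like the sketch below. Only the two routes and the default address come from this README; the JSON response format and the `{"owner": ..., "message": ...}` request body for POST /api/chat are assumptions, and the helper names are hypothetical. Check `memory_api_server.py` for the real payload shape.

```python
import json
import urllib.parse
import urllib.request

BASE_URL = "http://127.0.0.1:8000"  # address used for the visualization page


def memory_url(owner: str, base_url: str = BASE_URL) -> str:
    """Build the GET /api/memory URL for one owner."""
    return f"{base_url}/api/memory?{urllib.parse.urlencode({'owner': owner})}"


def fetch_memory(owner: str, base_url: str = BASE_URL):
    """GET /api/memory and decode the body, assuming the server returns JSON."""
    with urllib.request.urlopen(memory_url(owner, base_url), timeout=10) as resp:
        return json.load(resp)


def send_chat(owner: str, message: str, base_url: str = BASE_URL):
    """POST /api/chat. The request field names here are hypothetical."""
    body = json.dumps({"owner": owner, "message": message}).encode("utf-8")
    req = urllib.request.Request(
        f"{base_url}/api/chat",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.load(resp)
```

With the server running, `fetch_memory("user-001")` requests the same URL the page uses.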

Policies

Conversation storage policy

HeuristicMemoryPolicy decides whether a turn should be stored and whether it becomes episodic or semantic memory.
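As an illustration of the kind of decision such a policy makes, here is a small rule-based classifier. The rules (skip pleasantries and very short turns, treat stated preferences or identity as semantic, everything else as episodic) are hypothetical and are not taken from `HeuristicMemoryPolicy`; the `decide` function and `StorageDecision` type are invented for this sketch.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Illustrative rules only; not the library's HeuristicMemoryPolicy logic.
SMALL_TALK = {"hi", "hello", "thanks", "thank you", "ok", "okay", "bye"}
SEMANTIC_CUES = re.compile(r"\b(?:i prefer|i like|i always|i never|my name is)\b")


@dataclass
class StorageDecision:
    store: bool
    memory_type: Optional[str] = None  # "episodic" or "semantic" when stored
    reason: str = ""


def decide(turn: str, min_words: int = 3) -> StorageDecision:
    text = turn.strip().lower()
    # Skip pleasantries and very short turns.
    if text.rstrip("!.?") in SMALL_TALK or len(text.split()) < min_words:
        return StorageDecision(False, reason="small talk or too short")
    # Statements about stable preferences or identity become semantic memory.
    if SEMANTIC_CUES.search(text):
        return StorageDecision(True, "semantic", "stable preference or fact")
    # Everything else is recorded as an episode of this conversation.
    return StorageDecision(True, "episodic", "conversation event")


print(decide("I prefer concise summaries about climate policy."))
```

Keyword rules like these are cheap and predictable, which is the usual reason to start with a heuristic policy before trying an LLM-based classifier.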

Routing policy

MemoryRoutingPolicy decides where a memory should be written:

- Hot metadata store

- Vector index

- Feature store (perceptual)

- Cold archive (via workers)
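A routing decision over the destinations listed above might be sketched as follows. The `Destination` enum and `route` function are hypothetical, and the rules (every item gets a hot metadata row, embedded items go to the vector index, perceptual items go to the feature store, episodic/semantic items are deferred to the cold archive for the workers) are assumptions based on this README, not `MemoryRoutingPolicy`'s actual logic.

```python
from enum import Enum


class Destination(str, Enum):
    HOT_METADATA = "hot_metadata"
    VECTOR_INDEX = "vector_index"
    FEATURE_STORE = "feature_store"
    COLD_ARCHIVE = "cold_archive"


def route(memory_type: str, has_embedding: bool) -> tuple[list[Destination], list[Destination]]:
    """Return (destinations written now, destinations handled later by workers)."""
    immediate = [Destination.HOT_METADATA]  # every item gets a metadata row
    if has_embedding:
        immediate.append(Destination.VECTOR_INDEX)
    if memory_type == "perceptual":
        immediate.append(Destination.FEATURE_STORE)
    # Episodic/semantic items are cold-archive candidates, moved later by workers.
    deferred = [Destination.COLD_ARCHIVE] if memory_type in ("episodic", "semantic") else []
    return immediate, deferred


print(route("semantic", has_embedding=True))
```

Splitting the result into "now" and "later" keeps the write path fast while leaving archival to background work.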

Background Workers

- ConsolidationWorker: working -> episodic/semantic
- ArchiverWorker: hot -> cold + archive index
- RehydratorWorker: cold -> hot (based on access)
- Compactor: cleanup/TTL
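The archiver and rehydrator move items in opposite directions, so one way to picture them is a single per-item transition rule. The sketch below is hypothetical: the `StoredItem` fields, the 30-day idle cutoff, and the three-access rehydration trigger are illustrative values, not the library's defaults.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class StoredItem:
    memory_type: str      # "working", "episodic", "semantic", "perceptual"
    tier: str             # "hot" or "cold"
    last_access: datetime
    recent_accesses: int  # accesses within some recent window (hypothetical)


def next_tier(
    item: StoredItem,
    now: datetime,
    archive_after: timedelta = timedelta(days=30),
    rehydrate_min_hits: int = 3,
) -> str:
    # Archiver-style move: idle episodic/semantic items leave the hot store.
    if (
        item.tier == "hot"
        and item.memory_type in ("episodic", "semantic")
        and now - item.last_access > archive_after
    ):
        return "cold"
    # Rehydrator-style move: frequently accessed cold items return to hot.
    if item.tier == "cold" and item.recent_accesses >= rehydrate_min_hits:
        return "hot"
    return item.tier


now = datetime(2025, 1, 31)
print(next_tier(StoredItem("episodic", "hot", datetime(2024, 12, 1), 0), now))
```

Working memory never goes to the cold tier under this rule; in the list above, its expiry is the Compactor's job.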

Data Stores

- Hot metadata: .memoryagent_hot.sqlite
- Vector index: .memoryagent_vectors.sqlite (sqlite-vec)
- Features: .memoryagent_features.sqlite
- Cold archive: .memoryagent_cold/records/<owner>/YYYY/MM/DD/daily_notes.json
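The cold-archive layout shards records by owner and calendar day. A helper that builds that path might look like this; the `cold_record_path` name is hypothetical, and the assumption is that `data_root` is the directory the stores above are created in.

```python
from datetime import date
from pathlib import Path


def cold_record_path(data_root: Path, owner: str, day: date) -> Path:
    """Build the cold-archive path for one owner's notes on a given day."""
    return (
        data_root
        / ".memoryagent_cold"
        / "records"
        / owner
        / f"{day:%Y}"
        / f"{day:%m}"
        / f"{day:%d}"
        / "daily_notes.json"
    )


print(cold_record_path(Path("/data"), "user-001", date(2024, 3, 5)).as_posix())
# /data/.memoryagent_cold/records/user-001/2024/03/05/daily_notes.json
```

Zero-padded month and day directories keep the folders sorted chronologically in a plain directory listing.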

Data Root (Installed Usage)

The system auto-detects a project root by walking up from the current working directory and looking for pyproject.toml or .git. If it can’t find one, it uses the current directory.

Configuration

See memoryagent/config.py for defaults:

- working_ttl_seconds
- retrieval_plan thresholds and budgets
- use_sqlite_vec, vector_dim, sqlite_vec_extension_path

Notes

- Working memory is stored as a single session transcript (updated each turn).

- Episodic/semantic memories are candidates for cold archive.

License

MIT License


Download files


File details

Details for the file memoryagent_lib-0.1.3.tar.gz.

File metadata

- Download URL: memoryagent_lib-0.1.3.tar.gz

- Upload date:

- Size: 23.0 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 1e7fa1518019ec732732860426eba5d47612b203622ffd8af60416ac32d3e7c1 |
| MD5 | 53429ae8246dfcf952f6bad552a6402e |
| BLAKE2b-256 | cfaa46e69c2c3ae2bf720e2e88f84cc4258946fc442f9ae49ef186a23b966b6e |

File details

Details for the file memoryagent_lib-0.1.3-py3-none-any.whl.

File metadata

- Download URL: memoryagent_lib-0.1.3-py3-none-any.whl

- Upload date:

- Size: 28.2 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 26d5d0e47ff0300bacfa48602fc7018cc8a51724388cfdd4023c95f869460740 |
| MD5 | 4150212e09c3f34f69a9f7b6fc043ad1 |
| BLAKE2b-256 | e6f99a54a134af99ce846a285111611240b9cfa47c717d6b9eccfa6225aece26 |