Manifold-Constrained Hyper-Connections: Stable multi-history skip connections for deep neural networks.


mhc: Manifold-Constrained Hyper-Connections

Honey Badger Stability for Deeper, More Stable Neural Networks.

🎯 What is mHC?

mhc is a PyTorch library that reimagines residual connections. Instead of a single one-step skip, mHC learns to mix several earlier network states, with the mixing weights constrained to a geometric set such as the probability simplex, bringing Honey Badger toughness to your gradients.

| 🚀 High Performance | 🧠 Smart Memory | 🛠️ Drop-in Ease |
|---|---|---|
| Reach deeper than ever before with optimized gradient flow. | Dynamically mix past states for richer feature representation. | Transform any model to mHC with a single line of code. |

Installation

We recommend uv for faster, reproducible installs, but standard pip is fully supported.

# Using pip (standard)

pip install mhc

# Using uv (faster, recommended)

uv pip install mhc

Optional Extras

# Visualization utilities

pip install "mhc[viz]"

uv pip install "mhc[viz]"

# TensorFlow support

pip install "mhc[tf]"

uv pip install "mhc[tf]"

30-Second Example

import torch
from torch import nn  # needed for nn.Linear / nn.ReLU below

from mhc import MHCSequential

# Create a model with mHC skip connections
model = MHCSequential([
    nn.Linear(64, 64),
    nn.ReLU(),
    nn.Linear(64, 64),
    nn.ReLU(),
    nn.Linear(64, 32)
], max_history=4, mode="mhc", constraint="simplex")

# Use it like any PyTorch model
x = torch.randn(8, 64)
output = model(x)

Inject into Existing Models

Transform any model to use mHC with one line:

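from torch import nn  # needed for nn.Conv2d below
import torchvision.models as models

from mhc import inject_mhc

# torchvision >= 0.13 deprecates pretrained=True; IMAGENET1K_V1 selects the same weights
model = models.resnet50(weights=models.ResNet50_Weights.IMAGENET1K_V1)
inject_mhc(model, target_types=nn.Conv2d, max_history=4)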

🤔 Why mHC?

The Gradient Bottleneck

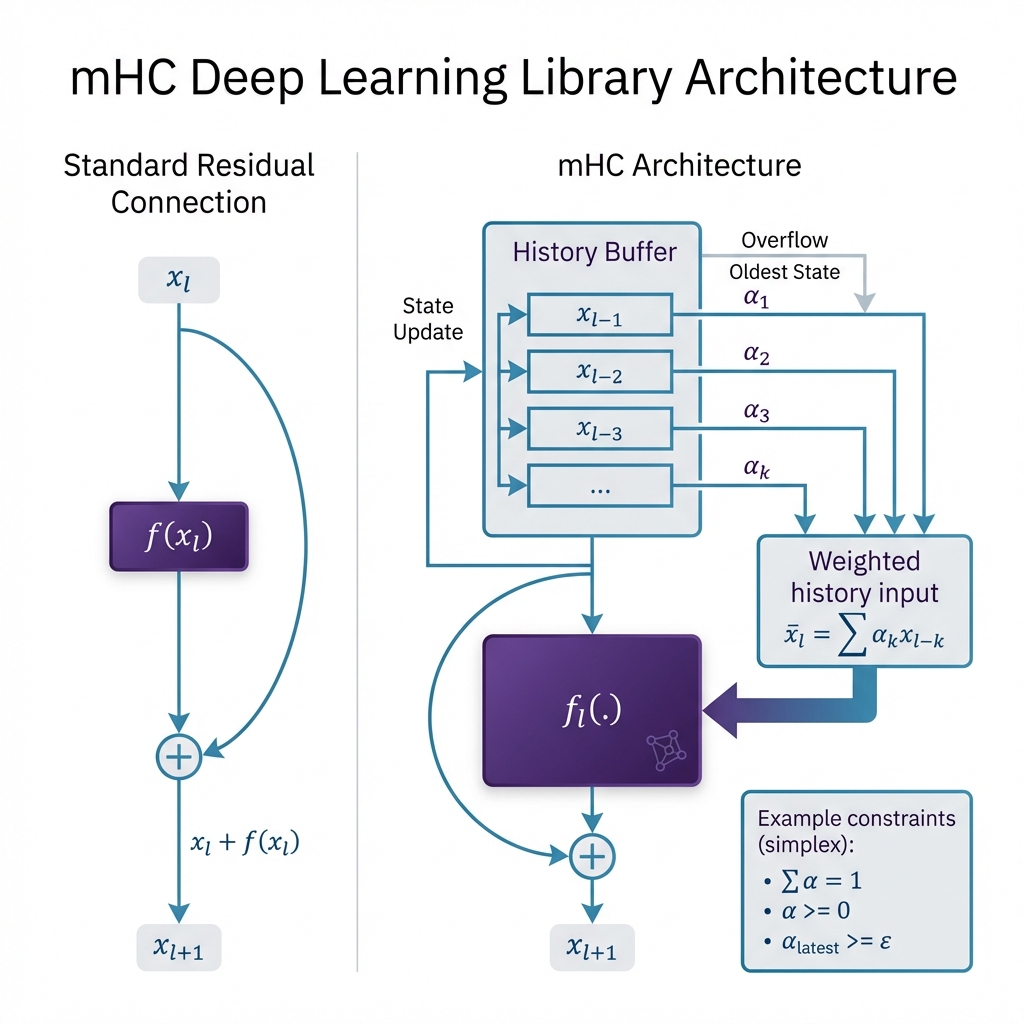

Standard residual connections only look one step back: $x_{l+1} = x_l + f(x_l)$. While revolutionary, this narrow window limits the network's ability to leverage long-range dependencies and can lead to diminishing returns in extremely deep architectures.

The mHC Breakthrough

mHC implements a history-aware manifold that mixes a sliding window of $H$ past representations:

$$ x_{l+1} = f(x_l) + \sum_{k=l-H+1}^{l} \alpha_{l,k}\, x_k $$

Where:

- $\alpha_{l,k}$: Learned mixing weights optimized for feature relevance.

- Constraints: Weights are projected onto stable manifolds (Simplex, Identity-preserving, or Doubly Stochastic) to ensure mathematical convergence.
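To make the update rule concrete, here is a minimal framework-free sketch of it. It is not the library's implementation: `HistoryMixer` is a hypothetical helper, and using a softmax over unconstrained logits to keep the weights on the simplex is an assumption about one way the constraint could be enforced.

```python
import math
from collections import deque


def softmax(logits):
    """Map unconstrained logits to non-negative weights that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


class HistoryMixer:
    """Hypothetical helper: keeps the last H states and mixes them."""

    def __init__(self, max_history):
        # deque(maxlen=H) drops the oldest state once the window is full.
        self.states = deque(maxlen=max_history)
        # One logit per slot; zeros give uniform weights after softmax.
        self.logits = [0.0] * max_history

    def step(self, x, f):
        """Return f(x) + sum_k alpha_k * x_k over the stored window."""
        self.states.append(x)
        # Early layers have seen fewer than H states, so use fewer weights.
        alphas = softmax(self.logits[-len(self.states):])
        out = list(f(x))
        for alpha, state in zip(alphas, self.states):
            for i, value in enumerate(state):
                out[i] += alpha * value
        return out, alphas


def halve(v):
    """Stand-in for a layer f."""
    return [0.5 * t for t in v]


mixer = HistoryMixer(max_history=2)
x1, a1 = mixer.step([2.0, 4.0], halve)  # window holds one state
x2, a2 = mixer.step(x1, halve)          # window holds two states
```

With a single state in the window the only weight is 1, so the first step reduces to the standard residual $x + f(x)$. From the second step on, the output blends both stored states with weights that sum to 1.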

Key Advantages

| Benefit | Description |
|---|---|
| Deep Stability | Geometric constraints prevent gradient explosion even at 200+ layers. |
| Feature Fusion | Multi-history mixing allows layers to recover lost spatial or semantic info. |
| Adaptive Flow | The network learns which historical states are most important for the current layer. |
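One intuition behind the stability claim can be checked numerically. When the weights lie on the simplex, the mixed state is a convex combination, so by the triangle inequality its norm cannot exceed that of the largest state in the window. The sketch below illustrates only this forward-pass bound, using plain Python and made-up random states. It does not reproduce the library's code or measure gradients.

```python
import math
import random


def norm(v):
    """Euclidean norm of a vector."""
    return math.sqrt(sum(t * t for t in v))


def weighted_mix(states, weights):
    """Weighted sum of equal-length vectors."""
    dim = len(states[0])
    return [sum(w * s[i] for w, s in zip(weights, states)) for i in range(dim)]


def random_simplex_weights(n, rng):
    """Non-negative weights summing to 1 (normalised positive draws)."""
    raw = [rng.random() + 1e-9 for _ in range(n)]
    total = sum(raw)
    return [r / total for r in raw]


rng = random.Random(0)
states = [[rng.gauss(0, 1) for _ in range(16)] for _ in range(4)]
largest = max(norm(s) for s in states)

# Simplex-constrained weights: the mixed norm never exceeds the largest input.
worst_ratio = 0.0
for _ in range(1000):
    weights = random_simplex_weights(len(states), rng)
    worst_ratio = max(worst_ratio, norm(weighted_mix(states, weights)) / largest)

# Unconstrained weights have no such bound: scaling a weight scales the output.
unconstrained = norm(weighted_mix(states, [3.0, 0.0, 0.0, 0.0])) / norm(states[0])
```

Here `worst_ratio` stays at or below 1 for every sampled weight vector, while the unconstrained example amplifies its input by a factor of 3. Stacking many such unconstrained mixes is how activations can grow with depth.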

📊 Performance Highlights

Experiments with 50-layer networks show:

- ✅ 2x Faster Convergence compared to standard residual connections on deep MLPs.

- ✅ Superior Gradient Stability through geometric manifold constraints.

- ✅ Minimal Overhead (~10% additional compute for 4x history).

Tip: Run the benchmark yourself:

uv run python experiments/benchmark_stability.py

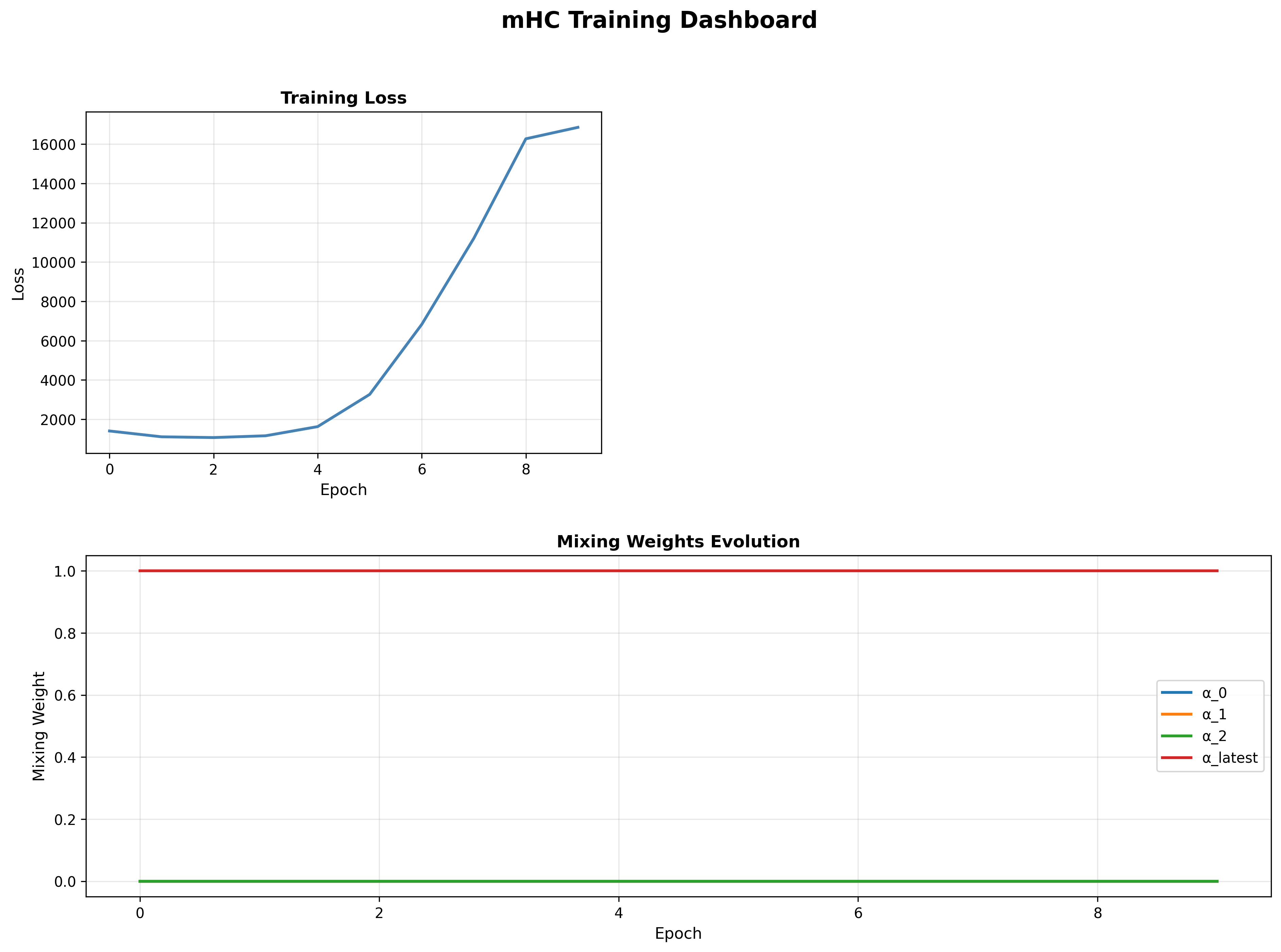

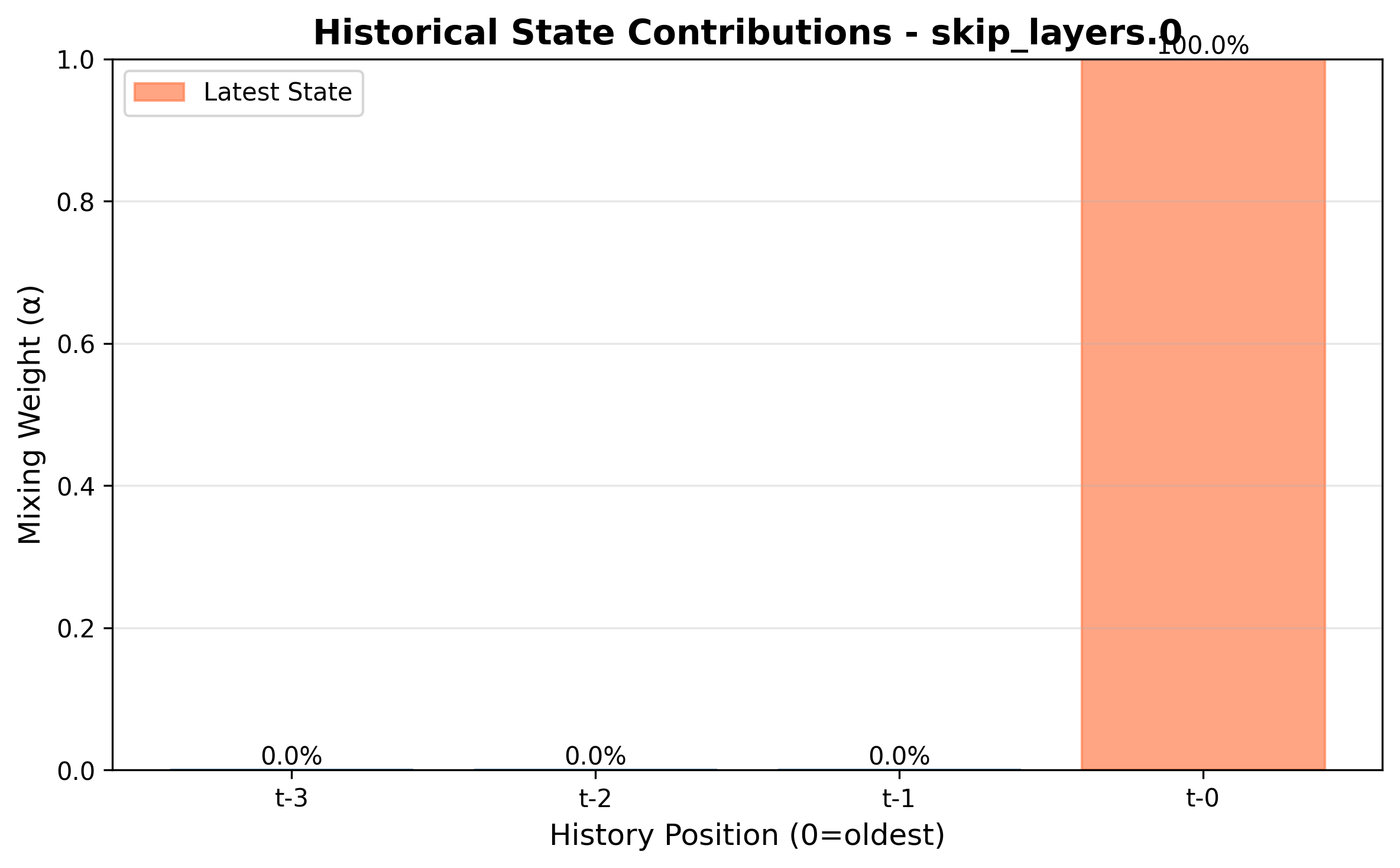

📊 Visualizing Results

| Training Dashboard | History Evolution |
|---|---|
| Loss curves & weight dynamics | Learned coefficients over time |

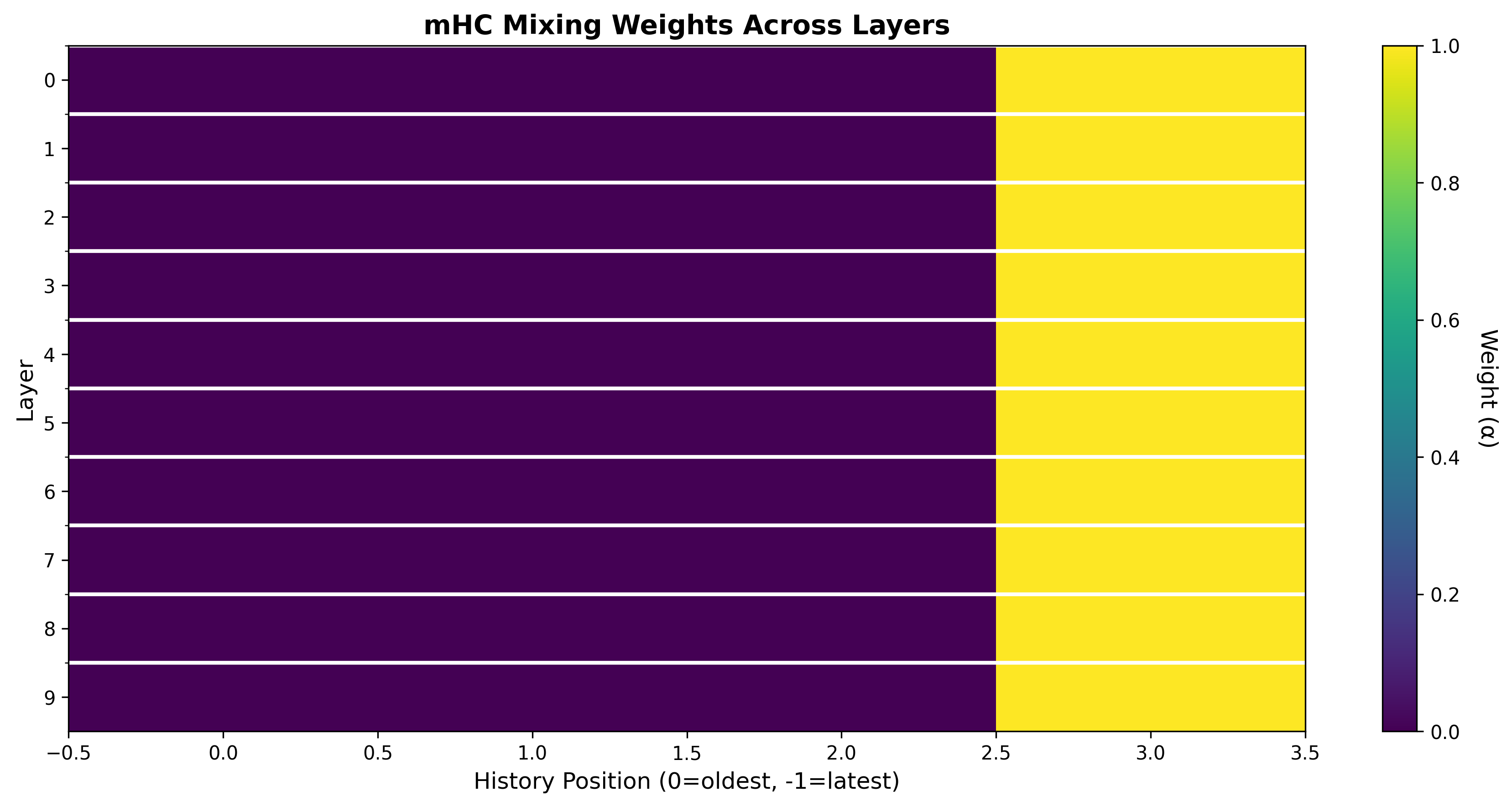

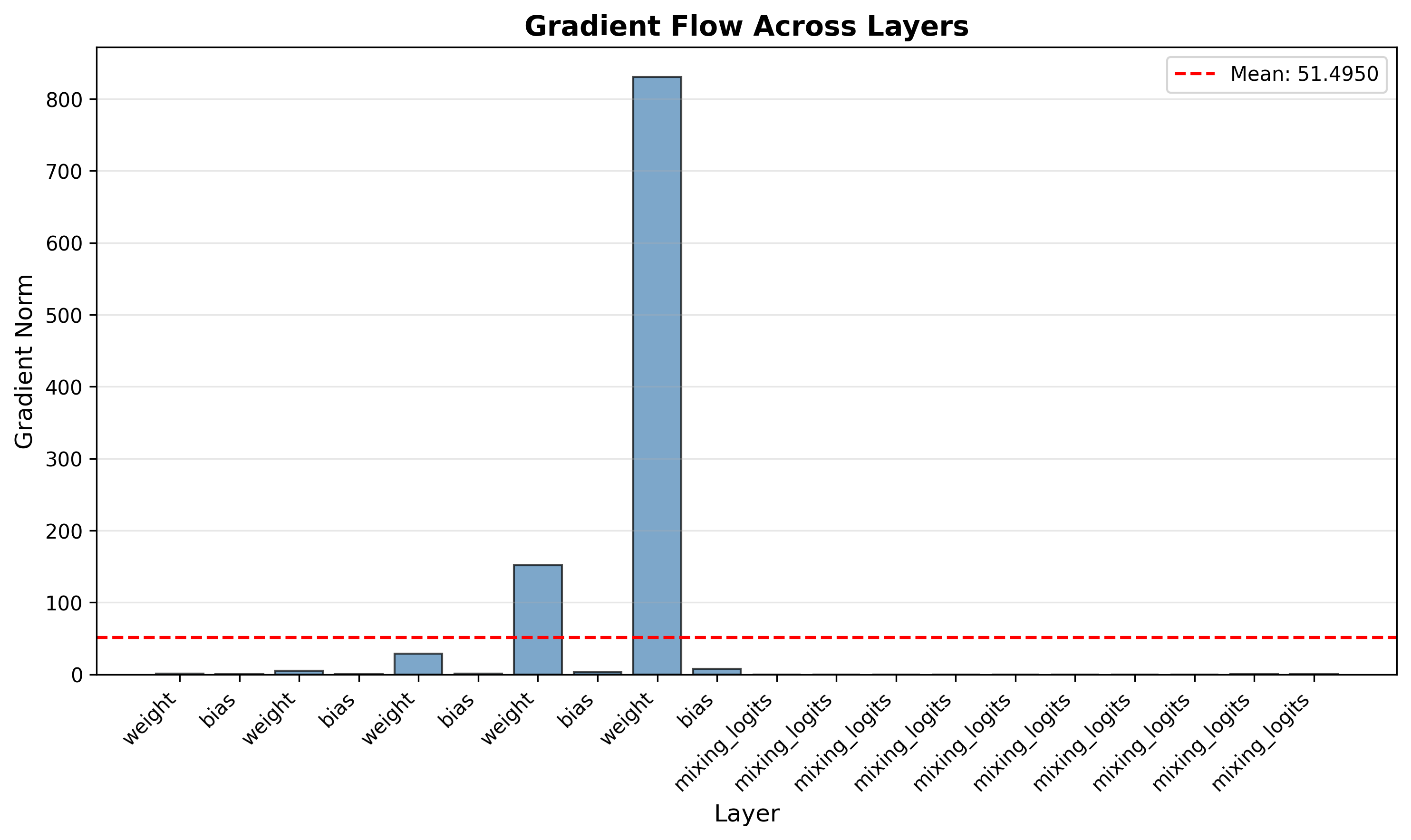

| Gradient Flow | Feature Contribution |
|---|---|
| Improved backpropagation signal | Single layer state importance |

🌳 Repository Structure

MHC/
├── mhc/                # Core package
│   ├── constraints/    # Mathematical projections
│   ├── layers/         # mHC Skip implementations
│   ├── tf/             # TensorFlow compatibility
│   └── utils/          # Injection & logging tools
├── tests/              # Robust PyTest suite
├── docs/               # Documentation sources
└── examples/           # Quick-start notebooks

🛠️ Development Installation

For contributors cloning the repository:

git clone https://github.com/gm24med/MHC.git

cd MHC

# Using uv (recommended for dev)

uv pip install -e ".[dev]"

# Or standard pip

pip install -e ".[dev]"

⭐ Star us on GitHub!


File details

Details for the file mhc-0.5.5.tar.gz.

File metadata

- Download URL: mhc-0.5.5.tar.gz

- Upload date:

- Size: 32.4 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 60562237d3fcadd77931572a10bf30ef14bc36711da002e1e34f84fb8f7406cd |
| MD5 | 04a4fa904b1e2db1569268b13018d629 |
| BLAKE2b-256 | 305d64bb89542634862cd30f7a8f64f506354223d4c2e1a3f5059430354b061f |

File details

Details for the file mhc-0.5.5-py3-none-any.whl.

File metadata

- Download URL: mhc-0.5.5-py3-none-any.whl

- Upload date:

- Size: 31.9 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 2fb07b566050107733d825a219994c4b6975461c646af95e9cb8d50f0d28ed47 |
| MD5 | a7afe62fff13974220a5531148b23147 |
| BLAKE2b-256 | 23ff6ea35cdae3a2b0bf18629ba3ea0c69daf2e0ef683740927b9d1ef27bd777 |