Mia's Server llms-txt documentation over MCP

MCP LLMS-TXT Documentation Server (miamcpdoc)

𝕄𝕚𝕖𝕥𝕥𝕖❜𝕊𝕡𝕣𝕚𝕥𝕖 🌸

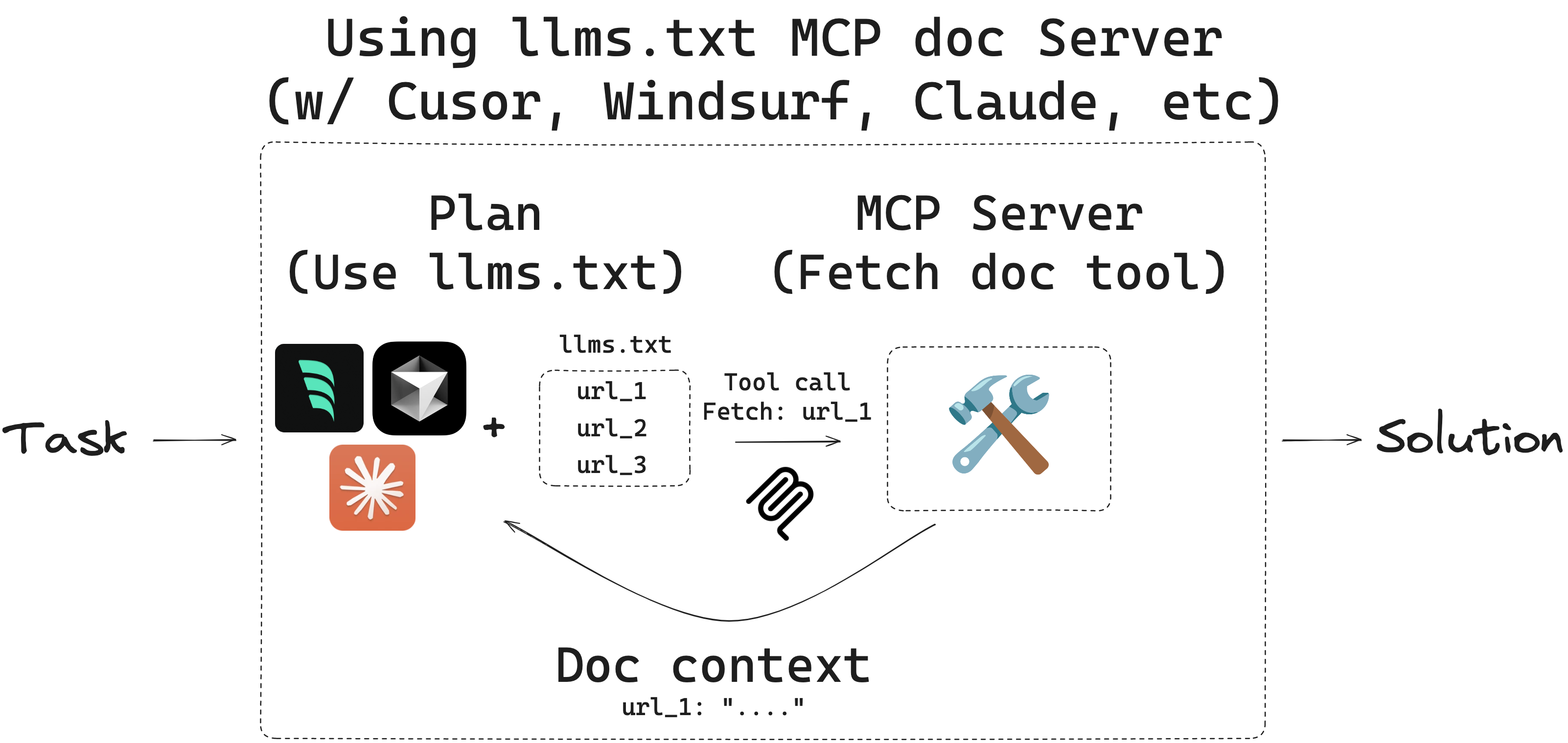

Overview

llms.txt is a website index for LLMs, providing background information, guidance, and links to detailed markdown files. IDEs like Cursor and Windsurf or apps like Claude Code/Desktop can use llms.txt to retrieve context for tasks. However, these apps use different built-in tools to read and process files like llms.txt. The retrieval process can be opaque, and there is not always a way to audit the tool calls or the context returned.

MCP offers a way for developers to have full control over the tools used by these applications. Here, we create an open source MCP server to provide MCP host applications (e.g., Cursor, Windsurf, Claude Code/Desktop) with (1) a user-defined list of llms.txt files and (2) a simple fetch_docs tool that reads URLs within any of the provided llms.txt files. This allows the user to audit each tool call as well as the context returned.

llms-txt

You can find llms.txt files for langgraph and langchain here:

| Library | llms.txt |
|---|---|
| LangGraph Python | https://langchain-ai.github.io/langgraph/llms.txt |
| LangGraph JS | https://langchain-ai.github.io/langgraphjs/llms.txt |
| LangChain Python | https://python.langchain.com/llms.txt |
| LangChain JS | https://js.langchain.com/llms.txt |
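To illustrate what an llms.txt index gives a tool to work with, the sketch below pulls the markdown links out of a small llms.txt-style string. The sample text, the example.com URLs, and the function name `extract_links` are assumptions made for this example; it is not a description of how miamcpdoc parses files.

```python
import re

# Matches markdown links of the form [title](url). This is a simplification
# that ignores nested brackets and optional link titles.
LINK_PATTERN = re.compile(r"\[([^\]]+)\]\((https?://[^)\s]+)\)")


def extract_links(llms_txt: str) -> list[tuple[str, str]]:
    """Return (title, url) pairs for every markdown link in the text."""
    return LINK_PATTERN.findall(llms_txt)


# Hypothetical llms.txt content, for illustration only.
SAMPLE = """# Example Project

> Short summary of the project.

## Docs

- [Quickstart](https://example.com/quickstart.md): Install and run
- [Concepts](https://example.com/concepts.md): Core ideas
"""

links = extract_links(SAMPLE)
for title, url in links:
    print(f"{title}: {url}")
```

A host application would typically show such a list to the model, which then decides which URLs are relevant to the question at hand.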

Quickstart

Quick Setup

1. Initialize Configuration

Create a config.yaml file in your current directory:

# Install and create basic config.yaml

uvx --from miamcpdoc miamcpdoc --init

# Create config.yaml with interactive prompts

uvx --from miamcpdoc miamcpdoc --init --interactive

# Use default bundled configuration (includes LangGraph, JGTPY, Coaiapy)

uvx --from miamcpdoc miamcpdoc --default

2. Run the MCP Server

# Use your config.yaml

uvx --from miamcpdoc miamcpdoc --yaml config.yaml

# Use default bundled configuration

uvx --from miamcpdoc miamcpdoc --default

# Serve via HTTP/SSE (web-accessible)

uvx --from miamcpdoc miamcpdoc --yaml config.yaml --transport sse --host 0.0.0.0 --port 8000

Configuration Examples

Basic config.yaml (created by --init)

- name: LangGraph Python

llms_txt: https://langchain-ai.github.io/langgraph/llms.txt

description: LangGraph documentation for building stateful, multi-actor applications

- name: JGTPY Trading Framework

llms_txt: https://jgtpy.jgwill.com/llms.txt

description: JGT Python trading framework for market data and technical analysis

- name: Coaiapy AI Platform

llms_txt: https://coaiapy.jgwill.com/llms.txt

description: Coaiapy AI platform documentation and integration guides

Install uv (if needed)

- Please see the official uv docs for other ways to install uv.

curl -LsSf https://astral.sh/uv/install.sh | sh

Note: Security and Domain Access Control

For security reasons, miamcpdoc implements strict domain access controls:

- Remote llms.txt files: When you specify a remote llms.txt URL (e.g., `https://langchain-ai.github.io/langgraph/llms.txt`), miamcpdoc automatically adds only that specific domain (`langchain-ai.github.io`) to the allowed domains list. This means the tool can only fetch documentation from URLs on that domain.

- Local llms.txt files: When using a local file, NO domains are automatically added to the allowed list. You MUST explicitly specify which domains to allow using the `--allowed-domains` parameter.

- Adding additional domains: To allow fetching from domains beyond those automatically included:

  - Use `--allowed-domains domain1.com domain2.com` to add specific domains

  - Use `--allowed-domains '*'` to allow all domains (use with caution)

This security measure prevents unauthorized access to domains not explicitly approved by the user, ensuring that documentation can only be retrieved from trusted sources.
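The rules above can be pictured with a small allow-list check. This is a minimal sketch under stated assumptions, not miamcpdoc's actual implementation: the function names `allowed_domains_for` and `is_allowed`, the local path `./docs/llms.txt`, and the domain `example.com` are all hypothetical.

```python
from urllib.parse import urlparse


def allowed_domains_for(llms_txt_sources, extra_domains=()):
    """Build the allow-list: the domain of each remote source, plus extras."""
    allowed = set(extra_domains)
    for source in llms_txt_sources:
        parsed = urlparse(source)
        # Only remote (http/https) sources contribute a domain; local file
        # paths add nothing, mirroring the rule described above.
        if parsed.scheme in ("http", "https") and parsed.hostname:
            allowed.add(parsed.hostname)
    return allowed


def is_allowed(url, allowed):
    """Return True if the URL's host is on the allow-list (or '*' is set)."""
    if "*" in allowed:
        return True
    return urlparse(url).hostname in allowed


remote = allowed_domains_for(["https://langchain-ai.github.io/langgraph/llms.txt"])
local = allowed_domains_for(["./docs/llms.txt"], extra_domains=["example.com"])

print(is_allowed("https://langchain-ai.github.io/langgraph/concepts/", remote))
print(is_allowed("https://python.langchain.com/docs/", remote))
print(is_allowed("https://example.com/guide.md", local))
```

In this sketch, a remote source permits only its own host, while a local source permits nothing until extra domains are supplied.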

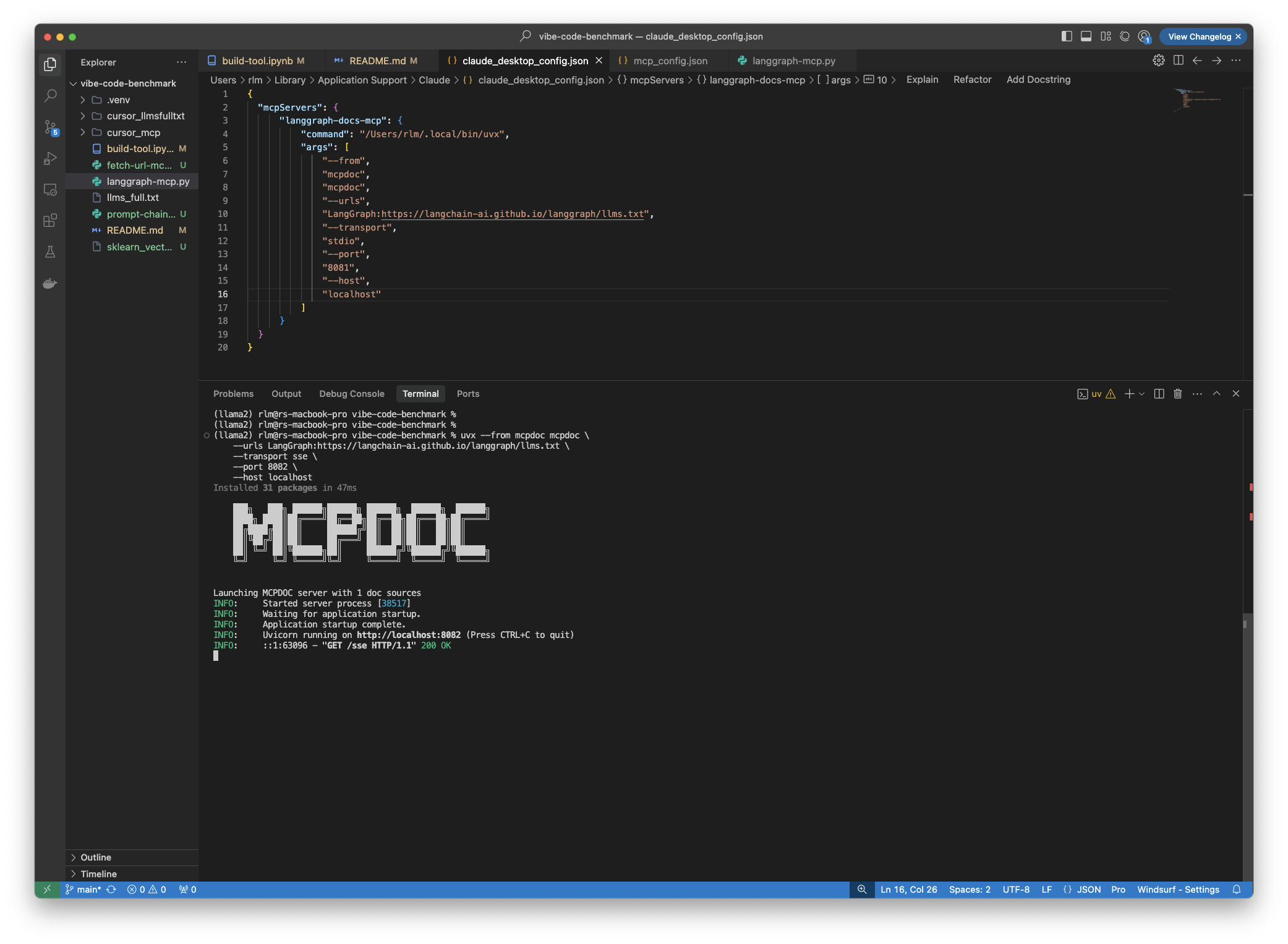

(Optional) Test the MCP server locally:

# Test with default configuration

uvx --from miamcpdoc miamcpdoc --default --transport sse --port 8082 --host localhost

# Test with custom URLs

uvx --from miamcpdoc miamcpdoc \

--urls "LangGraph:https://langchain-ai.github.io/langgraph/llms.txt" "LangChain:https://python.langchain.com/llms.txt" \

--transport sse \

--port 8082 \

--host localhost

Or use the specialized server commands:

# AI SDK documentation

uvx --from miamcpdoc miamcpdoc-aisdk

# Hugging Face documentation

uvx --from miamcpdoc miamcpdoc-huggingface

# LangGraph documentation

uvx --from miamcpdoc miamcpdoc-langgraph

# What is LLMs documentation

uvx --from miamcpdoc miamcpdoc-llms

# Creative Frameworks documentation (RISE, Narrative Remixing, Creative Orientation)

uvx --from miamcpdoc miamcpdoc-creative

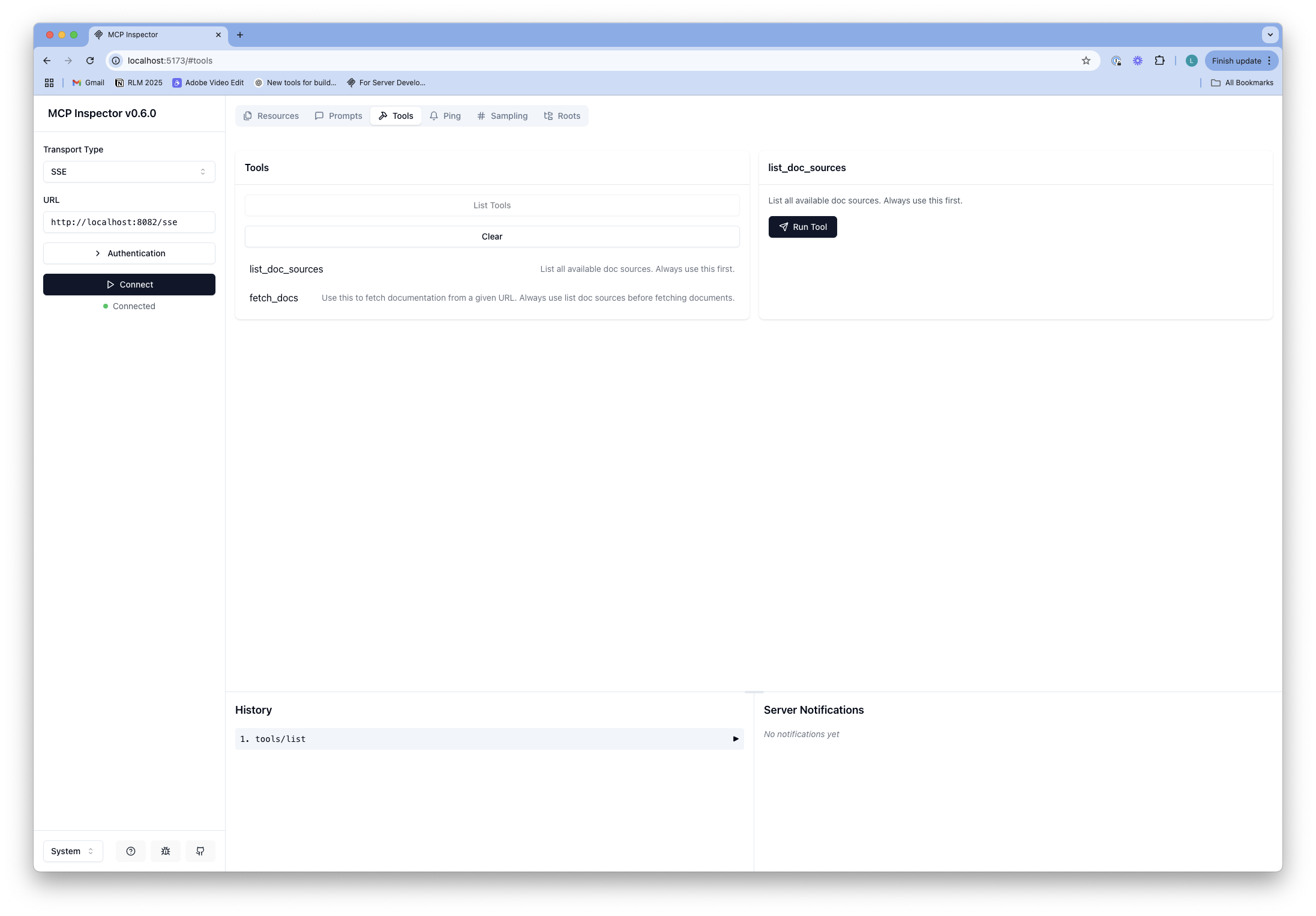

- This should run at: http://localhost:8082

- Run MCP inspector and connect to the running server:

npx @modelcontextprotocol/inspector

- Here, you can test the `tool` calls.

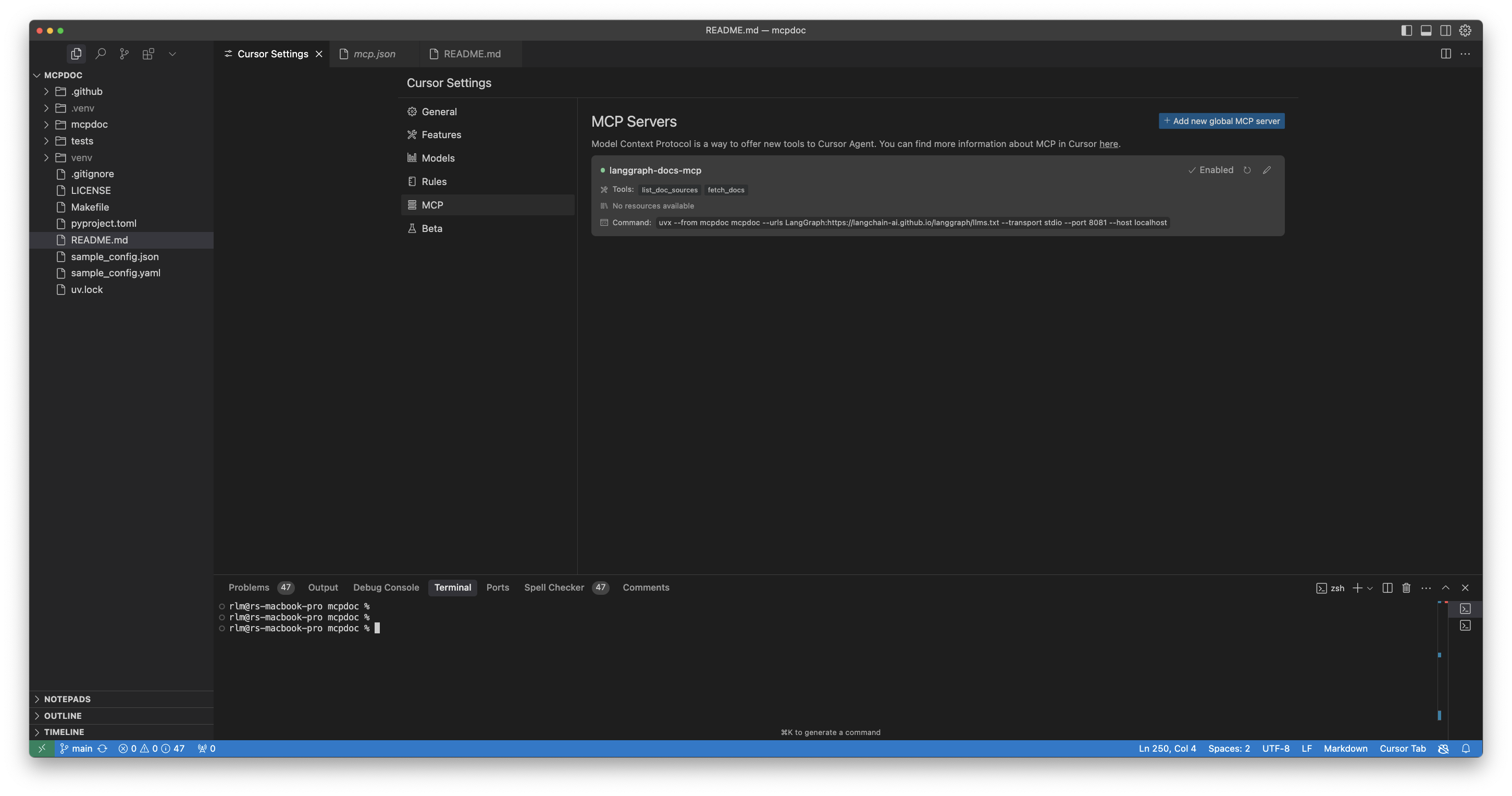

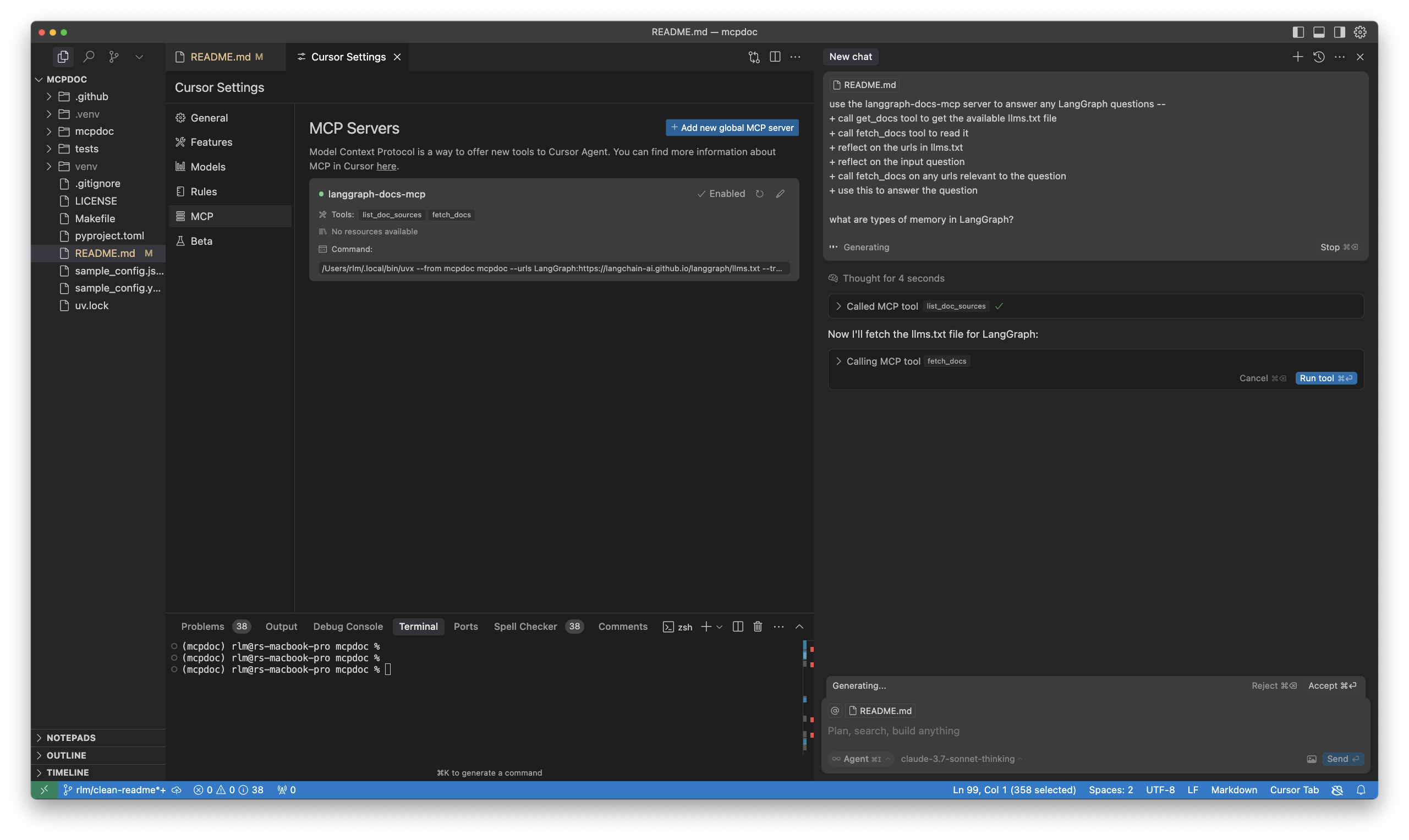

Connect to Cursor

- Open `Cursor Settings` and the `MCP` tab.

- This will open the `~/.cursor/mcp.json` file.

- Paste the following into the file. Choose one of these configurations:

Option 1: Use default bundled configuration (recommended)

{

"mcpServers": {

"miamcpdoc-default": {

"command": "uvx",

"args": [

"--from",

"miamcpdoc",

"miamcpdoc",

"--default"

]

}

}

}

Option 2: Use custom config.yaml

{

"mcpServers": {

"miamcpdoc-custom": {

"command": "uvx",

"args": [

"--from",

"miamcpdoc",

"miamcpdoc",

"--yaml",

"config.yaml"

]

}

}

}

Option 3: Direct URL specification

{

"mcpServers": {

"langgraph-docs-mcp": {

"command": "uvx",

"args": [

"--from",

"miamcpdoc",

"miamcpdoc",

"--urls",

"LangGraph:https://langchain-ai.github.io/langgraph/llms.txt",

"LangChain:https://python.langchain.com/llms.txt"

]

}

}

}

- Confirm that the server is running in your

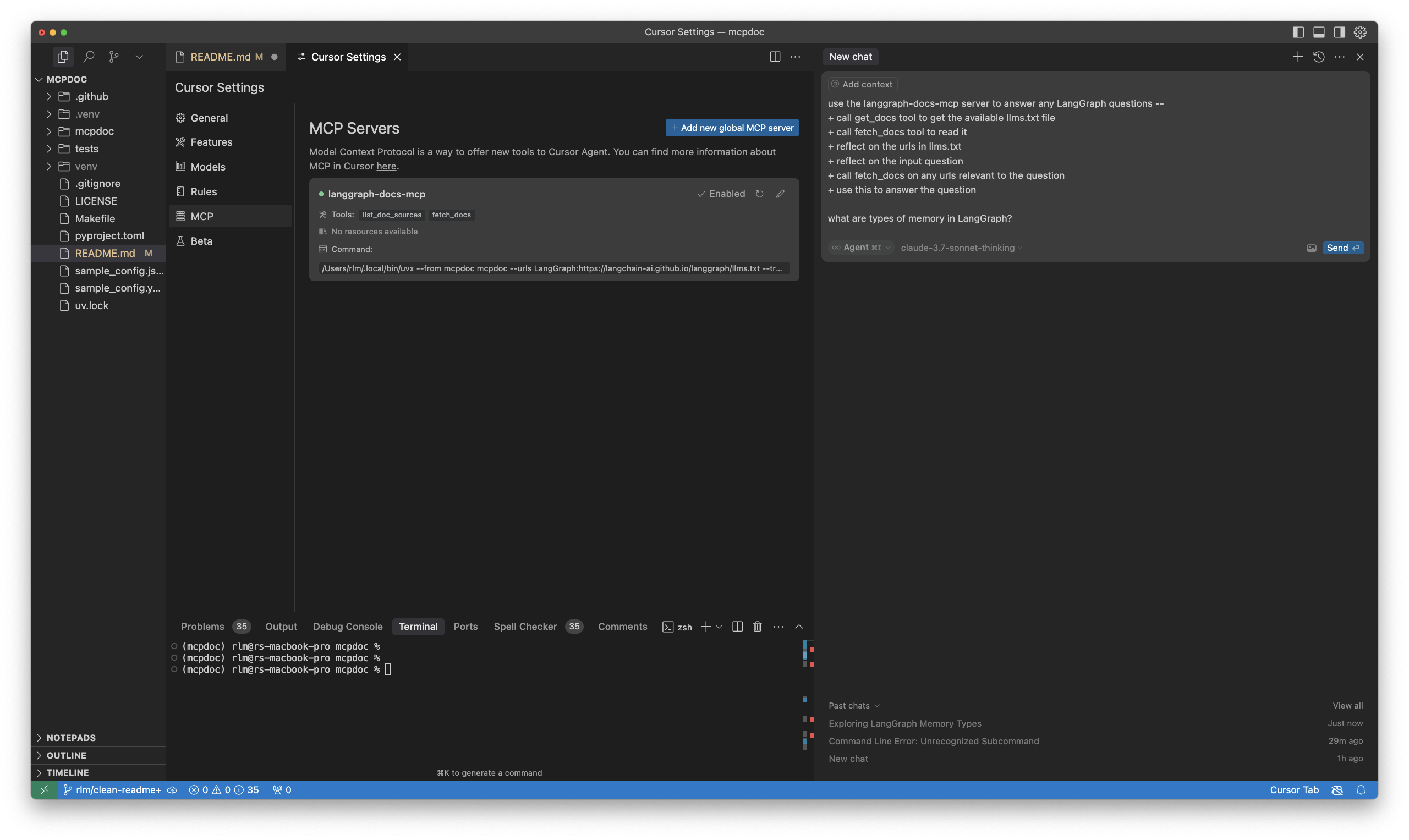

Cursor Settings/MCPtab. - Best practice is to then update Cursor Global (User) rules.

- Open Cursor `Settings/Rules` and update `User Rules` with the following (or similar):

for ANY question about LangGraph, use the langgraph-docs-mcp server to help answer --

+ call list_doc_sources tool to get the available llms.txt file

+ call fetch_docs tool to read it

+ reflect on the urls in llms.txt

+ reflect on the input question

+ call fetch_docs on any urls relevant to the question

+ use this to answer the question

- Press `CMD+L` (on Mac) to open chat.

- Ensure `agent` is selected.

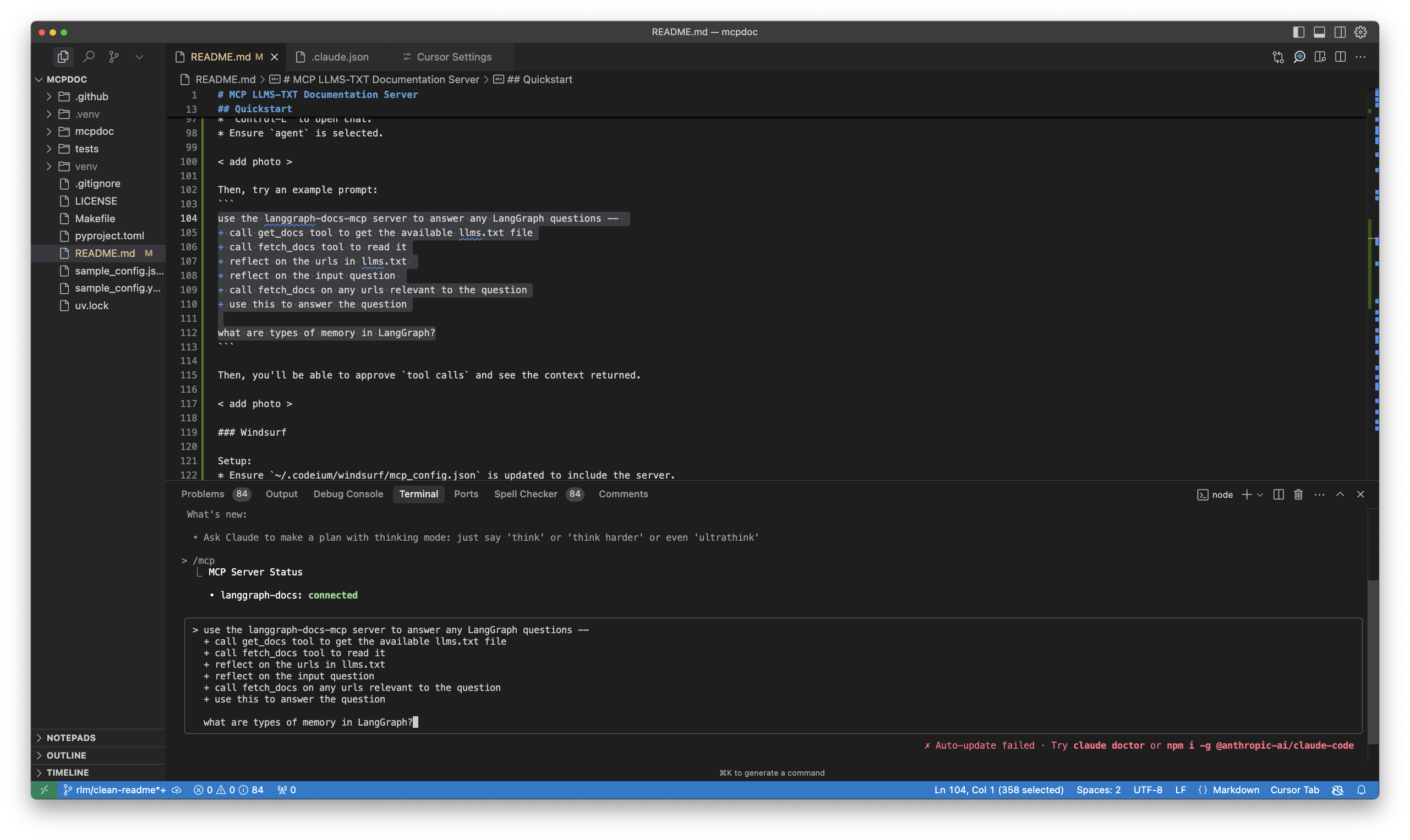

Then, try an example prompt, such as:

what are types of memory in LangGraph?

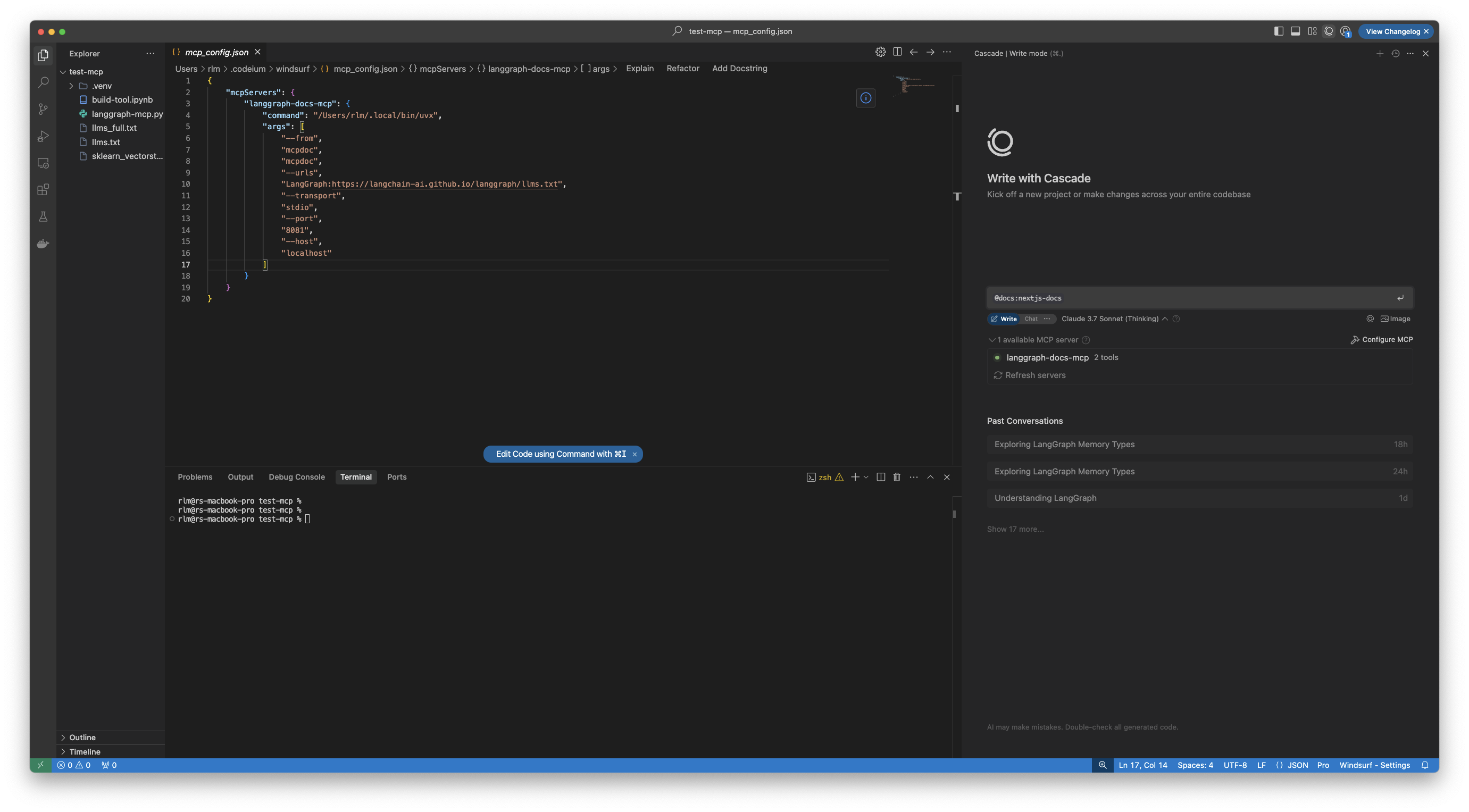

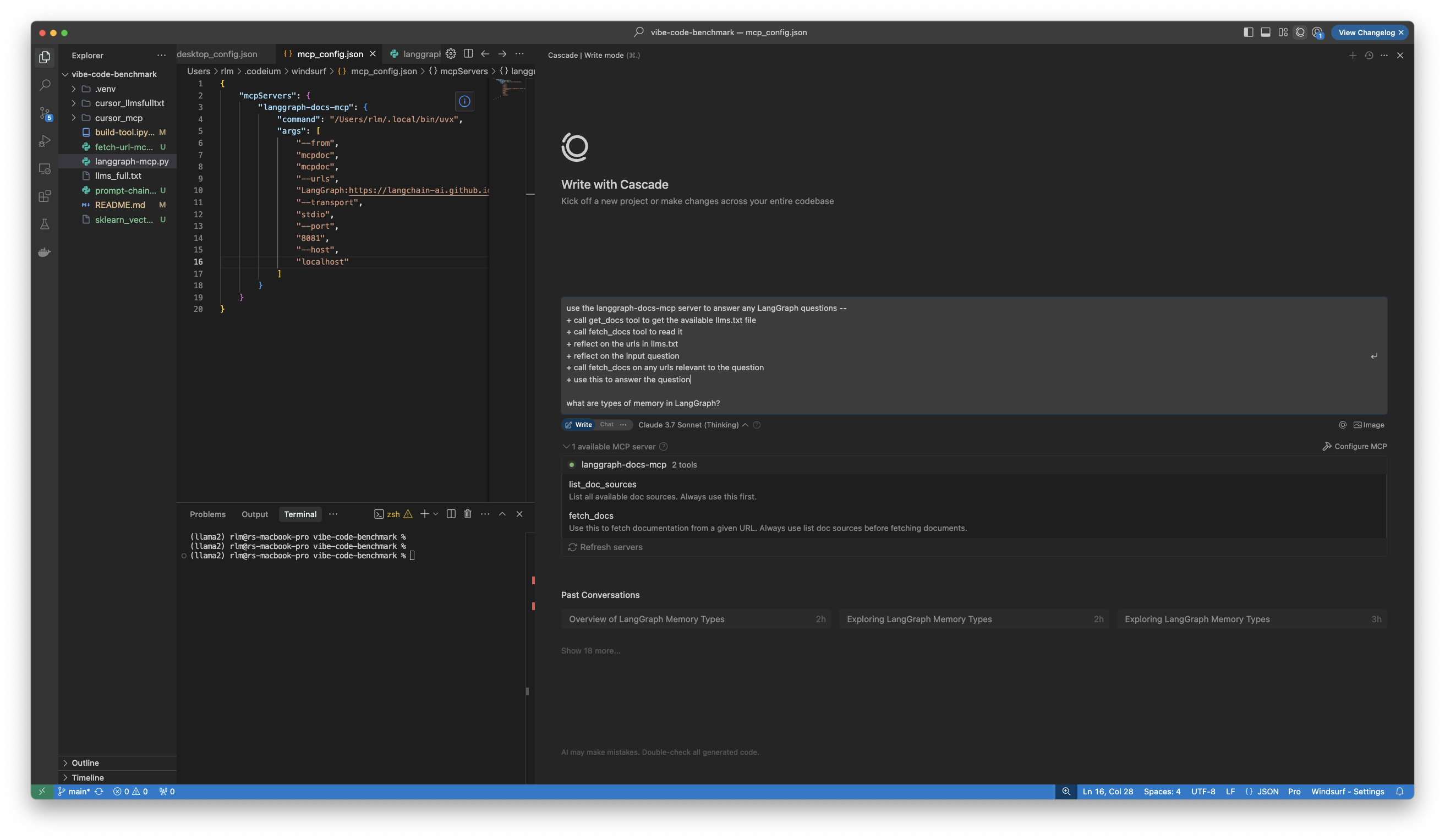

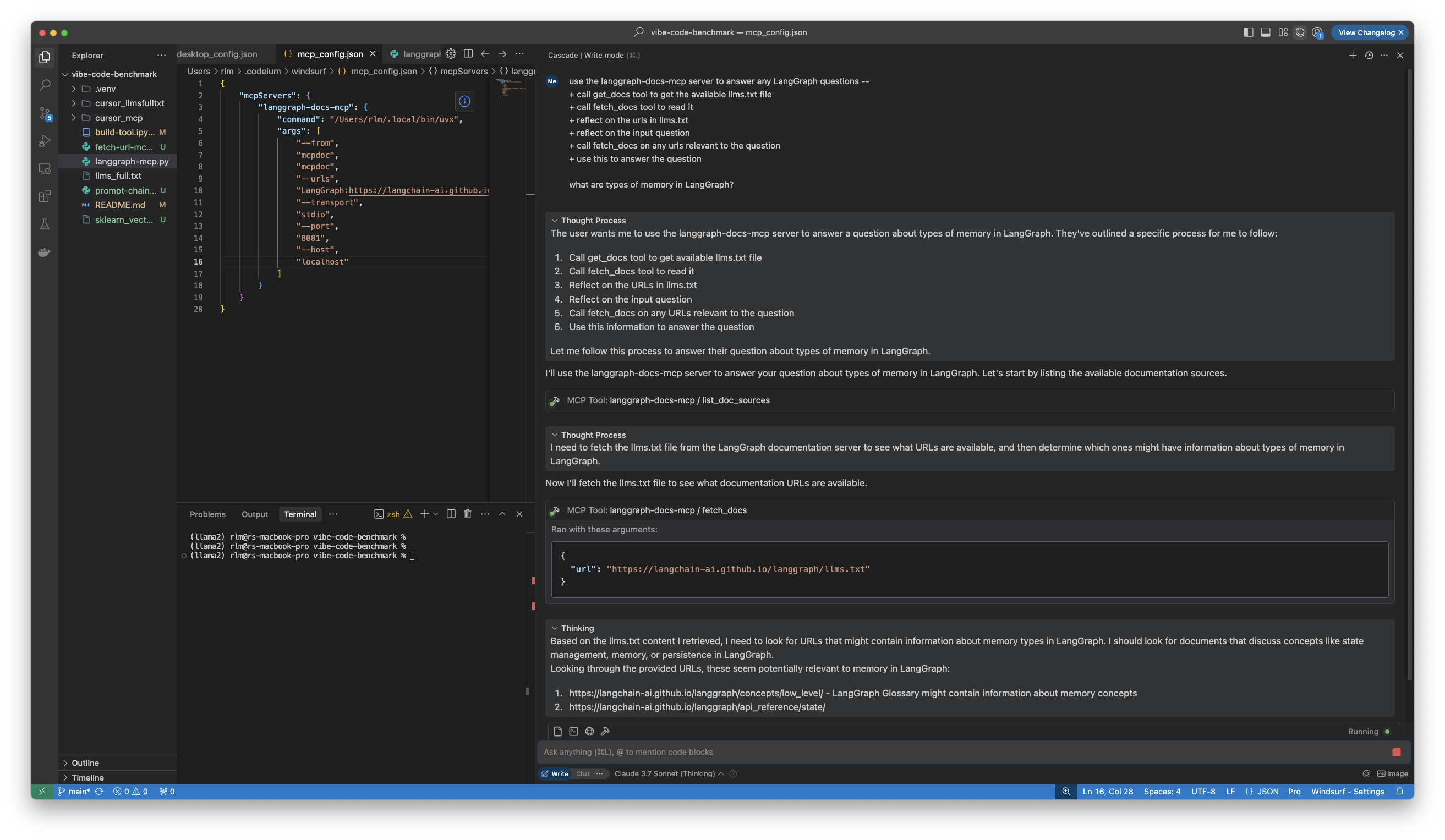

Connect to Windsurf

- Open Cascade with `CMD+L` (on Mac).

- Click `Configure MCP` to open the config file, `~/.codeium/windsurf/mcp_config.json`.

- Update with `langgraph-docs-mcp` as noted above.

- Update `Windsurf Rules/Global rules` with the following (or similar):

for ANY question about LangGraph, use the langgraph-docs-mcp server to help answer --

+ call list_doc_sources tool to get the available llms.txt file

+ call fetch_docs tool to read it

+ reflect on the urls in llms.txt

+ reflect on the input question

+ call fetch_docs on any urls relevant to the question

Then, try the example prompt:

- It will perform your tool calls.

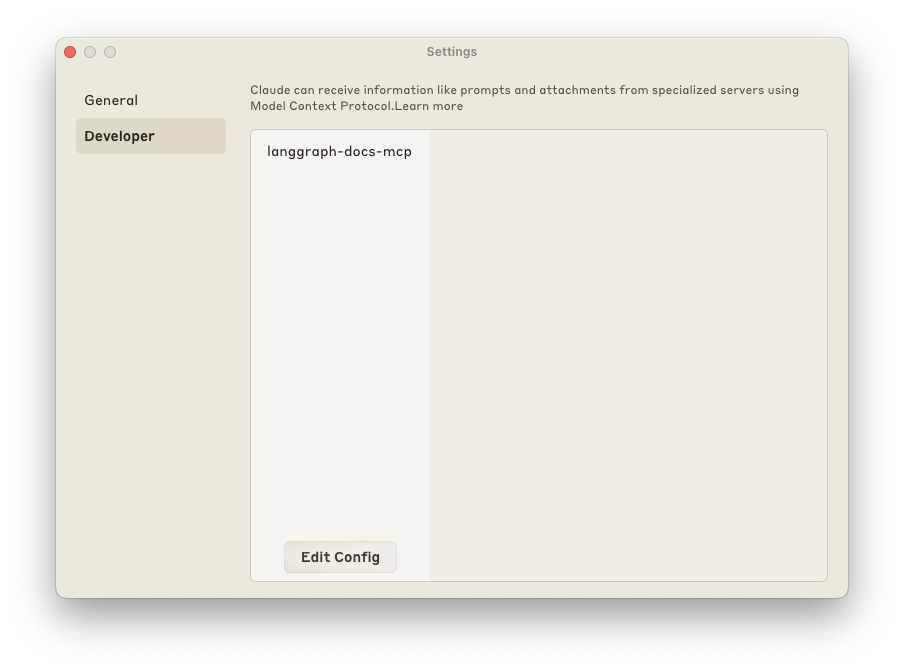

Connect to Claude Desktop

- Open `Settings/Developer` to update `~/Library/Application\ Support/Claude/claude_desktop_config.json`.

- Update with `langgraph-docs-mcp` as noted above.

- Restart Claude Desktop app.

[!Note] If you run into issues with Python version incompatibility when trying to add MCPDoc tools to Claude Desktop, you can explicitly specify the filepath to the `python` executable in the `uvx` command.

Example configuration:

{ "mcpServers": { "langgraph-docs-mcp": { "command": "uvx", "args": [ "--python", "/path/to/python", "--from", "miamcpdoc", "miamcpdoc", "--urls", "LangGraph:https://langchain-ai.github.io/langgraph/llms.txt", "--transport", "stdio" ] } } }

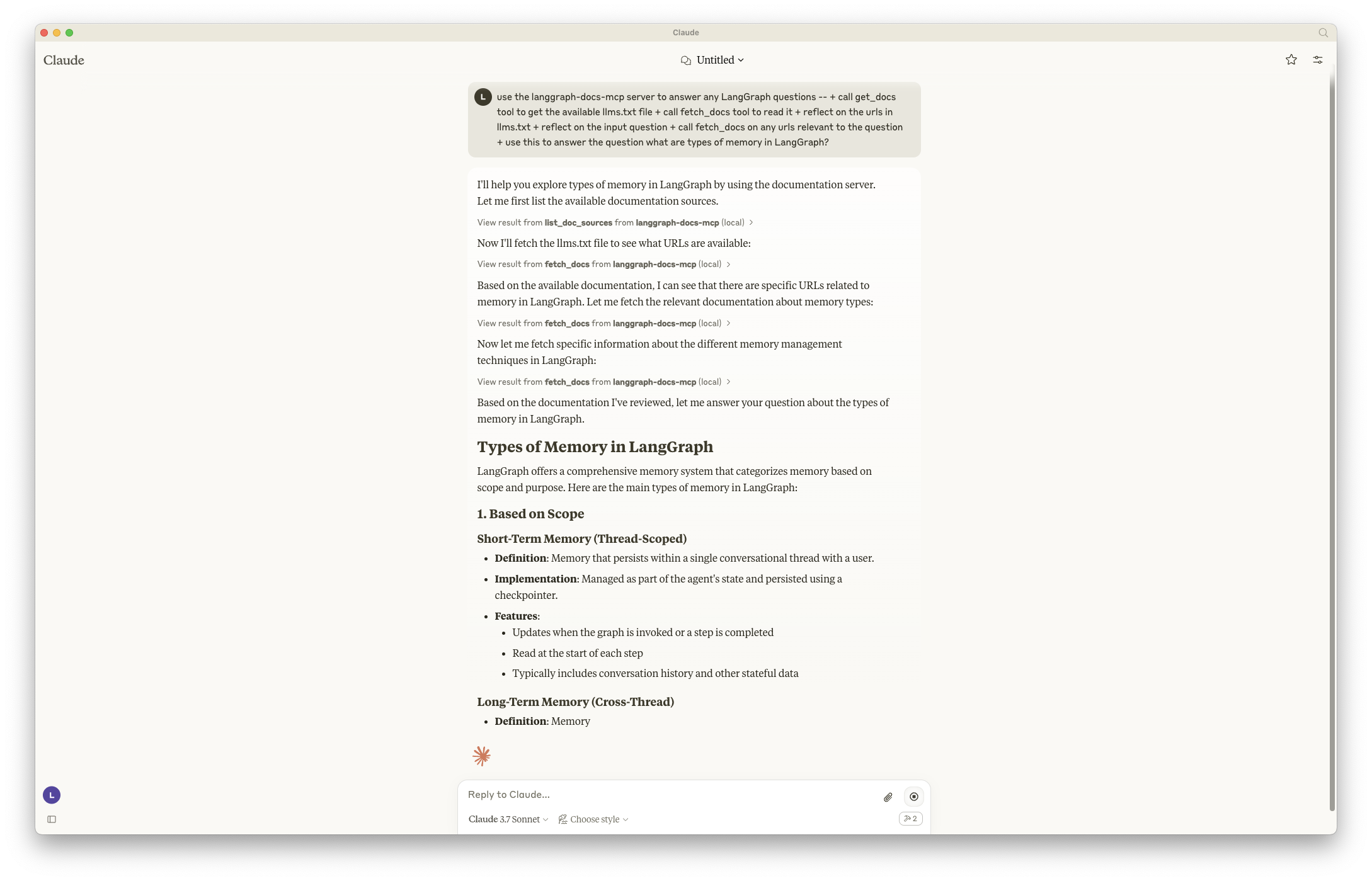

[!Note] Currently (3/21/25) it appears that Claude Desktop does not support `rules` for global rules, so append the following to your prompt.

<rules>

for ANY question about LangGraph, use the langgraph-docs-mcp server to help answer --

+ call list_doc_sources tool to get the available llms.txt file

+ call fetch_docs tool to read it

+ reflect on the urls in llms.txt

+ reflect on the input question

+ call fetch_docs on any urls relevant to the question

</rules>

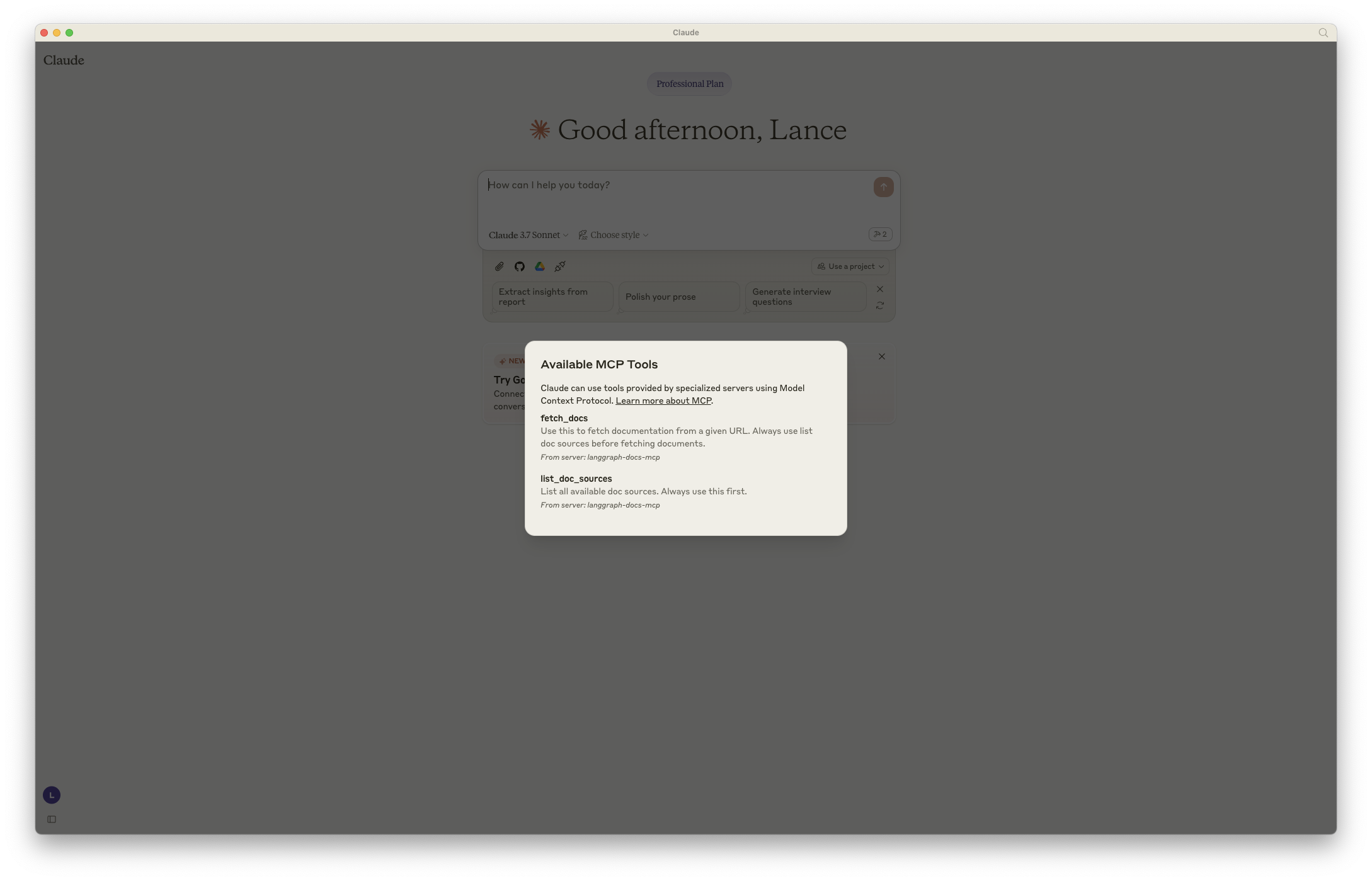

- You will see your tools visible in the bottom right of your chat input.

Then, try the example prompt:

- It will ask to approve tool calls as it processes your request.

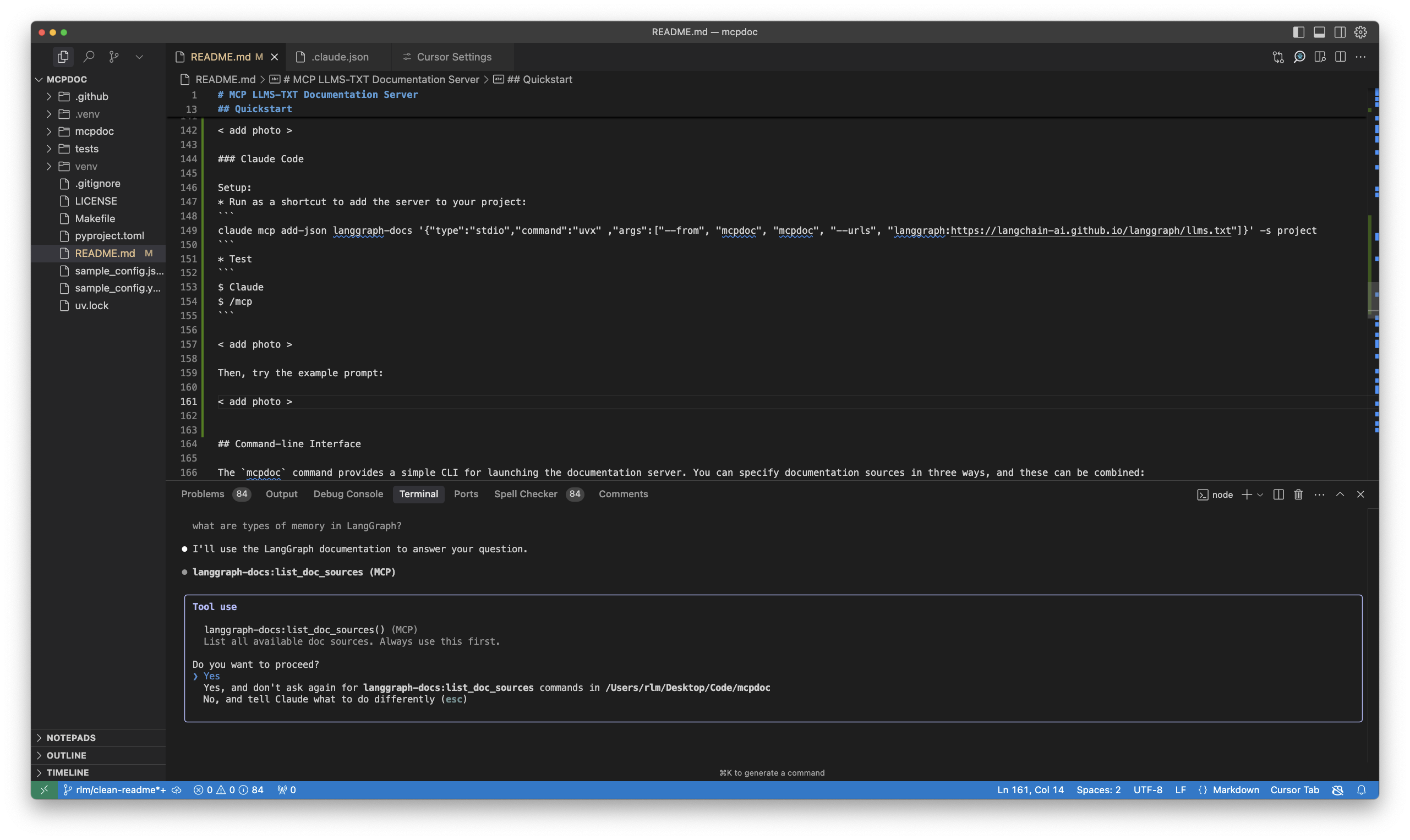

Connect to Claude Code

- In a terminal after installing Claude Code, run this command to add the MCP server to your project:

claude mcp add-json langgraph-docs '{"type":"stdio","command":"uvx" ,"args":["--from", "miamcpdoc", "miamcpdoc", "--urls", "langgraph:https://langchain-ai.github.io/langgraph/llms.txt", "--urls", "LangChain:https://python.langchain.com/llms.txt"]}' -s local

- You will see `~/.claude.json` updated.

- Test by launching Claude Code and running `/mcp` to view your tools:

$ claude

$ /mcp
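Hand-quoting JSON inside a shell command is easy to get wrong. As a minimal sketch, the `claude mcp add-json` command shown above can be generated with Python's standard `json` and `shlex` modules. The variable names are illustrative, and the server definition simply mirrors the command above.

```python
import json
import shlex

# Server definition copied from the add-json command above.
server = {
    "type": "stdio",
    "command": "uvx",
    "args": [
        "--from", "miamcpdoc", "miamcpdoc",
        "--urls", "langgraph:https://langchain-ai.github.io/langgraph/llms.txt",
        "--urls", "LangChain:https://python.langchain.com/llms.txt",
    ],
}

# json.dumps guarantees valid JSON; shlex.quote wraps it for a POSIX shell.
payload = json.dumps(server)
command = f"claude mcp add-json langgraph-docs {shlex.quote(payload)} -s local"
print(command)
```

The printed line can be pasted into a terminal. Building it this way keeps the JSON valid when sources are added or renamed.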

[!Note] Currently (3/21/25) it appears that Claude Code does not support `rules` for global rules, so append the following to your prompt.

<rules>

for ANY question about LangGraph, use the langgraph-docs-mcp server to help answer --

+ call list_doc_sources tool to get the available llms.txt file

+ call fetch_docs tool to read it

+ reflect on the urls in llms.txt

+ reflect on the input question

+ call fetch_docs on any urls relevant to the question

</rules>

Then, try the example prompt:

- It will ask to approve tool calls.

Available CLI Commands

Main Command

- `miamcpdoc` - General-purpose MCP server for custom llms.txt files

Specialized Documentation Servers

- `miamcpdoc-aisdk` - Vercel AI SDK documentation (ai-sdk.dev)

- `miamcpdoc-huggingface` - Hugging Face ecosystem documentation (Transformers, Diffusers, Accelerate, Hub, Python Hub)

- `miamcpdoc-langgraph` - LangGraph and LangChain Python documentation

- `miamcpdoc-llms` - What is LLMs documentation (llmstxt.org)

- `miamcpdoc-creative` - Creative Frameworks documentation (RISE, Narrative Remixing, Creative Orientation)

Creative Frameworks Server (miamcpdoc-creative)

The miamcpdoc-creative command provides access to comprehensive creative development frameworks including:

- Creative Orientation Framework - Proactive manifestation vs reactive elimination approaches

- Narrative Remixing Framework - Story transformation across domains while preserving emotional architecture

- RISE Framework - Creative-oriented reverse engineering methodology

- Non-Creative Orientation Conversion - Approaches for transforming reactive patterns

Usage

# Run the creative frameworks MCP server

miamcpdoc-creative

# Or use with uvx

uvx --from miamcpdoc miamcpdoc-creative

Available Documentation Sources

When connected to an MCP client, the server provides access to:

- `CreativeOrientation` - Core creative vs reactive principles

- `NarrativeRemixing` - Contextual transposition and story transformation

- `RISEFramework` - Reverse engineering for creative archaeology

- `NonCreativeOrientationApproach` - Converting reactive approaches to creative ones

Example MCP Configuration

{

"mcpServers": {

"creative-frameworks": {

"command": "uvx",

"args": [

"--from",

"miamcpdoc",

"miamcpdoc-creative"

]

}

}

}

Other Specialized Servers

AI SDK Server (miamcpdoc-aisdk)

Provides access to Vercel AI SDK documentation for building AI-powered applications.

Usage:

miamcpdoc-aisdk

MCP Configuration:

{

"mcpServers": {

"ai-sdk-docs": {

"command": "uvx",

"args": ["--from", "miamcpdoc", "miamcpdoc-aisdk"]

}

}

}

Hugging Face Server (miamcpdoc-huggingface)

Comprehensive access to Hugging Face ecosystem documentation including Transformers, Diffusers, Accelerate, Hub, and Python Hub.

Usage:

miamcpdoc-huggingface

MCP Configuration:

{

"mcpServers": {

"huggingface-docs": {

"command": "uvx",

"args": ["--from", "miamcpdoc", "miamcpdoc-huggingface"]

}

}

}

LangGraph Server (miamcpdoc-langgraph)

Access to both LangGraph and LangChain Python documentation for building agentic applications.

Usage:

miamcpdoc-langgraph

MCP Configuration:

{

"mcpServers": {

"langgraph-docs": {

"command": "uvx",

"args": ["--from", "miamcpdoc", "miamcpdoc-langgraph"]

}

}

}

LLMs Information Server (miamcpdoc-llms)

Provides access to foundational information about LLMs and the llms.txt standard.

Usage:

miamcpdoc-llms

MCP Configuration:

{

"mcpServers": {

"llms-info": {

"command": "uvx",

"args": ["--from", "miamcpdoc", "miamcpdoc-llms"]

}

}

}
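The specialized server configurations above all follow the same shape, so they can be generated rather than copied by hand. The sketch below builds one combined `mcpServers` mapping. The helper name `build_mcp_config` is hypothetical, and the server names and commands are taken from the sections above.

```python
import json

# Server names and commands taken from the sections above.
SPECIALIZED = {
    "creative-frameworks": "miamcpdoc-creative",
    "ai-sdk-docs": "miamcpdoc-aisdk",
    "huggingface-docs": "miamcpdoc-huggingface",
    "langgraph-docs": "miamcpdoc-langgraph",
    "llms-info": "miamcpdoc-llms",
}


def build_mcp_config(servers):
    """Build an mcpServers mapping that launches each command through uvx."""
    return {
        "mcpServers": {
            name: {"command": "uvx", "args": ["--from", "miamcpdoc", command]}
            for name, command in servers.items()
        }
    }


config = build_mcp_config(SPECIALIZED)
print(json.dumps(config, indent=2))
```

Passing a subset of `SPECIALIZED` yields a config with only the servers you want registered.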

Command-line Interface

The miamcpdoc command provides a simple CLI for launching the documentation server.

You can specify documentation sources in three ways, and these can be combined:

- Using a YAML config file:

- This will load the LangGraph Python documentation from the `sample_config.yaml` file in this repo.

miamcpdoc --yaml sample_config.yaml

- Using a JSON config file:

- This will load the LangGraph Python documentation from the `sample_config.json` file in this repo.

miamcpdoc --json sample_config.json

- Directly specifying llms.txt URLs with optional names:

- URLs can be specified either as plain URLs or with optional names using the format `name:url`.

- You can specify multiple URLs by using the `--urls` parameter multiple times.

- This is how we loaded `llms.txt` for the MCP server above.

miamcpdoc --urls LangGraph:https://langchain-ai.github.io/langgraph/llms.txt --urls LangChain:https://python.langchain.com/llms.txt
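The `name:url` format needs a little care, because the URL itself contains colons. The following is a sketch of one way to split such values, assuming names never start with `http://` or `https://`. The function `parse_url_entry` is hypothetical and is not taken from the miamcpdoc source.

```python
def parse_url_entry(entry: str) -> dict:
    """Split a --urls value into a source dict with an optional name."""
    # A plain URL starts with its scheme, so it has no name prefix.
    if entry.startswith(("http://", "https://")):
        return {"llms_txt": entry}
    # Otherwise split on the first colon only; the URL contains more colons.
    name, sep, url = entry.partition(":")
    if not sep or not url:
        # No usable colon: treat the whole value as the location.
        return {"llms_txt": entry}
    return {"name": name, "llms_txt": url}


named = parse_url_entry("LangGraph:https://langchain-ai.github.io/langgraph/llms.txt")
plain = parse_url_entry("https://python.langchain.com/llms.txt")
print(named)
print(plain)
```

The resulting dictionaries use the same `name` and `llms_txt` keys as the YAML and JSON configuration entries. Note that this simplified split would misread a Windows path such as `C:\docs\llms.txt` as a named entry.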

You can also combine these methods to merge documentation sources:

miamcpdoc --yaml sample_config.yaml --json sample_config.json --urls LangGraph:https://langchain-ai.github.io/langgraph/llms.txt --urls LangChain:https://python.langchain.com/llms.txt

Additional Options

- `--follow-redirects`: Follow HTTP redirects (defaults to False)

- `--timeout SECONDS`: HTTP request timeout in seconds (defaults to 10.0)

Example with additional options:

miamcpdoc --yaml sample_config.yaml --follow-redirects --timeout 15

This will load the LangGraph Python documentation with a 15-second timeout and follow any HTTP redirects if necessary.

Configuration Format

Both YAML and JSON configuration files should contain a list of documentation sources.

Each source must include an llms_txt URL and can optionally include a name:

YAML Configuration Example (sample_config.yaml)

# Sample configuration for miamcpdoc server

# Each entry must have a llms_txt URL and optionally a name

- name: LangGraph Python

llms_txt: https://langchain-ai.github.io/langgraph/llms.txt

JSON Configuration Example (sample_config.json)

[

{

"name": "LangGraph Python",

"llms_txt": "https://langchain-ai.github.io/langgraph/llms.txt"

}

]
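Before handing a config file to the server, it can help to check it against the rules stated above: the file holds a list, and every entry carries an `llms_txt` value. This is a minimal sketch using only the standard library. The function `load_sources` and its error messages are assumptions for illustration and say nothing about how miamcpdoc validates its input.

```python
import json


def load_sources(text: str) -> list[dict]:
    """Parse a JSON config and check each entry against the rules above."""
    sources = json.loads(text)
    if not isinstance(sources, list):
        raise ValueError("config must be a list of documentation sources")
    for index, source in enumerate(sources):
        if not isinstance(source, dict) or not source.get("llms_txt"):
            raise ValueError(f"entry {index} is missing the required llms_txt field")
    return sources


SAMPLE = (
    '[{"name": "LangGraph Python", '
    '"llms_txt": "https://langchain-ai.github.io/langgraph/llms.txt"}]'
)
sources = load_sources(SAMPLE)
print(sources[0]["llms_txt"])

try:
    load_sources('[{"name": "No URL"}]')
except ValueError as error:
    print(error)
```

The second call shows the failure path for an entry that omits the required URL.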

Programmatic Usage

from miamcpdoc.main import create_server

# Create a server with documentation sources

server = create_server(

[

{

"name": "LangGraph Python",

"llms_txt": "https://langchain-ai.github.io/langgraph/llms.txt",

},

# You can add multiple documentation sources

# {

# "name": "Another Documentation",

# "llms_txt": "https://example.com/llms.txt",

# },

],

follow_redirects=True,

timeout=15.0,

)

# Run the server

server.run(transport="stdio")
