Zero-server, in-process agentic memory system for LLM agents — Zvec + SQLite + LangGraph


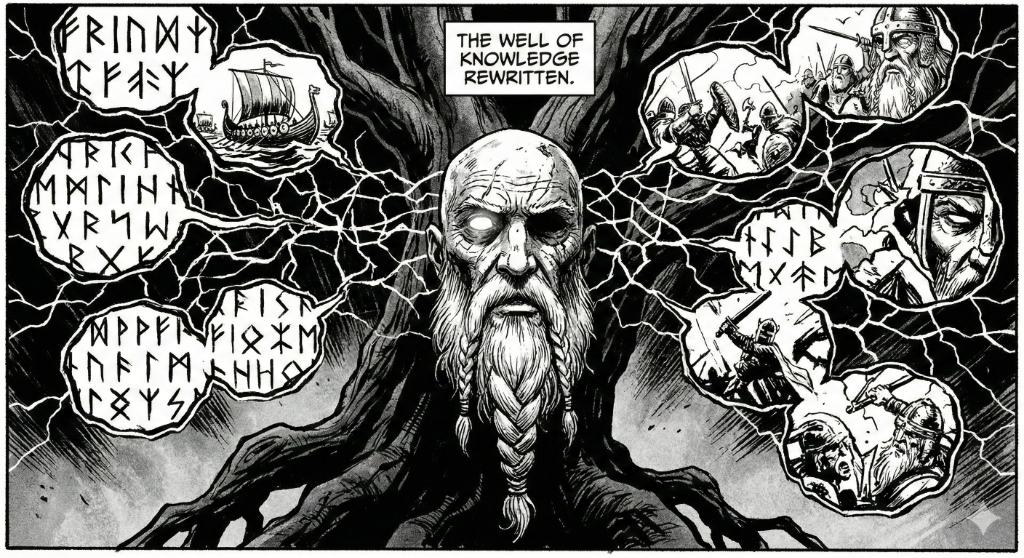

Mimir

The Well of Knowledge, Rewritten.

A zero-server, in-process agentic memory system for LLM agents.

📚 Prospectus | 🐍 PyPI | 📦 npm | 🏗️ Architecture | ⚡ Quickstart

Mimir is an open-source, in-process memory system for AI agents — combining dense vector search, bitemporal knowledge graphs, and autonomous tool-calling into a single Python process. Built on Zvec (Alibaba's battle-tested vector engine) and SQLite, it delivers production-grade semantic memory with temporal reasoning, zero infrastructure, and minimal setup.

💫 Features

- Bitemporal Memory: Facts are never deleted. Old records are time-capped, preserving full history. Ask "Where does John live?" and "Where did John live last month?" from the same dataset.

- In-Process Vector Search: Zvec runs as a C-extension inside your Python process. Sub-millisecond search, zero network hops.

- Fully Local: Embeddings via HuggingFace BGE run on CPU. Storage is file-based. No cloud, no Docker, no external databases.

- Agent-Ready: LangGraph state graph with `archive_memory` and `search_memory` tools, ready to bind to any OpenAI-compatible LLM.

- REST API: Built-in FastAPI server for JS/TS and cross-language access via a single `mimir-server` command.

- Runs Anywhere: As an in-process library, Mimir runs wherever your code runs: notebooks, servers, CLI tools, or edge devices.
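The time-capping idea behind Bitemporal Memory can be sketched with nothing but the standard-library `sqlite3` module. This is a minimal illustration, not Mimir's actual schema: the `facts` table, its columns other than `valid_from` / `valid_to`, and the helper names `archive_fact` and `fact_as_of` are all assumptions made for the example, and only the valid-time interval is modelled.

```python
import sqlite3

# Hypothetical schema: table and helper names are illustrative, not Mimir's.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE facts (
        subject    TEXT NOT NULL,
        relation   TEXT NOT NULL,
        target     TEXT NOT NULL,
        valid_from TEXT NOT NULL,   -- ISO-8601 timestamp
        valid_to   TEXT             -- NULL means "still current"
    )
    """
)


def archive_fact(subject, relation, target, at):
    """Cap the current fact (if any) at `at`, then insert the new one."""
    conn.execute(
        "UPDATE facts SET valid_to = ? "
        "WHERE subject = ? AND relation = ? AND valid_to IS NULL",
        (at, subject, relation),
    )
    conn.execute(
        "INSERT INTO facts VALUES (?, ?, ?, ?, NULL)",
        (subject, relation, target, at),
    )


def fact_as_of(subject, relation, at):
    """Return the target that was valid at time `at`, or None."""
    row = conn.execute(
        "SELECT target FROM facts "
        "WHERE subject = ? AND relation = ? AND valid_from <= ? "
        "AND (valid_to IS NULL OR valid_to > ?)",
        (subject, relation, at, at),
    ).fetchone()
    return row[0] if row else None


# Nothing is deleted: the Chennai row is capped when London is archived.
archive_fact("John", "lives_in", "Chennai", "2024-01-01T00:00:00")
archive_fact("John", "lives_in", "London", "2024-06-01T00:00:00")

print(fact_as_of("John", "lives_in", "2024-03-15T00:00:00"))  # Chennai
print(fact_as_of("John", "lives_in", "2024-07-01T00:00:00"))  # London
```

Because old rows are capped rather than removed, the same table answers both the "now" question and the "last month" question; only the timestamp passed to the query changes.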

📦 Installation

Python

```bash
pip install mimir-memory
```

With Zvec (requires Python 3.10–3.12):

```bash
# Quotes keep shells such as zsh from treating the brackets as a glob
pip install "mimir-memory[zvec]"
```

With REST server:

```bash
# Quotes keep shells such as zsh from treating the brackets as a glob
pip install "mimir-memory[server]"
```

Node.js

```bash
npm install mimir-memory
```

Note: The npm package is a TypeScript SDK that connects to the Mimir REST server. Start the server first with `mimir-server`.

✅ Supported Platforms

- macOS (ARM64 — Apple Silicon optimized)

- Linux (x86_64, ARM64)

⚡ One-Minute Example

Python (in-process)

```python
from mimir import MimirStorage, create_agent
from langchain_core.messages import HumanMessage

# Initialize storage (creates ./mimir_data with Zvec + SQLite)
storage = MimirStorage()

# Create a LangGraph agent with memory tools bound
graph = create_agent(storage, api_key="sk-...", base_url="https://...")

# Chat: the agent autonomously archives and retrieves memories
result = graph.invoke({"messages": [
    HumanMessage(content="My name is John and I live in Chennai.")
]})

print(result["messages"][-1].content)
```

Node.js / TypeScript

```typescript
import { Mimir } from "mimir-memory";

const mimir = new Mimir(); // connects to http://localhost:8484

await mimir.archive({
  content: "John lives in London",
  source: "user",
  relation: "lives_in",
  target: "London",
  scope: "user",
});

const result = await mimir.search({ query: "Where does John live?" });
console.log(result);
```

🏗️ Architecture

```text
┌──────────────────────────────────────────────────┐
│  L1 & L2: Tools (archive_memory, search_memory)  │
├──────────┬───────────────────────────────────────┤
│          │  L3: Bitemporal Knowledge Engine      │
│  Zvec    │  ┌──────────────────────────────────┐ │
│  (dense  │  │ SQLite (bitemporal_graph)        │ │
│  vectors)│  │ valid_from / valid_to timestamps │ │
│          │  └──────────────────────────────────┘ │
├──────────┴───────────────────────────────────────┤
│  L4: Procedural Optimizer (trajectory learning)  │
└──────────────────────────────────────────────────┘
```

| Layer | Component | Role |
|---|---|---|
| L1 & L2 | Tools | `archive_memory` and `search_memory`: the agent's interface to storage |
| L3 | Storage | Zvec (dense vectors) + SQLite (bitemporal graph edges) |
| L4 | Optimizer | Post-session trajectory analysis and rule extraction |
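To make the relationship between the tool layer and the vector store concrete, here is a toy, dependency-free stand-in. It is not how Mimir or Zvec work internally: a plain Python list replaces Zvec, a bag-of-words count replaces the BGE embedding, and the function signatures (including the `k` parameter) are assumptions chosen for the sketch. Only the tool names `archive_memory` and `search_memory` come from the table above.

```python
import math
from collections import Counter

# Stand-in for L3: a plain list instead of Zvec, and word counts instead
# of a BGE embedding. All signatures here are hypothetical.
_memories = []  # list of (text, vector) pairs


def _embed(text):
    """Toy embedding: lowercase word counts with punctuation stripped."""
    words = (w.strip(".,?!").lower() for w in text.split())
    return Counter(w for w in words if w)


def _cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def archive_memory(content):
    """Store the text together with its vector."""
    _memories.append((content, _embed(content)))


def search_memory(query, k=1):
    """Return the k stored texts most similar to the query."""
    q = _embed(query)
    ranked = sorted(_memories, key=lambda m: _cosine(q, m[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


archive_memory("John lives in London")
archive_memory("The deploy script runs every Friday")
print(search_memory("Where does John live?"))  # ['John lives in London']
```

The brute-force scan is linear in the number of memories; a real vector engine replaces it with an index, but the archive-then-rank flow the agent sees through its two tools has the same shape.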

📊 Stack

| Component | Technology |
|---|---|
| Vector Storage | Zvec v0.2.0 (Alibaba Proxima) |
| Graph/Relational | SQLite3 (Python stdlib) |
| Embeddings | BAAI/bge-small-en-v1.5 (384-dim, local, CPU) |
| Agent Framework | LangGraph + LangChain Core |
| REST Server | FastAPI + Uvicorn |
| LLM | Any OpenAI-compatible endpoint |

🤝 Community

- 📄 Full Prospectus — Architecture deep-dive, competitive analysis vs Letta/Mem0/Zep/LangMem, and Zvec integration details

- 🐛 Issues — Bug reports and feature requests

❤️ Contributing

We welcome contributions! Whether you're fixing a bug, adding a feature, or improving documentation — your help makes Mimir better for everyone.

📜 License
