Stop forcing LLMs to answer in one pass. Give them a runtime.

minRLM

Took a base model. Wrapped it in a tiny recursive loop: generate code - execute - refine - repeat.

Didn't change the model. Didn't add training. Didn't add data.

Just stopped forcing it to answer in one pass.

The performance jump is not subtle:

| | Vanilla (one-shot) | minRLM (recursive) |
|---|---|---|
| AIME 2025 | 0% | 96% |
| Sudoku Extreme | 0% | 80% |
| Overall (GPT-5.2) | 48.2% | 78.2% (+30pp) |
| Tokens used | 20,967 | 8,151 (3.6x less) |
| Cost | $7.92 | $2.86 (2.8x cheaper) |

6,600+ evaluations across 4 models and 13 tasks. Full blog post | Detailed results

Try it in 10 seconds

pip install minrlm

export OPENAI_API_KEY="sk-..."

# Analyze a file - data never enters the prompt

uvx minrlm "How many ERROR lines in the last hour?" ./server.log

# Pure computation - the REPL writes the algorithm

uvx minrlm "Return all primes up to 1,000,000, reversed."

# -> 78,498 primes in 6,258 tokens. Output: 616K chars. 25x savings.

# Pipe anything

cat huge_dataset.csv | uvx minrlm "Which product had the highest return rate?"

# Chain: solve a Sudoku, then pipe the solution to verify it

uvx minrlm -s "Solve this Sudoku:
..3|.1.|...
.4.|...|8..
...|..6|.2.
---+---+---
.8.|.5.|..1
...|...|...
5..|.8.|.6.
---+---+---
.7.|6..|...
..2|...|.5.
...|.3.|9.." \
  | uvx minrlm -s 'Verify this sudoku board, is it valid? return {"board":str, "valid": bool}'
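For the pure-computation prompt above, the program the model writes in the REPL would plausibly be a sieve. The following is only a sketch of that kind of code, not output captured from minRLM; `primes_up_to` is an illustrative name.

```python
import math


def primes_up_to(n: int) -> list[int]:
    """Sieve of Eratosthenes: return all primes <= n in ascending order."""
    if n < 2:
        return []
    is_prime = bytearray([1]) * (n + 1)
    is_prime[0] = is_prime[1] = 0
    for p in range(2, math.isqrt(n) + 1):
        if is_prime[p]:
            # Cross out every multiple of p, starting at p * p.
            is_prime[p * p :: p] = bytearray(len(range(p * p, n + 1, p)))
    return [i for i, flag in enumerate(is_prime) if flag]


primes = primes_up_to(1_000_000)
reversed_primes = primes[::-1]
print(len(reversed_primes))  # 78498, the count quoted above
```

Only this short program and its result need to pass through the model; the full list of primes is produced by the interpreter.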

from minrlm import RLM

rlm = RLM(model="gpt-5-mini")

# 50MB CSV? Same cost as 5KB. Data never enters the prompt.

answer = rlm.completion(
    task="Which product had the highest return rate in Q3?",
    context=open("q3_returns.csv").read(),
)

How it works

Standard LLM:

[System prompt] + [500K tokens of raw context] + [Question]

= Expensive. Slow. Accuracy degrades with length.

minRLM:

input_0 = "<500K chars in REPL memory>" # never in prompt

LLM writes: errors = [l for l in input_0.splitlines() if "ERROR" in l]

FINAL(len(errors))

= Code runs. Answer returned. ~4K tokens total.

The model writes Python to query the data. Attention runs only on the results. A 7M-character document costs the same as a 7K one.
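To make the flat-cost claim concrete, here is a minimal sketch of a prompt builder that shows the model only a fixed-size preview of the context. The names `preview` and `build_prompt`, the 200-character width, and the prompt wording are assumptions for illustration, not minRLM's actual prompt.

```python
def preview(text: str, width: int = 200) -> str:
    """Return a fixed-size head/mid/tail preview of `text`."""
    if len(text) <= 3 * width:
        return text
    mid = len(text) // 2
    parts = [
        text[:width],
        text[mid - width // 2 : mid + width // 2],
        text[-width:],
    ]
    return "\n...\n".join(parts)


def build_prompt(task: str, context: str) -> str:
    """Build a prompt whose size does not grow with the context."""
    return (
        f"Task: {task}\n"
        f"input_0 holds {len(context)} characters. Preview:\n"
        f"{preview(context)}\n"
        "Write Python that reads input_0 and calls FINAL(answer)."
    )


small = build_prompt("Count ERROR lines", "x" * 7_000)
large = build_prompt("Count ERROR lines", "x" * 7_000_000)
print(len(small), len(large))
```

The two prompts differ only by the extra digits in the reported character count, which is the sense in which a 7M-character input costs about the same as a 7K one.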

Not ReAct. One REPL, 1-2 iterations, no growing context. Every step is Python you can read, rerun, and debug.
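The generate, execute, refine loop can be sketched in a few lines. This is a simplified illustration under stated assumptions, not minRLM's source: `run_rlm`, `_Final`, and `fake_model` are hypothetical names, the model call is replaced by a stub, and the code runs with plain `exec` rather than in a sandbox.

```python
from typing import Callable


class _Final(Exception):
    """Raised by FINAL() to stop execution and carry the answer out."""

    def __init__(self, answer: object) -> None:
        self.answer = answer


def FINAL(answer: object) -> None:
    raise _Final(answer)


def run_rlm(task: str, context: str,
            write_code: Callable[[str, str], str],
            max_iterations: int = 2) -> object:
    """Ask `write_code` for Python, run it against `context`, retry on failure."""
    feedback = ""
    for _ in range(max_iterations):
        code = write_code(task, feedback)
        namespace = {"input_0": context, "FINAL": FINAL}
        try:
            exec(code, namespace)  # unsandboxed: acceptable only for trusted code
        except _Final as done:
            return done.answer
        except Exception as err:
            feedback = f"Previous attempt failed: {err!r}"
        else:
            feedback = "Previous attempt finished without calling FINAL()."
    return None


def fake_model(task: str, feedback: str) -> str:
    """Stand-in for the LLM call: a buggy first draft, then a fix."""
    if not feedback:
        return "FINAL(len(errors))"  # NameError: `errors` is undefined
    return (
        'errors = [l for l in input_0.splitlines() if "ERROR" in l]\n'
        "FINAL(len(errors))"
    )


log = "INFO ok\nERROR disk full\nINFO ok\nERROR timeout\n"
print(run_rlm("How many ERROR lines?", log, fake_model))  # 2
```

Only the short error message is fed back between iterations, so the conversation does not grow with the size of `input_0`.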

What makes it work

- Entropy profiling - zlib compression heatmap of the input. A needle in 7MB shows up as an entropy spike; the model skips straight to it

- Task routing - auto-detects structured data, MCQ, code retrieval, math, search & extract. Each gets a specialized code pattern

- Two-pass search - if the first pass returns "unknown", a second pass runs with keywords from first-pass evidence

- Sub-LLM delegation - outer model gathers evidence via search(), passes it to sub_llm(task, evidence) for focused reasoning

- Flat token cost - context never enters the conversation. Only the entropy map and a head/mid/tail preview do

- DockerREPL - every execution in a sandboxed container with seccomp. No network, no filesystem, stdlib only
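The entropy-profiling idea from the list above can be approximated with zlib alone. The sketch below is an assumption about how such a profile might be computed, not minRLM's implementation; `entropy_profile` and the 4096-byte chunk size are illustrative choices, and the haystack is synthetic.

```python
import random
import zlib


def entropy_profile(text: str, chunk_size: int = 4096) -> list[float]:
    """Compression ratio per chunk: low for repetitive text, higher for dense text."""
    data = text.encode("utf-8")
    ratios = []
    for start in range(0, len(data), chunk_size):
        chunk = data[start : start + chunk_size]
        ratios.append(len(zlib.compress(chunk)) / len(chunk))
    return ratios


# Repetitive filler with one high-entropy "needle" buried in the middle.
rng = random.Random(0)
filler = "INFO request served in 12ms\n" * 20_000
needle = "".join(rng.choices("0123456789abcdef", k=4096))
half = len(filler) // 2
haystack = filler[:half] + needle + filler[half:]

profile = entropy_profile(haystack)
spike = max(range(len(profile)), key=profile.__getitem__)
print(f"spike in chunk {spike} of {len(profile)}, near offset {spike * 4096}")
```

One caveat with this simple version: a very short trailing chunk can score above 1.0 because of zlib's fixed overhead, so a real profile would likely merge or skip tiny chunks.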

The scaling story

The REPL isn't a crutch for weak models - it's a lever that better models pull harder.

| Model | minRLM | Vanilla | Gap | Tasks won |
|---|---|---|---|---|
| GPT-5-nano (small) | 53.7% | 63.2% | -9.5 | 4/12 |
| GPT-5-mini (mid) | 72.7% | 69.5% | +3.2 | 7/12 |
| GPT-5.4-mini (mid, newer) | 69.5% | 47.2% | +22.3 | 8/12 |
| GPT-5.2 (frontier) | 78.2% | 48.2% | +30.0 | 11/12 |

Small model? Recursion adds overhead. Frontier model? Recursion dominates.

The gap isn't model size. It's the execution model.
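The Gap column is simply minRLM accuracy minus vanilla accuracy, in percentage points. A short check using the numbers from the table (nothing here is new data):

```python
# (model, minRLM accuracy %, vanilla accuracy %) copied from the table above
results = [
    ("GPT-5-nano", 53.7, 63.2),
    ("GPT-5-mini", 72.7, 69.5),
    ("GPT-5.4-mini", 69.5, 47.2),
    ("GPT-5.2", 78.2, 48.2),
]

gaps = {model: round(rlm - vanilla, 1) for model, rlm, vanilla in results}
for model, gap in gaps.items():
    print(f"{model:<14}{gap:+.1f} pp")
```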

When to use it (and when not to)

Use it when:

- Large context (docs, logs, CSV, JSON) - cost stays flat as data grows

- You want debuggable reasoning - every step is readable Python, not hidden attention

- Token efficiency matters - 3.6x fewer tokens than comparable approaches

Skip it when:

- Short context (<8K tokens) - a direct call is simpler

- Code retrieval (RepoQA) - the one task where vanilla wins everywhere

- You need third-party packages - the sandbox is stdlib-only

REPL tools

| Function | What it does |
|---|---|
| input_0 | Your context data (string, never in the prompt) |
| search(text, pattern) | Substring search with context windows |
| sub_llm(task, context) | Recursive LLM call on a sub-chunk |
| FINAL(answer) | Return answer and stop |
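As a rough picture of what "substring search with context windows" means, here is a hedged sketch of a function with the same shape as search(text, pattern). The `window` and `max_hits` parameters and the return type are assumptions; the real helper may differ.

```python
def search(text: str, pattern: str, window: int = 80, max_hits: int = 20) -> list[str]:
    """Return up to `max_hits` snippets of `text` around each occurrence of `pattern`."""
    if not pattern:
        return []
    hits = []
    start = text.find(pattern)
    while start != -1 and len(hits) < max_hits:
        lo = max(0, start - window)
        hi = min(len(text), start + len(pattern) + window)
        hits.append(text[lo:hi])
        # Continue after this match so overlapping matches are not repeated.
        start = text.find(pattern, start + len(pattern))
    return hits


doc = "a" * 500 + " the access code is 7421 " + "b" * 500
print(search(doc, "access code", window=15))
```

Capping both the window and the number of hits keeps the evidence handed to the model, or to sub_llm, small regardless of how large the input is.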

Works with any OpenAI-compatible endpoint

# Local / self-hosted

rlm = RLM(model="llama-3.1-70b", base_url="http://localhost:8000/v1")

# Hugging Face

from openai import OpenAI

hf = OpenAI(base_url="https://router.huggingface.co/v1", api_key="hf_...")

rlm = RLM(model="openai/gpt-oss-120b", client=hf)

Works with: OpenAI, Hugging Face, Anthropic (via proxy), vLLM, Ollama, LiteLLM, or anything OpenAI-compatible.

More ways to run

Visualizer (Gradio UI)

git clone https://github.com/avilum/minrlm && cd minrlm

uv sync --extra visualizer

uv run python examples/visualizer.py # http://localhost:7860

OpenCode integration

1. Start the proxy:

uv run --with ".[proxy]" examples/proxy.py

# RLM Proxy initialized | model=gpt-5-mini | docker=False

# Uvicorn running on http://0.0.0.0:8000

2. Config: in opencode/opencode.json, set provider.minrlm.api to http://localhost:8000/v1.

3. Run:

OPENCODE_CONFIG=opencode.json opencode run "First prime after 1 million"

# > 1000003

Docker sandbox

LLM-generated code runs in isolated Docker containers. No network, read-only filesystem, memory-capped, seccomp-filtered.

rlm = RLM(model="gpt-5-mini", use_docker=True, docker_memory="256m")
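The isolation properties listed above map onto standard `docker run` flags. The sketch below only builds such a command and does not run it; it is not how minRLM invokes Docker, and the function name, image tag, and `seccomp.json` path are hypothetical.

```python
import shlex


def docker_run_command(code: str, memory: str = "256m",
                       image: str = "python:3.12-slim",
                       seccomp_profile: str = "seccomp.json") -> list[str]:
    """Build (but do not run) a locked-down `docker run` invocation."""
    return [
        "docker", "run", "--rm",
        "--network", "none",                              # no network access
        "--read-only",                                    # read-only root filesystem
        "--memory", memory,                               # hard memory cap
        "--security-opt", f"seccomp={seccomp_profile}",   # syscall filter profile
        image, "python", "-c", code,
    ]


cmd = docker_run_command("print(sum(range(10)))")
print(shlex.join(cmd))
```

Passing the command as a list (for example to `subprocess.run`) avoids shell quoting problems when the generated code contains quotes or newlines.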

Run the benchmarks yourself

git clone https://github.com/avilum/minrlm && cd minrlm

uv sync --extra eval

# Smoke test

uv run python eval/quickstart.py

# Full benchmark (reproduces the tables above)

uv run python eval/run.py \
  --tasks all \
  --runners minrlm-reasoning,vanilla,official \
  --runs 50 --parallel 12 --task-parallel 12 \
  --output-dir logs/my_eval

Full results: eval/README.md

Examples

uv run python examples/minimal.py # vanilla vs RLM side-by-side

uv run python examples/advanced_usage.py # search, sub_llm, callbacks

uv run python examples/visualizer.py # Gradio UI

uv run uvicorn examples.proxy:app --port 8000 # OpenAI-compatible proxy

Why this matters

Context window rot is real - model accuracy degrades as input grows, even when the answer is right there. Bigger windows aren't the fix. Less input, better targeted, is.

The same pattern is showing up everywhere: Anthropic's web search tool writes code to filter results, MCP standardizes code execution access, smolagents goes further. They all converge on the same idea: let the model use code to work with data instead of attending to all of it.

Feels less like "prompting" and more like giving the model a runtime.

Future work

- More models - Claude Opus 4.6, Gemini 2.5, open-weight models. Does the scaling trend hold across providers?

- Agentic pipelines - using the RLM pattern as a retrieval step inside multi-step agent workflows

- More tasks - stress-testing edge cases and domains where the approach might break

Contributions welcome. Open an issue or PR.

Credits

Built by Avi Lumelsky. Independent implementation - not a fork.

The RLM concept comes from Zhang, Kraska, and Khattab (2025). Official implementation: github.com/alexzhang13/rlm.

Citation

@misc{zhang2026recursivelanguagemodels,
  title={Recursive Language Models},
  author={Alex L. Zhang and Tim Kraska and Omar Khattab},
  year={2026},
  eprint={2512.24601},
  archivePrefix={arXiv},
  primaryClass={cs.AI},
  url={https://arxiv.org/abs/2512.24601},
}

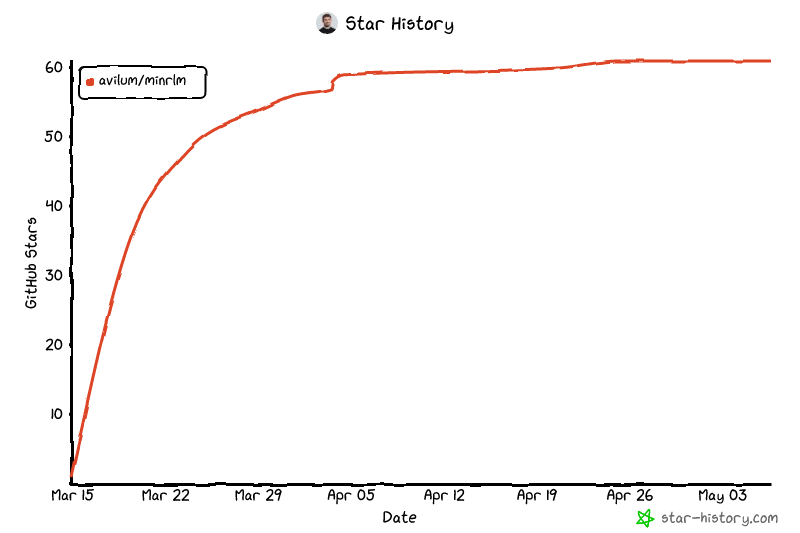

Star History

License

MIT
