MOFTransformer

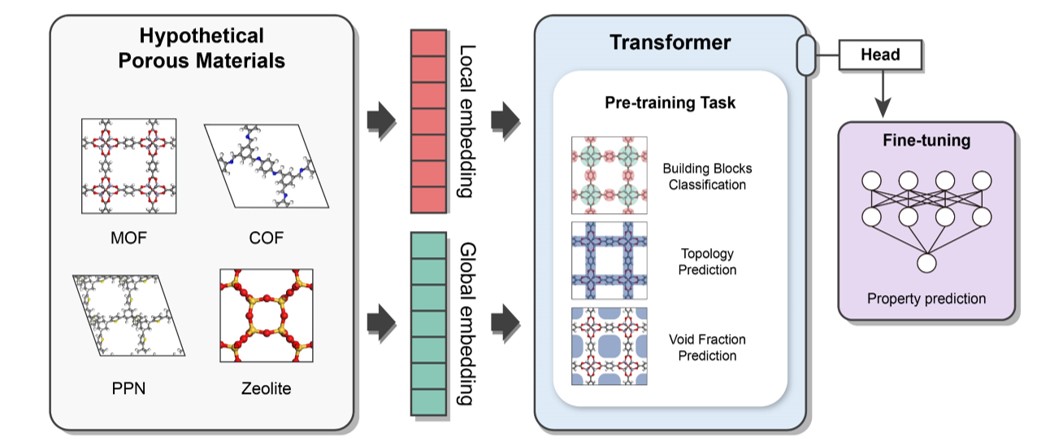

This package provides universal transfer learning for metal-organic frameworks (MOFs) to construct structure-property relationships. MOFTransformer achieves state-of-the-art performance in predicting a variety of properties, including gas adsorption, diffusion, and electronic properties, regardless of gas type. Beyond its universal transfer learning capabilities, it provides feature importance analysis based on its attention scores to capture chemical intuition.

Install

OS and hardware requirements

The development version of the package has been tested on the following systems:

Linux: Ubuntu 20.04, 22.04

For optimal performance, we recommend running on GPUs.

Dependencies

python>=3.8

MOFTransformer is built on PyTorch, so please install PyTorch (>= 1.10.0) according to your environment.
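The two minimum versions above can be checked before installing. The following is a minimal sketch using only the standard library; the helper names (`parse_version`, `meets_minimum`, `check_environment`) are hypothetical and not part of MOFTransformer.

```python
import sys
from importlib import metadata

MIN_PYTHON = (3, 8)      # from "python>=3.8"
MIN_TORCH = (1, 10, 0)   # from "pytorch (>= 1.10.0)"


def parse_version(text):
    """Turn '1.13.1+cu117' into (1, 13, 1), ignoring local and pre-release suffixes."""
    parts = []
    for piece in text.split("+")[0].split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
    return tuple(parts)


def meets_minimum(version_text, minimum):
    """True if the parsed version is at least `minimum` (short versions are zero-padded)."""
    parsed = parse_version(version_text)
    parsed += (0,) * (len(minimum) - len(parsed))
    return parsed >= minimum


def check_environment():
    """Report whether the running Python and the installed PyTorch meet the minimums."""
    report = {"python": sys.version_info[:2] >= MIN_PYTHON}
    try:
        report["torch"] = meets_minimum(metadata.version("torch"), MIN_TORCH)
    except metadata.PackageNotFoundError:
        report["torch"] = False  # PyTorch is not installed in this environment
    return report


print(check_environment())
```

A `False` entry for `torch` means PyTorch is either missing or older than 1.10.0. The sketch does not check GPU availability.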

Installation using pip

$ pip install moftransformer

Installation should take about 50 seconds.

Download the pretrained model (ckpt file)

- You can download the model pretrained on 1 million hMOFs from figshare, or from the command line:

$ moftransformer download pretrain_model

(Optional) Download datasets for CoREMOF and QMOF

- We provide the MOFTransformer datasets (i.e., atom-based graph embeddings and energy-grid embeddings) for CoREMOF and QMOF:

$ moftransformer download coremof

$ moftransformer download qmof
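The download commands above can also be scripted. This is a minimal sketch that only assembles and optionally runs the CLI calls shown in this README. The helper names are hypothetical, and passing `-o` to targets other than `finetuned_model` is an assumption.

```python
import shlex
import subprocess


def download_command(target, out_dir=None):
    """Build the argument list for `moftransformer download <target>`."""
    command = ["moftransformer", "download", target]
    if out_dir is not None:
        command += ["-o", out_dir]  # assumption: -o is accepted for this target
    return command


def download_all(targets, out_dir=None, dry_run=True):
    """Run one download per target, or only print the commands when dry_run=True."""
    for target in targets:
        command = download_command(target, out_dir)
        if dry_run:
            print(shlex.join(command))
        else:
            subprocess.run(command, check=True)  # raises CalledProcessError on failure


download_all(["pretrain_model", "coremof", "qmof"])
```

With the default `dry_run=True` nothing is downloaded; set `dry_run=False` once the printed commands look right.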

Getting Started

- First, run prepare_data with the 10 CIF files in the moftransformer/examples/raw directory.

To run prepare_data, you need to install GRIDAY, which calculates the energy grids.

You can install GRIDAY from the command line:

$ moftransformer install-griday

Example of running prepare_data

from moftransformer.examples import example_path

from moftransformer.utils import prepare_data

# Get example path

root_cifs = example_path['root_cif']

root_dataset = example_path['root_dataset']

downstream = example_path['downstream']

train_fraction = 0.7

test_fraction = 0.2

# Run prepare data

prepare_data(root_cifs, root_dataset, downstream=downstream,

             train_fraction=train_fraction, test_fraction=test_fraction)
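To see what the two fractions mean for the 10 example CIFs, here is a small standalone sketch of a train/validation/test split. It is not the implementation used by prepare_data: the function `split_names`, the shuffling, and the assumption that the remainder becomes the validation set are all illustrative.

```python
import random


def split_names(names, train_fraction, test_fraction, seed=0):
    """Shuffle names and split them into train / validation / test lists."""
    if not 0 <= train_fraction + test_fraction <= 1:
        raise ValueError("train_fraction + test_fraction must lie in [0, 1]")
    shuffled = sorted(names)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    n_train = round(len(shuffled) * train_fraction)
    n_test = round(len(shuffled) * test_fraction)
    train = shuffled[:n_train]
    test = shuffled[n_train:n_train + n_test]
    val = shuffled[n_train + n_test:]  # assumption: the remainder is used for validation
    return {"train": train, "val": val, "test": test}


cifs = [f"mof_{i:02d}" for i in range(10)]  # placeholder names for 10 structures
splits = split_names(cifs, train_fraction=0.7, test_fraction=0.2)
print({name: len(members) for name, members in splits.items()})
```

With 10 structures, fractions of 0.7 and 0.2 give 7 training, 2 test, and 1 validation structure under these assumptions.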

- Fine-tune the pretrained MOFTransformer.

import moftransformer

from moftransformer.examples import example_path

# data root and downstream from example

root_dataset = example_path['root_dataset']

downstream = example_path['downstream']

log_dir = './logs/'

# kwargs (optional)

max_epochs = 10

batch_size = 8

moftransformer.run(root_dataset, downstream, log_dir=log_dir,

                   max_epochs=max_epochs, batch_size=batch_size)

This example should run in about 35 seconds.

- Visualize the feature importance analysis for the fine-tuned model.

Download the fine-tuned band gap model before visualizing:

$ moftransformer download finetuned_model -o ./examples

%matplotlib widget

from moftransformer.visualize import PatchVisualizer

from moftransformer.examples import visualize_example_path

model_path = "examples/finetuned_bandgap.ckpt" # or 'examples/finetuned_h2_uptake.ckpt'

data_path = visualize_example_path

cifname = 'MIBQAR01_FSR'

vis = PatchVisualizer.from_cifname(cifname, model_path, data_path)

vis.draw_graph() # or vis.draw_grid()

Architecture

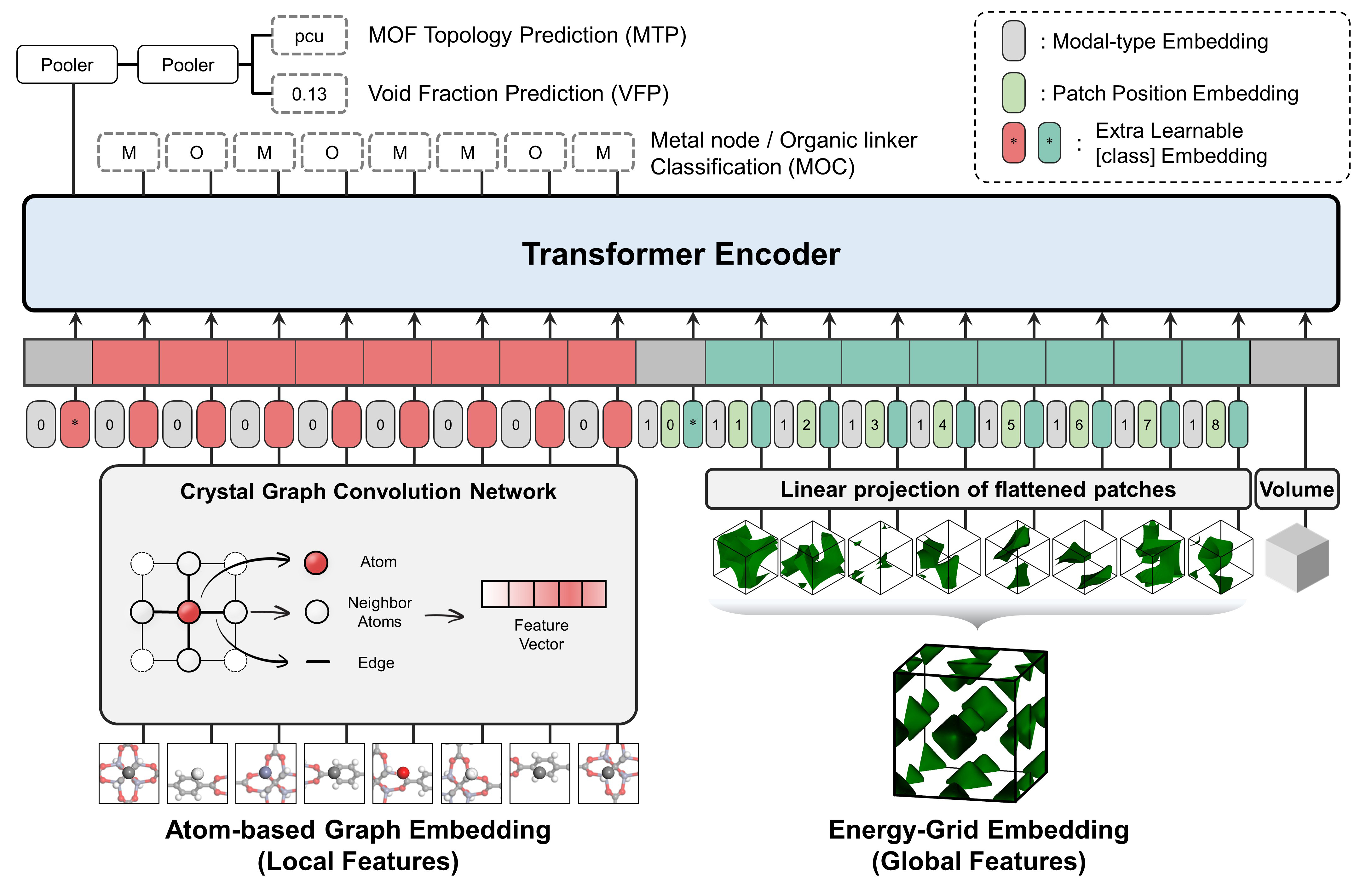

MOFTransformer is a multi-modal Transformer pre-trained on 1 million hypothetical MOFs so that it efficiently captures both local and global features of MOFs.

MOFTransformer takes two different representations as input:

- Atom-based Graph Embedding: CGCNN without the pooling layer -> local features

- Energy-grid Embedding: 1D flattened patches of the 3D energy grid -> global features
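The idea of flattening a 3D energy grid into 1D patches can be illustrated with NumPy. This is a sketch of the general patching technique, not the package's own code; the 30 x 30 x 30 grid, the patch size of 10, and the function name `grid_to_patches` are assumptions chosen for illustration.

```python
import numpy as np


def grid_to_patches(grid, patch_size):
    """Split a cubic 3D grid into non-overlapping cubic patches and flatten each one."""
    size = grid.shape[0]
    if grid.shape != (size, size, size) or size % patch_size != 0:
        raise ValueError("grid must be cubic and divisible by patch_size")
    n = size // patch_size  # number of patches along each axis
    blocks = grid.reshape(n, patch_size, n, patch_size, n, patch_size)
    # Move the three patch indices to the front, then flatten each patch to 1D.
    blocks = blocks.transpose(0, 2, 4, 1, 3, 5)
    return blocks.reshape(n ** 3, patch_size ** 3)


# Stand-in for an energy grid; real values would come from GRIDAY.
grid = np.arange(30 ** 3, dtype=np.float32).reshape(30, 30, 30)
patches = grid_to_patches(grid, patch_size=10)
print(patches.shape)  # (27, 1000)
```

Each row is one patch, so a Transformer can treat the rows as a sequence of tokens covering the whole unit cell, which is why this representation carries global information.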

Feature Importance Analysis

You can easily visualize the feature importance of the atom-based graph embeddings and energy-grid embeddings.

%matplotlib widget

from moftransformer.visualize import PatchVisualizer

model_path = "examples/finetuned_bandgap.ckpt" # or 'examples/finetuned_h2_uptake.ckpt'

data_path = 'examples/visualize/dataset/'

cifname = 'MIBQAR01_FSR'

vis = PatchVisualizer.from_cifname(cifname, model_path, data_path)

vis.draw_graph()

vis.draw_grid()

Universal Transfer Learning

| Property | MOFTransformer | Original Paper | Number of data points | Remarks | Reference |
|---|---|---|---|---|---|
| N2 uptake | R2: 0.78 | R2: 0.71 | 5,286 | CoRE MOF | 1 |
| O2 uptake | R2: 0.83 | R2: 0.74 | 5,286 | CoRE MOF | 1 |
| N2 diffusivity | R2: 0.77 | R2: 0.76 | 5,286 | CoRE MOF | 1 |
| O2 diffusivity | R2: 0.78 | R2: 0.74 | 5,286 | CoRE MOF | 1 |
| CO2 Henry coefficient | MAE: 0.30 | MAE: 0.42 | 8,183 | CoRE MOF | 2 |
| Solvent removal stability classification | ACC: 0.76 | ACC: 0.76 | 2,148 | Text-mining data | 3 |
| Thermal stability regression | R2: 0.44 | R2: 0.46 | 3,098 | Text-mining data | 3 |
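The table reports three metrics: R2 (coefficient of determination), MAE (mean absolute error), and ACC (classification accuracy). The sketch below shows their standard textbook definitions in plain Python. Whether the cited papers computed them in exactly this way is an assumption, and the sample numbers are made up for illustration.

```python
def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R2 is undefined when all true values are identical")
    return 1 - ss_res / ss_tot


def mean_absolute_error(y_true, y_pred):
    """Average of the absolute differences between targets and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def accuracy(labels, predictions):
    """Fraction of predictions that match the labels."""
    return sum(l == p for l, p in zip(labels, predictions)) / len(labels)


y_true = [1.0, 2.0, 3.0, 4.0]   # illustrative values only
y_pred = [1.5, 2.0, 2.5, 4.0]
print(r2_score(y_true, y_pred), mean_absolute_error(y_true, y_pred))
print(accuracy([1, 0, 1, 1], [1, 0, 0, 1]))
```

Higher is better for R2 and ACC, while lower is better for MAE, which is why the CO2 Henry coefficient row favors the smaller value.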

References

1. Prediction of O2/N2 Selectivity in Metal−Organic Frameworks via High-Throughput Computational Screening and Machine Learning

2. Understanding the diversity of the metal-organic framework ecosystem

3. Using Machine Learning and Data Mining to Leverage Community Knowledge for the Engineering of Stable Metal–Organic Frameworks

Citation

To cite MOFTransformer or the pre-training and fine-tuning datasets, please refer to the following publication:

Y. Kang, H. Park, B. Smit, J. Kim. "A multi-modal pre-training transformer for universal transfer learning in metal–organic frameworks", Nature Machine Intelligence, (2023) DOI: https://doi.org/10.1038/s42256-023-00628-2

