Model Serving made Efficient in the Cloud


Introduction

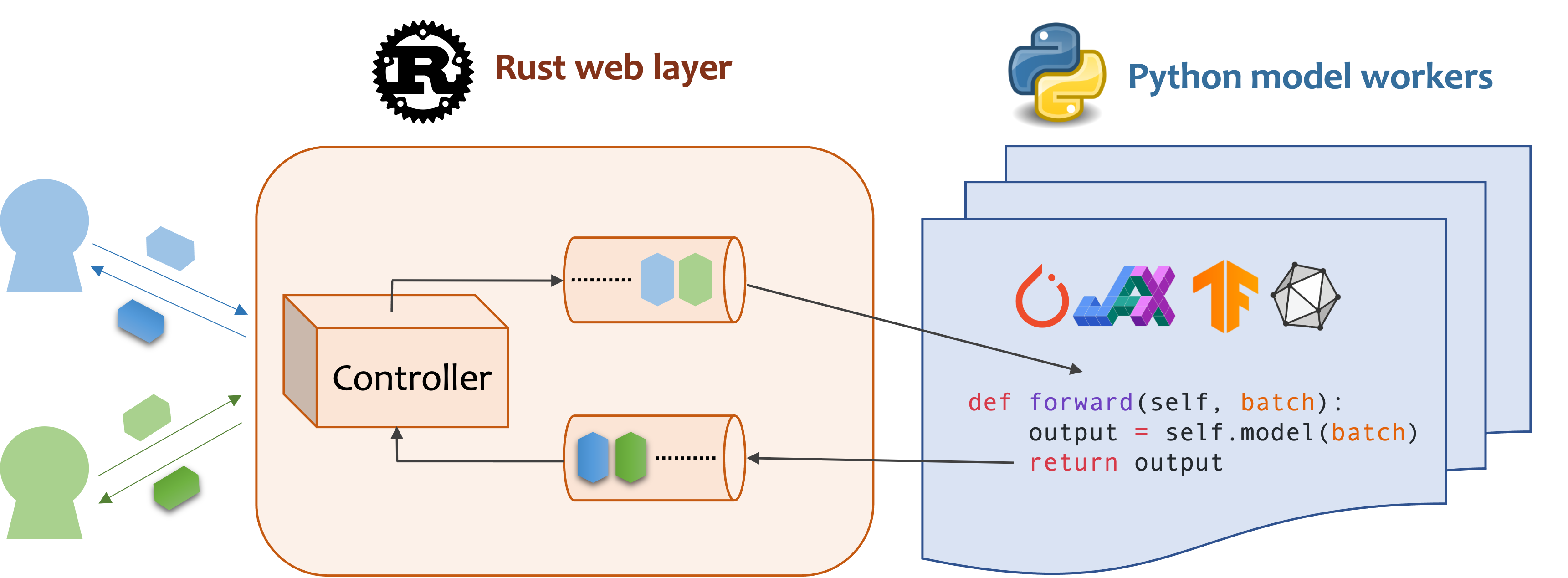

Mosec is a high-performance and flexible model serving framework for building ML model-enabled backends and microservices. It bridges the gap between any machine learning model you have just trained and an efficient online service API.

- Highly performant: web layer and task coordination built with Rust 🦀, which offers blazing speed in addition to efficient CPU utilization powered by async I/O

- Ease of use: user interface purely in Python 🐍, by which users can serve their models in an ML framework-agnostic manner using the same code as they do for offline testing

- Dynamic batching: aggregate requests from different users for batched inference and distribute results back

- Pipelined stages: spawn multiple processes for pipelined stages to handle CPU/GPU/IO mixed workloads

- Cloud friendly: designed to run in the cloud, with model warmup, graceful shutdown, and Prometheus monitoring metrics, easily managed by Kubernetes or any other container orchestration system

- Do one thing well: focus on the online serving part, so users can concentrate on model optimization and business logic

Installation

Mosec requires Python 3.7 or above. Install the latest PyPI package for Linux or macOS with:

```shell
pip install -U mosec
# or install with conda
conda install conda-forge::mosec
# or install with pixi
pixi add mosec
```

To build from the source code, install Rust and run the following command:

```shell
make package
```

You will get a mosec wheel file in the dist folder.

Usage

We demonstrate how Mosec can help you easily host a pre-trained stable diffusion model as a service. You need to install diffusers and transformers as prerequisites:

```shell
pip install --upgrade diffusers[torch] transformers
```

Write the server


Firstly, we import the libraries and set up a basic logger to better observe what happens.

```python
from io import BytesIO
from typing import List

import torch  # type: ignore
from diffusers import StableDiffusionPipeline  # type: ignore

from mosec import Server, Worker, get_logger
from mosec.mixin import MsgpackMixin

logger = get_logger()
```

Then, we build an API for clients to query a text prompt and obtain an image based on the stable-diffusion-v1-5 model in just 3 steps.

- Define your service as a class which inherits `mosec.Worker`. Here we also inherit `MsgpackMixin` to employ the msgpack serialization format (a).

- Inside the `__init__` method, initialize your model and put it onto the corresponding device. Optionally, you can assign `self.example` with some data to warm up (b) the model. Note that the data should be compatible with your handler's input format, which we detail next.

- Override the `forward` method to write your service handler (c), with the signature `forward(self, data: Any | List[Any]) -> Any | List[Any]`. Whether it receives/returns a single item or a list depends on whether dynamic batching (d) is configured.

```python
class StableDiffusion(MsgpackMixin, Worker):
    def __init__(self):
        self.pipe = StableDiffusionPipeline.from_pretrained(
            "sd-legacy/stable-diffusion-v1-5", torch_dtype=torch.float16
        )
        # Keep components on the CPU and move each one to the GPU only while it runs.
        self.pipe.enable_model_cpu_offload()
        self.example = ["useless example prompt"] * 4  # warmup (batch_size=4)

    def forward(self, data: List[str]) -> List[memoryview]:
        logger.debug("generate images for %s", data)
        res = self.pipe(data)
        # res[0] holds the generated PIL images, res[1] the per-image NSFW flags.
        logger.debug("NSFW: %s", res[1])
        images = []
        for img in res[0]:
            # Encode each image as JPEG into an in-memory buffer.
            dummy_file = BytesIO()
            img.save(dummy_file, format="JPEG")
            images.append(dummy_file.getbuffer())
        return images
```

> [!NOTE]
> (a) In this example we return an image in binary format, which JSON does not support (unless it is encoded with base64, which makes the payload larger). Hence, msgpack suits our needs better. If we do not inherit `MsgpackMixin`, JSON will be used by default. In other words, the protocol of the service request/response can be msgpack, JSON, or any other format (check our mixins).
>
> (b) Warm-up usually helps to allocate GPU memory in advance. If the warm-up example is specified, the service will only be ready after the example is forwarded through the handler. However, if no example is given, the first request's latency is expected to be longer. The `example` should be set as a single item or a list depending on what `forward` expects to receive. Moreover, if you want to warm up with multiple different examples, you may set `multi_examples` (demo here).
>
> (c) This example shows a single-stage service, where the `StableDiffusion` worker directly takes in the client's prompt request and responds with the image. Thus the `forward` method can be considered a complete service handler. However, we can also design a multi-stage service with workers doing different jobs (e.g., downloading images, model inference, post-processing) in a pipeline. In this case, the whole pipeline is considered the service handler, with the first worker taking in the request and the last worker sending out the response. The data flow between workers is done by inter-process communication.
>
> (d) Since dynamic batching is enabled in this example, the `forward` method will receive a list of strings, e.g., `['a cute cat playing with a red ball', 'a man sitting in front of a computer', ...]`, aggregated from different clients for batch inference, which improves the system throughput.
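To make note (a) concrete, here is a small standard-library sketch showing that JSON cannot carry raw bytes, and that base64-encoding them inflates the payload by about a third. The 3,072-byte buffer is an arbitrary stand-in for a JPEG image, not real output from the service.

```python
import base64
import json

# Arbitrary stand-in for the JPEG bytes produced by the worker (not a real image).
image_bytes = bytes(range(256)) * 12  # 3072 bytes

# JSON has no binary type, so serializing raw bytes raises TypeError.
try:
    json.dumps({"image": image_bytes})
    json_accepts_bytes = True
except TypeError:
    json_accepts_bytes = False

# The usual workaround is base64: every 3 input bytes become 4 ASCII characters.
encoded = base64.b64encode(image_bytes).decode("ascii")
payload = json.dumps({"image": encoded})

overhead = len(encoded) / len(image_bytes)
print(f"raw: {len(image_bytes)} bytes, base64: {len(encoded)} chars, ratio: {overhead:.3f}")
```

msgpack, by contrast, has a native binary type, so the bytes can be sent as-is without this expansion.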

Finally, we append the worker to the server to construct a single-stage workflow (multiple stages can be pipelined to further boost the throughput; see this example). We also specify the number of processes to run in parallel (`num=1`) and the maximum batch size (`max_batch_size=4`), i.e., the maximum number of requests dynamic batching will accumulate before timing out. The timeout is set by `max_wait_time=10`, in milliseconds: the longest time Mosec waits before sending the batch to the worker.

```python
if __name__ == "__main__":
    server = Server()
    # 1) `num` specifies the number of processes that will be spawned to run in parallel.
    # 2) By configuring the `max_batch_size` with the value > 1, the input data in your
    # `forward` function will be a list (batch); otherwise, it's a single item.
    server.append_worker(StableDiffusion, num=1, max_batch_size=4, max_wait_time=10)
    server.run()
```
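The paragraph above mentions that multiple stages can be pipelined. The sketch below illustrates that idea with the standard library only, under clear assumptions: threads and in-process queues stand in for Mosec's worker processes and IPC, and `preprocess_stage`/`inference_stage` are hypothetical placeholders. It is not how Mosec is implemented internally.

```python
import queue
import threading
from typing import List

SENTINEL = None  # marks the end of the request stream for the next stage


def preprocess_stage(inbox: queue.Queue, outbox: queue.Queue) -> None:
    """Hypothetical CPU stage: clean up each prompt independently."""
    while True:
        item = inbox.get()
        if item is SENTINEL:
            outbox.put(SENTINEL)  # propagate the end of stream downstream
            return
        outbox.put(item.strip().lower())


def inference_stage(inbox: queue.Queue, results: List[str]) -> None:
    """Hypothetical GPU stage: a string template stands in for the model."""
    while True:
        item = inbox.get()
        if item is SENTINEL:
            return
        results.append(f"image<{item}>")


def run_pipeline(prompts: List[str]) -> List[str]:
    raw: queue.Queue = queue.Queue()
    cleaned: queue.Queue = queue.Queue()
    results: List[str] = []
    stages = [
        threading.Thread(target=preprocess_stage, args=(raw, cleaned)),
        threading.Thread(target=inference_stage, args=(cleaned, results)),
    ]
    for stage in stages:
        stage.start()
    for prompt in prompts:
        raw.put(prompt)
    raw.put(SENTINEL)
    for stage in stages:
        stage.join()
    return results


print(run_pipeline(["  A Cute Cat ", "A Man at a Computer"]))
```

Because both stages run concurrently, preprocessing of one request can overlap with inference on the previous one; in Mosec, `num` controls how many processes each stage gets.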

Run the server


The above snippets are merged in our example file. You can run it directly from the project root. First, let's look at the command line arguments (explanations here):

```shell
python examples/stable_diffusion/server.py --help
```

Then let's start the server with debug logs:

```shell
python examples/stable_diffusion/server.py --log-level debug --timeout 30000
```

Open http://127.0.0.1:8000/openapi/swagger/ in your browser to get the OpenAPI doc.

And in another terminal, test it:

```shell
python examples/stable_diffusion/client.py --prompt "a cute cat playing with a red ball" --output cat.jpg --port 8000
```

You will get an image named "cat.jpg" in the current directory.

You can check the metrics:

```shell
curl http://127.0.0.1:8000/metrics
```
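If you prefer to inspect the metrics programmatically, here is a minimal standard-library sketch that turns the cumulative `mosec_service_batch_size_bucket` histogram into per-bucket counts. It assumes the endpoint serves the Prometheus text exposition format and that all label sets share the same `le` bucket bounds; the helper names are hypothetical, and you should check the exact labels against your own `/metrics` output.

```python
import re
import urllib.request
from typing import Dict, List, Tuple

# One sample line such as: name{stage="x",le="4"} 12
_SAMPLE = re.compile(r"^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)\{(?P<labels>[^}]*)\}\s+(?P<value>\S+)")
_LABEL = re.compile(r'(\w+)="([^"]*)"')


def fetch_metrics(url: str = "http://127.0.0.1:8000/metrics") -> str:
    """Download the metrics text from a running service."""
    with urllib.request.urlopen(url, timeout=5) as resp:
        return resp.read().decode("utf-8")


def bucket_counts(text: str, metric: str = "mosec_service_batch_size_bucket") -> List[Tuple[str, float]]:
    """Convert cumulative histogram buckets into per-bucket counts, summed over label sets."""
    cumulative: Dict[str, float] = {}
    for line in text.splitlines():
        match = _SAMPLE.match(line)  # comment lines starting with '#' never match
        if not match or match.group("name") != metric:
            continue
        le = dict(_LABEL.findall(match.group("labels"))).get("le")
        if le is None:
            continue
        cumulative[le] = cumulative.get(le, 0.0) + float(match.group("value"))
    counts: List[Tuple[str, float]] = []
    previous = 0.0
    for le in sorted(cumulative, key=float):  # float("+Inf") sorts last
        counts.append((le, cumulative[le] - previous))
        previous = cumulative[le]
    return counts
```

For example, `bucket_counts(fetch_metrics())` against a running server returns pairs like `("4", 12.0)`; a distribution concentrated in the smallest bucket suggests that requests are rarely being batched together.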

That's it! You have just hosted your stable-diffusion model as a service! 😉

Examples

More ready-to-use examples can be found in the Example section. It includes:

- Pipeline: a simple echo demo even without any ML model.

- Request validation: validate the request with type annotation and generate OpenAPI documentation.

- Multiple routes: serve multiple models in one service.

- Embedding service: OpenAI-compatible embedding service.

- Reranking service: rerank a list of passages based on a query.

- Shared memory IPC: inter-process communication with shared memory.

- Customized GPU allocation: deploy multiple replicas, each using different GPUs.

- Customized metrics: record your own metrics for monitoring.

- Jax jitted inference: just-in-time compilation speeds up the inference.

- Compression: enable request/response compression.

- PyTorch deep learning models:

  - sentiment analysis: infer the sentiment of a sentence.

  - image recognition: categorize a given image.

  - stable diffusion: generate images based on texts, with msgpack serialization.

Configuration

- Dynamic batching

  - `max_batch_size` and `max_wait_time` (in milliseconds) are configured when you call `append_worker`.

  - Make sure that inference with the `max_batch_size` value won't cause out-of-memory errors on the GPU.

  - Normally, `max_wait_time` should be less than the batch inference time.

  - If enabled, it will collect a batch either when the number of accumulated requests reaches `max_batch_size` or when `max_wait_time` has elapsed. The service will benefit from this feature when the traffic is high.

- Check the arguments doc for other configurations.
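The batching rule above (flush when `max_batch_size` requests have accumulated or when `max_wait_time` has elapsed since the first one) can be sketched in plain Python. This is an illustration of the rule only, assuming a thread-safe queue of incoming requests; `collect_batch` is a hypothetical helper, not Mosec's Rust implementation.

```python
import queue
import time
from typing import Any, List


def collect_batch(requests: "queue.Queue[Any]", max_batch_size: int, max_wait_time_ms: float) -> List[Any]:
    """Block for the first request, then gather more until the batch is full or the wait expires."""
    batch = [requests.get()]  # the wait window starts once the first task arrives
    deadline = time.monotonic() + max_wait_time_ms / 1000.0
    while len(batch) < max_batch_size:
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            break
        try:
            batch.append(requests.get(timeout=remaining))
        except queue.Empty:
            break  # timed out with a partial batch
    return batch


if __name__ == "__main__":
    pending: "queue.Queue[int]" = queue.Queue()
    for i in range(6):
        pending.put(i)
    print(collect_batch(pending, max_batch_size=4, max_wait_time_ms=10))  # full batch
    print(collect_batch(pending, max_batch_size=4, max_wait_time_ms=10))  # flushed by timeout
```

The second call returns a partial batch after roughly 10 ms, which is why `max_wait_time` should normally stay below the batch inference time: it bounds the extra latency a lone request pays while waiting for company.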

Deployment

- If you're looking for a GPU base image with `mosec` installed, you can check the official image `mosecorg/mosec`. For complex use cases, check out envd.

- This service doesn't need Gunicorn or NGINX, but you can certainly use an ingress controller when necessary.

- This service should be the PID 1 process in the container since it controls multiple processes. If you need to run multiple processes in one container, you will need a supervisor. You may choose Supervisor or Horust.

- Remember to collect the metrics.

  - `mosec_service_batch_size_bucket` shows the batch size distribution.
  - `mosec_service_batch_duration_second_bucket` shows the duration of dynamic batching for each connection in each stage (starting from receiving the first task).
  - `mosec_service_process_duration_second_bucket` shows the duration of processing for each connection in each stage (including the IPC time but excluding the `mosec_service_batch_duration_second_bucket`).
  - `mosec_service_remaining_task` shows the number of currently processing tasks.
  - `mosec_service_throughput` shows the service throughput.

- Stop the service with `SIGINT` (`CTRL+C`) or `SIGTERM` (`kill {PID}`), since it has graceful shutdown logic.
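For readers unfamiliar with graceful shutdown, the following generic Python sketch shows the usual pattern: the signal handler only records that a stop was requested, and the serving loop finishes the tasks it has already accepted before exiting. It is a hypothetical illustration of the concept, not Mosec's actual shutdown code; `serve` and the doubling "inference" are placeholders.

```python
import queue
import signal
import threading
from typing import List

stop_requested = threading.Event()


def handle_stop(signum, frame) -> None:
    """Signal handler: only record the request; the serving loop decides when to exit."""
    stop_requested.set()


def install_handlers() -> None:
    # The signal module only allows installing handlers from the main thread.
    signal.signal(signal.SIGTERM, handle_stop)
    signal.signal(signal.SIGINT, handle_stop)


def serve(tasks: "queue.Queue[int]", results: List[int]) -> None:
    """Process tasks until a stop is requested, then finish the ones already queued."""
    while not stop_requested.is_set():
        try:
            task = tasks.get(timeout=0.05)
        except queue.Empty:
            continue
        results.append(task * 2)  # stand-in for real inference
    # Drain: tasks already accepted are completed instead of being dropped.
    while True:
        try:
            results.append(tasks.get_nowait() * 2)
        except queue.Empty:
            break
```

Note that `kill {PID}` sends `SIGTERM` by default, which is why it triggers the same path as `CTRL+C` here.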

Performance tuning

- Find out the best `max_batch_size` and `max_wait_time` for your inference service. The metrics show histograms of the real batch size and batch duration, which are the key information for adjusting these two parameters.

- Try to split the whole inference process into separate CPU and GPU stages (ref DistilBERT). Different stages will run in a data pipeline, which keeps the GPU busy.

- You can also adjust the number of workers in each stage. For example, if your pipeline consists of a CPU stage for preprocessing and a GPU stage for model inference, increasing the number of CPU-stage workers can help to produce more data to be batched for model inference at the GPU stage; increasing the GPU-stage workers can fully utilize the GPU memory and computation power. Both ways may contribute to higher GPU utilization, which consequently results in higher service throughput.

- For multi-stage services, note that the data passing through different stages will be serialized/deserialized by the `serialize_ipc`/`deserialize_ipc` methods, so extremely large data might make the whole pipeline slow. The serialized data is passed to the next stage through Rust by default; you could enable shared memory to potentially reduce the latency (ref RedisShmIPCMixin).

- You should choose appropriate `serialize`/`deserialize` methods, which are used to decode the user request and encode the response. By default, both use JSON. However, images and embeddings are not well supported by JSON. You can choose msgpack, which is faster and binary compatible (ref Stable Diffusion).

- Configure the threads for OpenBLAS or MKL. These libraries might not choose the most suitable CPU resources for the current Python process. You can configure them for each worker by using the env (ref custom GPU allocation).

- Enable HTTP/2 from the client side. Since v0.8.8, `mosec` automatically adapts to the client's protocol (e.g., HTTP/2).
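As a sketch of the per-worker thread configuration mentioned above, the hypothetical helper below builds one environment dictionary per worker process, setting the common OpenMP/OpenBLAS/MKL thread-count variables and, optionally, assigning GPUs round-robin. It only constructs dictionaries; how they reach each process is an assumption noted after the code.

```python
from typing import Dict, List, Optional, Sequence

# Thread-count variables typically read when OpenMP, OpenBLAS, and MKL initialize.
THREAD_VARS = ("OMP_NUM_THREADS", "OPENBLAS_NUM_THREADS", "MKL_NUM_THREADS")


def per_worker_env(
    num_workers: int,
    threads_per_worker: int,
    gpus: Optional[Sequence[str]] = None,
) -> List[Dict[str, str]]:
    """Build one environment dict per worker process (hypothetical helper).

    If `gpus` is given, devices are assigned round-robin via CUDA_VISIBLE_DEVICES.
    """
    if num_workers < 1 or threads_per_worker < 1:
        raise ValueError("num_workers and threads_per_worker must be positive")
    envs: List[Dict[str, str]] = []
    for i in range(num_workers):
        env = {var: str(threads_per_worker) for var in THREAD_VARS}
        if gpus:
            env["CUDA_VISIBLE_DEVICES"] = gpus[i % len(gpus)]
        envs.append(env)
    return envs
```

Assuming `append_worker` accepts an `env` list with one dict per process, as the custom GPU allocation example suggests (please verify against the arguments doc), usage would look like `server.append_worker(Inference, num=2, env=per_worker_env(2, 4, gpus=["0", "1"]))`, where `Inference` is a placeholder worker class.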

Adopters

Here are some of the companies and individual users that are using Mosec:

- Modelz: Serverless platform for ML inference.

- MOSS: An open-source conversational language model like ChatGPT.

- TencentCloud: Tencent Cloud Machine Learning Platform, using Mosec as the core inference server framework.

- TensorChord: Cloud native AI infrastructure company.

- OAT: Serving reward models for online LLM alignment.

Citation

If you find this software useful for your research, please consider citing

```bibtex
@software{yang2021mosec,
  title = {{MOSEC: Model Serving made Efficient in the Cloud}},
  author = {Yang, Keming and Liu, Zichen and Cheng, Philip},
  howpublished = {https://github.com/mosecorg/mosec},
  year = {2021}
}
```

Contributing

We welcome any kind of contribution. Please give us feedback by raising issues or discussing on Discord. You can also contribute code directly by opening a pull request!

To start developing, you can use envd to create an isolated and clean Python & Rust environment. Check the envd-docs or build.envd for more information.


Download files


Source Distribution

Built Distributions


File details

Details for the file mosec-0.9.7.tar.gz.

File metadata

- Download URL: mosec-0.9.7.tar.gz

- Upload date:

- Size: 167.6 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 93343630167c0f26ad74483726fc38895880278faffe5c8ea3a42d87376730d6 |
| MD5 | 43a1d83e2deba612fade24c59be6e848 |
| BLAKE2b-256 | 60f9ff60d7ecc8e3f76c1b9253e50aee94afc8a614ded0d0a8be28a902201ede |

File details

Details for the file mosec-0.9.7-py3-none-musllinux_1_2_x86_64.whl.

File metadata

- Download URL: mosec-0.9.7-py3-none-musllinux_1_2_x86_64.whl

- Upload date:

- Size: 5.5 MB

- Tags: Python 3, musllinux: musl 1.2+ x86-64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 5ab8e8afea6c49a07560f35c128355633931b7f1db2b78e81081446d3b1b9bfa |
| MD5 | a998ce5d7089bb4ca9d3726860584922 |
| BLAKE2b-256 | f06da972e4431009bf4d5c57adaffd71e97ccd0ee1f8e2c5d1ea15ccb023a3aa |

File details

Details for the file mosec-0.9.7-py3-none-musllinux_1_2_i686.whl.

File metadata

- Download URL: mosec-0.9.7-py3-none-musllinux_1_2_i686.whl

- Upload date:

- Size: 5.5 MB

- Tags: Python 3, musllinux: musl 1.2+ i686

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | fd35e1fe174f179a5fde5df8e943373365b50986400db7a04f6e78600d56517a |
| MD5 | a925466e13dfdcf8898d227743f9a7d6 |
| BLAKE2b-256 | 2167857985273ef2bdac8addcf313c6409b36f2a43576499bfc76ead70e87d7c |

File details

Details for the file mosec-0.9.7-py3-none-musllinux_1_2_armv7l.whl.

File metadata

- Download URL: mosec-0.9.7-py3-none-musllinux_1_2_armv7l.whl

- Upload date:

- Size: 5.2 MB

- Tags: Python 3, musllinux: musl 1.2+ ARMv7l

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | cbe9f6badd765d4192312bd4894e248703928e1701c83393589bd393bacf998a |
| MD5 | d83a4307a27ea9a14b3fc628cac8e28c |
| BLAKE2b-256 | d558b273fc57a7f50d3540495b058d07693878cef7b2608f5d4184aacc857249 |

File details

Details for the file mosec-0.9.7-py3-none-musllinux_1_2_aarch64.whl.

File metadata

- Download URL: mosec-0.9.7-py3-none-musllinux_1_2_aarch64.whl

- Upload date:

- Size: 5.3 MB

- Tags: Python 3, musllinux: musl 1.2+ ARM64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | e80580b75119cfeac1cea7ce088bb14b9a4e62a2ffc61ee55984acac214615f8 |
| MD5 | 497a41844921a60b9424b31c86ed5ee8 |
| BLAKE2b-256 | 7675fe5489a4b2136c974f3a9b99dec4d26fcf033e18e096e27c8c3de5891b0a |

File details

Details for the file mosec-0.9.7-py3-none-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.

File metadata

- Download URL: mosec-0.9.7-py3-none-manylinux_2_17_x86_64.manylinux2014_x86_64.whl

- Upload date:

- Size: 5.4 MB

- Tags: Python 3, manylinux: glibc 2.17+ x86-64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b183955efc7c1a5e3c135b3b95ed987877bdc7bdbce4f2efd2f1053e10b794c2 |
| MD5 | bb77343f7f2135462075571dcd7945f2 |
| BLAKE2b-256 | ba43469c155571e1af324e493d17f37511cab2d03d7c787640ec035b0d55686f |

File details

Details for the file mosec-0.9.7-py3-none-manylinux_2_17_s390x.manylinux2014_s390x.whl.

File metadata

- Download URL: mosec-0.9.7-py3-none-manylinux_2_17_s390x.manylinux2014_s390x.whl

- Upload date:

- Size: 5.4 MB

- Tags: Python 3, manylinux: glibc 2.17+ s390x

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 192d42eb1665035350cc511bbdc5ab563064d2548306321150b1e8483c03a484 |
| MD5 | 0a8478845092f53b8d7117913278cca1 |
| BLAKE2b-256 | 281214038147d024a76f16d503aabc60432e5c63fdfe8fbe0ed8ba1b4c507785 |

File details

Details for the file mosec-0.9.7-py3-none-manylinux_2_17_ppc64le.manylinux2014_ppc64le.whl.

File metadata

- Download URL: mosec-0.9.7-py3-none-manylinux_2_17_ppc64le.manylinux2014_ppc64le.whl

- Upload date:

- Size: 5.8 MB

- Tags: Python 3, manylinux: glibc 2.17+ ppc64le

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | eec1b070e147e80d988a76b2763f3a8c257da4d16decd0d250f8f18559d17efc |
| MD5 | ee1202ffaa1958ac06f8ec23fede690d |
| BLAKE2b-256 | 9d104abbfe4f50773c8e36b82392f2f51a1bbc9022e118cd6886d01abbdaf239 |

File details

Details for the file mosec-0.9.7-py3-none-manylinux_2_17_i686.manylinux2014_i686.whl.

File metadata

- Download URL: mosec-0.9.7-py3-none-manylinux_2_17_i686.manylinux2014_i686.whl

- Upload date:

- Size: 5.6 MB

- Tags: Python 3, manylinux: glibc 2.17+ i686

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 8fcf63ed53edb0025d48922ad923cc3da000c885c3c020eaa7edff511b021d16 |
| MD5 | e0cb925a0e5595dd3671c37bbad53c61 |
| BLAKE2b-256 | 74ad4c23937d55982b94871188a69622aaf2cc92c1dc45efe8ceff3b0a3ce99b |

File details

Details for the file mosec-0.9.7-py3-none-manylinux_2_17_armv7l.manylinux2014_armv7l.whl.

File metadata

- Download URL: mosec-0.9.7-py3-none-manylinux_2_17_armv7l.manylinux2014_armv7l.whl

- Upload date:

- Size: 5.2 MB

- Tags: Python 3, manylinux: glibc 2.17+ ARMv7l

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | e9cad854c7f1eed87f16c29aa4a078d8f6476ff86261d1912287f7adf4c44513 |
| MD5 | 8c4ff10a19a3a7f858f4f87059829b92 |
| BLAKE2b-256 | c69089f184e01395a58d50d0cfe6bbdc5e55ac52ffd8722cb1144d6938ca91ad |

File details

Details for the file mosec-0.9.7-py3-none-manylinux_2_17_aarch64.manylinux2014_aarch64.whl.

File metadata

- Download URL: mosec-0.9.7-py3-none-manylinux_2_17_aarch64.manylinux2014_aarch64.whl

- Upload date:

- Size: 5.3 MB

- Tags: Python 3, manylinux: glibc 2.17+ ARM64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 34acd10441c8a805e1752ea00cf8aef8da9a7a654cc778ab575f425ec0ee079a |
| MD5 | ca656e0ccb6e4a6b4963e5da21e55680 |
| BLAKE2b-256 | e2fe34b3dc096d7d1fb4247c47af02fcc75f48b2e45da5e389369770024daaad |

File details

Details for the file mosec-0.9.7-py3-none-macosx_11_0_arm64.whl.

File metadata

- Download URL: mosec-0.9.7-py3-none-macosx_11_0_arm64.whl

- Upload date:

- Size: 5.3 MB

- Tags: Python 3, macOS 11.0+ ARM64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 0962ec3914c0a117f1cb7e11b96ec272ba4c247b6e6b00db1a6ddaf2deca287e |
| MD5 | 16e3728bc4bc61566dfbba2fc8c0ccf7 |
| BLAKE2b-256 | d87bf0747bbadd89e0656dfbb9ed3de423071206635d26dbafabf0cc02357a7d |

File details

Details for the file mosec-0.9.7-py3-none-macosx_10_12_x86_64.whl.

File metadata

- Download URL: mosec-0.9.7-py3-none-macosx_10_12_x86_64.whl

- Upload date:

- Size: 5.3 MB

- Tags: Python 3, macOS 10.12+ x86-64

- Uploaded using Trusted Publishing? Yes

- Uploaded via: maturin/1.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 0fd132be534fbb26d3b6204109997a5b4d3b7ea7b44be99bb0041809bd189874 |
| MD5 | 6572bbb865748408cb17a32b583d008a |
| BLAKE2b-256 | 82081d4ee4c7b52976dccf25b29e098ab1ff30d8fb536b16e5727f175b37a105 |