Official implementation of MoVer: Motion Verification for Motion Graphics Animations


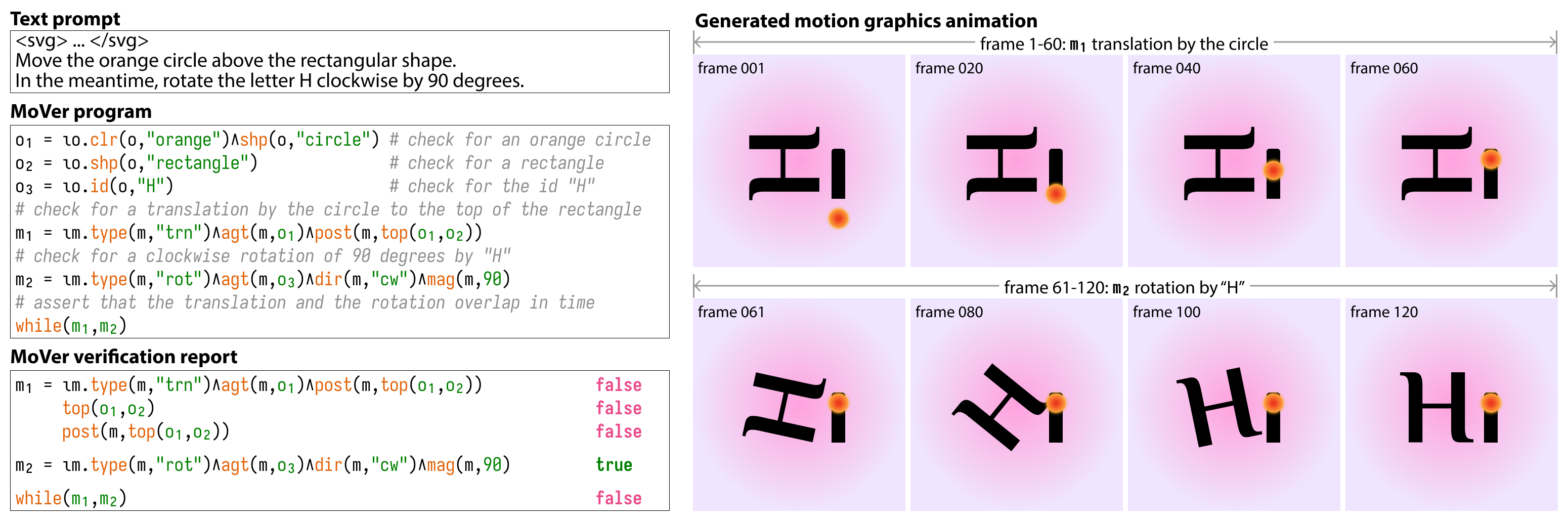

MoVer: Motion Verification for Motion Graphics Animations

Jiaju Ma and Maneesh Agrawala

In ACM Transactions on Graphics (SIGGRAPH), 44(4), August 2025. To Appear.

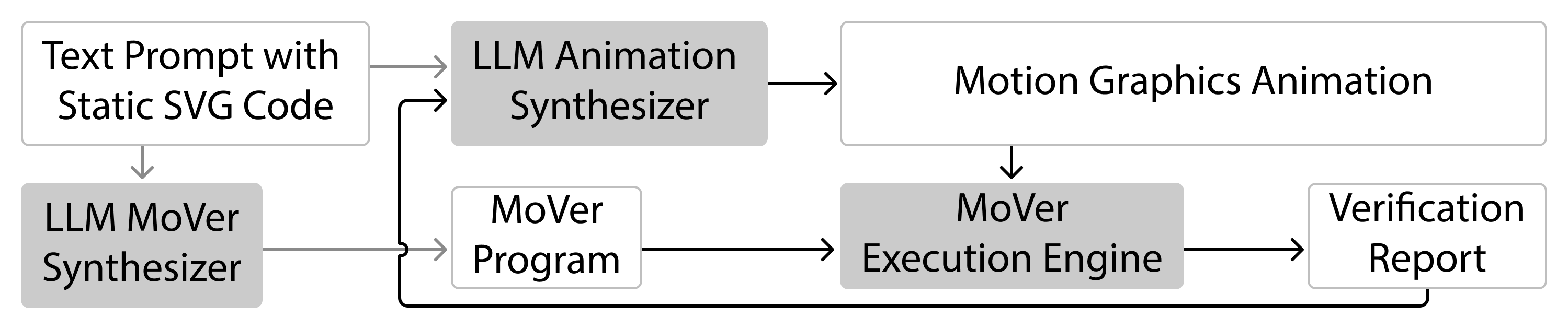

This repository contains the official implementation of MoVer, a domain-specific language based on first-order logic that can verify if various spatio-temporal properties of motion graphics are satisfied by an animation. We provide tools to use MoVer as part of an LLM-based motion graphics animation generation pipeline with verification.

Check out the project page for animation and benchmark results.

Dataset

The MoVer dataset of 5,600 prompts used in the paper can be found in mover_dataset/. Each prompt contains the ground truth MoVer program and information about the prompt's syntactic construction and whether an LLM is used as part of its generation.

We provide scripts to generate your own dataset of prompts with MoVer. See mover_dataset/create_dataset.py for details.

Installation

- Set up a virtual environment with your favorite tool (python>=3.10). We recommend uv for its speed.

      uv venv mover_env --python 3.12 # or your favorite tool, like conda, venv, etc.

- Make sure you have the right version of pytorch installed for your system (see pytorch.org).

- Install MoVer with pip

      pip install git+https://github.com/jama1017/Jacinle.git && \
      pip install git+https://github.com/jama1017/Concepts.git && \
      pip install mover

  or with uv

      uv pip install git+https://github.com/jama1017/Jacinle.git && \
      uv pip install git+https://github.com/jama1017/Concepts.git && \
      uv pip install mover

- Alternatively, to install MoVer for development, first clone this repository

      git clone https://github.com/jama1017/MoVer.git
      cd MoVer

  and then install the editable version of MoVer

      pip install -r requirements.txt && \
      pip install -e .
      # or with uv
      uv pip install -r requirements.txt && \
      uv pip install -e .

- To install the necessary browser for MoVer converter, run

      playwright install

- Based on what models you plan to use, add your API keys as environment variables.
  - They must be named as OPENAI_API_KEY, GEMINI_API_KEY, and GROQ_API_KEY.
  - MoVer includes APIs to interface with OpenAI, Gemini (via OpenAI compatibility), and Groq by default.

- (Optional) If you plan to run locally-hosted models, install the following dependencies:
  - Install ollama if you plan to use ollama.
  - Install vLLM if you plan to use vLLM.

- (Optional) We support rendering animations to video with OpenCV as .mp4 files, but the video produced might have limited compatibility because of codec issues. If ffmpeg is installed on your system, our converter will automatically use it to convert rendered videos.
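Before running the pipeline, it can help to confirm that the environment matches the steps above. The following is a minimal sketch, not part of MoVer: the helper names `missing_api_keys` and `has_ffmpeg` are hypothetical, and only the three environment variable names and the use of ffmpeg come from this README. Which keys you actually need depends on the models you plan to use.

```python
import os
import shutil

# Variable names taken from this README; the helpers below are illustrative only.
API_KEY_NAMES = ("OPENAI_API_KEY", "GEMINI_API_KEY", "GROQ_API_KEY")


def missing_api_keys(env=None, names=API_KEY_NAMES):
    """Return the key names that are unset or empty in the given environment mapping."""
    env = os.environ if env is None else env
    return [name for name in names if not env.get(name)]


def has_ffmpeg():
    """Return True if an ffmpeg executable can be found on PATH."""
    return shutil.which("ffmpeg") is not None


if __name__ == "__main__":
    for name in missing_api_keys():
        print(f"{name} is not set")
    print("ffmpeg found on PATH" if has_ffmpeg() else "ffmpeg not found on PATH")
```

A missing key is only a problem if your config selects the corresponding provider.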

Quick Start

Starter Example

Once you have installed MoVer, clone this repository to get access to the examples/ directory, where we have prepared some examples for you to try out. By default, OpenAI models are used, so make sure you have stored your API key as an environment variable (it must be named OPENAI_API_KEY). Alternatively, change the config file to use other models (see examples/configs/ for examples).

First, to get things started, from the root directory of this repository, run the following command to generate some simple animations with the LLM-based MoVer pipeline (using examples/configs/config_starter.yaml):

python -m mover.pipeline examples/configs/config_starter.yaml

The MoVer pipeline takes in a YAML config file as input. Here, the prompts used are stored in examples/prompts/prompts_starter.json.

If you look at the JSON file, you can see that, for each prompt, we have populated the ground_truth_program field with the MoVer program for verification.

Running this command should create a directory called example_output/prompts_starter/, where you can find the iterations of generated animations with videos.

Teaser Hi Example

Next, let's recreate the teaser Hi example in the paper by running the following command (pre-generated results are available in examples/).

python -m mover.pipeline examples/configs/config_teaser.yaml

This time, we did not fill in the ground_truth_program field in the prompts, so the pipeline will generate a MoVer program for verification and store it in the example_output/prompts_teaser/ directory as a Python script.

To create your own animations with MoVer, modify the starter config file and write your own prompts in the following format:

[
  {
    "svg_name": "<name of the SVG file (then specify the directory in the config file)>",
    "svg_file_path": "<alternatively, you can specify the exact path to the SVG file>",
    "chat_id_name": "<unique identifier for the prompt>",
    "animation_prompt": "<describe the animation in detail. avoid fuzzy descriptions like 'make the square dance'>",
    "ground_truth_program": "(optional) <ground truth MoVer program>",
    "has_run": false
  }
]

Setting has_run to true will make the pipeline ignore this prompt.
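If you prefer to generate the prompts file from a script, the format above can be produced with the standard `json` module. This is a minimal sketch under stated assumptions: the helper names (`make_prompt`, `pending`, `write_prompts`) are hypothetical, an empty string is assumed to be an acceptable way to leave `ground_truth_program` unfilled, and `pending` only mirrors the `has_run` behavior described above rather than the pipeline's actual loading code.

```python
import json
from pathlib import Path


def make_prompt(chat_id_name, animation_prompt, svg_name, ground_truth_program=""):
    """Build one prompt entry using the field names from the format above."""
    return {
        "svg_name": svg_name,
        "chat_id_name": chat_id_name,
        "animation_prompt": animation_prompt,
        # Assumption: an empty string means "no ground truth program provided".
        "ground_truth_program": ground_truth_program,
        "has_run": False,
    }


def pending(prompts):
    """Return the entries that have not been marked as run."""
    return [p for p in prompts if not p.get("has_run", False)]


def write_prompts(prompts, path):
    """Write the prompt list as JSON after checking that chat_id_name values are unique."""
    ids = [p["chat_id_name"] for p in prompts]
    if len(ids) != len(set(ids)):
        raise ValueError("chat_id_name values must be unique")
    Path(path).write_text(json.dumps(prompts, indent=2), encoding="utf-8")


if __name__ == "__main__":
    # "square.svg" and the output file name are placeholders, not files shipped with MoVer.
    prompts = [make_prompt("square_up", "Translate the black square upwards by 100 px", "square.svg")]
    write_prompts(prompts, "my_prompts.json")
    print(len(pending(prompts)), "prompt(s) pending")
```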

Usage Guide

Tutorial

Check out tutorial.ipynb for a walkthrough of each part of the MoVer pipeline (animation synthesis, MoVer program synthesis, and MoVer verification).

SVG Animation

To understand how MoVer's LLM-based animation synthesizer generates SVG animations using a simple JavaScript API based on GSAP, check out the synthesizer's system message sys_msg_animation_synthesizer.md and the API itself in api.js.

- To extend the API, make sure to update api.js and reflect the changes in the system message. See tutorial.ipynb for how to pass in your own system message.
- Each SVG animation is saved as an HTML file (see examples/). To properly render the HTML file, first get all the files in src/mover/converter/assets/ and put them in the same directory as the HTML file. Then open the HTML file in your browser to see the animation in action.
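The copy step for viewing a generated HTML file can be scripted. The sketch below is not part of MoVer: `stage_assets` is a hypothetical helper, and it assumes the assets directory contains plain files only (subdirectories are skipped). The default path is the one named above and is resolved relative to the root of a cloned repository.

```python
import shutil
from pathlib import Path


def stage_assets(html_file, assets_dir="src/mover/converter/assets"):
    """Copy every file in assets_dir next to html_file and return the copied paths."""
    html_path = Path(html_file)
    assets = Path(assets_dir)
    if not html_path.is_file():
        raise FileNotFoundError(html_path)
    if not assets.is_dir():
        raise NotADirectoryError(assets)
    copied = []
    for item in sorted(assets.iterdir()):
        if item.is_file():  # assumption: a flat directory; nested folders are ignored
            target = html_path.parent / item.name
            shutil.copy2(item, target)
            copied.append(target)
    return copied
```

After calling `stage_assets` with the path to a generated HTML file, open that file in a browser as described above.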

MoVer DSL

The MoVer DSL is designed with predicates corresponding to spatio-temporal concepts that people commonly use in natural language to describe motions. For example, for the following animation prompt:

Translate the black square upwards by 100 px

We can write the corresponding MoVer program as:

o_1 = iota(Object, lambda o: color(o, "black") and shape(o, "square"))

m_1 = iota(Motion, lambda m: type(m, "translate") and direction(m, [0.0, 1.0]) and magnitude(m, 100.0) and agent(m, o_1))
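To build intuition for how such a program reads, here is a toy model in plain Python. It is not MoVer's parser or executor: the dictionary records, the field names, and this `iota` function are all hypothetical, and it assumes `iota` can be read as "pick the first candidate that satisfies the predicate".

```python
import math


def iota(candidates, predicate):
    """Toy stand-in for iota: return the first candidate satisfying predicate."""
    for candidate in candidates:
        if predicate(candidate):
            return candidate
    raise LookupError("no candidate satisfies the predicate")


# Hypothetical scene objects and extracted motions, for illustration only.
objects = [
    {"id": "ci_a", "color": "blue", "shape": "circle"},
    {"id": "sq_a", "color": "black", "shape": "square"},
]
motions = [
    {"type": "rotate", "direction": None, "magnitude": 90.0, "agent": "ci_a"},
    {"type": "translate", "direction": [0.0, 1.0], "magnitude": 100.0, "agent": "sq_a"},
]

# Mirrors o_1 and m_1 from the program above, with predicates as dictionary checks.
o_1 = iota(objects, lambda o: o["color"] == "black" and o["shape"] == "square")
m_1 = iota(
    motions,
    lambda m: m["type"] == "translate"
    and m["direction"] == [0.0, 1.0]
    and math.isclose(m["magnitude"], 100.0)
    and m["agent"] == o_1["id"],
)
print(o_1["id"], m_1["type"])
```

If no motion matched, the toy `iota` would raise, which loosely corresponds to the animation failing that part of the check.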

Table 1 in the paper gives an overview of the predicates in the MoVer DSL. For more detailed documentation and examples of how they can be composed into MoVer programs, check out the MoVer synthesizer's system message sys_msg_mover_synthesizer.md, the figures in the paper, and the results page.

- To extend the DSL, update scripts in src/mover/dsl/ and reflect the changes in the system message.

Resolving references to similar objects and motions

For the animation prompt below, notice that we have two black squares in the scene, as well as two rightward translation motions (see the SVG here and the generated animation here).

"Translate the first black square to the right, then down, and then to the right. Translate the second black square up."

To refer to the second instance of a repeated object or motion, we can use the not predicate to exclude the first instance. This pattern generalizes to further repetitions as well: the third instance excludes both the first and the second, and so on. For example, for the above animation prompt, we can write the corresponding MoVer program as:

o_1 = iota(Object, lambda o: color(o, "black") and shape(o, "square"))

## notice the use of not o_1 to refer to the second black square

o_2 = iota(Object, lambda o: color(o, "black") and shape(o, "square") and not o_1)

m_1 = iota(Motion, lambda m: type(m, "translate") and direction(m, [1.0, 0.0]) and agent(m, o_1))

m_2 = iota(Motion, lambda m: type(m, "translate") and direction(m, [0.0, -1.0]) and agent(m, o_1))

## notice the use of not m_1 to refer to the second rightward translation motion

m_3 = iota(Motion, lambda m: type(m, "translate") and direction(m, [1.0, 0.0]) and agent(m, o_1) and not m_1)

m_4 = iota(Motion, lambda m: type(m, "translate") and direction(m, [0.0, 1.0]) and agent(m, o_2))

t_before(m_1, m_2)

t_after(m_3, m_2)
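The exclusion pattern can be illustrated with a toy model in plain Python. This is a sketch under stated assumptions, not MoVer's executor: the motion records and their `start` and `end` times are hypothetical, selection is assumed to return the first remaining match, and `t_before` and `t_after` are assumed to compare the end of one motion with the start of the other.

```python
def select_excluding(candidates, predicate, excluded=()):
    """Return the first candidate that satisfies predicate and is not in excluded."""
    for c in candidates:
        if predicate(c) and not any(c is e for e in excluded):
            return c
    raise LookupError("no remaining candidate satisfies the predicate")


def first_n(candidates, predicate, n):
    """Pick n distinct matches by excluding every earlier pick, one at a time."""
    picked = []
    for _ in range(n):
        picked.append(select_excluding(candidates, predicate, picked))
    return picked


def t_before(a, b):
    """Assumed reading: a ends no later than b starts."""
    return a["end"] <= b["start"]


def t_after(a, b):
    """Assumed reading: a starts no earlier than b ends."""
    return a["start"] >= b["end"]


# Hypothetical timeline for the prompt above (times in seconds).
motions = [
    {"agent": "sq_1", "direction": [1.0, 0.0], "start": 0.0, "end": 1.0},
    {"agent": "sq_1", "direction": [0.0, -1.0], "start": 1.0, "end": 2.0},
    {"agent": "sq_1", "direction": [1.0, 0.0], "start": 2.0, "end": 3.0},
    {"agent": "sq_2", "direction": [0.0, 1.0], "start": 0.0, "end": 1.0},
]


def rightward(m):
    return m["agent"] == "sq_1" and m["direction"] == [1.0, 0.0]


def downward(m):
    return m["agent"] == "sq_1" and m["direction"] == [0.0, -1.0]


m_1, m_3 = first_n(motions, rightward, 2)  # second pick excludes the first
m_2 = select_excluding(motions, downward)
print(t_before(m_1, m_2), t_after(m_3, m_2))
```

Calling `first_n` with a larger `n` extends the same idea to a third or fourth repetition, since each new pick excludes all earlier ones.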

License

This project is licensed under the Apache License 2.0 - see the LICENSE file for details.

Contact

Jiaju Ma

@jama1017

majiaju.io

hellojiajuma@gmail.com

Acknowledgments

We thank Yusong Wu for his help on getting this repository ready for release. This project builds on the wonderful foundation of the Concepts framework by Jiayuan Mao. The MoVer DSL parser and executor are based on LEFT by Joy Hsu and Jiayuan Mao. Our SVG animation API uses the one and only GSAP. The MoVer converter uses the ntc js (Name that Color JavaScript) library for converting hex colors to names.


File details

Details for the file mover-0.1.5.tar.gz.

File metadata

- Download URL: mover-0.1.5.tar.gz
- Upload date:
- Size: 144.4 kB
- Tags: Source
- Uploaded using Trusted Publishing? No
- Uploaded via: uv/0.6.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 638894e543798e8b388a5e028311e1a51d397b730f91def384bede14a07bd7a9 |
| MD5 | 7def3c13f591d9db55ccc3b2b1a601d6 |
| BLAKE2b-256 | b0579f4ac64fb261ceccd5789438eef1c81c79193177c8835ca16bae6f166daa |

File details

Details for the file mover-0.1.5-py3-none-any.whl.

File metadata

- Download URL: mover-0.1.5-py3-none-any.whl
- Upload date:
- Size: 148.0 kB
- Tags: Python 3
- Uploaded using Trusted Publishing? No
- Uploaded via: uv/0.6.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b09d5ec0819402bc3e0871fc7cc9b4c5381b25760a43e11133184e4b93788236 |
| MD5 | 3893f1a1e9e15b47a73084a9ae81b32e |
| BLAKE2b-256 | 94f649ecdad4294d16fcd3dc544c7d3316e792126b2b1029eec25b2e82fa7d6b |