Local-first reasoning pipeline wrapper for Ollama and LM Studio


MultiMind AI acts as an intelligent reasoning pipeline for your local AI models. It effortlessly auto-discovers endpoints like Ollama and LM Studio (OpenAI-compatible) and lets you orchestrate dedicated models for different logical phases: Planning, Execution, and Critique.

✨ Features

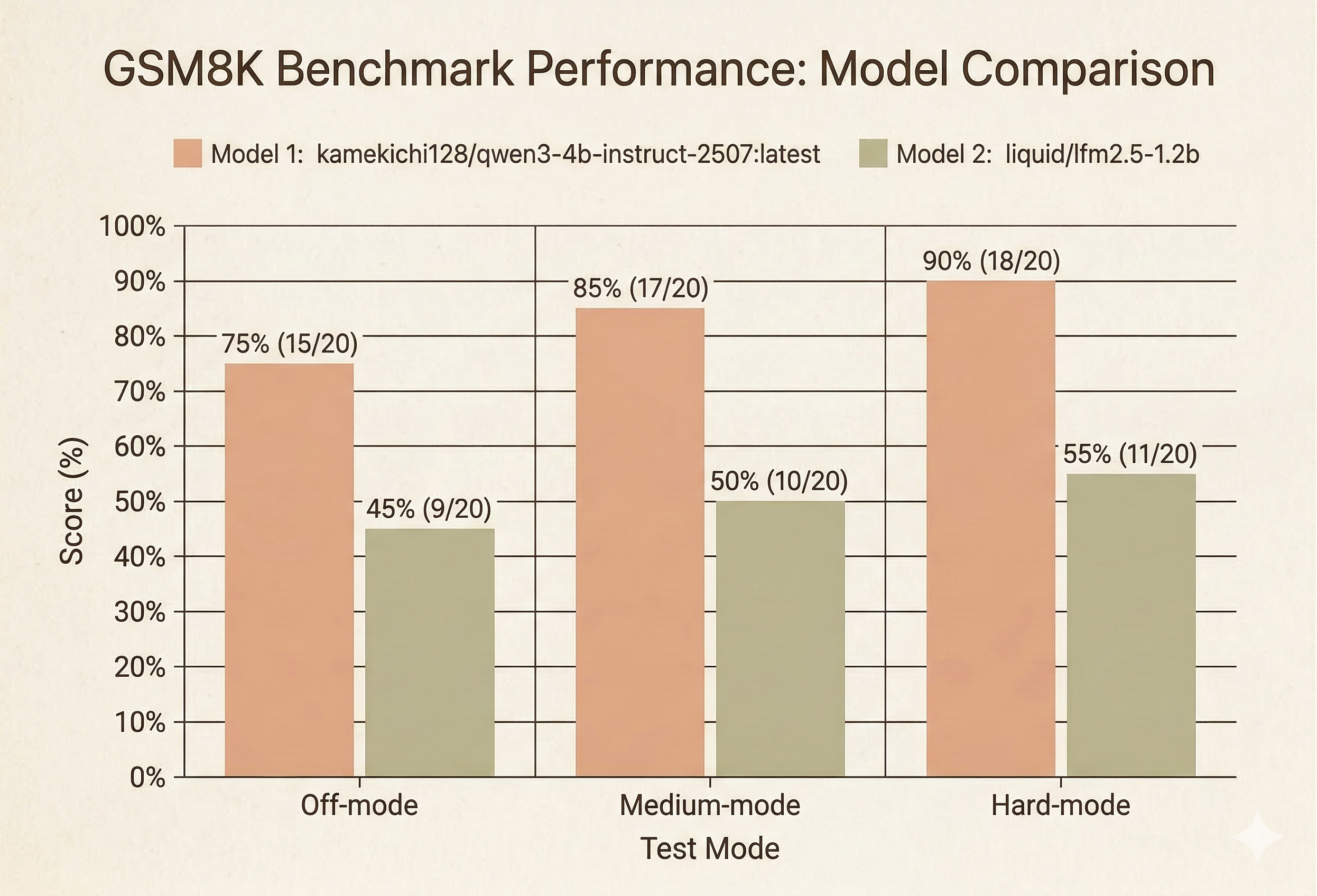

- 🧠 Adaptive Reasoning Modes: Toggle between Off, Medium, and Hard modes to dictate the depth of the model's reflection.

- 🔌 Zero-Config Auto-Discovery:

  - Automatically hooks into local Ollama endpoints (http://127.0.0.1:11434).

  - Supports optional discovery for LM Studio (http://127.0.0.1:1234).

- 🎯 Precision Model Mapping: Assign distinct models to handle the different stages of thought (plan, execute, and critique).

- 💬 Immersive UI: Enjoy a streaming timeline interface with collapsible "thought blocks" to keep your UI clean while the AI thinks.

- 📝 Native Markdown & Math Support:

  - Final outputs are beautifully rendered as HTML in the chat view.

  - Inline and block math equations are flawlessly typeset using a bundled local KaTeX build.

- ⚡ Frictionless Setup: Purely in-memory settings. Zero .env setup required for your first run.
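The model mapping and in-memory settings described above can be pictured as a small settings object. This is only a sketch: `PipelineSettings`, `model_for`, and the model names are hypothetical and are not taken from MultiMind AI's source.

```python
from dataclasses import dataclass, field

# Stage names come from the feature list; everything else here is illustrative.
STAGES = ("plan", "execute", "critique")


@dataclass
class PipelineSettings:
    """Hypothetical in-memory settings: nothing is read from or written to disk."""

    mode: str = "medium"  # "off", "medium", or "hard"
    models: dict = field(default_factory=dict)  # stage name -> model name
    default_model: str = "llama3.2"  # placeholder model name

    def model_for(self, stage: str) -> str:
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        # Fall back to the default when no dedicated model is mapped.
        return self.models.get(stage, self.default_model)


# Placeholder model names, chosen only for the example.
settings = PipelineSettings(
    mode="hard",
    models={"plan": "qwen2.5", "critique": "mistral"},
)
```

Because the object lives only in memory, restarting the process resets it to the defaults, which matches the "no .env on first run" behaviour described above.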

🚀 Quick Start

Get up and running in your local environment in seconds:

# 1. Install the package via pip

pip install multimind

# 2. Launch the application

multimind

🛠 Setting up for Development / Source Install

# 1. Clone the repository

git clone https://github.com/JitseLambrichts/MultiMind-AI.git

cd MultiMind-AI

# 2. Create a virtual environment

python3 -m venv .venv

# 3. Activate the virtual environment

source .venv/bin/activate # On Windows: .venv\Scripts\activate

# 4. Install the package in editable mode

pip install -e .

# 5. Launch the application

multimind

Next: Open your browser and navigate to http://127.0.0.1:8000 🎉

🔌 Supported Backends

MultiMind AI works seamlessly with standard local APIs:

- Ollama: Connects via /api/chat and /api/tags

- OpenAI-Compatible Servers (e.g., LM Studio): Connects via /v1/chat/completions and /v1/models

If no provider is detected automatically, you can point the backend at your local OpenAI-compatible endpoint from the application's settings panel.
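As a rough illustration of what endpoint discovery could look like, the sketch below probes the two model-listing routes named above using only the standard library. The function names (`discover`, `parse_model_names`) and the timeout are assumptions for the example; MultiMind AI's actual discovery code may work differently.

```python
import json
import urllib.error
import urllib.request

# Default local endpoints named in this README.
CANDIDATES = {
    "ollama": "http://127.0.0.1:11434/api/tags",
    "lmstudio": "http://127.0.0.1:1234/v1/models",
}


def parse_model_names(provider: str, payload: dict) -> list:
    """Extract model names from a model-listing response."""
    if provider == "ollama":
        # Ollama's /api/tags returns {"models": [{"name": ...}, ...]}
        return [m["name"] for m in payload.get("models", [])]
    # OpenAI-compatible /v1/models returns {"data": [{"id": ...}, ...]}
    return [m["id"] for m in payload.get("data", [])]


def discover(timeout: float = 1.0) -> dict:
    """Probe each candidate URL and return {provider: [model names]} for those that answer."""
    found = {}
    for provider, url in CANDIDATES.items():
        try:
            with urllib.request.urlopen(url, timeout=timeout) as resp:
                payload = json.load(resp)
        except (urllib.error.URLError, OSError, ValueError):
            continue  # endpoint not running, timed out, or returned non-JSON
        found[provider] = parse_model_names(provider, payload)
    return found
```

Calling `discover()` with neither server running returns an empty dict, which is the case where the settings panel would be used instead.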

💡 How It Works

MultiMind AI splits inference into modular steps, elevating the capabilities of standard models:

- Plan: Formulates a structured approach to the prompt.

- Execute: Generates the primary response.

- Critique (Hard Mode): Evaluates the execution pass as a rough draft and streams refined, critiqued output as the final answer.
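The three steps above can be sketched as a small function that chains model calls. The README only states that Critique runs in Hard mode, so the mapping used here (Off runs Execute only, Medium runs Plan and Execute) is an assumption, as are the prompt wording and the names `run_pipeline` and `ChatFn`. The `chat` callable stands in for whichever backend client is in use.

```python
from typing import Callable, Dict

# chat(model, prompt) -> reply text; any backend client can be plugged in here.
ChatFn = Callable[[str, str], str]


def run_pipeline(question: str, mode: str, models: Dict[str, str], chat: ChatFn) -> str:
    """Chain plan -> execute -> critique; which stages run per mode is assumed."""
    if mode not in ("off", "medium", "hard"):
        raise ValueError(f"unknown mode: {mode}")

    if mode == "off":
        # Assumed behaviour: a single direct answer with no planning.
        return chat(models["execute"], question)

    plan = chat(models["plan"], f"Outline a step-by-step approach to:\n{question}")
    draft = chat(
        models["execute"],
        f"Question:\n{question}\n\nPlan:\n{plan}\n\nAnswer by following the plan.",
    )
    if mode == "medium":
        return draft

    # Hard mode: treat the execute output as a rough draft and refine it.
    return chat(
        models["critique"],
        f"Question:\n{question}\n\nDraft answer:\n{draft}\n\n"
        "Critique the draft and write an improved final answer.",
    )
```

Passing the `chat` function in as a parameter keeps the sketch independent of Ollama or LM Studio and makes it easy to exercise with a stub.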

📝 Note: Chat history is intentionally in-memory only for the current MVP.

📊 Benchmarks

We evaluated MultiMind AI's reasoning pipeline on a subset of 20 questions from the GSM8K dataset. The results show a clear improvement in model accuracy when the reasoning modes are enabled.
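For readers who want to run a similar check, here is a minimal sketch of how accuracy on GSM8K-style questions might be scored. It relies on the fact that GSM8K reference solutions end with `#### <number>`. Taking the last number in the model output as its answer is a heuristic assumed for this example, not necessarily the method used for the benchmark above, and the sample strings are toy data rather than benchmark results.

```python
import re


def gold_answer(solution: str) -> float:
    """GSM8K reference solutions end with '#### <number>'."""
    return float(solution.split("####")[-1].strip().replace(",", ""))


def predicted_answer(text: str):
    """Heuristic: treat the last number in the model output as its answer."""
    numbers = re.findall(r"-?\d[\d,]*(?:\.\d+)?", text)
    return float(numbers[-1].replace(",", "")) if numbers else None


def accuracy(outputs, solutions) -> float:
    """Fraction of outputs whose extracted number equals the reference answer."""
    correct = sum(
        predicted_answer(out) == gold_answer(sol)
        for out, sol in zip(outputs, solutions)
    )
    return correct / len(solutions)


# Toy data for illustration only.
toy_outputs = [
    "So she earns $18 per day.",
    "The total is 1,250 apples",
    "I am not sure.",
]
toy_solutions = [
    "9 * 2 = 18 #### 18",
    "5 * 250 = 1250 #### 1250",
    "3 + 4 = 7 #### 7",
]
toy_accuracy = accuracy(toy_outputs, toy_solutions)
```

Running the same scorer once per reasoning mode over the same questions gives directly comparable accuracy figures.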


File details

Details for the file multimind-0.1.4.tar.gz.

File metadata

- Download URL: multimind-0.1.4.tar.gz

- Upload date:

- Size: 1.5 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | a98e6e245e61da2e0a82a859b4a02d37446c55bd27394916c9db6b1ae445a4ee |
| MD5 | b684d35faec8d675ea05b1dcef3dbc2d |
| BLAKE2b-256 | 6ed900a19843822cbcff59a510ccb9d51c694ab1711232a0236c327bf02a10f1 |

File details

Details for the file multimind-0.1.4-py3-none-any.whl.

File metadata

- Download URL: multimind-0.1.4-py3-none-any.whl

- Upload date:

- Size: 1.5 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.13.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | a9909e9f119a33ca3b2bb08b450e1d841cb52a049074f1349943374ee50076f7 |
| MD5 | 955a4bb8aaef3ecf9d475f226ca618cb |
| BLAKE2b-256 | fba29d0cceecd3ecc48680973715faf0d6005aa8fe07df6ae3d55b89bf07bf65 |