Simultaneous Machine Translation (SimulMT) with NLLB model optimization

Project description

NoLanguageLeftWaiting

Converts NoLanguageLeftBehind translation model to a SimulMT (Simultaneous Machine Translation) model, optimized for live/streaming use cases.

Based offline models such as NLLB suffer from eos token and punctuation insertion, inconsistent prefix handling and exponentially growing computational overhead as input length increases. This implementation aims at resolving that.

- LocalAgreement policy

- Backends: HuggingFace transformers / Ctranslate2 Translator

- Built for WhisperLiveKit

- 200 languages. See supported_languages.md for the full list.

- Working on implementing a speculative/self-speculative decoding for a faster decoder, using 600M as draft model, and 1.3B as main model. Refs: https://arxiv.org/pdf/2211.17192: https://arxiv.org/html/2509.21740v1,

Installation

pip install nllw

The textual frontend is not installed by default.

Quick Start

- Demo interface :

python textual_interface.py

- Use it as a package

import nllw

model = nllw.load_model(

src_langs=["fra_Latn"],

nllb_backend="transformers",

nllb_size="600M" #Alternative: 1.3B

)

translator = nllw.OnlineTranslation(

model,

input_languages=["fra_Latn"],

output_languages=["eng_Latn"]

)

tokens = [nllw.timed_text.TimedText('Ceci est un test de traduction')]

translator.insert_tokens(tokens)

validated, buffer = translator.process()

print(f"{validated} | {buffer}")

tokens = [nllw.timed_text.TimedText('en temps réel')]

translator.insert_tokens(tokens)

validated, buffer = translator.process()

print(f"{validated} | {buffer}")

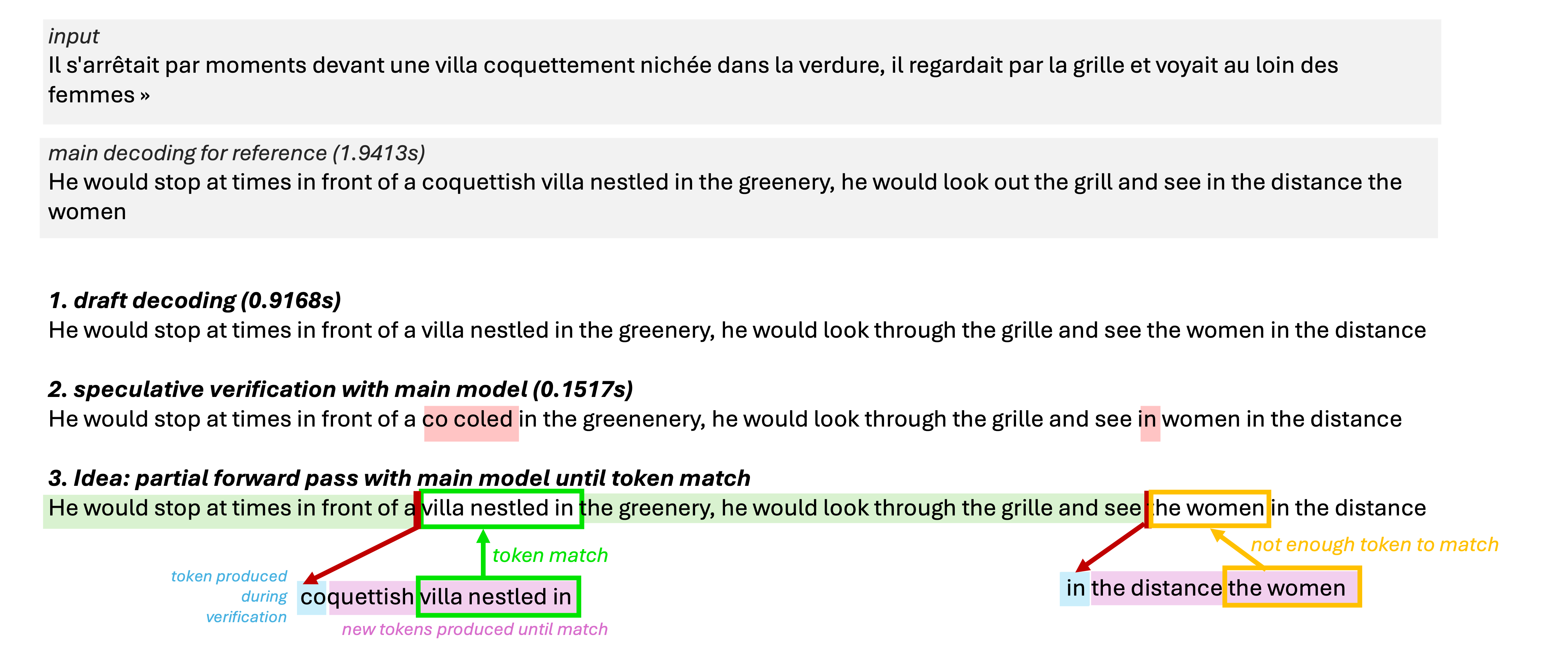

Work In Progress : Partial Speculative Decoding

Local Agreement already locks a stable prefix for the committed translation, so we cannot directly adopt Self-Speculative Biased Decoding for Faster Live Translation. Our ongoing prototype instead borrows the speculative idea only for the new tokens that need to be validated by the larger model.

The flow tested in speculative_decoding_v0.py:

- Run the 600M draft decoder once to obtain the candidate continuation and its cache.

- Replay the draft tokens through the 1.3B model, but stop the forward pass as soon as the main model reproduces a token emitted by the draft (

predicted_tokensmatches the draft output). We keep those verified tokens and only continue generation from that point. - On mismatch, resume full decoding with the 1.3B model until a match is reached again, instead of discarding the entire draft segment.

This “partial verification” trims the work the main decoder performs after each divergence, while keeping the responsiveness of the draft hypothesis. Early timing experiments from speculative_decoding_v0.py show the verification pass (~0.15 s in the example) is significantly cheaper than recomputing a full decoding step every time.

Input vs Output length:

Succesfully maintain output length, even if stable prefix tends to take time to grow.

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file nllw-0.1.5.tar.gz.

File metadata

- Download URL: nllw-0.1.5.tar.gz

- Upload date:

- Size: 1.5 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.13.9

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

67f9c13fff6b43c2e05b2e762e7001471329dbdb34634b56a2cab639807b1b9a

|

|

| MD5 |

5953fd3660faff918fdf9ce41b067531

|

|

| BLAKE2b-256 |

4784cd9e2c8ed1ac942911f8ab9378fd875bd85a62af81025cb56b9b4435314b

|

File details

Details for the file nllw-0.1.5-py3-none-any.whl.

File metadata

- Download URL: nllw-0.1.5-py3-none-any.whl

- Upload date:

- Size: 15.1 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.13.9

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

6761c8767b27e12cf4c2506f9e5d5eafb78d08d281fe26d287c8413c87c42119

|

|

| MD5 |

3504c33108e4fbb26e5a8f9a0c7c1339

|

|

| BLAKE2b-256 |

7a80ee07aafbf180aa6f332bcfa91f4e8b7ee55386ea63d5a80142e3d4fde8a5

|