A library for developing earth system foundation models


The OlmoEarth models are a flexible, multi-modal, spatio-temporal family of foundation models for Earth observation.

The OlmoEarth models are part of the OlmoEarth Platform, an end-to-end solution for scalable planetary intelligence that provides everything needed to go from raw data through R&D to fine-tuning and production deployment.

Installation

We recommend Python 3.12 and the uv package manager. To install dependencies with uv, run:

```bash
git clone git@github.com:allenai/olmoearth_pretrain.git
cd olmoearth_pretrain
uv sync --locked --all-groups --python 3.12

# only necessary for development
uv tool install pre-commit --with pre-commit-uv --force-reinstall
```

uv installs everything into a virtual environment (.venv). To keep using plain python commands, activate it with source .venv/bin/activate; otherwise, replace python with uv run python.

Inference-Only Installation

For inference and model loading without training dependencies:

```bash
uv sync --locked
```

OlmoEarth is built using OLMo-core. OLMo-core's published Docker images contain all core and optional dependencies.

Model Summary

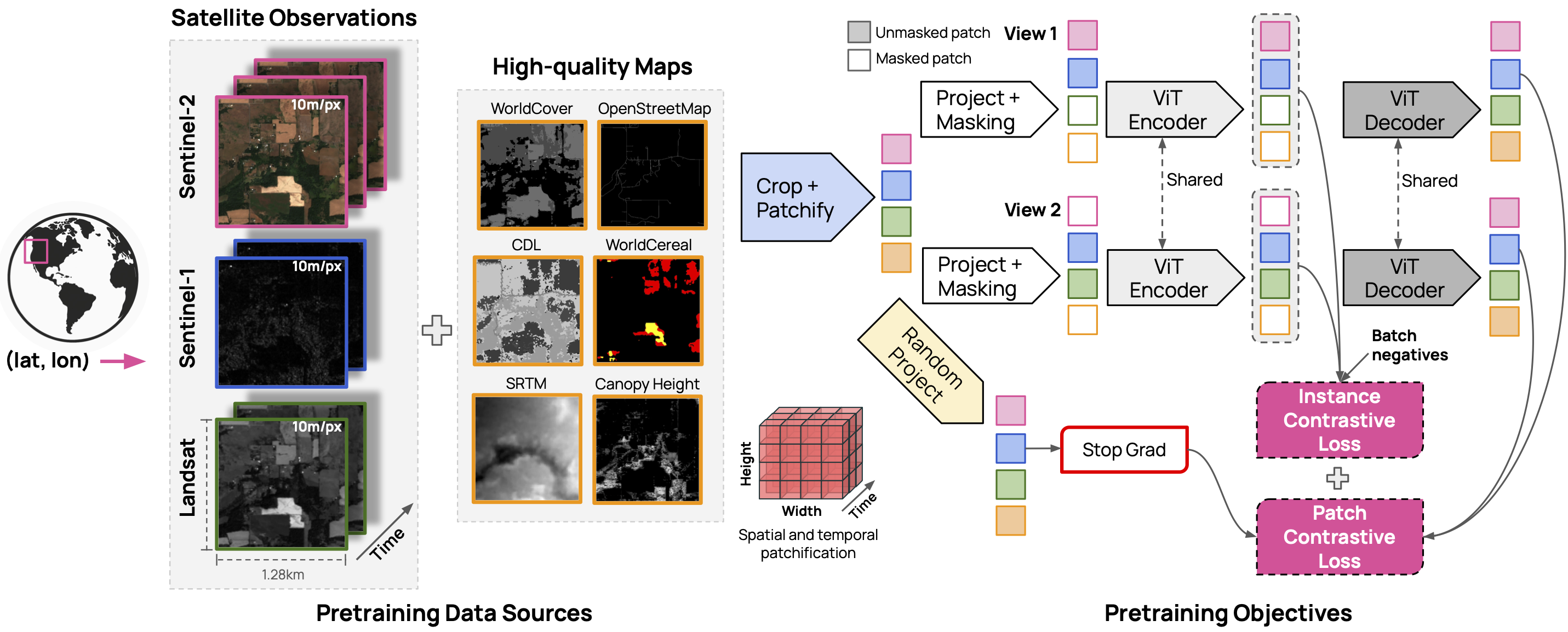

The OlmoEarth models are trained on three satellite modalities (Sentinel-2, Sentinel-1, and Landsat) and six derived maps (OpenStreetMap, WorldCover, USDA Cropland Data Layer, SRTM DEM, WRI Canopy Height Map, and WorldCereal).

| Model Size | Weights | Encoder Params | Decoder Params |
|---|---|---|---|
| Nano | link | 1.4M | 800K |
| Tiny | link | 6.2M | 1.9M |
| Base | link | 89M | 30M |
| Large | link | 308M | 53M |
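As a rough sizing aid, the sketch below adds the encoder and decoder parameter counts from the Model Size table and estimates the raw weight memory for each size. The 4-bytes-per-parameter (fp32) figure is an assumption for illustration only; actual checkpoint files and runtime memory will differ. The function name param_summary is hypothetical.

```bash
#!/usr/bin/env bash
# Sums encoder + decoder parameters for each model size in the Model Size
# table and estimates weight memory.
# ASSUMPTION: 4 bytes per parameter (fp32); real checkpoints may differ.
set -euo pipefail

sizes=(Nano Tiny Base Large)
encoder_k=(1400 6200 89000 308000)  # encoder params, in thousands
decoder_k=(800 1900 30000 53000)    # decoder params, in thousands

# Hypothetical helper: prints one summary line per model size.
param_summary() {
  local i total_k fp32_mb
  for i in "${!sizes[@]}"; do
    total_k=$(( encoder_k[i] + decoder_k[i] ))
    # thousands of params * 4 bytes = kB; divide by 1000 (rounded) for MB
    fp32_mb=$(( (total_k * 4 + 500) / 1000 ))
    printf '%-5s total=%dK params, ~%d MB at fp32\n' "${sizes[i]}" "$total_k" "$fp32_mb"
  done
}

param_summary
```

For example, the last line printed is Large total=361000K params, ~1444 MB at fp32. Integer arithmetic on thousands of parameters keeps the script portable to older bash versions.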

Using OlmoEarth

InferenceQuickstart shows how to initialize the OlmoEarth model and apply it to a satellite image.

We also have several more in-depth tutorials for computing OlmoEarth embeddings and fine-tuning OlmoEarth on downstream tasks:

- Fine-tuning OlmoEarth for Segmentation
- Computing Embeddings using OlmoEarth
- Fine-tuning OlmoEarth in rslearn

Additionally, olmoearth_projects has several examples of active OlmoEarth deployments.

Data Summary

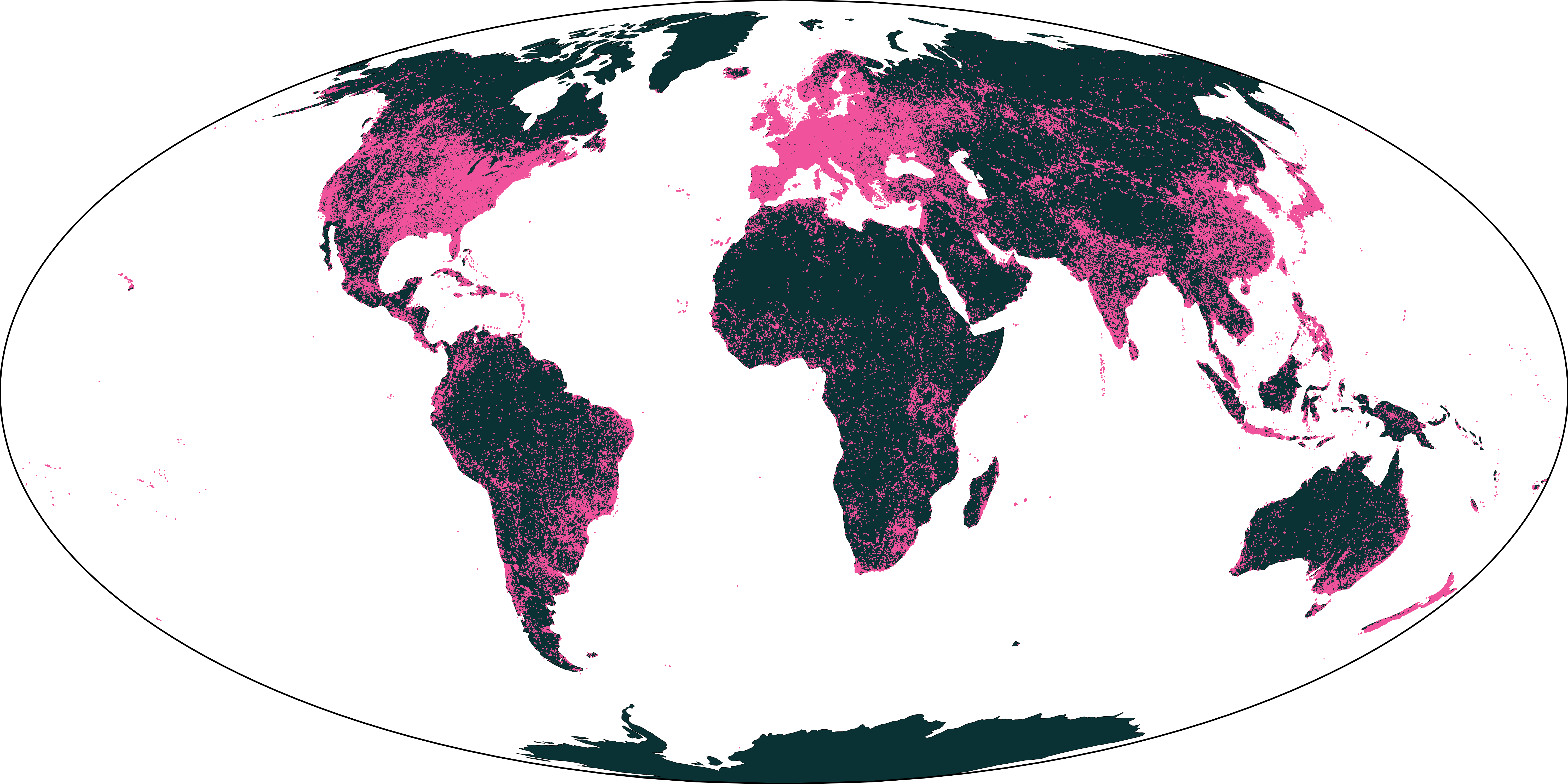

Our pretraining dataset contains 285,288 samples drawn from around the world, each covering a 2.56 km × 2.56 km region. Many samples contain only a subset of the timesteps and modalities.
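To put these numbers in perspective, the following back-of-the-envelope sketch derives a tile's pixel dimensions and the maximum ground area the dataset can cover. Two assumptions are not stated in this README: a 10 m/pixel grid (the finest Sentinel-2 band resolution) and non-overlapping samples, so the area is an upper bound. The function name dataset_summary is hypothetical.

```bash
#!/usr/bin/env bash
# Back-of-the-envelope figures for the pretraining dataset.
# ASSUMPTIONS: 10 m/pixel grid; samples do not overlap (area is an upper bound).
set -euo pipefail

# Hypothetical helper: prints tile size in pixels and total area in km^2.
dataset_summary() {
  local samples=285288
  local side_m=2560   # 2.56 km tile side, in metres
  local res_m=10      # assumed ground sampling distance, metres per pixel
  local px=$(( side_m / res_m ))
  local tile_m2=$(( side_m * side_m ))
  # 64-bit integer arithmetic is ample here (~1.9e12 m^2)
  local total_km2=$(( samples * tile_m2 / 1000000 ))
  printf 'tile: %dx%d px at %d m/px\n' "$px" "$px" "$res_m"
  printf 'max area covered: %d km^2\n' "$total_km2"
}

dataset_summary
```

Under these assumptions each sample is a 256 × 256 pixel tile, and the full dataset spans at most about 1.87 million km².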

The distribution of the samples is shown in the figure above.

The dataset can be downloaded here.

Detailed instructions on how to make your own pretraining dataset are available in the dataset README.

Training scripts

Detailed instructions on how to pretrain your own OlmoEarth model are available in Pretraining.md.

Evaluations

Detailed instructions for replicating our evaluations are available here.

Running Tests

Tests can be run with different dependency configurations using uv run:

```bash
# Full test suite (all dependency groups except flash-attn; includes olmo-core)
uv run --all-groups --no-group flash-attn pytest tests/

# Model loading tests with full dependencies (with olmo-core; flash-attn excluded)
uv run --all-groups --no-group flash-attn pytest tests_minimal_deps/

# Model loading tests with minimal dependencies only (no olmo-core)
uv run --group dev pytest tests_minimal_deps/
```

The tests_minimal_deps/ directory contains tests that verify model loading works both with and without olmo-core installed. CI runs these tests twice, once in each configuration, to ensure compatibility.
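To reproduce both minimal-dependency runs locally in one step, a small wrapper around the two uv commands for tests_minimal_deps/ could look like the sketch below. The helper names run and run_minimal_dep_tests and the DRY_RUN switch are hypothetical conveniences, not part of the repository or its CI configuration.

```bash
#!/usr/bin/env bash
# Hypothetical local helper: runs tests_minimal_deps/ under both dependency
# configurations (with and without olmo-core).
# Set DRY_RUN=1 to print the commands instead of executing them.
set -euo pipefail

# Executes its arguments as a command, or just prints them when DRY_RUN=1.
run() {
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

run_minimal_dep_tests() {
  # 1) Full dependencies, including olmo-core (flash-attn group excluded)
  run uv run --all-groups --no-group flash-attn pytest tests_minimal_deps/
  # 2) Minimal dependencies: dev group only, no olmo-core
  run uv run --group dev pytest tests_minimal_deps/
}
```

Source the file from the repository root, then call run_minimal_dep_tests, or DRY_RUN=1 run_minimal_dep_tests to preview the commands. Because of set -e, the second configuration is skipped if the first one fails.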

License

This code is licensed under the OlmoEarth Artifact License.
