Python SDK + CLI for openinterp.org — Atlas search, SAE Traces, FabricationGuard hallucination detection, InterpScore. The operational layer for mechanistic interpretability.


openinterp

Python SDK + CLI for openinterp.org

Search the feature Atlas, generate Traces from your own SAE, rank against the public InterpScore leaderboard.

Install

```bash
pip install openinterp            # lite: Atlas + CLI (no torch, ~2 MB total)
pip install "openinterp[full]"    # + torch/transformers/safetensors for trace generation
```

Requires Python ≥ 3.10.
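To see which tier you have, `openinterp info` reports optional-dependency status. A rough standalone equivalent is sketched below. It is not the CLI's code: it only checks whether the three packages the `[full]` extra adds are importable, and it ignores versions. `full_extra_status` is a hypothetical name.

```python
from importlib.util import find_spec

# Packages the [full] extra adds for trace generation (per the install notes above).
FULL_EXTRA_PACKAGES = ("torch", "transformers", "safetensors")


def full_extra_status() -> dict[str, bool]:
    """Map each [full]-extra package to whether it can be imported (without importing it)."""
    return {name: find_spec(name) is not None for name in FULL_EXTRA_PACKAGES}


if __name__ == "__main__":
    status = full_extra_status()
    for name, present in status.items():
        print(f"{name:<13} {'ok' if present else 'missing'}")
    if not all(status.values()):
        print('Trace generation needs: pip install "openinterp[full]"')
```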

Part of a 5-repo ecosystem

| Repo | What's in it |
|---|---|
| .github | Org profile + shared CoC + SECURITY |
| web | Next.js site behind openinterp.org |
| notebooks | 23 training + interpretability notebooks |
| cli (you are here) | Python SDK (`pip install openinterp`) |
| mechreward | SAE features as dense RL reward |

🚀 Quick start

Search the Atlas (offline, zero GPU)

```text
$ openinterp atlas "overconfidence"
Atlas results: 'overconfidence'
┏━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━┳━━━━━━━┳━━━━━━━━━━━━
┃ ID      ┃ Name                    ┃ Model             ┃ AUROC ┃ Description
┡━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━╇━━━━━━━╇━━━━━━━━━━━━
│ f2503   │ overconfidence_pattern  │ Qwen/Qwen3.6-27B  │ 0.54  │ Definitive…
│ f1847   │ urgency_assessment      │ Qwen/Qwen3.6-27B  │ 0.68  │ Time-critic…
└─────────┴─────────────────────────┴───────────────────┴───────┴────────────
```

```python
>>> from openinterp import search_features
>>> features = search_features("overconfidence", model="Qwen/Qwen3.6-27B")
>>> features[0].id
'f2503'
```
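The package layout describes `atlas.py` as "HF API + curated fallback". The sketch below shows that fallback pattern under stated assumptions. It assumes a remote lookup that raises an `OSError` subclass (for example `ConnectionError`) when offline. `DemoFeature`, `CURATED`, and `search_with_fallback` are hypothetical names, not the SDK's `AtlasFeature` or `search_features`. Its naive name match is also far cruder than the real search, which returns f1847 for this query as well.

```python
from __future__ import annotations

from collections.abc import Callable
from dataclasses import dataclass


@dataclass(frozen=True)
class DemoFeature:
    """Hypothetical stand-in for an Atlas feature record."""
    id: str
    name: str
    model: str
    auroc: float


# Curated entries copied from the example output above (truncated descriptions omitted).
CURATED = [
    DemoFeature("f2503", "overconfidence_pattern", "Qwen/Qwen3.6-27B", 0.54),
    DemoFeature("f1847", "urgency_assessment", "Qwen/Qwen3.6-27B", 0.68),
]


def search_with_fallback(
    query: str,
    remote: Callable[[str, str | None], list[DemoFeature]],
    model: str | None = None,
) -> list[DemoFeature]:
    """Try the remote search first; on a network error, filter the curated list by name."""
    try:
        return remote(query, model)
    except OSError:  # e.g. ConnectionError or TimeoutError, both OSError subclasses
        q = query.lower()
        return [
            f for f in CURATED
            if q in f.name.lower() and (model is None or f.model == model)
        ]
```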

Generate a Trace from your own SAE

```bash
pip install "openinterp[full]"

openinterp trace \
  --model google/gemma-2-2b \
  --sae-repo YOUR_HF_USER/gemma2-2b-sae-first \
  --prompt "The capital of France is" \
  --layer 12 \
  --d-model 2304 --d-sae 16384 --k 64 \
  --out my_trace.json
```

This:

- Loads the base model in bf16 with SDPA (no flash-attn)
- Loads your SAE from HuggingFace (`sae_lens` `safetensors` format)
- Generates tokens and captures residuals at layer 12
- Applies the SAE and picks the top-10 active features
- Writes a `Trace` JSON matching openinterp.org/observatory/trace byte-for-byte
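The encode-and-rank steps can be pictured with a toy TopK SAE. This sketch rests on assumptions. It uses a common TopK encoder: subtract the decoder bias, project, keep the k largest pre-activations, then apply ReLU, in the spirit of the Gao et al. 2024 recipe credited at the end of this page. It also ranks features by their peak activation across tokens. Two details are guesses: whether openinterp's checkpoints carry a `b_enc`, and how it aggregates over tokens to pick the top 10. `topk_encode` and `top_features` are hypothetical names. Plain lists keep the sketch dependency-free, whereas the real path runs on torch tensors.

```python
Vector = list[float]


def topk_encode(x: Vector, w_enc: list[Vector], b_enc: Vector, b_dec: Vector, k: int) -> Vector:
    """Encode one residual vector (length d_model) into a k-sparse code (length d_sae).

    w_enc is stored as d_model rows, each of length d_sae.
    """
    centered = [xi - bi for xi, bi in zip(x, b_dec)]
    d_sae = len(b_enc)
    pre = [
        b_enc[j] + sum(c * row[j] for c, row in zip(centered, w_enc))
        for j in range(d_sae)
    ]
    keep = sorted(range(d_sae), key=lambda j: pre[j], reverse=True)[:k]
    code = [0.0] * d_sae
    for j in keep:
        code[j] = max(pre[j], 0.0)  # ReLU on the surviving top-k entries
    return code


def top_features(codes: list[Vector], n: int = 10) -> list[tuple[int, float]]:
    """Rank features by peak activation over tokens; return up to n (index, peak) pairs."""
    d_sae = len(codes[0])
    peak = [max(code[j] for code in codes) for j in range(d_sae)]
    active = [j for j in range(d_sae) if peak[j] > 0.0]
    active.sort(key=lambda j: peak[j], reverse=True)
    return [(j, peak[j]) for j in active[:n]]
```

With `--d-sae 16384 --k 64` as in the command above, each token's code would have at most 64 non-zero entries out of 16384.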

Python API

```python
from openinterp import generate_trace

trace = generate_trace(
    model_id="google/gemma-2-2b",
    sae_repo="YOUR_HF_USER/gemma2-2b-sae-first",
    prompt="The capital of France is",
    layer=12,
    d_model=2304,
    d_sae=16384,
    k=64,
)
print(trace.model_dump_json(indent=2))  # Trace Theater schema
```

With feature labels from notebook 04

```bash
# After running 04_discover_features.ipynb (emits feature_catalog.json):
openinterp trace ... --catalog feature_catalog.json
```

Trace features inherit names from your catalog.
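Conceptually, naming is a lookup from SAE feature index to catalog entry. The sketch below assumes a hypothetical catalog layout: a JSON object keyed by feature index, where each entry has a `name` field. The real `feature_catalog.json` emitted by notebook 04 may be structured differently. Features missing from the catalog get a placeholder name here. All function names are hypothetical.

```python
from __future__ import annotations

import json
from pathlib import Path


def catalog_names(raw: dict) -> dict[int, str]:
    """Turn an assumed {"123": {"name": "..."}, ...} mapping into {123: "..."}."""
    return {int(idx): entry["name"] for idx, entry in raw.items()}


def load_catalog(path: str | Path) -> dict[int, str]:
    """Read a catalog file in the assumed layout."""
    return catalog_names(json.loads(Path(path).read_text(encoding="utf-8")))


def label_features(feature_ids: list[int], names: dict[int, str]) -> list[str]:
    """Attach catalog names to feature indices; unknown indices get a placeholder."""
    return [names.get(i, f"feature_{i}") for i in feature_ids]
```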


🛡️ FabricationGuard (v0.2.0+)

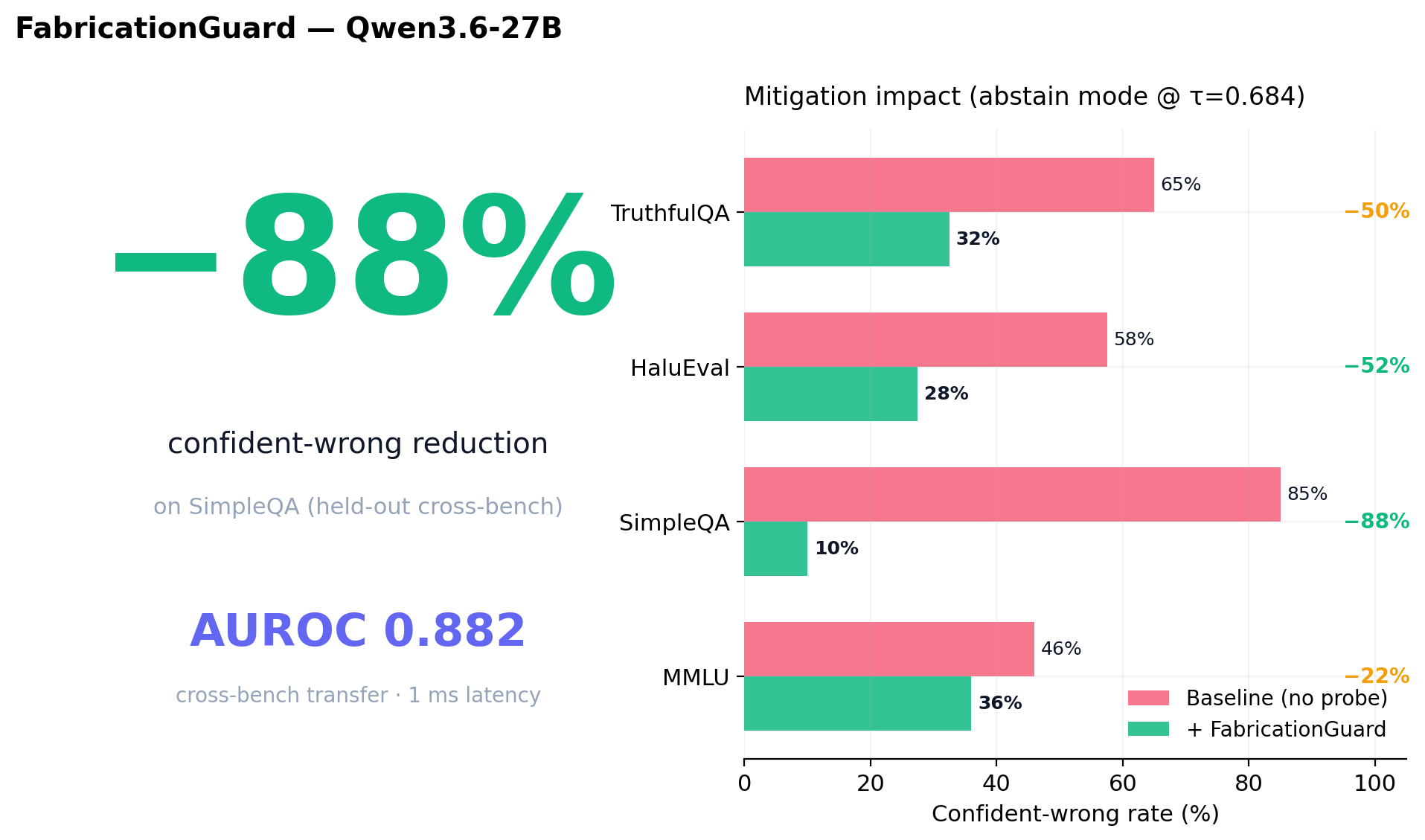

Production hallucination probe on Qwen3.6-27B: AUROC 0.88 cross-task on SimpleQA, an 88% reduction in confident-wrong answers in mitigation mode, and ~1 ms scoring latency.

```python
from openinterp import FabricationGuard

guard = FabricationGuard.from_pretrained("Qwen/Qwen3.6-27B")
output = guard.generate("Who won the 2003 Nobel Prize in Aerodynamics?", mode="abstain")
# → "I don't have reliable information to answer this confidently."
```

Methodology lineage: extends Anthropic's persona-vectors approach (Aug 2025, tested on 7-8B models) to Qwen3.6-27B (3-4× larger), adding formal cross-task AUROC, bootstrap CIs, and mitigation-rate evaluation. It is an Apache-2.0, production-grade implementation, not a proprietary platform. Probe artifact: caiovicentino1/FabricationGuard-linearprobe-qwen36-27b. Live demo: openinterp.org/products/fabricationguard.
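The published artifact is a linear probe, which fits the ~1 ms latency: scoring reduces to one dot product over a hidden-state vector. The sketch below shows how probe-gated abstention could work. It assumes a logistic probe (sigmoid of w·h + b) and a fixed threshold. The weights, bias, threshold, and function names are hypothetical, not values or APIs from FabricationGuard. Only the abstention message is taken from the example above.

```python
import math

ABSTAIN_MESSAGE = "I don't have reliable information to answer this confidently."


def probe_probability(h: list[float], w: list[float], b: float) -> float:
    """Logistic linear probe: sigmoid(w . h + b), computed without overflow."""
    logit = sum(hi * wi for hi, wi in zip(h, w)) + b
    if logit >= 0:
        return 1.0 / (1.0 + math.exp(-logit))
    z = math.exp(logit)
    return z / (1.0 + z)


def gate_answer(answer: str, h: list[float], w: list[float], b: float, threshold: float = 0.5) -> str:
    """Return the answer, or an abstention if the probe flags likely fabrication."""
    if probe_probability(h, w, b) >= threshold:
        return ABSTAIN_MESSAGE
    return answer
```

Where the threshold sits trades abstentions on correct answers against confident-wrong answers that slip through.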

🧠 ReasonGuard v0.1 (in registry)

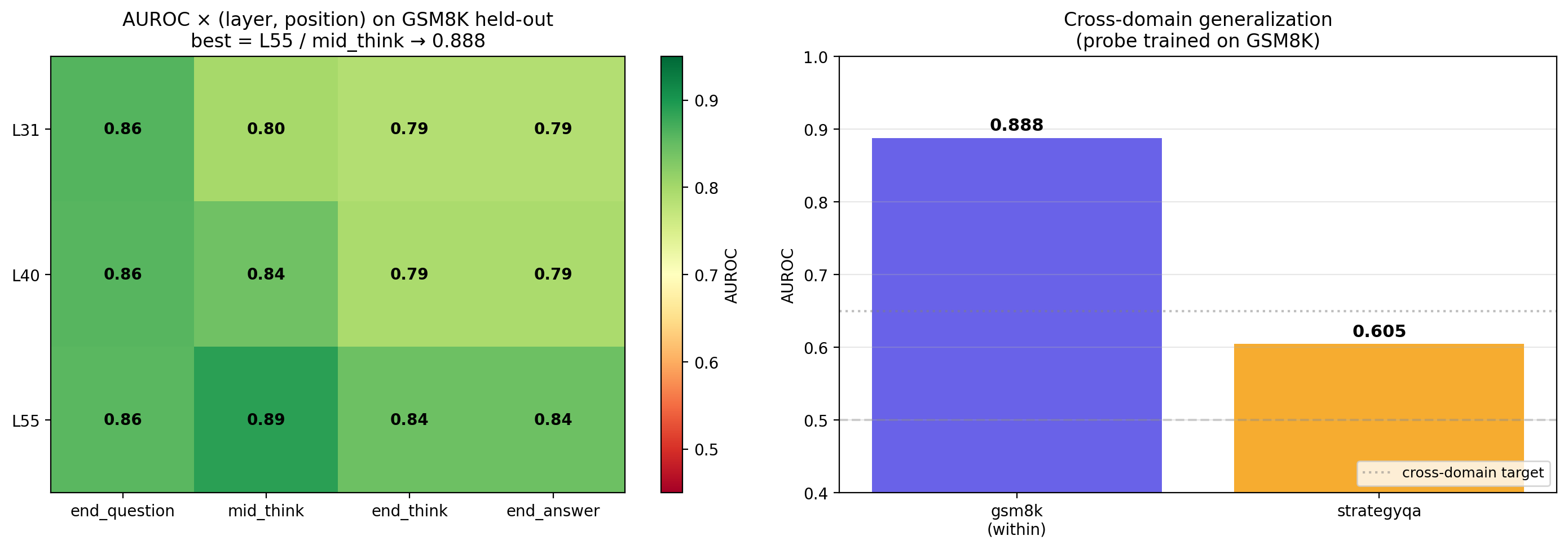

Reasoning-faithfulness probe at L55 / `mid_think` on Qwen3.6-27B in thinking mode. It detects wrong-answer trajectories during the `<think>` block. Honest, narrow scope: AUROC 0.888 within math reasoning (GSM8K) but 0.605 cross-domain on commonsense (StrategyQA), so it is domain-bound, not generalized.

Layer × position interaction (novel): shallow layers (L31) favor the `end_question` position; deep layers (L55) favor `mid_think`. The position that carries the faithfulness signal migrates with depth.
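To make the layer × position idea concrete, note that a probe reads one hidden-state vector at a chosen layer and token position. The sketch below picks the two positions using one plausible reading of their names: `end_question` as the last token before the `<think>` block, and `mid_think` as the middle token inside it. Those definitions, the string tokens, and the `[layer][position]` indexing are assumptions, not ReasonGuard's documented extraction code. In a real chat template, the token right before `<think>` may be a template token. All function names are hypothetical.

```python
def end_question_index(tokens: list[str], open_tag: str = "<think>") -> int:
    """Assumed end_question: the last token before the reasoning block opens."""
    start = tokens.index(open_tag)
    if start == 0:
        raise ValueError("no tokens before the think block")
    return start - 1


def mid_think_index(tokens: list[str], open_tag: str = "<think>", close_tag: str = "</think>") -> int:
    """Assumed mid_think: the middle token strictly inside <think> ... </think>."""
    start = tokens.index(open_tag)
    end = tokens.index(close_tag, start + 1)
    if end - start < 2:
        raise ValueError("empty think block")
    return (start + 1 + end - 1) // 2


def probe_input(hidden: list[list[list[float]]], layer: int, position: int) -> list[float]:
    """Select the vector a probe would score: hidden[layer][position]."""
    return hidden[layer][position]
```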

```python
from openinterp import probebench

probe = probebench.load("openinterp/reasonguard-qwen36-27b-l55-mid_think")
score = probe.score(activations)  # P(wrong-answer trajectory)
```

Both numbers (within-domain and cross-domain) are registered honestly, per ProbeBench's anti-Goodhart norms. Probe artifact: caiovicentino1/ReasoningGuard-linearprobe-qwen36-27b. Live on openinterp.org/probebench.

🧬 ProbeBench (v0.2.0+)

The first categorical leaderboard for activation probes: 8 categories, 7-axis ProbeScore, anti-Goodhart by construction.

ProbeBench is the public registry and leaderboard of small classifiers (linear probes, SAE-feature combinations, attention-circuit probes) that turn an LLM's internal activations into a calibrated risk score for hallucination, deception, eval-awareness, reward-hacking, and more. Every probe ships with a SHA-256-pinned artifact, a reproducer notebook, calibrated test-set metrics, and an OSI-approved license. The probebench SDK is the same surface we use to ship FabricationGuard v2 (AUROC 0.88 cross-task on SimpleQA), and it is open to community submissions.
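`openinterp probebench load` downloads an artifact and verifies its SHA-256. The sketch below shows that kind of check using only `hashlib`. It is not the SDK's implementation. The expected digest would come from the probe's registry entry. `sha256_of` and `verify_artifact` are hypothetical names.

```python
from __future__ import annotations

import hashlib
import os


def sha256_of(path: str | os.PathLike[str], chunk_size: int = 1 << 20) -> str:
    """Hex SHA-256 of a file, read in chunks so large artifacts never load fully into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: str | os.PathLike[str], expected_sha256: str) -> None:
    """Raise ValueError if the file on disk does not match the pinned digest."""
    actual = sha256_of(path)
    if actual != expected_sha256.strip().lower():
        raise ValueError(f"SHA-256 mismatch for {path}: expected {expected_sha256}, got {actual}")
```

The same check applies to the SHA256 digests listed for the PyPI files at the end of this page.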

```python
from openinterp import probebench

probes = probebench.list_probes(category="hallucination")
probe = probebench.load("openinterp/fabricationguard-qwen36-27b-l31-v2")
scores = probe.score(activations)  # numpy array of P(positive_class)
print(probe.metadata.tagline, probe.probescore())
```

```bash
openinterp probebench list                    # show all registered probes
openinterp probebench load <probe-id>         # download + verify SHA-256
openinterp probebench validate ./my-probe/    # check artifact spec
openinterp probebench reproduce <probe-id>    # download reproducer notebook
```

Browse the leaderboard at openinterp.org/probebench · contribute via probebench-registry.

📦 What's in v0.2.0

| Command | Status | What it does |
|---|---|---|
| `openinterp atlas <query>` | ✅ live | Feature search with offline fallback to curated demo features |
| `openinterp trace ...` | ✅ live (needs `[full]`) | Real SAE trace generation, `sae_lens` format, any HF model |
| `openinterp guard ...` | ✅ live | FabricationGuard scoring + abstain mode on Qwen3.6-27B |
| `openinterp probebench {list,load,score,validate,reproduce,submit}` | ✅ live | ProbeBench v0.0.1 SDK |
| `openinterp info` | ✅ live | Version + optional-dep status |

Planned v0.3.0

- `openinterp upload-trace <trace.json>` → shareable openinterp.org URL
- `openinterp score --sae-repo X` → compute InterpScore (wraps notebook 18)
- `openinterp steer --sae-repo X --feature Y --alpha Z` → intervention (wraps notebook 06)
- `openinterp circuit --sae-repo X --prompt Y` → attribution graph JSON (wraps notebooks 14/15)
- `openinterp publish <repo>` → HuggingFace release with model card
- ReasonGuard v0.2: multi-bench training (math + commonsense) to fix cross-domain transfer

Open an issue on the tracker if you'd take one of these.

🛠️ Development

```bash
git clone https://github.com/OpenInterpretability/cli openinterp-cli
cd openinterp-cli
python -m venv .venv && source .venv/bin/activate
pip install -e ".[dev,full]"   # dev = pytest + ruff + build; full = torch + transformers
pytest -xvs                    # 5 tests, ~1s
```

Package layout

```text
openinterp-cli/
├── pyproject.toml        # name='openinterp', hatchling build
├── openinterp/
│   ├── __init__.py       # public exports + __version__
│   ├── models.py         # pydantic types: AtlasFeature, Trace, TraceFeature
│   ├── atlas.py          # search_features(): HF API + curated fallback
│   ├── trace.py          # generate_trace(): real transformers-based impl
│   └── cli.py            # click-based CLI: atlas / trace / info
├── tests/
│   ├── test_atlas.py
│   └── test_trace.py
├── CHANGELOG.md
├── CONTRIBUTING.md
└── README.md
```

Contribution recipe — add a new command

Full rules: CONTRIBUTING.md.

- Decide which notebook it wraps (score → 18, steer → 06, circuit → 14/15, publish → generic)

- Add a function to the matching file (`openinterp/score.py`, etc.). Keep it small: the actual compute lives in the notebook.
- Expose it in `__init__.py`
- Add a `@main.command()` in `cli.py` with click decorators
- Add a smoke test in `tests/test_<name>.py`
- Update `CHANGELOG.md` under `[Unreleased]`
- PR title: `Add openinterp <command>`
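The CLI step of this recipe can be sketched as follows. In this sketch, the `main` group stands in for the one in `openinterp/cli.py`. The `score` command, its `--sae-repo` option, and its output are placeholders for the planned command, not its real behavior. The accompanying check uses click's `CliRunner`, which also works well for the smoke test in `tests/test_<name>.py`.

```python
import click


@click.group()
def main() -> None:
    """Stand-in for the openinterp CLI group defined in cli.py."""


@main.command()
@click.option("--sae-repo", required=True, help="HuggingFace repo of the SAE to score.")
def score(sae_repo: str) -> None:
    """Compute InterpScore for an SAE (placeholder body)."""
    # A real command would call a small function from openinterp/score.py here.
    click.echo(f"InterpScore for {sae_repo}: not implemented in this sketch")
```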

Hard rules:

- Python ≥ 3.10 syntax (PEP 604 unions OK)

- `dtype=torch.bfloat16`, never `torch_dtype=` (deprecated in transformers 5.x)
- SDPA only, never flash-attn
- New heavy deps (`torch` tier) → add to the `[full]` extra, not base
- Every new public function has type hints + docstring

🚢 Release process (maintainer)

```bash
# 1. Bump version in BOTH:
#    pyproject.toml          ([project] version = "X.Y.Z")
#    openinterp/__init__.py  (__version__ = "X.Y.Z")
# 2. Update CHANGELOG.md: move [Unreleased] → [X.Y.Z] - YYYY-MM-DD
source .venv/bin/activate
rm -rf dist/
python -m build
python -m twine check dist/*
python -m twine upload dist/*   # needs PyPI token in ~/.pypirc
git tag vX.Y.Z
git push --tags
```
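Step 1 is easy to get half-right because the version lives in two files. A small pre-release check can catch a mismatch before `python -m build`. The helper below is hypothetical, not a script shipped in the repo. Its line-based parsing also assumes a plain `version = "X.Y.Z"` line under `[project]` rather than doing a full TOML parse.

```python
import re
from pathlib import Path

VERSION_LINE = re.compile(r'version\s*=\s*"([^"]+)"')
INIT_VERSION = re.compile(r'^__version__\s*=\s*["\']([^"\']+)["\']', re.MULTILINE)


def project_version(pyproject_text: str) -> str:
    """Return the version declared in the [project] table of pyproject.toml."""
    in_project = False
    for line in pyproject_text.splitlines():
        stripped = line.strip()
        if stripped.startswith("["):
            in_project = stripped == "[project]"
            continue
        match = VERSION_LINE.fullmatch(stripped)
        if in_project and match:
            return match.group(1)
    raise ValueError("no version under [project]")


def package_version(init_text: str) -> str:
    """Return __version__ from openinterp/__init__.py source text."""
    match = INIT_VERSION.search(init_text)
    if match is None:
        raise ValueError("no __version__ in __init__.py")
    return match.group(1)


def check_versions(root: str = ".") -> str:
    """Exit with an error if the two declared versions disagree; return the version otherwise."""
    base = Path(root)
    a = project_version((base / "pyproject.toml").read_text(encoding="utf-8"))
    b = package_version((base / "openinterp" / "__init__.py").read_text(encoding="utf-8"))
    if a != b:
        raise SystemExit(f"version mismatch: pyproject.toml={a}, __init__.py={b}")
    return a
```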

CI

Every PR runs:

- `pytest -xvs` across Python 3.10, 3.11, 3.12 (see `.github/workflows/ci.yml`)
- `ruff check .` (warn-only for now)
- `python -m build` + `twine check`

Green required to merge.

Community

- 💬 Discussions — API proposals, "which repo should this live in"

- 🟢 Good-first-issues

- 📦 PyPI release history

- ✉️ hi@openinterp.org

Standing on the shoulders of

- Neuronpedia · the SAE encyclopedia

- Gemma Scope · reference SAE suite

- Gao et al. 2024 · TopK + AuxK recipe

- SAELens · our safetensors format

License

Apache-2.0. Built by Caio Vicentino + OpenInterpretability. 2026.

openinterp.org · github.com/OpenInterpretability · hi@openinterp.org


Download files


File details

Details for the file openinterp-0.2.1.tar.gz (source distribution).

File metadata

- Download URL: openinterp-0.2.1.tar.gz
- Size: 39.5 kB
- Tags: Source
- Uploaded using Trusted Publishing? No
- Uploaded via: twine/6.2.0 CPython/3.9.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 709e60b4f0a11d30632266be74c72ee86839ef5a83a3c18d31de6b98282d264c |
| MD5 | 4cb3bb7a2ab1c4d9949cd28a830094fc |
| BLAKE2b-256 | d80247f490a68f3b7c7feb418ed59856b94ddb1815888f76078318b013eb6f52 |

File details

Details for the file openinterp-0.2.1-py3-none-any.whl (built distribution).

File metadata

- Download URL: openinterp-0.2.1-py3-none-any.whl
- Size: 40.7 kB
- Tags: Python 3
- Uploaded using Trusted Publishing? No
- Uploaded via: twine/6.2.0 CPython/3.9.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | ebcaade904225b2a502bb924a13c1d7f0bf7cad73b7cc86934ae75e2aa21e544 |
| MD5 | 2a1aedadecdfc51280ee66ec71c6b2c5 |
| BLAKE2b-256 | b32c23ede5c83069ea4bdd31ee3efb25bd9bffcc9df5f6506ff173077f6ab129 |