An agentic coding and automation assistant, supporting both local and cloud LLMs


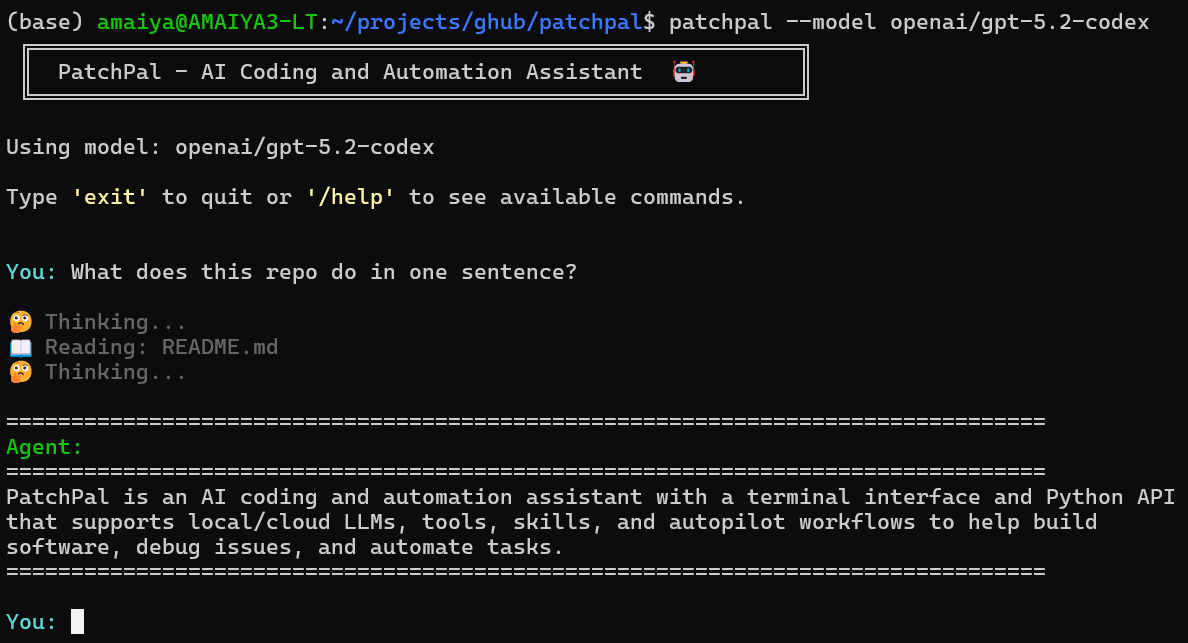

PatchPal — An Agentic Coding and Automation Assistant

Supporting both local and cloud LLMs, with autopilot mode and extensible tools.

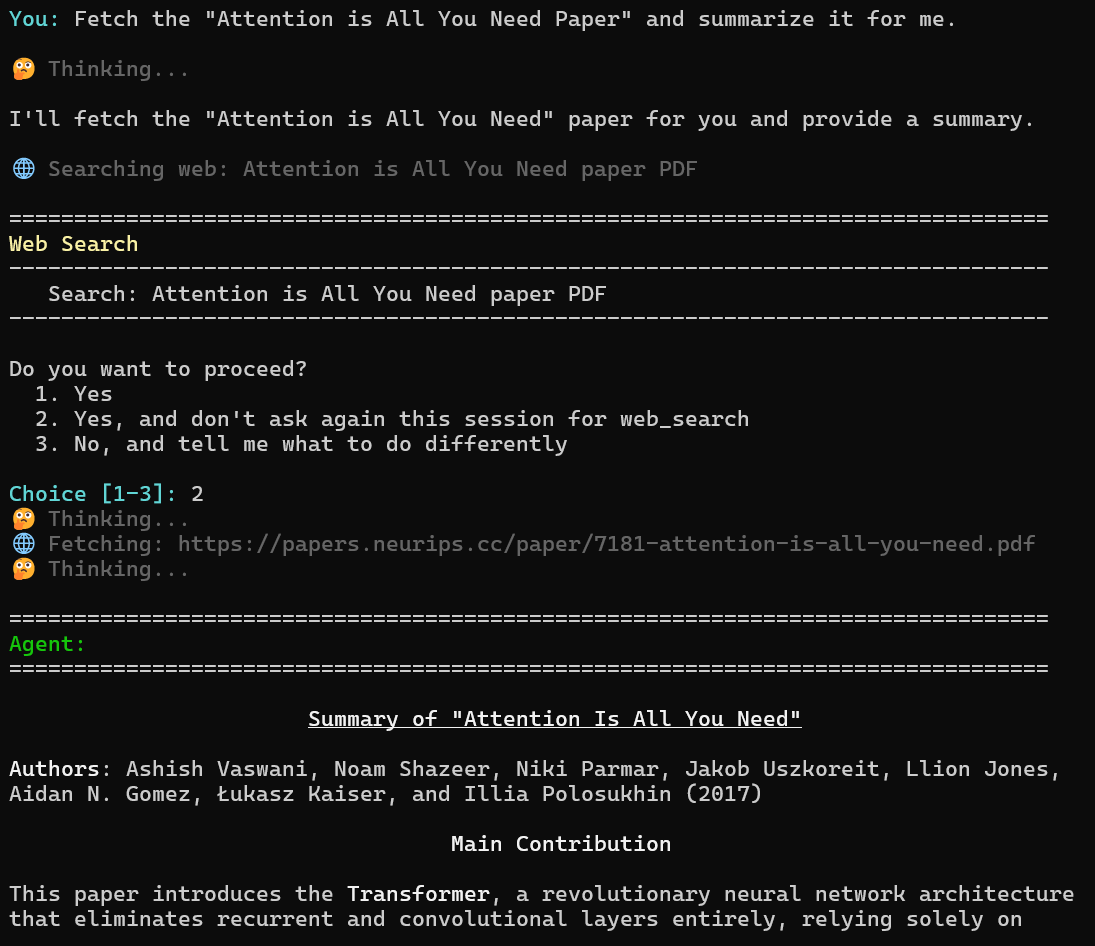

PatchPal is an AI coding agent that helps you build software, debug issues, and automate tasks. It supports agent skills, tool use, and executable Python generation, enabling interactive workflows for tasks such as data analysis, visualization, web scraping, API interactions, and research with synthesized findings.

Most agent frameworks are built in TypeScript. PatchPal is Python-native, designed for developers who want both interactive terminal use (patchpal) and programmatic API access (agent.run("task")) in the same tool, without switching ecosystems.

Key Features

- Terminal Interface for interactive development

- Python SDK for flexibility and extensibility

- Built-In and Custom Tools

- Skills System and MCP Integration

- Autopilot Mode using Ralph Wiggum loops

- Project Memory automatically loads project context from ~/.patchpal/repos/<repo-name>/MEMORY.md at startup.
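To make the Project Memory location concrete, here is a minimal sketch that computes where the memory file for a repository would live. The helper name `memory_path` is hypothetical, and the sketch assumes that `<repo-name>` is the final component of the repository directory; PatchPal itself may derive the name differently.

```python
from pathlib import Path
from typing import Optional


def memory_path(repo_dir: str, home: Optional[Path] = None) -> Path:
    """Return the expected MEMORY.md location for a repository.

    Assumption: <repo-name> is the last component of the repository
    directory. This helper is illustrative, not part of PatchPal.
    """
    home = home if home is not None else Path.home()
    repo_name = Path(repo_dir).resolve().name
    return home / ".patchpal" / "repos" / repo_name / "MEMORY.md"
```

Calling `memory_path(".")` from inside a checkout gives the path to inspect or edit by hand if you want to see what context is loaded at startup.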

PatchPal prioritizes customizability: custom tools, custom skills, a flexible Python API, and support for any tool-calling LLM.

Full documentation is here.

Quick Start

$ pip install patchpal # install

$ patchpal # start

Platform support: Linux, macOS, and Windows.

Setup

- Install: pip install patchpal

- Get an API key or a local LLM engine:

  - [Cloud] For Anthropic models (default): Sign up at https://console.anthropic.com/

  - [Cloud] For OpenAI models: Get a key from https://platform.openai.com/

  - [Local] For vLLM: Install from https://docs.vllm.ai/ (free, no API charges). Recommended for local use.

  - [Local] For Ollama: Install from https://ollama.com/ (⚠️ requires OLLAMA_CONTEXT_LENGTH=32768; see the Ollama section below)

  - For other providers: Check the LiteLLM documentation

- Set up your API key as an environment variable:

# For Anthropic (default)

export ANTHROPIC_API_KEY=your_api_key_here

# For OpenAI

export OPENAI_API_KEY=your_api_key_here

# For vLLM - API key required only if configured

export HOSTED_VLLM_API_BASE=http://localhost:8000 # depends on your vLLM setup

export HOSTED_VLLM_API_KEY=token-abc123 # optional depending on your vLLM setup

# For Ollama, no API key required

# For other providers, check LiteLLM docs

- Run PatchPal:

# Use default model (anthropic/claude-sonnet-4-5)

patchpal

# Use a specific model via command-line argument

patchpal --model openai/gpt-5.2-codex # or openai/gpt-5-mini, anthropic/claude-opus-4-5, etc.

# Use vLLM (local)

# Note: vLLM server must be started with --tool-call-parser and --enable-auto-tool-choice

export HOSTED_VLLM_API_BASE=http://localhost:8000

export HOSTED_VLLM_API_KEY=token-abc123

patchpal --model hosted_vllm/openai/gpt-oss-120b

# Use Ollama (local - requires OLLAMA_CONTEXT_LENGTH=32768)

export OLLAMA_CONTEXT_LENGTH=32768

patchpal --model ollama_chat/gpt-oss:120b

# Or set the model via environment variable

export PATCHPAL_MODEL=openai/gpt-5.2

patchpal
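The setup steps above pair each model prefix with the settings it needs. As a rough preflight check, that pairing can be sketched in Python. The function names `resolve_model` and `missing_settings` are hypothetical and not part of PatchPal, and the precedence shown (command-line argument first, then PATCHPAL_MODEL, then the default) is an assumption rather than documented behavior.

```python
from typing import Dict, List, Mapping, Optional

# Provider prefix -> settings that should be present, per the setup steps.
REQUIRED_SETTINGS: Dict[str, List[str]] = {
    "anthropic": ["ANTHROPIC_API_KEY"],
    "openai": ["OPENAI_API_KEY"],
    "hosted_vllm": ["HOSTED_VLLM_API_BASE"],  # HOSTED_VLLM_API_KEY is optional
    "ollama_chat": ["OLLAMA_CONTEXT_LENGTH"],  # documented value: 32768
}

DEFAULT_MODEL = "anthropic/claude-sonnet-4-5"


def resolve_model(cli_model: Optional[str], env: Mapping[str, str]) -> str:
    """Pick the model name. The precedence here is an assumption."""
    return cli_model or env.get("PATCHPAL_MODEL") or DEFAULT_MODEL


def missing_settings(model: str, env: Mapping[str, str]) -> List[str]:
    """List required settings that are unset or empty for this model's provider."""
    provider = model.split("/", 1)[0]
    return [name for name in REQUIRED_SETTINGS.get(provider, []) if not env.get(name)]
```

For example, `missing_settings(resolve_model(None, os.environ), os.environ)` (after `import os`) reports which variables to export before starting `patchpal`. Providers not listed in the table return an empty list, so the check stays silent for anything configured through LiteLLM directly.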

Tip for Local Models: Local models (i.e., models served by Ollama or vLLM) may work better with the environment variables PATCHPAL_MINIMAL_TOOLS=true and PATCHPAL_ENABLE_WEB=false. Together these expose only the essential tools (read_file, read_lines, write_file, edit_file, run_shell), which reduces tool confusion with smaller models.
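If you start PatchPal from a script, the two settings in the tip can be applied to a copy of the environment instead of being exported globally. The sketch below is illustrative only: `local_model_env` and `launch_patchpal` are hypothetical helper names, and it assumes the `patchpal` command is on your PATH.

```python
import os
import subprocess
from typing import Dict, Mapping, Optional


def local_model_env(base: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Return a copy of the environment with the local-model settings applied."""
    env = dict(os.environ if base is None else base)
    env["PATCHPAL_MINIMAL_TOOLS"] = "true"  # restrict to the essential tools
    env["PATCHPAL_ENABLE_WEB"] = "false"    # turn off the web tools
    return env


def launch_patchpal(model: str) -> int:
    """Start an interactive session with the adjusted environment.

    Assumes the `patchpal` executable is installed and on PATH.
    """
    completed = subprocess.run(["patchpal", "--model", model], env=local_model_env())
    return completed.returncode
```

A call such as `launch_patchpal("hosted_vllm/openai/gpt-oss-120b")` leaves the parent shell's environment untouched, which makes it easy to switch between local and cloud models in the same terminal.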

Beyond Coding: General Problem-Solving

Although originally designed for software development, PatchPal also works as a general-purpose assistant. With web search, file operations, shell commands, and custom tools and skills, it can help with research, data analysis, document processing, log-file analysis, and similar tasks.

Documentation

Full documentation is available here.

