Pathway is a data processing framework which takes care of streaming data updates for you.

Pathway Live Data Framework

Pathway is a Python ETL framework for stream processing, real-time analytics, LLM pipelines, and RAG.

Pathway comes with an easy-to-use Python API, allowing you to seamlessly integrate your favorite Python ML libraries. Pathway code is versatile and robust: you can use it in both development and production environments, handling both batch and streaming data effectively. The same code can be used for local development, CI/CD tests, running batch jobs, handling stream replays, and processing data streams.

Pathway is powered by a scalable Rust engine based on Differential Dataflow and performs incremental computation. Your Pathway code, despite being written in Python, is run by the Rust engine, which enables multithreading, multiprocessing, and distributed computations. The entire pipeline is kept in memory and can be easily deployed with Docker and Kubernetes.
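To make "incremental computation" more concrete, here is a minimal plain-Python sketch. It is a conceptual model only, not Pathway's engine or API: the class name `IncrementalSum` and the `(value, diff)` update format are assumptions chosen for illustration. The idea is that each change arrives as a delta and the result is adjusted, rather than recomputed from scratch.

```python
from dataclasses import dataclass


@dataclass
class IncrementalSum:
    """Toy model of an incrementally maintained sum (hypothetical, not Pathway's API)."""

    total: int = 0

    def apply(self, value: int, diff: int) -> int:
        # diff = +1 inserts a row, diff = -1 retracts a previously inserted row.
        self.total += value * diff
        return self.total


state = IncrementalSum()
state.apply(10, +1)  # insert 10
state.apply(5, +1)   # insert 5
state.apply(10, -1)  # retract the earlier 10
print(state.total)   # prints 5
```

Each update costs the same regardless of how many rows were seen before, which is the property that makes this style of computation attractive for streams.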

You can install Pathway with pip:

pip install -U pathway

For any questions, you will find the community and team behind the project on Discord.

Use-cases and templates

Ready to see what Pathway can do?

Try one of our easy-to-run examples!

Available in both notebook and Docker formats, these examples can be launched in just a few clicks. Pick one and start your hands-on experience with Pathway today!

Event processing and real-time analytics pipelines

With its unified engine for batch and streaming and its full Python compatibility, Pathway makes data processing as easy as possible. It's the ideal solution for a wide range of data processing pipelines, including:

- Showcase: Real-time ETL.

- Showcase: Event-driven pipelines with alerting.

- Showcase: Real-time analytics.

- Docs: Switch from batch to streaming.

AI Pipelines

Pathway provides dedicated LLM tooling to build live LLM and RAG pipelines. Wrappers for the most common LLM services and utilities are included, which makes working with LLM and RAG pipelines much easier. Check out our LLM xpack documentation.

Don't hesitate to try one of our runnable examples featuring LLM tooling. You can find such examples here.

- Template: Unstructured data to SQL on-the-fly.

- Template: Private RAG with Ollama and Mistral AI

- Template: Adaptive RAG

- Template: Multimodal RAG with gpt-4o

Features

- A wide range of connectors: Pathway comes with connectors to external data sources such as Kafka, GDrive, PostgreSQL, or SharePoint. Its Airbyte connector allows you to connect to more than 300 different data sources. If the connector you want is not available, you can build your own custom connector using Pathway's Python connector.

- Stateless and stateful transformations: Pathway supports stateful transformations such as joins, windowing, and sorting. It provides many transformations implemented directly in Rust. In addition to the provided transformations, you can use any Python function, whether you implement your own or rely on a Python library to process your data.

- Persistence: Pathway provides persistence to save the state of the computation. This allows you to restart your pipeline after an update or a crash. Your pipelines are in good hands with Pathway!

- Consistency: Pathway handles time for you, making sure all your computations are consistent. In particular, Pathway manages late and out-of-order points by updating its results whenever new (or late, in this case) data points come into the system. The free version of Pathway provides "at least once" consistency, while the enterprise version provides "exactly once" consistency.

- Scalable Rust engine: With Pathway's Rust engine, you are free from the usual limits imposed by Python. You can easily do multithreading, multiprocessing, and distributed computations.

- LLM helpers: Pathway provides an LLM extension with all the utilities to integrate LLMs with your data pipelines (LLM wrappers, parsers, embedders, splitters), including an in-memory real-time Vector Index, and integrations with LlamaIndex and LangChain. You can quickly build and deploy RAG applications with your live documents.
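The consistency item above describes results being revised when late or out-of-order data arrives. The following plain-Python sketch models that behavior for a tumbling-window count. It does not use Pathway: `WindowCounter`, the window width, and the integer timestamps are all assumptions made for illustration.

```python
from collections import defaultdict


class WindowCounter:
    """Toy tumbling-window counter that revises results when late events arrive."""

    def __init__(self, width: int) -> None:
        self.width = width
        self.counts: dict[int, int] = defaultdict(int)

    def add(self, event_time: int) -> tuple[int, int]:
        # Assign the event to its window by event time, not by arrival order.
        window_start = (event_time // self.width) * self.width
        self.counts[window_start] += 1
        # Return the (window, updated count) pair, i.e. the revised result.
        return window_start, self.counts[window_start]


counter = WindowCounter(width=10)
# The event at t=3 arrives after events from the next window.
updates = [counter.add(t) for t in (1, 12, 15, 3)]
print(updates)  # prints [(0, 1), (10, 1), (10, 2), (0, 2)]
```

The last update reopens window 0 and corrects its count from 1 to 2, which is the kind of revision the text describes.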

Getting started

Installation

Pathway requires Python 3.10 or above.

You can install the current release of Pathway using pip:

$ pip install -U pathway

⚠️ Pathway is available on macOS and Linux. Users of other systems should run Pathway on a virtual machine.

Example: computing the sum of non-negative values in real time.

import pathway as pw

# Define the schema of your data (Optional)
class InputSchema(pw.Schema):
    value: int

# Connect to your data using connectors
input_table = pw.io.csv.read(
    "./input/",
    schema=InputSchema
)

# Define your operations on the data
filtered_table = input_table.filter(input_table.value >= 0)
result_table = filtered_table.reduce(
    sum_value=pw.reducers.sum(filtered_table.value)
)

# Load your results to external systems
pw.io.jsonlines.write(result_table, "output.jsonl")

# Run the computation
pw.run()
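The example reads CSV files from `./input/`, so it needs some data to run against. Below is a small helper sketch that uses only the standard library: it writes a CSV with a single `value` column (matching `InputSchema`) and computes the sum the pipeline should report for that data. The function names and the file name `values.csv` are assumptions for illustration, not part of Pathway.

```python
import csv
from pathlib import Path


def write_sample_input(directory: Path, values: list[int], name: str = "values.csv") -> Path:
    """Write a CSV with a single `value` column, matching InputSchema."""
    directory.mkdir(parents=True, exist_ok=True)
    path = directory / name
    with path.open("w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["value"])  # header row naming the column
        writer.writerows([v] for v in values)
    return path


def expected_sum(values: list[int]) -> int:
    """Sum of the values kept by the `value >= 0` filter."""
    return sum(v for v in values if v >= 0)


if __name__ == "__main__":
    sample = [1, -2, 3, 0, -5, 7]
    print(write_sample_input(Path("./input"), sample))
    print(expected_sum(sample))  # prints 11
```

If the connector is running in streaming mode, adding further CSV files to the directory while the pipeline runs should lead to updated sums being written to `output.jsonl`.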

Run Pathway in Google Colab.

You can find more examples here.

Deployment

Locally

To use Pathway, you only need to import it:

import pathway as pw

Now, you can easily create your processing pipeline, and let Pathway handle the updates. Once your pipeline is created, you can launch the computation on streaming data with a one-line command:

pw.run()

You can then run your Pathway project (say, main.py) just like a normal Python script: $ python main.py.

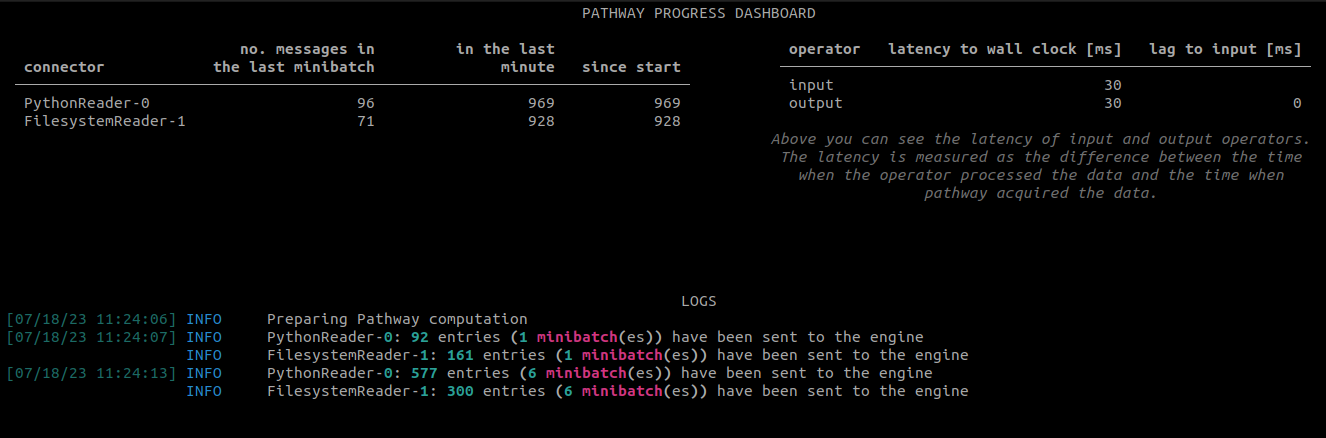

Pathway comes with a monitoring dashboard that allows you to keep track of the number of messages sent by each connector and the latency of the system. The dashboard also includes log messages.

Alternatively, you can use the Pathway command-line launcher:

$ pathway spawn python main.py

Pathway natively supports multithreading. To launch your application with 3 threads, you can do as follows:

$ pathway spawn --threads 3 python main.py
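If you launch pipelines from a Python wrapper (for example in a test harness), the command above can be assembled programmatically. This is only a sketch: the helper name `spawn_command` is hypothetical, and it uses only the `--threads` option shown above.

```python
import shlex


def spawn_command(script: str, threads: int = 1) -> list[str]:
    """Build the `pathway spawn` invocation for a given thread count."""
    if threads < 1:
        raise ValueError("threads must be at least 1")
    return ["pathway", "spawn", "--threads", str(threads), "python", script]


print(shlex.join(spawn_command("main.py", threads=3)))
# prints: pathway spawn --threads 3 python main.py
```

The resulting list can be passed to `subprocess.run` as-is, which avoids shell quoting issues with script paths.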

To jumpstart a Pathway project, you can use our cookiecutter template.

Docker

You can easily run Pathway using Docker.

Pathway image

You can base your Dockerfile on the Pathway Docker image:

FROM pathwaycom/pathway:latest

WORKDIR /app

COPY requirements.txt ./

RUN pip install --no-cache-dir -r requirements.txt

COPY . .

CMD [ "python", "./your-script.py" ]

You can then build and run the Docker image:

docker build -t my-pathway-app .

docker run -it --rm --name my-pathway-app my-pathway-app

Run a single Python script

When dealing with single-file projects, creating a full-fledged Dockerfile might seem unnecessary. In such scenarios, you can execute a Python script directly using the Pathway Docker image. For example:

docker run -it --rm --name my-pathway-app -v "$PWD":/app pathwaycom/pathway:latest python my-pathway-app.py

Python docker image

You can also use a standard Python image and install Pathway using pip with a Dockerfile:

FROM --platform=linux/x86_64 python:3.10

RUN pip install -U pathway

COPY ./pathway-script.py pathway-script.py

CMD ["python", "-u", "pathway-script.py"]

Kubernetes and cloud

Docker containers are ideally suited for deployment on the cloud with Kubernetes. If you want to scale your Pathway application, you may be interested in Pathway for Enterprise, which is tailored towards end-to-end data processing and real-time intelligent analytics. It scales using distributed computing on the cloud and supports distributed Kubernetes deployment with an external persistence setup.

You can easily deploy Pathway using services like Render: see how to deploy Pathway in a few clicks.

If you are interested, don't hesitate to contact us to learn more.

Performance

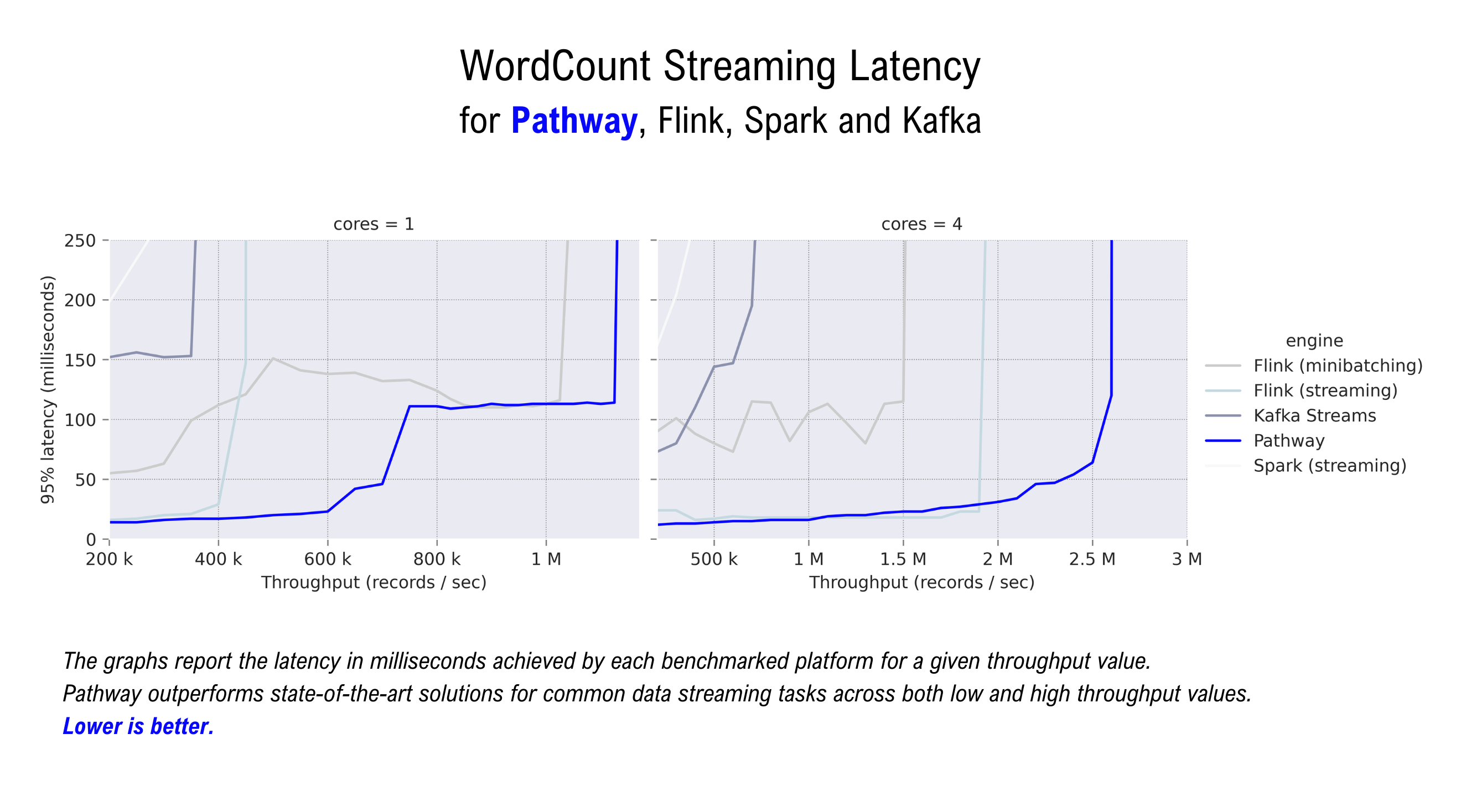

Pathway is made to outperform state-of-the-art technologies designed for streaming and batch data processing tasks, including Flink, Spark, and Kafka Streaming. It also makes it possible to implement, in streaming mode, many algorithms and UDFs that are not readily supported by other streaming frameworks (especially temporal joins, iterative graph algorithms, and machine learning routines).

If you are curious, here are some benchmarks to play with.

Documentation and Support

The entire documentation of Pathway is available at pathway.com/developers/, including the API Docs.

If you have any questions, don't hesitate to open an issue on GitHub, join us on Discord, or send us an email at contact@pathway.com.

🤝 Featured Collaborations & Integrations

We build cutting-edge data processing pipelines and co-promote solutions that push the boundaries of what's possible with Python and streaming data. Meet the people helping us shape the future of data engineering.

| Project | Description |
|---|---|
| Databento | A simpler, faster way to get market data. |
| LangChain | LangChain is the platform for agent engineering. |
| LlamaIndex | The developer-trusted framework for building context-aware AI agents. |
| MinIO | MinIO is a high-performance, S3 compatible object store, open sourced under GNU AGPLv3 license. |
| PaddleOCR | PaddleOCR is an industry-leading, production-ready OCR and document AI engine, offering end-to-end solutions from text extraction to intelligent document understanding. |
| Redpanda | Build, operate, and govern streaming and AI applications without the complexity of Kafka. |

License

Pathway is distributed under a BSL 1.1 License, which allows unlimited non-commercial use, as well as use of the Pathway package for most commercial purposes, free of charge. Code in this repository automatically converts to Open Source (Apache 2.0 License) after 4 years. Some public repos that are complementary to this one (examples, libraries, connectors, etc.) are licensed as Open Source under the MIT license.

Contribution guidelines

If you develop a library or connector that you would like to integrate with this repo, we suggest releasing it first as a separate repo under an MIT or Apache 2.0 license.

For all concerns regarding core Pathway functionalities, Issues are encouraged. For further information, don't hesitate to engage with Pathway's Discord community.

Project details

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distributions

Built Distributions

File details

Details for the file pathway-0.30.1-cp310-abi3-manylinux_2_28_x86_64.whl.

File metadata

- Download URL: pathway-0.30.1-cp310-abi3-manylinux_2_28_x86_64.whl

- Upload date:

- Size: 78.1 MB

- Tags: CPython 3.10+, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 40194aa8f9a5b41812ce4bc2ba165a1e1a483068f9e6188ef6ffb41751cc6893 |
| MD5 | d66bd2ffb8b3dfbba1e8d4c6e9cff71f |
| BLAKE2b-256 | 2bdc8e5789c53cf356850a08af581fa0a3eda0c9deb0e05f09730b9e4bd05ea1 |
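The SHA256 digests listed in these file details can be used to check a downloaded wheel. The sketch below uses only the standard library; the helper names `sha256_of` and `matches` are assumptions for illustration, and the wheel is assumed to have been downloaded into the current directory.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches(path: Path, expected_hex: str) -> bool:
    """Compare a file's digest with a published one, ignoring case and whitespace."""
    return sha256_of(path) == expected_hex.strip().lower()
```

For example, `matches(Path("pathway-0.30.1-cp310-abi3-manylinux_2_28_x86_64.whl"), "40194aa8f9a5b41812ce4bc2ba165a1e1a483068f9e6188ef6ffb41751cc6893")` should return `True` for an intact download of that file.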

File details

Details for the file pathway-0.30.1-cp310-abi3-manylinux_2_28_aarch64.whl.

File metadata

- Download URL: pathway-0.30.1-cp310-abi3-manylinux_2_28_aarch64.whl

- Upload date:

- Size: 73.3 MB

- Tags: CPython 3.10+, manylinux: glibc 2.28+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 76d018780744db3a6ecebe42ac90714968e872449e502d2c246cf4127086ac92 |
| MD5 | 935d271711453fb626e6cfb591320cce |
| BLAKE2b-256 | 52e53cc4ce88b4192bffe188b906b4aba18940e07c0685df586abaf611e76c0b |

File details

Details for the file pathway-0.30.1-cp310-abi3-macosx_14_0_arm64.whl.

File metadata

- Download URL: pathway-0.30.1-cp310-abi3-macosx_14_0_arm64.whl

- Upload date:

- Size: 67.5 MB

- Tags: CPython 3.10+, macOS 14.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 37fd4257ce7cd93838e9bb467f327e4789c585ded207ea885f7eb2550038fb21 |
| MD5 | 61f404612ab230fb6ea1d2072adfd80e |
| BLAKE2b-256 | 75319a42b1686be5ab0eadb5f34344bcb18580209590ad2b06ac4f027812942d |

File details

Details for the file pathway-0.30.1-cp310-abi3-macosx_10_15_x86_64.whl.

File metadata

- Download URL: pathway-0.30.1-cp310-abi3-macosx_10_15_x86_64.whl

- Upload date:

- Size: 70.7 MB

- Tags: CPython 3.10+, macOS 10.15+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 387d16064679939fdcfcee817ef903e490cd1db12203a0a02cfe5f4790f34a80 |
| MD5 | 59e1bc8d54dc95580e6a536de60f6fb9 |
| BLAKE2b-256 | 0c4dec95d57a2eee26813cfbdfcb91d2ebd1cee3d09837b10ed4dcba928e55cf |