PGN Tokenizer, a Byte Pair Encoding (BPE) tokenizer for Chess Portable Game Notiation (PGN).

Project description

PGN Tokenizer

This is a Byte Pair Encoding (BPE) tokenizer for Chess Portable Game Notation (PGN).

Supported Languages

Note: This is part of a work-in-progress project to investigate how language models might understand chess without an engine or any chess-specific knowledge.

Installation

You can install it with your package manager of choice:

Python Package

uv

uv add pgn-tokenizer

pip

pip install pgn-tokenizer

TypeScript Package

npm

npm install @dvdagames/pgn-tokenizer

bun

bun add @dvdagames/pgn-tokenizer

Getting Started

Here's a brief overview of getting started with the Python and TypeScript versions of the PGN Tokenizer.

Python

The Python package uses the

tokenizers library for training the tokenizer and the transformers library to create a PreTrainedTokenizerFast interface from the PGN Tokenizer configuration, and provides minimal interface with .encode() and .decode() methods, and a .vocab_size property, but you can also access the underlying tokenizer class via the .tokenizer property.

from pgn_tokenizer import PGNTokenizer

# Initialize the tokenizer

tokenizer = PGNTokenizer()

# Tokenize a PGN string into a List of Token IDs

tokens = tokenizer.encode("1.e4 e5 2.Nf6 Nc3 3.Bc4")

# Decode a List of Token IDs into a String

decoded = tokenizer.decode(tokens)

# print the vocab size

print(f"Tokenizer vocabulary: {tokenzier.vocabSize}")

TypeScript

The TypeScript package uses the same underlying PGN Tokenizer configuration as the Python package, which is the output of the tokenizers training, but the @huggingface/transformers package does not fully support all of the same tokenizer options that the PGN Tokenizer relies on, so the encoding and decoding logic has been implemented manually following the same Byte Pair Encoding (BPE) algorithm.

The most basic use case is to import the encode or decode methods and use them directly:

import { encode, decode } from "@dvdagames/pgn-tokenizer";

// convert a string into an Array of token IDs

tokens = encode("1.e4 e5 2.Nf6 Nc3 3.Bc4");

// decode an encoded Array of token IDs into a String

decoded = decode(tokens);

But you can also import the underlying pgnTokenizer class to have access to the vocab_size property, too:

import pgnTokenizer from "@dvdagames/pgn-tokenizer";

// convert a string into an Array of token IDs

tokens = pgnTokenizer.encode("1.e4 e5 2.Nf6 Nc3 3.Bc4");

// decode an encoded Array of token IDs into a String

decoded = pgnTokenizer.decode(tokens);

// print the vocab size

console.log(`Tokenizer vocabulary: ${pgnTokenizer.vocab_size}`);

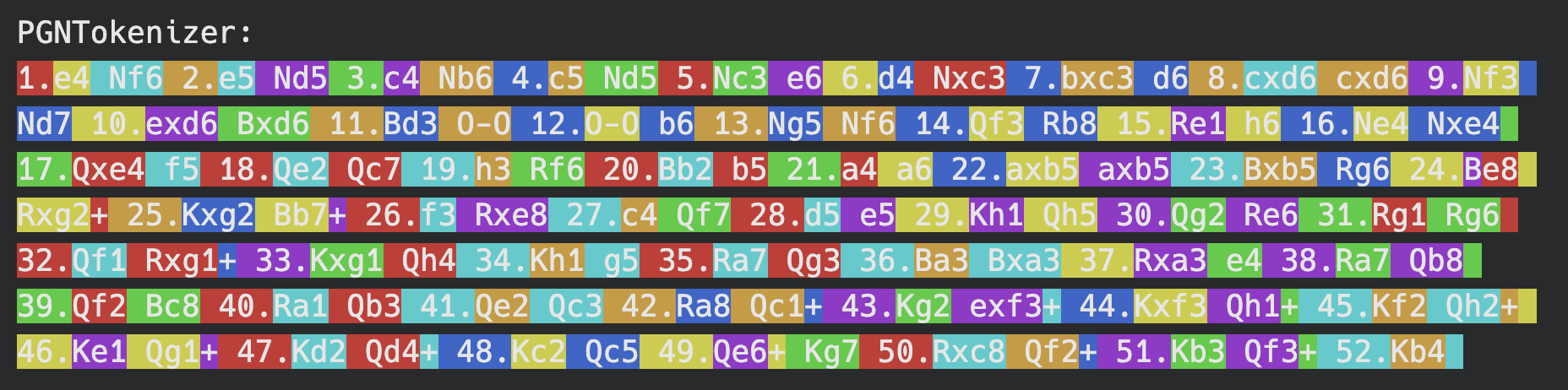

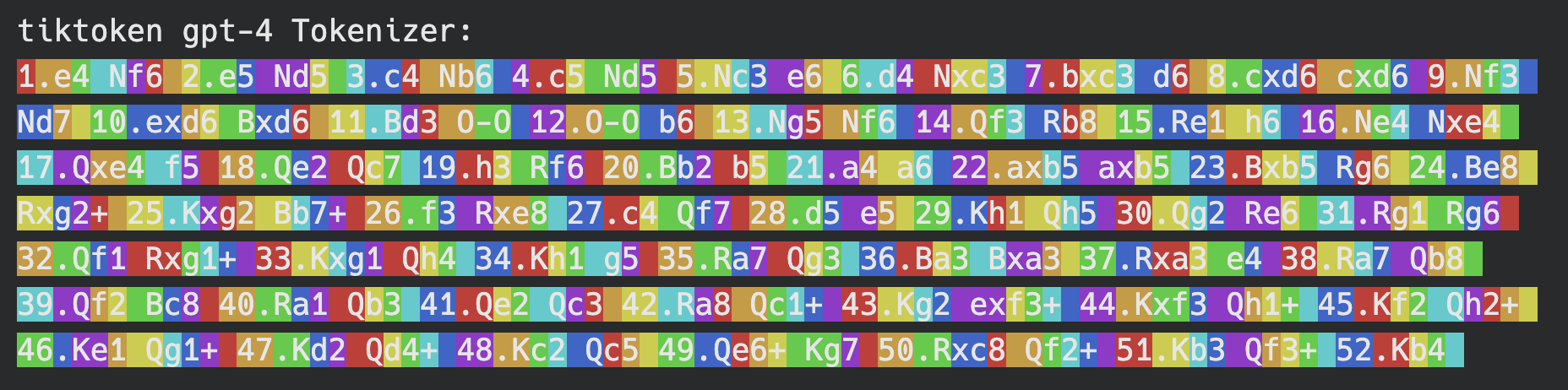

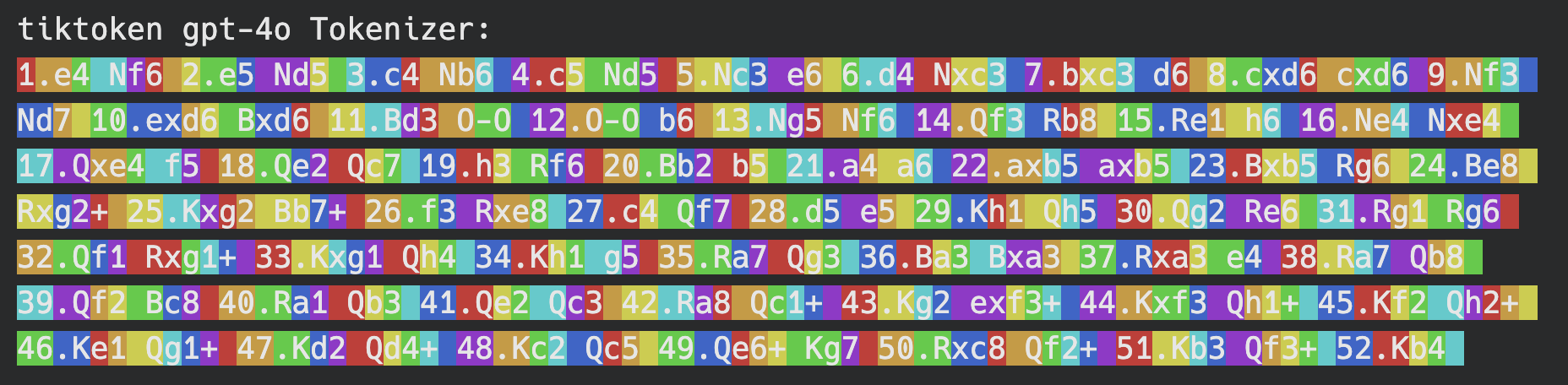

Tokenizer Comparison

More traditional, language-focused BPE tokenizer implementations are not suited for PGN strings because they are more likely to break the actual moves apart.

For example 1.e4 e5 would likely be tokenized as 1, .e, 4, e, 5 (or 1, ., e, 4, e, 5 depending on the tokenizer), but using this specialized PGN Tokenizer it will be tokenized as 1., e4, e5. This allows models to be trained with more accurate connections between tokens in PGN strings and more accurate inference when completing PGN strings.

Visualization

Here is a visualization of the vocabulary of this specialized PGN tokenizer compared to the BPE tokenizer vocabularies of the cl100k_base (the vocabulary for the gpt-3.5-turbo and gpt-4 models' tokenizer) and the o200k_base (the vocabulary for the gpt-4o model's tokenizer):

PGN Tokenizer

Note: The tokenizer was trained with ~2.8 Million chess games in PGN notation with a target vocabulary size of 4096.

GPT-3.5-turbo and GPT-4 Tokenizers

GPT-4o Tokenizer

Note: These visualizations were generated with a function adapted from an educational Jupyter Notebook in the tiktoken repository.

Acknowledgements

- @karpathy for the Let's build the GPT Tokenizer tutorial

- Hugging Face for the

tokenizersandtransformerslibraries. - Kaggle user MilesH14, whoever you are, for the (now-unpublished) dataset of 3.5 million chess games cleaned from the Chess Research Project

Project details

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file pgn_tokenizer-1.0.0.tar.gz.

File metadata

- Download URL: pgn_tokenizer-1.0.0.tar.gz

- Upload date:

- Size: 1.2 MB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: uv/0.11.2 {"installer":{"name":"uv","version":"0.11.2","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true}

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

2b7b3f7d257132efa227468e4694e61d00fc339c97452fc59cc3667a64fd1c0e

|

|

| MD5 |

88727cd59c07ff8ed8f30e650fc0846c

|

|

| BLAKE2b-256 |

9760a20de108b736eb2ef7eb4d8f273ea347920cead298a1833842abf334392e

|

File details

Details for the file pgn_tokenizer-1.0.0-py3-none-any.whl.

File metadata

- Download URL: pgn_tokenizer-1.0.0-py3-none-any.whl

- Upload date:

- Size: 72.2 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: uv/0.11.2 {"installer":{"name":"uv","version":"0.11.2","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true}

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

2b6698ae046866c060b795371263d5a03d227fe63aa971b96878a01d55d1c252

|

|

| MD5 |

eff6d82fcef228c1c9eda74a02bbf1c8

|

|

| BLAKE2b-256 |

eb97e549afef4726fa0e23430cbc06336c48dc9fa0d995fa1479a04080774205

|