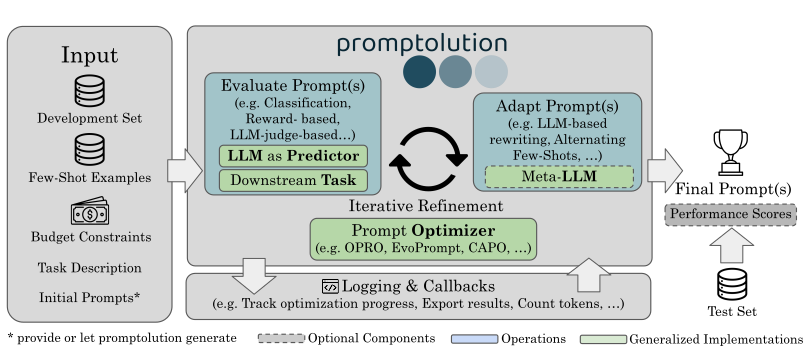

A framework for prompt optimization and a zoo of prompt optimization algorithms.


🚀 What is Promptolution?

Promptolution is a unified, modular framework for prompt optimization built for researchers and advanced practitioners who want full control over their experimental setup. Unlike end-to-end application frameworks with high abstraction, promptolution focuses exclusively on the optimization stage, providing a clean, transparent, and extensible API. It scales from optimizing prompts for a single task to large-scale, reproducible benchmark experiments.

Key Features

- Implementation of many current prompt optimizers out of the box.

- Unified LLM backend supporting API-based models, local LLMs, and vLLM clusters.

- Built-in response caching to save costs and parallelized inference for speed.

- Detailed logging and token usage tracking for granular post-hoc analysis.
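To illustrate what response caching combined with parallelized inference can look like, here is a minimal sketch. It is not promptolution's implementation: `CachedLLM`, `get_responses`, and `fake_model` are hypothetical names chosen for this example, and the sketch assumes that an identical prompt may reuse an earlier response.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


class CachedLLM:
    """Hypothetical wrapper: caches responses and fans out uncached prompts."""

    def __init__(self, call_model: Callable[[str], str], max_workers: int = 4):
        self.call_model = call_model      # any function mapping prompt -> response
        self.max_workers = max_workers
        self.cache: Dict[str, str] = {}   # prompt hash -> response
        self.n_calls = 0                  # number of real (uncached) model calls

    @staticmethod
    def _key(prompt: str) -> str:
        return hashlib.sha256(prompt.encode("utf-8")).hexdigest()

    def get_responses(self, prompts: List[str]) -> List[str]:
        # Deduplicate and keep only prompts that are not cached yet.
        missing = list(
            dict.fromkeys(p for p in prompts if self._key(p) not in self.cache)
        )
        if missing:
            # Run the uncached calls concurrently; map() preserves input order.
            with ThreadPoolExecutor(max_workers=self.max_workers) as pool:
                results = pool.map(self.call_model, missing)
                for prompt, response in zip(missing, results):
                    self.cache[self._key(prompt)] = response
            self.n_calls += len(missing)
        return [self.cache[self._key(p)] for p in prompts]


def fake_model(prompt: str) -> str:
    # Stand-in for a real API or local model call.
    return prompt.upper()


llm = CachedLLM(fake_model)
first = llm.get_responses(["hello", "world", "hello"])
second = llm.get_responses(["hello", "world"])  # served entirely from the cache
print(first, second, llm.n_calls)
```

In this sketch only two real model calls are made across both batches, because the duplicate and the repeated prompts are answered from the cache.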

Have a look at our Release Notes for the latest updates to promptolution.

📦 Installation

pip install promptolution[api]

Local inference via vLLM or transformers:

pip install promptolution[vllm,transformers]

From source:

git clone https://github.com/automl/promptolution.git

cd promptolution

poetry install
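After any of the install routes above, a quick way to confirm that the package is visible to the current interpreter is to query its metadata. This is a small sketch using only the standard library; `installed_version` is a helper name made up for this example.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is missing."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


# Prints a version string if promptolution is installed, otherwise None.
print(installed_version("promptolution"))
```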

🔧 Quickstart

Start with the Getting Started tutorial: https://github.com/automl/promptolution/blob/main/tutorials/getting_started.ipynb

Full docs: https://automl.github.io/promptolution/

🧠 Featured Optimizers

| Name | Paper | Init prompts | Exploration | Costs | Parallelizable | Few-shot |
|---|---|---|---|---|---|---|
| CAPO | Zehle et al., 2025 | required | 👍 | 💲 | ✅ | ✅ |
| EvoPromptDE | Guo et al., 2023 | required | 👍 | 💲💲 | ✅ | ❌ |
| EvoPromptGA | Guo et al., 2023 | required | 👍 | 💲💲 | ✅ | ❌ |
| OPRO | Yang et al., 2023 | optional | 👎 | 💲💲 | ❌ | ❌ |
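To give a feel for why evolutionary optimizers need initial prompts, here is a toy genetic loop. It is only a sketch of the general idea, not the algorithm from any of the papers above and not promptolution's code: `evolve_prompts`, `toy_crossover`, and `toy_score` are hypothetical. In a real setup, scoring would evaluate a prompt on task data and crossover would typically be performed by an LLM; both are replaced by simple stand-ins here.

```python
import random
from typing import Callable, List


def evolve_prompts(
    init_prompts: List[str],
    score: Callable[[str], float],
    crossover: Callable[[str, str], str],
    n_steps: int = 5,
    seed: int = 0,
) -> List[str]:
    """Toy genetic loop: breed children, keep the best len(init_prompts)."""
    rng = random.Random(seed)
    population = list(init_prompts)
    for _ in range(n_steps):
        children = []
        for _ in range(len(population)):
            parent_a, parent_b = rng.sample(population, 2)
            children.append(crossover(parent_a, parent_b))
        # Survivor selection: rank parents and children together (elitist).
        candidates = list(dict.fromkeys(population + children))
        population = sorted(candidates, key=score, reverse=True)[: len(init_prompts)]
    return population


def toy_crossover(a: str, b: str) -> str:
    # Stand-in for an LLM-based crossover: first half of a, second half of b.
    words_a, words_b = a.split(), b.split()
    return " ".join(words_a[: len(words_a) // 2] + words_b[len(words_b) // 2 :])


def toy_score(prompt: str) -> float:
    # Stand-in for evaluating the prompt on a dataset.
    keywords = {"classify", "sentiment", "review"}
    return float(len(keywords & set(prompt.lower().split())))


init = [
    "Classify the text.",
    "Decide the sentiment of this review",
    "Is this positive or negative?",
]
best = evolve_prompts(init, toy_score, toy_crossover)
print(best[0], toy_score(best[0]))
```

Because parents compete with their children for survival, the best score in the population never decreases from one step to the next in this sketch.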

🏗 Components

- Task – Manages the dataset, evaluation metrics, and subsampling.

- Predictor – Defines how to extract the answer from the model's response.

- LLM – A unified interface handling inference, token counting, and concurrency.

- Optimizer – The core component that implements the algorithms that refine prompts.

- ExperimentConfig – A configuration abstraction to streamline and parametrize large-scale scientific experiments.
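The division of responsibilities between these components can be sketched with toy stand-ins. The classes below are hypothetical and do not mirror promptolution's actual classes or signatures; they only illustrate how a task, a predictor, an LLM, and an optimizer might hand data to each other. The answer-extraction rule (last word of the response) is an assumption made for this example.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass
class ToyPredictor:
    """Queries the LLM and extracts the answer from its raw response."""
    llm: Callable[[str], str]

    def predict(self, prompt: str, inputs: Sequence[str]) -> List[str]:
        responses = [self.llm(f"{prompt}\n{x}") for x in inputs]
        # Assumed convention: the answer is the last word of the response.
        return [r.split()[-1].strip(".").lower() for r in responses]


@dataclass
class ToyTask:
    """Holds the dataset and the metric (here: accuracy)."""
    inputs: Sequence[str]
    labels: Sequence[str]

    def evaluate(self, prompt: str, predictor: ToyPredictor) -> float:
        preds = predictor.predict(prompt, self.inputs)
        return sum(p == y for p, y in zip(preds, self.labels)) / len(self.labels)


def pick_best(prompts: Sequence[str], task: ToyTask, predictor: ToyPredictor) -> str:
    """Degenerate 'optimizer': score each candidate once and return the best."""
    return max(prompts, key=lambda p: task.evaluate(p, predictor))


def rule_based_llm(text: str) -> str:
    # Stand-in model: only answers properly when asked about sentiment.
    if "sentiment" not in text.lower():
        return "I am not sure."
    return "The answer is positive." if "great" in text else "The answer is negative."


task = ToyTask(inputs=["A great movie", "Dull and slow"], labels=["positive", "negative"])
predictor = ToyPredictor(rule_based_llm)
best = pick_best(["Summarize the text.", "What is the sentiment?"], task, predictor)
print(best, task.evaluate(best, predictor))
```

Keeping answer extraction in the predictor and scoring in the task means that either can be swapped without touching the optimizer, which is the kind of modularity the component list describes.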

🤝 Contributing

Open an issue → create a branch → PR → CI → review → merge.

Branch naming: feature/..., fix/..., chore/..., refactor/....

Please use pre-commit, which helps keep code quality high:

pre-commit install

pre-commit run --all-files

We encourage every contributor to also write tests that automatically check whether the implementation works as expected:

poetry run python -m coverage run -m pytest

poetry run python -m coverage report

Developed by Timo Heiß, Moritz Schlager, and Tom Zehle (LMU Munich, MCML, ELLIS, TUM, Uni Freiburg).
