A Comprehensive and Scalable Python Library for Outlier Detection (Anomaly Detection)


PyOD is the most comprehensive and scalable Python toolkit for detecting outlying objects in multivariate data. This exciting yet challenging field is commonly referred to as Outlier Detection or Anomaly Detection.

PyOD includes more than 40 detection algorithms, from the classical LOF (SIGMOD 2000) to the latest ECOD (TKDE 2022). Since 2017, PyOD has been successfully used in numerous academic research projects and commercial products [38] [39], with more than 6 million downloads. It is also well acknowledged by the machine learning community through various dedicated posts and tutorials, including Analytics Vidhya, KDnuggets, Towards Data Science, and awesome-machine-learning.

PyOD is featured for:

- Unified APIs, detailed documentation, and interactive examples across various algorithms.

- Advanced models, including classical ones by distance and density estimation, latest deep learning methods, and emerging algorithms like ECOD.

- Optimized performance with JIT and parallelization using numba and joblib.

- Fast training & prediction with SUOD [39].

Outlier Detection with 5 Lines of Code:

# train an ECOD detector

from pyod.models.ecod import ECOD

clf = ECOD()

clf.fit(X_train)

# get outlier scores

y_train_scores = clf.decision_scores_ # raw outlier scores on the train data

y_test_scores = clf.decision_function(X_test) # predict raw outlier scores on test

Citing PyOD:

The PyOD paper is published in the Journal of Machine Learning Research (JMLR) (MLOSS track). If you use PyOD in a scientific publication, we would appreciate citations to the following paper:

@article{zhao2019pyod,

author = {Zhao, Yue and Nasrullah, Zain and Li, Zheng},

title = {PyOD: A Python Toolbox for Scalable Outlier Detection},

journal = {Journal of Machine Learning Research},

year = {2019},

volume = {20},

number = {96},

pages = {1-7},

url = {http://jmlr.org/papers/v20/19-011.html}

}

or:

Zhao, Y., Nasrullah, Z. and Li, Z., 2019. PyOD: A Python Toolbox for Scalable Outlier Detection. Journal of Machine Learning Research (JMLR), 20(96), pp.1-7.


Installation

It is recommended to use pip or conda for installation. Please make sure the latest version is installed, as PyOD is updated frequently:

pip install pyod # normal install

pip install --upgrade pyod # or update if needed

conda install -c conda-forge pyod

Alternatively, you could clone the repository and install it from the setup.py file:

git clone https://github.com/yzhao062/pyod.git

cd pyod

pip install .

Required Dependencies:

Python 3.6+

combo>=0.1.3

joblib

numpy>=1.13

numba>=0.35

scipy>=1.3.1

scikit_learn>=0.20.0

six

statsmodels
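To check an existing environment against the minimum versions listed above, a small standard-library sketch such as the following may help. The helper names (`version_tuple`, `meets_minimum`, `check_minimums`) are hypothetical, the version parsing is deliberately simplistic (leading numeric components only), and `scikit-learn` is assumed to be the installed distribution name for the `scikit_learn` entry. It relies on `importlib.metadata`, which requires Python 3.8 or later.

```python
import re
from importlib import metadata

# Minimum versions copied from the list above; "scikit-learn" is an assumed
# distribution name for the scikit_learn entry.
MINIMUMS = {
    "combo": "0.1.3",
    "numpy": "1.13",
    "numba": "0.35",
    "scipy": "1.3.1",
    "scikit-learn": "0.20.0",
}


def version_tuple(version):
    """Keep only the leading numeric components, e.g. '1.3.1rc1' -> (1, 3, 1)."""
    parts = []
    for piece in version.split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def meets_minimum(installed, minimum):
    """Compare two version strings numerically, padding the shorter with zeros."""
    a, b = version_tuple(installed), version_tuple(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def check_minimums(minimums=MINIMUMS):
    """Return {name: status} where status is 'ok', 'too old', or 'missing'."""
    report = {}
    for name, minimum in minimums.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = "missing"
            continue
        report[name] = "ok" if meets_minimum(installed, minimum) else "too old"
    return report


if __name__ == "__main__":
    for name, status in check_minimums().items():
        print(f"{name}: {status}")
```

For anything beyond a quick sanity check, a dedicated version parser is a safer choice than this numeric comparison.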

Optional Dependencies (see details below):

combo (optional, required for models/combination.py and FeatureBagging)

keras/tensorflow (optional, required for AutoEncoder, and other deep learning models)

matplotlib (optional, required for running examples)

pandas (optional, required for running benchmark)

suod (optional, required for running SUOD model)

xgboost (optional, required for XGBOD)

Warning 1: PyOD has multiple neural network based models, e.g., AutoEncoders, which are implemented in both PyTorch and TensorFlow. However, PyOD does NOT install DL libraries for you. This reduces the risk of interfering with your local copies. If you want to use neural-net based models, please make sure Keras and a backend library, e.g., TensorFlow, are installed. Instructions are provided in the neural-net FAQ. Similarly, models depending on xgboost, e.g., XGBOD, do NOT enforce xgboost installation by default.

Warning 2: PyOD contains multiple models that also exist in scikit-learn. However, the APIs of the two libraries are not exactly the same. It is recommended to use only one of them for consistency and not to mix their results. Refer to "Differences between scikit-learn and PyOD" for more information.
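Since the optional packages are not installed automatically, it can be useful to check which ones are importable before picking a model. The sketch below uses only the standard library. The helper name `missing_optional` is hypothetical, and the import names are assumed to match the package names in the optional dependency list.

```python
from importlib.util import find_spec

# Import names assumed from the optional dependency list above, mapped to the
# features that list says they are needed for.
OPTIONAL_MODULES = {
    "combo": "models/combination.py and FeatureBagging",
    "tensorflow": "AutoEncoder and other deep learning models",
    "matplotlib": "running examples",
    "pandas": "running benchmark",
    "suod": "SUOD model",
    "xgboost": "XGBOD",
}


def missing_optional(modules=OPTIONAL_MODULES):
    """Return the top-level module names that cannot be found for import."""
    return [name for name in modules if find_spec(name) is None]


if __name__ == "__main__":
    for name in missing_optional():
        print(f"{name} is not installed (needed for {OPTIONAL_MODULES[name]})")
```

The check only looks the module up; it does not import it, so it stays cheap even for heavy packages.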

API Cheatsheet & Reference

Full API Reference: (https://pyod.readthedocs.io/en/latest/pyod.html). API cheatsheet for all detectors:

fit(X): Fit detector. y is ignored in unsupervised methods.

decision_function(X): Predict raw anomaly score of X using the fitted detector.

predict(X): Predict if a particular sample is an outlier or not using the fitted detector.

predict_proba(X): Predict the probability of a sample being outlier using the fitted detector.

predict_confidence(X): Predict the model’s sample-wise confidence (available in predict and predict_proba) [28].

Key Attributes of a fitted model:

decision_scores_: The outlier scores of the training data. The higher, the more abnormal. Outliers tend to have higher scores.

labels_: The binary labels of the training data. 0 stands for inliers and 1 for outliers/anomalies.
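To make the cheatsheet concrete, here is a toy, pure-Python stand-in that mimics the method and attribute names listed above. It is not PyOD code: the class `ToyZScoreDetector`, the `contamination` parameter, the thresholding rule, and the min-max scaling in `predict_proba` are all assumptions made for illustration.

```python
import math
import statistics


class ToyZScoreDetector:
    """Hypothetical stand-in that mimics the method and attribute names above."""

    def __init__(self, contamination=0.1):
        # Assumed meaning: expected fraction of outliers in the training data.
        self.contamination = contamination

    def fit(self, X, y=None):
        # y is accepted but ignored, as in unsupervised detectors.
        columns = list(zip(*X))
        self.means_ = [statistics.fmean(c) for c in columns]
        # Fall back to 1.0 for constant features so the division is defined.
        self.stds_ = [statistics.pstdev(c) or 1.0 for c in columns]
        self.decision_scores_ = self.decision_function(X)
        # Label the top `contamination` fraction of training scores as outliers.
        n_outliers = max(1, round(self.contamination * len(X)))
        self.threshold_ = sorted(self.decision_scores_)[-n_outliers]
        self.labels_ = [int(s >= self.threshold_) for s in self.decision_scores_]
        return self

    def decision_function(self, X):
        # Raw score: Euclidean norm of per-feature z-scores (higher = more abnormal).
        return [
            math.sqrt(sum(((v - m) / s) ** 2
                          for v, m, s in zip(row, self.means_, self.stds_)))
            for row in X
        ]

    def predict(self, X):
        return [int(s >= self.threshold_) for s in self.decision_function(X)]

    def predict_proba(self, X):
        # Min-max scale against the training scores, clipped to [0, 1].
        lo, hi = min(self.decision_scores_), max(self.decision_scores_)
        span = (hi - lo) or 1.0
        return [min(1.0, max(0.0, (s - lo) / span))
                for s in self.decision_function(X)]


X_train = [[0.0, 0.1], [0.2, -0.1], [-0.1, 0.0], [0.1, 0.2], [-0.2, -0.2],
           [0.0, -0.1], [0.1, 0.0], [-0.1, 0.1], [0.2, 0.1], [5.0, 5.0]]
X_test = [[0.05, 0.05], [6.0, 6.0]]

clf = ToyZScoreDetector(contamination=0.1).fit(X_train)
print(clf.labels_)           # only the last training point is flagged
print(clf.predict(X_test))   # [0, 1]
```

The point of the sketch is the shape of the interface: fitting populates `decision_scores_` and `labels_`, and the same fitted object then scores and labels unseen data.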

Model Save & Load

PyOD takes a similar approach to sklearn regarding model persistence. See model persistence for clarification.

In short, we recommend using joblib or pickle for saving and loading PyOD models. See “examples/save_load_model_example.py” for an example. It is as simple as below:

from joblib import dump, load

# save the model

dump(clf, 'clf.joblib')

# load the model

clf = load('clf.joblib')

It is known that there are challenges in saving neural network models. Check #328 and #88 for a temporary workaround.
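The same save-and-load round trip can be sketched with the standard-library pickle module mentioned in this section. In this minimal example a plain dict stands in for a fitted detector; the dict contents and the file name are made up for illustration.

```python
import os
import pickle
import tempfile

# Stand-in for a fitted detector: just the state a simple model might hold.
fitted_state = {"threshold": 2.5, "train_scores": [0.3, 0.4, 3.1]}

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "clf.pkl")

    # save the model state
    with open(path, "wb") as f:
        pickle.dump(fitted_state, f)

    # load the model state
    with open(path, "rb") as f:
        restored_state = pickle.load(f)

print(restored_state == fitted_state)  # True
```

As with any pickle-based format, only load files from sources you trust, since unpickling can execute arbitrary code.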

Fast Train with SUOD

Fast training and prediction: it is possible to train and predict with a large number of detection models in PyOD by leveraging the SUOD framework [39]. See SUOD Paper and SUOD example.

from pyod.models.copod import COPOD

from pyod.models.iforest import IForest

from pyod.models.lof import LOF

from pyod.models.suod import SUOD

# initialize a group of outlier detectors for acceleration

detector_list = [LOF(n_neighbors=15), LOF(n_neighbors=20),

                 LOF(n_neighbors=25), LOF(n_neighbors=35),

                 COPOD(), IForest(n_estimators=100),

                 IForest(n_estimators=200)]

# decide the number of parallel processes and the combination method

# then clf can be used as any outlier detection model

clf = SUOD(base_estimators=detector_list, n_jobs=2, combination='average',

           verbose=False)

Implemented Algorithms

PyOD toolkit consists of three major functional groups:

(i) Individual Detection Algorithms:

| Type | Abbr | Algorithm | Year | Ref |
|---|---|---|---|---|
| Probabilistic | ECOD | Unsupervised Outlier Detection Using Empirical Cumulative Distribution Functions | 2022 | |
| Probabilistic | ABOD | Angle-Based Outlier Detection | 2008 | |
| Probabilistic | FastABOD | Fast Angle-Based Outlier Detection using approximation | 2008 | |
| Probabilistic | COPOD | COPOD: Copula-Based Outlier Detection | 2020 | |
| Probabilistic | MAD | Median Absolute Deviation (MAD) | 1993 | |
| Probabilistic | SOS | Stochastic Outlier Selection | 2012 | |
| Probabilistic | KDE | Outlier Detection with Kernel Density Functions | 2007 | |
| Probabilistic | Sampling | Rapid distance-based outlier detection via sampling | 2013 | |
| Linear Model | PCA | Principal Component Analysis (the sum of weighted projected distances to the eigenvector hyperplanes) | 2003 | |
| Linear Model | MCD | Minimum Covariance Determinant (use the Mahalanobis distances as the outlier scores) | 1999 | |
| Linear Model | CD | Use Cook’s distance for outlier detection | 1977 | |
| Linear Model | OCSVM | One-Class Support Vector Machines | 2001 | |
| Linear Model | LMDD | Deviation-based Outlier Detection (LMDD) | 1996 | |
| Proximity-Based | LOF | Local Outlier Factor | 2000 | |
| Proximity-Based | COF | Connectivity-Based Outlier Factor | 2002 | |
| Proximity-Based | (Incremental) COF | Memory Efficient Connectivity-Based Outlier Factor (slower but reduces storage complexity) | 2002 | |
| Proximity-Based | CBLOF | Clustering-Based Local Outlier Factor | 2003 | |
| Proximity-Based | LOCI | LOCI: Fast outlier detection using the local correlation integral | 2003 | |
| Proximity-Based | HBOS | Histogram-based Outlier Score | 2012 | |
| Proximity-Based | kNN | k Nearest Neighbors (use the distance to the kth nearest neighbor as the outlier score) | 2000 | |
| Proximity-Based | AvgKNN | Average kNN (use the average distance to k nearest neighbors as the outlier score) | 2002 | |
| Proximity-Based | MedKNN | Median kNN (use the median distance to k nearest neighbors as the outlier score) | 2002 | |
| Proximity-Based | SOD | Subspace Outlier Detection | 2009 | |
| Proximity-Based | ROD | Rotation-based Outlier Detection | 2020 | |
| Outlier Ensembles | IForest | Isolation Forest | 2008 | |
| Outlier Ensembles | FB | Feature Bagging | 2005 | |
| Outlier Ensembles | LSCP | LSCP: Locally Selective Combination of Parallel Outlier Ensembles | 2019 | |
| Outlier Ensembles | XGBOD | Extreme Boosting Based Outlier Detection (Supervised) | 2018 | |
| Outlier Ensembles | LODA | Lightweight On-line Detector of Anomalies | 2016 | |
| Outlier Ensembles | SUOD | SUOD: Accelerating Large-scale Unsupervised Heterogeneous Outlier Detection (Acceleration) | 2021 | |
| Neural Networks | AutoEncoder | Fully connected AutoEncoder (use reconstruction error as the outlier score) | | [1] [Ch.3] |
| Neural Networks | VAE | Variational AutoEncoder (use reconstruction error as the outlier score) | 2013 | |
| Neural Networks | Beta-VAE | Variational AutoEncoder (all customized loss term by varying gamma and capacity) | 2018 | |
| Neural Networks | SO_GAAL | Single-Objective Generative Adversarial Active Learning | 2019 | |
| Neural Networks | MO_GAAL | Multiple-Objective Generative Adversarial Active Learning | 2019 | |
| Neural Networks | DeepSVDD | Deep One-Class Classification | 2018 | |
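The kNN, AvgKNN, and MedKNN rows above differ only in how the distances to the k nearest neighbors are summarized into a score. The brute-force sketch below illustrates that idea in plain Python. The function name `knn_scores` and the `method` values are choices made for this example, and the code is not PyOD's implementation.

```python
import math
import statistics


def knn_scores(X, k=2, method="largest"):
    """Score each row of X by its distances to its k nearest other rows.

    method: 'largest' (distance to the kth neighbor), 'mean', or 'median'.
    """
    scores = []
    for i, row in enumerate(X):
        # Distances to every other point, smallest first.
        dists = sorted(math.dist(row, other)
                       for j, other in enumerate(X) if j != i)
        nearest = dists[:k]
        if method == "largest":
            scores.append(nearest[-1])
        elif method == "mean":
            scores.append(statistics.fmean(nearest))
        elif method == "median":
            scores.append(statistics.median(nearest))
        else:
            raise ValueError(f"unknown method: {method}")
    return scores


# Four points on a unit square plus one distant point.
X = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0], [8.0, 8.0]]
for method in ("largest", "mean", "median"):
    print(method, knn_scores(X, k=2, method=method))
```

In all three variants the distant point receives the highest score, which matches the convention that higher scores mean more abnormal samples. The pairwise loop is quadratic in the number of samples, so it is only suitable for small illustrations.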

(ii) Outlier Ensembles & Outlier Detector Combination Frameworks:

| Type | Abbr | Algorithm | Year | Ref |
|---|---|---|---|---|
| Outlier Ensembles | | Feature Bagging | 2005 | |
| Outlier Ensembles | LSCP | LSCP: Locally Selective Combination of Parallel Outlier Ensembles | 2019 | |
| Outlier Ensembles | XGBOD | Extreme Boosting Based Outlier Detection (Supervised) | 2018 | |
| Outlier Ensembles | LODA | Lightweight On-line Detector of Anomalies | 2016 | |
| Outlier Ensembles | SUOD | SUOD: Accelerating Large-scale Unsupervised Heterogeneous Outlier Detection (Acceleration) | 2021 | |
| Combination | Average | Simple combination by averaging the scores | 2015 | |
| Combination | Weighted Average | Simple combination by averaging the scores with detector weights | 2015 | |
| Combination | Maximization | Simple combination by taking the maximum scores | 2015 | |
| Combination | AOM | Average of Maximum | 2015 | |
| Combination | MOA | Maximization of Average | 2015 | |
| Combination | Median | Simple combination by taking the median of the scores | 2015 | |
| Combination | Majority Vote | Simple combination by taking the majority vote of the labels (weights can be used) | 2015 | |
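The combination rows above can be illustrated with a few lines of plain Python. This is a sketch under stated assumptions rather than PyOD's code: the function names are hypothetical, the scores are assumed to be normalized to a comparable scale beforehand, and the AOM/MOA buckets are fixed contiguous groups, whereas an actual implementation may assign detectors to buckets randomly.

```python
import math
import statistics


def combine(score_rows, how="average", weights=None):
    """Combine per-detector scores; score_rows[i][j] is sample i, detector j."""
    combined = []
    for row in score_rows:
        if how == "average":
            combined.append(statistics.fmean(row))
        elif how == "weighted_average":
            total = sum(w * s for w, s in zip(weights, row))
            combined.append(total / sum(weights))
        elif how == "maximization":
            combined.append(max(row))
        elif how == "median":
            combined.append(statistics.median(row))
        else:
            raise ValueError(f"unknown combination: {how}")
    return combined


def _buckets(row, n_buckets):
    """Split one row of scores into at most n_buckets contiguous groups."""
    size = math.ceil(len(row) / n_buckets)
    return [row[i:i + size] for i in range(0, len(row), size)]


def aom(score_rows, n_buckets=2):
    """Average of Maximum: max within each bucket, then average the maxima."""
    return [statistics.fmean([max(b) for b in _buckets(row, n_buckets)])
            for row in score_rows]


def moa(score_rows, n_buckets=2):
    """Maximization of Average: average within each bucket, then take the max."""
    return [max(statistics.fmean(b) for b in _buckets(row, n_buckets))
            for row in score_rows]


def majority_vote(label_rows):
    """Label a sample 1 when more than half of the detectors label it 1."""
    return [int(2 * sum(row) > len(row)) for row in label_rows]


scores = [[0.1, 0.2, 0.3, 0.4],   # sample 0, four detectors
          [0.9, 0.1, 0.8, 0.6]]   # sample 1, four detectors
for how in ("average", "maximization", "median"):
    print(how, combine(scores, how))
print("weighted", combine(scores, "weighted_average", weights=[2, 1, 1, 0]))
print("AOM", aom(scores), "MOA", moa(scores))
print("vote", majority_vote([[1, 1, 0], [0, 1, 0]]))
```

AOM and MOA sit between plain averaging and plain maximization, which is why they are often described as a compromise between the two.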

(iii) Utility Functions:

| Type | Name | Function | Documentation |
|---|---|---|---|
| Data | generate_data | Synthesized data generation; normal data is generated by a multivariate Gaussian and outliers are generated by a uniform distribution | |
| Data | generate_data_clusters | Synthesized data generation in clusters; more complex data patterns can be created with multiple clusters | |
| Stat | wpearsonr | Calculate the weighted Pearson correlation of two samples | |
| Utility | get_label_n | Turn raw outlier scores into binary labels by assigning 1 to the top n outlier scores | |
| Utility | precision_n_scores | Calculate precision @ rank n | |
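The two utility rows about labels and precision @ rank n describe simple operations that are easy to sketch in plain Python. The helpers below use hypothetical names (`top_n_labels`, `precision_at_rank_n`) and are not the library functions themselves; in particular, the tie-breaking rule is an assumption of this sketch.

```python
def top_n_labels(scores, n):
    """Assign 1 to the n highest scores and 0 to the rest.

    Ties are broken by position: earlier samples win.
    """
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    labels = [0] * len(scores)
    for i in order[:n]:
        labels[i] = 1
    return labels


def precision_at_rank_n(y_true, scores, n=None):
    """Fraction of true outliers among the n top-scored samples.

    When n is None it defaults to the number of true outliers in y_true.
    """
    if n is None:
        n = sum(y_true)
    if n <= 0:
        raise ValueError("n must be positive")
    predicted = top_n_labels(scores, n)
    hits = sum(1 for t, p in zip(y_true, predicted) if t == 1 and p == 1)
    return hits / n


y_true = [0, 0, 1, 0, 1]
scores = [0.1, 0.9, 0.8, 0.2, 0.3]
print(top_n_labels(scores, 2))              # [0, 1, 1, 0, 0]
print(precision_at_rank_n(y_true, scores))  # 0.5
```

With two true outliers, the two highest scores are inspected; one of them is a true outlier, so the precision @ rank n is 0.5.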

Algorithm Benchmark

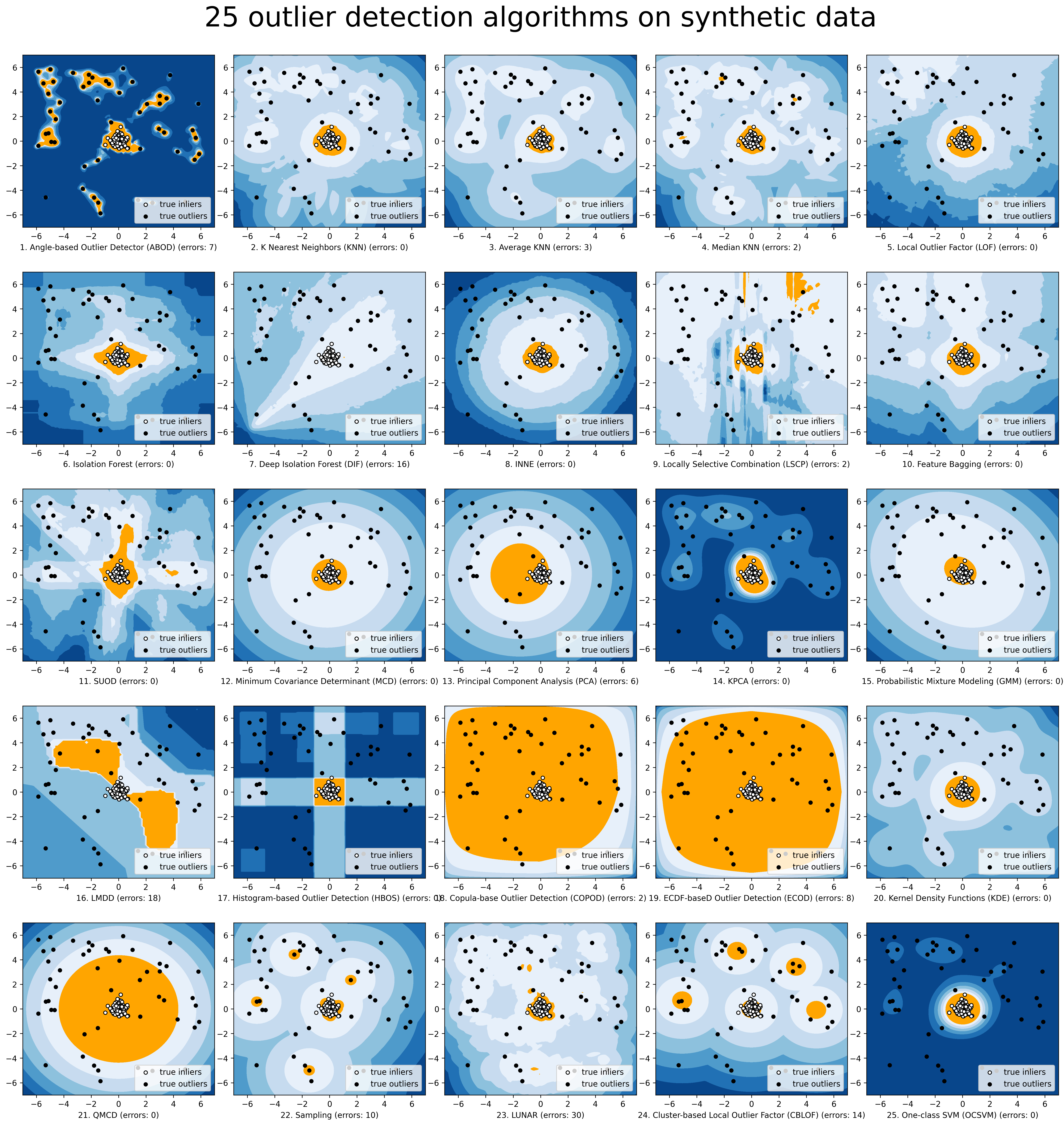

A comparison of the implemented models is available (Figure, compare_all_models.py, Interactive Jupyter Notebooks). For Jupyter Notebooks, please navigate to “/notebooks/Compare All Models.ipynb”.

A benchmark is supplied for select algorithms to provide an overview of the implemented models. In total, 17 benchmark datasets are used for comparison, which can be downloaded at ODDS.

Each dataset is first split into 60% for training and 40% for testing. All experiments are repeated 10 times independently with random splits, and the mean of the 10 trials is regarded as the final result. Three evaluation metrics are provided:

The area under receiver operating characteristic (ROC) curve

Precision @ rank n (P@N)

Execution time
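The protocol described above (60/40 split, 10 independent trials, mean ROC) can be sketched in plain Python as follows. This is an illustration, not benchmark.py: the function names are hypothetical, the toy detector scores points by distance from the training mean, and the split is stratified by label so that every test set contains both classes, which is an assumption beyond what the text states.

```python
import math
import random
import statistics


def roc_auc(y_true, scores):
    """ROC AUC as the chance that a random outlier outscores a random inlier."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("need at least one outlier and one inlier")
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0
               for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def split_60_40(y, rng):
    """Return (train_idx, test_idx), shuffling and cutting each class at 60%."""
    train_idx, test_idx = [], []
    for label in (0, 1):
        idx = [i for i, t in enumerate(y) if t == label]
        rng.shuffle(idx)
        cut = round(0.6 * len(idx))
        train_idx += idx[:cut]
        test_idx += idx[cut:]
    return train_idx, test_idx


def mean_test_auc(X, y, score_fn, n_trials=10, seed=0):
    """Average test ROC AUC over repeated random 60/40 splits."""
    rng = random.Random(seed)
    aucs = []
    for _ in range(n_trials):
        train_idx, test_idx = split_60_40(y, rng)
        X_train = [X[i] for i in train_idx]
        X_test = [X[i] for i in test_idx]
        y_test = [y[i] for i in test_idx]
        aucs.append(roc_auc(y_test, score_fn(X_train, X_test)))
    return statistics.fmean(aucs)


def distance_from_train_mean(X_train, X_test):
    """Toy detector: Euclidean distance from the mean of the training rows."""
    center = [statistics.fmean(col) for col in zip(*X_train)]
    return [math.dist(row, center) for row in X_test]


# 15 inliers near zero and 5 far-away outliers (made-up 1-D data).
X = [[v / 10] for v in range(-7, 8)] + [[10.0], [-10.0], [9.0], [-9.5], [11.0]]
y = [0] * 15 + [1] * 5
print(mean_test_auc(X, y, distance_from_train_mean))
```

Fixing the seed makes the ten splits reproducible, which matters when comparing several detectors on the same data.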

Check the latest benchmark. You could replicate this process by running benchmark.py.

Quick Start for Outlier Detection

PyOD has been well acknowledged by the machine learning community with a few featured posts and tutorials.

Analytics Vidhya: An Awesome Tutorial to Learn Outlier Detection in Python using PyOD Library

KDnuggets: Intuitive Visualization of Outlier Detection Methods, An Overview of Outlier Detection Methods from PyOD

Towards Data Science: Anomaly Detection for Dummies

Computer Vision News (March 2019): Python Open Source Toolbox for Outlier Detection

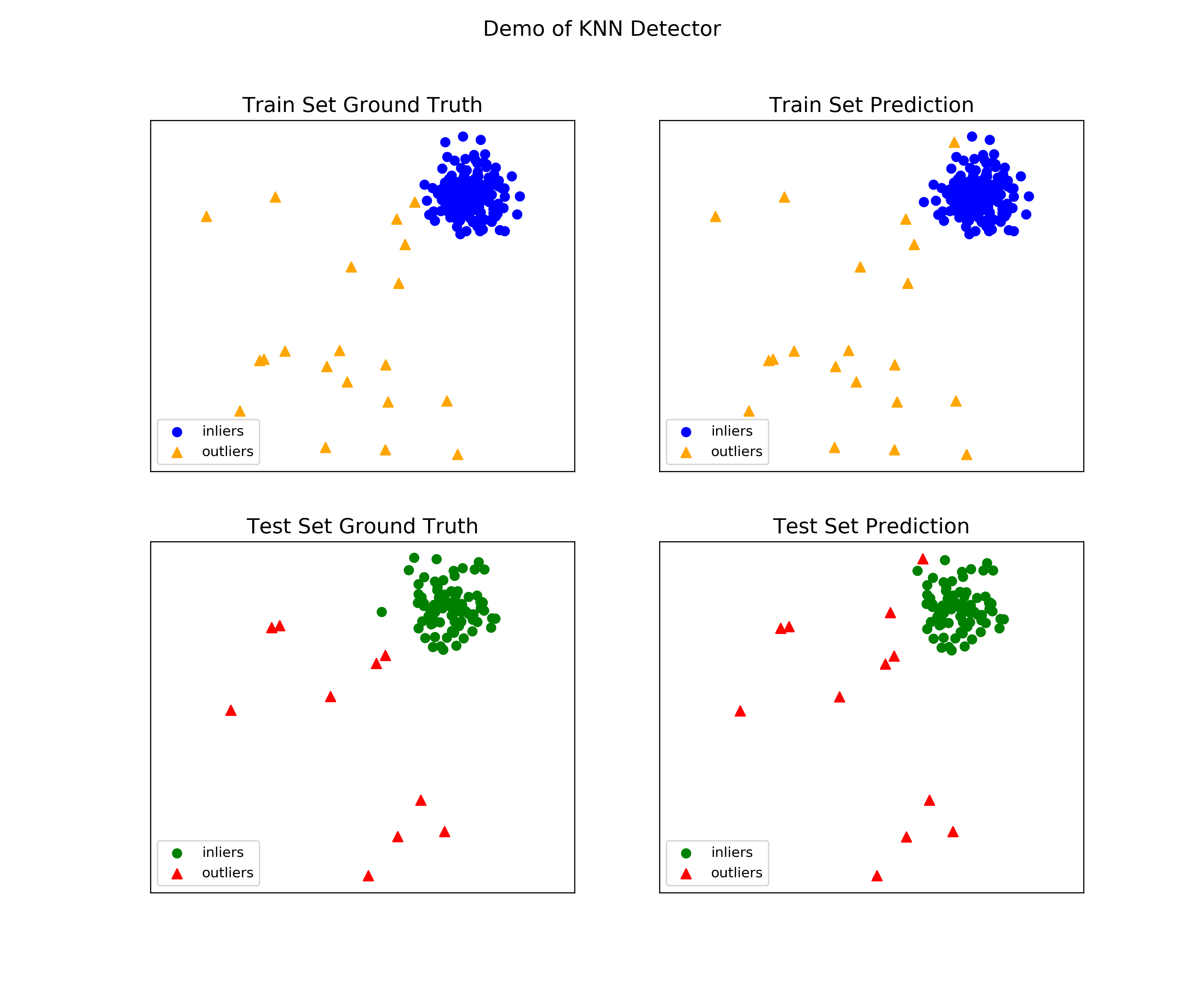

“examples/knn_example.py” demonstrates the basic API of the kNN detector. The API is consistent across all other algorithms.

More detailed instructions for running examples can be found in examples directory.

Initialize a kNN detector, fit the model, and make the prediction.

from pyod.models.knn import KNN # kNN detector

# train kNN detector

clf_name = 'KNN'

clf = KNN()

clf.fit(X_train)

# get the prediction label and outlier scores of the training data

y_train_pred = clf.labels_ # binary labels (0: inliers, 1: outliers)

y_train_scores = clf.decision_scores_ # raw outlier scores

# get the prediction on the test data

y_test_pred = clf.predict(X_test) # outlier labels (0 or 1)

y_test_scores = clf.decision_function(X_test) # outlier scores

# it is possible to get the prediction confidence as well

y_test_pred, y_test_pred_confidence = clf.predict(X_test, return_confidence=True) # outlier labels (0 or 1) and confidence in the range of [0,1]

Evaluate the prediction by ROC and Precision @ Rank n (p@n).

from pyod.utils.data import evaluate_print

# evaluate and print the results

print("\nOn Training Data:")

evaluate_print(clf_name, y_train, y_train_scores)

print("\nOn Test Data:")

evaluate_print(clf_name, y_test, y_test_scores)

See a sample output & visualization.

On Training Data:

KNN ROC:1.0, precision @ rank n:1.0

On Test Data:

KNN ROC:0.9989, precision @ rank n:0.9

from pyod.utils.example import visualize

visualize(clf_name, X_train, y_train, X_test, y_test, y_train_pred,

          y_test_pred, show_figure=True, save_figure=False)

Visualization (knn_figure):

How to Contribute

You are welcome to contribute to this exciting project:

Please first check the issue list for the “help wanted” tag and comment on the one you are interested in. We will assign the issue to you.

Fork the master branch and add your improvement/modification/fix.

Create a pull request to the development branch and follow the pull request template (PR template).

Automatic tests will be triggered. Make sure all tests pass, and that all added modules are accompanied by proper test functions.

To make sure the code has the same style and standard, please refer to abod.py, hbos.py, or feature_bagging.py as examples.

You are also welcome to share your ideas by opening an issue or dropping me an email at zhaoy@cmu.edu :)

Inclusion Criteria

Similarly to scikit-learn, we mainly consider well-established algorithms for inclusion. A rule of thumb is at least two years since publication, 50+ citations, and usefulness.

However, we encourage the author(s) of newly proposed models to share and add their implementations to PyOD to boost ML accessibility and reproducibility. This exception only applies if you can commit to maintaining your model for at least two years.



File details

Details for the file pyod-1.0.0.tar.gz.

File metadata

- Download URL: pyod-1.0.0.tar.gz

- Upload date:

- Size: 118.8 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.7.1 importlib_metadata/4.11.3 pkginfo/1.8.2 requests/2.27.1 requests-toolbelt/0.9.1 tqdm/4.63.0 CPython/3.8.13

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 7b3875c91dc6bc629c297f8ec2b59200fa8b1cbca986dfe4abd21e75560818af |
| MD5 | 1240839859742e910f3f0f468ad1d8d5 |
| BLAKE2b-256 | 683f9a89f5b727ca12a33530bd4e7a87d367e26c5ff4e8181a934655bc73a35c |