Pythinker Code is your next CLI agent.


Pythinker Code


Your terminal-native AI engineering agent.

Read code. Edit files. Run commands. Search the web. Plug into your IDE. All from the shell you already live in.


💡 What is Pythinker?

Pythinker Code is an open-source AI coding agent that lives in your terminal. Unlike chat-based assistants stuck behind a browser tab, Pythinker can read your repo, edit files, run shell commands, browse the web, and call MCP tools — all in a single iterative loop driven by the model of your choice.

It speaks the Agent Client Protocol (ACP), so it slots cleanly into ACP-aware editors like Zed and JetBrains. It loads Model Context Protocol (MCP) servers, so the same tools your other agents use just work. And it's hackable: subagents, skills, hooks, and plugins are all first-class extension points.

🎯 One agent, one shell, one workflow. No tab-switching. No context loss. No magic.

🆕 What's New in 2.1.0

A focused refresh of the TUI and slash-command UX.

- Selectors package: interactive /theme, /thinking, /model, /login, /settings, /extension, and /show-images panels replace the old numeric/text prompts.

- /thinking slash command: toggle reasoning effort live, mid-session.

- /settings panel: a real SettingsList over your Config (theme, default model, TUI style, default thinking, telemetry, loop limits, background tasks).

- Card-style TUI polish: bordered shell card, footer/toolbar, and a full set of tool renderers (read / write / edit / grep / find / bash / agent), plus a diff component. Subagent cards show a running-dots spinner while they work.

- Selector framework: SelectorHeader sentinel and per-row on_change callback for richer custom selectors.

- Prompt templates: discovery now covers ~/.pythinker/prompts and <project>/.pythinker/prompts. The legacy directory lookup has been retired.

- TUI style flag: only card (default) and pythinker are accepted; the legacy alias has been dropped.

Upgrade with pythinker update or pip install --upgrade pythinker-code.

✨ Features

🖥️ Terminal-First: Plan, edit, run, and verify without leaving your shell. Every action is visible, scriptable, and auditable.

⚡ Shell Command Mode: Press …

🧩 ACP IDE Integration: Run …

🔌 MCP Tool Loading: Manage stdio and HTTP MCP servers with …

🤖 Subagents & Skills: Delegate focused work to built-in subagents. Load reusable instructions via …

🪝 Hooks & Plugins: Observe or block tool execution with hook events. Install community extensions with …

🌐 Web & Visualization UIs: Optional web frontend and visualization frontend ship alongside the CLI for richer inspection workflows.

🤖 Bring Your Own Model: Swap providers and models per-session: …

Note: Built-in shell commands such as cd are not yet supported in shell command mode.

⚡ Quick Start

✨ Recommended install (clean, with logo)

curl -fsSL https://raw.githubusercontent.com/mohamed-elkholy95/Pythinker-Code/main/scripts/install.sh | sh

Windows PowerShell:

irm https://raw.githubusercontent.com/mohamed-elkholy95/Pythinker-Code/main/scripts/install.ps1 | iex

The installer fetches uv if missing, installs pythinker-code quietly, and prints a single-line confirmation instead of the full dependency wall.

🚀 One-off run with uvx

uvx pythinker-code

📦 Install as a uv tool

uv tool install pythinker-code

pythinker

🔐 Authenticate (optional)

For hosted Pythinker models or ACP terminal auth:

pythinker login

💬 Try it out

# Interactive session

pythinker

# One-shot prompt

pythinker --prompt "summarize this repository and suggest the next test to add"

# Pick a specific model

pythinker --model openai/gpt-5.5

# Inline config override

pythinker --config '{"default_thinking": true}'

🏠 Using Local Models (LM Studio & Ollama)

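Run Pythinker entirely on your own machine: no API key, no cloud. Pythinker talks to each runtime through its OpenAI-compatible API, so tools, streaming, JSON mode, vision, and reasoning_effort are requested the same way as with hosted providers. Whether a given feature works still depends on the model you load; vision, for example, needs a vision-capable model.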

LM Studio

1. Set up LM Studio.

- Install LM Studio and download at least one chat model.

- In the LM Studio app, open the model and raise its Context Length (gear icon → Context Length). See Context length matters below.

- Start the server: Developer → Status: Running (or run lms server start --port 1234).

2. Connect Pythinker.

pythinker login --lm-studio

This auto-discovers every chat-capable model loaded in LM Studio, registers each as lm-studio/<model-id>, and picks the largest-context one as your default. Embedding models are filtered out.

3. Use it.

# Default LM Studio model

pythinker -p "explain quicksort"

# Specific model

pythinker -m lm-studio/qwen/qwen3-coder-next -p "write a python http server"

# Interactive shell, then switch models with /model

pythinker

4. Disconnect.

pythinker logout --lm-studio

Ollama

# 1. start the server in one terminal

ollama serve

# 2. pull a model

ollama pull llama3.1:8b

# 3. connect Pythinker

pythinker login --ollama

# 4. use it

pythinker -p "explain monad transformers"

pythinker -m ollama/llama3.1:8b -p "..."

# 5. disconnect

pythinker logout --ollama

Discovery uses Ollama's /api/tags for the model list and /api/show per model to read the real context window.

Remote LM Studio / Ollama (LAN host or alternate port)

pythinker login --lm-studio --base-url http://192.168.1.10:1234/v1

pythinker login --ollama --base-url http://lan-box:11434/v1

The override is saved in your config and used by every subsequent run.

From inside the interactive shell

The same wiring is available as slash commands:

/login lm-studio # or /login lmstudio (no dash also accepted)

/login ollama

/logout lm-studio

/logout ollama

/login # opens a chooser; entries 9 and 10 are the local providers

/model lm-studio/google/gemma-4-e4b # switch model mid-session

⚠️ Context length matters (a common gotcha)

Pythinker's agent prompt (system instructions, tool schemas, skills, recent history, and your message) is large. The fixed part alone can run to tens of thousands of tokens before you've typed anything.

LM Studio loads a model with a small default context window (often 4096). If you start chatting against that, you'll see:

LLM provider error: Error: The number of tokens to keep from the initial prompt is greater than the context length (n_keep: 16690 >= n_ctx: 4096).

The shell now prints a friendly recovery hint when this happens, but the cure is in LM Studio:

- In LM Studio, open the model in the Chat tab and click the gear/settings icon (or My Models → Edit).

- Set Context Length to at least 32768, and prefer 131072 if your VRAM allows. In practice, 64k still triggers errors during longer sessions; 128k is a safer floor.

- Reload the model (LM Studio prompts you).

- Restart Pythinker so it picks up the new state (Ctrl+D, then pythinker, or pythinker -r <session-id> to resume).

Tip: the bigger you set the context, the more VRAM the model uses. If you OOM, try a smaller quantization (e.g., Q4_K_M instead of Q8_0) or a smaller model variant.

Ollama configures context per-request and Pythinker reads the model's max from /api/show, so this gotcha is mostly LM-Studio-specific.

VRAM-friendly model picks

Local models vary wildly in memory use. Rough guide on a 16 GB GPU (e.g., RTX 5080 mobile):

| Model size | Quant | Approx. VRAM | Fits 16 GB? |
|---|---|---|---|
| 2-4 B | Q4-Q8 | 2-4 GB | Yes, easily |
| 7-8 B | Q4 | 5-6 GB | Yes |
| 7-8 B | Q8 | 8-9 GB | Yes |
| 13-14 B | Q4 | 8-10 GB | Yes |
| 27-31 B | Q4 | 17-20 GB | Tight / no |
| 27-31 B | Q8 | 30-35 GB | No |

If LM Studio errors with Failed to load model, you've exceeded VRAM — pick a smaller model or lower-bit quantization.
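The table follows from simple arithmetic: weight memory is roughly parameters × bits per weight ÷ 8. The rough estimator below rests on three assumptions:

- Common Q4 quantizations use about 4.5 bits per weight.
- Q8_0 uses about 8.5 bits per weight.
- A flat overhead covers the KV cache and runtime.

That overhead actually grows with context length, so treat the result as a lower bound. `weight_memory_gb` and `fits_in_vram` are hypothetical names.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB: 1e9 params at 8 bits is about 1 GB."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 vram_gb: float = 16.0, overhead_gb: float = 1.5) -> bool:
    """Crude fit check: weights plus a fixed overhead must not exceed VRAM."""
    return weight_memory_gb(params_billion, bits_per_weight) + overhead_gb <= vram_gb


for params, bits, label in [(8, 4.5, "8B Q4"), (8, 8.5, "8B Q8"), (14, 4.5, "14B Q4"), (31, 4.5, "31B Q4")]:
    gb = weight_memory_gb(params, bits)
    print(f"{label}: ~{gb:.1f} GB of weights, fits 16 GB: {fits_in_vram(params, bits)}")
```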

Environment variables

These override the defaults at both login and runtime:

| Variable | Purpose |
|---|---|
| LM_STUDIO_BASE_URL | Override the default http://localhost:1234/v1 |
| LM_STUDIO_API_KEY | Set if you've enabled token auth in LM Studio |
| OLLAMA_BASE_URL | Override the default http://localhost:11434/v1 |
| OLLAMA_API_KEY | Rarely needed (Ollama is unauthenticated by default) |

Example:

LM_STUDIO_BASE_URL=http://workstation.lan:1234/v1 pythinker -p "..."

Refreshing the model list

If you load/unload models in LM Studio (or ollama pull/rm), re-run login to refresh:

pythinker login --lm-studio # or --ollama

(Pythinker intentionally does NOT auto-refresh local providers in the background — login owns that state, so manual edits to your config aren't silently overwritten.)

🧩 IDE Integration via ACP

Pythinker speaks Agent Client Protocol natively. Point your ACP-compatible editor at pythinker acp and you get a multi-session agent server inside your IDE.

📝 Configuration for Zed / JetBrains

{
  "agent_servers": {
    "Pythinker Code": {
      "type": "custom",
      "command": "pythinker",
      "args": ["acp"],
      "env": {}
    }
  }
}
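If you generate editor settings from a script, you can merge the entry above into an existing settings object. This is a hypothetical helper, not part of Pythinker or any editor. Editor settings files often allow comments, which `json.loads` rejects, so it suits generated or comment-free settings. Where the settings file lives depends on your editor and is not covered here.

```python
import json
from typing import Any

PYTHINKER_AGENT = {"type": "custom", "command": "pythinker", "args": ["acp"], "env": {}}


def with_pythinker_agent(settings: dict[str, Any]) -> dict[str, Any]:
    """Return a copy of `settings` with the Pythinker ACP server registered."""
    merged = json.loads(json.dumps(settings))  # deep copy of plain JSON data
    servers = merged.setdefault("agent_servers", {})
    # Copy the template too, so callers can't mutate PYTHINKER_AGENT through the result.
    servers["Pythinker Code"] = json.loads(json.dumps(PYTHINKER_AGENT))
    return merged


print(json.dumps(with_pythinker_agent({"theme": "One Dark"}), indent=2))
```

Returning a copy instead of editing in place keeps other agent_servers entries and unrelated settings untouched.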

The ACP server provides:

| Capability | Description |
|---|---|
| 🔑 Terminal auth | pythinker login flow exposed to the IDE |
| 📂 Session listing & resume | Pick up where you left off |
| 🔄 Hot model swap | Change models for a running ACP session |

🔌 MCP Tooling

Pythinker loads Model Context Protocol tools from persistent config or ad-hoc files. Same tools, every agent — no rewriting.

🛠️ Manage persistent MCP servers

# 🌐 Streamable HTTP server with API key

pythinker mcp add --transport http context7 https://mcp.context7.com/mcp \
  --header "CONTEXT7_API_KEY: ctx7sk-your-key"

# 🔐 Streamable HTTP server with OAuth

pythinker mcp add --transport http --auth oauth linear https://mcp.linear.app/mcp

# 💻 stdio server

pythinker mcp add --transport stdio chrome-devtools -- npx chrome-devtools-mcp@latest

# 📋 List, authorize, test, and remove

pythinker mcp list

pythinker mcp auth linear

pythinker mcp test chrome-devtools

pythinker mcp remove chrome-devtools

📄 Use an ad-hoc MCP config file

{
  "mcpServers": {
    "context7": {
      "url": "https://mcp.context7.com/mcp",
      "headers": {
        "CONTEXT7_API_KEY": "YOUR_API_KEY"
      }
    },
    "chrome-devtools": {
      "command": "npx",
      "args": ["-y", "chrome-devtools-mcp@latest"]
    }
  }
}

pythinker --mcp-config-file /path/to/mcp.json
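Below is a pre-flight check for such a file, written as a hypothetical helper rather than anything Pythinker ships. It encodes the two server shapes in the example: url plus optional headers for HTTP, and command plus optional args for stdio. The "exactly one of url or command" rule is an assumption drawn from that example, not a documented schema.

```python
import json
from pathlib import Path
from typing import Any


def validate_mcp_config(config: dict[str, Any]) -> list[str]:
    """Return human-readable problems; an empty list means the file looks usable."""
    servers = config.get("mcpServers")
    if not isinstance(servers, dict):
        return ["top-level 'mcpServers' object is missing"]
    problems: list[str] = []
    for name, spec in servers.items():
        if not isinstance(spec, dict):
            problems.append(f"{name}: server entry must be an object")
            continue
        has_url, has_command = "url" in spec, "command" in spec
        if has_url == has_command:  # both or neither
            problems.append(f"{name}: set exactly one of 'url' (HTTP) or 'command' (stdio)")
        if not isinstance(spec.get("args", []), list):
            problems.append(f"{name}: 'args' must be a list")
        if not isinstance(spec.get("headers", {}), dict):
            problems.append(f"{name}: 'headers' must be an object")
    return problems


def load_and_check(path: Path) -> list[str]:
    """Read a JSON MCP config file and validate it."""
    return validate_mcp_config(json.loads(path.read_text(encoding="utf-8")))
```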

🧬 Extensibility

Pythinker is a small, extensible runtime — not a monolith. Build on it.

| Extension Point | What it does | Where to look |
|---|---|---|
| 🤖 Agents & subagents | YAML specs define tools, prompts, and built-in subagent types | src/pythinker_code/agents/ |
| 🎓 Skills | /skill:<name> loads reusable instructions on demand | bundled & user-defined |
| 🌊 Flows | /flow:<name> executes bundled prompt flows | bundled & user-defined |
| 🪝 Hooks | Observe or block tool execution; integrate policy or automation | hook events API |
| 🧩 Plugins | Installable extension packages | pythinker plugin |

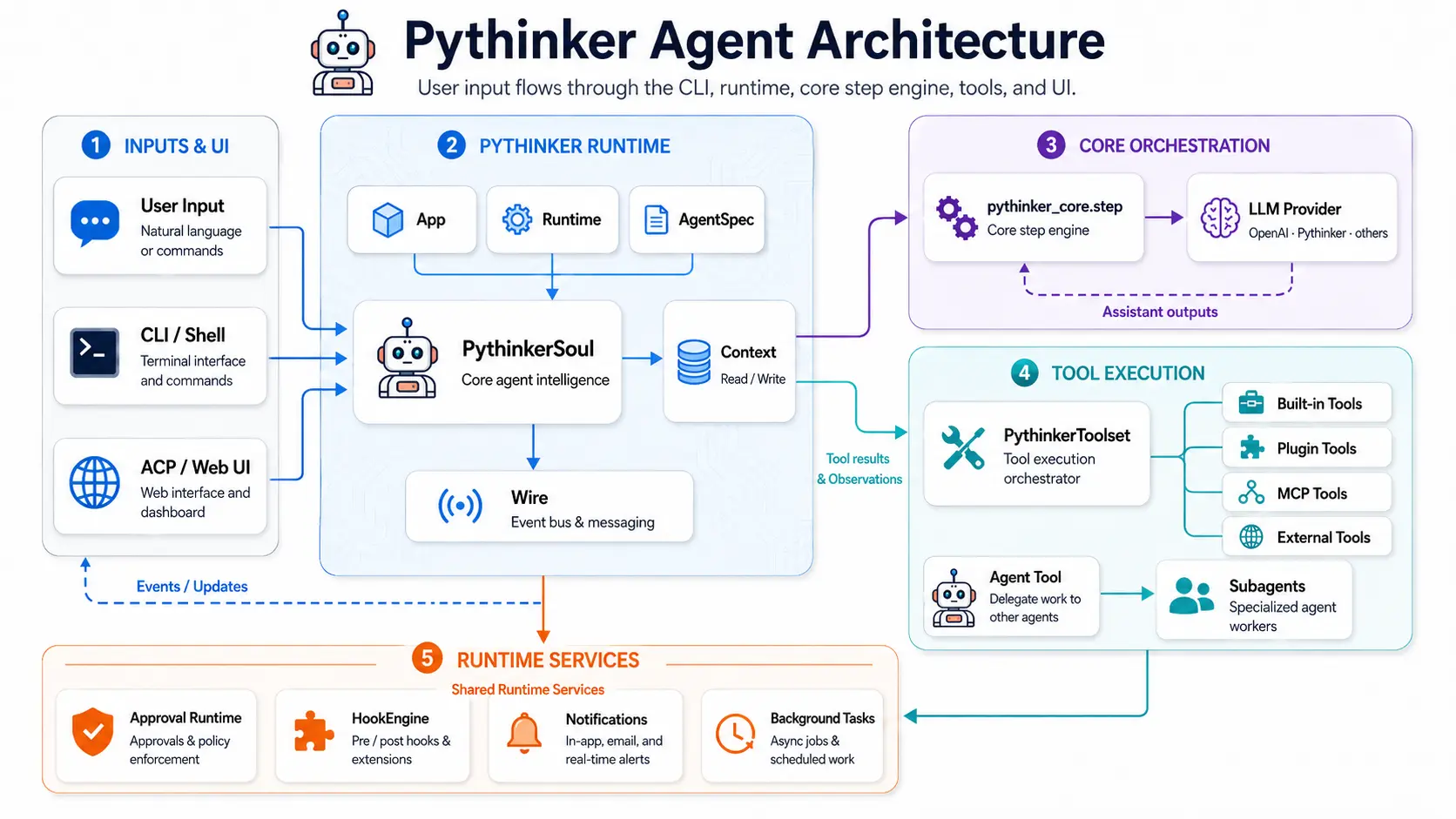

🏗️ Architecture

🔐 Privacy & Telemetry

Pythinker is the agent framework, not the LLM. You bring your own API key (OpenAI, Anthropic, your local LM Studio model, etc.); your prompts and the model's responses go directly between your terminal and the model provider you configured. Pythinker never sees, stores, or forwards them.

To improve the framework itself, we collect a small amount of diagnostic telemetry about how the agent runs. It is strictly anonymous and never includes your prompts, model output, file contents, file paths, or any user-identifying data. It travels over two channels:

| Channel | What lands there | Endpoint |
|---|---|---|
| Errors (Sentry protocol) | Unhandled exceptions and crash stack traces, with absolute paths above site-packages/ rewritten to <env>/ so home directories don't leak | errors.pythinker.com (self-hosted Bugsink) |
| Traces + structured logs (OpenTelemetry) | Lifecycle events (session_started, started, model_switch), agent-loop spans (pythinker.turn / pythinker.llm / pythinker.tool), and per-event counters | otel.pythinker.com (self-hosted SigNoz) |
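Here is one reading of the path-scrubbing rule in the Errors row, as a sketch: everything before the site-packages/ directory in a frame's file path is replaced with <env>/. `scrub_path` is a hypothetical name. Whether the real scrubber keeps the site-packages segment is an assumption.

```python
import re

# Lazily match everything up to the first path separator that precedes "site-packages".
_ENV_PREFIX = re.compile(r"^.*?[\\/](?=site-packages[\\/])")


def scrub_path(path: str) -> str:
    """Replace the environment-specific prefix before site-packages/ with <env>/."""
    return _ENV_PREFIX.sub("<env>/", path, count=1)


print(scrub_path("/home/alice/.venv/lib/python3.13/site-packages/httpx/_client.py"))
```

Paths that do not contain site-packages pass through unchanged, so this rule on its own only covers installed libraries.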

What we collect

- Lifecycle events: session start, command-line flags actually used (booleans only), startup timing, model name (just the identifier, e.g. claude-opus-4-7), thinking-mode toggle, plan-mode toggle.

- Agent-loop spans: turn duration, step count, stop reason (no_tool_calls / max_steps / error), tool name (Read, Bash, Edit, …), tool success/failure, tool duration, LLM call duration, input/output token counts (numbers only, never the content).

- Crashes: exception class name, scrubbed stack trace, library versions. We do not send local variable values.

- Static context: pythinker version, OS family, Python version, terminal type (TERM_PROGRAM), CI flag (presence of the CI env var), locale.

- A persistent, random device_id so we can count "how many distinct installs" without identifying a person.

What we never collect

- Your prompts, the model's responses, or any conversation content

- File contents, file paths, working directory names, or workspace structure

- Your API keys, OAuth tokens, environment variables

- Your real name, email, IP address, hostname (host name field is dropped at the edge collector)

- Tool arguments (e.g. what file you read, what command you ran)

Opting out

Pick whichever fits your workflow — all three are equivalent:

# 1. Per-invocation CLI flag

pythinker --no-telemetry

# 2. Environment variable (works in shells, .env files, CI configs)

export PYTHINKER_DISABLE_TELEMETRY=1

pythinker

# 3. Permanently in your config file (~/.pythinker/config.toml)

[default]

telemetry = false

Setting any of these at startup short-circuits Sentry initialization, OTel exporter creation, and the in-process event sink. No network requests are made to the telemetry endpoints.

Pointing telemetry at your own infrastructure

If you operate pythinker for a team and want telemetry routed to your own SigNoz / Bugsink instead, override the endpoints via environment variables:

export PYTHINKER_SENTRY_DSN="https://<key>@your-bugsink.example.com/<project>"

export PYTHINKER_OTEL_ENDPOINT="https://your-otel-collector.example.com"

export PYTHINKER_OTEL_TOKEN="<your bearer token>"

The defaults point at infrastructure operated by the pythinker maintainers; you don't need to set anything to use them.
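Before exporting PYTHINKER_SENTRY_DSN, it can help to confirm the value has the standard Sentry DSN shape shown above: the public key in the user-info part and the project ID as the last path segment. This is a hypothetical checker:

```python
from urllib.parse import urlsplit


def parse_sentry_dsn(dsn: str) -> dict[str, str]:
    """Split a Sentry-style DSN (scheme://<key>@<host>/<project>) into its parts."""
    parts = urlsplit(dsn)
    project = parts.path.rstrip("/").rsplit("/", 1)[-1]
    if parts.scheme not in {"http", "https"} or not parts.username or not parts.hostname or not project:
        raise ValueError(f"not a Sentry-style DSN: {dsn!r}")
    return {"key": parts.username, "host": parts.hostname, "project": project}
```

For example, parse_sentry_dsn(os.environ["PYTHINKER_SENTRY_DSN"]) raises early when the key or project ID is missing, instead of failing silently at crash time.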

🛠️ Development

🏁 Prepare the workspace

git clone https://github.com/mohamed-elkholy95/Pythinker-Code.git

cd Pythinker-Code

make prepare

🧰 Common commands

▶️ Run & iterate

uv run pythinker # CLI from source
make format # format all packages
make check # lint + type-check

🧪 Test

make test # all unit + e2e tests
make ai-test # AI-driven tests
make test-pythinker-code # CLI only
make test-pythinker-core # Core only
make test-pythinker-host # Host only
make test-pythinker-sdk # SDK only

🌐 Frontends

make web-back # web backend
make web-front # web frontend
make vis-back # vis backend
make vis-front # vis frontend

📦 Build

make build # Python packages
make build-bin # standalone binary
make help # all targets

💡 make build and make build-bin build and embed the web and visualization frontends before packaging.

🗂️ Project Layout

pythinker-code/

├── 📦 src/pythinker_code/ CLI runtime · tools · UIs · ACP · MCP · hooks · plugins · skills · web · vis backends

├── 🧱 packages/

│ ├── pythinker-core/ Provider-agnostic message, tool, and chat-provider abstractions

│ ├── pythinker-host/ Local/remote host filesystem and command execution

│ └── pythinker-code/ Console-script distribution package

├── 🧰 sdks/pythinker-sdk/ Python SDK

└── 🧪 tests/ · tests_e2e/ · tests_ai/ Unit · wire/CLI e2e · AI-driven test suites

🤝 Contributing

Contributions are warmly welcome — bug reports, PRs, plugins, skills, and docs all help.

- 📖 Start with CONTRIBUTING.md

- 🔐 See SECURITY.md for responsible disclosure

- 📜 Skim AGENTS.md for the agent design notes

If Pythinker helps you, a ⭐ on GitHub goes a long way.

📜 License

Distributed under the Apache-2.0 License. See LICENSE for the full text and NOTICE for attributions.

Built with ❤️ for engineers who live in the terminal.

🌐 pythinker.com · 📦 PyPI · 🐙 GitHub · 🧩 ACP · 🔌 MCP


File details

Details for the file pythinker_code-2.1.1.tar.gz.

File metadata

- Download URL: pythinker_code-2.1.1.tar.gz

- Upload date:

- Size: 5.9 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | ed0d37586503eff728d0e2a08469bd1dfba7606d97c06c80f4a0a8f0bce54acf |
| MD5 | 679ac074f4b1095f04676094e7f40a7e |
| BLAKE2b-256 | 3da893c14d3cdcdba8ecc96dbc2088074332e394e67a436735ae75b462db6d19 |

File details

Details for the file pythinker_code-2.1.1-py3-none-any.whl.

File metadata

- Download URL: pythinker_code-2.1.1-py3-none-any.whl

- Upload date:

- Size: 6.3 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 62a8d3ff6a90fcf3d26b8f3f58255c74471533829f69b63d40c7548673350ccb |
| MD5 | b2168a8ee9d8fef827044dd56fa611ab |
| BLAKE2b-256 | 13e21d8bfc2a1b8dc97f9dda760cd53f49268737f465c3e6b322020635b3f113 |
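To check a downloaded file against the SHA256 digests listed for these files, hash it locally and compare. Below is a minimal sketch using only the standard library; `sha256_of` and `verify` are illustrative names.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hex SHA-256 of a file, read in 1 MiB chunks so large wheels aren't loaded at once."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(path: Path, expected_sha256: str) -> bool:
    """Compare against a published digest, ignoring case and surrounding whitespace."""
    return sha256_of(path) == expected_sha256.strip().lower()
```

For the wheel, call verify(Path("pythinker_code-2.1.1-py3-none-any.whl"), "62a8d3ff6a90fcf3d26b8f3f58255c74471533829f69b63d40c7548673350ccb"). pip can also enforce digests itself. In hash-checking mode (--require-hashes, or any --hash=sha256:... entry in a requirements file), it refuses packages whose digest does not match.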