A library of procedural dataset generators for training reasoning models

Reasoning Gym

🧠 About

Reasoning Gym is a community-created Python library of procedural dataset generators and algorithmically verifiable reasoning environments for training reasoning models with reinforcement learning (RL). The goal is to generate virtually infinite training data with adjustable complexity.

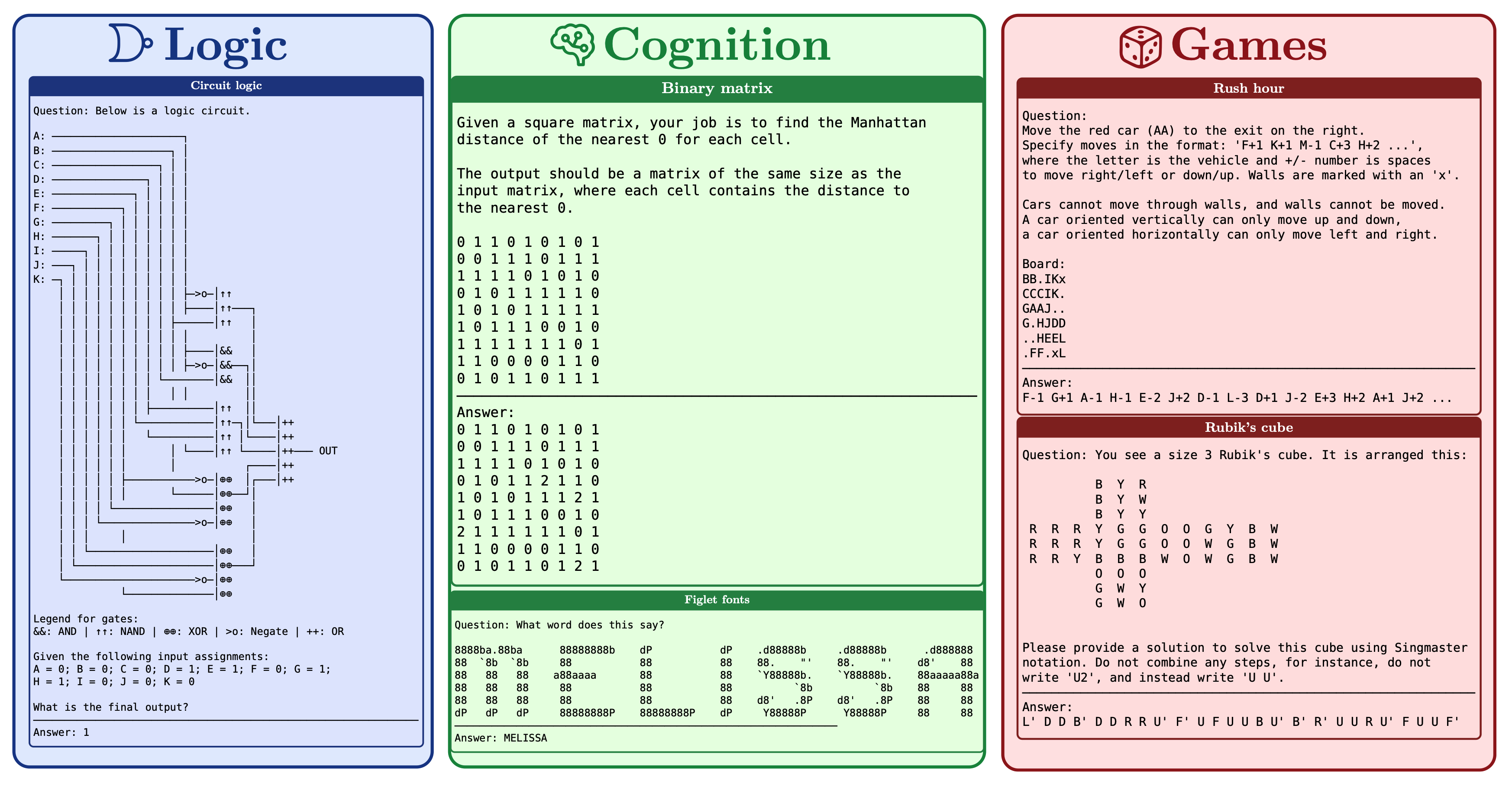

It currently provides more than 100 tasks over many domains, including but not limited to algebra, arithmetic, computation, cognition, geometry, graph theory, logic, and many common games.

Some tasks have a single correct answer, while others, such as Rubik's Cube and Countdown, have many correct solutions. To support this, we provide a standard interface for procedurally verifying solutions.
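To illustrate why tasks with many correct solutions need a procedural check rather than a string comparison, here is a minimal sketch of a Countdown-style verifier. It is not the library's implementation: the function name `score_countdown`, the rule that every given number must be used exactly once, and the set of allowed operators are all assumptions made for this example.

```python
import ast
import operator
from collections import Counter

# Binary operators allowed in a candidate expression (an assumption of this sketch).
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def _evaluate(node, used):
    """Evaluate a parsed arithmetic expression, recording each integer literal in `used`."""
    if isinstance(node, ast.Constant) and type(node.value) is int:
        used.append(node.value)
        return node.value
    if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
        left = _evaluate(node.left, used)
        right = _evaluate(node.right, used)
        return _OPS[type(node.op)](left, right)
    raise ValueError("unsupported expression")


def score_countdown(answer, numbers, target):
    """Hypothetical scorer: 1.0 if `answer` uses exactly `numbers` and evaluates to `target`."""
    used = []
    try:
        value = _evaluate(ast.parse(answer, mode="eval").body, used)
    except (SyntaxError, TypeError, ValueError, ZeroDivisionError):
        return 0.0  # malformed or unsupported answers earn no reward
    if Counter(used) != Counter(numbers):
        return 0.0  # wrong multiset of numbers
    return 1.0 if abs(value - target) < 1e-9 else 0.0
```

With this sketch, `score_countdown("2 + 3 * 4", [2, 3, 4], 14)` and `score_countdown("4 * 3 + 2", [2, 3, 4], 14)` both return 1.0, even though the two answers differ as strings.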

🖼️ Dataset Gallery

In GALLERY.md, you can find example outputs of all datasets available in reasoning-gym.

⬇️ Installation

The reasoning-gym package requires Python >= 3.10.

Install the latest published package from PyPI via pip:

pip install reasoning-gym

Note that this project is currently under active development, and the version published on PyPI may be a few days behind main.

✨ Quickstart

Generating tasks with Reasoning Gym is straightforward:

import reasoning_gym

data = reasoning_gym.create_dataset('leg_counting', size=10, seed=42)
for i, x in enumerate(data):
    # double quotes inside the replacement fields keep this valid on Python 3.10 and 3.11
    print(f'{i}: q="{x["question"]}", a="{x["answer"]}"')
    print('metadata:', x['metadata'])
    # use the dataset's `score_answer` method for algorithmic verification
    assert data.score_answer(answer=x['answer'], entry=x) == 1.0

Output:

0: q="How many legs are there in total if you have 1 sea slug, 1 deer?", a="4"

metadata: {'animals': {'sea slug': 1, 'deer': 1}, 'total_legs': 4}

1: q="How many legs are there in total if you have 2 sheeps, 2 dogs?", a="16"

metadata: {'animals': {'sheep': 2, 'dog': 2}, 'total_legs': 16}

2: q="How many legs are there in total if you have 1 crab, 2 lobsters, 1 human, 1 cow, 1 bee?", a="42"

...

Use keyword arguments to pass task-specific configuration values:

reasoning_gym.create_dataset('leg_counting', size=10, seed=42, max_animals=20)
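As a rough mental model of how `size`, `seed`, and a task-specific value such as `max_animals` could drive a procedural generator, the following self-contained sketch builds a toy leg-counting task. Everything in it is hypothetical: `ToyLegCountingConfig`, `generate`, the `LEGS` table, and the question wording are invented for illustration and do not reflect the library's internals.

```python
import random
from dataclasses import dataclass

# Hypothetical leg counts for the toy task; not the library's data.
LEGS = {"bee": 6, "crab": 10, "deer": 4, "dog": 4, "human": 2}


@dataclass
class ToyLegCountingConfig:
    size: int = 10        # number of entries to generate
    seed: int = 42        # makes generation reproducible
    max_animals: int = 3  # complexity knob: upper bound on distinct animal kinds


def generate(config):
    """Yield question/answer/metadata entries determined entirely by `config`."""
    for idx in range(config.size):
        # A per-entry RNG means entry `idx` is the same no matter how it is reached.
        rng = random.Random(config.seed + idx)
        n_kinds = rng.randint(1, min(config.max_animals, len(LEGS)))
        kinds = rng.sample(sorted(LEGS), n_kinds)
        animals = {kind: rng.randint(1, 3) for kind in kinds}
        total = sum(LEGS[kind] * count for kind, count in animals.items())
        listing = ", ".join(f"{count} x {kind}" for kind, count in animals.items())
        yield {
            "question": f"How many legs are there in total if you have {listing}?",
            "answer": str(total),
            "metadata": {"animals": animals, "total_legs": total},
        }
```

Calling `list(generate(ToyLegCountingConfig(max_animals=5)))` twice yields identical entries, and raising `max_animals` allows longer, harder questions.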

Create a composite dataset containing multiple task types, with optional relative task weightings:

import reasoning_gym
from reasoning_gym.composite import DatasetSpec

specs = [
    # here, leg_counting tasks will make up two thirds of tasks
    DatasetSpec(name='leg_counting', weight=2, config={}),  # default config
    DatasetSpec(name='figlet_font', weight=1, config={"min_word_len": 4, "max_word_len": 6}),  # specify config
]
reasoning_gym.create_dataset('composite', size=10, seed=42, datasets=specs)
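The "two thirds" figure follows from normalising the relative weights: 2 / (2 + 1). The sketch below shows that arithmetic and one plausible way to draw task names in proportion to their weights. The helper names `expected_shares` and `sample_task_names` are made up for this example, and the sampling scheme is an assumption rather than a description of how the composite dataset is implemented.

```python
import random


def expected_shares(weights):
    """Normalise relative weights into the expected fraction of entries per task."""
    total = sum(weights.values())
    return {name: weight / total for name, weight in weights.items()}


def sample_task_names(weights, size, seed):
    """Draw `size` task names with probability proportional to their weights."""
    rng = random.Random(seed)  # seeded, so the draw is reproducible
    names = list(weights)
    return rng.choices(names, weights=[weights[name] for name in names], k=size)


weights = {"leg_counting": 2, "figlet_font": 1}
shares = expected_shares(weights)  # leg_counting: 2/3, figlet_font: 1/3
```

For a small `size` the realised mix can deviate noticeably from these expected shares; the proportions only hold on average.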

For the simplest way to get started training models with Reasoning Gym, we recommend using the verifiers library, which directly supports RG tasks. See examples/verifiers for details. However, RG data can be used with any major RL training framework.

🔍 Evaluation

Instructions for running the evaluation scripts are provided in eval/README.md.

Evaluation results of different reasoning models will be tracked in the reasoning-gym-eval repo.

🤓 Training

The training/ directory has full details of the training runs we carried out with RG for the paper. In our experiments, we utilise custom Dataset code to dynamically create RG samples at runtime, and to access the RG scoring function for use as a training reward. See training/README.md to reproduce our runs.

For a more plug-and-play experience, it may be easier to build a dataset ahead of time. See scripts/hf_dataset/ for a simple script that generates RG data and converts it to a HuggingFace dataset. To use the script, define your dataset configurations in the YAML file. You can find a list of tasks and configurable parameters in the dataset gallery. Then run save_hf_dataset.py with the desired arguments.

The script will save each dataset entry as a row with question, answer, and metadata columns. The RG scoring functions take the entry object from each row together with the model response and return a reward value. Calling the scoring function is therefore simple:

from reasoning_gym import get_score_answer_fn

# `dataset` holds the saved rows; `generate_response` stands in for your own model call
for entry in dataset:
    model_response = generate_response(entry["question"])
    rg_score_fn = get_score_answer_fn(entry["metadata"]["source_dataset"])
    score = rg_score_fn(model_response, entry)
    # do something with the score...
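To make the data flow of that loop concrete without installing the library or calling a model, here is a stand-in version that runs on its own. The `exact_match` scorer, the `scorers` registry, and `mean_reward` are hypothetical placeholders for the real scoring functions; only the row layout (question, answer, and metadata with a `source_dataset` key) is taken from the description above.

```python
def exact_match(answer, entry):
    """Toy scorer: 1.0 when the stripped response equals the stored answer, else 0.0."""
    if answer is None:
        return 0.0
    return 1.0 if answer.strip() == entry["answer"] else 0.0


def mean_reward(rows, respond, scorers):
    """Score every row with the scorer registered for its source dataset and average."""
    scores = []
    for row in rows:
        score_fn = scorers[row["metadata"]["source_dataset"]]
        scores.append(score_fn(respond(row["question"]), row))
    return sum(scores) / len(scores) if scores else 0.0


# Two rows shaped like the saved dataset entries described above.
rows = [
    {"question": "legs of 1 deer?", "answer": "4",
     "metadata": {"source_dataset": "leg_counting"}},
    {"question": "legs of 2 dogs?", "answer": "8",
     "metadata": {"source_dataset": "leg_counting"}},
]
scorers = {"leg_counting": exact_match}  # hypothetical registry keyed by dataset name
reward = mean_reward(rows, lambda question: " 4 ", scorers)
```

Here the placeholder "model" always answers 4, so one of the two rows is scored as correct. In a training loop the per-row scores, rather than the mean, would typically serve as the rewards.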

👷 Contributing

Please see CONTRIBUTING.md.

If you have ideas for dataset generators, please open an issue in the project repository or contact us in the #reasoning-gym channel of the GPU-Mode Discord server.

🚀 Projects Using Reasoning Gym

The following is a list of awesome projects building on top of Reasoning Gym:

- Verifiers: Reinforcement Learning with LLMs in Verifiable Environments

- (NVIDIA) ProRL: Prolonged Reinforcement Learning Expands Reasoning Boundaries in Large Language Models

- (Nous Research) Atropos - an LLM RL Gym

- (PrimeIntellect) SYNTHETIC-2: a massive open-source reasoning dataset

- (Gensyn) RL Swarm: a framework for planetary-scale collaborative RL

- (Axon RL) GEM: a comprehensive framework for RL environments

- (FAIR at Meta) OptimalThinkingBench: Evaluating Over and Underthinking in LLMs

- (Gensyn) Sharing is Caring: Efficient LM Post-Training with Collective RL Experience Sharing

- (MILA) Self-Evolving Curriculum for LLM Reasoning

- (MILA) Recursive Self-Aggregation Unlocks Deep Thinking in Large Language Models

- (NVIDIA) BroRL: Scaling Reinforcement Learning via Broadened Exploration

- (NVIDIA) Nemotron 3 Super: Open, Efficient Mixture-of-Experts Hybrid Mamba-Transformer Model for Agentic Reasoning

- (Apple) Multilingual Reasoning Gym: Multilingual Scaling of Procedural Reasoning Environments

📝 Citation

If you use this library in your research, please cite the paper:

@misc{stojanovski2025reasoninggymreasoningenvironments,
      title={REASONING GYM: Reasoning Environments for Reinforcement Learning with Verifiable Rewards},
      author={Zafir Stojanovski and Oliver Stanley and Joe Sharratt and Richard Jones and Abdulhakeem Adefioye and Jean Kaddour and Andreas Köpf},
      year={2025},
      eprint={2505.24760},
      archivePrefix={arXiv},
      primaryClass={cs.LG},
      url={https://arxiv.org/abs/2505.24760},
}

File details

Details for the file reasoning_gym-0.1.25.tar.gz.

File metadata

- Download URL: reasoning_gym-0.1.25.tar.gz

- Upload date:

- Size: 6.8 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.11.11

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 932247c760dd23e09fb14d013d29ec4c8804e62846bd50473f6915dc854adad2 |
| MD5 | 275acddceec11d32ba5d982108ef4e1c |
| BLAKE2b-256 | f07f4023ce1f8f961ac1d9bf6c0b054c8c7dd4eceaaa8b064f53208306afe0ca |

File details

Details for the file reasoning_gym-0.1.25-py3-none-any.whl.

File metadata

- Download URL: reasoning_gym-0.1.25-py3-none-any.whl

- Upload date:

- Size: 7.0 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.11.11

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 7f17a3eddb13c015d7d4a755ed576a061df889faf9468bcc2cca334ebe9e0435 |
| MD5 | c061a7ff761dc2369126d8fe51bdc0b2 |
| BLAKE2b-256 | 4221bb9f6d2f76424f8b516fe83f8026e52e466b0202341bb4ee7a9861e06277 |