RL4CO: an Extensive Reinforcement Learning for Combinatorial Optimization Benchmark


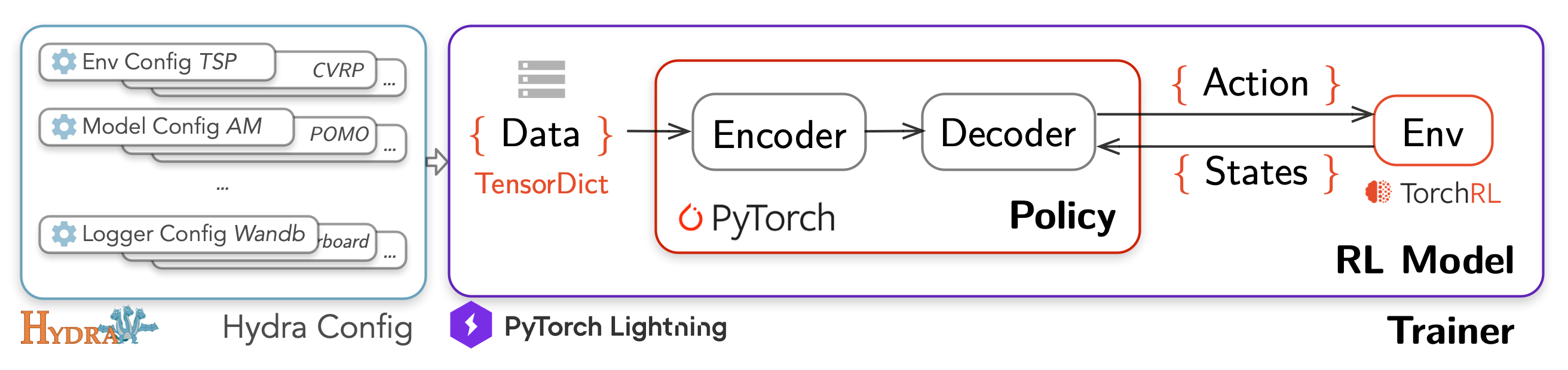

An extensive Reinforcement Learning (RL) for Combinatorial Optimization (CO) benchmark. Our goal is to provide a unified framework for RL-based CO algorithms, and to facilitate reproducible research in this field, decoupling the science from the engineering.

RL4CO is built upon:

- TorchRL: official PyTorch framework for RL algorithms and vectorized environments on GPUs

- TensorDict: a library to easily handle heterogeneous data such as states, actions and rewards

- PyTorch Lightning: a lightweight PyTorch wrapper for high-performance AI research

- Hydra: a framework for elegantly configuring complex applications

Getting started

RL4CO is now available for installation on pip!

pip install rl4co

Local install and development

If you want to develop RL4CO or access the latest builds, we recommend installing it locally with pip in editable mode:

git clone https://github.com/kaist-silab/rl4co && cd rl4co

pip install -e .

[Optional] Automatically install PyTorch with correct CUDA version

These commands will automatically install PyTorch with the right GPU version for your system:

pip install light-the-torch

python3 -m light_the_torch install -r --upgrade torch

Note: conda is also a good candidate for hassle-free installation of PyTorch; check out the PyTorch website for more details.
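After installing, it can help to confirm that the packages are importable in the active environment. The sketch below is one way to do that with the standard library only. The helper names (`is_importable`, `report`) are hypothetical, and the import names in `MODULES` are assumptions about how each dependency is exposed (PyTorch Lightning, for instance, may be importable under a different name depending on the installed version).

```python
import importlib.util

# Assumed top-level import names for RL4CO and the libraries it builds on.
MODULES = ["rl4co", "torch", "torchrl", "tensordict", "lightning", "hydra"]


def is_importable(module_name):
    """Return True if a top-level module can be located without importing it."""
    return importlib.util.find_spec(module_name) is not None


def report(module_names):
    """Map each module name to whether it was found in this environment."""
    return {name: is_importable(name) for name in module_names}


for name, found in report(MODULES).items():
    print(f"{name:12s} {'found' if found else 'missing'}")
```

A `missing` entry only means the module was not found under that assumed name, so it is worth double-checking with `pip list` before reinstalling anything.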

To get started, we recommend checking out our quickstart notebook or the minimalistic example below.

Usage

Train a model with the default configuration (Attention Model, AM, on the TSP environment):

python run.py

Change experiment

Train a model with a chosen experiment configuration from configs/experiment/ (e.g. routing/am, with an environment of 42 cities):

python run.py experiment=routing/am env.num_loc=42

Disable logging

python run.py experiment=routing/am logger=none '~callbacks.learning_rate_monitor'

Note that ~ is used to disable a callback that would need a logger.

Create a sweep over hyperparameters (-m for multirun)

python run.py -m experiment=routing/am train.optimizer.lr=1e-3,1e-4,1e-5
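To see what the multirun command above launches, the following sketch expands comma-separated override values into one override list per job, taking the Cartesian product across all swept keys. It is a simplified model written for illustration, not Hydra's own parser: the function `expand_sweep` is hypothetical, and it ignores quoting, ranges, and the other features of the real override grammar.

```python
from itertools import product


def expand_sweep(overrides):
    """Expand comma-separated override values into one override list per job."""
    keys, choices = [], []
    for item in overrides:
        key, _, value = item.partition("=")
        keys.append(key)
        choices.append(value.split(","))
    # One job per combination of values across all keys.
    return [
        [f"{key}={value}" for key, value in zip(keys, combo)]
        for combo in product(*choices)
    ]


jobs = expand_sweep(["experiment=routing/am", "train.optimizer.lr=1e-3,1e-4,1e-5"])
for index, job in enumerate(jobs):
    print(index, " ".join(job))
```

With a single swept key, this yields three jobs, one per learning rate. Sweeping a second key with two values would yield six.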

Minimalistic Example

Here is a minimalistic example training the Attention Model with greedy rollout baseline on TSP in less than 30 lines of code:

from rl4co.envs import TSPEnv
from rl4co.models import AttentionModel
from rl4co.utils import RL4COTrainer

# Environment, Model, and Lightning Module
env = TSPEnv(num_loc=20)
model = AttentionModel(
    env,
    baseline="rollout",
    train_data_size=100_000,
    test_data_size=10_000,
    optimizer_kwargs={"lr": 1e-4},
)

# Trainer
trainer = RL4COTrainer(max_epochs=3)

# Fit the model
trainer.fit(model)

# Test the model
trainer.test(model)
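As a rough picture of the quantity being optimized on a 20-node TSP instance, the sketch below scores a tour by its closed-loop Euclidean length and uses the negative length as the reward. This is standalone illustration code, not RL4CO's implementation: the reward convention and the uniform sampling in the unit square are assumptions, and the nearest-neighbor heuristic is a classical stand-in for a policy, not the Attention Model.

```python
import math
import random


def tour_length(coords, tour):
    """Length of the closed tour visiting coords in the given order."""
    n = len(tour)
    return sum(
        math.dist(coords[tour[i]], coords[tour[(i + 1) % n]]) for i in range(n)
    )


def nearest_neighbor_tour(coords, start=0):
    """Classical heuristic: always move to the closest unvisited node."""
    unvisited = set(range(len(coords))) - {start}
    tour = [start]
    while unvisited:
        last = coords[tour[-1]]
        nearest = min(unvisited, key=lambda j: math.dist(last, coords[j]))
        unvisited.remove(nearest)
        tour.append(nearest)
    return tour


# Assumed instance distribution: 20 points sampled uniformly in the unit square.
rng = random.Random(0)
coords = [(rng.random(), rng.random()) for _ in range(20)]

tour = nearest_neighbor_tour(coords)
reward = -tour_length(coords, tour)  # assumed convention: shorter tour, higher reward
print(f"nearest-neighbor reward: {reward:.3f}")
```

A learned policy would replace `nearest_neighbor_tour`, and training would aim to raise the average reward over many sampled instances.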

Testing

Run tests with pytest from the root directory:

pytest tests

Contributing

Have a suggestion or a request, or found a bug? Feel free to open an issue or submit a pull request. If you would like to contribute, please check out our contribution guidelines. We welcome and look forward to all contributions to RL4CO!

We are also on Slack if you have any questions or would like to discuss RL4CO with us. We are open to collaborations and would love to hear from you 🚀


Cite us

If you find RL4CO valuable for your research or applied projects, please cite:

@article{berto2023rl4co,
    title={{RL4CO}: an Extensive Reinforcement Learning for Combinatorial Optimization Benchmark},
    author={Federico Berto and Chuanbo Hua and Junyoung Park and Minsu Kim and Hyeonah Kim and Jiwoo Son and Haeyeon Kim and Joungho Kim and Jinkyoo Park},
    journal={arXiv preprint arXiv:2306.17100},
    year={2023},
    url={https://github.com/kaist-silab/rl4co}
}


Download files


File details

Details for the file rl4co-0.2.1.tar.gz.

File metadata

- Download URL: rl4co-0.2.1.tar.gz

- Upload date:

- Size: 137.8 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/4.0.2 CPython/3.11.5

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 91781891aa0be078206a900f13c035e3345bc69428e82b72445e9b4dcc52908f |
| MD5 | 0da31fb6ca37230d947f2f9bdcbe7d04 |
| BLAKE2b-256 | 544678f1bcc646613592d6699b6f89389253a8f01ce9c9c894252c0d4cd5d3a8 |

File details

Details for the file rl4co-0.2.1-py3-none-any.whl.

File metadata

- Download URL: rl4co-0.2.1-py3-none-any.whl

- Upload date:

- Size: 181.4 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/4.0.2 CPython/3.11.5

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | d43c602252768775ec5b84b547c09dba675d10a8895c1f4a1290c64ab79c7e0e |
| MD5 | 2eea97483ffce8449cb676ae34258bd9 |
| BLAKE2b-256 | 3211f2ea6e5fe8748544ddefb8aed86c6d9f18773412ab4fccdc1413ca7f4bb2 |
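The SHA256 digests listed for each file can be checked against a local download. The following is a minimal sketch using only Python's standard `hashlib` module. The helper names `sha256_of_file` and `matches_digest` are hypothetical, and the usage comment assumes the archive was saved to the current directory under its original file name.

```python
import hashlib


def sha256_of_file(path, chunk_size=1 << 20):
    """Compute the SHA256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_digest(path, expected_hex):
    """Return True if the file's SHA256 digest equals the expected hex string."""
    return sha256_of_file(path) == expected_hex.strip().lower()


# Usage, assuming the source distribution sits in the current directory:
# matches_digest(
#     "rl4co-0.2.1.tar.gz",
#     "91781891aa0be078206a900f13c035e3345bc69428e82b72445e9b4dcc52908f",
# )
```

A `False` result suggests the download is incomplete or differs from the published file, in which case downloading it again is the safest next step.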