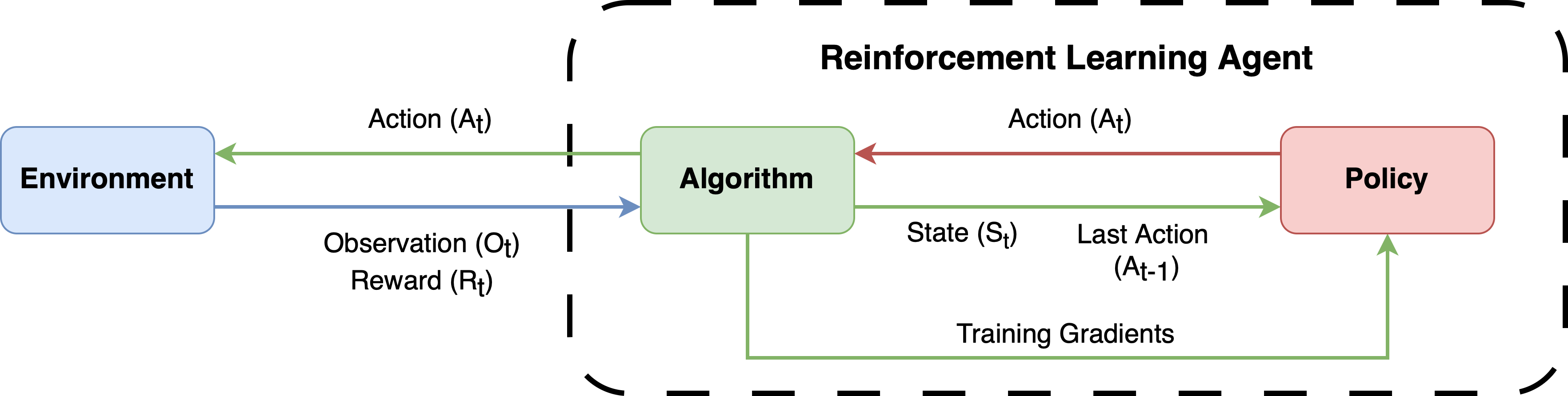

Reinforcement learning framework for portfolio optimization tasks.


RLPortfolio is a Python package that provides tools to implement, train and test reinforcement learning agents that optimize a financial portfolio:

- A training simulation environment that implements the state-of-the-art mathematical formulation commonly used in the research field.

- Two policy gradient training algorithms that are specifically built to solve the portfolio optimization task.

- Four cutting-edge deep neural networks implemented in PyTorch that can be used as the agent policy.

Click here to access the library documentation!

Note: This project is mainly intended for academic purposes. Therefore, be careful if using RLPortfolio to trade real money and consult a professional before investing, if possible.

About RLPortfolio

This library is composed of the following components:

| Component | Description |
|---|---|
| `rlportfolio.algorithm` | A collection of training algorithms designed for portfolio optimization agents. |
| `rlportfolio.data` | Functions and classes to perform data preprocessing. |
| `rlportfolio.environment` | Reinforcement learning environment used for training. |
| `rlportfolio.policy` | A collection of deep neural networks to be used in the agent. |
| `rlportfolio.utils` | Utility functions for convenience. |

A Modular Library

RLPortfolio is implemented with a modular architecture in mind so that it can be used in conjunction with several other libraries. To effectively train an agent, you need three constituents:

- A training algorithm.

- A simulation environment.

- A policy neural network (depending on the algorithm, a critic neural network might also be necessary).

The figure below shows the dynamics between those components. All of them are present in this library, but users are free to use other libraries or custom implementations.
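The interplay of the three constituents can be sketched in plain Python. This is an illustrative toy, not RLPortfolio's API: `ToyEnvironment`, `SoftmaxPolicy`, `run_episode` and `random_search` are hypothetical names, the reward is assumed to be the log of the one-period portfolio growth, and a simple random search stands in for a real policy gradient algorithm.

```python
import math
import random


class ToyEnvironment:
    """Hypothetical simulation environment (not RLPortfolio's class)."""

    def __init__(self, price_relatives, initial_value):
        # price_relatives[t][i]: price of asset i at time t divided by its
        # price at time t - 1. Row 0 only serves as the first observation.
        self.price_relatives = price_relatives
        self.initial_value = initial_value
        self.reset()

    def reset(self):
        self.t = 0
        self.value = self.initial_value
        return self.price_relatives[0]

    def step(self, weights):
        # The weights chosen at time t earn the price changes of time t + 1.
        next_row = self.price_relatives[self.t + 1]
        growth = sum(w * y for w, y in zip(weights, next_row))
        self.value *= growth
        self.t += 1
        done = self.t >= len(self.price_relatives) - 1
        return next_row, math.log(growth), done


class SoftmaxPolicy:
    """Hypothetical policy: fixed preferences turned into portfolio weights."""

    def __init__(self, n_assets):
        self.logits = [0.0] * n_assets

    def act(self, observation):
        # This toy ignores the observation; a neural network would not.
        top = max(self.logits)
        exps = [math.exp(x - top) for x in self.logits]
        total = sum(exps)
        return [e / total for e in exps]


def run_episode(env, policy):
    """Let the policy act in the environment once; return the summed reward."""
    observation = env.reset()
    done = False
    total_reward = 0.0
    while not done:
        weights = policy.act(observation)
        observation, reward, done = env.step(weights)
        total_reward += reward
    return total_reward


def random_search(env, policy, iterations, rng):
    """Stand-in training algorithm: keep random perturbations that help."""
    best = run_episode(env, policy)
    for _ in range(iterations):
        previous = list(policy.logits)
        policy.logits = [x + rng.gauss(0.0, 0.5) for x in previous]
        score = run_episode(env, policy)
        if score > best:
            best = score
        else:
            policy.logits = previous
    return best


relatives = [[1.0, 1.0], [1.01, 0.99], [1.02, 0.98], [1.01, 1.00]]
env = ToyEnvironment(relatives, 100000)
policy = SoftmaxPolicy(2)
baseline = run_episode(env, policy)
best = random_search(env, policy, 200, random.Random(0))
```

Because each piece only talks to the others through `reset`, `step` and `act`, any one of them could be swapped for another implementation, which is the point of the modular design described above.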

Modern Standards and Libraries

Unlike other implementations in the research field, this library uses modern versions of its dependencies (PyTorch, Gymnasium, NumPy and pandas) and follows standards that allow it to be used in conjunction with other libraries.

Easy to Use and Customizable

RLPortfolio aims to be easy to use, and its code is heavily documented following the Google Python Style so that users can understand how to use the classes and functions. Additionally, the training components are highly customizable, so different training routines can be run without directly modifying the code.

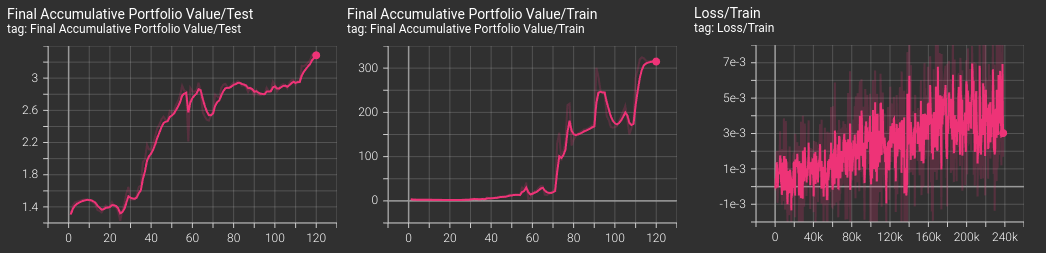

Integration with TensorBoard

The algorithms implemented in the package are integrated with TensorBoard, automatically providing graphs of the main metrics during training, validation and testing.

Focus on Reliability

To be as reliable as possible, this project places a strong focus on unit tests for every new implementation. This makes RLPortfolio well suited to reproducing and comparing research studies.

Installation

You can install this package using pip with:

    $ pip install rlportfolio

Alternatively, you can install it by cloning this repository and running the following command from its root directory:

    $ pip install .
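To confirm that the installation succeeded, the standard library can report the installed version. This is a minimal sketch: `installed_version` is a hypothetical helper, and the distribution name `rlportfolio` is taken from the pip command above.

```python
from importlib import metadata


def installed_version(package_name):
    """Return the installed version string, or None if the package is absent."""
    try:
        return metadata.version(package_name)
    except metadata.PackageNotFoundError:
        return None


version = installed_version("rlportfolio")
if version is None:
    print("rlportfolio is not installed in this environment")
else:
    print(f"rlportfolio {version} is installed")
```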

Interface

RLPortfolio's interface is easy to use. To train an agent, you first need to instantiate an environment object. The environment uses a dataframe that contains the time series of stock prices.

    import pandas as pd

    from rlportfolio.environment import PortfolioOptimizationEnv

    # dataframe with training data (market price time series)
    df_train = pd.read_csv("train_data.csv")

    environment = PortfolioOptimizationEnv(
        df_train,  # data to be used
        100000  # initial value of the portfolio
    )

Then, you can instantiate the policy gradient algorithm to create an agent that acts in the environment:

    from rlportfolio.algorithm import PolicyGradient

    algorithm = PolicyGradient(environment)

Finally, you can train the agent using the defined algorithm through the following method:

    # train the algorithm for 10000
    algorithm.train(10000)

It's now possible to test the agent's performance in another environment which contains data of a different time period.
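Keeping the training and testing periods disjoint is what makes the test meaningful. One way to obtain the two datasets from a single price history is a chronological split, sketched below in plain Python. The helper `chronological_split` and the column names `date`, `ticker` and `close` are assumptions for illustration; the columns the environment actually expects are described in the library documentation.

```python
def chronological_split(rows, split_date):
    """Split rows into (train, test): rows dated before split_date go to train.

    Dates are ISO strings (YYYY-MM-DD), so string comparison follows time order.
    """
    ordered = sorted(rows, key=lambda row: row["date"])
    train = [row for row in ordered if row["date"] < split_date]
    test = [row for row in ordered if row["date"] >= split_date]
    return train, test


# Hypothetical price records; the column names are illustrative only.
rows = [
    {"date": "2021-01-05", "ticker": "AAA", "close": 10.2},
    {"date": "2021-01-04", "ticker": "AAA", "close": 10.0},
    {"date": "2021-01-06", "ticker": "AAA", "close": 10.1},
    {"date": "2021-01-07", "ticker": "AAA", "close": 10.4},
]

train_rows, test_rows = chronological_split(rows, "2021-01-06")
```

With pandas, the same split is usually written as a boolean mask on the date column before saving each part to its own CSV file.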

    # dataframe with testing data (market price time series)
    df_test = pd.read_csv("test_data.csv")

    environment_test = PortfolioOptimizationEnv(
        df_test,  # data to be used
        100000  # initial value of the portfolio
    )

    # test the agent in the test environment
    algorithm.test(environment_test)

The test function will return a dictionary with the metrics of the test.
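To give a sense of what such metrics can look like, the sketch below computes a few common ones from a series of portfolio values. It is illustrative only: `summarize_performance` and the dictionary keys are hypothetical and are not the keys returned by the library, and the Sharpe ratio here is per period, not annualized, with a risk-free rate of zero.

```python
import math


def summarize_performance(values):
    """Compute illustrative metrics from a series of portfolio values."""
    if len(values) < 2:
        raise ValueError("need at least two portfolio values")

    # Simple return of each period.
    returns = [b / a - 1.0 for a, b in zip(values, values[1:])]
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / len(returns)
    std = math.sqrt(variance)

    # Largest relative fall from a running peak.
    peak = values[0]
    max_drawdown = 0.0
    for value in values:
        peak = max(peak, value)
        max_drawdown = max(max_drawdown, (peak - value) / peak)

    return {
        "final_value": values[-1],
        "cumulative_return": values[-1] / values[0] - 1.0,
        "max_drawdown": max_drawdown,
        "sharpe_ratio": mean / std if std > 0 else float("nan"),
    }


metrics = summarize_performance([100000.0, 102000.0, 99000.0, 105000.0])
```

Comparing such a dictionary between the training and testing periods is a quick way to spot an agent that has overfitted to the training data.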


Download files


File details

Details for the file rlportfolio-0.3.0.tar.gz.

File metadata

- Download URL: rlportfolio-0.3.0.tar.gz

- Upload date:

- Size: 37.0 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/5.0.0 CPython/3.12.3

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 3e12550edfeb047da19eeec9cda183d31a38f3312a60d63c5fc62c5f5b6da442 |
| MD5 | 743d56e8755eae5809aa13be176c8347 |
| BLAKE2b-256 | 56af101ca24d42c2142402b6d1f0b89599195859c7903b2f3e3fc8ee993cc534 |

File details

Details for the file rlportfolio-0.3.0-py3-none-any.whl.

File metadata

- Download URL: rlportfolio-0.3.0-py3-none-any.whl

- Upload date:

- Size: 40.4 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/5.0.0 CPython/3.12.3

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 6354c995977e036428ef4288009214fd0298f588b32b494063fdd25b759a2c03 |
| MD5 | 5950f818c0896c622dc729e07c04e442 |
| BLAKE2b-256 | 573a79e2deb79bdc3c8f3128c3b7f6b1b2955577642de0c80b4e73d39cfd596f |