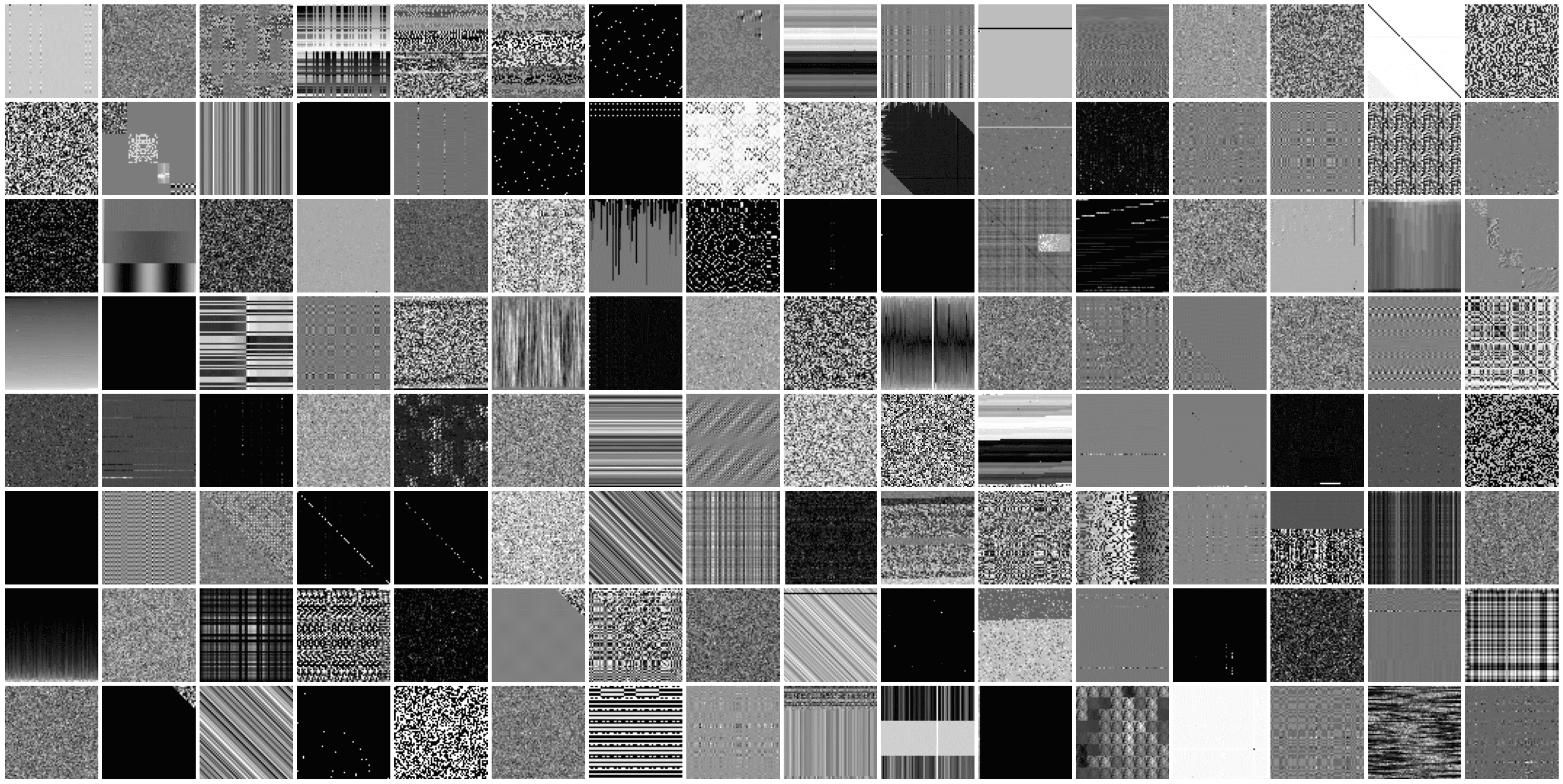

Random structured matrices.


rstmat

With rstmat you can generate random structured matrices:

from rstmat import random_matrix

matrices = []
for i in range(128):
    matrices.append(random_matrix((64, 64)))  # each result is a torch.Tensor

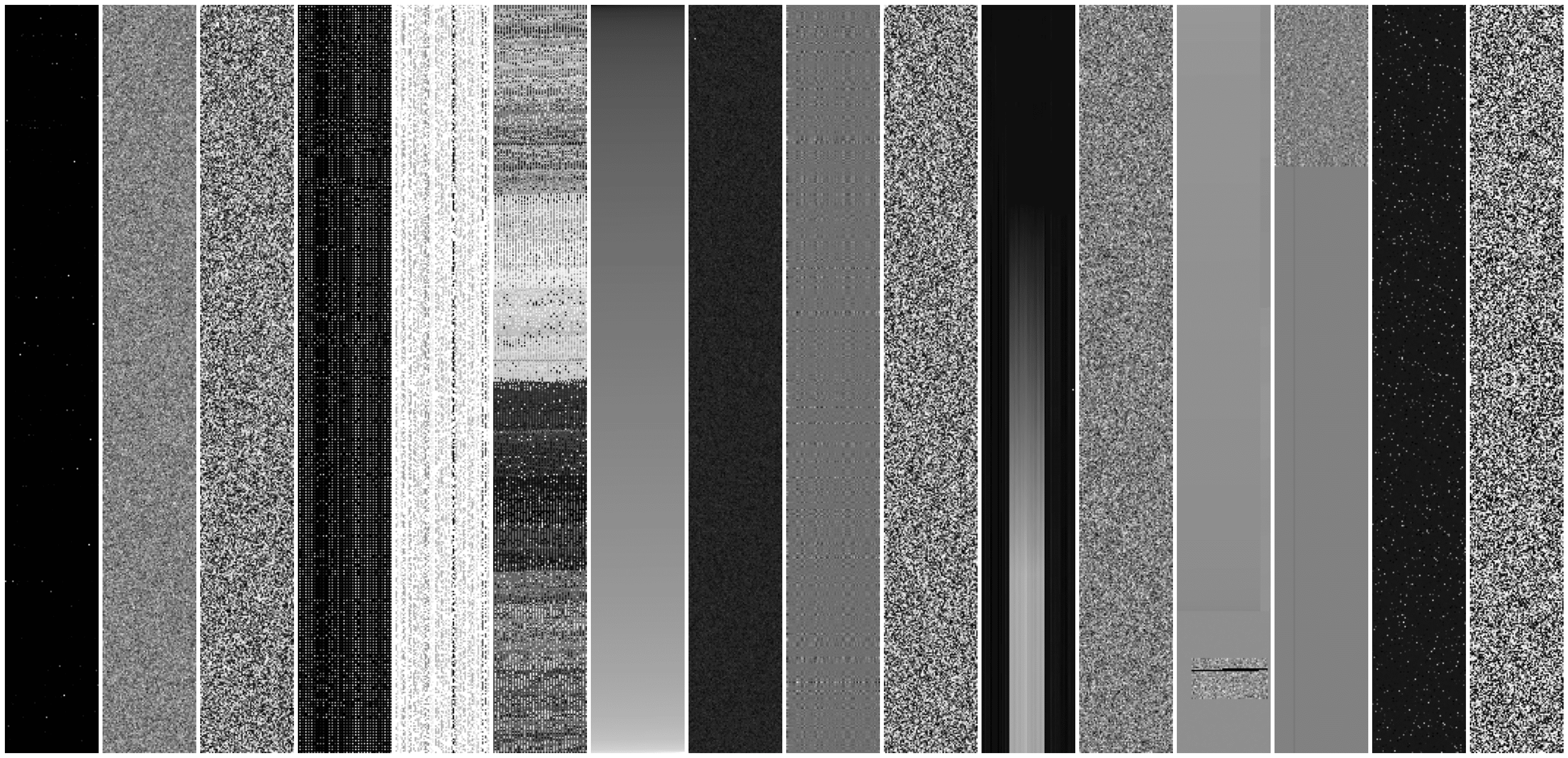

It can also generate rectangular matrices:

for i in range(16):
    matrices.append(random_matrix((512, 64)))

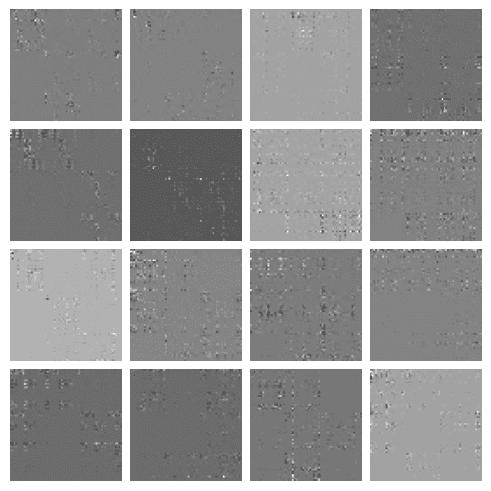

You can also generate a batch of matrices, which is faster. Note, however, that all matrices in one batch share the same tree of operations, so they will look similar:

batch = random_matrix((16, 64, 64))

Installation

pip install rstmat

Why

If you want to test some algorithm on random matrices or fit some linear algebra approximation model, performance on matrices with normally-distributed entries rarely generalizes to performance on real tasks. So I tried to fix that by generating random structured matrices.

How it works

It picks a random matrix type, of which there are many. For example, RandomNormal generates a matrix with normally distributed entries.

However, many matrix types build their result from other matrices by calling random_matrix recursively, which is how you get structured matrices.

For example, AAT generates another matrix $A$ and computes $A A^{\mathsf{T}}$. AB generates two matrices and computes their product. RowCat generates two matrices and concatenates their rows. The matrices generated this way may in turn request more matrices.
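The recursion described above can be illustrated with a toy sketch in plain Python. This is not rstmat's code: `toy_random_matrix`, `matmul`, the `max_depth` cut-off, and the uniform choice between types are all assumptions made for illustration. Only the type names RandomNormal, AAT, AB and RowCat come from the description above.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def toy_random_matrix(shape, rng, depth=0, max_depth=4):
    """Toy recursive generator (hypothetical, not rstmat's implementation)."""
    m, n = shape
    # Only offer recursive types while below the depth limit, so recursion ends.
    kinds = ["RandomNormal"]
    if depth < max_depth:
        kinds.append("AB")
        if m == n:
            kinds.append("AAT")  # A @ A.T is always square
        if m >= 2:
            kinds.append("RowCat")  # needs at least one row per part
    kind = rng.choice(kinds)

    def child(child_shape):
        return toy_random_matrix(child_shape, rng, depth + 1, max_depth)

    if kind == "AB":
        k = rng.randint(1, max(m, n))  # random inner dimension
        return matmul(child((m, k)), child((k, n)))
    if kind == "AAT":
        a = child((m, rng.randint(1, m)))
        return matmul(a, [list(col) for col in zip(*a)])
    if kind == "RowCat":
        split = rng.randint(1, m - 1)
        return child((split, n)) + child((m - split, n))
    # Leaf type: independent normally distributed entries.
    return [[rng.gauss(0.0, 1.0) for _ in range(n)] for _ in range(m)]


matrix = toy_random_matrix((6, 4), random.Random(0))
print(len(matrix), len(matrix[0]))
```

Each recursive type asks for child matrices of whatever shape it needs, and the children are free to pick recursive types themselves, which is what produces a tree of operations.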

Distribution of generated matrices

I tried to make the matrices reasonable on average, but outliers do occur. We can look at various statistics to see what kinds of matrices are generated.

Value range

Matrices generally have reasonable values, but sometimes you get matrices with very large values, although you will never get NaNs or infinities. So, if applicable, it might be a good idea to normalize the generated matrices.
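One possible way to normalize is to shift each matrix to zero mean and scale it to unit standard deviation. The helper below is a minimal sketch, not part of rstmat: `standardize` and its `eps` parameter are hypothetical names, and other schemes (for example dividing by the largest absolute value) may suit your task better.

```python
import torch


def standardize(m: torch.Tensor, eps: float = 1e-12) -> torch.Tensor:
    """Shift to zero mean and scale to unit standard deviation.

    Hypothetical helper, not part of rstmat. `eps` guards against
    division by zero when every entry of the matrix is the same.
    """
    centered = m - m.mean()
    return centered / centered.std().clamp_min(eps)


m = torch.tensor([[1e5, -2e5], [3e5, 4e5]])
z = standardize(m)
print(z.mean().item(), z.std().item())
```

A constant matrix comes out as all zeros rather than NaN, because the standard deviation is clamped before dividing.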

Statistics for 32 sample matrices

for i in range(32):
    m = random_matrix((128, 128))
    print(f'min = {m.min().item():.5f}, max={m.max().item():.5f}, mean={m.mean().item():.5f}, std={m.std().item():.5f}')

output:

min = 0.00000, max=0.36568, mean=0.00170, std=0.01367

min = -0.00160, max=0.00799, mean=0.00136, std=0.00310

min = 0.00000, max=1.00000, mean=0.99530, std=0.05258

min = -0.00000, max=253.00000, mean=127.00000, std=52.77071

min = -4.12703, max=4.27076, mean=-0.00004, std=1.00000

min = -9.12002, max=17.89858, mean=0.71432, std=1.44218

min = -629844.50000, max=535442.31250, mean=641.08203, std=35570.25781

min = -3.12680, max=1.00000, mean=-0.05708, std=0.41463

min = -24.00136, max=31.31566, mean=0.00074, std=3.94008

min = 0.00000, max=1.63427, mean=0.40847, std=0.70762

min = -1648.49512, max=1648.49512, mean=-2.10210, std=576.00549

min = -6.42549, max=5.25735, mean=0.09184, std=0.54934

min = 0.00048, max=0.35305, mean=0.00781, std=0.00293

min = -0.10753, max=0.14890, mean=0.00001, std=0.01139

min = -29.89031, max=29.92242, mean=-0.20419, std=9.60233

min = 0.00000, max=0.41209, mean=0.00781, std=0.01416

min = -11.19279, max=-0.00000, mean=-8.53553, std=2.88449

min = 0.04000, max=6.61000, mean=2.68506, std=0.89763

min = -88875.07812, max=58857.13281, mean=30.58776, std=2343.74390

min = 0.00000, max=0.27113, mean=0.06860, std=0.05070

min = 54.00000, max=32738.00000, mean=16383.07324, std=7126.35986

min = -0.03120, max=0.03276, mean=0.00016, std=0.00781

min = 14.66208, max=221.25452, mean=68.65743, std=25.70100

min = -3.81714, max=3.63805, mean=-0.01195, std=1.01938

min = -0.81774, max=0.96052, mean=0.01563, std=0.23068

min = -0.99982, max=0.99983, mean=0.00088, std=0.07071

min = 0.00000, max=0.07785, mean=0.00439, std=0.00472

min = -0.00781, max=0.00781, mean=0.00008, std=0.00781

min = -0.77746, max=0.97658, mean=0.00157, std=0.03935

min = -0.99463, max=1.02445, mean=0.00028, std=0.15895

min = -965.15588, max=-79.21937, mean=-152.21527, std=76.95900

min = -8.93437, max=16.87033, mean=0.02283, std=0.62715

Rank and condition number

Around 75% of generated matrices are full-rank, and the condition number varies greatly, although this depends on the size of the matrix.

Statistics for 32 sample matrices

import torch

for i in range(32):
    m = random_matrix((128, 128))
    print(f'rank = {torch.linalg.matrix_rank(m).item()}/128, cond={torch.linalg.cond(m).item()}')

output:

rank = 7/128, cond=inf

rank = 127/128, cond=212265.09375

rank = 26/128, cond=inf

rank = 128/128, cond=17851.337890625

rank = 128/128, cond=408.2425231933594

rank = 128/128, cond=2853.546630859375

rank = 68/128, cond=inf

rank = 97/128, cond=17166084.0

rank = 128/128, cond=861.2491455078125

rank = 3/128, cond=inf

rank = 64/128, cond=3450434816.0

rank = 126/128, cond=278033.75

rank = 128/128, cond=3300.02783203125

rank = 127/128, cond=40797840.0

rank = 128/128, cond=220.8697052001953

rank = 127/128, cond=72893.875

rank = 3/128, cond=inf

rank = 128/128, cond=1.0

rank = 128/128, cond=444.19927978515625

rank = 115/128, cond=880844.1875

rank = 127/128, cond=199054.265625

rank = 120/128, cond=361688704.0

rank = 128/128, cond=29997.986328125

rank = 128/128, cond=509.33160400390625

rank = 128/128, cond=11920.4072265625

rank = 2/128, cond=inf

rank = 8/128, cond=inf

rank = 79/128, cond=9.32627568546049e+22

rank = 13/128, cond=932012032000.0

rank = 128/128, cond=1.0

rank = 128/128, cond=3316.430419921875

rank = 121/128, cond=11409373184.0
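To check the full-rank fraction on your own samples, something like the following sketch could be used. `summarize_rank` is a hypothetical helper, not part of rstmat; it relies only on `torch.linalg.matrix_rank`, which is also used in the snippet above.

```python
import torch


def summarize_rank(matrices):
    """Return (fraction of full-rank matrices, list of ranks).

    Hypothetical helper, not part of rstmat. A matrix counts as
    full-rank when its rank equals the smaller of its two dimensions.
    """
    ranks = [int(torch.linalg.matrix_rank(m)) for m in matrices]
    full = sum(r == min(m.shape[-2:]) for r, m in zip(ranks, matrices))
    return full / len(matrices), ranks


# Stand-in inputs; with rstmat installed you would pass generated matrices.
fraction, ranks = summarize_rank([torch.eye(4), torch.zeros(4, 4), 2 * torch.eye(4)])
print(fraction, ranks)
```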

Performance

Generating large matrices (e.g. 4096 by 4096) might take up to a few seconds depending on how complicated the tree of operations is. You can make penalties stronger, for example:

A = random_matrix((4096, 4096), branch_penalty=0.7, ops_penalty=0.7, device='cuda')

This penalizes very long operation trees, so you get simpler matrices, but generation is faster.

Most of the operations happen on the device you specify (the default is torch.get_default_device(), which defaults to CPU). Smaller matrices (under around 128 by 128) are typically faster to generate on CPU. Larger matrices become significantly faster on GPU.
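To see which settings or device are faster for your sizes, you can time the generation yourself. The helper below is a generic sketch using only the standard library; `time_call` is a hypothetical name, and the commented usage line assumes rstmat is installed.

```python
import time
from statistics import median


def time_call(fn, repeats=5):
    """Return the median wall-clock time in seconds of calling fn()."""
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        timings.append(time.perf_counter() - start)
    return median(timings)


# Stand-in workload so the snippet runs without rstmat:
print(time_call(lambda: sum(range(100_000))))

# With rstmat installed (assumption), something like:
# from rstmat import random_matrix
# print(time_call(lambda: random_matrix((4096, 4096), branch_penalty=0.7, ops_penalty=0.7)))
```

When timing on a GPU, keep in mind that CUDA kernels run asynchronously, so the timed function should call torch.cuda.synchronize() before returning to get meaningful numbers.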

For large matrices it is recommended to install opt_einsum and enable it:

import torch.backends.opt_einsum

torch.backends.opt_einsum.enabled = True

Other stuff

It's easy to define new matrix types; you can look at the code in https://github.com/inikishev/rstmat/blob/main/rstmat/matrix.py.

All matrix types have weights that determine their probability of being picked, and those weights are somewhat tuned to produce diverse matrices. What I found, however, is that even a slight change to the weights can significantly affect the final distribution, so that one particular kind of matrix (e.g. sparse) comes to dominate.
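Weighted selection of this kind can be sketched with the standard library. The weights below are made up for illustration, and "Sparse" is a hypothetical type name; this is not how rstmat stores or tunes its weights.

```python
import random
from collections import Counter

# Made-up weights for illustration only; "Sparse" is a hypothetical name.
weights = {"RandomNormal": 1.0, "AAT": 1.0, "AB": 1.0, "Sparse": 1.0}


def pick_probabilities(weights):
    """Convert raw weights into selection probabilities."""
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


def sample_types(weights, n, seed=0):
    """Draw n type names with probability proportional to their weight."""
    rng = random.Random(seed)
    names = list(weights)
    return Counter(rng.choices(names, weights=[weights[k] for k in names], k=n))


print(pick_probabilities(weights))
print(sample_types(weights, 1000))
```

Because recursive types pick again at every level of the tree, a change in one weight is applied many times per generated matrix, which is one plausible reason small changes can shift the overall distribution noticeably.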
