A package to consume events from a Google Pub/Sub subscription, process log files, and forward them to an HTTP endpoint or to files.

GCP Log Forwarder

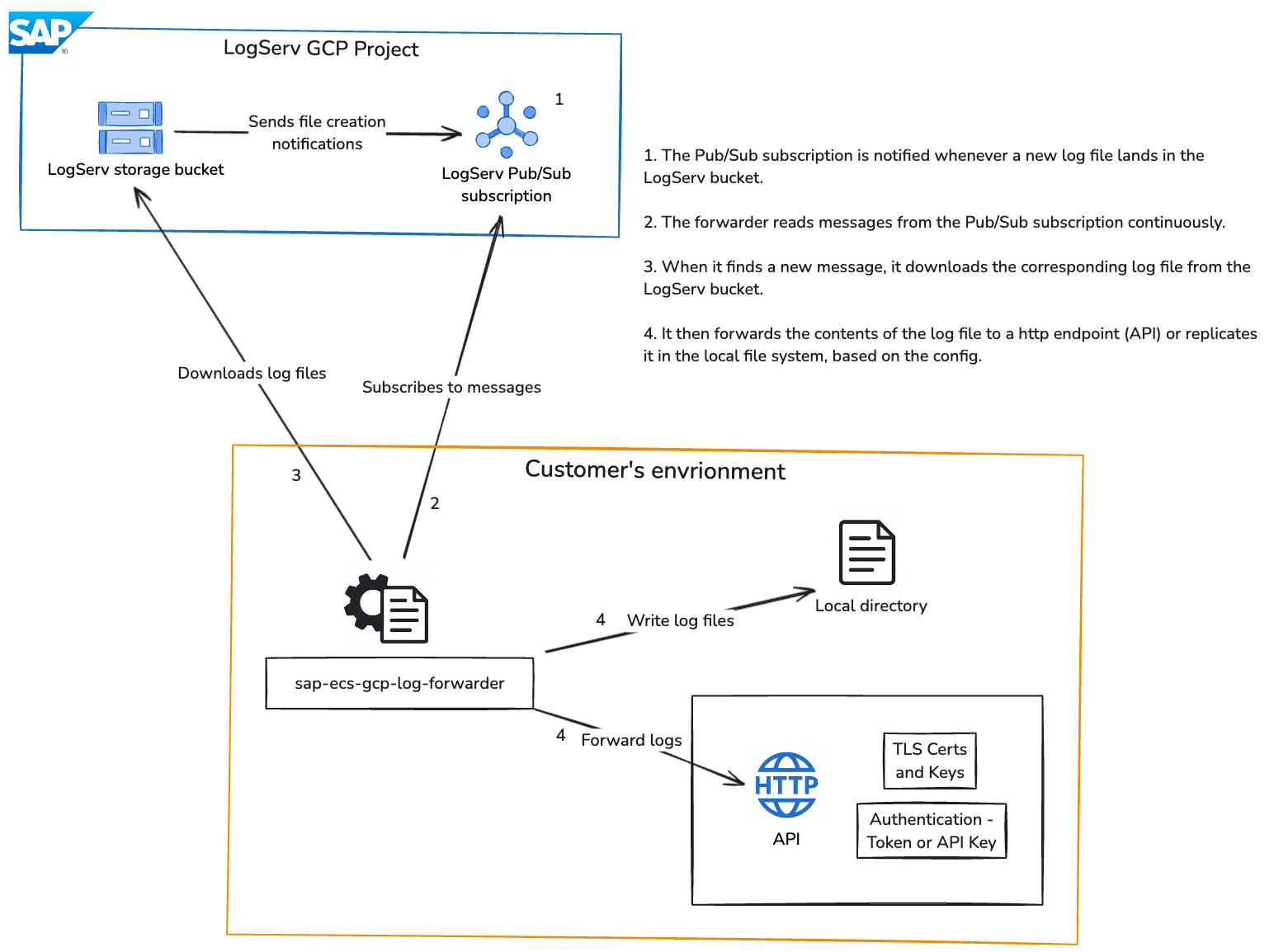

GCP Log Forwarder is a Python package that monitors a GCP Pub/Sub subscription for object creation messages, downloads and processes the corresponding log files from Google Cloud Storage, and forwards the log data to a configured HTTP endpoint or writes it to local files.
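As a rough illustration of what an "object creation message" looks like, Cloud Storage notifications published to Pub/Sub carry attributes such as eventType, bucketId, and objectId, and newly created objects are reported with eventType OBJECT_FINALIZE. The sketch below extracts the bucket and object name from such attributes. The function name is hypothetical, and whether the forwarder reads messages exactly this way is an assumption.

```python
from typing import Mapping, Optional, Tuple


def object_from_notification(attributes: Mapping[str, str]) -> Optional[Tuple[str, str]]:
    """Return (bucket, object_name) for an object-creation notification, else None.

    Hypothetical helper: it only inspects the attribute names that Cloud Storage
    sets on Pub/Sub notifications; it is not taken from the forwarder's source.
    """
    # Cloud Storage reports a newly created (or overwritten) object as OBJECT_FINALIZE.
    if attributes.get("eventType") != "OBJECT_FINALIZE":
        return None
    bucket = attributes.get("bucketId")
    name = attributes.get("objectId")
    if not bucket or not name:
        return None
    return bucket, name
```

Messages for other event types, such as deletions, return None and can simply be acknowledged and skipped.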

Features

- Monitors a GCP Pub/Sub subscription for object creation messages.

- Processes logs and forwards them to a configurable HTTP endpoint or writes them to local files.

- Supports both mutual TLS and standard HTTP connections.

- Offers flexible HTTP authentication via bearer tokens or API keys.

- Fully configurable through environment variables or a .env file in the working directory.

- Provides an inactivity timeout to gracefully terminate when the queue is empty.
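To make the two authentication options more concrete, here is a minimal sketch of how request headers could be built for each one. The Authorization: Bearer form is the standard way to send a bearer token. The header name used for the API key (X-API-Key) is purely an assumption, as is the function name; check what your receiving endpoint actually expects.

```python
from typing import Optional


def build_auth_headers(
    auth_method: str,
    auth_token: Optional[str] = None,
    api_key: Optional[str] = None,
    api_key_header: str = "X-API-Key",  # assumed header name, not confirmed by the package
) -> dict:
    """Build HTTP headers for the 'token' or 'api_key' authentication method."""
    if auth_method == "token":
        if not auth_token:
            raise ValueError("AUTH_TOKEN is required when AUTH_METHOD=token")
        return {"Authorization": f"Bearer {auth_token}"}
    if auth_method == "api_key":
        if not api_key:
            raise ValueError("API_KEY is required when AUTH_METHOD=api_key")
        return {api_key_header: api_key}
    raise ValueError(f"Unsupported AUTH_METHOD: {auth_method!r}")
```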

Prerequisites

- Python 3.10 or higher

- A GCP project with Pub/Sub and Cloud Storage enabled.

- A GCP service account in your own project with the appropriate permissions:

  - roles/pubsub.subscriber for the Pub/Sub subscription

  - roles/storage.objectViewer for accessing Cloud Storage objects

Installation

With direct internet access:

pip install sap-ecs-gcp-log-forwarder

or

pip install sap-ecs-gcp-log-forwarder==<version>

Without direct internet access:

- Download the wheel: On a machine with internet access, visit the PyPI files page for sap-ecs-gcp-log-forwarder and download the .whl file matching your Python version (e.g., sap_ecs_gcp_log_forwarder-1.0.2-py3-none-any.whl).

- Transfer the file: Copy the downloaded wheel to the target (offline) machine using SCP or another secure method.

- Install from file: On the offline machine, run:

pip install /path/to/sap_ecs_gcp_log_forwarder-<version>-py3-none-any.whl
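Because the wheel is moved between machines by hand, it can be worth confirming that the file arrived intact before installing it. The following is a minimal sketch using only the standard library; the function names are hypothetical, and the expected digest is whatever SHA256 value the PyPI files page lists for the wheel you downloaded.

```python
import hashlib


def sha256_hex(path: str, chunk_size: int = 1024 * 1024) -> str:
    """Return the SHA256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        # iter() with a sentinel stops as soon as read() returns empty bytes.
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def wheel_matches(path: str, expected_sha256: str) -> bool:
    """Compare a file's digest with the expected one, ignoring case and whitespace."""
    return sha256_hex(path) == expected_sha256.strip().lower()
```

If wheel_matches returns False, the file was probably truncated or altered in transit and should be downloaded again.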

Configuration

Configure the GCP Log Forwarder by setting environment variables in your shell or in a .env file in the current working directory.

- GOOGLE_APPLICATION_CREDENTIALS: Path to your service account key JSON file. (Required)

- LOGSERV_PROJECT_ID: The ID of the Google Cloud project that contains the Cloud Storage bucket. (Required)

- LOGSERV_PUBSUB_SUBSCRIPTION_NAME: The name of the Pub/Sub subscription to consume events from (the short name, not the full path). (Required)

- OUTPUT_METHOD: Method to forward logs (http or files). (Required)

  - http: Forward logs to an HTTP endpoint.

  - files: Write logs to files in the specified output directory.

- TIMEOUT_DURATION: Time in seconds to wait for messages before exiting. (Optional)

- LOGSERV_LOG_FILTERS: Comma-separated list of log type filters. Only messages whose subject paths contain at least one of these filters will be processed (e.g., hana,hanaaudit,linux). (Optional)

- HTTP_ENDPOINT: HTTP endpoint to forward logs to. (Required if OUTPUT_METHOD=http)

- TLS_CERT_PATH: Path to the TLS certificate for mutual TLS connections. (Optional)

- TLS_KEY_PATH: Path to the TLS key for mutual TLS connections. (Optional)

- AUTH_METHOD: Authentication method. (Required if OUTPUT_METHOD=http)

  - token: Use a bearer/OAuth token for authentication.

  - api_key: Use an API key for authentication.

- AUTH_TOKEN: Bearer/OAuth token for HTTP endpoint authentication. (Required if AUTH_METHOD=token)

- API_KEY: API key for HTTP endpoint authentication. (Required if AUTH_METHOD=api_key)

- OUTPUT_DIR: Output directory to write log files to. (Required if OUTPUT_METHOD=files)

- USE_ONLY_DATE_FOLDERS: Writes output files to a folder structure based only on the file date. (Optional)

  - true: Uses ${OUTPUT_DIR} and the file date, for example ${OUTPUT_DIR}/2025/11/03/myfile.json.gz.

  - false: Uses the standard folder structure.

- COMPRESS_OUTPUT_FILE: Whether to gzip-compress output files. (Defaults to true)

  - true: Compress output files using gzip.

  - false: Do not compress output files.

- LOG_LEVEL: Log level for the forwarder application (DEBUG, INFO, WARNING, ERROR, CRITICAL). (Defaults to INFO)
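The required and conditionally required variables listed above can be checked before starting the forwarder. The sketch below encodes only the rules stated in this list; the function name and the wording of the messages are hypothetical, and the package's own validation may differ.

```python
from typing import Mapping

ALWAYS_REQUIRED = (
    "GOOGLE_APPLICATION_CREDENTIALS",
    "LOGSERV_PROJECT_ID",
    "LOGSERV_PUBSUB_SUBSCRIPTION_NAME",
    "OUTPUT_METHOD",
)
LOG_LEVELS = {"DEBUG", "INFO", "WARNING", "ERROR", "CRITICAL"}


def find_config_problems(env: Mapping[str, str]) -> list:
    """Return a list of human-readable problems; an empty list means the rules pass."""
    problems = [f"{name} is required" for name in ALWAYS_REQUIRED if not env.get(name)]

    def require(name: str, reason: str) -> None:
        if not env.get(name):
            problems.append(f"{name} is required when {reason}")

    output_method = env.get("OUTPUT_METHOD")
    if output_method == "http":
        require("HTTP_ENDPOINT", "OUTPUT_METHOD=http")
        require("AUTH_METHOD", "OUTPUT_METHOD=http")
        auth_method = env.get("AUTH_METHOD")
        if auth_method == "token":
            require("AUTH_TOKEN", "AUTH_METHOD=token")
        elif auth_method == "api_key":
            require("API_KEY", "AUTH_METHOD=api_key")
        elif auth_method:
            problems.append("AUTH_METHOD must be 'token' or 'api_key'")
    elif output_method == "files":
        require("OUTPUT_DIR", "OUTPUT_METHOD=files")
    elif output_method:
        problems.append("OUTPUT_METHOD must be 'http' or 'files'")

    log_level = env.get("LOG_LEVEL")
    if log_level and log_level not in LOG_LEVELS:
        problems.append("LOG_LEVEL must be one of " + ", ".join(sorted(LOG_LEVELS)))
    return problems
```

Calling find_config_problems(os.environ) after importing os gives a quick pre-flight check for the current shell.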

Example of setting environment variables in a shell:

export GOOGLE_APPLICATION_CREDENTIALS="path/to/your-service-account-key.json"

export LOGSERV_PROJECT_ID="id-of-the-google-project-where-the-cloud-storage-bucket-is-in"

export LOGSERV_PUBSUB_SUBSCRIPTION_NAME="pubsub-subscription-name-to-consume-events-from"

export TIMEOUT_DURATION=120 # Timeout after 120 seconds of inactivity. DO NOT set for indefinite runs.

export LOGSERV_LOG_FILTERS="hana"

# For HTTP output

export OUTPUT_METHOD="http"

export HTTP_ENDPOINT="https://your-http-endpoint.com"

export TLS_CERT_PATH="/path/to/tls_cert.pem"

export TLS_KEY_PATH="/path/to/tls_key.pem"

export AUTH_METHOD="token"

export AUTH_TOKEN="your_token"

# Or, to authenticate with an API key instead of a token

export AUTH_METHOD="api_key"

export API_KEY="your_api_key"

# For file output

export OUTPUT_METHOD="files"

export USE_ONLY_DATE_FOLDERS="false"

export OUTPUT_DIR="/path/to/output/"

export COMPRESS_OUTPUT_FILE="false"

# Log level

export LOG_LEVEL="DEBUG" # Options: DEBUG, INFO, WARNING, ERROR, CRITICAL. Default is INFO.

Example of setting environment variables in a local .env file:

GOOGLE_APPLICATION_CREDENTIALS="path/to/your-service-account-key.json"

LOGSERV_PROJECT_ID="id-of-the-google-project-where-the-cloud-storage-bucket-is-in"

LOGSERV_PUBSUB_SUBSCRIPTION_NAME="pubsub-subscription-name-to-consume-events-from"

TIMEOUT_DURATION=120 # Timeout after 120 seconds of inactivity. DO NOT set for indefinite runs.

LOGSERV_LOG_FILTERS="hana"

# For HTTP output

OUTPUT_METHOD="http"

HTTP_ENDPOINT="https://your-http-endpoint.com"

TLS_CERT_PATH="/path/to/tls_cert.pem"

TLS_KEY_PATH="/path/to/tls_key.pem"

AUTH_METHOD="token"

AUTH_TOKEN="your_token"

# Or, to authenticate with an API key instead of a token

AUTH_METHOD="api_key"

API_KEY="your_api_key"

# For file output

OUTPUT_METHOD="files"

USE_ONLY_DATE_FOLDERS="false"

OUTPUT_DIR="/path/to/output/"

COMPRESS_OUTPUT_FILE="false"

# Log level

LOG_LEVEL="DEBUG" # Options: DEBUG, INFO, WARNING, ERROR, CRITICAL. Default is INFO.
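The sample file lists the alternatives for OUTPUT_METHOD and AUTH_METHOD side by side, so a real .env file should keep only one assignment of each. As a small safeguard, the sketch below scans the text of a .env file and reports keys that are assigned more than once. It is a deliberately simple stdlib parser written for illustration, with a hypothetical function name; it is not the loader the package uses.

```python
from collections import defaultdict


def find_duplicate_keys(env_text: str) -> dict:
    """Map each key assigned more than once to the 1-based line numbers where it appears."""
    seen = defaultdict(list)
    for lineno, raw in enumerate(env_text.splitlines(), start=1):
        line = raw.strip()
        # Skip blank lines, comment lines, and anything that is not an assignment.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key = line.split("=", 1)[0].strip()
        # Tolerate shell-style "export KEY=value" lines.
        if key.startswith("export "):
            key = key[len("export "):].strip()
        seen[key].append(lineno)
    return {key: lines for key, lines in seen.items() if len(lines) > 1}
```

An empty result means every key is assigned exactly once.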

Usage

To run the GCP Log Forwarder, use:

sap-ecs-gcp-log-forwarder

This will start the process of consuming messages from the Pub/Sub subscription, processing log files, and forwarding them according to the specified method. The program will exit if no messages are received within the specified timeout duration. If no timeout duration is specified, the program will run indefinitely.
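The timeout behaviour described here can be pictured as a timer that resets whenever a message arrives and expires after a quiet period. The class below is a minimal sketch of that idea under the assumption that a missing TIMEOUT_DURATION maps to None; the class name and the injectable clock are hypothetical and are not taken from the package.

```python
import time
from typing import Callable, Optional


class InactivityTimer:
    """Tracks how long it has been since the last message was seen."""

    def __init__(self, timeout_seconds: Optional[float],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._timeout = timeout_seconds  # None means "never expire"
        self._clock = clock
        self._last_activity = clock()

    def touch(self) -> None:
        """Record activity, e.g. when a message has been received."""
        self._last_activity = self._clock()

    def expired(self) -> bool:
        """True once the quiet period has reached the configured timeout."""
        if self._timeout is None:
            return False
        return self._clock() - self._last_activity >= self._timeout
```

A consumer loop would call touch() for every received message and stop polling once expired() returns True.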

Examples

Forwarding specific log types

To forward only specific log types, set LOGSERV_LOG_FILTERS to a comma-separated list of filters.

For example, to forward HANA Audit logs, set the following environment variable:

In your shell:

export LOGSERV_LOG_FILTERS="hanaaudit"

Or in your .env file:

LOGSERV_LOG_FILTERS="hanaaudit"

Only files matching at least one of these filters will be processed and forwarded. If you want to forward all log types, simply omit the LOGSERV_LOG_FILTERS variable or set it to an empty string. Please reach out to your SAP contact for the correct container structure or run the forwarder without the filter to see the available log types and their structure.
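The matching rule can be sketched as a plain substring test against the object path. This follows the wording "contain at least one of these filters"; the function names, the case-sensitive comparison, and the example paths in the usage note are assumptions.

```python
from typing import List, Optional


def parse_filters(raw: Optional[str]) -> List[str]:
    """Split a comma-separated filter string, dropping empty entries."""
    if not raw:
        return []
    return [part.strip() for part in raw.split(",") if part.strip()]


def should_process(object_path: str, filters: List[str]) -> bool:
    """Process everything when no filters are set, else require a substring match."""
    if not filters:
        return True
    return any(f in object_path for f in filters)
```

Under this sketch, a filter of hana would also match a hypothetical path such as logs/hanaaudit/file.json.gz, because hana is a substring of hanaaudit. If the forwarder behaves the same way, use the more specific filter when only one of the two log types is wanted.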

Things to remember

- If TIMEOUT_DURATION is not set, the program will run indefinitely.

- The service account must have Storage Object Viewer and Pub/Sub Subscriber permissions over the bucket and subscription respectively.

License

This application and its source code are licensed under the SAP Developer License Agreement. See the LICENSE file for more information.

Release Notes

1.0.1

- Initial release

1.0.2

- Updated README with additional instructions for installing the package without direct internet access.

1.0.5

- Improved error handling for permission issues and retry mechanisms.

- Validates the existence of the Pub/Sub subscription before consuming messages from it.

1.0.6

- Point dotenv to the current working directory for the .env file.

1.0.8

- Added a new configuration option (LOGSERV_LOG_FILTERS) to filter log types.

- Added a new configuration option (COMPRESS_OUTPUT_FILE) to set the compression option for output files.

- Updated README with instructions on setting the log type filters and compression option.

- Skipped 1.0.7 to fix a versioning issue.

1.1.0

- Added a new configuration option (USE_ONLY_DATE_FOLDERS) to use only the date folder structure.

- This is useful for customers that will get only one folder from the GCS bucket.
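To illustrate the date folder layout documented for USE_ONLY_DATE_FOLDERS=true (${OUTPUT_DIR}/2025/11/03/myfile.json.gz), here is a minimal sketch that builds such a path. The function name is hypothetical, and how the forwarder determines the file date is not stated in this README, so the date is passed in explicitly.

```python
from datetime import date
from pathlib import PurePosixPath


def date_folder_path(output_dir: str, file_date: date, object_name: str) -> str:
    """Build <output_dir>/YYYY/MM/DD/<file name>, discarding the object's own folders."""
    file_name = PurePosixPath(object_name).name  # keep only the last path component
    return str(PurePosixPath(output_dir) / f"{file_date:%Y/%m/%d}" / file_name)
```

For example, date_folder_path("/path/to/output/", date(2025, 11, 3), "some/folder/myfile.json.gz") yields /path/to/output/2025/11/03/myfile.json.gz.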


File details

Details for the file sap_ecs_gcp_log_forwarder-1.1.1-py3-none-any.whl.

File metadata

- Download URL: sap_ecs_gcp_log_forwarder-1.1.1-py3-none-any.whl

- Upload date:

- Size: 15.4 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 0caae2f453044b14c451cec10f9a5f2cb535d6e8e84d0188115fe649218d458d |
| MD5 | affc1d17982f4f9d00074ad4842f26a6 |
| BLAKE2b-256 | 7e49a3c81d4a7054051ec83b3e7e8bd0df38a7c170109cd0e4a69685bc6a0f94 |