Scrape data in one shot

Project description

SCRAPEGOAT

Scrape data in one shot.

Scrapegoat is a Python library that scrapes websites and keeps only the text relevant to a given topic, irrespective of language, using Natural Language Processing. It is mainly intended for non-English languages, where it helps produce accurate and relevant scraped text.

Concept

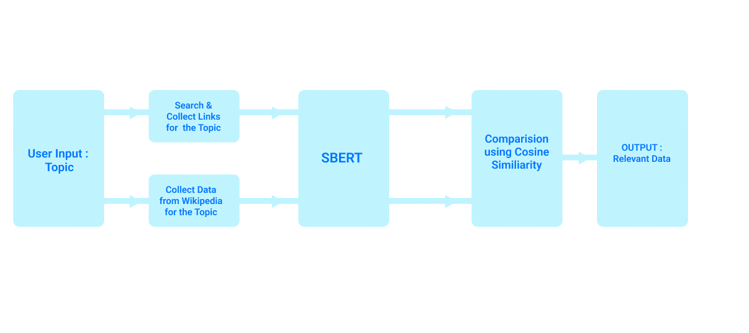

First, the data is scraped from a website and preprocessed (English words are removed if the required data is in another language). The processed data and the topic are then fed to the BERT model, which computes the cosine similarity between the topic and each word of the scraped data. The mean of these similarity scores is then computed. If the mean is greater than a threshold, the scraped data is returned as output. Part of the pipeline also uses an adaptive threshold.
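The preprocessing and threshold steps described above can be sketched as follows. This is a minimal illustration and not Scrapegoat's actual code: the function names, the rule that an English word is any token made only of ASCII letters, and the default threshold of 0.5 are all assumptions. The per-word similarity scores are taken as given here, since in the library they come from the BERT model.

```python
import re
from statistics import mean

# Assumption: a token made up only of ASCII letters counts as an English word.
_ASCII_WORD = re.compile(r"[A-Za-z]+")


def strip_english_words(text):
    """Drop whitespace-separated tokens that consist solely of ASCII letters."""
    kept = [tok for tok in text.split() if not _ASCII_WORD.fullmatch(tok)]
    return " ".join(kept)


def is_relevant(word_scores, threshold=0.5):
    """Return True when the mean per-word similarity exceeds the threshold."""
    if not word_scores:
        return False  # nothing was scraped, so nothing can be relevant
    return mean(word_scores) > threshold


if __name__ == "__main__":
    print(strip_english_words("ಕ್ರಿಕೆಟ್ news ಭಾರತ"))
    print(is_relevant([0.8, 0.6, 0.7]))
```

A fixed threshold is used only to keep the sketch short; how the adaptive threshold is chosen is not described here, so it is left out.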

BERT Model

BERT, which stands for Bidirectional Encoder Representations from Transformers, is based on the Transformer, a deep learning architecture in which every output element is connected to every input element and the weightings between them are calculated dynamically from their relationship. BERT was pre-trained on unlabeled text, including Wikipedia. The Transformer is the part of the model that gives BERT its increased capacity for handling context and ambiguity in language. It does this by processing each word in relation to all the other words in a sentence, rather than processing words one at a time. By looking at all the surrounding words, the Transformer lets BERT capture the full context of a word and therefore better represent its intended meaning.
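To make the idea of "every output element is connected to every input element" concrete, here is a toy version of scaled dot-product self-attention in plain Python. It is a deliberately simplified sketch: real BERT layers use learned query, key, and value projections, multiple attention heads, and many stacked layers, none of which appear here. The function names and the toy vectors are illustrative only.

```python
import math


def softmax(values):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Mix each vector with all others, weighted by scaled dot-product similarity.

    Simplification: queries, keys, and values are the input vectors themselves.
    """
    dim = len(vectors[0])
    mixed = []
    for query in vectors:
        # Similarity of this position to every position, scaled by sqrt(dim).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # Each output is a weighted average over every input vector.
        mixed.append(
            [sum(w * vec[i] for w, vec in zip(weights, vectors)) for i in range(dim)]
        )
    return mixed


if __name__ == "__main__":
    for row in self_attention([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]):
        print([round(x, 3) for x in row])
```

Because the weights depend on the inputs, the same word receives a different output vector in a different sentence, which is the sense in which the representation is contextual.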

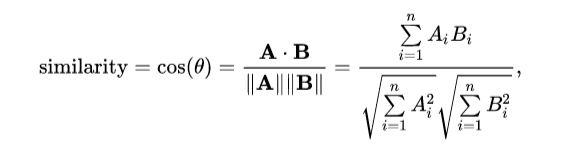

Cosine Similarity

Cosine similarity is a metric used in Natural Language Processing to measure the similarity between two texts irrespective of their size. A word or document can be represented as a vector in an n-dimensional space, and the metric is the cosine of the angle between two such vectors. A score of 1 means the two vectors have the same orientation, and a value closer to 0 indicates that the two documents are less similar. For vectors with non-negative components, such as word counts, the score ranges from 0 to 1; in general it ranges from -1 to 1.
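As an illustration, cosine similarity is the dot product of two vectors divided by the product of their lengths. The sketch below uses plain Python lists with toy values rather than BERT embeddings, and the function name is illustrative.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


if __name__ == "__main__":
    print(cosine_similarity([1, 2], [2, 4]))   # same direction, close to 1
    print(cosine_similarity([1, 0], [0, 1]))   # perpendicular, 0
    print(cosine_similarity([1, 0], [-1, 0]))  # opposite direction, negative
```

The first pair differs only in length, which is why the metric is described as independent of document size. The last pair shows that vectors with negative components, which dense embeddings can have, may score below 0.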

Multiprocessing

Python's multiprocessing module lets the programmer make full use of the multiple processors on a given machine. The basic idea is that if an algorithm can be divided into independent pieces of work, those pieces can be handed to separate worker processes running on different cores, which speeds up the program. Machines nowadays commonly come with 4, 6, 8, or 16 cores, so independent parts of the code can run in parallel.
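A minimal sketch of this pattern with the standard library's `multiprocessing.Pool` is shown below. The `count_words` function is only a stand-in for per-page work such as scraping and scoring one link; it is not part of Scrapegoat, and the worker count of 2 is arbitrary.

```python
from multiprocessing import Pool


def count_words(text):
    """Stand-in for independent per-page work (hypothetical example task)."""
    return len(text.split())


def count_all(texts, workers=2):
    """Run count_words on every text in a pool of worker processes.

    Pool.map returns results in the same order as the inputs.
    """
    with Pool(processes=workers) as pool:
        return pool.map(count_words, texts)


if __name__ == "__main__":
    pages = ["one two three", "four five", "six"]
    print(count_all(pages))  # [3, 2, 1]
```

The `if __name__ == "__main__":` guard matters here: on platforms that start workers by re-importing the main module, unguarded code that creates a pool would run again in every worker.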

Using Scrapegoat

The examples/test.py file contains the following example:

    from scrapegoat.main import getLinkData, generateData

    if __name__ == "__main__":
        # Scrape one link and get its relevance score
        topic = "cricket"
        language = "kn"  # Kannada
        url = "https://vijaykarnataka.com/sports/cricket/news/ind-vs-eng-brian-lara-congratulates-jasprit-bumrah-for-breaking-his-world-record-in-test-cricket/articleshow/92628545.cms"
        text, score = getLinkData(url, topic, language=language)
        print(score)

        # Scrape and download data for a topic
        topic = "cricket"
        language = "hi"  # Hindi
        generateData(topic, language, n_links=20)
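When several links need to be checked, the single-link call in the example above can be wrapped in a small loop. The helper below is a hypothetical sketch, not part of Scrapegoat. It takes the scoring function as a parameter with the same call shape as `getLinkData(url, topic, language=...)`, assumes the returned score is a number that can be compared against a cutoff, and uses an arbitrary cutoff of 0.5.

```python
def collect_relevant(urls, topic, language, fetch, threshold=0.5):
    """Return (url, text, score) for every link whose score exceeds the threshold.

    `fetch` is called as fetch(url, topic, language=language) and must return
    (text, score). The threshold value is an illustrative assumption.
    """
    kept = []
    for url in urls:
        try:
            text, score = fetch(url, topic, language=language)
        except Exception as exc:
            # One unreachable or malformed page should not stop the whole run.
            print(f"skipping {url}: {exc}")
            continue
        if score > threshold:
            kept.append((url, text, score))
    return kept
```

With the library installed, a call might look like `collect_relevant(urls, "cricket", "kn", fetch=getLinkData)`. Passing the function in also makes the loop easy to try out with a stub that needs no network access.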

Download files

File details

Details for the file scrapegoat-1.0.0.5.tar.gz.

File metadata

- Download URL: scrapegoat-1.0.0.5.tar.gz

- Upload date:

- Size: 6.4 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.8.0 pkginfo/1.8.3 readme-renderer/34.0 requests/2.25.1 requests-toolbelt/0.9.1 urllib3/1.26.9 tqdm/4.41.0 importlib-metadata/1.6.0 keyring/23.4.1 rfc3986/1.5.0 colorama/0.4.4 CPython/3.6.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 1fdb732effc8d1c174849a4b745e609b02cee8b90ed41cc01d66dd39d748055a |
| MD5 | a2d1346dc526a448ae1cdcf261f65d93 |
| BLAKE2b-256 | 275f416e2d74ee5625a7e8ba08fa67f271fe27a90bdadd9536a3a1ebbde190fb |

File details

Details for the file scrapegoat-1.0.0.5-py3-none-any.whl.

File metadata

- Download URL: scrapegoat-1.0.0.5-py3-none-any.whl

- Upload date:

- Size: 7.3 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.8.0 pkginfo/1.8.3 readme-renderer/34.0 requests/2.25.1 requests-toolbelt/0.9.1 urllib3/1.26.9 tqdm/4.41.0 importlib-metadata/1.6.0 keyring/23.4.1 rfc3986/1.5.0 colorama/0.4.4 CPython/3.6.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 50b643247f407c56a1439356d21e5995fc3b3a13b48a8c540b573d37a99e0a09 |
| MD5 | 55c908e308f1eabe6cc0737e2e7145e1 |
| BLAKE2b-256 | 976911bf346d63f20e27cad32da0c938442362bc24e8d97eba3edf658dde9347 |
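A downloaded file can be checked against its published SHA256 digest with the standard library's `hashlib`. The sketch below is one way to do that; the function names and the local file path passed in are placeholders chosen for illustration.

```python
import hashlib


def sha256_of(path, chunk_size=65536):
    """Return the hex SHA256 digest of a file, read in chunks to limit memory use."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published(path, expected_hex):
    """Compare a file's digest with a published value, ignoring case and spaces."""
    return sha256_of(path) == expected_hex.strip().lower()
```

For example, `matches_published("scrapegoat-1.0.0.5.tar.gz", expected)` would be called with `expected` set to the SHA256 value listed for that file, assuming the archive has been saved under that name in the current directory.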