Single-Input-Reasoning: one LLM call, full action graph execution with evolutionary memory

Project description

SIR — Single-Input-Reasoning

One LLM call. Full action graph. Evolutionary memory.

SIR is a Python SDK that delegates complex multi-step tasks to an LLM with a single inference call. The LLM produces an entire Directed Acyclic Graph (DAG) of actions in one shot. SIR then executes it locally with parallelism, fan-out, retry, conditional branching, speculative execution, and DAG branching.

🌐 Visit the SIR website for a clear and easy introduction.

Table of Contents

- What Makes SIR Different

- Installation

- Quick Start

- How It Works

- Benchmarks

- Architecture

- Tool Modes

- Evolutionary Memory

- Advanced Features

- Providers

- Configuration

- CLI

- Web Dashboard

- DAG Visualization

- Roadmap

- License

What Makes SIR Different

| Feature | ReAct | Plan & Execute | Chain-of-Tools | SIR |

|---|---|---|---|---|

| LLM calls per task | N (one per step) | 1 + N | 1 | 1 or 1+1 |

| Parallel execution | No | No | No | Full DAG |

| Adaptive tool selection | Yes (slow) | Yes (slow) | No, hardcoded | Yes (1 call) |

| Conditional branching | Via LLM re-call | Via LLM re-call | No | Local eval |

| Fan-out (map-reduce) | Manual | Manual | No | Built-in |

| Speculative execution | No | No | No | Yes |

| DAG branching (multi-path) | No | No | No | Yes |

| Post-execution reasoning | No | No | No | Yes (same session) |

| Post-LLM graph optimization | No | No | No | Yes |

| Evolutionary memory | No | No | No | dags.bin |

| Token efficiency | Low | Low | Medium | Compressed |

| Cost | High (N calls) | High (1+N) | Medium (1) | Minimal (1) |

Installation

pip install sir-agent # core only

pip install sir-agent[ollama] # + Ollama support

pip install sir-agent[openai] # + OpenAI support

pip install sir-agent[claude] # + Anthropic Claude support

pip install sir-agent[gemini] # + Google Gemini support

pip install sir-agent[bedrock] # + AWS Bedrock support

pip install sir-agent[openrouter] # + OpenRouter support

pip install sir-agent[perplexity] # + Perplexity support

pip install sir-agent[mistral] # + Mistral support

pip install sir-agent[all] # everything

Requires Python 3.10+.

Quick Start

from sir import SIR, tool

@tool

def search_web(query: str) -> str:

"""Search the web."""

return requests.get(f"https://api.search.com?q={query}").text

@tool

def summarize(text: str) -> str:

"""Summarize text."""

return text[:200] + "..."

@tool

def translate(text: str, lang: str) -> str:

"""Translate text."""

return translated_text

sir = SIR(model="qwen2.5:14b")

result = sir.run(

"Search latest AI news, summarize, and translate to Italian",

tools=[search_web, summarize, translate],

)

print(result.final_result)

One LLM call. Full DAG. Parallel execution. Result.

How It Works

sir.run(prompt, tools)

|

v

+----------------------------------+

| 1. Memory Lookup | Semantic vector search in dags.bin

| 2. Prompt Compilation | Compressed tool schemas + memory

| 3. Single LLM Call | One inference -> full action graph

| 4. Graph Optimization | Dead-step elimination, dedup, dep inference

| 5. Parallel Graph Execution | Topological sort -> async + speculative

| 6. Reasoning Pass (if needed) | LLM reasons on real tool results

| 7. Evolutionary Scoring | Score steps, deprecate bad ones

| 8. Memory Persistence | Save to dags.bin

+----------------------------------+

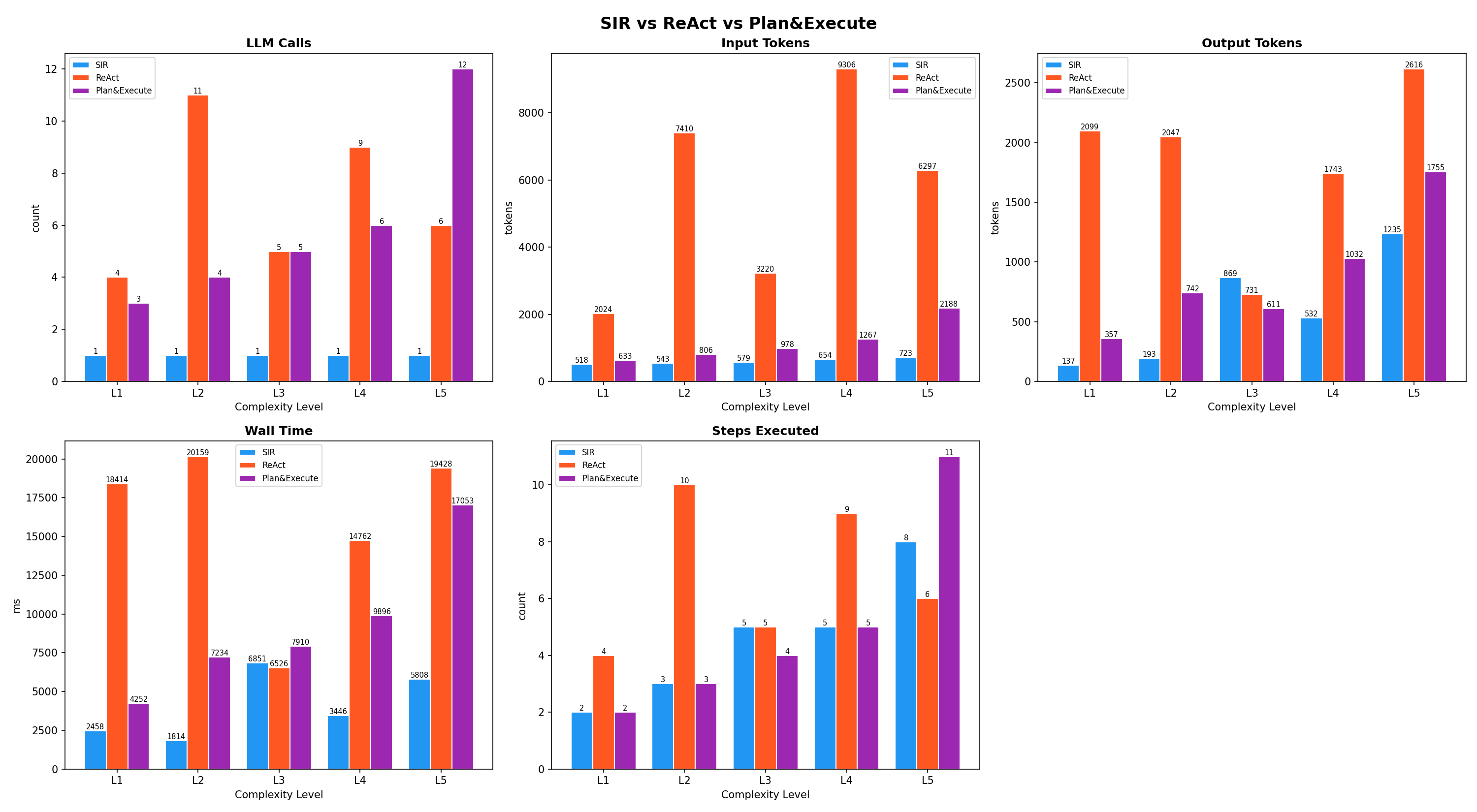

Benchmarks

Overview

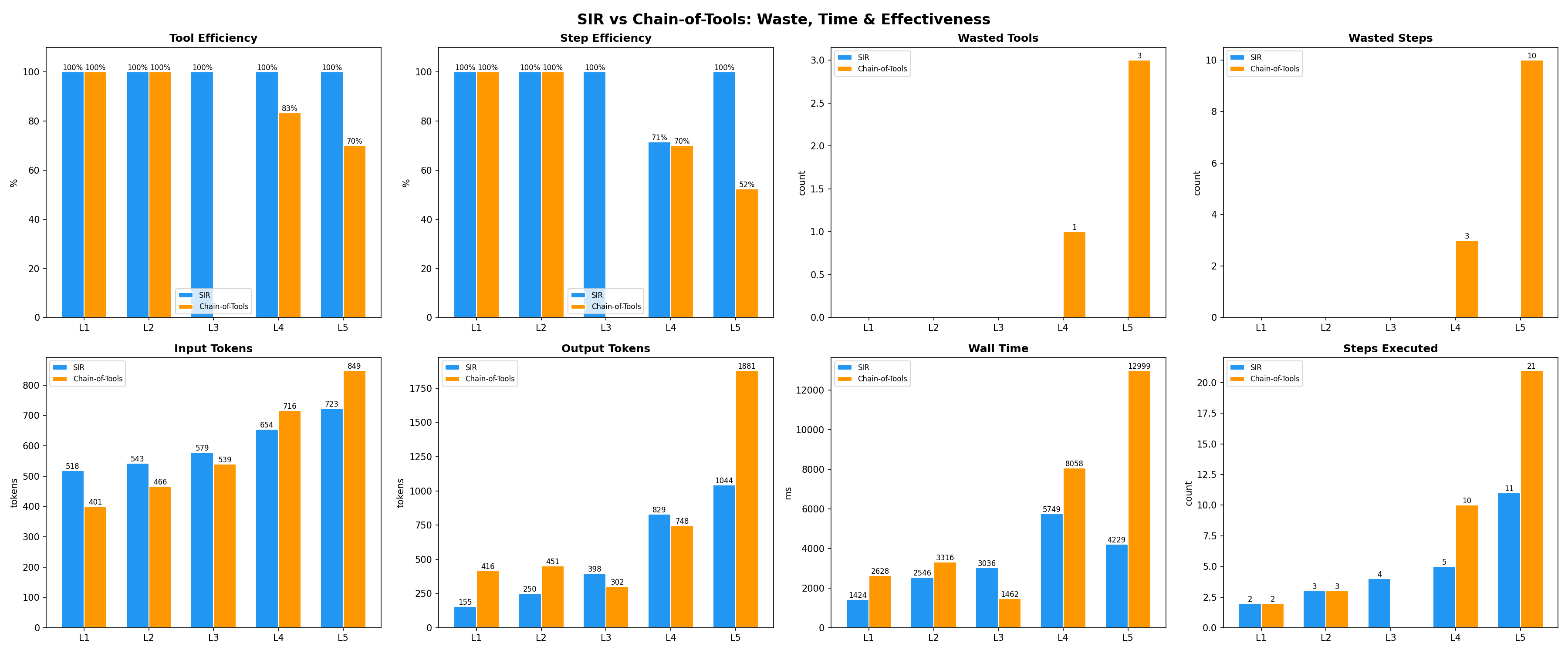

SIR-Agent vs Chain-of-Tools

SIR adaptively selects only the tools needed. Chain-of-Tools uses a hardcoded pipeline with unnecessary steps.

Benchmarked across 5 complexity levels (L1: 2 tools, L5: 11 parallel steps) using the same LLM:

| Metric | SIR | Chain-of-Tools |

|---|---|---|

| Avg Tool Efficiency | 100% | 71% |

| Avg Step Efficiency | 94% | 64% |

| Total Wasted Tools | 0 | 4 |

| Total Wasted Steps | 0 | 13 |

| Total Tokens | 5,693 | 6,769 (-16%) |

| Total Wall Time | 17s | 28s (-40%) |

Architecture

Graph Optimization (post-LLM)

After the LLM generates the DAG, SIR runs three compiler passes before execution:

- Dependency inference -- adds missing dependencies by analyzing

$sNreferences in step arguments - Dead-step elimination -- removes steps whose output is never referenced

- Duplicate merge -- merges steps calling the same tool with identical args

Speculative Execution

While the current layer executes, SIR speculatively launches steps from the next layer if their dependencies are already available. This reduces total wall time on deep DAGs.

Reasoning Pass

When a task requires understanding, analysis, or synthesis of tool results, SIR automatically activates a reasoning pass. After all tools execute, their results are injected back into the same conversation and the LLM produces a final reasoned answer. The LLM decides during planning whether reasoning is needed (via the nr flag in the DAG). For pure data pipelines (fetch, transform, translate), no reasoning pass is triggered and SIR stays at 1 LLM call.

As a fallback, SIR includes a lightweight heuristic that detects reasoning intent in the prompt. This is critical for smaller local models (7B-14B parameters) that may not reliably set the nr flag. The heuristic ensures that prompts like "explain", "summarize in your own words", or "what is the sentiment" always trigger the reasoning pass, regardless of model capability. This makes SIR production-ready across the full spectrum of LLMs — from local quantized models to frontier APIs.

DAG Branching (Multi-Path)

Steps can define alternatives -- multiple tool strategies that race in parallel:

{

"id": "s1",

"tool": "search",

"args": {"query": "AI news"},

"alternatives": [{"tool": "fetch_details", "args": {"entity": "AI"}}],

"select": "fastest"

}

Strategies: fastest (first to succeed wins), shortest, longest.

Token Compression

SIR uses compressed JSON aliases to minimize token usage:

| Full key | Alias | Savings |

|---|---|---|

tool | t | 3 tokens |

args | a | 3 tokens |

depends_on | d | 9 tokens |

condition | c | 8 tokens |

foreach | f | 6 tokens |

final_step | fs | 9 tokens |

The parser auto-expands aliases and is fully backward-compatible with full key names.

Tool Modes

SIR gives developers control over how much autonomy the LLM has in selecting tools:

| Mode | Behavior | Use case |

|---|---|---|

adaptive (default) | LLM picks the minimum tools needed | Generic prompts, many tools available |

strict | ALL tools passed must be used; LLM decides order and parallelism only | Predictable pipelines |

required | Tools marked required=True are mandatory, others optional | Mix of fixed and flexible |

# Adaptive -- LLM chooses

sir = SIR(tool_mode="adaptive")

# Strict -- all tools must be used

sir = SIR(tool_mode="strict")

# Required -- mark optional tools

@tool(required=False)

def cache(key: str, value: str) -> str: ...

sir = SIR(tool_mode="required")

Evolutionary Memory

SIR persists every executed action graph in a binary file (dags.bin) using msgpack with vector embeddings for semantic retrieval.

Run 1: LLM generates plan -> execute -> score -> store in dags.bin

Run 2: Load prior plan -> LLM sees scores/notes -> improves plan -> update

Run 3: Step X scored 2.1 -> DEPRECATED -> LLM replaces with better alternative

Run N: Converges to optimal action graph for this task

Each step stores:

- score (0-10) -- exponential moving average

- notes -- LLM annotations from previous runs

- executions -- how many times it ran

- deprecated -- true if score falls below threshold after 3 or more runs

Advanced Features

Conditional Branching

{"id":"s3","t":"notify","a":{"msg":"$s2.result"},"d":["s2"],

"c":{"ref":"$s2.result","op":"contains","val":"error"}}

Fan-out (Map-Reduce)

{"id":"s2","t":"process","a":{"item":"$item"},"d":["s1"],"f":"$s1.result"}

Supports both $sN.result references and inline arrays:

{"id":"s1","t":"search","a":{"query":"$item"},"f":["topic A","topic B"]}

Retry Policy

{"id":"s1","t":"unreliable_api","a":{"url":"..."},"r":3}

Providers

All providers read API keys from environment variables by default. You can also pass them explicitly.

Ollama (default)

from sir.providers import OllamaProvider

sir = SIR(provider=OllamaProvider(model="qwen2.5:14b"))

OpenAI

from sir.providers import OpenAIProvider

sir = SIR(provider=OpenAIProvider(model="gpt-4o")) # reads OPENAI_API_KEY

Claude (Anthropic)

from sir.providers import ClaudeProvider

sir = SIR(provider=ClaudeProvider(model="claude-sonnet-4-20250514")) # reads ANTHROPIC_API_KEY

Gemini (Google)

from sir.providers import GeminiProvider

sir = SIR(provider=GeminiProvider(model="gemini-2.5-flash")) # reads GEMINI_API_KEY

AWS Bedrock

from sir.providers import BedrockProvider

sir = SIR(provider=BedrockProvider(model="anthropic.claude-sonnet-4-20250514-v1:0")) # reads AWS_REGION + AWS_BEARER_TOKEN_BEDROCK

OpenRouter

from sir.providers import OpenRouterProvider

sir = SIR(provider=OpenRouterProvider(model="openai/gpt-4o")) # reads OPENROUTER_API_KEY

Perplexity

from sir.providers import PerplexityProvider

sir = SIR(provider=PerplexityProvider(model="sonar-pro")) # reads PERPLEXITY_API_KEY

Mistral

from sir.providers import MistralProvider

sir = SIR(provider=MistralProvider(model="mistral-large-latest")) # reads MISTRAL_API_KEY

Custom Provider

from sir.providers.llm import LLMProvider

class MyProvider(LLMProvider):

async def generate(self, messages, **kwargs) -> str:

return await my_custom_llm(messages)

Configuration

sir = SIR(

provider=OllamaProvider(model="qwen2.5:14b"),

embed_provider=OpenAIProvider(model="unused", embed_model="text-embedding-3-small"),

memory_path="dags.bin", # binary memory file

enable_memory=True, # toggle memory system

enable_optimizer=True, # toggle graph compression

enable_speculation=True, # toggle speculative execution

enable_reasoning=True, # toggle reasoning pass

tool_mode="adaptive", # "adaptive" | "strict" | "required"

deprecation_threshold=3.0, # score below this -> deprecated

similarity_threshold=0.78, # semantic memory match threshold

max_tokens=4096, # LLM output limit

llm_retries=2, # retry on LLM/parse failure

)

CLI

sir run "Search AI news and summarize" -t tools.py

sir run "..." -t tools.py --stream # live streaming

sir ui # launch web dashboard

sir ui --port 8080 # custom port

sir inspect # view evolutionary memory

sir clear # clear memory

Web Dashboard

SIR includes a local web dashboard for real-time DAG visualization, execution monitoring, and memory inspection.

pip install 'sir-agent[ui]'

sir ui

Opens at http://127.0.0.1:7437. Features:

- Playground -- enter a prompt, select provider/model, and run. View the reasoning output, raw LLM JSON, token count, and whether the task was completed in 1 or 1+1 LLM calls.

- Evolution explorer -- browse all stored DAGs with an interactive canvas visualization. Inspect step scores, execution counts, deprecated steps, and score evolution over time.

- Raw LLM output -- view the exact JSON the LLM produced for debugging.

Environment Variables

The dashboard reads credentials from a .env file in the working directory. Supported variables:

# Ollama (default, no key needed)

OLLAMA_HOST=http://localhost:11434

# OpenAI

OPENAI_API_KEY=sk-...

# Anthropic Claude

ANTHROPIC_API_KEY=sk-ant-...

# Google Gemini

GEMINI_API_KEY=...

# AWS Bedrock

AWS_REGION=us-east-1

AWS_BEARER_TOKEN_BEDROCK=...

# OpenRouter

OPENROUTER_API_KEY=sk-or-...

# Perplexity

PERPLEXITY_API_KEY=pplx-...

# Mistral

MISTRAL_API_KEY=...

The chat provider and embedding provider can be configured independently -- for example, use Bedrock for chat and OpenAI for embeddings.

Tools File

The dashboard loads tools from a Python file. Create a file with @tool decorated functions:

# tools.py

from sir import tool

@tool

def search(query: str) -> str:

"""Search the web."""

return requests.get(f"https://api.example.com?q={query}").text

@tool

def summarize(text: str) -> str:

"""Summarize text."""

return text[:200]

Then enter tools.py in the Tools file field.

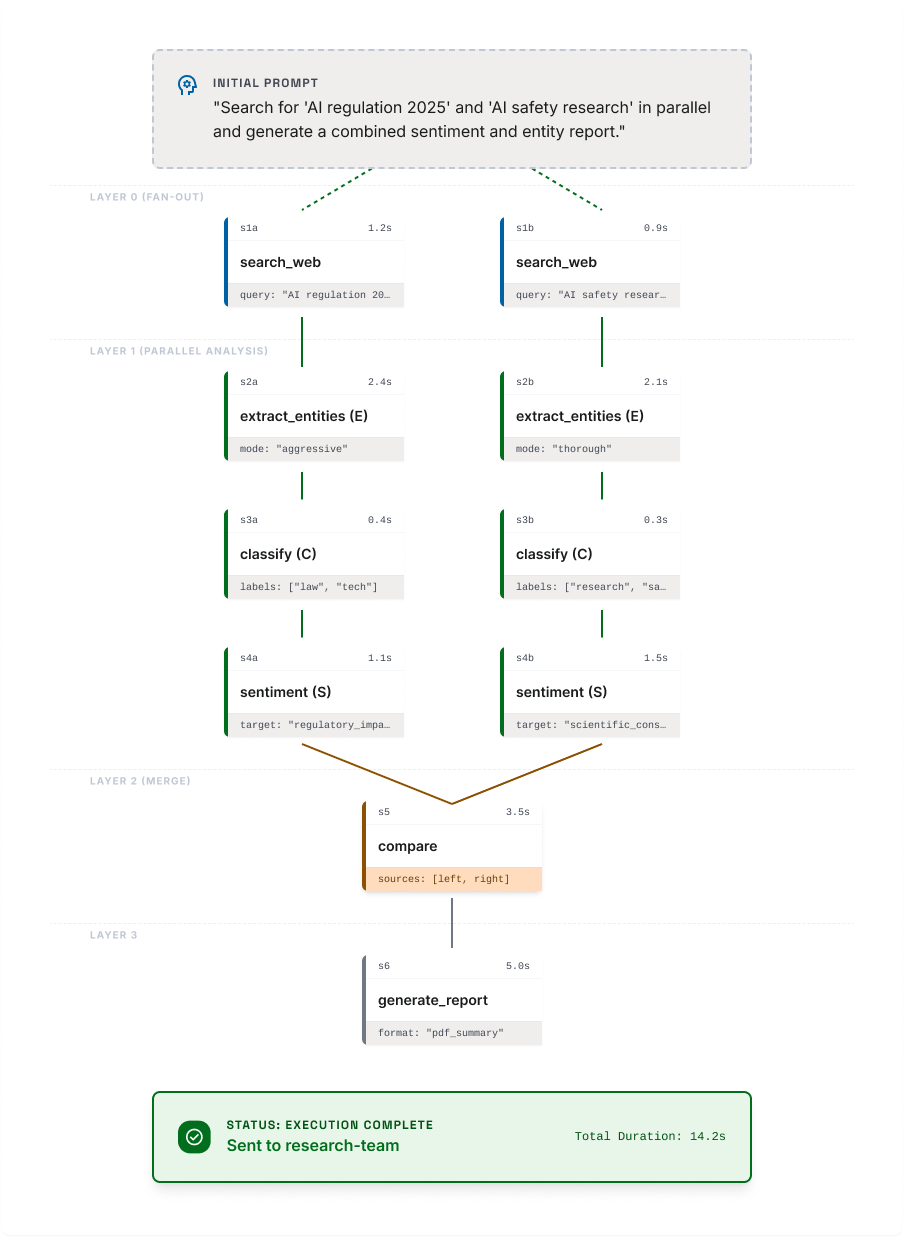

DAG Visualization

The following diagram shows an example of a DAG generated by SIR from a single LLM call. Each node represents a tool invocation, and edges represent data dependencies between steps.

🌐 For more details visit the SIR website.

Roadmap

The following features are under active development.

Cross-Agent DAG Federation

Multiple SIR instances collaborating on a single distributed DAG. Steps can be delegated to specialized agents, with results flowing back into the parent graph. Multi-agent orchestration in a single-shot planning cycle.

Self-Healing DAGs ✅

When a step fails, SIR generates a targeted micro-DAG in one additional LLM call to repair only the broken branch -- without re-executing the entire graph. A natural extension of evolutionary memory applied to runtime fault recovery.

Compile-Once, Run-Anywhere DAG Caching ✅

Optimized DAGs are compiled into a binary executable format that no longer requires the LLM. For recurring tasks, SIR becomes a pure runtime with zero inference latency and zero token cost.

License

AGPL-3.0 -- See LICENSE for details.

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file sir_agent-0.0.3.tar.gz.

File metadata

- Download URL: sir_agent-0.0.3.tar.gz

- Upload date:

- Size: 82.6 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.14.0

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

90edbd6b6253004ed61896ecfc4cf655dc6540190454580783352975b39e4a86

|

|

| MD5 |

71fbe7a08aba236eae5aa756577f1fb4

|

|

| BLAKE2b-256 |

ba781ff60b0083295145e05a8639eb184dc1c005635b63694c7fbc513ba8ec3e

|

File details

Details for the file sir_agent-0.0.3-py3-none-any.whl.

File metadata

- Download URL: sir_agent-0.0.3-py3-none-any.whl

- Upload date:

- Size: 61.0 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.14.0

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

ab73fa0b4c01ea293c72ac7688a633b1953e08cf99b4c8fad0fad86f75527cec

|

|

| MD5 |

b3d8a02644ed6b0ca8d3e1bd22f63e95

|

|

| BLAKE2b-256 |

4b5407d0b98e37c63e5e6d402f70a05fc2349603b792f119e9602c8d50f33367

|