PyTorch implementation of 'From Sparse to Soft Mixtures of Experts'

Project description

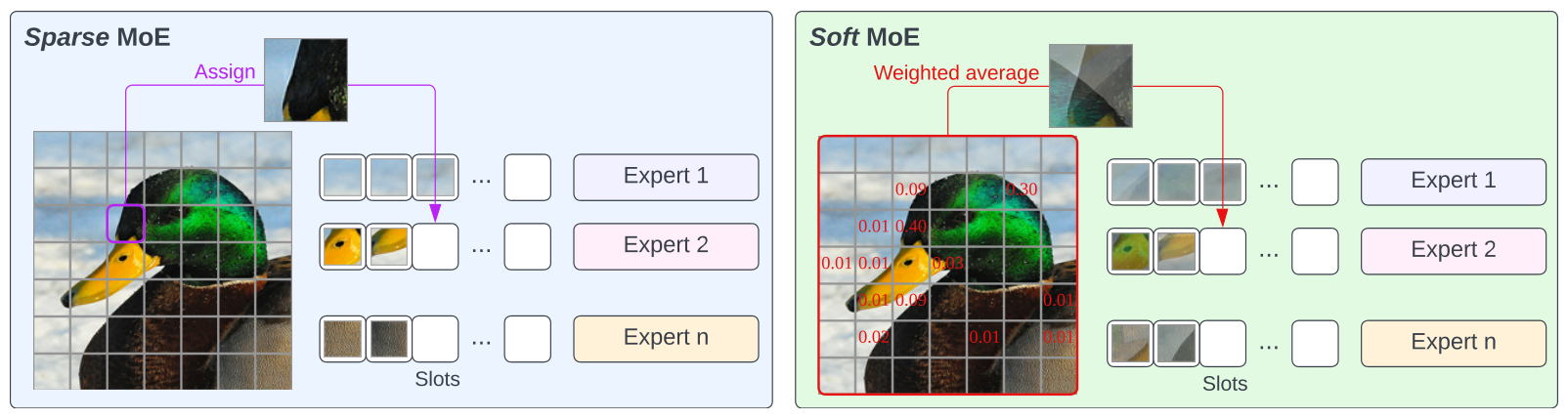

Soft Mixture of Experts

PyTorch implementation of Soft Mixture of Experts (Soft-MoE) from "From Sparse to Soft Mixtures of Experts".

This implementation extends the timm library's VisionTransformer class to support Soft-MoE MLP layers.

Installation

pip install soft-moe

Or install the entire repo with:

git clone https://github.com/bwconrad/soft-moe

cd soft-moe/

pip install -r requirements.txt

Usage

Initializing a Soft Mixture of Experts Vision Transformer

import torch

from soft_moe import SoftMoEVisionTransformer

net = SoftMoEVisionTransformer(

num_experts=128,

slots_per_expert=1,

moe_layer_index=6,

img_size=224,

patch_size=32,

num_classes=1000,

embed_dim=768,

depth=12,

num_heads=12,

mlp_ratio=4,

)

img = torch.randn(1, 3, 224, 224)

preds = net(img)

Functions are also available to initialize default network configurations:

from soft_moe import (soft_moe_vit_base, soft_moe_vit_huge,

soft_moe_vit_large, soft_moe_vit_small,

soft_moe_vit_tiny)

net = soft_moe_vit_tiny()

net = soft_moe_vit_small()

net = soft_moe_vit_base()

net = soft_moe_vit_large()

net = soft_moe_vit_huge()

net = soft_moe_vit_tiny(num_experts=64, slots_per_expert=2, img_size=128)

Setting the Mixture of Expert Layers

The moe_layer_index argument sets at which layer indices to use MoE MLP layers instead of regular MLP layers.

When an int is given, all layers starting from that depth index will be MoE layers.

net = SoftMoEVisionTransformer(

moe_layer_index=6, # Blocks 6-12

depth=12,

)

When a list is given, all specified layers will be MoE layers.

net = SoftMoEVisionTransformer(

moe_layer_index=[0, 2, 4], # Blocks 0, 2 and 4

depth=12,

)

- Note:

moe_layer_indexuses 0-index convention.

Creating a Soft Mixture of Experts Layer

The SoftMoELayerWrapper class can be used to make any network layer, that takes a tensor of shape [batch, length, dim], into a Soft Mixture of Experts layer.

import torch

import torch.nn as nn

from soft_moe import SoftMoELayerWrapper

x = torch.rand(1, 16, 128)

layer = SoftMoELayerWrapper(

dim=128,

slots_per_expert=2,

num_experts=32,

layer=nn.Linear,

# nn.Linear arguments

in_features=128,

out_features=32,

)

y = layer(x)

layer = SoftMoELayerWrapper(

dim=128,

slots_per_expert=1,

num_experts=16,

layer=nn.TransformerEncoderLayer,

# nn.TransformerEncoderLayer arguments

d_model=128,

nhead=8,

)

y = layer(x)

- Note: If the name of a layer argument overlaps with one of other arguments (e.g.

dim) you can pass a partial function tolayer.- e.g.

layer=partial(MyCustomLayer, dim=128)

- e.g.

Citation

@article{puigcerver2023sparse,

title={From Sparse to Soft Mixtures of Experts},

author={Puigcerver, Joan and Riquelme, Carlos and Mustafa, Basil and Houlsby, Neil},

journal={arXiv preprint arXiv:2308.00951},

year={2023}

}

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file soft_moe-0.0.1.tar.gz.

File metadata

- Download URL: soft_moe-0.0.1.tar.gz

- Upload date:

- Size: 13.8 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.11.3

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

6d2aaace545cf301d1c596f5bad2e296ce9ae7b8d76fb373d20124e5de9a286f

|

|

| MD5 |

eff480acfebe86a4cf84fdcbc03945c4

|

|

| BLAKE2b-256 |

24802a0615570c6fa8020d92039ed0bc3a702db275c15c57d366d28fea264c59

|

File details

Details for the file soft_moe-0.0.1-py3-none-any.whl.

File metadata

- Download URL: soft_moe-0.0.1-py3-none-any.whl

- Upload date:

- Size: 14.4 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.11.3

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

573f82e0e883d0a3bdcb05592a64c7cf15a959fcd62afa1b79dda0359945945f

|

|

| MD5 |

e997bb1628d062cca6609d4a00df8f74

|

|

| BLAKE2b-256 |

ca01edd5b1efc5f08c32ad1c97802e4df5abdd57339e474ed0cad71b9d9571cd

|