Jupyter Notebook & Lab extension to monitor Apache Spark jobs from a notebook


SparkMonitor

An extension for Jupyter Lab & Jupyter Notebook to monitor Apache Spark (pyspark) job execution from notebooks.


Requirements

- JupyterLab 4 or Jupyter Notebook 4.4.0 or later

- PySpark 3.x or 4.x

- SparkMonitor requires Spark API mode "Spark Classic" (default in Spark 3.x and 4.0).

- Not compatible with Spark Client (Spark Connect), which uses the new decoupled client-server architecture.
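The API-mode requirement above can be checked before a session is created. The following is a minimal sketch, not part of SparkMonitor: the helper name `spark_connect_indicators` is hypothetical, and it assumes that the `spark.api.mode` and `spark.remote` settings and the `SPARK_REMOTE` environment variable are what select Spark Connect in your Spark version. Verify those names against the Spark documentation for your release.

```python
from typing import Mapping


def spark_connect_indicators(conf: Mapping[str, str], env: Mapping[str, str]) -> list:
    """Return human-readable reasons why these settings look like Spark Connect.

    An empty list means nothing points at Spark Connect, so the session
    would presumably run in Spark Classic mode.
    """
    problems = []
    # Assumption: 'spark.api.mode' selects classic vs. connect (Spark 4.x).
    api_mode = conf.get("spark.api.mode", "classic").strip().lower()
    if api_mode != "classic":
        problems.append(f"spark.api.mode is {api_mode!r}, expected 'classic'")
    # Assumption: a remote URL in the config means a Spark Connect client.
    if conf.get("spark.remote"):
        problems.append("spark.remote is set")
    # Assumption: the same remote URL can come from the environment.
    if env.get("SPARK_REMOTE"):
        problems.append("SPARK_REMOTE environment variable is set")
    return problems
```

In a notebook this could be called with the settings you intend to pass to the session builder and `os.environ`, refusing to enable the listener when the returned list is not empty.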

Features

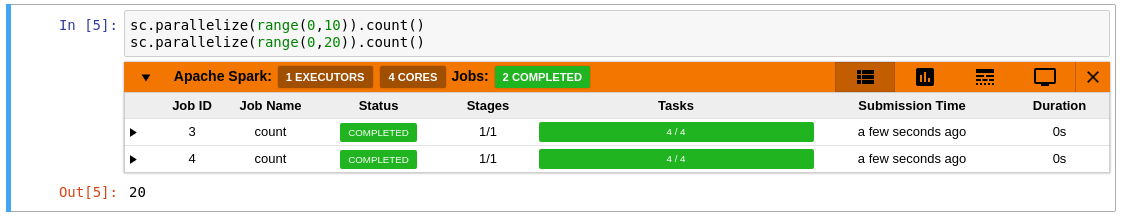

- Live Monitoring: Automatically displays an interactive monitoring panel below each cell that runs Spark jobs in your Jupyter notebook.

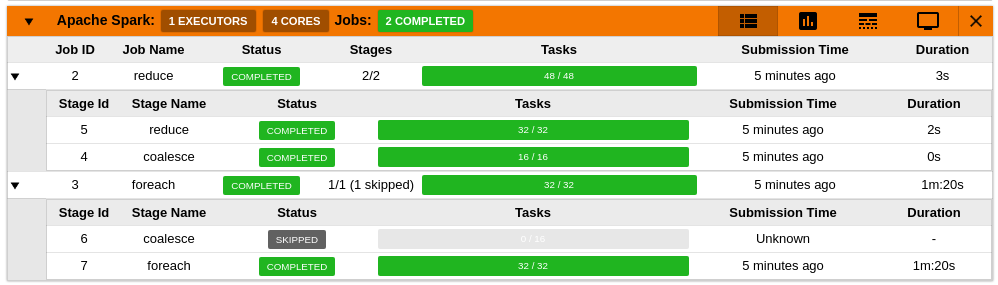

- Job and Stage Table: View a real-time table of Spark jobs and stages, each with progress bars for easy tracking.

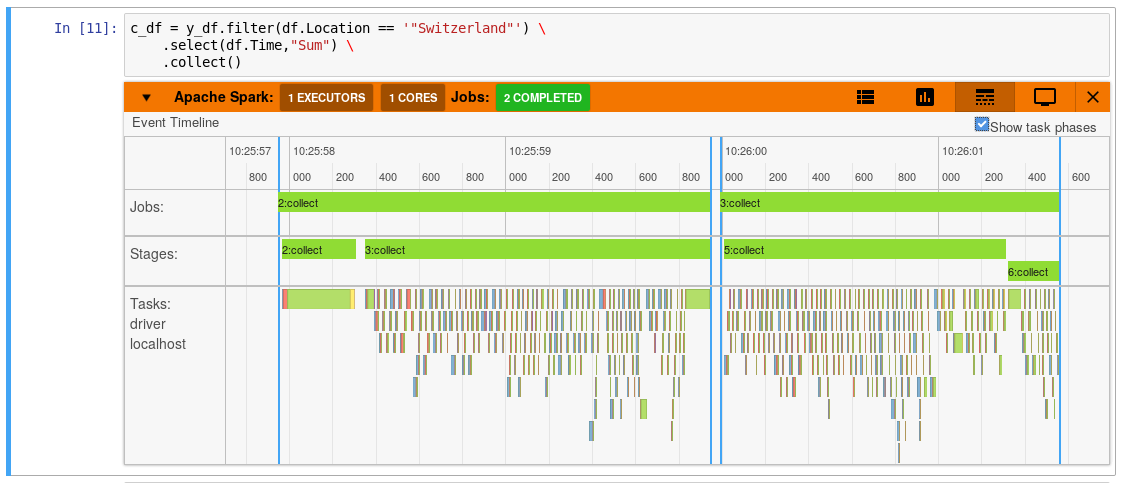

- Timeline Visualization: Explore a dynamic timeline showing the execution flow of jobs, stages, and tasks.

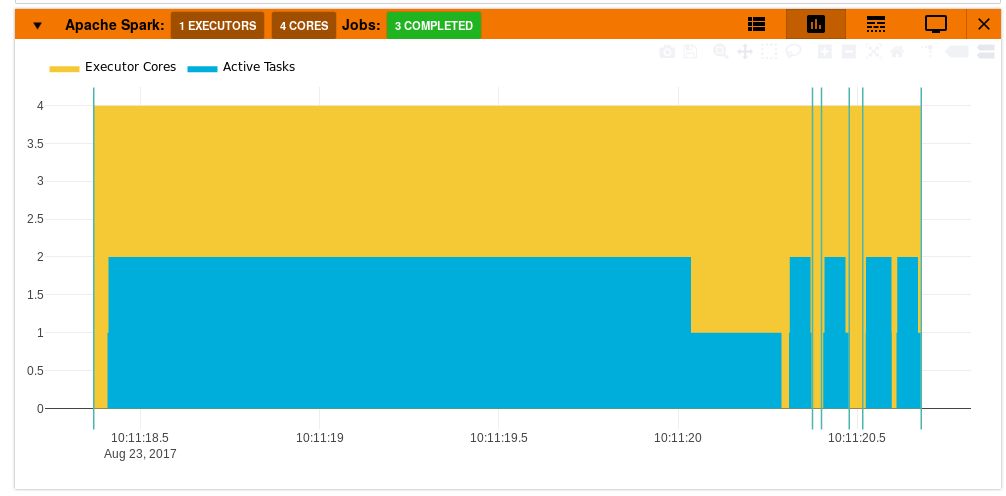

- Resource Graphs: Monitor active tasks and executor core usage over time with intuitive graphs.


Quick Start

Installation

pip install sparkmonitor # install the extension

# Set up an IPython profile and add the sparkmonitor kernel extension to it

ipython profile create # if it does not exist

echo "c.InteractiveShellApp.extensions.append('sparkmonitor.kernelextension')" >> $(ipython profile locate default)/ipython_kernel_config.py

# With JupyterLab, the extension is enabled automatically.

# With older versions of Jupyter Notebook, install and enable the nbextension with:

jupyter nbextension install sparkmonitor --py

jupyter nbextension enable sparkmonitor --py
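The `echo ... >>` step appends the line every time it runs, so repeating the installation leaves duplicate entries in `ipython_kernel_config.py`. As a hedged alternative, the sketch below appends the same line only when it is missing. The helper name `enable_kernel_extension` is hypothetical and not part of SparkMonitor.

```python
from pathlib import Path

# The exact line that the installation instructions append to the config file.
EXTENSION_LINE = "c.InteractiveShellApp.extensions.append('sparkmonitor.kernelextension')"


def enable_kernel_extension(config_path) -> bool:
    """Append EXTENSION_LINE to the config file unless it is already present.

    Returns True if the file was modified, False if nothing had to change.
    """
    path = Path(config_path)
    text = path.read_text() if path.exists() else ""
    if any(line.strip() == EXTENSION_LINE for line in text.splitlines()):
        return False
    # Make sure the new entry starts on its own line.
    if text and not text.endswith("\n"):
        text += "\n"
    path.write_text(text + EXTENSION_LINE + "\n")
    return True
```

Here `config_path` would be the `ipython_kernel_config.py` file inside the directory printed by `ipython profile locate default`.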

How to use SparkMonitor in your notebook

Create your Spark session with the extra configurations to activate the SparkMonitor listener.

Set spark.extraListeners to sparkmonitor.listener.JupyterSparkMonitorListener and spark.driver.extraClassPath to the path of the listener JAR inside the installed sparkmonitor Python package: path/to/package/sparkmonitor/listener_<scala_version>.jar, where <scala_version> is the Scala version of your Spark build (for example 2.13).

Example:

from pyspark.sql import SparkSession

spark = (
    SparkSession.builder
    .config('spark.extraListeners', 'sparkmonitor.listener.JupyterSparkMonitorListener')
    .config('spark.driver.extraClassPath', 'venv/lib/python3.12/site-packages/sparkmonitor/listener_2.13.jar')
    .getOrCreate()
)
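The JAR path in the example is hard-coded to one virtual environment and Python version. The sketch below shows one way to derive it from the installed package instead. It assumes only the `listener_<scala_version>.jar` naming described above; the helper names `package_dir` and `listener_jar` are hypothetical and not part of SparkMonitor.

```python
from importlib.util import find_spec
from pathlib import Path


def package_dir(name: str = "sparkmonitor") -> Path:
    """Return the directory of an installed package without importing it."""
    spec = find_spec(name)
    if spec is None or not spec.submodule_search_locations:
        raise ModuleNotFoundError(f"package {name!r} is not installed")
    return Path(next(iter(spec.submodule_search_locations)))


def listener_jar(pkg_dir, scala_version: str) -> Path:
    """Return the path of listener_<scala_version>.jar inside pkg_dir."""
    pkg_dir = Path(pkg_dir)
    jar = pkg_dir / f"listener_{scala_version}.jar"
    if not jar.is_file():
        # List what is there so a version mismatch is easy to spot.
        available = sorted(p.name for p in pkg_dir.glob("listener_*.jar"))
        raise FileNotFoundError(f"{jar.name} not found; available: {available}")
    return jar
```

The result can then be passed to the builder, for example `.config('spark.driver.extraClassPath', str(listener_jar(package_dir(), '2.13')))`, with the Scala version chosen to match your Spark build.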

Legacy: with the extension installed, a SparkConf object called conf will be usable from your notebooks. You can use it as follows:

from pyspark import SparkContext

# Start the Spark context using the SparkConf object named `conf` that the extension created in your kernel.

sc = SparkContext.getOrCreate(conf=conf)

Development

If you'd like to develop the extension:

# See package.json scripts for building the frontend

yarn run build:<action>

# Install the package in editable mode

pip install -e .

# Symlink jupyterlab extension

jupyter labextension develop --overwrite .

# Watch for frontend changes

yarn run watch

# Build the spark JAR files

sbt +package

History

- The first version of SparkMonitor was written by krishnan-r as a Google Summer of Code project with the SWAN Notebook Service team at CERN.

- Further fixes and improvements were made by the team at CERN and members of the community, and were maintained at swan-cern/jupyter-extensions/tree/master/SparkMonitor.

- Jafer Haider worked on updating the extension to be compatible with JupyterLab as part of his internship at Yelp.

  - Jafer's work at the fork jupyterlab-sparkmonitor has since been merged into this repository to provide a single package for both JupyterLab and Jupyter Notebook.

- Further development and maintenance are being done by the SWAN team at CERN and the community.

Changelog

This repository is published to PyPI as sparkmonitor.

- 2.x: see the GitHub releases page of this repository.

- 1.x and below were published from swan-cern/jupyter-extensions, and some initial versions from krishnan-r/sparkmonitor.


File details

Details for the file sparkmonitor-3.1.0.tar.gz.

File metadata

- Download URL: sparkmonitor-3.1.0.tar.gz

- Upload date:

- Size: 3.5 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.3

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 92bb8a5a4b36eca5a6d384de2fdea8b04a1a470d5bdb527d22a1e7364c4a9e4e |
| MD5 | 47827c1e4e4694d81cfad6e244e86207 |
| BLAKE2b-256 | ffae4d13fa9b0eac43a628f81b398754fc63bb2c18530444fd2ab0b986fac708 |

File details

Details for the file sparkmonitor-3.1.0-py3-none-any.whl.

File metadata

- Download URL: sparkmonitor-3.1.0-py3-none-any.whl

- Upload date:

- Size: 3.3 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.3

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | d966bdcc475888045f8362dc80233b11936d18acdff7fbe5b9d8124aae6b2736 |
| MD5 | 12dd4886002009b46e544956578cf7e0 |
| BLAKE2b-256 | 49c1c464d9225f83ce21de696bede85f32e7886790731860704ecb51319b22f6 |
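The SHA256 digests listed for each file can be used to check a download before installing it. The following is a minimal sketch using only the Python standard library. The helper names `sha256_of` and `matches_sha256` are hypothetical, and the file path you pass in is wherever you saved the download.

```python
import hashlib


def sha256_of(path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_sha256(path, expected_hex: str) -> bool:
    """Compare a file's SHA256 digest with a published hex digest."""
    return sha256_of(path) == expected_hex.strip().lower()
```

For example, `matches_sha256("sparkmonitor-3.1.0.tar.gz", "<SHA256 from the table>")` should return `True` for an intact copy of the source distribution.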