SpKit: Signal Processing ToolKit


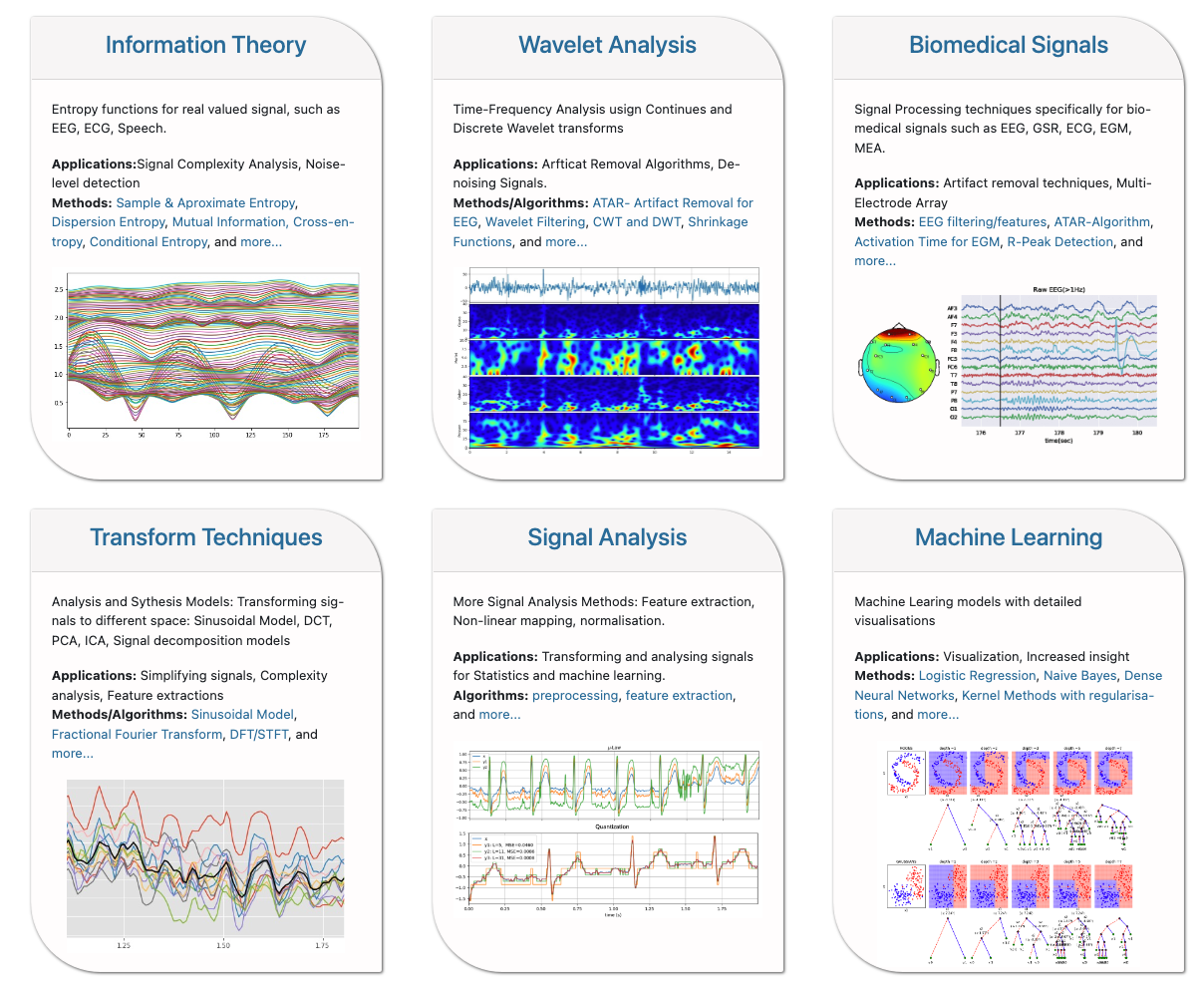

Signal Processing toolkit

Links: Homepage | Documentation | GitHub | PyPI project

Installation

Requirements: numpy, matplotlib, scipy, scikit-learn, seaborn

Install with pip:

pip install spkit

Upgrade with pip:

pip install spkit --upgrade

For an up-to-date list of contents and documentation, check GitHub or the Documentation page.

List of functions (check the homepage for the updated list)

Information Theory and Signal Processing functions

for real-valued signals

- Entropy

  - Shannon entropy

  - Rényi entropy of order α, collision entropy

  - Joint entropy

  - Conditional entropy

  - Mutual Information

  - Cross entropy

  - Kullback–Leibler divergence

  - Spectral Entropy

  - Approximate Entropy

  - Sample Entropy

  - Permutation Entropy

  - SVD Entropy

- Dispersion Entropy (Advanced), for time series signals

  - Dispersion Entropy

  - Dispersion Entropy, multiscale

  - Dispersion Entropy, multiscale, refined

- Differential Entropy (Advanced), for time series signals

  - Differential Entropy

  - Mutual Information, Conditional Entropy, Joint Entropy

  - Transfer Entropy
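As a rough illustration of a few of the measures listed above, here is a minimal standard-library sketch of Shannon entropy, Rényi entropy, and Kullback–Leibler divergence for discrete symbol sequences. It is not spkit's implementation; the function names (`probabilities`, `shannon_entropy`, `renyi_entropy`, `kl_divergence`) are hypothetical and chosen only for this example.

```python
import math
from collections import Counter


def probabilities(samples):
    """Estimate a discrete distribution from observed symbols."""
    counts = Counter(samples)
    total = len(samples)
    return {symbol: count / total for symbol, count in counts.items()}


def shannon_entropy(p):
    """Shannon entropy in bits: H = -sum p log2 p."""
    return -sum(v * math.log2(v) for v in p.values() if v > 0)


def renyi_entropy(p, alpha):
    """Renyi entropy of order alpha in bits; alpha = 2 is the collision entropy."""
    if alpha == 1:
        return shannon_entropy(p)  # limit of the Renyi entropy as alpha -> 1
    return math.log2(sum(v ** alpha for v in p.values())) / (1 - alpha)


def kl_divergence(p, q):
    """D(p || q) in bits; assumes q[s] > 0 wherever p[s] > 0."""
    return sum(v * math.log2(v / q[s]) for s, v in p.items() if v > 0)


x = [0, 0, 1, 1, 2, 2, 3, 3]      # four equally likely symbols
px = probabilities(x)
print(shannon_entropy(px))        # 2.0 bits
print(renyi_entropy(px, alpha=2)) # collision entropy of the same distribution
```

For continuous-valued signals, the samples would first have to be quantized (for example into histogram bins) before a sketch like this applies.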

Matrix Decomposition

- SVD

- ICA using InfoMax, Extended-InfoMax, FastICA & Picard

Continuous Wavelet Transform

- Gauss wavelet

- Morlet wavelet

- Gabor wavelet

- Poisson wavelet

- Mexican hat wavelet

- Shannon wavelet

Discrete Wavelet Transform

- Wavelet filtering

- Wavelet Packet Analysis and Filtering

Signal Filtering

- Removing DC / smoothing for multi-channel signals

- Bandpass/Lowpass/Highpass/Bandreject filtering for multi-channel signals
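To make the first item concrete, the following is a small pure-Python sketch of DC removal and moving-average smoothing applied channel by channel. It is an illustration only and not spkit's API: the helper names `remove_dc` and `moving_average` are hypothetical, and the signal is assumed to be stored as a list of channels, each a list of samples.

```python
def remove_dc(channels):
    """Subtract each channel's mean so that every channel is zero-mean."""
    out = []
    for ch in channels:
        mean = sum(ch) / len(ch)
        out.append([v - mean for v in ch])
    return out


def moving_average(channels, width=3):
    """Smooth each channel with a centred moving average.

    Near the edges the window shrinks to the samples that exist.
    """
    half = width // 2
    out = []
    for ch in channels:
        smoothed = []
        for i in range(len(ch)):
            window = ch[max(0, i - half): i + half + 1]
            smoothed.append(sum(window) / len(window))
        out.append(smoothed)
    return out


# Two channels, four samples each (hypothetical data).
X = [[1.0, 2.0, 3.0, 4.0],
     [10.0, 10.0, 10.0, 10.0]]

X_zero_mean = remove_dc(X)
X_smooth = moving_average(X, width=3)
```

Processing each channel independently is the simplest design; a library working on arrays would typically vectorize the same operation across channels.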

Biomedical Signal Processing

- EEG Signal Processing

- MEA Processing Toolkit

Artifact Removal Algorithm

- ATAR Algorithm: Automatic and Tunable Artifact Removal Algorithm for EEG (from the article)

- ICA-based algorithm

Analysis and Synthesis Models

- DFT Analysis & Synthesis

- STFT Analysis & Synthesis

- Sinusoidal Model - Analysis & Synthesis

  - to decompose a signal into sinusoidal wave tracks

- f0 detection
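The analysis and synthesis idea behind the DFT item can be sketched with a direct (O(N²)) transform pair in plain Python. This is a teaching sketch under that assumption, not the routine spkit provides; `dft` and `idft` are hypothetical names.

```python
import cmath


def dft(x):
    """Analysis: X[k] = sum_n x[n] * exp(-2j*pi*k*n/N)."""
    N = len(x)
    return [sum(x[n] * cmath.exp(-2j * cmath.pi * k * n / N) for n in range(N))
            for k in range(N)]


def idft(X):
    """Synthesis: x[n] = (1/N) * sum_k X[k] * exp(2j*pi*k*n/N)."""
    N = len(X)
    return [sum(X[k] * cmath.exp(2j * cmath.pi * k * n / N) for k in range(N)) / N
            for n in range(N)]


x = [1.0, 0.0, -1.0, 0.0]           # one cycle of a cosine over 4 samples
X = dft(x)                          # energy sits in bins 1 and 3
x_rec = [v.real for v in idft(X)]   # synthesis recovers x up to rounding error
```

An STFT applies the same analysis to short, windowed frames of the signal and overlap-adds the synthesized frames.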

Ramanujan Methods for period estimation

- Period estimation for a short length sequence using Ramanujan Filter Banks (RFB)

- Minimizing sparsity of periods
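The building block of these methods is the Ramanujan sum c_q(n), the sum of exp(2πi·k·n/q) over all 1 ≤ k ≤ q coprime to q. The sketch below computes it and uses a deliberately simplified circular projection to estimate a period. It is not spkit's filter-bank implementation: the names `ramanujan_sum`, `period_energy`, and `estimate_period` are hypothetical, and the signal length is assumed to be a multiple of every candidate period so that the projections are exact.

```python
import math


def ramanujan_sum(q, n):
    """c_q(n): sum of cos(2*pi*k*n/q) over 1 <= k <= q with gcd(k, q) == 1."""
    total = sum(math.cos(2 * math.pi * k * n / q)
                for k in range(1, q + 1) if math.gcd(k, q) == 1)
    return round(total)  # Ramanujan sums are always integers


def period_energy(x, q):
    """Energy of x circularly convolved with the q-periodic sequence c_q.

    Assumes len(x) is a multiple of q.
    """
    N = len(x)
    c = [ramanujan_sum(q, n) for n in range(q)]
    energy = 0
    for m in range(N):
        y = sum(c[n % q] * x[(m - n) % N] for n in range(N))
        energy += y * y
    return energy


def estimate_period(x, max_period, tol=1e-9):
    """Least common multiple of all q <= max_period with non-zero energy."""
    period = 1
    for q in range(1, max_period + 1):
        if period_energy(x, q) > tol:
            period = period * q // math.gcd(period, q)
    return period


x = [1, 0, 0, 2, 0, 1] * 10                 # length 60, a multiple of 1..6
print([ramanujan_sum(4, n) for n in range(4)])  # [2, 0, -2, 0]
print(estimate_period(x, max_period=6))         # 6
```

Real data is short and noisy, so practical filter banks threshold the energies rather than testing for exact zeros.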

Fractional Fourier Transform

- Fractional Fourier Transform

- Fast Fractional Fourier Transform

Machine Learning models - with visualizations

- Logistic Regression

- Naive Bayes

- Decision Trees

and many more ...

Cite As

@software{nikesh_bajaj_2021_4710694,
  author    = {Nikesh Bajaj},
  title     = {Nikeshbajaj/spkit: 0.0.9.4},
  month     = apr,
  year      = 2021,
  publisher = {Zenodo},
  version   = {0.0.9.4},
  doi       = {10.5281/zenodo.4710694},
  url       = {https://doi.org/10.5281/zenodo.4710694}
}

Contacts:

- Nikesh Bajaj

- https://nikeshbajaj.in

- n.bajaj[AT]qmul.ac.uk, n.bajaj[AT]imperial[dot]ac[dot]uk


Download files


Source Distribution

spkit-0.0.9.7.tar.gz (4.2 MB)

Built Distribution


File details

Details for the file spkit-0.0.9.7.tar.gz.

File metadata

- Download URL: spkit-0.0.9.7.tar.gz

- Upload date:

- Size: 4.2 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/5.1.1 CPython/3.11.5

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 9ecdf0b811cfb5d7a68b7311407e21cfee9177db308f4f9166f247ede00603b3 |
| MD5 | a0d5e6d3ab6acef948a7a655b3535a3b |
| BLAKE2b-256 | 2b5dbec5efe3b11d0ed693774dad739b10b4813575ada9a4ea39e2659cdd7212 |

File details

Details for the file spkit-0.0.9.7-py3-none-any.whl.

File metadata

- Download URL: spkit-0.0.9.7-py3-none-any.whl

- Upload date:

- Size: 4.3 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/5.1.1 CPython/3.11.5

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | cf3b3542b72e3ae87c2a45b83223a5442f4fb253a2f7886b62b9cecd9b8cc316 |
| MD5 | 07da842953a6cd1cd15f0b7f93b1871a |
| BLAKE2b-256 | 05e3d9f22f54eff617119b68cc66aa799b6212ecb4d7b765b0cc4ac0b39606cd |
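A downloaded file can be checked against the SHA256 or MD5 digests listed for it with Python's standard `hashlib` module. The sketch below is one way to do that; the helper names `file_digest` and `matches` are hypothetical, and the path in the usage comment assumes the file was saved to the current directory.

```python
import hashlib


def file_digest(path, algorithm="sha256", chunk_size=1 << 20):
    """Return the hex digest of a file, reading it in chunks."""
    h = hashlib.new(algorithm)
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def matches(path, expected_hex, algorithm="sha256"):
    """True if the file's digest equals the expected hex string."""
    return file_digest(path, algorithm) == expected_hex.strip().lower()


# Usage (assumes the sdist is in the current directory):
# matches("spkit-0.0.9.7.tar.gz",
#         "9ecdf0b811cfb5d7a68b7311407e21cfee9177db308f4f9166f247ede00603b3")
```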