Fast probabilistic data linkage at scale

[!IMPORTANT] 🎉 Splink 4 has been released! Examples of new syntax are here and a release announcement is here.

Fast, accurate and scalable data linkage and deduplication

Splink is a Python package for probabilistic record linkage (entity resolution) that allows you to deduplicate and link records from datasets that lack unique identifiers.

It is widely used within government, academia and the private sector - see use cases.

Key Features

⚡ Speed: Capable of linking a million records on a laptop in around a minute.

🎯 Accuracy: Support for term frequency adjustments and user-defined fuzzy matching logic.

🌐 Scalability: Execute linkage in Python (using DuckDB) or big-data backends like AWS Athena or Spark for 100+ million records.

🎓 Unsupervised Learning: No training data is required for model training.

📊 Interactive Outputs: A suite of interactive visualisations helps users understand their model and diagnose problems.

Splink's linkage algorithm is based on Fellegi-Sunter's model of record linkage, with various customisations to improve accuracy.
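
To make the Fellegi-Sunter idea concrete, the sketch below computes partial match weights from m and u probabilities and adds them to a prior. It illustrates the general model only, not Splink's internal implementation; the function names and all the m, u and prior values are made up for the example.

```python
import math


def partial_match_weight(m: float, u: float) -> float:
    """log2 of the Bayes factor m/u for one comparison level.

    m: P(observing this level | records match)
    u: P(observing this level | records do not match)
    """
    return math.log2(m / u)


def total_match_weight(prior: float, levels: list[tuple[float, float]]) -> float:
    """Prior log2-odds plus the sum of partial match weights."""
    prior_weight = math.log2(prior / (1 - prior))
    return prior_weight + sum(partial_match_weight(m, u) for m, u in levels)


# Hypothetical (m, u) pairs: exact first-name match, fuzzy surname match, city mismatch
observed_levels = [(0.8, 0.01), (0.1, 0.02), (0.05, 0.9)]
weight = total_match_weight(prior=0.001, levels=observed_levels)
print(f"match weight: {weight:.2f}")
```

Levels where m is much larger than u push the weight up, while levels that are more common among non-matches (here the city mismatch) pull it down.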

What does Splink do?

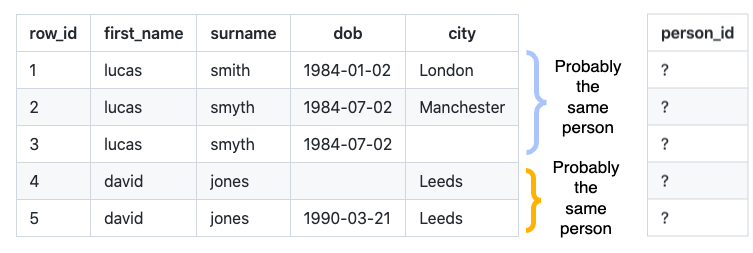

Consider the following records that lack a unique person identifier:

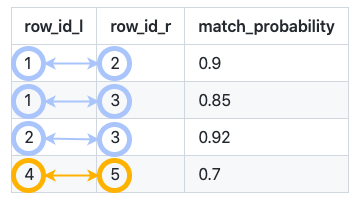

Splink predicts which rows link together:

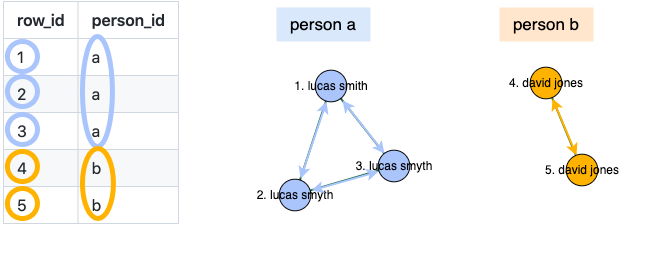

and clusters these links to produce an estimated person ID:
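
One common way to turn pairwise links into cluster IDs is to take the connected components of the graph whose edges are the predicted links. The sketch below shows that idea in plain Python with a small union-find; the record IDs and links are invented, and it illustrates the concept rather than reproducing Splink's own code.

```python
def cluster_links(record_ids, links):
    """Assign each record a cluster ID using connected components (union-find)."""
    parent = {r: r for r in record_ids}

    def find(r):
        # Follow parent pointers to the root, compressing the path as we go
        while parent[r] != r:
            parent[r] = parent[parent[r]]
            r = parent[r]
        return r

    for a, b in links:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            # Keep the smaller ID as the root so cluster IDs are deterministic
            parent[max(root_a, root_b)] = min(root_a, root_b)

    return {r: find(r) for r in record_ids}


# Hypothetical links predicted above some threshold
clusters = cluster_links([1, 2, 3, 4, 5], [(1, 2), (2, 3), (4, 5)])
print(clusters)
```

Records 1, 2 and 3 end up sharing one cluster ID even though 1 and 3 were never linked directly, which is what makes the cluster ID usable as an estimated person ID.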

What data does Splink work best with?

Splink performs best with input data containing multiple columns that are not highly correlated. For instance, if the entity type is persons, you may have columns for full name, date of birth, and city. If the entity type is companies, you could have columns for name, turnover, sector, and telephone number.

High correlation occurs when one column is highly predictable from another - for instance, city can be predicted from postcode. Correlation is particularly problematic if all of your input columns are highly correlated.
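
A rough way to spot this kind of correlation is to measure how often the most common value of one column, given another, would be a correct guess. The sketch below is only a heuristic under that assumption; the function name and sample rows are hypothetical and it is not part of Splink.

```python
from collections import Counter, defaultdict


def predictability(rows, given, target):
    """Share of rows whose `target` equals the most common `target` for their `given` value.

    A value close to 1.0 suggests `target` adds little information beyond `given`.
    """
    counts = defaultdict(Counter)
    for row in rows:
        counts[row[given]][row[target]] += 1
    correct = sum(c.most_common(1)[0][1] for c in counts.values())
    return correct / len(rows)


# Hypothetical records: city is fully determined by postcode here
rows = [
    {"postcode": "SW1A", "city": "London", "first_name": "Ann"},
    {"postcode": "SW1A", "city": "London", "first_name": "Bob"},
    {"postcode": "M1", "city": "Manchester", "first_name": "Ann"},
    {"postcode": "M1", "city": "Manchester", "first_name": "Cat"},
]
print(predictability(rows, "postcode", "city"))
print(predictability(rows, "postcode", "first_name"))
```

On small samples this score is inflated because many groups contain only one or two rows, so it is best read on a reasonably large extract of the real data.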

Splink is not designed for linking a single column containing a 'bag of words', for example a table with a single 'company name' column and no other details.

Documentation

The homepage for the Splink documentation can be found here, including a tutorial and examples that can be run in the browser.

The specification of the Fellegi-Sunter statistical model behind Splink is similar to that used in the R fastLink package. Accompanying the fastLink package is an academic paper that describes this model. The Splink documentation site and a series of interactive articles also explore the theory behind Splink.

The Office for National Statistics have written a case study about using Splink to link 2021 Census data to itself.

Installation

Splink supports Python 3.9+. To obtain the latest released version of Splink, you can install from PyPI using pip:

pip install splink

or, if you prefer, you can instead install Splink using conda:

conda install -c conda-forge splink
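
After installing, a quick check like the one below can confirm that the interpreter meets the Python 3.9+ requirement and report the installed Splink version. It uses only the standard library; the helper name `check_environment` is made up for this example.

```python
import sys
from importlib import metadata


def check_environment(package="splink", min_python=(3, 9)):
    """Return (python_ok, installed_version or None) for the given package."""
    python_ok = sys.version_info >= min_python
    try:
        version = metadata.version(package)
    except metadata.PackageNotFoundError:
        # The package is not installed in this environment
        version = None
    return python_ok, version


python_ok, splink_version = check_environment()
print(f"Python 3.9+: {python_ok}, splink version: {splink_version}")
```

A version of `None` means the package was not found in the active environment, which usually points to pip or conda having installed into a different interpreter.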

Installing Splink for Specific Backends

For projects requiring specific backends, Splink offers optional installations for Spark, Athena, and PostgreSQL. These can be installed by appending the backend name in brackets to the pip install command:

pip install 'splink[{backend}]'

Backend-specific installation commands:

Spark

pip install 'splink[spark]'

Athena

pip install 'splink[athena]'

PostgreSQL

pip install 'splink[postgres]'

Quickstart

The following code demonstrates how to estimate the parameters of a deduplication model, use it to identify duplicate records, and then use clustering to generate an estimated unique person ID.

For a more detailed tutorial, please see here.

import splink.comparison_library as cl
from splink import DuckDBAPI, Linker, SettingsCreator, block_on, splink_datasets

# Use DuckDB as the backend
db_api = DuckDBAPI()

# Built-in example dataset
df = splink_datasets.fake_1000

# Define how records are compared and which candidate pairs are generated
settings = SettingsCreator(
    link_type="dedupe_only",
    comparisons=[
        cl.JaroWinklerAtThresholds("first_name", [0.9, 0.7]),
        cl.JaroAtThresholds("surname", [0.9, 0.7]),
        cl.DateOfBirthComparison(
            "dob",
            input_is_string=True,
            datetime_metrics=["year", "month"],
            datetime_thresholds=[1, 1],
        ),
        cl.ExactMatch("city").configure(term_frequency_adjustments=True),
        cl.EmailComparison("email"),
    ],
    blocking_rules_to_generate_predictions=[
        block_on("first_name"),
        block_on("surname"),
    ]
)

linker = Linker(df, settings, db_api)

# Estimate the model parameters
linker.training.estimate_probability_two_random_records_match(
    [block_on("first_name", "surname")],
    recall=0.7,
)
linker.training.estimate_u_using_random_sampling(max_pairs=1e6)
linker.training.estimate_parameters_using_expectation_maximisation(
    block_on("first_name", "surname")
)
linker.training.estimate_parameters_using_expectation_maximisation(block_on("dob"))

# Score candidate pairs, then cluster them at a match probability of 0.95
pairwise_predictions = linker.inference.predict(threshold_match_weight=-10)
clusters = linker.clustering.cluster_pairwise_predictions_at_threshold(
    pairwise_predictions, 0.95
)

df_clusters = clusters.as_pandas_dataframe(limit=5)
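
The quickstart passes a match weight threshold to `predict` and a match probability threshold to the clustering step. Assuming the usual relationship in which the match weight is the base-2 logarithm of the odds of a match, the two scales can be converted as sketched below; the helper names are illustrative and not part of the Splink API.

```python
import math


def weight_to_probability(match_weight: float) -> float:
    """Convert a match weight (log2 odds) to a match probability."""
    odds = 2.0 ** match_weight
    return odds / (1.0 + odds)


def probability_to_weight(probability: float) -> float:
    """Convert a match probability to a match weight (log2 odds)."""
    return math.log2(probability / (1.0 - probability))


# Thresholds used in the quickstart above
print(f"weight -10 -> probability {weight_to_probability(-10):.6f}")
print(f"probability 0.95 -> weight {probability_to_weight(0.95):.2f}")
```

Under this assumption, a weight threshold of -10 keeps almost every scored pair, so the filtering that matters happens at the 0.95 probability threshold used for clustering.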

Videos

- PyData Global 2024 talk

- An introductory presentation on Splink

- An introduction to the Splink Comparison Viewer dashboard

Support

To find the best place to ask a question, report a bug or get general advice, please refer to our Guide.

Awards

🥇 Civil Service Awards 2025: Innovation category - Winner

🥇 Civil Service Awards 2025: The Excellence In Delivery Award was won by a dashboard powered by Splink.

🥇 OpenUK Awards 2025: Open data category - Winner

🥈 Civil Service Awards 2023: Best Use of Data, Science, and Technology - Runner up

🥇 Analysis in Government Awards 2022: People's Choice Award - Winner

🥈 Analysis in Government Awards 2022: Innovative Methods - Runner up

🥇 Analysis in Government Awards 2020: Innovative Methods - Winner

🥇 MoJ Data and Analytical Services Directorate (DASD) Awards 2020: Innovation and Impact - Winner

Citation

If you use Splink in your research, please cite as follows:

@article{Linacre_Lindsay_Manassis_Slade_Hepworth_2022,

title = {Splink: Free software for probabilistic record linkage at scale.},

author = {Linacre, Robin and Lindsay, Sam and Manassis, Theodore and Slade, Zoe and Hepworth, Tom and Kennedy, Ross and Bond, Andrew},

year = 2022,

month = {Aug.},

journal = {International Journal of Population Data Science},

volume = 7,

number = 3,

doi = {10.23889/ijpds.v7i3.1794},

url = {https://ijpds.org/article/view/1794},

}

Acknowledgements

We are very grateful to ADR UK (Administrative Data Research UK) for providing the initial funding for this work as part of the Data First project.

We are extremely grateful to professors Katie Harron, James Doidge and Peter Christen for their expert advice and guidance in the development of Splink. We are also very grateful to colleagues at the UK's Office for National Statistics for their expert advice and peer review of this work. Any errors remain our own.

Related Repositories

While Splink is a standalone package, there are a number of repositories in the Splink ecosystem:

- splink_scalaudfs contains the code to generate User Defined Functions in Scala, which are then callable in Spark.

- splink_datasets contains datasets that can be installed automatically as part of Splink through the in-built datasets functionality.

- splink_synthetic_data contains code to generate synthetic data.


File details

Details for the file splink-4.0.12.tar.gz.

File metadata

- Download URL: splink-4.0.12.tar.gz

- Upload date:

- Size: 2.1 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.9.18 {"installer":{"name":"uv","version":"0.9.18","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true}

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 66a7a08e354d174cc9f126a74f3811080804fbc774506952fe618dad80f1900a |
| MD5 | dbfcdcfe51bbc2ede4f1969745f47ea0 |
| BLAKE2b-256 | 195f99942822c76a5672cef2a43bbff2bcf1dab3cf4b0721245670ff4417ed15 |

File details

Details for the file splink-4.0.12-py3-none-any.whl.

File metadata

- Download URL: splink-4.0.12-py3-none-any.whl

- Upload date:

- Size: 2.2 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.9.18 {"installer":{"name":"uv","version":"0.9.18","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true}

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 7d415e532596e5a696b7b622701e86a6ec6cc3b8d406b725c0aefc9479a7b118 |
| MD5 | 5daa3c4245b4b00f707c800ec9bf2ecb |
| BLAKE2b-256 | 30b3131726f43cbbc563fc592096f2625565db8247117e03e7b64e63e206804b |
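
To check a downloaded file against the SHA256 digests listed above, a sketch using only the standard library could look like this. The local file path is an assumption; adjust it to wherever the file was saved.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Compute the SHA256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


# Digest copied from the file details for splink-4.0.12.tar.gz
expected = "66a7a08e354d174cc9f126a74f3811080804fbc774506952fe618dad80f1900a"
download = Path("splink-4.0.12.tar.gz")  # assumed download location
if download.exists():
    print("OK" if sha256_of(download) == expected else "MISMATCH")
```

Reading in chunks keeps memory use flat regardless of file size; the same function works for the wheel by swapping in its file name and digest.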