DeepAgents implementation using Strands Agents SDK - creating planning-capable agents with sub-agents and virtual file systems

Strands Deep Agents

Build sophisticated AI agent systems with planning, sub-agent delegation, and multi-step workflows. Built on the Strands Agents SDK.

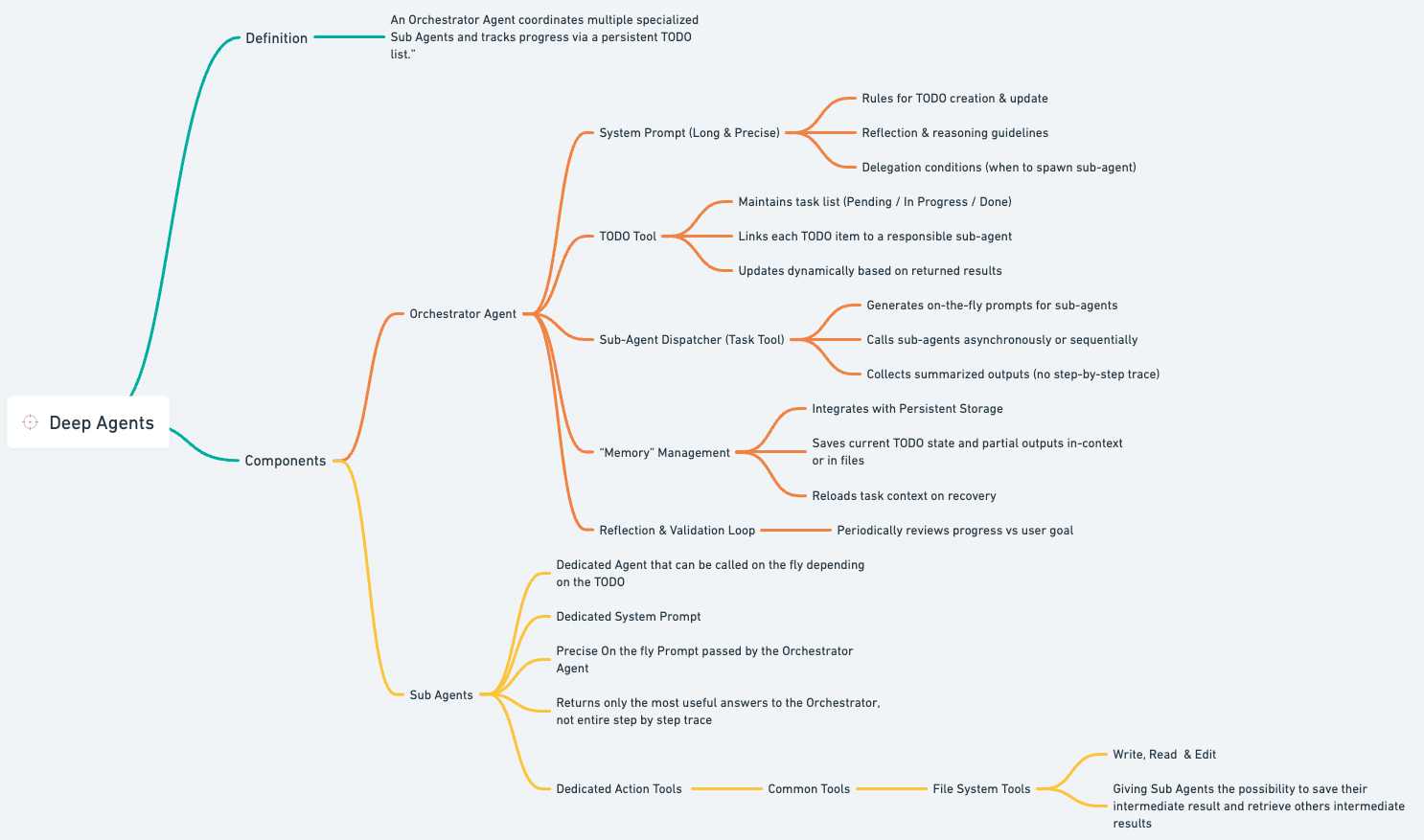

What is Deep Agents?

Deep Agents enables AI systems to handle complex, multi-step tasks through:

- 🧠 Strategic Planning - Break down complex tasks into actionable TODOs

- 🤝 Sub-agent Orchestration - Delegate specialized tasks to focused agents

- 📁 Context Management - Efficient file-based operations to keep context lean

- 💾 Session Persistence - Resume work across sessions

- 🔬 Research & Analysis - Multi-perspective investigation and synthesis

Featured Example: DeepSearch - A production-ready research agent demonstrating sub-agent orchestration, parallel research, and intelligent synthesis.

Check the related article for more details.

Installation

# Using UV

uv add strands-deep-agents

# Using pip

pip install strands-deep-agents

Requirements: Python >= 3.12

Quick Start

Basic Agent

from strands_deep_agents import create_deep_agent

agent = create_deep_agent(

instructions="You are a helpful coding assistant.",

model="global.anthropic.claude-sonnet-4-5-20250929-v1:0"

)

result = agent("Create a Python calculator module with add, subtract, multiply, divide functions")

print(result)

The agent will automatically:

- Plan the task using TODOs

- Create the file with proper structure

- Add docstrings and examples

Check Task Progress

# View the agent's planned tasks

for todo in agent.state.get("todos", []):
    print(f"[{todo['status']}] {todo['content']}")

Output:

[completed] Plan calculator module structure

[completed] Create calculator.py file

[completed] Add arithmetic functions

[completed] Add docstrings and examples
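To get a quick overview of progress rather than a full listing, the TODO entries can be tallied by status. The sketch below is only an illustration: it works on sample data shaped like the entries printed above (dicts with `status` and `content` keys), and the `in_progress` and `pending` status values are assumptions, since only `completed` appears in the output shown here.

```python
from collections import Counter

# Sample data shaped like the TODO entries printed above.
# The "in_progress" and "pending" statuses are assumed for illustration.
sample_todos = [
    {"status": "completed", "content": "Plan calculator module structure"},
    {"status": "completed", "content": "Create calculator.py file"},
    {"status": "in_progress", "content": "Add arithmetic functions"},
    {"status": "pending", "content": "Add docstrings and examples"},
]


def summarize_todos(todos):
    """Return a mapping of status -> number of TODOs with that status."""
    return dict(Counter(todo["status"] for todo in todos))


def pending_items(todos):
    """Return the content of every TODO that is not yet completed."""
    return [todo["content"] for todo in todos if todo["status"] != "completed"]


print(summarize_todos(sample_todos))
print(pending_items(sample_todos))
```

With a real agent, the same helpers could be applied to the list read from the agent state.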

DeepSearch: Advanced Research Agent

DeepSearch demonstrates the full power of Deep Agents through a production-ready research agent system with intelligent orchestration and parallel research capabilities.

This example works with any internet search tool, such as [Linkup](https://app.linkup.so/) or the Tavily tool.

Architecture

DeepSearch uses a three-tier agent architecture:

- Research Lead Agent - Strategic planning, task decomposition, and synthesis

- Research Sub-agents - Parallel, focused investigation on specific topics

- Citations Agent - Post-processing to add proper source references

Key Features

- Intelligent Task Decomposition: Analyzes queries as depth-first (multiple perspectives) or breadth-first (independent sub-questions)

- Parallel Research: Deploys multiple sub-agents simultaneously for efficient information gathering

- Context Management: Sub-agents write findings to files, keeping context lean

- Source Citation: Automated citation agent adds proper references to final reports

- Session Persistence: Resume research across sessions

Running DeepSearch

cd examples/deepsearch

# Basic usage with default prompt

python agent.py

# Custom research prompt

python agent.py -p "Research the impact of AI on healthcare in 2025"
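A `-p` option like the one above is commonly handled with `argparse`. The following is a minimal sketch of that pattern, not the actual contents of `examples/deepsearch/agent.py`; the `--prompt` long form, the `DEFAULT_PROMPT` value, and the `parse_args` helper are hypothetical.

```python
import argparse

# Hypothetical default; the real script's default prompt is not shown here.
DEFAULT_PROMPT = "Research AI safety in 2025"


def parse_args(argv=None):
    """Parse command-line arguments; argv=None falls back to sys.argv."""
    parser = argparse.ArgumentParser(description="Run a research agent.")
    parser.add_argument(
        "-p", "--prompt",
        default=DEFAULT_PROMPT,
        help="Research prompt to run",
    )
    return parser.parse_args(argv)


# Parse an explicit argument list so the example does not depend on sys.argv.
args = parse_args(["-p", "Research the impact of AI on healthcare in 2025"])
print(f"Running research for: {args.prompt}")
```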

DeepSearch Implementation

from strands_deep_agents import create_deep_agent, SubAgent

from strands_tools import tavily, file_read, file_write

# Research sub-agent - performs focused investigations

research_subagent = SubAgent(

name="research_subagent",

description=(

"Specialized research agent for focused investigations. "

"Researches specific questions, gathers facts, analyzes sources. "

"Has access to web search. Writes findings to files."

),

tools=[tavily, file_write],

prompt="You are a thorough researcher. Gather comprehensive, accurate information..."

)

# Citations agent - adds source references

citations_agent = SubAgent(

name="citations_agent",

description="Adds proper citations to research reports.",

tools=[file_read, file_write],

prompt="Add citations to research text using provided sources..."

)

# Create the research lead agent

agent = create_deep_agent(

instructions="""

You are an expert research lead. Your role:

1. Analyze the research question

2. Create a research plan

3. Deploy research sub-agents for focused investigations

4. Synthesize findings into a comprehensive report

5. Call citations agent to add references

""",

subagents=[research_subagent, citations_agent],

tools=[file_read, file_write]

)

# Execute research

result = agent("""

Research AI safety in 2025:

1. Main challenges and concerns

2. Leading organizations and initiatives

Create a comprehensive report with executive summary.

""")

How DeepSearch Works

- Planning Phase: Lead agent analyzes the query and creates a research plan

- Parallel Research: Multiple research sub-agents investigate different aspects simultaneously

- File-Based Context: Sub-agents write findings to ./research_findings_*.md files

- Synthesis: Lead agent reads all findings and synthesizes a comprehensive report

- Citations: Citations agent adds proper source references to the final report
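The flow above (parallel workers, one findings file per worker, then a synthesis pass over the files) can be sketched with the standard library alone. This is a conceptual illustration under stated assumptions, not how Deep Agents is implemented: `fake_research` stands in for a real sub-agent, and `run_research` and `synthesize` are hypothetical helper names.

```python
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def fake_research(topic: str) -> str:
    """Stand-in for a research sub-agent; a real one would call a model and a search tool."""
    return f"# Findings: {topic}\n\nPlaceholder notes about {topic}.\n"


def run_research(topics, workdir: Path):
    """Research topics in parallel, writing one findings file per topic."""
    def work(indexed):
        index, topic = indexed
        path = workdir / f"research_findings_{index}.md"
        path.write_text(fake_research(topic), encoding="utf-8")
        return path

    with ThreadPoolExecutor(max_workers=4) as pool:
        # pool.map returns results in input order, so files stay numbered by topic.
        return list(pool.map(work, enumerate(topics, start=1)))


def synthesize(paths) -> str:
    """Read every findings file and join them into a single report."""
    sections = [Path(p).read_text(encoding="utf-8") for p in paths]
    return "# Report\n\n" + "\n".join(sections)


with tempfile.TemporaryDirectory() as tmp:
    files = run_research(["AI safety challenges", "Leading organizations"], Path(tmp))
    report = synthesize(files)
    print([f.name for f in files])
```

Because each worker hands back only a file path, the coordinator's working context stays small until the synthesis step reads the files.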

Example Output Structure

Research findings written to: research_findings_1.md, research_findings_2.md, ...

Final report: ai_safety_2025_report.md

TODOs:

✅ Analyze research question and create plan

✅ Deploy subagent: AI safety challenges

✅ Deploy subagent: Leading organizations

✅ Deploy subagent: Recent initiatives

✅ Synthesize findings into report

✅ Add citations to report

Core Patterns

Sub-agent Delegation

Deep Agents excel at delegating specialized tasks:

from strands_deep_agents import create_deep_agent

subagents = [

{

"name": "researcher",

"description": "Conducts focused research on specific topics",

"prompt": "You are a thorough researcher. Gather comprehensive information."

},

{

"name": "analyst",

"description": "Analyzes data and identifies patterns",

"prompt": "You are a data analyst. Find insights and patterns."

}

]

agent = create_deep_agent(

instructions="You are a research coordinator.",

subagents=subagents

)
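When sub-agents are defined as plain dictionaries, a small check before creating the agent can catch typos early. The sketch below assumes that `name`, `description`, and `prompt` are the required keys (they are the ones used in the example above) and that names must be unique; `validate_subagents` is a hypothetical helper, not part of the package.

```python
# Assumed required keys, based on the dictionary example above.
REQUIRED_KEYS = ("name", "description", "prompt")


def validate_subagents(subagents):
    """Return a list of problems; an empty list means the configs look fine."""
    problems = []
    seen = set()
    for index, config in enumerate(subagents):
        missing = [key for key in REQUIRED_KEYS if not config.get(key)]
        if missing:
            problems.append(f"subagent #{index}: missing {', '.join(missing)}")
        name = config.get("name")
        if name in seen:
            problems.append(f"subagent #{index}: duplicate name '{name}'")
        elif name:
            seen.add(name)
    return problems


example = [
    {"name": "researcher", "description": "Conducts research", "prompt": "Research."},
    {"name": "researcher", "description": "Analyzes data"},
]
print(validate_subagents(example))
```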

Session Persistence

Resume work across sessions:

from strands.session.file_session_manager import FileSessionManager
from strands_deep_agents import create_deep_agent

session_manager = FileSessionManager(

session_id="project-xyz",

storage_dir="./sessions"

)

agent = create_deep_agent(

instructions="You are a research assistant.",

session_manager=session_manager

)

# First session

agent("Start researching quantum computing")

# Later - conversation history and TODOs restored

agent("Continue where we left off")
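Conceptually, file-based persistence means writing the agent's state to disk under a session ID and reading it back on the next run. The sketch below illustrates that idea with JSON files; it is not the on-disk format `FileSessionManager` uses, and `save_state`, `load_state`, and the state layout are assumptions made for the example.

```python
import json
import tempfile
from pathlib import Path


def save_state(storage_dir: Path, session_id: str, state: dict) -> Path:
    """Write the state for one session to <storage_dir>/<session_id>.json."""
    storage_dir.mkdir(parents=True, exist_ok=True)
    path = storage_dir / f"{session_id}.json"
    path.write_text(json.dumps(state, indent=2), encoding="utf-8")
    return path


def load_state(storage_dir: Path, session_id: str) -> dict:
    """Load a session's state, or return an empty state for a new session."""
    path = storage_dir / f"{session_id}.json"
    if not path.exists():
        return {"messages": [], "todos": []}
    return json.loads(path.read_text(encoding="utf-8"))


with tempfile.TemporaryDirectory() as tmp:
    store = Path(tmp) / "sessions"
    # First session: start from an empty state and record some work.
    first = load_state(store, "project-xyz")
    first["messages"].append("Start researching quantum computing")
    first["todos"].append({"status": "pending", "content": "Survey qubit technologies"})
    save_state(store, "project-xyz", first)
    # Later session: the same ID restores messages and TODOs.
    restored = load_state(store, "project-xyz")
    print(restored["messages"])
```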

Custom Tools

Extend agents with your own tools:

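from strands import tool
from strands_deep_agents import create_deep_agent

@tool
def web_search(query: str) -> str:
    """Search the web for information."""
    # Replace this placeholder with a call to your search backend
    return f"No search backend configured for query: {query}"

agent = create_deep_agent(
    instructions="You are a research assistant.",
    tools=[web_search]
)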

API Reference

create_deep_agent()

Create a deep agent with planning and sub-agent capabilities.

from strands_deep_agents import create_deep_agent

agent = create_deep_agent(

instructions="System prompt for the agent",

model=None, # Default: Claude Sonnet 4

subagents=None, # List of SubAgent configs

tools=None, # Additional custom tools

session_manager=None, # For persistence

disable_parallel_tool_calling=False, # Force sequential execution

**kwargs # Additional Agent params

)

SubAgent

Define specialized sub-agents:

from strands_deep_agents import SubAgent

subagent = SubAgent(

name="unique_name",

description="When to use this agent (helps main agent decide)",

prompt="System prompt for sub-agent",

tools=[...], # Optional: specific tools

model=model # Optional: override model

)

Async Support

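import asyncio
from strands_deep_agents import async_create_deep_agent

async def main():
    # await needs a coroutine outside notebooks and async REPLs
    agent = await async_create_deep_agent(
        instructions="You are a research assistant."
    )
    result = await agent.invoke_async("Your task")
    print(result)

asyncio.run(main())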
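One reason to use the async interface is to run several independent tasks concurrently. The sketch below shows the general `asyncio.gather` pattern with a stand-in coroutine; `fake_agent_call` is hypothetical and only simulates latency, so whether real agent instances can safely be invoked concurrently is not something this example demonstrates.

```python
import asyncio


async def fake_agent_call(task: str) -> str:
    """Stand-in for an awaitable agent invocation; sleeps to simulate latency."""
    await asyncio.sleep(0.01)
    return f"done: {task}"


async def run_all(tasks):
    """Run all tasks concurrently; results come back in the order of `tasks`."""
    return await asyncio.gather(*(fake_agent_call(t) for t in tasks))


results = asyncio.run(run_all(["task A", "task B"]))
print(results)
```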

Configuration

Environment Variables

# AWS Bedrock (default provider)

export AWS_REGION=us-east-1

export AWS_ACCESS_KEY_ID=your_key

export AWS_SECRET_ACCESS_KEY=your_secret

# Optional: Skip tool consent prompts

export BYPASS_TOOL_CONSENT=true
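A short preflight check can report which of these variables are unset before an agent is created. This is a sketch with hypothetical helper names (`missing_env_vars`, `tool_consent_bypassed`). A missing variable is not necessarily an error, because AWS credentials can also come from a shared credentials file or an IAM role.

```python
import os

# The variables listed above; credentials may also come from other AWS sources.
REQUIRED_VARS = ("AWS_REGION", "AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY")


def missing_env_vars(env=None):
    """Return the names of listed variables that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name)]


def tool_consent_bypassed(env=None):
    """Return True when BYPASS_TOOL_CONSENT is set to 'true' (case-insensitive)."""
    env = os.environ if env is None else env
    return env.get("BYPASS_TOOL_CONSENT", "").strip().lower() == "true"


# Use an explicit mapping so the example does not depend on the real environment.
example_env = {"AWS_REGION": "us-east-1", "BYPASS_TOOL_CONSENT": "true"}
print(missing_env_vars(example_env))
print(tool_consent_bypassed(example_env))
```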

Model Options

# Use AWS Bedrock models (default)

agent = create_deep_agent(

model="global.anthropic.claude-sonnet-4-5-20250929-v1:0"

)

# Custom model configuration

from strands.models import BedrockModel

custom_model = BedrockModel(
    model_id="anthropic.claude-3-5-sonnet-20241022-v2:0",
    region_name="us-east-1"
)

agent = create_deep_agent(model=custom_model)

Additional Examples

The examples/ directory contains more patterns:

# Basic agent usage

python examples/basic_usage.py

# Sub-agent delegation patterns

python examples/sub_agents.py

# Session persistence

python examples/session_persistence.py

# DeepSearch - Advanced research agent (recommended)

cd examples/deepsearch

python agent.py

Best Practices

- Clear Instructions - Be specific about agent roles and expectations

- Strategic Sub-agents - Use sub-agents for specialized, focused tasks

- Context Management - Use file operations to keep context lean

- Session Persistence - Enable for long-running or resumable tasks

- Prompt for Planning - Encourage agents to create plans before execution

Links

- GitHub: https://github.com/lemopian/strands-deep-agents

- Strands Agents SDK: https://github.com/strands-ai/strands-agents

- Article: Deep Agents Using Strands

- Inspiration: https://github.com/langchain-ai/deepagents

License

MIT License - see LICENSE file for details.

Built with ❤️ by PA

