Chain small language models to outperform large ones — runs locally on 8GB RAM

Project description

S.U.T.R.A

███████╗ ██╗ ██╗ ████████╗ ██████╗ █████╗

██╔════╝ ██║ ██║ ╚══██╔══╝ ██╔══██╗ ██╔══██╗

███████╗ ██║ ██║ ██║ ██████╔╝ ███████║

╚════██║ ██║ ██║ ██║ ██╔══██╗ ██╔══██║

███████║ ╚██████╔╝ ██║ ██║ ██║ ██║ ██║

╚══════╝ ╚═════╝ ╚═╝ ╚═╝ ╚═╝ ╚═╝ ╚═╝

Structured Universal Transfer via Retrieval Adaptation by Shubham Kumar

ok so idk how to explain this properly but basically i got tired of paying for API calls and also tired of my laptop dying trying to run a 70B model so i built this thing.

the idea came from a weird place — i was reading about how LLMs do in-context learning and thought, what if instead of trying to make one model smarter, you just... make multiple small models argue with each other and then have a slightly bigger one read the argument and write the answer. turns out that actually works. not always. but enough.

the numbers first

because that's what you're actually here for.

ran the full HumanEval benchmark. 164 python coding problems. standard eval everyone uses.

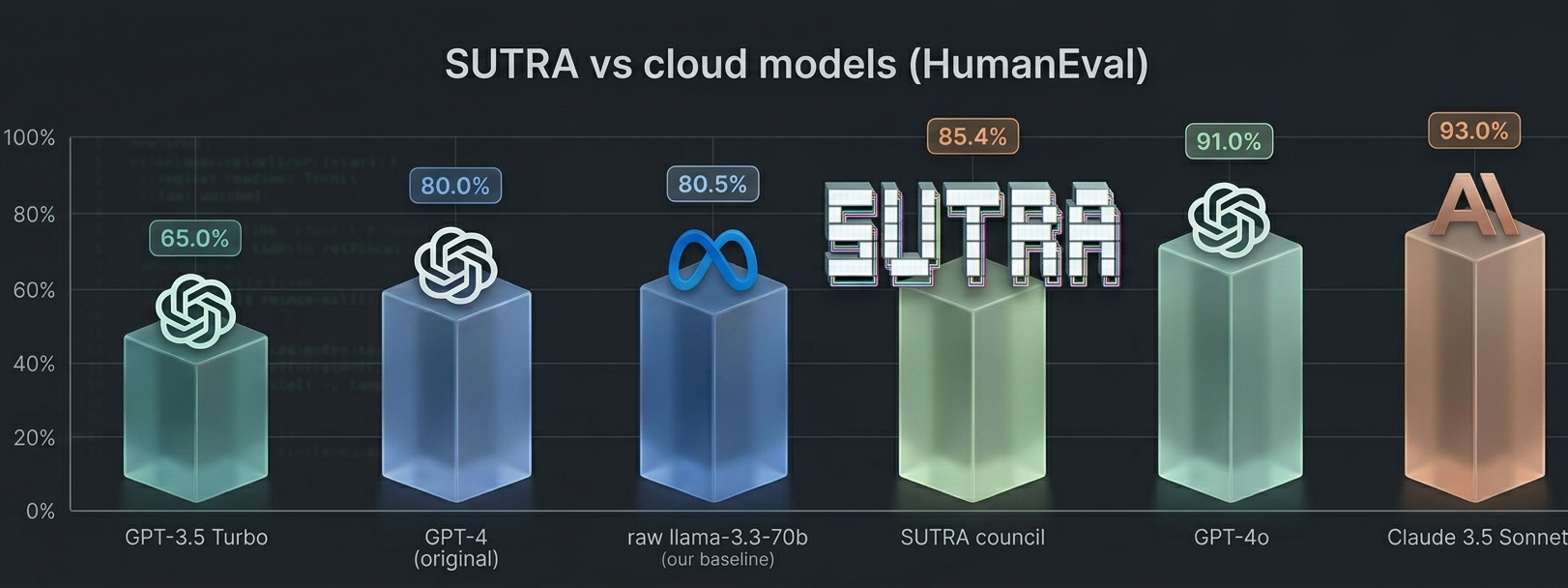

SUTRA vs cloud models (HumanEval pass@1)

SUTRA uses

llama-3.3-70bas the backbone + twollama-3.1-8bcouncil passes. cloud model scores from published benchmarks. this is not a parameter-count comparison — SUTRA costs 4 inference calls vs 1, but runs on consumer hardware with no API subscription.

SUTRA sits above GPT-4 original. on a machine with 8GB RAM. running entirely offline.

full benchmark breakdown

| method | pass@1 | solved | delta |

|---|---|---|---|

raw llama-3.3-70b (baseline) |

0.805 | 132/164 | — |

| SUTRA council | 0.854 | 140/164 | +8 problems |

council only ran on the 32 problems the baseline failed. rescued 8 of them. zero regressions — if baseline passed it, council never ran on it so it literally cannot make things worse.

rescued: HumanEval/8 /32 /64 /65 /83 /86 /93 /121

hard problems specifically (20 problems, HumanEval 32–163 range)

this is where it actually matters. easy problems don't need council — the large model handles them fine alone.

| method | pass@1 | solved |

|---|---|---|

raw llama-3.3-70b |

0.600 | 12/20 |

| SUTRA council | 0.800 | 16/20 |

| delta | +0.200 | +4 problems |

+20% on hard problems. 25% rescue rate on failures. that's the real signal.

easy problems (same 20-problem test)

| method | pass@1 | solved |

|---|---|---|

raw llama-3.3-70b |

0.900 | 18/20 |

| SUTRA council | 0.800 | 16/20 |

| delta | -0.100 | -2 problems |

council makes easy problems slightly worse. the 8b critique adds noise when the 70b already knows the answer. that's why /quick exists — skip council when you don't need it.

raw data in benchmark/sutra_full_results.json.

what even is this

it's a pipeline. four stages. runs entirely locally on ollama. never puts two models in RAM at the same time because 8GB doesn't go very far.

your query

↓

small model (temp 0.3) → answer A

small model (temp 0.8) → answer B ← same model, different temp = genuinely different answer

small model (temp 0.3) → critiques both

large model (temp 0.2) → reads everything, writes final answer

the temperature thing matters. running the same model twice at different temps gives you one conservative answer and one exploratory one. the critique finds where they disagree or where both went wrong. the large model synthesizes something that (hopefully) avoids both failure modes.

is this just fancy prompting? kind of. but it's fancy prompting with a real benchmark behind it so.

is it fast

lmaooo no. on CPU with 8GB RAM you're looking at 5-10 minutes per query in council mode. on a machine with a GPU it drops to like 1-2 minutes. on Groq's free API it runs in ~30 seconds.

the architecture is sound — the slowness is a hardware constraint not a design flaw. /quick mode skips council and just asks the large model directly when you need speed.

install

you need Ollama running. that's it.

git clone https://github.com/metalheadshubham/SUTRA

cd SUTRA

pip install -r requirements.txt

ollama pull qwen2.5-coder:3b

ollama pull qwen2.5-coder:7b

python -m agent_handoff

Python 3.10+. 8GB RAM minimum. works on Windows, Mac, Linux (Mac is faster bc Apple Silicon).

models

default setup i benchmarked:

- small model (stages 1, 2, 3):

qwen2.5-coder:3b - large model (synthesis):

qwen2.5-coder:7b

if you have more RAM or a GPU:

deepseek-r1:8bas large — actual chain-of-thought reasoning, significantly better on hard problems but very slow on CPUllama3.1:8bas large — good general purpose alternative

the CLI auto-detects your Ollama models and recommends roles based on parameter count.

commands

/quick <query> skip council, ask large model directly (fast path)

/council <query> force council mode explicitly

/save save last output — asks you where

/load <file> attach a file to context for next query

/unload remove attached files

/project see files saved + loaded this session

/logs last 5 runs with timing

/plugins loaded plugins + hooks

/install <url> install a plugin from a .py URL

/help all commands

multiline input — type """ to open a block, paste whatever, """ to send. good for pasting code you want fixed.

workspace

load files into context so the council reads them without you copy-pasting anything.

❯ implement a FastAPI router for user auth

❯ /save

Save to (path or filename): src/auth.py

✓ Saved to C:\project\src\auth.py · registered in workspace

❯ /load auth.py

✓ Loaded auth.py (~340 tokens) · attached to context

❯ add rate limiting to this

[council now sees auth.py automatically]

if the file is large (>800 tokens) it warns you to switch to a bigger model before continuing.

plugins

drop a .py file into ~/.sutra/plugins/. loaded on startup. hooks:

def pre_query(query): ...

def post_answer_a(text): ...

def post_answer_b(text): ...

def post_critique(text): ...

def post_synthesis(text): ...

built-in logger saves every run to ~/.sutra/logs/YYYY-MM-DD.jsonl automatically.

roadmap

- difficulty router — auto-detect when to use council vs quick

- MCP server — expose council as an MCP tool so Claude/Cursor can call it

- math + reasoning benchmarks — HumanEval is coding only, want GSM8K

- ablation study — prove the critique step is doing something (vs just calling twice)

- same benchmark on fully local models (no Groq)

project structure

agent_handoff/

├── cli.py # terminal UI — where you spend your time

├── handoff.py # AgentHandoff (old) + CouncilHandoff (new)

├── templates.py # prompts for each council stage

├── protocol.py # HandoffPacket, CouncilResult dataclasses

├── parser.py # extracts structured output from model responses

├── cache.py # SHA-256 keyed cache with TTL

└── utils.py # helpers

benchmark/

└── sutra_full_results.json # raw data behind the numbers above

tests/ # 56 tests, all pass

license

MIT. do whatever.just give me the credits

shit works.

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file sutra_llm-0.2.1.tar.gz.

File metadata

- Download URL: sutra_llm-0.2.1.tar.gz

- Upload date:

- Size: 47.7 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.11

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

cdb5dcb194a50a5a2e8679a63b9ff251daa06d6bceea823e24b77d715ffd80e6

|

|

| MD5 |

188e3932979edd9a2c938c4abf1df61f

|

|

| BLAKE2b-256 |

e9826e06a92ed7b21475199e5ee1d9985a4b184ef4f06146deee3942c40079e8

|

File details

Details for the file sutra_llm-0.2.1-py3-none-any.whl.

File metadata

- Download URL: sutra_llm-0.2.1-py3-none-any.whl

- Upload date:

- Size: 43.1 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.11

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

efe8b58548bf0cc4bb1ef4ba9147d65d4dfcc8a697993a3b075cfe6ca5f7c6fb

|

|

| MD5 |

8acb7e8d980b61de2394345e32b7ad02

|

|

| BLAKE2b-256 |

da476efe618d871b023d6a7a7aef7d765e1809ee8f3bde42a516f08ce3bdfcee

|