OpenPaw is a **personal assistant** that runs in your own environment. It talks to you over multiple channels (DingTalk, Feishu, QQ, Discord, iMessage, etc.) and runs scheduled tasks according to your configuration. **What it can do is driven by Skills — the possibilities are open-ended.** Built-in skills include cron, news digest, file reading, and more; you can add custom skills. All data and tasks run on your machine; no third-party hosting. Derived from CoPaw (Apache 2.0).


OpenPaw

Works for you, grows with you.

Derived from CoPaw (Apache 2.0). Modified for redistribution.

Your Personal AI Assistant; easy to install, deploy on your own machine or on the cloud; supports multiple chat apps with easily extensible capabilities.

Core capabilities:

Every channel — DingTalk, Feishu, QQ, Discord, iMessage, and more. One assistant, connect as you need.

Under your control — Memory and personalization under your control. Deploy locally or in the cloud; scheduled reminders to any channel.

Skills — Built-in cron; custom skills in your workspace, auto-loaded. No lock-in.

What you can do

- Social: daily digest of hot posts (Xiaohongshu, Zhihu, Reddit), Bilibili/YouTube summaries.

- Productivity: newsletter digests to DingTalk/Feishu/QQ, contacts from email/calendar.

- Creative: describe your goal, run overnight, get a draft next day.

- Research: track tech/AI news, personal knowledge base.

- Desktop: organize files, read/summarize docs, request files in chat.

- Explore: combine Skills and cron into your own agentic app.

News

[2026-03-06] We released v0.0.5! See the v0.0.5 Release Notes for the full changelog.

- FEAT: Channel management; Twilio voice channel; DeepSeek Reasoner support; daemon mode; agent interruption API; version update notifications.

- PERF: Memory system upgrade (reme-ai 0.3.0.3); console UI improvements (request cancellation, collapsible sidebar); optional channel lazy loading; Windows installation script.

- BUGFIX: Configuration persistence for Docker; Ollama base URL; channel fixes (DingTalk, Feishu, Telegram); Windows compatibility; MCP client stability; shell timeout hang fix.

- DOCS: Model configuration guide; Docker + Ollama guide; Japanese README; release notes system; improved channel guide.

- COMM: Special thanks to all new contributors: @qoli, @qbc2016, @yunlzheng, @BlueSkyXN, @sidonsoft, @lishengzxc, @pikaxinge, @linshengli, @eltociear, @liuxiaopai-ai, @Leirunlin, @pan-x-c, @garyzhang99, @celestialhorse51D, @wwx814, @nszhsl, @DavdGao, @zhangckcup.

[2026-03-02] We released v0.0.4! See the v0.0.4 Release Notes for the full changelog.

Table of Contents

Recommended reading:

- I want to run OpenPaw in 3 commands: Quick Start → open Console in browser.

- I want to chat in DingTalk / Feishu / QQ: Quick Start → Channels.

- I don’t want to install Python: One-line install handles Python automatically, or use ModelScope one-click for cloud.

- News

- Quick Start

- API Key

- Local Models

- Documentation

- FAQ

- Roadmap

- Contributing

- Install from source

- Why OpenPaw?

- Built by

- License

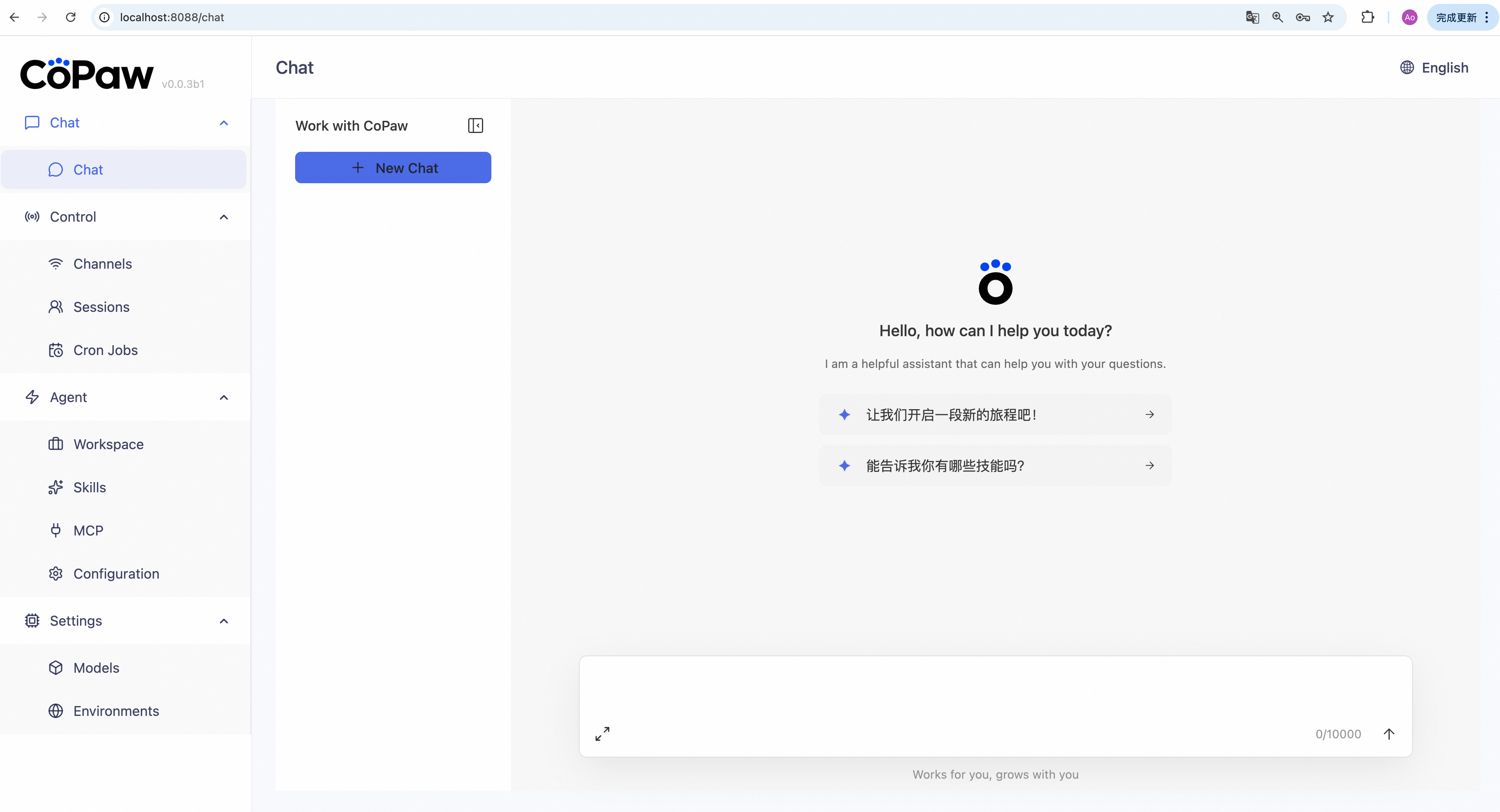

Quick Start

pip install (recommended)

If you prefer managing Python yourself:

pip install openpaw

openpaw init --defaults

openpaw app

Then open http://127.0.0.1:8088/ in your browser for the Console (chat with OpenPaw, configure the agent). To talk in DingTalk, Feishu, QQ, etc., add a channel as described in the Channels docs.
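If the browser shows nothing, it can help to confirm that something is actually listening on the Console address. The following is a minimal sketch using only the Python standard library; the helper name `console_is_up` is hypothetical and is not part of OpenPaw. It only checks that an HTTP server answers, not that the answer comes from OpenPaw.

```python
import urllib.error
import urllib.request

# Default Console address from the Quick Start above.
CONSOLE_URL = "http://127.0.0.1:8088/"


def console_is_up(url: str = CONSOLE_URL, timeout: float = 2.0) -> bool:
    """Return True if an HTTP server answers at `url`, False otherwise."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server answered, just with an error status (e.g. 404).
        return True
    except (urllib.error.URLError, OSError):
        # Connection refused, timed out, or name resolution failed.
        return False


if __name__ == "__main__":
    print("Console reachable:", console_is_up())
```

A `False` result usually means `openpaw app` is not running or is bound to a different port.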

One-line install (beta, continuously improving)

No Python required — the installer handles everything:

macOS / Linux:

curl -fsSL https://openpaw.agentscope.io/install.sh | bash

To install with Ollama support:

curl -fsSL https://openpaw.agentscope.io/install.sh | bash -s -- --extras ollama

To install with multiple extras (e.g., Ollama + llama.cpp):

curl -fsSL https://openpaw.agentscope.io/install.sh | bash -s -- --extras ollama,llamacpp

Windows (CMD):

curl -fsSL https://openpaw.agentscope.io/install.bat -o install.bat && install.bat

Windows (PowerShell):

irm https://openpaw.agentscope.io/install.ps1 | iex

Note: The installer automatically checks whether uv is installed. If it is not, the installer attempts to download and configure it. If the automatic installation fails, follow the on-screen prompts or run `python -m pip install -U uv`, then rerun the installer.
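Before rerunning the installer, you may want to confirm whether `uv` is already visible on your PATH. This is a rough sketch of such a check, not the installer's own logic; `tool_version` is a hypothetical helper name, and it assumes the tool supports a `--version` flag (which `uv --version`, mentioned in the Windows notice in this README, does).

```python
import shutil
import subprocess
from typing import Optional


def tool_version(name: str) -> Optional[str]:
    """Return the output of `<name> --version`, or None if `name` is not on PATH."""
    path = shutil.which(name)
    if path is None:
        return None
    result = subprocess.run(
        [path, "--version"], capture_output=True, text=True, timeout=10
    )
    # Some tools print their version to stderr instead of stdout.
    return (result.stdout or result.stderr).strip()


if __name__ == "__main__":
    version = tool_version("uv")
    if version is None:
        print("uv not found; try: python -m pip install -U uv")
    else:
        print("uv found:", version)
```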

⚠️ Special Notice for Windows Enterprise LTSC Users

If you are using Windows LTSC or an enterprise environment governed by strict security policies, PowerShell may run in Constrained Language Mode, potentially causing the following issue:

If using CMD (.bat): Script executes successfully but fails to write to `Path`

The script completes file installation. Due to Constrained Language Mode, it cannot automatically update environment variables. Manually configure as follows:

- Locate the installation directory:

  - Check if `uv` is available: Enter `uv --version` in CMD. If a version number appears, only configure the OpenPaw path. If you receive the prompt `'uv' is not recognized as an internal or external command, operable program or batch file`, configure both paths.

  - uv path (choose one based on installation location; use if `uv` fails): Typically `%USERPROFILE%\.local\bin`, `%USERPROFILE%\AppData\Local\uv`, or the `Scripts` folder within your Python installation directory.

  - OpenPaw path: Typically located at `%USERPROFILE%\.openpaw\bin`.

- Manually add to the system's Path environment variable:

  - Press `Win + R`, type `sysdm.cpl` and press Enter to open System Properties.

  - Click “Advanced” -> “Environment Variables”.

  - Under “System variables”, locate and select `Path`, then click “Edit”.

  - Click “New”, enter both directory paths sequentially, then click OK to save.

If using PowerShell (.ps1): Script execution interrupted

Due to Constrained Language Mode, the script may fail to automatically download `uv`.

- Manually install uv: Refer to the GitHub Release to download `uv.exe` and place it in `%USERPROFILE%\.local\bin` or `%USERPROFILE%\AppData\Local\uv`; or ensure Python is installed and run `python -m pip install -U uv`.

- Configure `uv` environment variables: Add the `uv` directory and `%USERPROFILE%\.openpaw\bin` to your system's `Path` variable.

- Re-run the installation: Open a new terminal and execute the installation script again to complete the `OpenPaw` installation.

- Configure the `OpenPaw` environment variable: Add `%USERPROFILE%\.openpaw\bin` to your system's `Path` variable.
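After editing `Path` by hand, a quick way to see which of the directories from this notice are still missing is to compare them against the current value. The sketch below is illustrative only: `missing_from_path` is a hypothetical helper, it compares entries case-insensitively as Windows does, and it does not expand unexpanded `%VAR%` entries.

```python
import os
from typing import Iterable, List


def _normalize(directory: str) -> str:
    # Windows paths are case-insensitive; ignore case and trailing separators.
    return directory.strip().rstrip("\\/").lower()


def missing_from_path(
    required: Iterable[str], path_value: str, sep: str = ";"
) -> List[str]:
    """Return the directories in `required` that do not appear in `path_value`."""
    present = {_normalize(entry) for entry in path_value.split(sep) if entry.strip()}
    return [d for d in required if _normalize(d) not in present]


if __name__ == "__main__":
    home = os.environ.get("USERPROFILE", "")
    wanted = [
        os.path.join(home, ".openpaw", "bin"),  # OpenPaw path from the notice
        os.path.join(home, ".local", "bin"),    # one of the typical uv locations
    ]
    current = os.environ.get("PATH", "")
    for directory in missing_from_path(wanted, current, sep=os.pathsep):
        print("Not on Path:", directory)
```

Run it in a new terminal, since already-open terminals keep the old `Path` value.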

Once installed, open a new terminal and run:

openpaw init --defaults # or: openpaw init (interactive)

openpaw app

Install options

macOS / Linux:

# Install a specific version

curl -fsSL ... | bash -s -- --version 0.0.2

# Install from source (dev/testing)

curl -fsSL ... | bash -s -- --from-source

# With local model support

bash install.sh --extras llamacpp # llama.cpp (cross-platform)

bash install.sh --extras mlx # MLX (Apple Silicon)

bash install.sh --extras llamacpp,mlx

# Upgrade — just re-run the installer

curl -fsSL ... | bash

# Uninstall

openpaw uninstall # keeps config and data

openpaw uninstall --purge # removes everything

Windows (PowerShell):

# Install a specific version

irm ... | iex; .\install.ps1 -Version 0.0.2

# Install from source (dev/testing)

.\install.ps1 -FromSource

# With local model support

.\install.ps1 -Extras llamacpp # llama.cpp (cross-platform)

.\install.ps1 -Extras mlx # MLX

.\install.ps1 -Extras llamacpp,mlx

# Upgrade — just re-run the installer

irm ... | iex

# Uninstall

openpaw uninstall # keeps config and data

openpaw uninstall --purge # removes everything

Using Docker

Images are on Docker Hub (agentscope/openpaw). Image tags: latest (stable); pre (PyPI pre-release).

docker pull agentscope/openpaw:latest

docker run -p 127.0.0.1:8088:8088 -v openpaw-data:/app/working agentscope/openpaw:latest

Also available on Alibaba Cloud Container Registry (ACR) for users in China: agentscope-registry.ap-southeast-1.cr.aliyuncs.com/agentscope/openpaw (same tags).

Then open http://127.0.0.1:8088/ for the Console. Config, memory, and skills are stored in the openpaw-data volume. To pass API keys (e.g. DASHSCOPE_API_KEY), add -e VAR=value or --env-file .env to docker run.
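Options such as `-e` and `--env-file` must come before the image name in `docker run`. As a small sketch of how the command above could be assembled from a script, the hypothetical helper `docker_run_args` below builds the argument list using only the flags already shown in this section; it does not run Docker itself.

```python
import shlex
from typing import Dict, List, Optional

IMAGE = "agentscope/openpaw:latest"


def docker_run_args(
    env: Optional[Dict[str, str]] = None, env_file: Optional[str] = None
) -> List[str]:
    """Build the `docker run` argument list, placing options before the image."""
    args = [
        "docker", "run",
        "-p", "127.0.0.1:8088:8088",        # Console port, bound to localhost only
        "-v", "openpaw-data:/app/working",  # named volume for config, memory, skills
    ]
    for name, value in (env or {}).items():
        args += ["-e", f"{name}={value}"]
    if env_file:
        args += ["--env-file", env_file]
    args.append(IMAGE)
    return args


if __name__ == "__main__":
    # Print the command instead of running it, so it can be reviewed first.
    print(shlex.join(docker_run_args(env_file=".env")))
```

Passing the list to `subprocess.run` avoids shell quoting problems when a key contains special characters.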

Connecting to Ollama or other services on the host machine

Inside a Docker container, `localhost` refers to the container itself, not your host machine. If you run Ollama (or other model services) on the host and want OpenPaw in Docker to reach them, use one of these approaches:

Option A: Explicit host binding (all platforms):

docker run -p 127.0.0.1:8088:8088 --add-host=host.docker.internal:host-gateway -v openpaw-data:/app/working agentscope/openpaw:latest

Then in OpenPaw Settings → Models → Ollama, change the Base URL to `http://host.docker.internal:11434/v1` or your corresponding port.

Option B: Host networking (Linux only):

docker run --network=host -v openpaw-data:/app/working agentscope/openpaw:latest

No port mapping (`-p`) is needed; the container shares the host network directly. Note that all container ports are exposed on the host, which may cause conflicts if the port is already in use.
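To make the Option A rewrite concrete: a Base URL that points at the loopback address on the host needs its hostname swapped for `host.docker.internal` when used from inside the container. The sketch below illustrates that transformation with the standard library; `to_container_url` is a hypothetical helper, not something OpenPaw provides, and it drops any user:password part of the URL.

```python
from urllib.parse import urlsplit, urlunsplit

# Hostnames that mean "this machine" and therefore point at the container itself.
LOOPBACK_HOSTS = {"localhost", "127.0.0.1", "::1"}


def to_container_url(url: str, host_alias: str = "host.docker.internal") -> str:
    """Rewrite a loopback URL so it reaches the host from inside a container."""
    parts = urlsplit(url)
    if parts.hostname not in LOOPBACK_HOSTS:
        return url  # already points somewhere other than loopback
    netloc = host_alias if parts.port is None else f"{host_alias}:{parts.port}"
    return urlunsplit(
        (parts.scheme, netloc, parts.path, parts.query, parts.fragment)
    )


if __name__ == "__main__":
    print(to_container_url("http://localhost:11434/v1"))
```

With Option B (host networking) no rewrite is needed, because the container shares the host's network.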

The image is built from scratch. To build the image yourself, please refer to the Build Docker image section in scripts/README.md, and then push to your registry.

Using ModelScope

No local install? Use the ModelScope Studio one-click cloud setup. Set your Studio to non-public so others cannot control your OpenPaw.

Deploy on Alibaba Cloud ECS

To run OpenPaw on Alibaba Cloud (ECS), use the one-click deployment: open the OpenPaw on Alibaba Cloud (ECS) deployment link and follow the prompts. For step-by-step instructions, see Alibaba Cloud Developer: Deploy your AI assistant in 3 minutes.

API Key

If you use a cloud LLM (e.g. DashScope, ModelScope), you must set an API key before chatting. OpenPaw will not work until a valid key is configured.

Where to set it:

- `openpaw init`: When you run `openpaw init`, the command has a step to configure the LLM provider and API key. Follow the prompts to choose a provider and enter your key.

- Console: After `openpaw app`, open http://127.0.0.1:8088/ → Settings → Models. Select a provider, fill in the API Key field, then activate that provider and model.

- Environment variable: For DashScope you can set `DASHSCOPE_API_KEY` in your shell or in a `.env` file in the working directory.
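For the environment-variable route, it can be useful to check whether the key is visible before starting the app. The following is a sketch under stated assumptions: the `.env` parsing is a simplified `KEY=VALUE` reader, the shell-before-`.env` precedence is an assumption rather than documented OpenPaw behavior, and `parse_env_text` and `find_key` are hypothetical helper names.

```python
import os
from typing import Dict, Mapping, Optional


def parse_env_text(text: str) -> Dict[str, str]:
    """Parse simple KEY=VALUE lines; blank lines and # comments are skipped."""
    values: Dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop surrounding whitespace and optional quotes around the value.
        values[key.strip()] = value.strip().strip("'\"")
    return values


def find_key(name: str, environ: Mapping[str, str], env_text: str = "") -> Optional[str]:
    """Look in the shell environment first (assumed order), then in .env text."""
    return environ.get(name) or parse_env_text(env_text).get(name) or None


if __name__ == "__main__":
    env_text = ""
    if os.path.exists(".env"):
        with open(".env", encoding="utf-8") as fh:
            env_text = fh.read()
    key = find_key("DASHSCOPE_API_KEY", os.environ, env_text)
    # Report presence only; never print the key itself.
    print("DASHSCOPE_API_KEY is", "set" if key else "not set")
```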

Tools that need extra keys (e.g. TAVILY_API_KEY for web search) can be set in Console Settings → Environment variables, or see Config for details.

Using local models only? If you use Local Models (llama.cpp or MLX), you do not need any API key.

Local Models

OpenPaw can run LLMs entirely on your machine — no API keys or cloud services required.

| Backend | Best for | Install |

|---|---|---|

| llama.cpp | Cross-platform (macOS / Linux / Windows) | pip install 'openpaw[llamacpp]' or bash install.sh --extras llamacpp |

| MLX | Apple Silicon Macs (M1/M2/M3/M4) | pip install 'openpaw[mlx]' or bash install.sh --extras mlx |

| Ollama | Cross-platform (requires Ollama service) | pip install 'openpaw[ollama]' or bash install.sh --extras ollama |

After installing, download a model and start chatting:

openpaw models download Qwen/Qwen3-4B-GGUF

openpaw models # select the downloaded model

openpaw app # start the server

You can also download and manage local models from the Console UI.

Documentation

| Topic | Description |

|---|---|

| Introduction | What OpenPaw is and how you use it |

| Quick start | Install and run (local or ModelScope Studio) |

| Console | Web UI for chat and agent config |

| Channels | DingTalk, Feishu, QQ, Discord, iMessage, and more |

| Heartbeat | Scheduled check-in or digest |

| Local Models | Run models locally with llama.cpp or MLX |

| CLI | Init, cron jobs, skills, clean |

| Skills | Extend and customize capabilities |

| FAQ | Common questions and troubleshooting tips |

| Memory | Context management and long-term memory |

| Config | Working directory and config file |

Full docs in this repo: website/public/docs/.

FAQ

For common questions, troubleshooting tips, and known issues, please visit the FAQ page.

Roadmap

| Area | Item | Status |
|---|---|---|
| Horizontal Expansion | More channels, models, skills, MCPs; community contributions welcome | Seeking Contributors |
| Existing Feature Extension | Display optimization, download hints, Windows path compatibility, etc.; community contributions welcome | Seeking Contributors |
| Compatibility & Ease of Use | App-level packaging (DMG, EXE) | In Progress |
| | One-click deployment: built-in deps, dev extras, install/upgrade tutorials | In Progress |
| Release & Contributing | Contributing docs and test framework | In Progress |
| | Responsive handling of community contributions | In Progress |
| | Contributing guidance for vibe coding agents | Planned |
| Bugfixes & Enhancements | Message collapse/hide in UI | Planned |
| | Skills and MCP runtime install, hot-reload improvements | Planned |
| | Context management and compression (long tool outputs, lower token usage) | Planned |
| | Multimodal support | In Progress |
| Security | Shell execution confirmation | Planned |
| | Tool/skills security | Planned |
| | Configurable security levels (user-configurable) | Planned |
| Multimodal | Voice/video calls and real-time interaction | Long-term Planning |
| Multi-agent | Built on AgentScope, native multi-agent workflows | Long-term Planning |
| Sandbox | Deeper integration with AgentScope Runtime sandboxes | Long-term Planning |
| Self-healing | Daemon agent for automated recovery and health monitoring | Long-term Planning |
| OpenPaw-optimized local models | LLMs tuned for OpenPaw's native skills and common tasks; better local personal-assistant usability | Long-term Planning |
| Small + large model collaboration | Local LLMs for sensitive data; cloud LLMs for planning and coding; balance of privacy, performance, and capability | Long-term Planning |
| Cloud-native | Deeper integration with AgentScope Runtime; leverage cloud compute, storage, and tooling | Long-term Planning |
| Skills Hub | Enrich the AgentScope Skills repository and improve discoverability of high-quality skills | Long-term Planning |

Status legend: In Progress (actively being worked on); Planned (queued or under design; contributions also welcome); Seeking Contributors (we strongly encourage community contributions); Long-term Planning (longer-horizon roadmap).

Get involved

We are building OpenPaw in the open and welcome contributions of all kinds! Check the Roadmap above (especially items marked Seeking Contributors) to find areas that interest you, and read CONTRIBUTING to get started. We particularly welcome:

- Horizontal expansion — new channels, model providers, skills, MCPs.

- Existing feature extension — display and UX improvements, download hints, Windows path compatibility, and the like.

Join the conversation on GitHub Discussions to suggest or pick up work.

Install from source

git clone https://github.com/agentscope-ai/OpenPaw.git

cd OpenPaw

pip install -e .

- Dev (tests, formatting): `pip install -e ".[dev]"`

- Console (build frontend): `cd console && npm ci && npm run build`, then `openpaw app` from project root.

Why OpenPaw?

OpenPaw represents both a Co Personal Agent Workstation and a "co-paw"—a partner always by your side. More than just a cold tool, OpenPaw is a warm "little paw" always ready to lend a hand (or a paw!). It is the ultimate teammate for your digital life.

Built by

AgentScope team · AgentScope · AgentScope Runtime · ReMe

Contact us

Discord · X (Twitter) · DingTalk

License

OpenPaw is released under the Apache License 2.0. This project is derived from CoPaw; see NOTICE for attribution.

Contributors

All thanks to our contributors:


File details

Details for the file symbio_openpaw-0.0.6.post7.tar.gz.

File metadata

- Download URL: symbio_openpaw-0.0.6.post7.tar.gz

- Upload date:

- Size: 7.5 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.19

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 45ce9dd6b42a787eab8d651ba3a7f7f61e04012d686bf8195b53bde251dde054 |
| MD5 | a10a57e54c528bbff485cac58d4f28f0 |
| BLAKE2b-256 | 3f9c7a63a30ad524b4c46ba08820a0855883785d6b5169c490ca2dcafa27e81f |

File details

Details for the file symbio_openpaw-0.0.6.post7-py3-none-any.whl.

File metadata

- Download URL: symbio_openpaw-0.0.6.post7-py3-none-any.whl

- Upload date:

- Size: 7.7 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.19

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | dd61fe26342b87a400963d922e9518f4e2c972975d491f4ea6338cac8e05e7f6 |
| MD5 | 087f29a52897187d5d47277f94954749 |
| BLAKE2b-256 | 4084e26c9e580a897fd695ffe57840d3ec9c7ea2747d54b236dd27c3297b66a8 |
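The SHA256 digests listed for each file can be used to check a manual download before installing it. The following is a minimal sketch with `hashlib`; the function names `sha256_of` and `matches` and the script name `verify.py` are illustrative only.

```python
import hashlib
import sys


def sha256_of(path: str, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches(path: str, expected_hex: str) -> bool:
    """Compare a file's digest with an expected value, ignoring case and spaces."""
    return sha256_of(path) == expected_hex.strip().lower()


if __name__ == "__main__" and len(sys.argv) == 3:
    # Usage: python verify.py <downloaded file> <expected sha256>
    print("OK" if matches(sys.argv[1], sys.argv[2]) else "MISMATCH")
```

For example, pass the path to the downloaded `symbio_openpaw-0.0.6.post7.tar.gz` together with the SHA256 value listed for that file; a `MISMATCH` means the download is corrupted or is not the published file.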