A video-to-sound theremin

theremin

To install: pip install theremin

About this project

The theremin, an iconic electronic musical instrument invented in the 1920s by Léon Theremin, is played without physical contact, using hand movements to control pitch and volume through electromagnetic fields.

Today, with advancements in digital sensors, AI-based models for real-time sensor data processing, and sophisticated audio generation tools, there is an unprecedented opportunity to generalize the theremin’s concept into a flexible framework and platform. This initiative, the theremin Project, aims to explore the sonification of physical activity, opening new avenues for musical expression.

Musical instruments are fundamentally devices that translate physical interactions into sound. The physical properties of an instrument—be it the strings of a violin or the keys of a piano—not only shape the sound produced but also influence how musicians interact with them. These interactions impose constraints that, far from being restrictive, inspire and guide creativity in profound ways. The theremin Project leverages modern digital technologies to expand this paradigm, enabling users to explore countless ways to produce sound through movement. By mapping visual cues (e.g., gestures, facial expressions), tactile inputs, or even audio signals (e.g., sounds from objects or voice) to sound, the project empowers users to create entirely new instruments, each with its own creative constraints ripe for exploration.

The complexity of these mappings introduces a learning curve, but also vast creative potential. A key advantage of the project’s flexible mapping approach is its adaptability: complex mappings can be simplified for beginners to quickly express themselves, with the option to gradually increase complexity as their skills develop. This scalability bridges the spectrum of musical expression, from effortless AI-generated music—where anyone can create high-quality songs with minimal input—to the nuanced, skill-intensive artistry of acoustic instruments. While AI-driven tools risk flooding the music space with low-effort content, the theremin Project seeks to explore the middle ground, fostering tools that balance accessibility with expressive depth.

Consider the extremes: on one end, AI allows someone with no musical training to generate a song by clicking a button or describing its style, offering high quality but limited personal expressivity. On the other, acoustic instruments demand years of practice to unlock their full potential, rewarding players with profound expressive control. The theremin Project envisions a platform where users can navigate this spectrum, creating instruments that adapt to their abilities while encouraging growth. By providing intuitive tools to map movement to sound, the project aims to democratize musical expression, uncover novel modes of creativity, and inspire a new generation of digital instruments.

Along with advancements in music AI, we should be able to explore a wide range of possibilities for expressive control. One point on this spectrum would be to take an existing piece of music and direct many aspects of its rendition via visual cues, like a conductor (which was one of the candidate names for this project, along with maestro, director, and orchestrator -- all already taken on PyPI, the repository of Python packages).

The theremin Project is a bold step toward this future, inviting exploration of the vast possibilities at the intersection of movement, sound, and technology.

Work in Progress

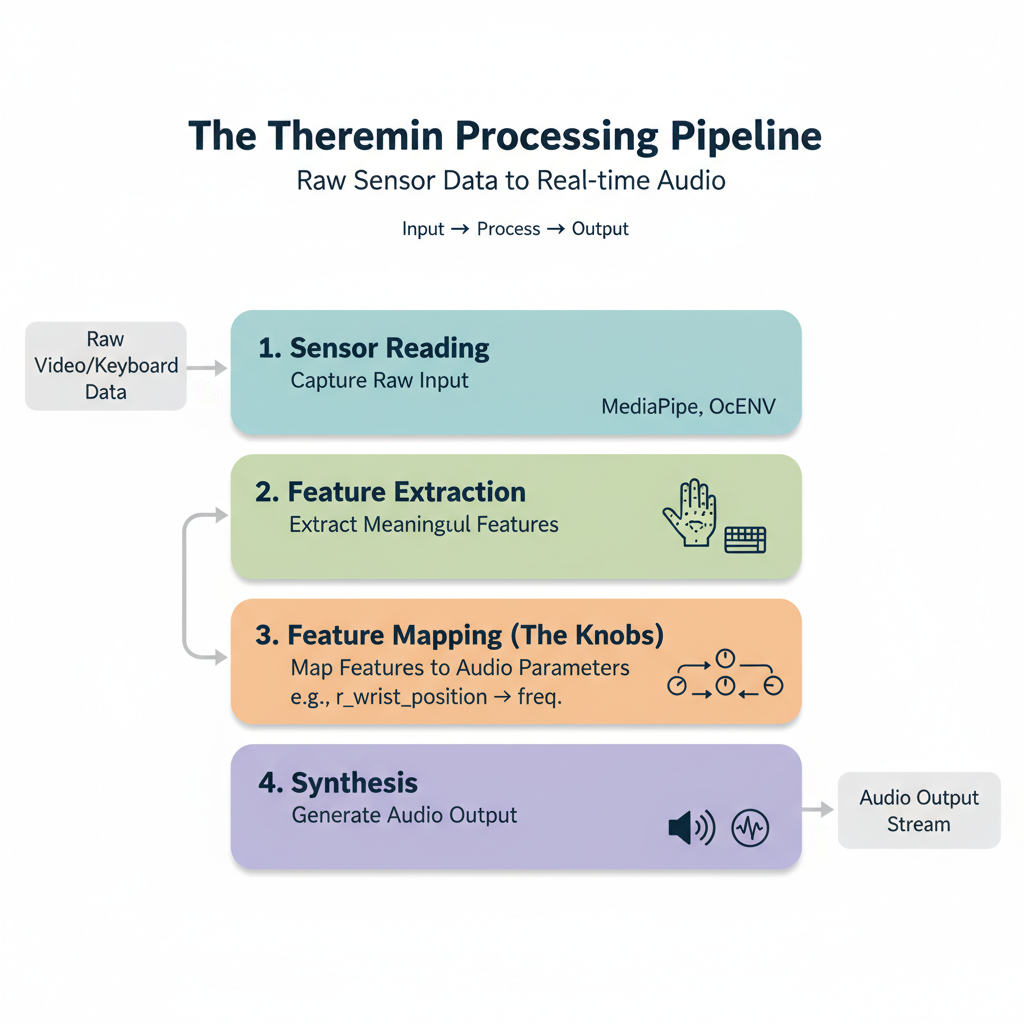

The objective of this project is to enable users to easily create mappings between sensors and sound, in whatever way best serves their goal: playing music, learning how to play music, making a sound-producing installation, sonifying a data stream, etc.

Note that here we're starting with video and keyboard as the main "sensors", but the plan is to generalize this to all possible streams of input control. One of the main targets is control via sound itself, that is, giving our sound-producing system the ability to hear as well. This, we hope, will open up many interesting abilities when paired with AI music generation, both for education and for improvisation.

A few links:

See Léon Theremin playing his own instrument, or also here, playing "Deep Night" (1930). The first one I ever heard: Samuel Hoffman playing "Over the Rainbow". Someone might complain if I don't mention Led Zeppelin's Jimmy Page... playing around with a theremin -- but personally, though I appreciate the exploration, I don't consider it to be "playing the theremin".

Old "about"

The objective of this project is to make "instruments" that work like a theremin, that is, whereby the features of the sounds produced are controlled by the position of the left and right hand.

That, but generalized. A theremin is an analog device whose sound is parametrized by only two features, pitch and volume, conventionally controlled by the right and left hand respectively; the distance of each hand from its antenna determines the pitch or the volume of the sound produced.
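As a rough illustration of that two-parameter mapping, the sketch below turns two normalized hand distances into a frequency and an amplitude, then renders a short sine tone. It is not this package's API: the function names, the 0-to-1 distance range, the frequency bounds, and the choice that a farther hand means a louder sound are all assumptions made for the example.

```python
import math

# Hypothetical bounds, chosen only for this illustration.
MIN_FREQ_HZ = 110.0   # pitch when the pitch hand is farthest away
MAX_FREQ_HZ = 1760.0  # pitch when the pitch hand is closest


def clamp01(x):
    """Clamp a value to the range [0, 1]."""
    return max(0.0, min(1.0, x))


def distance_to_pitch(distance):
    """Map a normalized distance (0 = closest, 1 = farthest) to a frequency in Hz.

    The interpolation is exponential, so equal hand movements
    correspond to equal musical intervals.
    """
    closeness = 1.0 - clamp01(distance)
    return MIN_FREQ_HZ * (MAX_FREQ_HZ / MIN_FREQ_HZ) ** closeness


def distance_to_volume(distance):
    """Map a normalized distance to an amplitude in [0, 1].

    Assumption of this sketch: a farther hand gives a louder sound.
    """
    return clamp01(distance)


def render_tone(pitch_distance, volume_distance, duration_s=0.01, sample_rate=44100):
    """Return a list of sine-wave samples for the given pair of hand distances."""
    freq = distance_to_pitch(pitch_distance)
    amp = distance_to_volume(volume_distance)
    n_samples = round(duration_s * sample_rate)
    return [
        amp * math.sin(2.0 * math.pi * freq * i / sample_rate)
        for i in range(n_samples)
    ]
```

Calling `render_tone(0.5, 0.5)` would produce a short buffer of samples at half amplitude; in a real instrument the two distances would be updated continuously from the sensor, and the phase would need to be carried over between buffers to avoid clicks.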

Here, though, we'll use video as our sensor (and will consider more kinds of sensors later), so we can detect not only the positions of the hands, but also the positions of the fingers and the angles between them, recognize gestures, and so on. Further, we can use facial expressions to add to the parametrization of the sound produced.
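To make the "angles between fingers" idea concrete, here is a minimal sketch that computes the angle between two fingers from 2D landmark points. It assumes some hand-tracking model has already produced `(x, y)` coordinates for a shared base point and two fingertips; the function name and the input format are hypothetical and not taken from this package.

```python
import math


def angle_between(origin, tip_a, tip_b):
    """Return the angle in degrees at `origin` between the rays to `tip_a` and `tip_b`.

    Each point is an (x, y) pair, e.g. a landmark from a hand-tracking model.
    Returns 0.0 if either tip coincides with the origin.
    """
    ax, ay = tip_a[0] - origin[0], tip_a[1] - origin[1]
    bx, by = tip_b[0] - origin[0], tip_b[1] - origin[1]
    norm = math.hypot(ax, ay) * math.hypot(bx, by)
    if norm == 0.0:
        return 0.0
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_theta = max(-1.0, min(1.0, (ax * bx + ay * by) / norm))
    return math.degrees(math.acos(cos_theta))
```

An angle like this could then serve as one more control parameter, for example by scaling it to a filter cutoff or vibrato depth, alongside the hand positions.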

What the project wants to grow up to be is a platform for creating musical instruments that are controlled by the body, enabling the user to determine the mapping between the video stream and the sound produced. We hope this will enable the creation of new musical instruments that are more intuitive and expressive than the traditional theremin.

File details

Details for the file theremin-0.0.6.tar.gz.

File metadata

- Download URL: theremin-0.0.6.tar.gz

- Upload date:

- Size: 39.3 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.10.13

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f5c1512b6cde4e25448e237b5c9c24e9d3974049d6da89192b611cdd17dc3007 |
| MD5 | 62915f8b3dae68def344a718c874e2c4 |
| BLAKE2b-256 | 37ef0fbad9dcd8386b53157ee4d5a8a47c3491c229a23ef5b797a14e29a92207 |

File details

Details for the file theremin-0.0.6-py3-none-any.whl.

File metadata

- Download URL: theremin-0.0.6-py3-none-any.whl

- Upload date:

- Size: 40.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.10.13

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b114c93d0aba0d59163915649364a44224376c7400bb88dd3476293c93436f7f |
| MD5 | 717682edec50d652c945374f62688360 |
| BLAKE2b-256 | 84b071705dfa2a5c426981fb0f7de8f7b484f4b44161f5122a3d6b781b31712b |