tonygrad

A tiny scalar-valued autograd engine with a small PyTorch-like neural network library on top. Implements backpropagation (reverse-mode autodiff) over a dynamically built DAG. The DAG only operates over scalar values, so e.g. we chop up each neuron into all of its individual tiny adds and multiplies. However, this is enough to build up entire deep neural nets doing binary classification, as the demo notebook shows.
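To make the idea of reverse-mode autodiff over a scalar DAG concrete, here is a minimal sketch. The `Scalar` class and its `_parents` / `_backward` attributes are hypothetical names chosen for illustration; this is not tonygrad's actual implementation, only the general technique under those assumptions.

```python
class Scalar:
    """Hypothetical scalar autograd node (illustration only, not tonygrad's class)."""

    def __init__(self, data, parents=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents
        self._backward = lambda: None  # local chain-rule step, set by each op

    def __add__(self, other):
        out = Scalar(self.data + other.data, (self, other))

        def _backward():
            # d(a + b)/da = 1 and d(a + b)/db = 1
            self.grad += out.grad
            other.grad += out.grad

        out._backward = _backward
        return out

    def __mul__(self, other):
        out = Scalar(self.data * other.data, (self, other))

        def _backward():
            # d(a * b)/da = b and d(a * b)/db = a
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad

        out._backward = _backward
        return out

    def backward(self):
        # Topologically sort the DAG so that a node's gradient is complete
        # before it is pushed to the node's inputs.
        order, seen = [], set()

        def visit(node):
            if node not in seen:
                seen.add(node)
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            node._backward()


a = Scalar(2.0)
b = Scalar(-3.0)
c = a * b + a  # dc/da = b + 1, dc/db = a
c.backward()
```

Gradients are accumulated with `+=` because `a` feeds into the graph twice; overwriting instead would lose one of the two contributions.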

Installation

pip install tonygrad

Example usage

The example below builds a single tanh neuron out of scalar Value objects and backpropagates through it:

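"""tanh() VERSION"""

# Value must be imported from the tonygrad package before running this (the module path is not shown here)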

# inputs x1,x2

x1 = Value(2.0, label='x1')

x2 = Value(0.0, label='x2')

# weights w1,w2

w1 = Value(-3.0, label='w1')

w2 = Value(1.0, label='w2')

# bias of the neuron

b = Value(6.8813735870195432, label='b')

# x1*w1 + x2*w2 + b

x1w1 = x1*w1; x1w1.label = 'x1w1'

x2w2 = x2*w2; x2w2.label = 'x2w2'

x1w1x2w2 = x1w1 + x2w2; x1w1x2w2.label = 'x1*w1 + x2*w2'

# pre-activation of the neuron

n = x1w1x2w2 + b; n.label = 'n'

# apply tanh to get the neuron's output

o = n.tanh(); o.label = 'o'

# launch backprop on the built graph

o.backward()
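As a sanity check, the gradients for this neuron can be worked out by hand with the chain rule, using only the standard `math` module. This sketch does not call tonygrad; it assumes the engine uses the usual derivative `1 - tanh(n)**2`, in which case the gradients stored on each input after `o.backward()` should agree with the values computed here.

```python
import math

# Same numbers as the example above, as plain floats.
x1, x2 = 2.0, 0.0
w1, w2 = -3.0, 1.0
b = 6.8813735870195432

n = x1 * w1 + x2 * w2 + b   # pre-activation
o = math.tanh(n)            # neuron output
do_dn = 1.0 - o ** 2        # derivative of tanh at n

# Chain rule: do/dv = do/dn * dn/dv for each input v.
grads = {
    "x1": w1 * do_dn,
    "w1": x1 * do_dn,
    "x2": w2 * do_dn,
    "w2": x2 * do_dn,
    "b": do_dn,
}
```

The bias is chosen so that `do_dn` comes out at about 0.5, which keeps the hand-computed gradients easy to read: roughly -1.5 for `x1`, 1.0 for `w1`, 0.5 for `x2` and 0.0 for `w2`.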

Tracing / visualization

For added convenience, the module trace_graph.py produces graphviz visualizations. Here we draw the computation graph of the neuron built in the example above.

from tonygrad.trace_graph import draw_dot, trace

draw_dot(o)
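For intuition about what a graph tracer has to do, the sketch below walks a computation graph from its output back to its inputs and emits DOT source as plain text. The `Node` class, its `prev` attribute, and the `trace_nodes` / `to_dot` helpers are hypothetical and written for this illustration; tonygrad's own `trace` and `draw_dot` may work differently.

```python
class Node:
    """Hypothetical graph node; tonygrad's Value may store its inputs differently."""

    def __init__(self, label, prev=()):
        self.label = label
        self.prev = tuple(prev)


def trace_nodes(root):
    """Collect every node reachable from root and every (input, output) edge."""
    nodes, edges = set(), set()

    def build(v):
        if v not in nodes:
            nodes.add(v)
            for child in v.prev:
                edges.add((child, v))
                build(child)

    build(root)
    return nodes, edges


def to_dot(root):
    """Render the traced graph as DOT source text, laid out left to right."""
    nodes, edges = trace_nodes(root)
    lines = ["digraph G {", "  rankdir=LR;"]
    for n in sorted(nodes, key=lambda n: n.label):
        lines.append(f'  "{n.label}";')
    for src, dst in sorted(edges, key=lambda e: (e[0].label, e[1].label)):
        lines.append(f'  "{src.label}" -> "{dst.label}";')
    lines.append("}")
    return "\n".join(lines)


x = Node("x")
w = Node("w")
xw = Node("x*w", prev=(x, w))
dot_source = to_dot(xw)
```

The resulting `dot_source` string can be pasted into any Graphviz renderer; sorting nodes and edges by label only serves to make the output deterministic.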

Training a neural net

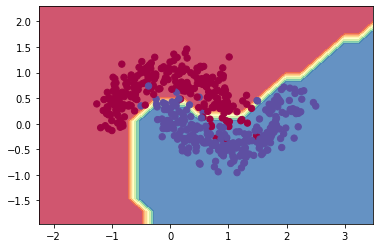

The notebook demo.ipynb provides a full demo of training an 2-layer neural network (MLP) binary classifier. This is achieved by initializing a neural net from tonygrad.nn module, implementing a simple svm "max-margin" binary classification loss and using stochastic gradient descent for optimization. As shown in the notebook, using a 2-layer neural net with two 16-node hidden layers we achieve the following decision boundary on the moon dataset:
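The max-margin loss and gradient update mentioned above can be sketched in plain Python. This is a toy illustration, not the notebook's code: it uses a hypothetical one-parameter linear scorer with hand-written subgradients instead of tonygrad.nn, made-up data, and a full-batch update (a stochastic variant would sample a subset of points at each step).

```python
def hinge_loss(scores, labels):
    """Mean max-margin loss; labels are +1 or -1."""
    losses = [max(0.0, 1.0 - y * s) for s, y in zip(scores, labels)]
    return sum(losses) / len(losses)


def sgd_step(params, grads, lr):
    """Plain gradient-descent update: p <- p - lr * g."""
    return [p - lr * g for p, g in zip(params, grads)]


# Toy linear scorer score = w*x + b on two points (illustrative data only).
xs, ys = [1.0, -1.0], [1, -1]
w, b = 0.0, 0.0

for _ in range(20):
    # Subgradient of the hinge loss: only points inside the margin contribute.
    gw = gb = 0.0
    for x, y in zip(xs, ys):
        if 1.0 - y * (w * x + b) > 0.0:
            gw += -y * x / len(xs)
            gb += -y / len(xs)
    w, b = sgd_step([w, b], [gw, gb], lr=0.5)

final_loss = hinge_loss([w * x + b for x in xs], ys)
```

With an autograd engine, the inner loop that computes `gw` and `gb` by hand is what a call to `backward()` on the loss would replace.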

License

MIT

File details

Details for the file tonygrad-2.0.0.tar.gz.

File metadata

- Download URL: tonygrad-2.0.0.tar.gz

- Upload date:

- Size: 4.9 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.10.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f3878b39602231cbedba6039798c9ad38f7fc2aac731277a0acdaca91298f351 |
| MD5 | 586999b5f2d5280dc76de20777a502c6 |
| BLAKE2b-256 | 4a075a074b0edff4a9cedb26c5e1b2d4195243a97c2aa8e7d4706ed33d30916d |

File details

Details for the file tonygrad-2.0.0-py3-none-any.whl.

File metadata

- Download URL: tonygrad-2.0.0-py3-none-any.whl

- Upload date:

- Size: 5.6 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.10.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 92d6d8cef84e4c4253bed42ff11fdc63647064b1b6ec96c8920b9c5abec66adf |
| MD5 | e2a17ca8fcc061bdabff1d5f5c234050 |
| BLAKE2b-256 | 230df659fbcd659530cb417c002f4071dfd4a41842f1c48063b1d6ed87b7b46d |