ADOPT: Modified Adam Can Converge with Any β2 with the Optimal Rate


Official implementation of "ADOPT: Modified Adam Can Converge with Any β2 with the Optimal Rate", presented at NeurIPS 2024.

Update on Nov 22, 2024

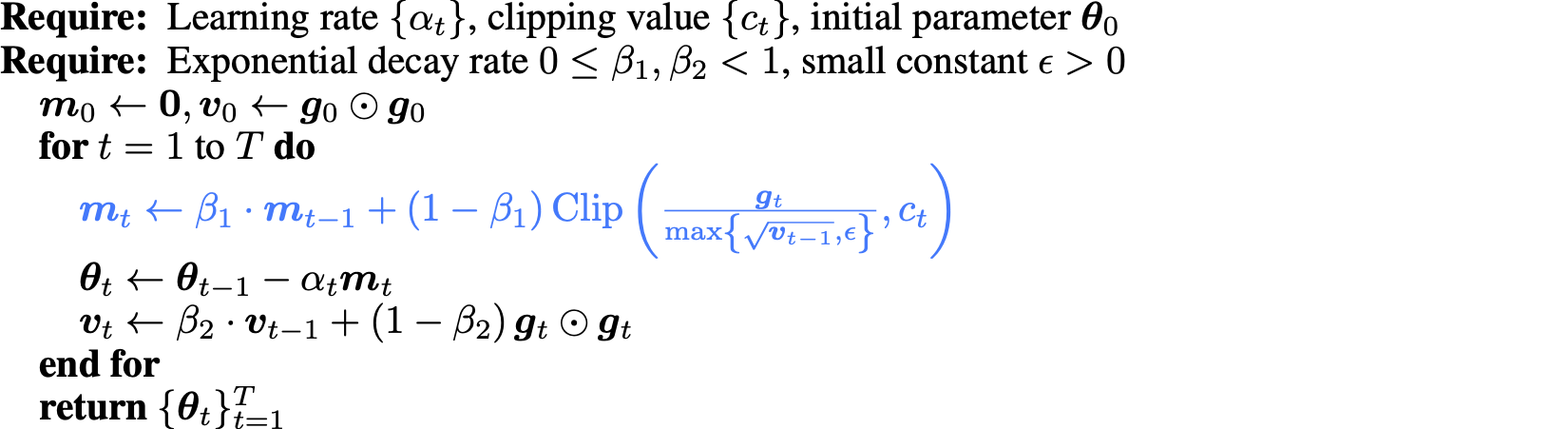

Based on feedback from practitioners, we have updated the implementation to improve the stability of the ADOPT algorithm. In the original version, ADOPT sometimes becomes unstable, especially in the early stage of training. This seems to be caused by division by a near-zero second moment estimate, which occurs when some elements of the parameter gradient are near zero at initialization. For example, when some parameters (e.g., the last layer of a neural net) are initialized to zero, a common technique in deep learning, a near-zero gradient is observed at the first parameter update. To avoid such near-zero divisions, we have added a clipping operation to the momentum update.

Even with clipping, the theoretical convergence guarantee is maintained as long as the clipping value is scheduled properly (see the updated arXiv paper).
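The effect of the clipping step can be illustrated on a single scalar. The following is a minimal sketch, not the code in adopt.py: it assumes the gradient is divided by max(sqrt(v), eps), where v is the second moment estimate, and that the result is clipped to [-c, c] before it enters the momentum update. The helper name normalized_gradient and the value eps=1e-6 are assumptions made for illustration.

```python
import math


def normalized_gradient(grad, second_moment, step, eps=1e-6, clip_lambda=None):
    """Divide a scalar gradient by the root of the second moment estimate.

    Hypothetical helper for illustration only; eps=1e-6 is an assumed value.
    """
    denom = max(math.sqrt(second_moment), eps)
    normed = grad / denom
    if clip_lambda is not None:
        # Clip the normalized gradient to [-bound, bound] for this step.
        bound = clip_lambda(step)
        normed = max(-bound, min(bound, normed))
    return normed


# Second moment estimate built from a near-zero first gradient,
# e.g. a layer that was initialized to zero.
v = (1e-8) ** 2
g = 1.0  # an ordinary-sized gradient at the next step

unclipped = normalized_gradient(g, v, step=1)
clipped = normalized_gradient(g, v, step=1, clip_lambda=lambda s: s ** 0.25)

print("unclipped:", unclipped)  # very large, limited only by eps
print("clipped:  ", clipped)    # bounded by the clipping value
```

Under these assumptions, the unclipped value is limited only by eps, while the clipped value cannot exceed the bound for that step.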

In our implementation, the clipping value is controlled by the argument clip_lambda, a callable that returns the clipping value as a function of the number of gradient steps.

By default, the clipping value is set to step**0.25, which is consistent with the theory and ensures convergence.

We observe that the clipped ADOPT is much more stable than the original, so we recommend it over the unclipped version.

To reproduce the behavior of the original version, set clip_lambda=None.
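Since clip_lambda is an ordinary callable, a schedule can be written as a plain Python function. The sketch below spells out the default schedule described above next to a hypothetical constant schedule. The names default_clip and constant_clip are illustrative, and only step**0.25 is stated to align with the theory; a constant bound is an assumption and may not preserve the convergence guarantee.

```python
def default_clip(step: int) -> float:
    """Default schedule described above: the bound grows as step**0.25."""
    return step ** 0.25


def constant_clip(step: int) -> float:
    """Hypothetical alternative: a fixed bound, not covered by the theory."""
    return 10.0


for step in (1, 16, 10_000):
    print(step, default_clip(step), constant_clip(step))

# Passing a schedule to the optimizer (requires adopt.py and a model):
# optimizer = ADOPT(model.parameters(), lr=1e-3, clip_lambda=default_clip)
# Original, unclipped behavior:
# optimizer = ADOPT(model.parameters(), lr=1e-3, clip_lambda=None)
```

The default bound grows slowly with the step count, so clipping is tightest at the start of training, where the instability described above occurs.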

Requirements

ADOPT requires PyTorch 2.5.0 or later.

Usage

You can use ADOPT just like any other PyTorch optimizer by copying adopt.py into your project.

To replace the Adam optimizer with ADOPT, simply swap the optimizer as follows:

    from adopt import ADOPT

    # optimizer = Adam(model.parameters(), lr=1e-3)
    optimizer = ADOPT(model.parameters(), lr=1e-3)

If you are using AdamW as your default optimizer, set decouple=True for ADOPT:

    # optimizer = AdamW(model.parameters(), lr=1e-3)
    optimizer = ADOPT(model.parameters(), lr=1e-3, decouple=True)
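The difference between the two settings can be sketched on a scalar parameter. This follows the usual Adam versus AdamW convention for weight decay and is an assumption about what decouple toggles, not a copy of adopt.py. The function names and the weight_decay value are hypothetical.

```python
def coupled_decay(param, grad, weight_decay):
    """Adam-style (L2) decay: fold the penalty into the gradient."""
    return param, grad + weight_decay * param


def decoupled_decay(param, grad, lr, weight_decay):
    """AdamW-style decay: shrink the parameter directly, keep the gradient as is."""
    return param * (1.0 - lr * weight_decay), grad


param, grad = 2.0, 0.5

# Coupled: the decay term passes through the adaptive normalization later on.
print(coupled_decay(param, grad, weight_decay=0.01))

# Decoupled: the decay bypasses the normalization and scales only with lr.
print(decoupled_decay(param, grad, lr=1e-3, weight_decay=0.01))
```

With coupled decay the penalty is rescaled by the second moment estimate along with the gradient, whereas decoupled decay shrinks every parameter at the same relative rate, which is why an AdamW setup is matched with decouple=True.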

Citation

If you use ADOPT in your research, please cite the paper.

    @inproceedings{taniguchi2024adopt,
      author = {Taniguchi, Shohei and Harada, Keno and Minegishi, Gouki and Oshima, Yuta and Jeong, Seong Cheol and Nagahara, Go and Iiyama, Tomoshi and Suzuki, Masahiro and Iwasawa, Yusuke and Matsuo, Yutaka},
      booktitle = {Advances in Neural Information Processing Systems},
      title = {ADOPT: Modified Adam Can Converge with Any β2 with the Optimal Rate},
      year = {2024}
    }

License
