PyTorch-native Bayesian neural networks for uncertainty quantification in deep learning

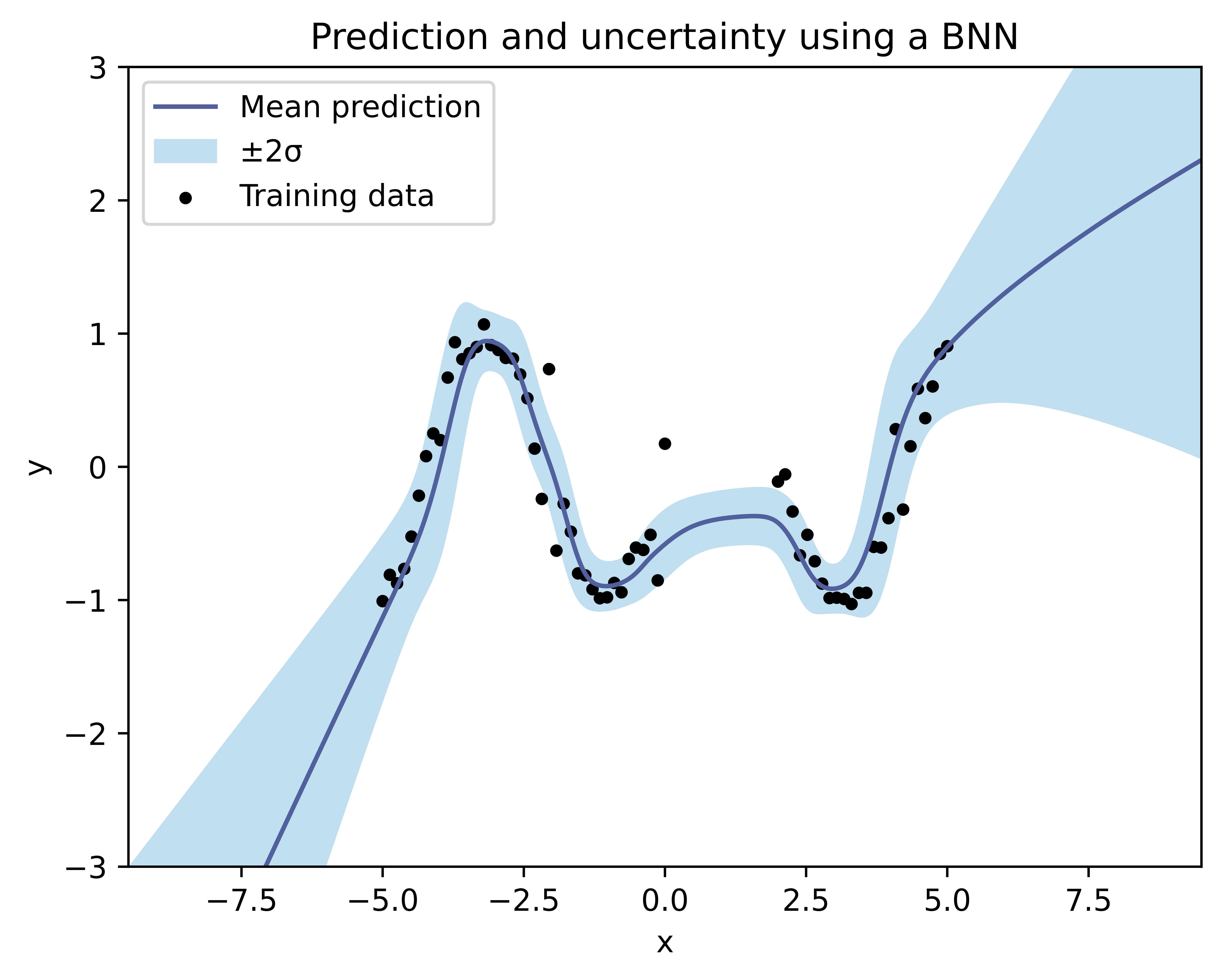

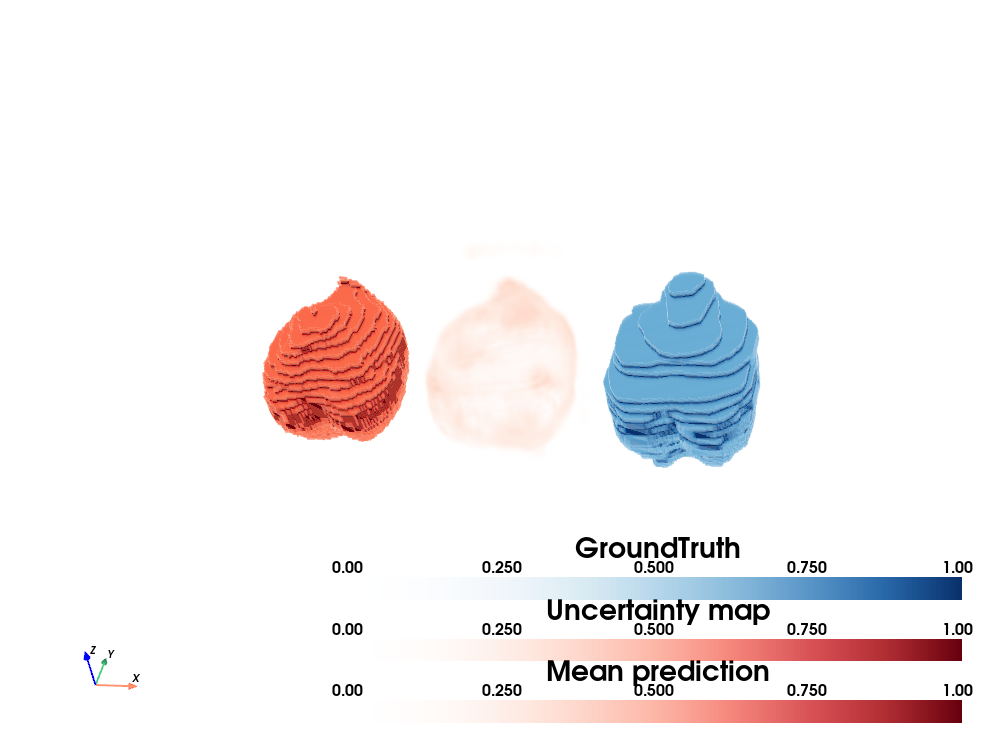

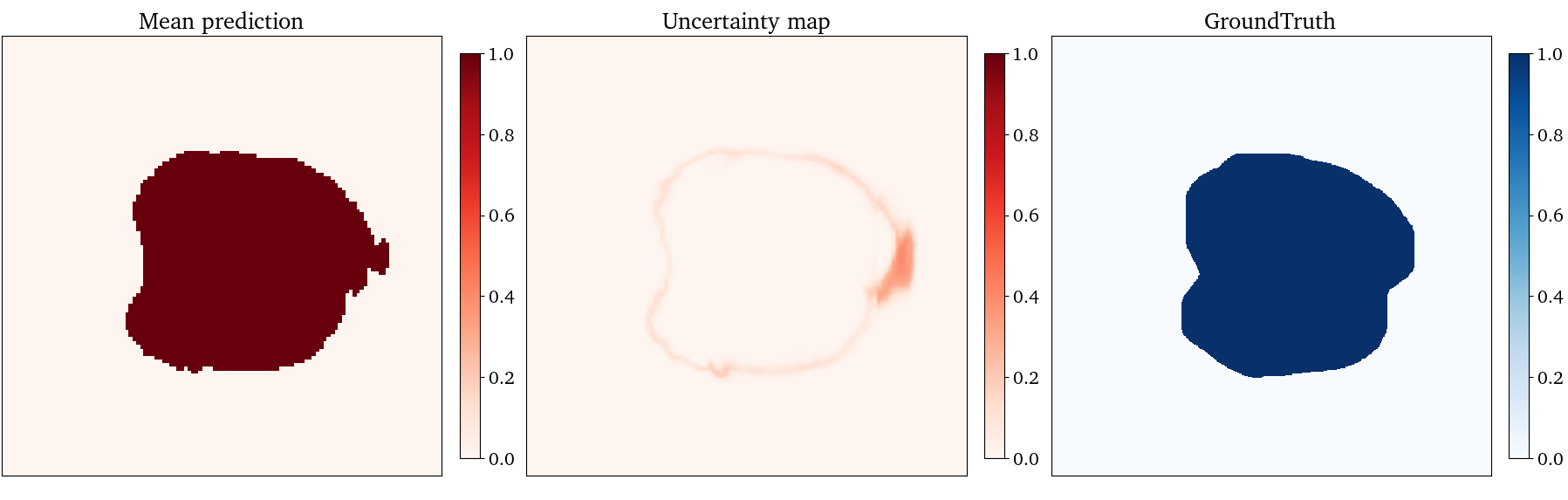

Project description

The simplest way to build Bayesian Neural Networks in PyTorch.

torchbayesian

torchbayesian is a PyTorch-based, open-source library for building uncertainty-aware neural networks. It serves as a lightweight extension to PyTorch, providing support for Bayesian deep learning and principled uncertainty quantification of model predictions while preserving the standard PyTorch workflow.

The package integrates with the PyTorch ecosystem and converts existing models into Bayesian neural networks via Bayes-by-Backprop variational inference. Its modular design supports configurable priors and variational posteriors, and it also supports other uncertainty estimation methods such as Monte Carlo dropout.

Quickly turn any PyTorch model into a Bayesian neural network

Any nn.Module can be wrapped with the module container bnn.BayesianModule to make it a Bayesian neural network (BNN):

import torchbayesian.bnn as bnn

net = bnn.BayesianModule(net)

The resulting module remains a standard nn.Module, fully compatible with the PyTorch API.

Internally, its parameters are reparameterized as variational distributions over the weights, enabling approximate Bayesian inference and uncertainty-aware predictions.
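To make the idea of reparameterizing a parameter as a variational distribution concrete, here is a minimal sketch in plain PyTorch. It is not torchbayesian's internal implementation: the class name GaussianParameter, its sample() method, and the softplus parameterization of the standard deviation are assumptions chosen for illustration, following the usual Bayes-by-Backprop recipe.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class GaussianParameter(nn.Module):
    """Hypothetical stand-in: a tensor with a learnable Gaussian posterior."""

    def __init__(self, shape, init_std=0.1):
        super().__init__()
        # Posterior mean, one entry per element of the original parameter.
        self.mu = nn.Parameter(torch.zeros(shape))
        # Unconstrained scale; softplus(rho) equals init_std at initialization.
        rho0 = torch.log(torch.expm1(torch.tensor(init_std)))
        self.rho = nn.Parameter(torch.full(shape, rho0.item()))

    def sample(self):
        sigma = F.softplus(self.rho)     # always positive
        eps = torch.randn_like(self.mu)  # noise independent of the parameters
        return self.mu + sigma * eps     # differentiable w.r.t. mu and rho


torch.manual_seed(0)
weight = GaussianParameter((3, 2))
w1, w2 = weight.sample(), weight.sample()  # two different weight draws
w1.sum().backward()                        # gradients reach mu and rho
```

Each forward pass draws a fresh weight sample, so repeated predictions on the same input differ, and that spread is what carries the uncertainty estimate.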

Key features

- Deterministic-to-Bayesian conversion: Convert any existing model into a Bayesian neural network with a single line of code.

- Compatible with any nn.Module: Rather than replacing a few specific layers (e.g., nn.Linear, nn.ConvNd) with Bayesian counterparts, torchbayesian operates at the nn.Parameter level. Any trainable parameter, including those defined in custom or third-party modules, can be reparameterized as a variational distribution, ensuring the entire model is treated as Bayesian.

- Modular priors and posteriors: Configure different priors and variational posteriors directly, or use custom implementations.

- Direct KL divergence access: Bayesian models expose their KL divergence via .kl_divergence(), using analytic computation when available and Monte Carlo estimation otherwise.

- PyTorch-native design: Preserves existing workflows and is fully compatible with other PyTorch-based tools such as MONAI.

- Monte Carlo dropout support: Includes dedicated layers and utilities for Monte Carlo dropout uncertainty estimation.

Installation

torchbayesian works with Python 3.10+ and has a direct dependency on PyTorch.

Current release

To install the current release with pip, run the following:

pip install torchbayesian

Getting started

A complete working example is available on GitHub.

The model's Kullback-Leibler divergence can be retrieved at any point via .kl_divergence() on a bnn.BayesianModule instance.
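As background on what such a KL term measures, the sketch below compares the closed-form KL divergence between two univariate Gaussians with a Monte Carlo estimate of the same quantity. It uses only the Python standard library and does not call torchbayesian; the function names and the example posterior and prior values are assumptions made for illustration.

```python
import math
import random


def gaussian_log_pdf(x, mu, sigma):
    """Log density of N(mu, sigma^2) evaluated at x."""
    return -0.5 * math.log(2 * math.pi) - math.log(sigma) - 0.5 * ((x - mu) / sigma) ** 2


def kl_analytic(mu_q, sigma_q, mu_p, sigma_p):
    """Closed-form KL(q || p) for two univariate Gaussians."""
    return (math.log(sigma_p / sigma_q)
            + (sigma_q ** 2 + (mu_q - mu_p) ** 2) / (2 * sigma_p ** 2)
            - 0.5)


def kl_monte_carlo(mu_q, sigma_q, mu_p, sigma_p, n_samples=100_000, seed=0):
    """Estimate KL(q || p) as the average of log q(w) - log p(w) for w drawn from q."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        w = rng.gauss(mu_q, sigma_q)
        total += gaussian_log_pdf(w, mu_q, sigma_q) - gaussian_log_pdf(w, mu_p, sigma_p)
    return total / n_samples


# Posterior q = N(0.5, 0.2^2) against a standard normal prior p = N(0, 1).
exact = kl_analytic(0.5, 0.2, 0.0, 1.0)
estimate = kl_monte_carlo(0.5, 0.2, 0.0, 1.0)
```

The Monte Carlo estimate is noisy but needs only log densities and samples, so it still applies to posterior and prior pairs that have no closed-form KL.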

Different or custom priors and variational posteriors can be used.

For Monte Carlo dropout, dropout layers can be activated during evaluation using the enable_mc_dropout() utility.

Dedicated Bayesian dropout layers are also provided.
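The mechanism behind Monte Carlo dropout can be sketched in plain PyTorch as follows. This does not use torchbayesian's enable_mc_dropout() utility or its dropout layers, whose signatures are not shown here; the helpers activate_dropout and mc_dropout_predict are hypothetical stand-ins that show the general technique of keeping dropout stochastic at inference time and aggregating several forward passes.

```python
import torch
import torch.nn as nn

DROPOUT_TYPES = (nn.Dropout, nn.Dropout2d, nn.Dropout3d, nn.AlphaDropout)


def activate_dropout(model):
    """Hypothetical helper: switch only the dropout layers back to training mode."""
    for module in model.modules():
        if isinstance(module, DROPOUT_TYPES):
            module.train()
    return model


@torch.no_grad()
def mc_dropout_predict(model, x, n_samples=50):
    """Run several stochastic forward passes and summarize them."""
    model.eval()             # all layers to evaluation mode
    activate_dropout(model)  # then re-enable dropout sampling only
    samples = torch.stack([model(x) for _ in range(n_samples)])
    return samples.mean(dim=0), samples.std(dim=0)


torch.manual_seed(0)
model = nn.Sequential(nn.Linear(4, 16), nn.ReLU(), nn.Dropout(p=0.5), nn.Linear(16, 1))
x = torch.randn(8, 4)
mean, std = mc_dropout_predict(model, x)  # predictive mean and spread per input
```

The per-input standard deviation serves as a simple uncertainty score: inputs on which the sampled sub-networks disagree get a larger spread.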

Motivation

Modern deep learning models are remarkably powerful but often make predictions with high confidence even when they are wrong. In safety-critical domains such as healthcare, finance, or autonomous systems, this overconfidence makes it difficult to trust model outputs and impedes automation. The torchbayesian package was created to make Bayesian neural networks (BNNs) and uncertainty quantification in PyTorch as simple as possible. The goal is to lower the barrier to practical Bayesian deep learning, enabling researchers and practitioners to integrate principled uncertainty estimation directly into their existing workflows.

Contributing

This library is still a work in progress. All contributions via pull requests are welcome.

File details

Details for the file torchbayesian-0.2.1.tar.gz.

File metadata

- Download URL: torchbayesian-0.2.1.tar.gz

- Upload date:

- Size: 27.0 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 57d34df3baefa36ecff870b78a5fa3201e09c079995182ff1a22bd00f48c8613 |
| MD5 | bdbe005f9b5745e63cc80fd198cc9f85 |
| BLAKE2b-256 | 2ffa5dd19e93e953bb03e8ee19d96927386b6dbb3db63903eeb163b55184ecd7 |

File details

Details for the file torchbayesian-0.2.1-py3-none-any.whl.

File metadata

- Download URL: torchbayesian-0.2.1-py3-none-any.whl

- Upload date:

- Size: 34.2 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.1

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | bdc56f3922b80f526fb2b3bffeb99a06afc42ae795840f9e8002d8f7303d58dc |
| MD5 | 591a8b5a836a090e794c4c3a15a71cde |
| BLAKE2b-256 | 50002b2e3c5e1010acfe3356861840f5badd1cd7c0b905f65831f562572e4811 |