TRL Jobs.

🏭 TRL Jobs

TRL Jobs is a simple wrapper around hfjobs that makes it easy to run TRL (Transformer Reinforcement Learning) workflows directly on 🤗 Hugging Face infrastructure.

Think of it as the quickest way to kick off Supervised Fine-Tuning (SFT) and more, without worrying about all the boilerplate setup.

📦 Installation

Get started with a single command:

pip install trl-jobs

⚡ Quick Start

Run your first supervised fine-tuning job in just one line:

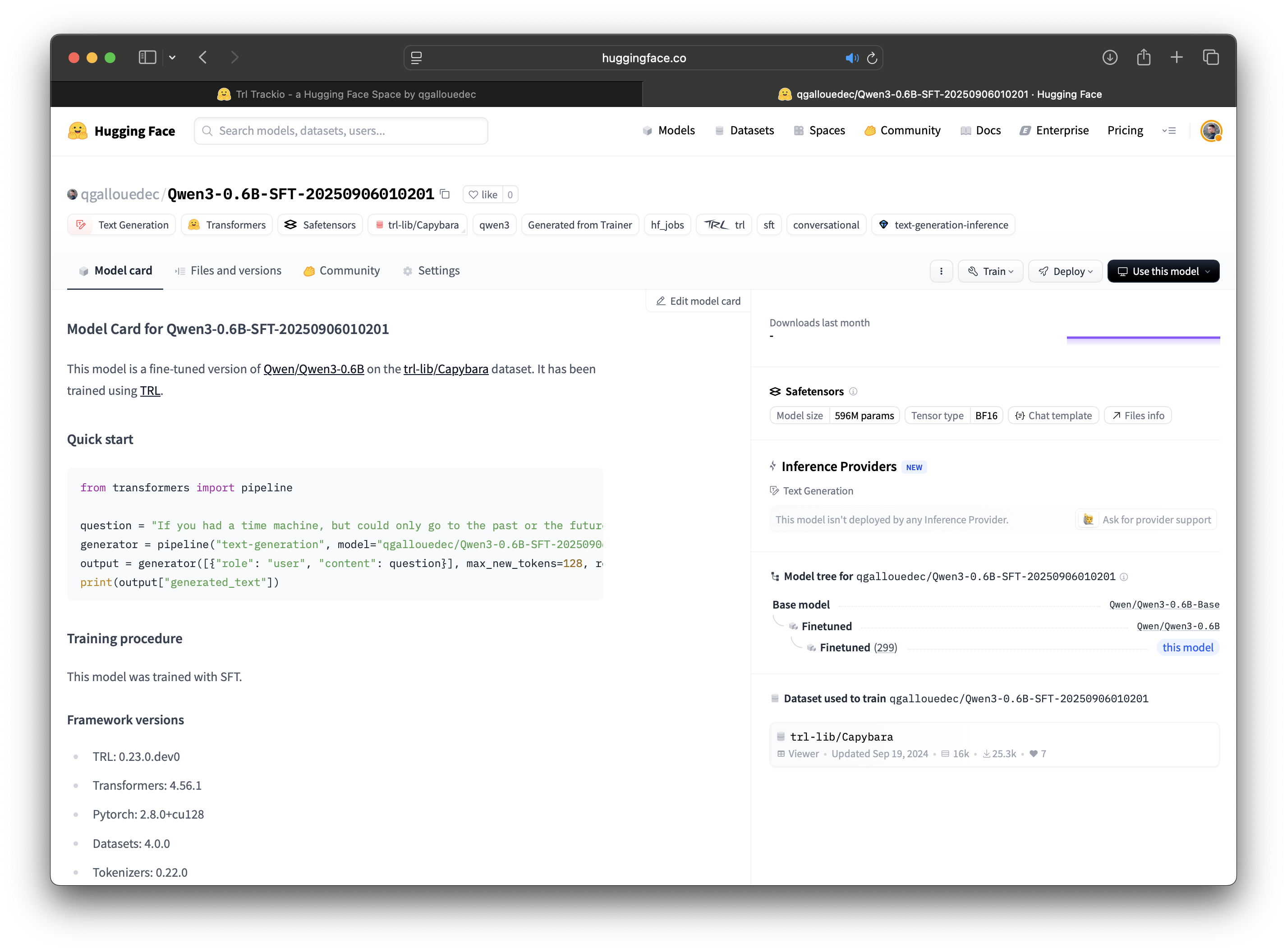

trl-jobs sft --model_name Qwen/Qwen3-0.6B --dataset_name trl-lib/Capybara

The training is tracked with Trackio and the fine-tuned model is automatically pushed to the 🤗 Hub.

🛠 Available Commands

Right now, only SFT (Supervised Fine-Tuning) is supported. More workflows will be added soon!

🔹 SFT (Supervised Fine-Tuning)

trl-jobs sft --model_name Qwen/Qwen3-0.6B --dataset_name trl-lib/Capybara

Required arguments

- --model_name → Model to fine-tune (e.g. Qwen/Qwen3-0.6B)
- --dataset_name → Dataset to train on (e.g. trl-lib/Capybara)

Optional arguments

- --peft → Use PEFT (LoRA) (default: False)
- --flavor → Hardware flavor (default: a100-large, only option for now)
- --timeout → Max runtime (1h by default). Supports s, m, h, d
- -d, --detach → Run in background and print job ID
- --namespace → Namespace where the job will run (default: your user namespace)
- --token → Hugging Face token (only needed if not logged in)
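The --timeout option takes a number followed by a unit suffix. As a rough illustration of that format, the sketch below converts such strings to seconds. The name parse_timeout is hypothetical, and treating a bare number as seconds is an assumption; trl-jobs itself may parse these values differently.

```python
import re

# Seconds per unit suffix listed for --timeout (s, m, h, d).
_UNIT_SECONDS = {"s": 1, "m": 60, "h": 3600, "d": 86400}


def parse_timeout(value: str) -> float:
    """Convert a string such as "1h" or "90m" to seconds.

    Hypothetical helper for illustration only; not part of trl-jobs.
    """
    match = re.fullmatch(r"\s*(\d+(?:\.\d+)?)\s*([smhd]?)\s*", value)
    if match is None:
        raise ValueError(f"Unrecognized timeout: {value!r}")
    number, unit = match.groups()
    # Assumption: a bare number with no suffix is read as seconds.
    return float(number) * _UNIT_SECONDS[unit or "s"]


if __name__ == "__main__":
    for example in ("1h", "90m", "2d"):
        print(example, "->", parse_timeout(example), "seconds")
```

A check like this can help estimate whether a long run fits inside the limit before submitting the job.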

➡️ You can also pass any argument supported by trl sft. For example:

trl-jobs sft --model_name Qwen/Qwen3-0.6B --dataset_name trl-lib/Capybara --learning_rate 3e-5

For the full list, see the TRL CLI docs.
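When jobs are launched from a script rather than typed by hand, the same command line can be assembled programmatically. The sketch below is one possible way to do that; build_sft_command is a hypothetical helper, not part of trl-jobs, and it only uses flags that appear in this README.

```python
import shlex


def build_sft_command(model_name: str, dataset_name: str, **extra_args) -> list[str]:
    """Assemble an argv list for `trl-jobs sft`.

    Hypothetical helper for illustration only; not part of trl-jobs.
    """
    command = [
        "trl-jobs", "sft",
        "--model_name", model_name,
        "--dataset_name", dataset_name,
    ]
    for name, value in extra_args.items():
        flag = f"--{name}"
        if value is True:
            # Switches such as --peft are passed without a value.
            command.append(flag)
        elif value is not False and value is not None:
            # Everything else is passed as "--name value".
            command.extend([flag, str(value)])
    return command


if __name__ == "__main__":
    argv = build_sft_command(
        "Qwen/Qwen3-0.6B",
        "trl-lib/Capybara",
        learning_rate="3e-5",
        peft=True,
    )
    # Print the command; to launch it, pass argv to subprocess.run(argv, check=True).
    print(shlex.join(argv))
```

Building a list instead of a single string avoids shell quoting issues when a model or dataset name contains unusual characters.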

📊 Supported Configurations

Here are some ready-to-go setups you can use out of the box.

🦙 Meta LLaMA 3

| Model | Max context length | Tokens / batch | Example command |
|---|---|---|---|
| Meta-Llama-3-8B | 4,096 | 262,144 | trl-jobs sft --model_name meta-llama/Meta-Llama-3-8B --dataset_name ... |
| Meta-Llama-3-8B-Instruct | 4,096 | 262,144 | trl-jobs sft --model_name meta-llama/Meta-Llama-3-8B-Instruct --dataset_name ... |

🦙 Meta LLaMA 3 with PEFT

| Model | Max context length | Tokens / batch | Example command |
|---|---|---|---|
| Meta-Llama-3-8B | 24,576 | 196,608 | trl-jobs sft --model_name meta-llama/Meta-Llama-3-8B --peft --dataset_name ... |
| Meta-Llama-3-8B-Instruct | 24,576 | 196,608 | trl-jobs sft --model_name meta-llama/Meta-Llama-3-8B-Instruct --peft --dataset_name ... |

🐧 Qwen3

| Model | Max context length | Tokens / batch | Example command |
|---|---|---|---|
| Qwen3-0.6B-Base | 32,768 | 65,536 | trl-jobs sft --model_name Qwen/Qwen3-0.6B-Base --dataset_name ... |
| Qwen3-0.6B | 32,768 | 65,536 | trl-jobs sft --model_name Qwen/Qwen3-0.6B --dataset_name ... |
| Qwen3-1.7B-Base | 24,576 | 98,304 | trl-jobs sft --model_name Qwen/Qwen3-1.7B-Base --dataset_name ... |
| Qwen3-1.7B | 24,576 | 98,304 | trl-jobs sft --model_name Qwen/Qwen3-1.7B --dataset_name ... |
| Qwen3-4B-Base | 20,480 | 163,840 | trl-jobs sft --model_name Qwen/Qwen3-4B-Base --dataset_name ... |
| Qwen3-4B | 20,480 | 163,840 | trl-jobs sft --model_name Qwen/Qwen3-4B --dataset_name ... |
| Qwen3-8B-Base | 4,096 | 262,144 | trl-jobs sft --model_name Qwen/Qwen3-8B-Base --dataset_name ... |
| Qwen3-8B | 4,096 | 262,144 | trl-jobs sft --model_name Qwen/Qwen3-8B --dataset_name ... |

🐧 Qwen3 with PEFT

| Model | Max context length | Tokens / batch | Example command |
|---|---|---|---|
| Qwen3-8B-Base | 24,576 | 196,608 | trl-jobs sft --model_name Qwen/Qwen3-8B-Base --peft --dataset_name ... |
| Qwen3-8B | 24,576 | 196,608 | trl-jobs sft --model_name Qwen/Qwen3-8B --peft --dataset_name ... |
| Qwen3-14B-Base | 20,480 | 163,840 | trl-jobs sft --model_name Qwen/Qwen3-14B-Base --peft --dataset_name ... |
| Qwen3-14B | 20,480 | 163,840 | trl-jobs sft --model_name Qwen/Qwen3-14B --peft --dataset_name ... |
| Qwen3-32B | 4,096 | 131,072 | trl-jobs sft --model_name Qwen/Qwen3-32B --peft --dataset_name ... |
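To compare the configurations in this section, it can help to relate the two numeric columns. The sketch below assumes that "Tokens / batch" is the max context length multiplied by the number of full-length sequences per batch; that reading is an assumption, and the README does not say how the sequences are split across devices or accumulation steps. The figures are copied from the tables (one representative row per distinct pair), and sequences_per_batch is a hypothetical helper.

```python
# (max context length, tokens per batch) pairs copied from the tables in this section.
CONFIGS = {
    "Meta-Llama-3-8B": (4_096, 262_144),
    "Meta-Llama-3-8B (PEFT)": (24_576, 196_608),
    "Qwen3-0.6B": (32_768, 65_536),
    "Qwen3-1.7B": (24_576, 98_304),
    "Qwen3-4B": (20_480, 163_840),
    "Qwen3-8B": (4_096, 262_144),
    "Qwen3-8B (PEFT)": (24_576, 196_608),
    "Qwen3-14B (PEFT)": (20_480, 163_840),
    "Qwen3-32B (PEFT)": (4_096, 131_072),
}


def sequences_per_batch(max_length: int, tokens_per_batch: int) -> int:
    """Number of full-length sequences that fit in one batch.

    Assumption: tokens per batch = max context length x sequences per batch.
    """
    sequences, remainder = divmod(tokens_per_batch, max_length)
    if remainder:
        raise ValueError("tokens per batch is not a multiple of the context length")
    return sequences


if __name__ == "__main__":
    for model, (max_length, tokens) in CONFIGS.items():
        print(f"{model}: {sequences_per_batch(max_length, tokens)} sequences per batch")
```

Under this reading, the presets with longer context windows use fewer sequences per batch, for example 2 for Qwen3-0.6B at 32,768 tokens versus 64 for Qwen3-8B at 4,096 tokens.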

🤖 OpenAI GPT-OSS (with PEFT)

🚧 Coming soon!

💡 Want support for another model?

Open an issue or submit a PR—we’d love to hear from you!

🔑 Authentication

You’ll need a Hugging Face token to run jobs. You can provide it in any of these ways:

- Log in with huggingface-cli login
- Set the environment variable HF_TOKEN
- Pass it directly with --token
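With three possible sources for the token, a script that wraps trl-jobs may want to decide explicitly which one to use. The sketch below shows one plausible precedence: an explicit --token value first, then HF_TOKEN, then a token saved by a previous login. This order is an assumption and is not confirmed by this README; resolve_token and its parameters are hypothetical names.

```python
import os
from typing import Mapping, Optional


def resolve_token(
    cli_token: Optional[str] = None,
    env: Optional[Mapping[str, str]] = None,
    cached_token: Optional[str] = None,
) -> Optional[str]:
    """Pick a Hugging Face token from the three sources listed above.

    Hypothetical helper; the precedence used here is an assumption.
    """
    if env is None:
        env = os.environ
    # 1. A value passed explicitly with --token.
    if cli_token:
        return cli_token
    # 2. The HF_TOKEN environment variable.
    if env.get("HF_TOKEN"):
        return env["HF_TOKEN"]
    # 3. A token stored by a previous login, if the caller supplies one.
    return cached_token


if __name__ == "__main__":
    token = resolve_token(env={"HF_TOKEN": "hf_example"})
    print("token found" if token else "no token available")
```

Passing env and cached_token as arguments keeps the function easy to test without touching the real environment or any stored credentials.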

📜 License

This project is under the MIT License. See the LICENSE file for details.

🤝 Contributing

We welcome contributions! Please open an issue or a PR on GitHub.

Before committing, run formatting checks:

ruff check . --fix && ruff format . --line-length 119
