Optical Character Recognition Model


TrorYong OCR Model

TrorYongOCR is an Optical Character Recognition model implemented by KrorngAI.

TrorYong (ត្រយ៉ង) is the Khmer word for the giant ibis, the bird that symbolises Cambodia.

Support My Work

While this work comes truly from the heart, each project represents a significant investment of time -- from deep-dive research and code preparation to the final narrative and editing process. I am incredibly passionate about sharing this knowledge, but maintaining this level of quality is a major undertaking. If you find my work helpful and are in a position to do so, please consider supporting my work with a donation. You can click here to donate or scan the QR code below. Your generosity acts as a huge encouragement and helps ensure that I can continue creating in-depth, valuable content for you.

Installation

You can install tror-yong-ocr with pip:

pip install tror-yong-ocr

Usage

Loading tokenizer

TrorYongOCR is a small optical character recognition model that you can train from scratch.

Because of this, you can pair TrorYongOCR with your own tokenizer.

Just make sure that the tokenizer used for training and the tokenizer used for inference are the same.

Your tokenizer must contain beginning-of-sequence (bos), end-of-sequence (eos) and padding (pad) tokens.

The bos and eos token ids are used in the decoding function.

The pad token id is used during training.
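To make these requirements concrete, here is a minimal sketch of a character-level tokenizer with the three special tokens. The class name `CharTokenizer`, its attribute names (`bos_id`, `eos_id`, `pad_id`) and the special-token strings are assumptions made for illustration; the exact interface TrorYongOCR expects from a custom tokenizer is not documented here, so check the package source before relying on it.

```python
class CharTokenizer:
    """Hypothetical character-level tokenizer with bos, eos and pad tokens."""

    def __init__(self, charset):
        # Special tokens take the first three ids (an arbitrary choice).
        self.specials = ['<s>', '</s>', '<pad>']
        self.itos = self.specials + sorted(set(charset))
        self.stoi = {ch: i for i, ch in enumerate(self.itos)}
        self.bos_id, self.eos_id, self.pad_id = 0, 1, 2

    def __len__(self):
        return len(self.itos)

    def encode(self, text, add_special_tokens=True):
        # Raises KeyError for characters outside the charset.
        ids = [self.stoi[ch] for ch in text]
        return [self.bos_id] + ids + [self.eos_id] if add_special_tokens else ids

    def decode(self, ids, ignore_special_tokens=True):
        n_special = len(self.specials)
        return ''.join(
            self.itos[i] for i in ids
            if not (ignore_special_tokens and i < n_special)
        )


tok = CharTokenizer('abc ')
ids = tok.encode('a cab')
print(ids)              # [0, 4, 3, 6, 4, 5, 1]
print(tok.decode(ids))  # a cab
```

Whatever tokenizer you use, build it from the same charset at training and inference time so that the ids map to the same characters.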

I also provide a tokenizer that supports Khmer and English.

from tror_yong_ocr import get_tokenizer

tokenizer = get_tokenizer(charset=None)

print(len(tokenizer)) # you should receive 185

text = 'Amazon បង្កើនការវិនិយោគជិត១'

print(tokenizer.decode(tokenizer.encode(text, add_special_tokens=True), ignore_special_tokens=False))

# this should print <s>Amazon បង្កើនការវិនិយោគជិត១</s>

When preparing a dataset to train TrorYongOCR, you only need to transform the text into token ids using the tokenizer:

sentence = 'Cambodia needs peace.'

token_ids = tokenizer.encode(sentence, add_special_tokens=True)

NOTE: my tokenizer works at the character level.
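Encoded sentences usually have different lengths, so a training batch has to be padded to a common length with the pad token id. The helper below is a sketch of that step in plain Python; the function name `pad_batch` and the id values are hypothetical, and the training notebook may handle padding differently.

```python
def pad_batch(sequences, pad_id):
    """Right-pad each list of token ids to the longest length in the batch."""
    max_len = max(len(seq) for seq in sequences)
    return [seq + [pad_id] * (max_len - len(seq)) for seq in sequences]


# Hypothetical ids: 0 = bos, 1 = eos, 2 = pad.
batch = pad_batch([[0, 5, 6, 1], [0, 7, 1]], pad_id=2)
print(batch)  # [[0, 5, 6, 1], [0, 7, 1, 2]]
```

During training, positions holding the pad id are normally excluded from the loss.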

Loading TrorYongOCR model

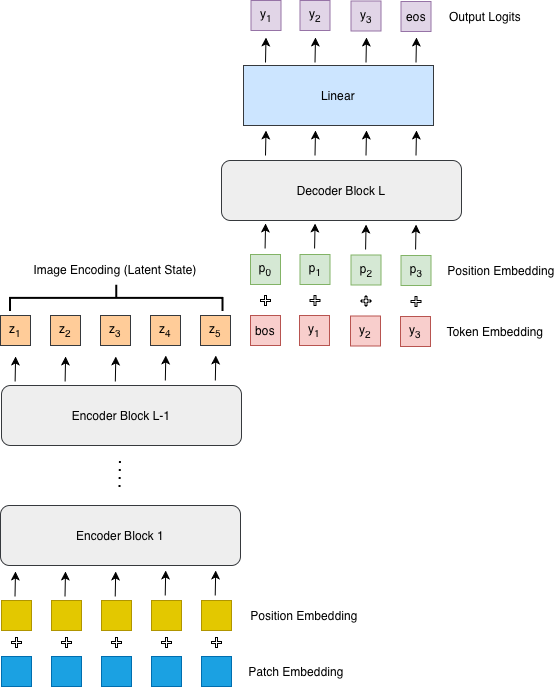

Inspired by PARSeq and DTrOCR, I designed TrorYongOCR as follows. Given n_layer transformer layers:

- n_layer-1 layers are encoding layers that encode the input image

- the final layer is a decoding layer without a cross-attention mechanism

- for the decoding layer,

  - the latent state of the image (the output of the encoding layers) is concatenated with the input character embedding (token embedding, including the bos token, plus position embedding) to create the context vector, i.e. the key and value vectors (think of it like a prompt prefill)

  - the input character embedding (token embedding plus position embedding) is used as the query vector
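The decoding layer described above can be sketched with a single attention head in NumPy. This is an illustration under assumptions, not the package's implementation: the learned query, key and value projections are omitted, the function name `prefix_attention` is hypothetical, and the causal mask over the text positions is assumed because the description does not spell out the mask.

```python
import numpy as np


def prefix_attention(img_latent, char_emb):
    """Single-head attention where only the text positions act as queries.

    img_latent: (n_img, d) output of the encoding layers.
    char_emb:   (n_txt, d) token + position embedding, starting with bos.
    """
    n_img, d = img_latent.shape
    n_txt = char_emb.shape[0]
    # Context (keys and values): image latents followed by text embeddings.
    context = np.concatenate([img_latent, char_emb], axis=0)
    scores = char_emb @ context.T / np.sqrt(d)  # (n_txt, n_img + n_txt)
    # Assumed mask: each query sees every image position, plus text
    # positions up to and including its own.
    mask = np.ones((n_txt, n_img + n_txt), dtype=bool)
    mask[:, n_img:] = np.tril(np.ones((n_txt, n_txt), dtype=bool))
    scores = np.where(mask, scores, -np.inf)
    weights = np.exp(scores - scores.max(axis=-1, keepdims=True))
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ context, weights


rng = np.random.default_rng(0)
out, weights = prefix_attention(rng.normal(size=(128, 16)), rng.normal(size=(5, 16)))
print(out.shape, weights.shape)  # (5, 16) (5, 133)
```

Because the image positions never act as queries in this layer, the output has one row per text position only, which is the main difference from running self-attention over the full concatenation.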

The architecture of TrorYongOCR is shown in Figure 1.

TrorYongOCR also implements more recent transformer components: Rotary Positional Embedding (RoPE) in the attention mechanism, and Sigmoid Linear Unit (SiLU) with Gated Linear Unit (GLU) in the MLP of each transformer block.
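For readers unfamiliar with these components, the NumPy sketch below shows one common formulation of each. How they are wired inside TrorYongOCR is not documented here, so the half-split rotation layout, the base of 10000, the three-matrix MLP and all function names are assumptions.

```python
import numpy as np


def rope(x, base=10000.0):
    """Rotate pairs of features by a position-dependent angle.

    x: (n_positions, d) with d even; pairs are (x[:, i], x[:, i + d // 2]).
    """
    n, d = x.shape
    half = d // 2
    freqs = base ** (-np.arange(half) / half)          # (half,)
    angles = np.arange(n)[:, None] * freqs[None, :]    # (n, half)
    cos, sin = np.cos(angles), np.sin(angles)
    x1, x2 = x[:, :half], x[:, half:]
    return np.concatenate([x1 * cos - x2 * sin, x1 * sin + x2 * cos], axis=-1)


def silu(x):
    """SiLU activation: x * sigmoid(x)."""
    return x / (1.0 + np.exp(-x))


def gated_mlp(x, w_gate, w_up, w_down):
    """GLU-style MLP: a SiLU-activated gate multiplies a linear branch."""
    return (silu(x @ w_gate) * (x @ w_up)) @ w_down


rng = np.random.default_rng(0)
x = rng.normal(size=(10, 8))
rotated = rope(x)
y = gated_mlp(x, rng.normal(size=(8, 32)), rng.normal(size=(8, 32)), rng.normal(size=(32, 8)))
print(rotated.shape, y.shape)  # (10, 8) (10, 8)
```

The rotation changes only the direction of each feature pair, not its length, so position information enters the attention scores through the relative angle between queries and keys.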

Compared to PARSeq

PARSeq is an encoder-decoder architecture. Its text decoder uses the position embedding as the query vector, the character embedding (token embedding plus position embedding) as the context vector, and the latent state from the image encoder as memory for the cross-attention mechanism (see Figure 3 of their paper).

Compared to DTrOCR

DTrOCR is a decoder-only architecture. The image embedding (patch embedding plus position embedding) is concatenated with the input character embedding, and a causal self-attention mechanism is applied to this concatenation from layer to layer to generate text autoregressively (see Figure 2 of their paper). A [SEP] token is added at the beginning of the input character embedding to indicate sequence separation; it is equivalent to the bos token in TrorYongOCR.

from tror_yong_ocr import TrorYongOCR, TrorYongConfig

from tror_yong_ocr import get_tokenizer

tokenizer = get_tokenizer()

config = TrorYongConfig(

img_size=(32, 128),

patch_size=(4, 8),

n_channel=3,

vocab_size=len(tokenizer),

block_size=192,

n_layer=4,

n_head=6,

n_embed=384,

dropout=0.1,

bias=True,

)

model = TrorYongOCR(config, tokenizer)
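A quick sanity check of the numbers in this config can catch shape errors before training. The sketch below assumes that img_size and patch_size are given as (height, width), that patches do not overlap, and that n_embed is split evenly across heads; none of this is stated explicitly above, and how block_size relates to these values is not covered here.

```python
img_size = (32, 128)   # assumed (height, width), as in the config above
patch_size = (4, 8)
n_embed, n_head = 384, 6

# Both image dimensions should split into whole patches.
assert img_size[0] % patch_size[0] == 0 and img_size[1] % patch_size[1] == 0
n_patches = (img_size[0] // patch_size[0]) * (img_size[1] // patch_size[1])

# The embedding width should split evenly across attention heads.
assert n_embed % n_head == 0
head_dim = n_embed // n_head

print(n_patches, head_dim)  # 128 64
```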

Train TrorYongOCR

You can check out the notebook below to train your own Small OCR Model.

I also have a video about training TrorYongOCR below.

Inference

The TrorYongOCR class also provides a decode function for decoding an image.

Note that it can process only one image at a time.

import torch

from tror_yong_ocr import TrorYongOCR, TrorYongConfig

from tror_yong_ocr import get_tokenizer

tokenizer = get_tokenizer()

config = TrorYongConfig(

img_size=(32, 128),

patch_size=(4, 8),

n_channel=3,

vocab_size=len(tokenizer), # exclude pad and unk tokens

block_size=192,

n_layer=4,

n_head=6,

n_embed=384,

dropout=0.1,

bias=True,

)

model = TrorYongOCR(config, tokenizer)

model.load_state_dict(torch.load('path/to/your/weights.pt', map_location='cpu'))

# batch is assumed to come from your own data loader; decode takes a single image tensor

pred = model.decode(batch['img_tensor'][0], max_tokens=192, temperature=0.001, top_k=None)

print(tokenizer.decode(pred[0].tolist(), ignore_special_tokens=True))
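Since decode handles one image per call, a whole batch can be processed with a simple loop. The helper name `decode_images` is hypothetical; it only reuses the decode calls shown above with the same arguments, and assumes that iterating over the batch yields one image at a time.

```python
def decode_images(model, tokenizer, images, max_tokens=192, temperature=0.001, top_k=None):
    """Decode images one by one and return the predicted strings."""
    texts = []
    for img in images:
        pred = model.decode(img, max_tokens=max_tokens, temperature=temperature, top_k=top_k)
        texts.append(tokenizer.decode(pred[0].tolist(), ignore_special_tokens=True))
    return texts
```

For example, `decode_images(model, tokenizer, batch['img_tensor'])` would return one string per image in the batch.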

TODO:

- implement model with KV cache

TrorYongOCR - notebook colab for training

TrorYongOCR - benchmarking


Download files


Source Distribution

File details

Details for the file tror_yong_ocr-0.1.0.tar.gz.

File metadata

- Download URL: tror_yong_ocr-0.1.0.tar.gz

- Upload date:

- Size: 12.9 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.10

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 2db79fd803cca80038b253f2fa06eab826f50dc25baa1fd4bded1eacbb2824f3 |
| MD5 | bce82d1838bf80aef885162861c00a3b |
| BLAKE2b-256 | d1c3751cc4e0df82e9921ac7e2b87128d397c4ac02d4ddeeaf0958a6ad777423 |