tune-easy: A hyperparameter tuning tool, extremely easy to use.


This package supports scikit-learn API estimators, such as SVM and LightGBM.
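As a rough illustration of what "scikit-learn API" means here, the sketch below shows the duck-typed interface that scikit-learn estimators expose: `fit`, `predict`, `get_params`, and `set_params`. The `MajorityClassifier` class and its `fallback` parameter are hypothetical and exist only for this example; whether tune-easy accepts arbitrary custom estimators is not stated here.

```python
from collections import Counter


class MajorityClassifier:
    """Hypothetical toy estimator following scikit-learn's method conventions."""

    def __init__(self, fallback=None):
        # Hyperparameters are stored unchanged under the constructor argument name.
        self.fallback = fallback

    def get_params(self, deep=True):
        # Return the hyperparameters as a dict, as scikit-learn estimators do.
        return {"fallback": self.fallback}

    def set_params(self, **params):
        # Update hyperparameters in place and return self, so a tuner can try new values.
        for name, value in params.items():
            if name not in self.get_params():
                raise ValueError(f"Invalid parameter: {name}")
            setattr(self, name, value)
        return self

    def fit(self, X, y):
        # Learn the most frequent label; fitted attributes end with an underscore.
        labels = list(y)
        self.majority_ = Counter(labels).most_common(1)[0][0] if labels else self.fallback
        return self

    def predict(self, X):
        # Predict the learned label for every row of X.
        return [self.majority_ for _ in X]


clf = MajorityClassifier().set_params(fallback="versicolor")
clf.fit([[1.0], [2.0], [3.0]], ["virginica", "virginica", "versicolor"])
predictions = clf.predict([[4.0], [5.0]])
```

A tuning tool can work with any object of this shape because it only needs to set candidate hyperparameters, refit, and score the predictions.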

Usage

Example of All-in-one Tuning

from tune_easy import AllInOneTuning

import seaborn as sns

# Load Dataset

iris = sns.load_dataset("iris")

iris = iris[iris['species'] != 'setosa'] # Select 2 classes

TARGET_VARIABLE = 'species' # Target variable

USE_EXPLANATORY = ['petal_width', 'petal_length', 'sepal_width', 'sepal_length'] # Explanatory variables

y = iris[TARGET_VARIABLE].values

X = iris[USE_EXPLANATORY].values

###### Run All-in-one Tuning ######

all_tuner = AllInOneTuning()

all_tuner.all_in_one_tuning(X, y, x_colnames=USE_EXPLANATORY, cv=2)

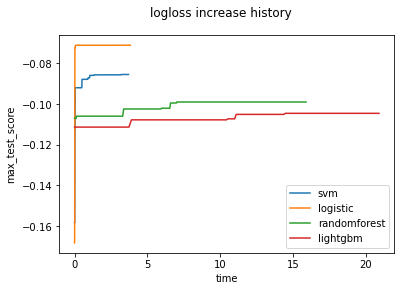

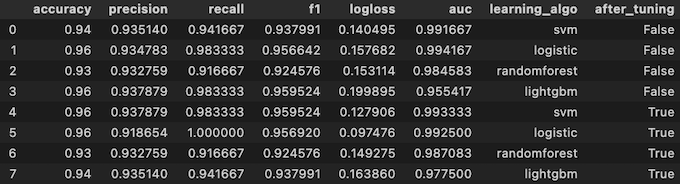

all_tuner.df_scores

For usage of the other classes, see the API Reference and Examples.
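The `cv=2` argument in the call above presumably requests 2-fold cross-validation, following the scikit-learn convention for an integer `cv`; that reading is an assumption, since the text does not spell it out. A minimal pure-Python sketch of how k-fold splitting partitions sample indices (the `k_fold_indices` helper is hypothetical and not part of tune-easy):

```python
def k_fold_indices(n_samples, n_splits):
    """Yield (train_indices, test_indices) pairs for unshuffled k-fold splitting."""
    if not 2 <= n_splits <= n_samples:
        raise ValueError("n_splits must be between 2 and n_samples")
    # Spread the remainder over the first folds so fold sizes differ by at most one.
    base, extra = divmod(n_samples, n_splits)
    start = 0
    for fold in range(n_splits):
        stop = start + base + (1 if fold < extra else 0)
        test = list(range(start, stop))
        train = list(range(0, start)) + list(range(stop, n_samples))
        yield train, test
        start = stop


# Five samples split into two folds: every index is held out exactly once.
folds = list(k_fold_indices(n_samples=5, n_splits=2))
```

With two folds, each sample is used once for validation and once for training. scikit-learn's own splitters additionally support shuffling and stratification, which this sketch leaves out.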

Example of Detailed Tuning

from tune_easy import LGBMClassifierTuning

import seaborn as sns

# Load dataset

iris = sns.load_dataset("iris")

iris = iris[iris['species'] != 'setosa'] # Select 2 classes

OBJECTIVE_VARIABLE = 'species' # Target variable

USE_EXPLANATORY = ['petal_width', 'petal_length', 'sepal_width', 'sepal_length'] # Explanatory variables

y = iris[OBJECTIVE_VARIABLE].values

X = iris[USE_EXPLANATORY].values

###### Run Detailed Tuning ######

tuning = LGBMClassifierTuning(X, y, USE_EXPLANATORY) # Initialize tuning instance

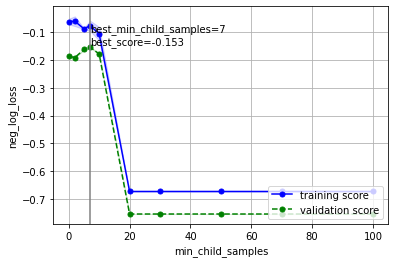

tuning.plot_first_validation_curve(cv=2) # Plot first validation curve

tuning.optuna_tuning(cv=2) # Optimization using Optuna library

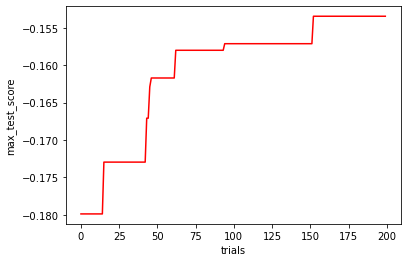

tuning.plot_search_history() # Plot score increase history

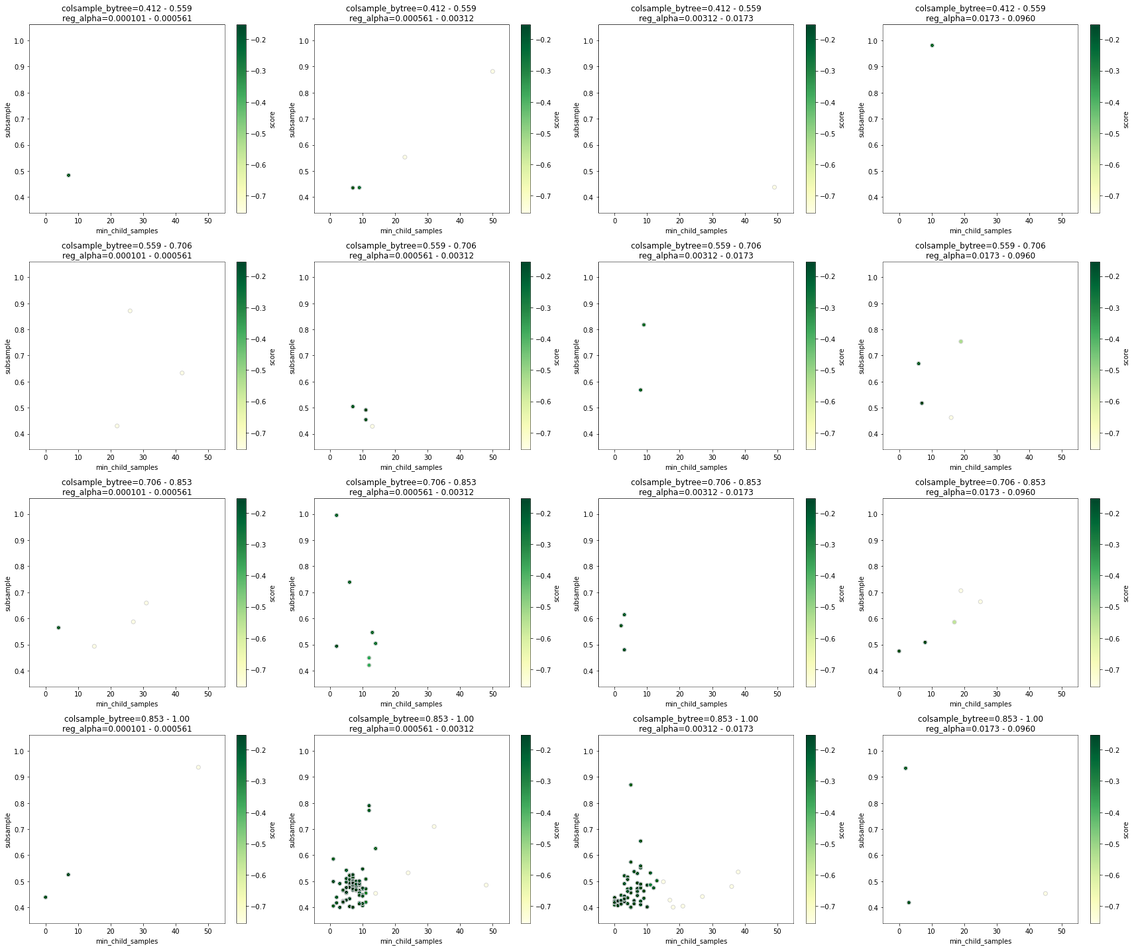

tuning.plot_search_map() # Visualize relationship between parameters and validation score

tuning.plot_best_learning_curve() # Plot learning curve

tuning.plot_best_validation_curve() # Plot validation curve

For usage of the other classes, see the API Reference and Examples.

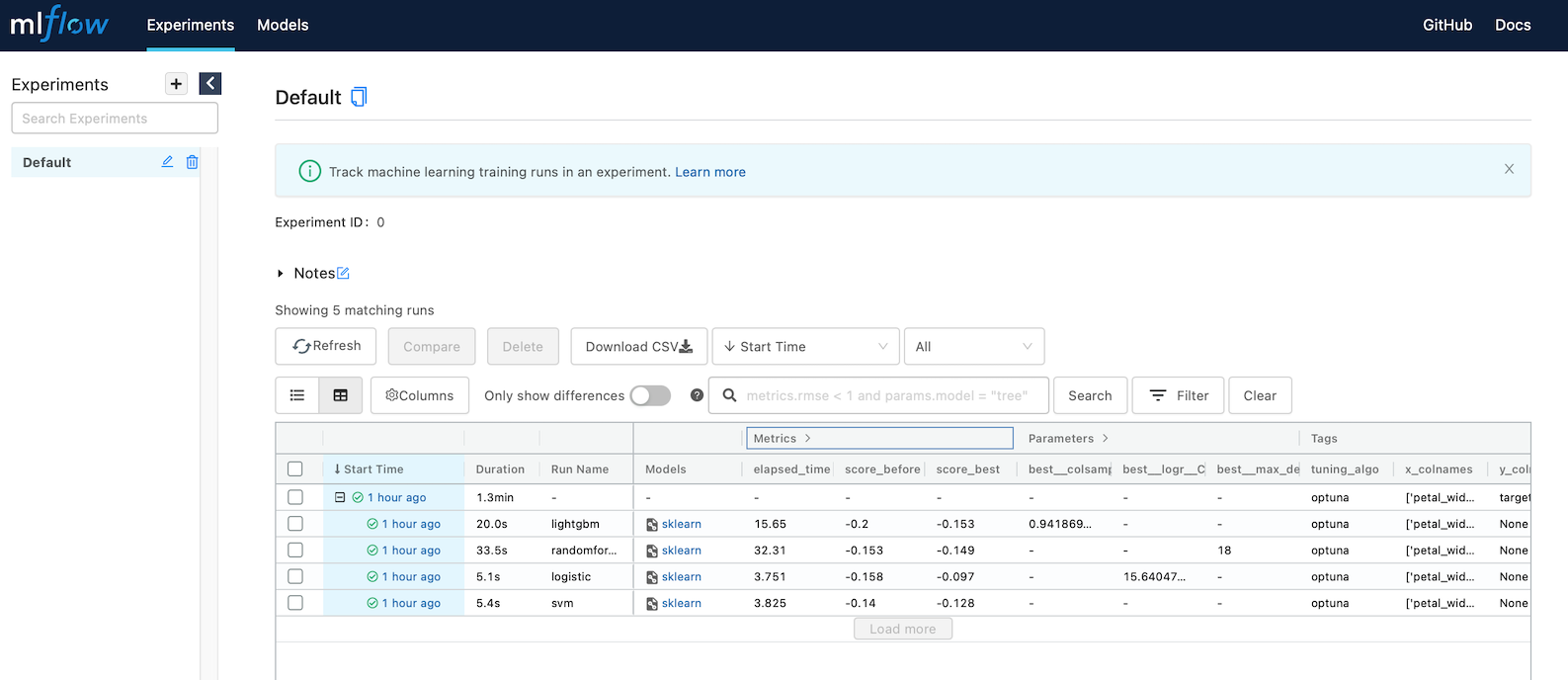

Example of MLflow logging

from tune_easy import AllInOneTuning

import seaborn as sns

# Load dataset

iris = sns.load_dataset("iris")

iris = iris[iris['species'] != 'setosa'] # Select 2 classes

TARGET_VARIABLE = 'species' # Target variable

USE_EXPLANATORY = ['petal_width', 'petal_length', 'sepal_width', 'sepal_length'] # Explanatory variables

y = iris[TARGET_VARIABLE].values

X = iris[USE_EXPLANATORY].values

###### Run All-in-one Tuning with MLflow logging ######

all_tuner = AllInOneTuning()

all_tuner.all_in_one_tuning(X, y, x_colnames=USE_EXPLANATORY, cv=2,
                            mlflow_logging=True) # Set MLflow logging argument

For usage of the other classes, see the API Reference and Examples.

Requirements

tune-easy 0.2.1 requires:

- Python >=3.6

- scikit-learn >=0.24.2

- NumPy >=1.20.3

- Pandas >=1.2.4

- Matplotlib >=3.3.4

- Seaborn >=0.11.0

- Optuna >=2.7.0

- BayesianOptimization >=1.2.0

- MLflow >=1.17.0

- LightGBM >=3.3.2

- XGBoost >=1.4.2

- seaborn-analyzer >=0.2.11
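One way to check an existing environment against the minimum versions listed above is to query the installed distribution metadata. The sketch below uses `importlib.metadata`, which is in the standard library only from Python 3.8, so it does not cover the 3.6 and 3.7 interpreters the list permits. The helper names (`parse_version`, `is_older`, `unmet_requirements`) are hypothetical, the PyPI distribution names in `MINIMUMS` are assumptions, and the version comparison is deliberately simplistic (numeric components only).

```python
from importlib import metadata  # standard library from Python 3.8 onward

# Minimum versions copied from the list above. The keys are assumed PyPI
# distribution names; adjust them if a lookup fails in your environment.
MINIMUMS = {
    "scikit-learn": "0.24.2", "numpy": "1.20.3", "pandas": "1.2.4",
    "matplotlib": "3.3.4", "seaborn": "0.11.0", "optuna": "2.7.0",
    "bayesian-optimization": "1.2.0", "mlflow": "1.17.0",
    "lightgbm": "3.3.2", "xgboost": "1.4.2", "seaborn-analyzer": "0.2.11",
}


def parse_version(text):
    """Turn '1.20.3' into (1, 20, 3), dropping non-numeric suffixes such as 'rc1'."""
    parts = []
    for piece in text.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
    return tuple(parts)


def is_older(installed, minimum):
    """Return True if the installed version is strictly below the minimum."""
    a, b = parse_version(installed), parse_version(minimum)
    width = max(len(a), len(b))
    # Pad with zeros so that '1.2' and '1.2.0' compare as equal.
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a < b


def unmet_requirements(minimums, lookup=metadata.version):
    """Return {name: installed version, or None if missing} for unmet minimums."""
    unmet = {}
    for name, minimum in minimums.items():
        try:
            installed = lookup(name)
        except metadata.PackageNotFoundError:
            unmet[name] = None  # distribution is not installed at all
            continue
        if is_older(installed, minimum):
            unmet[name] = installed
    return unmet


if __name__ == "__main__":
    for name, found in unmet_requirements(MINIMUMS).items():
        print(f"{name}: need >={MINIMUMS[name]}, found {found}")
```

For anything beyond a quick check, a full version parser such as the one in the `packaging` library handles pre-releases and local versions more faithfully than this numeric comparison.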

Installing tune-easy

Use pip to install the binary wheels from PyPI:

$ pip install tune-easy

Support

Bugs may be reported at https://github.com/c60evaporator/tune-easy/issues

Contact

If you have any questions or comments about tune-easy, please feel free to contact me by email (c60evaporator@gmail.com) or on Twitter (https://twitter.com/c60evaporator).

This project is hosted at https://github.com/c60evaporator/param-tuning-utility


File details

Details for the file tune-easy-0.2.1.tar.gz.

File metadata

- Download URL: tune-easy-0.2.1.tar.gz

- Upload date:

- Size: 45.5 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.4.2 importlib_metadata/4.6.1 pkginfo/1.7.1 requests/2.25.1 requests-toolbelt/0.9.1 tqdm/4.60.0 CPython/3.9.5

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | a4e6a77636cba0923baa8d38021acb2f80ab130912b0fb71cf30cc34c727d6db |
| MD5 | 78b68a7068397ed94cea198a3d0e0c32 |
| BLAKE2b-256 | b3b0b144ed12ed6067188767780f7034582d48d0f13b73936bfb5649dbea4037 |

File details

Details for the file tune_easy-0.2.1-py3-none-any.whl.

File metadata

- Download URL: tune_easy-0.2.1-py3-none-any.whl

- Upload date:

- Size: 52.3 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.4.2 importlib_metadata/4.6.1 pkginfo/1.7.1 requests/2.25.1 requests-toolbelt/0.9.1 tqdm/4.60.0 CPython/3.9.5

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 616c44d4022f0be828aae9c38018e09e042a685ea9db3ec6abacb4b2d49f7a4e |
| MD5 | 47a6f68b06fac043732fb621727670f9 |
| BLAKE2b-256 | 94cf1ce8ee3504566bac3afb140d7fd8960acaa4887e3acd531982d920e1c098 |
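The SHA256 digests listed above can be used to check a downloaded file before installing it. The following is a minimal sketch using only the standard library; the helper names `sha256_of_file` and `matches_digest` are hypothetical, and the local file path in the final comment is an assumption about where the download was saved.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=65536):
    """Return the hex SHA256 digest of a file, read in chunks to bound memory use."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_digest(path, expected_hex):
    """Compare the file's digest with the expected one, ignoring case and whitespace."""
    return hmac.compare_digest(sha256_of_file(path), expected_hex.strip().lower())


# SHA256 digest listed above for tune-easy-0.2.1.tar.gz.
EXPECTED_SDIST_SHA256 = "a4e6a77636cba0923baa8d38021acb2f80ab130912b0fb71cf30cc34c727d6db"

# Example call, assuming the archive was saved to the current directory:
# matches_digest("tune-easy-0.2.1.tar.gz", EXPECTED_SDIST_SHA256)
```

pip can perform the same check itself when a requirements file pins hashes and is installed with `--require-hashes`.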