A Python-based wrapper for GPT-4 & GPT-3.5 PLUS.


TurboGpt


YouTube demo / tutorial

Benefits and why

There is currently no way to use GPT-4 outside the online chat.openai.com interface. This wrapper allows you to use GPT-4 in your own projects.

The current OpenAI API is extremely slow, and even a paid (premium) plan does not improve response times. TurboGpt does not use the API; instead, it talks to the same interfaces that the chat.openai.com website uses.

This means that you can use GPT-4 and GPT-3.5 PLUS in your own projects without having to wait 20+ seconds for a response.

Install

To use GPT-4, you need a ChatGPT Plus subscription. If you don't have one, you can get one here.

pip install turbogpt

Getting the PUID & ACCESS_TOKEN

REMEMBER: YOU NEED A CHATGPT PLUS SUBSCRIPTION TO USE THIS LIBRARY

1. Head over to https://chat.openai.com/chat

2. Open the developer console (F12)

3. Go to the Application tab

4. In the sidebar, under Storage, open Cookies and select https://chat.openai.com

5. Copy the value of the _puid cookie

6. Go to the Network tab and select the Fetch/XHR filter

7. Refresh the page and locate the models request

8. In that request's headers, copy the value of the Authorization header that follows "Bearer " (it starts with ey...). This is the ACCESS_TOKEN.

9. Paste both values into the .env file like so:

ACCESS_TOKEN=YOUR_ACCESS_TOKEN

PUID=YOUR_PUID
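The README does not say how TurboGpt itself reads this file. As a rough sketch, assuming plain KEY=VALUE lines with the two keys above, a stdlib-only loader could look like the following. The function name `load_credentials` is hypothetical and not part of TurboGpt; it also strips an accidentally pasted `Bearer ` prefix, since step 8 asks only for the part after it.

```python
from pathlib import Path


def load_credentials(path: str = ".env") -> dict[str, str]:
    """Hypothetical helper (not TurboGpt's own loader): read ACCESS_TOKEN and PUID.

    Assumes simple KEY=VALUE lines; blank lines and '#' comments are skipped.
    """
    values: dict[str, str] = {}
    for raw in Path(path).read_text(encoding="utf-8").splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop optional surrounding quotes, e.g. PUID="..."
        values[key.strip()] = value.strip().strip('"').strip("'")

    missing = [k for k in ("ACCESS_TOKEN", "PUID") if not values.get(k)]
    if missing:
        raise KeyError(f"missing keys in {path}: {', '.join(missing)}")

    # Step 8 wants only the value after "Bearer "; tolerate a pasted prefix.
    token = values["ACCESS_TOKEN"]
    if token.lower().startswith("bearer "):
        token = token[len("bearer "):].strip()

    return {"ACCESS_TOKEN": token, "PUID": values["PUID"]}
```

A missing or empty key raises `KeyError` up front, which is easier to diagnose than an authentication failure later.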

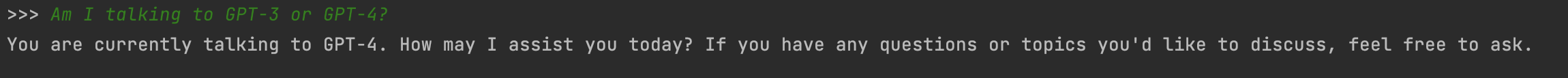
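The ACCESS_TOKEN from step 8 starts with `ey`, which is how a base64url-encoded JSON object begins, so it appears to be a JSON Web Token (JWT). Assuming that is the case and that its payload carries the standard `exp` claim (expiry time in Unix seconds), the sketch below reports how long the token remains valid. It decodes the payload only and does not verify the signature; the function name `token_seconds_left` is hypothetical.

```python
import base64
import json
import time
from typing import Optional


def token_seconds_left(token: str, now: Optional[float] = None) -> float:
    """Hypothetical helper: seconds until a JWT's 'exp' claim.

    The signature is NOT verified; this is only a local sanity check.
    Raises ValueError for non-JWT input or a payload without 'exp'.
    """
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("not a JWT: expected header.payload.signature")
    payload_b64 = parts[1]
    # base64url omits '=' padding; restore it before decoding.
    payload_b64 += "=" * (-len(payload_b64) % 4)
    payload = json.loads(base64.urlsafe_b64decode(payload_b64))
    if "exp" not in payload:
        raise ValueError("token payload has no 'exp' claim")
    current = time.time() if now is None else now
    return payload["exp"] - current
```

If the result is negative, the token has expired and steps 6 to 8 need to be repeated.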

Usage

Start a new session

from turbogpt import TurboGpt

turbogpt = TurboGpt(model="gpt-4")  # or "text-davinci-002-render-sha" (the default, i.e. GPT-3.5)

session = turbogpt.start_session()  # start a new conversation

q = turbogpt.send_message(input(">>> "), session)  # send a prompt typed at the console

print(q['message']['content']['parts'][0])  # print the reply text
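The example indexes `q['message']['content']['parts'][0]`, which suggests `send_message` returns the website's JSON response as nested dicts and lists. Assuming that shape (it is inferred from the example, not documented), a small hypothetical wrapper can turn a malformed response into a readable error instead of a bare `KeyError` or `TypeError`:

```python
from typing import Any


def extract_reply(response: Any) -> str:
    """Return the reply text from a send_message() result.

    Mirrors the README lookup response['message']['content']['parts'][0];
    the response shape is an assumption taken from that example.
    """
    try:
        parts = response["message"]["content"]["parts"]
    except (KeyError, TypeError) as exc:
        raise ValueError(f"unexpected response shape: {response!r}") from exc
    if not isinstance(parts, list) or not parts:
        raise ValueError("response contains no message parts")
    return parts[0]
```

With the session above, `print(extract_reply(q))` prints the same text as the original `print` line.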

Resume an existing session

from turbogpt import TurboGpt

turbogpt = TurboGpt(model="gpt-4")  # or "text-davinci-002-render-sha" (the default, i.e. GPT-3.5)

session = turbogpt.resume_session("uuid-uuid-uuid-uuid")  # the UUID of an existing conversation

q = turbogpt.send_message(input(">>> "), session)  # send a prompt typed at the console

print(q['message']['content']['parts'][0])  # print the reply text
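Both examples send a single message. For a back-and-forth conversation, the same `session` can presumably be passed to `send_message` repeatedly. The loop below is a sketch under that assumption: `chat_loop` and its parameters are hypothetical names, and the send function is passed in so the loop can also run against a stand-in. The reply lookup is the one used in the README.

```python
from typing import Any, Callable


def chat_loop(
    send: Callable[[str, Any], dict],
    session: Any,
    read_input: Callable[[str], str] = input,
) -> list[tuple[str, str]]:
    """Keep sending prompts to one session until 'exit'/'quit' or EOF.

    Returns the (prompt, reply) pairs so the transcript can be saved.
    """
    transcript: list[tuple[str, str]] = []
    while True:
        try:
            prompt = read_input(">>> ").strip()
        except EOFError:  # Ctrl-D / end of piped input
            break
        if prompt.lower() in {"exit", "quit"}:
            break
        if not prompt:  # ignore empty lines
            continue
        q = send(prompt, session)
        reply = q["message"]["content"]["parts"][0]
        print(reply)
        transcript.append((prompt, reply))
    return transcript
```

With TurboGpt installed and a session created as above, the call would be `chat_loop(turbogpt.send_message, session)`.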

Info

✅ - Cloudflare bypassed

✅ - automatic refresh of _puid

✅ - GPT-4 Support

✅ - GPT-3.5 PLUS Support

✅ - GPT-3.5 Support (Free)

✅ - Fast

✅ - Easy to use

✅ - No API

✅ - No rate limits

✅ - No waiting

✅ - Back and forth conversation support

TurboGpt-CLI

Check out a TurboGpt-based CLI here


Download files


File details

Details for the file turbogpt_pro-1.1.1.tar.gz.

File metadata

- Download URL: turbogpt_pro-1.1.1.tar.gz

- Upload date:

- Size: 5.4 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/5.1.0 CPython/3.11.2

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 97a046589149934be64510a18b6136e1ea7d8d119e675524904a245be2cd3949 |
| MD5 | f7ef41659190a0b151fe1a2da3ede572 |
| BLAKE2b-256 | eafed87a0ec694a5c92f7caa189e94fc1eb8ed543d83c83c2946d211f01b50c6 |

File details

Details for the file turbogpt_pro-1.1.1-py3-none-any.whl.

File metadata

- Download URL: turbogpt_pro-1.1.1-py3-none-any.whl

- Upload date:

- Size: 5.9 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/5.1.0 CPython/3.11.2

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 78e7ae878bfd1793356bb915020b9791cc570e055d5f71d0ac1dec481f0f4731 |
| MD5 | 770c56908fd40e16f57adffc0212a8a2 |
| BLAKE2b-256 | db2e8961ba36da45a2de7a39f5b9ffa271a313aca80f31b17eea96c88fdb2156 |
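To check that a downloaded file matches the SHA256 digests listed above, the hash can be recomputed locally with Python's standard `hashlib` module. A minimal sketch: the helper names are illustrative, and the two digests are copied from the file-details tables.

```python
import hashlib
from pathlib import Path

# SHA256 digests copied from the PyPI file details above.
PUBLISHED_SHA256 = {
    "turbogpt_pro-1.1.1.tar.gz":
        "97a046589149934be64510a18b6136e1ea7d8d119e675524904a245be2cd3949",
    "turbogpt_pro-1.1.1-py3-none-any.whl":
        "78e7ae878bfd1793356bb915020b9791cc570e055d5f71d0ac1dec481f0f4731",
}


def sha256_of(path: str, chunk_size: int = 65536) -> str:
    """Hash a file in chunks so it never has to fit in memory at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_download(path: str) -> bool:
    """True if the file's SHA256 matches the published digest for its name."""
    name = Path(path).name
    expected = PUBLISHED_SHA256.get(name)
    if expected is None:
        raise KeyError(f"no published digest for {name}")
    return sha256_of(path) == expected
```

Running `verify_download("turbogpt_pro-1.1.1.tar.gz")` next to the downloaded file should return `True`; any other result means the file differs from the one published.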